The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Compute

Shifting gears, we have our look at compute performance.

As we outlined earlier, GTX Titan X is not the same kind of compute powerhouse that the original GTX Titan was. Make no mistake, at single precision (FP32) compute tasks it is still a very potent card, which for consumer level workloads is generally all that will matter. But for pro-level double precision (FP64) workloads the new Titan lacks the high FP64 performance of the old one.

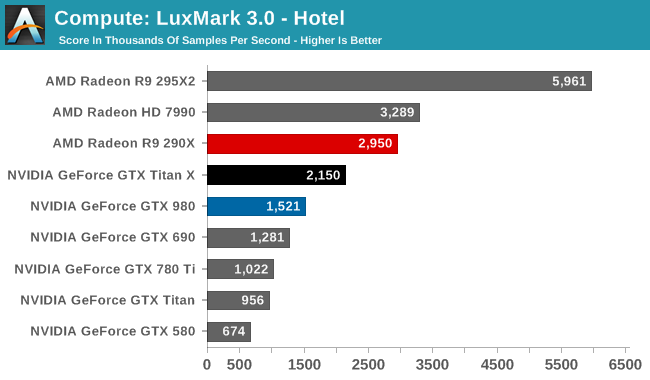

Starting us off for our look at compute is LuxMark 3.0, the latest version of the official benchmark of LuxRender 2.0. LuxRender’s GPU-accelerated rendering mode is an OpenCL-based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

While in LuxMark 2.0 AMD and NVIDIA were fairly close post-Maxwell, the recently released LuxMark 3.0 finds NVIDIA trailing AMD once more. GTX Titan X sees a better than average 41% performance increase over the GTX 980 (owing to its ability to stay at its max boost clock on this benchmark), but it’s not enough to dethrone the Radeon R9 290X. Even though GTX Titan X packs a lot of performance on paper, and can more than deliver it in graphics workloads, compute performance still varies considerably from one workload to the next.

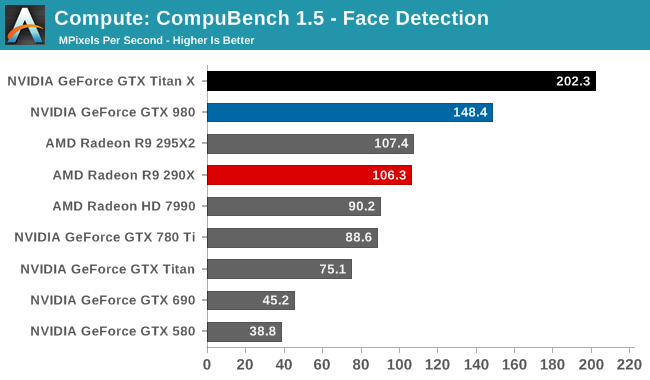

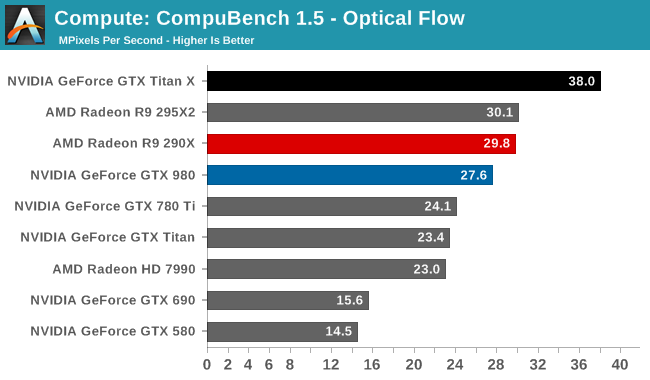

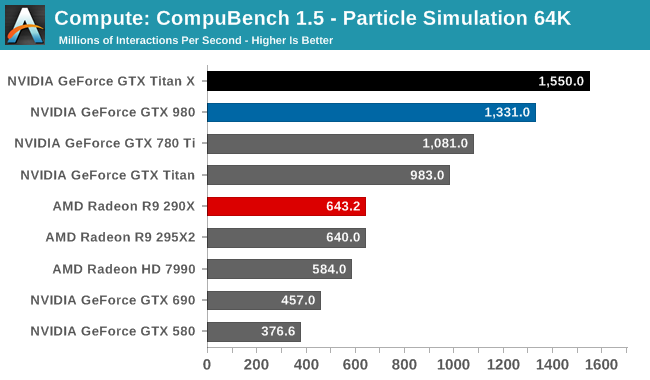

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Although GTX Titan X struggled at LuxMark, the same cannot be said for CompuBench. Though the lead varies with the specific sub-benchmark, in every case the latest Titan comes out on top. Face detection in particular shows some massive gains, with GTX Titan X more than doubling the GK110 based GTX 780 Ti's performance.

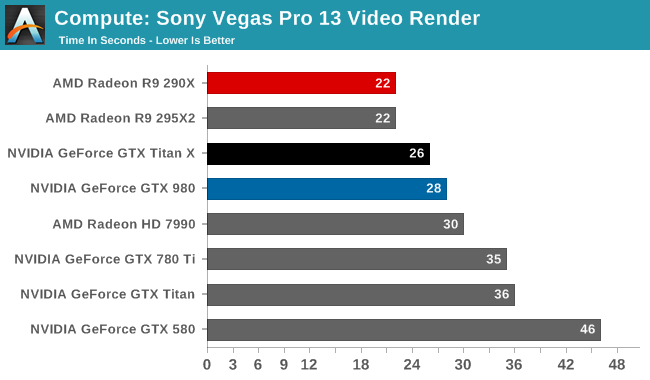

Our 3rd compute benchmark is Sony Vegas Pro 13, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and in the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

Vegas has traditionally been a benchmark that favors AMD, and GTX Titan X closes the gap somewhat. But it's still not enough to surpass the R9 290X.

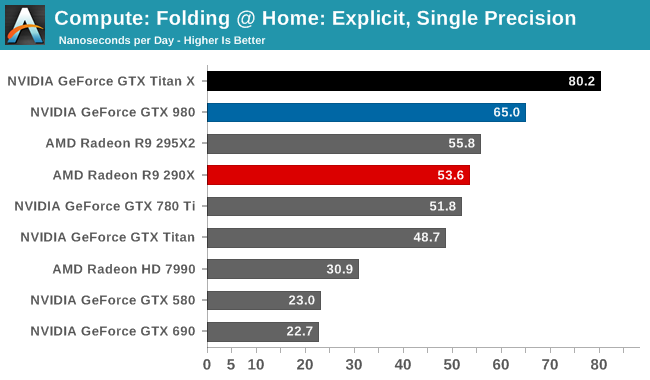

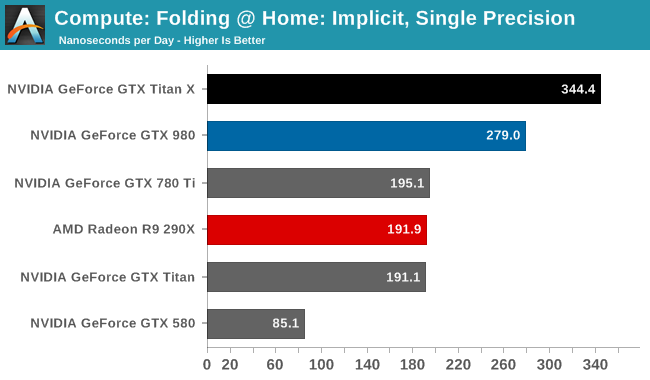

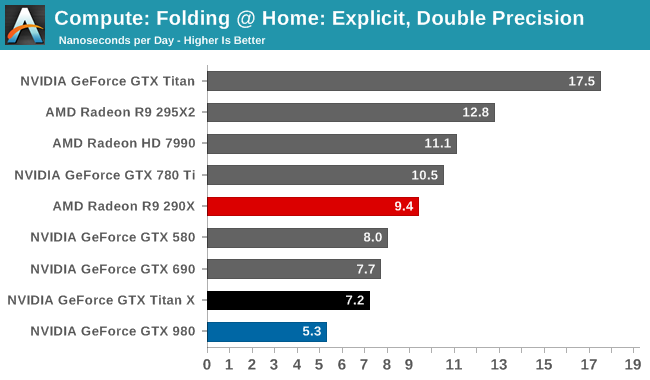

Moving on, our 4th compute benchmark is FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 17.

Folding @ Home’s single precision tests reiterate just how powerful GTX Titan X can be at FP32 workloads, even if it’s ostensibly a graphics GPU. With a 50-75% lead over the GTX 780 Ti, the GTX Titan X showcases some of the remarkable efficiency improvements that the Maxwell GPU architecture can offer in compute scenarios, and in the process shoots well past the AMD Radeon cards.

On the other hand, with a native FP64 rate of 1/32, the GTX Titan X flounders at double precision. There is no better example of just how much the GTX Titan X and the original GTX Titan differ in their FP64 capabilities than this graph; the GTX Titan X can’t beat the GTX 580, never mind the chart-topping original GTX Titan. Users looking for an entry level FP64 card would be well advised to stick with the GTX Titan Black for now. The new Titan is not the prosumer compute card that the old Titan was.
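As a rough sketch of what a 1/32 FP64 rate means on paper, the snippet below estimates theoretical peak throughput from core count and clock speed. The core count (3072) and base clock (1000MHz) used here are assumptions drawn from NVIDIA's published GTX Titan X specifications rather than from this page, and `peak_gflops` is a hypothetical helper written for illustration only. Real-world throughput depends on the clocks a card actually sustains under load.

```python
def peak_gflops(cores: int, clock_mhz: float, fp64_ratio: float = 1.0) -> float:
    """Theoretical peak GFLOPS, counting one fused multiply-add as 2 FLOPs.

    fp64_ratio scales the FP32 figure down to the card's native FP64 rate
    (1.0 leaves the result as the FP32 peak).
    """
    fp32_gflops = 2 * cores * clock_mhz / 1000.0
    return fp32_gflops * fp64_ratio


# Assumed specifications (not stated in the text above).
TITAN_X_CORES = 3072
TITAN_X_BASE_MHZ = 1000.0

titan_x_fp32 = peak_gflops(TITAN_X_CORES, TITAN_X_BASE_MHZ)
titan_x_fp64 = peak_gflops(TITAN_X_CORES, TITAN_X_BASE_MHZ, fp64_ratio=1 / 32)

print(f"FP32 peak: {titan_x_fp32:.0f} GFLOPS")
print(f"FP64 peak: {titan_x_fp64:.0f} GFLOPS")
```

Under those assumptions the FP64 figure works out to a small fraction of the card's single precision peak, which is consistent with the gap between the two FAHBench results.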

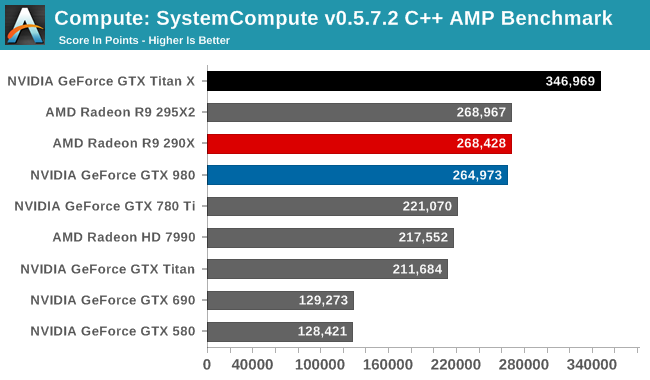

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

With the GTX 980 already performing well here, the GTX Titan X takes it home, improving on the GTX 980 by 31%. Whereas GTX 980 could only hold even with the Radeon R9 290X, the GTX Titan X takes a clear lead.

Overall then the new GTX Titan X can still be a force to be reckoned with in compute scenarios, but only when the workloads are FP32. Users accustomed to the original GTX Titan’s FP64 performance on the other hand will find that this is a very different card, one that doesn’t live up to the same standards.

276 Comments

Kevin G - Wednesday, March 18, 2015

There was indeed a bigger chip due closer to the GK104/GTX 680's launch: the GK100. However it was cancelled due to bugs in the design. A fixed revision eventually became the GK110 which was ultimately released as the Titan/GTX 780.

After that there have been two more revisions. The GK110B is a quick respin which all fully enabled dies stem from (Titan Black/GTX 780 Ti). Then late last year nVidia surprised everyone with the GK210 which has a handful of minor architectural improvements (larger register files etc.).

The moral of the story is that building large dies is hard and takes lots of time to get right.

chizow - Monday, March 23, 2015

We don't know what happened to GK100. It is certainly possible, as I've guessed aloud numerous times, that AMD's 7970 and overall lackluster pricing/performance afforded Nvidia the opportunity to scrap GK100 and respin it to GK110 while trotting GK104 out as its flagship, because it was close enough to AMD's best and GK100 may have had problems as you described. All of that led to considerable doubt whether or not we would see a big Kepler, a sentiment that was even dishonestly echoed by some Nvidia employees I got into it with on their forums.

Only in October 2012 did we see signs of Big Kepler in the Titan supercomputer with K20X, but still no sign of a GeForce card. There's no doubt that a big die takes time, but Nvidia had always led with their big chip first since G80, and this was the first time they deviated from that strategy while parading what was clearly their 2nd best, mid-range performance ASIC as flagship.

Titan X sheds all that nonsense and goes back to their gaming roots. It is their best effort, up front, no BS. 8Bn transistors Inspired by Gamers and Made by Nvidia. So as someone who buys GeForce for gaming first and foremost, I'm going to reward them for those efforts so they keep rewarding me with future cards of this kind. :)

Railgun - Wednesday, March 18, 2015

With regards to the price, 12GB of RAM isn't justification enough for it. Memory isn't THAT expensive in the grand scheme of things. What the Titan was originally isn't what the Titan X is now. They can't be seen as the same lineage. If you want to say memory is the key, the original Titan with its 6GB could be seen as more than still relevant today. Crysis is 45% faster in 4K with the X than the original. Is that the chip itself or memory helping? I vote the former given the 690 is 30% faster in 4K with the same game than the original Titan, with only 4GB total memory. VRAM isn't going to really be relevant for a bit other than those that are running stupidly large spans. It's a shame as Ryan touches on VRAM usage in Middle Earth, but doesn't actually indicate what's being used. There too, the 780Ti beats the original Titan sans huge VRAM reserves. Granted, barely, but the point is that VRAM isn't the reason. This won't be relevant for a bit I think.

You can't compare an aftermarket price to how an OEM prices their products. The top tier card other than the TiX is the 980, and it has been mentioned ad nauseam that the TiX is NOT worth 80% more given its performance. If EVGA wants to OC a card out of their shop and charge 45% more than a stock clock card, then buyer beware if it's not a 45% gain in performance. I for one don't see the benefit of a card like that. The convenience isn't there given the tools and community support for OCing something oneself.

I too game on 25x14 and there've been zero issues regarding VRAM, or the lack thereof.

chizow - Monday, March 23, 2015

I didn't say VRAM was the only reason, I said it was one of the reasons. The bigger reason for me is that it is the FULL BOAT GM200 front and center. No waiting. No cut cores. No cut SMs for compute. No cut down part because of TDP. It's 100% of it up front, 100% of it for gaming. I'm sold and onboard until Pascal. That really is the key factor: who wants to wait for unknown commodities and timelines if you know this will set you within +/-10% of the next fastest part's performance if you can guarantee you get it today for maybe a 25-30% premium? I guess it really depends on how much you value your current and near-future gaming experience. I knew from the day I got my ROG Swift (with 2x670 SLI) I would need more to drive it. 980 was a bit of a sidegrade in absolute performance and I still knew I needed more perf, and now I have it with Titan X.

As for VRAM, 12GB is certainly overkill today, but I'd say 6GB isn't going to be enough soon enough. Games are already pushing 4GB (SoM, FC4, AC:U) and that's still with last-gen type textures. Once you start getting console ports with PC texture packs I could see 6 and 8GB being pushed quite easily, as that is the target framebuffer for consoles (2+6). So yes, while 12GB may be too much, 6GB probably isn't enough, especially once you start looking at 4K and Surround.

Again, if you don't think the price is worth it over a 980 that's fine and fair, but the reality of it is, if you want better single-GPU performance there is no alternative. A 2nd 980 for SLI is certainly an option, but for my purposes and my resolution, I would prefer to stick to a single-card solution if possible, which is why I went with a Titan X and will be selling my 980 instead of picking up a 2nd one as I originally intended.

Best part about Titan X is it gives another choice and a target level of performance for everyone else!

Frenetic Pony - Tuesday, March 17, 2015

They could've halved the RAM, dropped the price by $200, and done a lot better without much, if any, performance hit.

Denithor - Wednesday, March 18, 2015

LOL. You just described the GTX 980 Ti, which will likely launch within a few months to answer the 390X.

chizow - Wednesday, March 18, 2015

@Frenetic Pony, maybe now, but what about once DX12 drops and games are pushing over 6GB? We already see games saturating 4GB, and we still haven't seen next-gen engine games like UE4. Why compromise for a few hundred less? You haven't seen all the complaints from 780Ti users about how 3GB isn't enough anymore? Shouldn't be a problem for this card, which is just 1 less thing to worry about.

LukaP - Thursday, March 19, 2015

Games don't push 4GB... Check the LTT Ultrawide video, where he barely got Shadow of Mordor on ultra to go past 4GB on 3 ultrawide 1440p screens.

And as a game dev I can tell you, with proper optimisations, more than 4GB is insane on a GPU, unless you just load stuff in with a predictive algorithm to avoid PCIe bottlenecks.

And please do show me where a 780Ti user isn't happy with his card's performance at 1080-1600p. Because the card does, and will continue to, perform great at those resolutions, since games won't really advance, due to consoles limiting again.

LukaP - Thursday, March 19, 2015

Also, DX12 won't make games magically use more VRAM. All it really does is make the CPU and GPU communicate better. It won't magically make games run or look better. Both of those are up to the devs, and the look better part is certainly not the textures or polycounts. It's merely the amount of draw calls per frame going up, meaning more UNIQUE objects. (As opposed to simply more objects, which can be achieved through instancing easily in any modern engine, but Ubisoft haven't learned that yet.)

chizow - Monday, March 23, 2015

DX12 raises the bar for all games by enabling better visuals, so you're going to get better top-end visuals across the board. Certainly you don't think UE4 when it debuts will have the same reqs as DX11 based games on UE3?

Even if you have the same size textures as before (2K or 4K assets, as is common now), the fact that you are drawing more polygons, enabled by DX12's lower overhead and higher draw call/poly capabilities, means they need to be textured, meaning a higher VRAM requirement unless you are using the same textures over and over again.

Also, since you are a game dev, you would also know Devs are going more and more towards bindless or megatextures that specifically make great use of textures staying resident in local VRAM for faster accesses, rather than having to optimize and cache/load/discharge them.