The OCZ Vector 180 (240GB, 480GB & 960GB) SSD Review

by Kristian Vättö on March 24, 2015 2:00 PM EST

AnandTech Storage Bench - Heavy

While The Destroyer focuses on sustained and worst-case performance by hammering the drive with nearly 1TB worth of writes, the Heavy trace provides a more typical enthusiast and power user workload. By writing less to the drive, the Heavy trace doesn't drive the SSD into steady-state and thus the trace gives us a good idea of peak performance combined with some basic garbage collection routines.

AnandTech Storage Bench - Heavy

| Workload | Description | Applications Used |
|---|---|---|
| Photo Editing | Import images, edit, export | Adobe Photoshop |
| Gaming | Play games, load levels | Starcraft II, World of Warcraft |
| Content Creation | HTML editing | Dreamweaver |
| General Productivity | Browse the web, manage local email, document creation, application install, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Application Development | Compile Chromium | Visual Studio 2008 |

The Heavy trace drops virtualization from the equation and goes a bit lighter on photo editing and gaming, making it more relevant to the majority of end-users.

AnandTech Storage Bench - Heavy - Specs

| Metric | Value |
|---|---|
| Reads | 2.17 million |
| Writes | 1.78 million |
| Total IO Operations | 3.99 million |
| Total GB Read | 48.63 GB |
| Total GB Written | 106.32 GB |
| Average Queue Depth | ~4.6 |
| Focus | Peak IO, basic GC routines |

The Heavy trace is actually more write-centric than The Destroyer is. A part of that is explained by the lack of virtualization because operating systems tend to be read-intensive, be that a local or virtual system. The total number of IOs is less than 10% of The Destroyer's IOs, so the Heavy trace is much easier for the drive and doesn't even overwrite the drive once.
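As a rough check on the "write-centric" claim, the figures in the spec table can be turned into read/write shares. This is only a sketch: the constant names are made up for illustration, and it assumes that "GB" means 10^9 bytes and that the smallest drive tested offers 240GB of user capacity.

```python
# Figures copied from the Heavy trace spec table above.
READ_OPS = 2.17e6
WRITE_OPS = 1.78e6
GB_READ = 48.63
GB_WRITTEN = 106.32
SMALLEST_DRIVE_GB = 240  # assumption: user capacity of the smallest Vector 180


def share(part, total):
    """Return part/total as a percentage."""
    return 100.0 * part / total


# Share of writes by operation count and by data volume.
write_share_by_ops = share(WRITE_OPS, READ_OPS + WRITE_OPS)
write_share_by_volume = share(GB_WRITTEN, GB_READ + GB_WRITTEN)

# Average transfer size in KB, assuming 1 GB = 10**9 bytes and 1 KB = 10**3 bytes.
avg_read_kb = GB_READ * 1e9 / READ_OPS / 1e3
avg_write_kb = GB_WRITTEN * 1e9 / WRITE_OPS / 1e3

# Fraction of the smallest drive that the trace writes in total.
drive_fill = share(GB_WRITTEN, SMALLEST_DRIVE_GB)

print(f"writes by op count: {write_share_by_ops:.1f}%")
print(f"writes by volume:   {write_share_by_volume:.1f}%")
print(f"avg read size:      {avg_read_kb:.1f} KB")
print(f"avg write size:     {avg_write_kb:.1f} KB")
print(f"240GB drive filled: {drive_fill:.1f}%")
```

Under those assumptions, writes are a minority of operations but the majority of data moved, because the average write is considerably larger than the average read. The total written also stays well below the capacity of even the 240GB model.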

AnandTech Storage Bench - Heavy - IO Breakdown

| IO Size | <4KB | 4KB | 8KB | 16KB | 32KB | 64KB | 128KB |
|---|---|---|---|---|---|---|---|
| % of Total | 7.8% | 29.2% | 3.5% | 10.3% | 10.8% | 4.1% | 21.7% |

The Heavy trace has more focus on 16KB and 32KB IO sizes, but more than half of the IOs are still either 4KB or 128KB. About 43% of the IOs are sequential with the rest being slightly more full random than pseudo-random.
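The "more than half" figure can be checked against the IO size breakdown with a few sums. This is a minimal sketch; the dictionary and variable names are illustrative only, and the percentages are copied from the table.

```python
# Percent of total IOs per transfer size, copied from the IO breakdown table.
io_size_share = {
    "<4KB": 7.8,
    "4KB": 29.2,
    "8KB": 3.5,
    "16KB": 10.3,
    "32KB": 10.8,
    "64KB": 4.1,
    "128KB": 21.7,
}

# Combined share of the two dominant sizes.
share_4k_128k = io_size_share["4KB"] + io_size_share["128KB"]

# Combined share of the mid-sized transfers the text calls out.
share_16k_32k = io_size_share["16KB"] + io_size_share["32KB"]

# The listed sizes do not add up to 100%; the remainder is not broken out in the table.
listed_total = sum(io_size_share.values())

print(f"4KB + 128KB:  {share_4k_128k:.1f}%")
print(f"16KB + 32KB:  {share_16k_32k:.1f}%")
print(f"listed total: {listed_total:.1f}%")
```

The 4KB and 128KB transfers together come to just over half of all IOs, which matches the text. The sizes listed in the table account for a little under 90% of the total.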

AnandTech Storage Bench - Heavy - QD Breakdown

| Queue Depth | 1 | 2 | 3 | 4-5 | 6-10 | 11-20 | 21-32 | >32 |
|---|---|---|---|---|---|---|---|---|
| % of Total | 63.5% | 10.4% | 5.1% | 5.0% | 6.4% | 6.0% | 3.2% | 0.3% |

In terms of queue depths, the Heavy trace is even more focused on very low queue depths, with about three-fourths of the IOs happening at a queue depth of one or two.
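A running total over the queue depth buckets shows how quickly the distribution saturates. This is a sketch using only the percentages from the table; the variable names are illustrative.

```python
from itertools import accumulate

# (queue depth bucket, percent of total IOs), copied from the QD breakdown table.
qd_share = [
    ("1", 63.5),
    ("2", 10.4),
    ("3", 5.1),
    ("4-5", 5.0),
    ("6-10", 6.4),
    ("11-20", 6.0),
    ("21-32", 3.2),
    (">32", 0.3),
]

# Running total of the shares, in bucket order.
cumulative = list(accumulate(pct for _, pct in qd_share))

for (bucket, pct), running in zip(qd_share, cumulative):
    print(f"QD {bucket:>5}: {pct:5.1f}%  cumulative {running:5.1f}%")

# Share of IOs issued at a queue depth of one or two.
qd_1_or_2 = cumulative[1]
```

The cumulative share at a queue depth of two is a little under 74%, which is the "three-fourths" mentioned above, and roughly 90% of the IOs are issued at a queue depth of 10 or lower.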

I'm reporting the same performance metrics as in The Destroyer benchmark, but I'm running the drive in both empty and full states. Some manufacturers tend to focus intensively on peak performance on an empty drive, but in reality the drive will always contain some data. Testing the drive in a full state tells us whether it loses performance once it's filled with data.

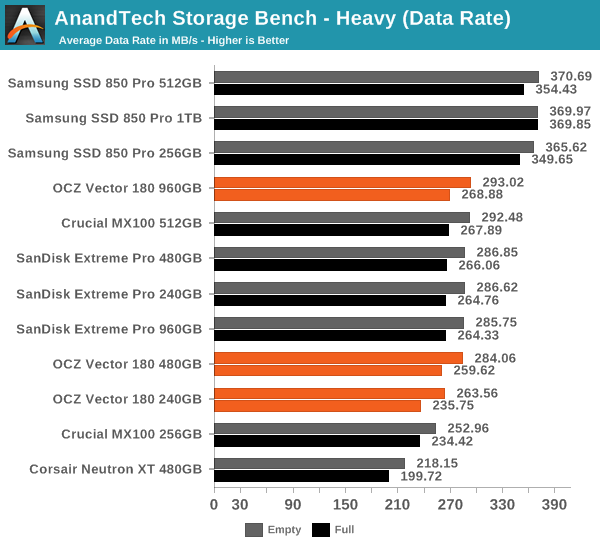

It sure seems like Samsung is the only manufacturer that has figured out a secret recipe to boost throughput with SATA 6Gbps because all the other drives are hitting a wall at ~290MB/s.

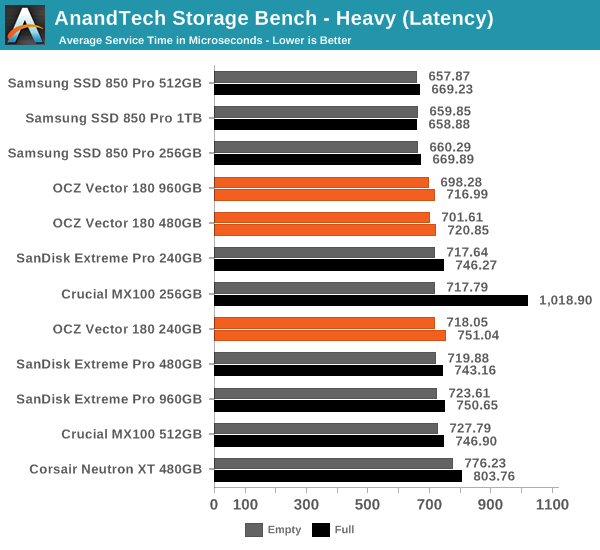

In terms of latency the difference between all drives is much more marginal. The Vector 180 has a small advantage over the Extreme Pro at larger capacities, although once again the 850 Pro tops the charts.

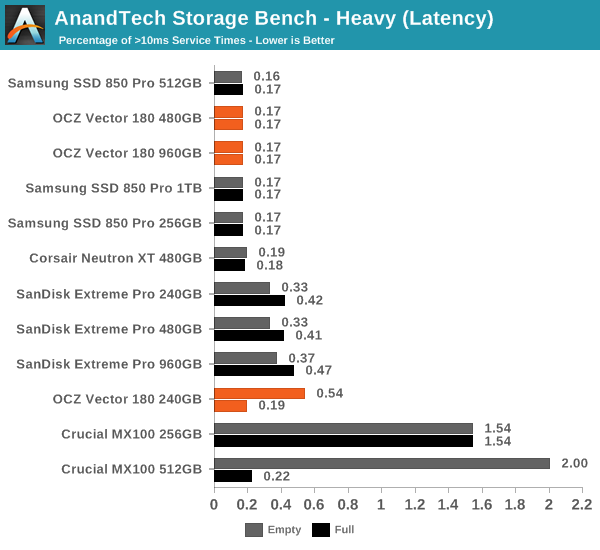

The Vector 180 is also very consistent with only a small number of >10ms IOs. Oddly enough, the 240GB model does better when it's full, although I think that might just be an anomaly since it makes practically no sense.

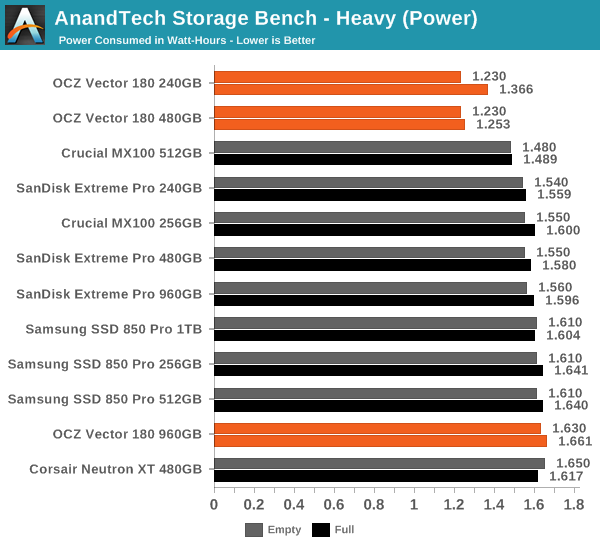

Similar to what we saw in The Destroyer benchmark, the 240GB and 480GB Vector 180 have wonderful load power characteristics, and the difference from other drives is actually fairly significant.

89 Comments

nathanddrews - Tuesday, March 24, 2015 - link

This exactly. LOL

Samus - Wednesday, March 25, 2015 - link

Isn't it a crime to put Samsung and support in the same sentence? That company's Achilles heel is complete lack of support. Look at all the people with Galaxy S3s and smart TVs that were left out to dry the moment next gen models came out. And on a polarizingly opposite end of the spectrum is Apple who still supports the nearly 4 year old iPhone 4S. I'm no Apple fan but that is commendable and something all companies should pay attention to. Customer support pays off.

Oxford Guy - Wednesday, March 25, 2015 - link

Apple did a shit job with the white Core Duo iMacs which all develop bad pixel lines. We had fourteen in a lab and all of them developed the problem. Apple also dropped the ball on people with the 8600 GT and similar Nvidia GPUs in their MacBook Pros by refusing to replace the defective GPUs with anything other than new defective GPUs. Both, as far as I know, caused class-action lawsuits.

Oxford Guy - Wednesday, March 25, 2015 - link

I forgot to mention that not only did Apple not actually fix the problem with those bad GPUs, customers would have to jump through a bunch of hoops like bringing their machines to an Apple Store so someone there could decide if they qualify or not for a replacement defective GPU.

matt.vanmater - Tuesday, March 24, 2015 - link

I am curious, does the drive return a write IO as complete as soon as it is stored in the DRAM? If so, this drive could be fantastic to use as a ZFS ZIL.

Think of it this way: you partition it so the size does not exceed the DRAM size (e.g. 512MB), and use that partition as ZIL. The small partition size guarantees that any writes to the drive fit in DRAM, and the PFM guarantees there is no loss. This is similar in concept to short-stroking hard drives with a spinning platter.

For those of you that don't know, ZFS performance is significantly enhanced by the existence of a ZIL device with very low latency (and DRAM on board this drive should fit that bill). A fast ZIL is particularly important for people who use NFS as a datastore for VMware. This is because VMware forces NFS to sync write IOs, even if your ZFS config is to not require sync. This device may or may not perform as well as a DDRDRIVE (ddrdrive.com) but it comes in at about 1/20th the price so it is a very promising idea!

ocztosh -- has your team considered the use of this device as a ZFS array ZIL device like I describe above?

Kristian Vättö - Tuesday, March 24, 2015 - link

PFM+ is limited to protecting the NAND mapping table, so any user data will still be lost in case of a sudden power loss. Hence the Vector 180 isn't really suitable for the scenario you described.

matt.vanmater - Wednesday, March 25, 2015 - link

OK good to know. To be honest though, what matters more in this scenario (for me) is if the device returns a write IO as successful immediately when it is stored in DRAM, or if it waits until it is stored in flash.

As nils_ mentions below, a UPS is another way of partially mitigating a power failure. In my case, the battery backup is a nice to have rather than a must have.

matt.vanmater - Tuesday, March 24, 2015 - link

One minor addition... OCZ was clearly thinking about ZFS ZIL devices when they announced prototype devices called "Aeon" about 2 years ago. They even blogged about this use case: http://eblog.ocz.com/ssd-powered-clouds-times-chan...

Unfortunately OCZ never brought these drives to market (I wish they did!) so we're stuck waiting for a consumer DRAM device that isn't 10+ year old technology or $2k+ in price tag.

nils_ - Wednesday, March 25, 2015 - link

Something like the PMC Flashtec devices? Those are boards with 4-16GiB of DRAM backed by the same size of flash chips and capacitors with an NVMe interface. If the system loses power the DRAM is flushed to flash and restored when the power is back on. This is great for things like ZIL, journals, doublewrite buffer (like in MySQL/MariaDB), ceph journals etc.

And before it comes up, a UPS can fail too (I've seen it happen more often than I'd like to count).

matt.vanmater - Wednesday, March 25, 2015 - link

I saw those PMC Flashtec devices as well and they look promising, but I don't see any for sale yet. Hopefully they don't become vaporware like OCZ Aeon drives.

Also, in my opinion I prefer a SATAIII or SAS interface over PCI-e, because (in theory) a SATA/SAS device will work in almost any motherboard on any Operating System without special drivers, whereas PCI-e devices will need special device drivers for each OS. Obviously, waiting for drivers to be created limits which systems a device can be used in.

True, PCI-e will definitely have greater throughput than SATA/SAS, but the ZFS ZIL use case needs very low latency and NOT necessarily high throughput. I haven't seen any data indicating that PCI-e is any better/worse on IO latency than SATA/SAS.