The OCZ Vector 180 (240GB, 480GB & 960GB) SSD Review

by Kristian Vättö on March 24, 2015 2:00 PM EST. Posted in Storage, SSDs, OCZ, Barefoot 3, Vector 180

Performance Consistency

We've been looking at performance consistency since the Intel SSD DC S3700 review in late 2012, and it has become one of the cornerstones of our SSD reviews. Back in the day, many SSD vendors focused only on high peak performance, which unfortunately came at the cost of sustained performance. In other words, the drives would push high IOPS in certain synthetic scenarios to provide nice marketing numbers, but as soon as you pushed a drive for more than a few minutes, you could easily run into hiccups caused by poor performance consistency.

Once we started exploring IO consistency, nearly all SSD manufacturers moved to improve it. For the 2015 suite, I haven't made any significant changes to the methodology we use to test IO consistency. The biggest change is the move from VDBench to Iometer 1.1.0 as the benchmarking software, and I've also extended the test from 2000 seconds to a full hour to ensure that all drives reach steady state during the test.
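The review doesn't spell out a numeric test for "steady state". Purely as an illustration, the sketch below borrows the style of criterion used in SNIA's Solid State Storage Performance Test Specification: across a measurement window of per-round average IOPS, the range of the values must stay within 20% of the window average, and the best-fit line must change by no more than 10% of that average across the window. The function name, the default thresholds and the idea of feeding it per-round averages are assumptions, not the review's method.

```python
from statistics import mean


def is_steady_state(window_avgs, range_limit=0.20, slope_limit=0.10):
    """Hypothetical helper: SNIA PTS-style steady-state check (not the review's method).

    window_avgs holds one average IOPS value per round in the measurement window.
    """
    n = len(window_avgs)
    if n < 2:
        raise ValueError("need at least two rounds")
    avg = mean(window_avgs)

    # Data excursion: max - min must stay within range_limit of the average.
    if max(window_avgs) - min(window_avgs) > range_limit * avg:
        return False

    # Least-squares slope of IOPS against round index.
    xs = list(range(n))
    x_bar = mean(xs)
    num = sum((x - x_bar) * (y - avg) for x, y in zip(xs, window_avgs))
    den = sum((x - x_bar) ** 2 for x in xs)
    slope = num / den

    # Slope excursion: rise of the fitted line across the whole window.
    return abs(slope * (n - 1)) <= slope_limit * avg


# Hypothetical per-round averages, not measured data.
print(is_steady_state([30500, 31200, 30800, 31000, 30700]))  # True
print(is_steady_state([60000, 45000, 35000, 30000, 28000]))  # False
```

The second series is the shape of a drive still falling off its fresh-out-of-box peak, which is why a longer test helps ensure every drive is measured only after that decline has flattened out.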

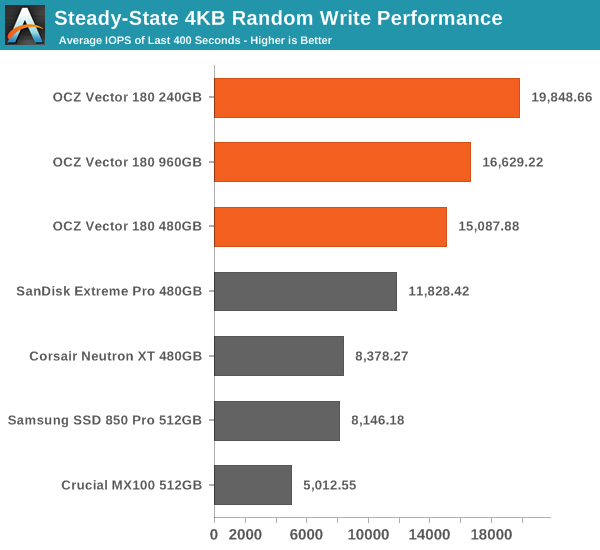

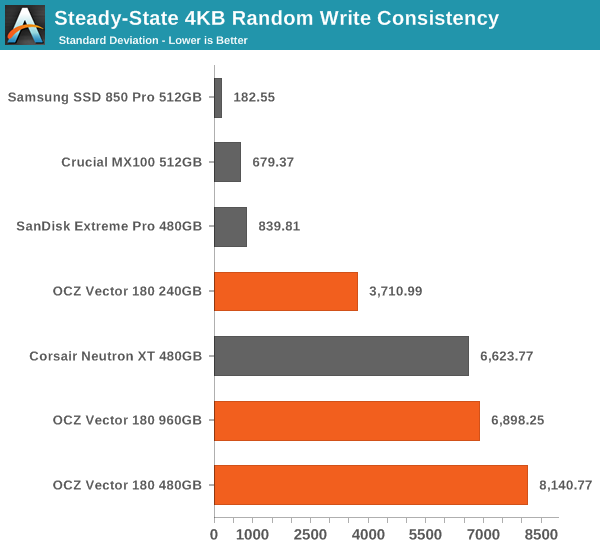

For better readability, I now provide bar graphs: the first shows the average IOPS over the last 400 seconds, and the second shows the standard deviation over the same period. Average IOPS gives a quick look at overall performance, but it can easily hide bad consistency, so the standard deviation is needed for a complete picture of consistency.
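A minimal Python sketch of the two bar-graph metrics, assuming the run is logged as one IOPS value per second, so the final 400 samples are the last 400 seconds of the hour-long test. The review doesn't say whether it uses the population or sample standard deviation; this sketch uses the population form (`statistics.pstdev`), and `steady_state_stats` is a hypothetical name.

```python
from statistics import mean, pstdev


def steady_state_stats(iops_per_second, window_s=400):
    """Return (average IOPS, population std. dev.) over the last window_s samples.

    Assumes one IOPS sample per second of the test.
    """
    if len(iops_per_second) < window_s:
        raise ValueError("trace is shorter than the analysis window")
    tail = iops_per_second[-window_s:]
    return mean(tail), pstdev(tail)


# Two made-up one-hour traces with the same 20,000 IOPS steady-state average.
steady = [20000] * 3600
erratic = [40000, 0] * 1800
print(steady_state_stats(steady))
print(steady_state_stats(erratic))
```

Both traces report the same average, but only the standard deviation exposes that the second one alternates between 40,000 IOPS and a complete stall.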

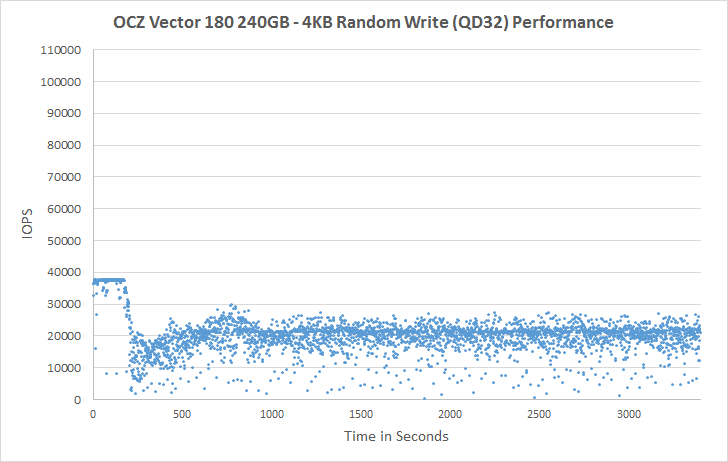

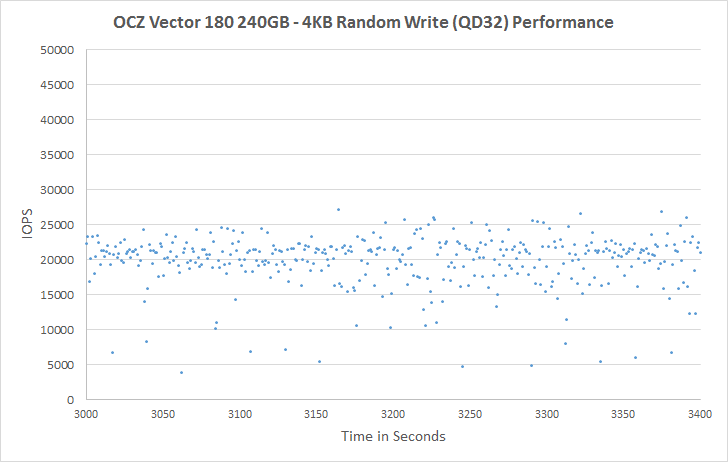

I'm still providing the same scatter graphs too, of course. However, I decided to drop the logarithmic graphs and go linear-only, since logarithmic graphs aren't as accurate and can be hard to interpret for readers who aren't familiar with them. I provide two graphs: one that covers the whole duration of the test and another that focuses on the last 400 seconds for a closer look at steady-state performance.

Barefoot 3 has always done well in steady-state performance, and the Vector 180 is no exception. It delivers the highest average IOPS by far, and the advantage is significant: roughly 2x that of the other drives.

On the downside, the Vector 180 also has the highest variation in performance. The 850 Pro, MX100 and Extreme Pro are all slower in terms of average IOPS, but they are a lot more consistent, and what's notable about the Vector 180 is that its consistency decreases as capacity goes up.
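Because the drives' averages differ by about 2x, their raw standard deviations aren't directly comparable: a faster drive can show a larger deviation partly because it runs at higher IOPS. One common normalization, which the review itself does not use, is the coefficient of variation (standard deviation divided by mean). A minimal sketch with made-up traces; the function name is hypothetical.

```python
from statistics import mean, pstdev


def coefficient_of_variation(samples):
    """Population standard deviation divided by the mean; lower means more consistent."""
    avg = mean(samples)
    if avg == 0:
        raise ValueError("mean IOPS is zero; the ratio is undefined")
    return pstdev(samples) / avg


# Hypothetical 400-second traces, not measured data.
fast_but_erratic = [60000, 0] * 200     # mean 30000, std. dev. 30000
slow_but_steady = [14000, 16000] * 200  # mean 15000, std. dev. 1000
print(coefficient_of_variation(fast_but_erratic))
print(coefficient_of_variation(slow_but_steady))
```

The first trace has twice the average of the second but a ratio of 1.0 against roughly 0.07, which is the "faster on average, far less consistent" pattern described above expressed as a single number.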

[Scatter graphs (full test duration): Default | 25% Over-Provisioning]

Looking at the scatter graph reveals the source of the poor consistency: IOPS drop to zero or near zero even before the drive reaches any kind of steady state. This is known behavior of the Barefoot 3 platform, but what's alarming is how frequently the 480GB and 960GB drives drop to zero IOPS. I don't find that acceptable for a modern high-end SSD, no matter how good the average IOPS is. Increasing the over-provisioning helps a bit by shifting the dots up, but it's still clear that 240GB is the optimal capacity for Barefoot 3, because above that the platform starts to run into consistency issues due to metadata handling.
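To put a number on how often a drive stalls, one could count the per-second samples at or below a threshold, along with the longest consecutive run of them. This is a hypothetical helper assuming a one-sample-per-second log; the review doesn't define "near zero", so the threshold is a free parameter.

```python
def stall_stats(iops_per_second, threshold=0.0):
    """Hypothetical helper: count samples at or below threshold IOPS.

    Returns (number of stalled samples, longest run of consecutive stalls).
    threshold=0 counts only complete stalls; a small positive value also
    catches near-zero dips. Assumes one sample per second.
    """
    stalls = 0
    longest = current = 0
    for iops in iops_per_second:
        if iops <= threshold:
            stalls += 1
            current += 1
            longest = max(longest, current)
        else:
            current = 0  # a healthy sample ends the current stall run
    return stalls, longest


trace = [30000, 0, 0, 28000, 500, 31000, 0]  # made-up samples
print(stall_stats(trace))                    # (3, 2)
print(stall_stats(trace, threshold=1000))    # (4, 2)
```

Reporting the longest run alongside the count helps separate a drive that briefly touches zero from one that stalls for several seconds at a time.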

[Scatter graphs (last 400 seconds): Default | 25% Over-Provisioning]

89 Comments


nathanddrews - Tuesday, March 24, 2015

This exactly. LOL

Samus - Wednesday, March 25, 2015

Isn't it a crime to put Samsung and support in the same sentence? That company's Achilles' heel is a complete lack of support. Look at all the people with Galaxy S3s and smart TVs who were hung out to dry the moment next-gen models came out. At the polar opposite end of the spectrum is Apple, which still supports the nearly 4-year-old iPhone 4S. I'm no Apple fan, but that is commendable and something all companies should pay attention to. Customer support pays off.

Oxford Guy - Wednesday, March 25, 2015

Apple did a shit job with the white Core Duo iMacs, which all develop bad pixel lines. We had fourteen in a lab and all of them developed the problem. Apple also dropped the ball on people with the 8600 GT and similar Nvidia GPUs in their MacBook Pros by refusing to replace the defective GPUs with anything other than new defective GPUs. Both, as far as I know, led to class-action lawsuits.

Oxford Guy - Wednesday, March 25, 2015

I forgot to mention that not only did Apple not actually fix the problem with those bad GPUs, customers had to jump through a bunch of hoops, like bringing their machines to an Apple Store so someone there could decide whether they qualified for a replacement defective GPU.

matt.vanmater - Tuesday, March 24, 2015

I am curious: does the drive report a write IO as complete as soon as it is stored in DRAM? If so, this drive could be fantastic to use as a ZFS ZIL.

Think of it this way: you partition it so the size does not exceed the DRAM size (e.g. 512MB), and use that partition as ZIL. The small partition size guarantees that any writes to the drive fit in DRAM, and the PFM guarantees there is no loss. This is similar in concept to short-stroking hard drives with a spinning platter.

For those of you who don't know, ZFS performance is significantly enhanced by a ZIL device with very low latency (and the DRAM on board this drive should fit that bill). A fast ZIL is particularly important for people who use NFS as a datastore for VMware, because VMware forces NFS to sync write IOs, even if your ZFS config does not require sync. This device may or may not perform as well as a DDRdrive (ddrdrive.com), but it comes in at about 1/20th the price, so it is a very promising idea!

ocztosh, has your team considered using this device as a ZFS array ZIL device like I describe above?

Kristian Vättö - Tuesday, March 24, 2015

PFM+ is limited to protecting the NAND mapping table, so any user data will still be lost in case of a sudden power loss. Hence the Vector 180 isn't really suitable for the scenario you described.

matt.vanmater - Wednesday, March 25, 2015

OK, good to know. To be honest, though, what matters more in this scenario (for me) is whether the device reports a write IO as successful as soon as it is stored in DRAM, or waits until it is stored in flash. As nils_ mentions below, a UPS is another way of partially mitigating a power failure. In my case, the battery backup is a nice-to-have rather than a must-have.

matt.vanmater - Tuesday, March 24, 2015

One minor addition: OCZ was clearly thinking about ZFS ZIL devices when they announced prototype devices called "Aeon" about two years ago. They even blogged about this use case: http://eblog.ocz.com/ssd-powered-clouds-times-chan...

Unfortunately, OCZ never brought these drives to market (I wish they had!), so we're stuck waiting for a consumer DRAM device that isn't 10+ year-old technology or saddled with a $2k+ price tag.

nils_ - Wednesday, March 25, 2015

Something like the PMC Flashtec devices? Those are boards with 4-16GiB of DRAM, backed by the same amount of flash and by capacitors, with an NVMe interface. If the system loses power, the DRAM is flushed to flash and restored when power comes back. This is great for things like the ZIL, journals, the doublewrite buffer (as in MySQL/MariaDB), Ceph journals, etc. And before it comes up, a UPS can fail too (I've seen it happen more often than I'd like).

matt.vanmater - Wednesday, March 25, 2015

I saw those PMC Flashtec devices as well and they look promising, but I don't see any for sale yet. Hopefully they don't become vaporware like the OCZ Aeon drives. Also, in my opinion, a SATA III or SAS interface is preferable to PCI-e, because (in theory) a SATA/SAS device will work in almost any motherboard on any operating system without special drivers, whereas PCI-e devices need special drivers for each OS. Obviously, waiting for drivers to be written limits which systems a device can be used in.

True, PCI-e will definitely have greater throughput than SATA/SAS, but the ZFS ZIL use case needs very low latency and NOT necessarily high throughput. I haven't seen any data indicating that PCI-e is any better or worse than SATA/SAS on IO latency.
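Neither the review nor the thread includes sync-write latency figures. One rough way to gather some is to time small writes that are each followed by `fsync`, the kind of synchronous write a ZIL exists to absorb. The sketch below is a hypothetical helper, not a calibrated benchmark: it measures through the filesystem, and how far `fsync` pushes the data (OS cache only, or through the drive's own write cache) depends on the OS, filesystem and drive. To test a particular device, point `path` at a file on that device instead of a temporary directory.

```python
import os
import statistics
import tempfile
import time


def sync_write_latencies(path, count=100, block_size=4096):
    """Hypothetical helper: append `count` blocks to `path`, fsyncing after each.

    Returns the latency of each write+fsync pair in microseconds.
    """
    payload = os.urandom(block_size)
    latencies = []
    # O_BINARY only exists on Windows; elsewhere getattr falls back to 0.
    flags = os.O_WRONLY | os.O_CREAT | os.O_TRUNC | getattr(os, "O_BINARY", 0)
    fd = os.open(path, flags, 0o600)
    try:
        for _ in range(count):
            start = time.perf_counter()
            os.write(fd, payload)
            os.fsync(fd)  # ask the OS to push this write to stable storage
            latencies.append((time.perf_counter() - start) * 1e6)
    finally:
        os.close(fd)
    return latencies


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        lat = sync_write_latencies(os.path.join(tmp, "probe.bin"), count=50)
        print(f"median {statistics.median(lat):.0f} us, max {max(lat):.0f} us")
```

Looking at the maximum as well as the median matters here for the same reason it does in the consistency tests above: a single slow acknowledgement stalls every sync writer waiting on it.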