The OCZ Vector 180 (240GB, 480GB & 960GB) SSD Review

by Kristian Vättö on March 24, 2015 2:00 PM EST

AnandTech Storage Bench - Heavy

While The Destroyer focuses on sustained and worst-case performance by hammering the drive with nearly 1TB worth of writes, the Heavy trace provides a more typical enthusiast and power user workload. By writing less to the drive, the Heavy trace doesn't drive the SSD into steady-state and thus the trace gives us a good idea of peak performance combined with some basic garbage collection routines.

AnandTech Storage Bench - Heavy

| Workload | Description | Applications Used |
| --- | --- | --- |
| Photo Editing | Import images, edit, export | Adobe Photoshop |
| Gaming | Play games, load levels | Starcraft II, World of Warcraft |
| Content Creation | HTML editing | Dreamweaver |
| General Productivity | Browse the web, manage local email, document creation, application install, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Application Development | Compile Chromium | Visual Studio 2008 |

The Heavy trace drops virtualization from the equation and goes a bit lighter on photo editing and gaming, making it more relevant to the majority of end-users.

AnandTech Storage Bench - Heavy - Specs

| Metric | Value |
| --- | --- |
| Reads | 2.17 million |
| Writes | 1.78 million |
| Total IO Operations | 3.99 million |
| Total GB Read | 48.63 GB |
| Total GB Written | 106.32 GB |
| Average Queue Depth | ~4.6 |
| Focus | Peak IO, basic GC routines |

The Heavy trace is actually more write-centric than The Destroyer is. A part of that is explained by the lack of virtualization because operating systems tend to be read-intensive, be that a local or virtual system. The total number of IOs is less than 10% of The Destroyer's IOs, so the Heavy trace is much easier for the drive and doesn't even overwrite the drive once.
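As a quick sanity check on the write-centric claim, the spec table figures can be turned into ratios. This is only a sketch in Python: the variable names are my own, and dividing by the nominal 240GB, 480GB and 960GB capacities is an assumption, since the usable capacity of each drive differs slightly.

```python
# Figures copied from the Heavy trace spec table above.
reads_millions = 2.17
writes_millions = 1.78
gb_read = 48.63
gb_written = 106.32

# Share of writes by operation count and by data volume.
write_share_ops = writes_millions / (reads_millions + writes_millions)
write_share_volume = gb_written / (gb_read + gb_written)

# Fraction of each nominal capacity that the trace writes (assumption:
# nominal capacity is used here, not the exact user-addressable space).
capacities_gb = [240, 480, 960]
fill_fraction = {cap: gb_written / cap for cap in capacities_gb}

print(f"writes by op count: {write_share_ops:.1%}")
print(f"writes by volume:   {write_share_volume:.1%}")
for cap, frac in fill_fraction.items():
    print(f"{cap}GB drive: {frac:.1%} of capacity written")
```

Writes are slightly under half of the operations by count but make up roughly two thirds of the data moved, and even the 240GB model sees less than half of its capacity written, which is consistent with the trace not overwriting the drive once.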

AnandTech Storage Bench - Heavy - IO Breakdown

| IO Size | <4KB | 4KB | 8KB | 16KB | 32KB | 64KB | 128KB |
| --- | --- | --- | --- | --- | --- | --- | --- |
| % of Total | 7.8% | 29.2% | 3.5% | 10.3% | 10.8% | 4.1% | 21.7% |

The Heavy trace has more focus on 16KB and 32KB IO sizes, but more than half of the IOs are still either 4KB or 128KB. About 43% of the IOs are sequential with the rest being slightly more full random than pseudo-random.
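The "more than half" figure follows directly from the breakdown table. A minimal Python sketch of the arithmetic is below; the dictionary and variable names are illustrative, and treating the leftover percentage as IO sizes the table does not list is my assumption.

```python
# Share of total IOs per transfer size, copied from the IO breakdown table (percent).
io_size_share = {
    "<4KB": 7.8, "4KB": 29.2, "8KB": 3.5, "16KB": 10.3,
    "32KB": 10.8, "64KB": 4.1, "128KB": 21.7,
}

# The two dominant sizes called out in the text.
dominant = io_size_share["4KB"] + io_size_share["128KB"]

# The mid-sized transfers the Heavy trace emphasizes.
mid_sized = io_size_share["16KB"] + io_size_share["32KB"]

# The listed buckets do not add up to 100%; the remainder is presumably
# IO sizes that the table does not break out (assumption).
unlisted = 100.0 - sum(io_size_share.values())

print(f"4KB + 128KB: {dominant:.1f}%")
print(f"16KB + 32KB: {mid_sized:.1f}%")
print(f"not listed:  {unlisted:.1f}%")
```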

AnandTech Storage Bench - Heavy - QD Breakdown

| Queue Depth | 1 | 2 | 3 | 4-5 | 6-10 | 11-20 | 21-32 | >32 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| % of Total | 63.5% | 10.4% | 5.1% | 5.0% | 6.4% | 6.0% | 3.2% | 0.3% |

In terms of queue depths, the Heavy trace is even more focused on very low queue depths, with roughly three fourths of the IOs happening at a queue depth of one or two.
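The share of IOs at a queue depth of one or two can be read off a running total of the QD breakdown. The Python sketch below uses the table values as-is; the list and variable names are my own.

```python
from itertools import accumulate

# Queue depth buckets and their share of total IOs, copied from the QD breakdown table (percent).
qd_buckets = ["1", "2", "3", "4-5", "6-10", "11-20", "21-32", ">32"]
qd_share = [63.5, 10.4, 5.1, 5.0, 6.4, 6.0, 3.2, 0.3]

# Running total: share of IOs at or below each bucket.
cumulative = list(accumulate(qd_share))

for bucket, share, running in zip(qd_buckets, qd_share, cumulative):
    print(f"QD {bucket:>5}: {share:5.1f}%  (cumulative {running:5.1f}%)")

# Share of IOs issued at a queue depth of one or two.
qd_1_or_2 = cumulative[1]
print(f"QD 1-2 combined: {qd_1_or_2:.1f}%")
```

The combined share lands just under 74%, which is where the "three fourths" figure comes from.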

I'm reporting the same performance metrics as in The Destroyer benchmark, but I'm running the drive in both empty and full states. Some manufacturers tend to focus intensively on peak performance on an empty drive, but in reality the drive will always contain some data. Testing the drive in a full state tells us whether the drive loses performance once it's filled with data.

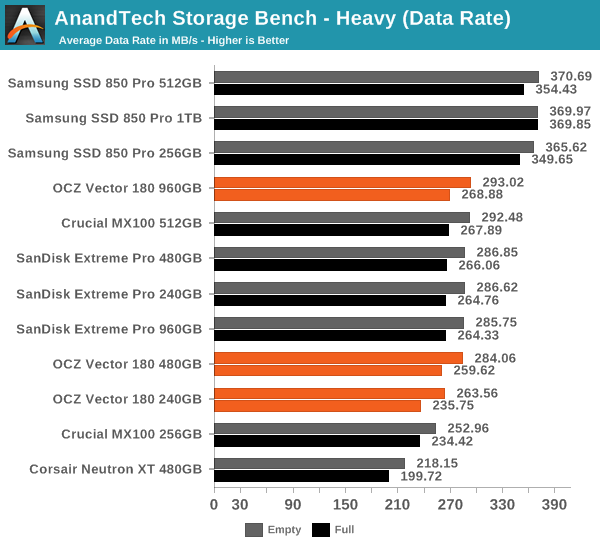

It sure seems like Samsung is the only manufacturer that has figured out a secret recipe to boost throughput with SATA 6Gbps because all the other drives are hitting a wall at ~290MB/s.

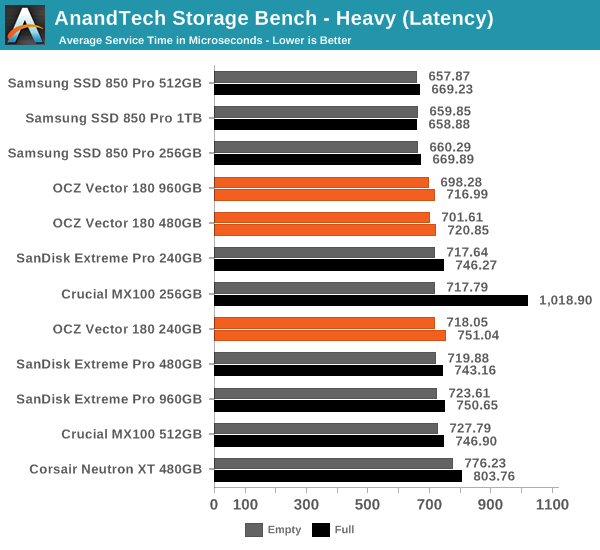

In terms of latency the difference between all drives is much more marginal. The Vector 180 has a small advantage over the Extreme Pro at larger capacities, although once again the 850 Pro tops the charts.

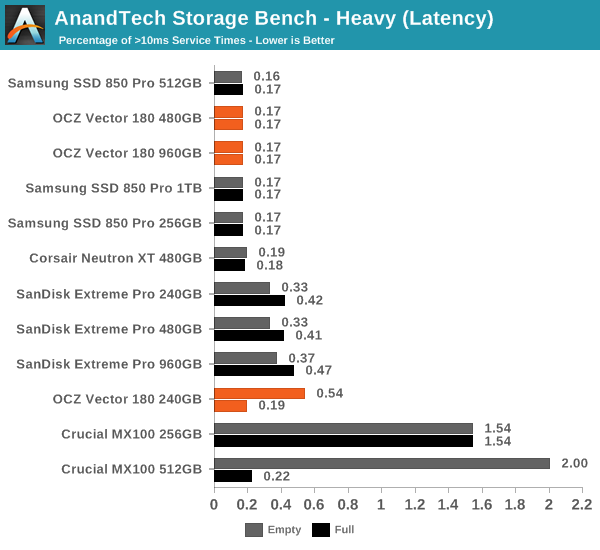

The Vector 180 is also very consistent with only a small number of >10ms IOs. Oddly enough, the 240GB does better when it's full, although I think that might be just an anomaly since it practically makes no sense at all.

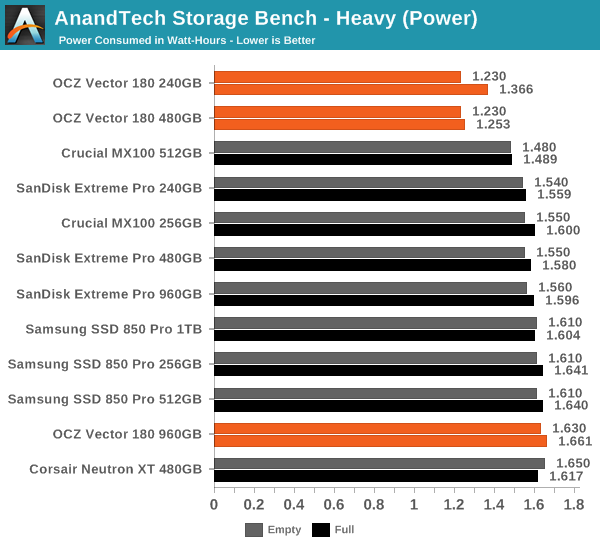

Similar to what we saw in The Destroyer benchmark, the 240GB and 480GB Vector 180 have wonderful load power characteristics, and the difference to other drives is actually fairly significant.

89 Comments


Shark321 - Wednesday, March 25, 2015 - link

Tosh, it's a pity PFM does not work on the internal cache of the drive. You can still get file system damage during a power loss.

AVN6293 - Sunday, December 20, 2015 - link

Does this drive support Opal 2.0 eDrive (FIPS/HIPAA compliance)?

AVN6293 - Sunday, December 20, 2015 - link

...And can the over-provisioning be increased by the user?

ats - Wednesday, March 25, 2015 - link

Actually, all consumer drives need power loss protection and they realistically need it much more than drives targeted at the actual enterprise side of the market. It comes down to simple probabilities. The average enterprise SSD is going to be backed by at least 1 additional layer of power loss prevention (UPS et al), have a robust backup infrastructure, and likely mirroring (offsite) on top.

In contrast, consumer drives are unlikely to have any power loss prevention, unlikely to have anything approaching a backup infrastructure, and highly unlikely to have robust data resiliency (offsite mirroring et al).

So like many others, Anandtech gets it exactly wrong wrt PLP and SSDs. The fact that manufacturers have been able to get away without providing PLP on consumer SSDs is almost criminal. The fact that review sites accept this as perfectly OK is pretty much criminal on their part.

And what should pretty much be a rage storm for consumers is the actual cost of providing PLP on an SSD is literally a couple of $ in capacitors. Not to mention many consumer drives without PLP have enterprise drives using the exact same PCB with PLP. That we as consumers have allowed companies to have PLP as a point of differentiation is to our great detriment, esp when the actual cost of PLP is in the noise even for cheap low capacity SSDs.

If a drive cannot survive a power loss with data integrity then it certainly shouldn't get a recommendation nor should any consumer even consider it.

Shiitaki - Wednesday, March 25, 2015 - link

You do raise some very good points. I think the enterprise still needs it because they want as many ways to protect the data as they can get; after all, it's only a couple of bucks. The consumer would benefit to a greater degree, since the caps in the SSD are likely all they would have. However, the consumer is their own worst enemy: a couple of bucks makes a difference for most consumers.

I've had no issues, and until I read this article, gave no thought to pulling power on a system using an SSD! And I've done it A LOT! Not a single bad block yet! And that is with 6 SSDs in various machines from 4 manufacturers and 8 product lines. Though none of them with Windows, all Linux and OS X.

Sometimes I wonder just how widespread issues really are. On the internet it's hard to tell since it's the angry people doing most of the posting.

In the end though, whether you are a company or an individual, if it isn't backed up, you really don't need it.

trparky - Wednesday, April 29, 2015 - link

I do an image of my system SSD every week and my computer is always plugged into a UPS, and yes, that's my home setup. The power in my area is known to be dirty power, not complete drop-outs, but if you measured the voltage output it would make most electrical engineers shake their heads and smack their foreheads.

zodiacsoulmate - Tuesday, March 24, 2015 - link

also the Acronis 2013 is basically useless since it only runs on windows 7....

ocztosh - Tuesday, March 24, 2015 - link

Hi Zodiacsoulmate, just wanted to confirm that the Vector 180 drives are shipping with Acronis 2014.

DanNeely - Tuesday, March 24, 2015 - link

That's a step in the right direction, but it is still last year's product. Acronis 2015 is already out. Am I overly cynical for thinking Acronis offered the 2014 version at a discount hoping to make it up by convincing some of the SSD buyers to upgrade to the new version after installing?

Samus - Tuesday, March 24, 2015 - link

Acronis TrueImage 2015 is complete shit. Check the Acronis forums: most people (like myself - a paying annual customer since 2010) have gone back to 2014. The most recent update (October) still did not fix issues with image compatibility, GPT partition compatibility (added for 2015) and UEFI boot mapping. Aside from the lingering compatibility, reliability and stability issues, the interface is terrible. They've basically turned it into a backup product for single PC's instead of an imaging product. Even the USB bootable ISO I typically boot off a flash drive for imaging/cloning is inherently unstable and occasionally even corrupts the destination. Nobody has confirmed the "Universal Restore" works for Windows 7, yet another broken feature that worked FINE in 2014.

Acronis lost me as a long-time customer to Miray because 2015 was SO botched and after waiting months for them to fix it, I gave up and had to find a product that could adequately clone UEFI OS's installed on GPT partitions. I use this product almost daily to upgrade PC's to SSD's. Unfortunately Miray's boot environment is a little slower, even with the verification disabled and "fast copy" turned on, likely because it runs a different USB stack.

I don't blame OCZ for sticking with 2014 like every other Acronis licensee has, including Crucial and Intel. 2014 is mature and stable, but it is not the modern solution - especially with Windows 10 around the corner. Acronis will forfeit this market to Miray or in-house solutions like Samsung's Clonemaster if they don't get their act together. It's just astonishing how well Acronis was doing until 2015.