Dell XPS 13 Review

by Brett Howse on February 19, 2015 9:00 AM EST

Posted in: Laptops, Dell, Ultrabook, Broadwell-U, XPS 13

Gaming Performance

Normally on an Ultrabook we would not dedicate an entire page to gaming performance, because the integrated GPUs do not perform very well on our gaming tests. However, with this being our first example of Broadwell-U, it is a good time to revisit this and see how the new graphics capabilities of Broadwell compare to the Haswell processors.

With the Core i5-5200U in both of the XPS 13s that we received, we have 24 execution units, compared to only 20 on Haswell-U. In addition, the 14nm process should help with throttling. The FHD model (1920x1080) arrived with two 2GB memory modules and the QHD+ version came with 2 x 4GB.

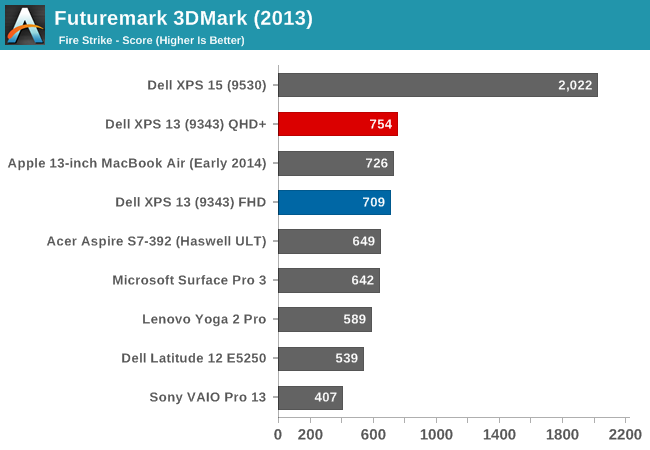

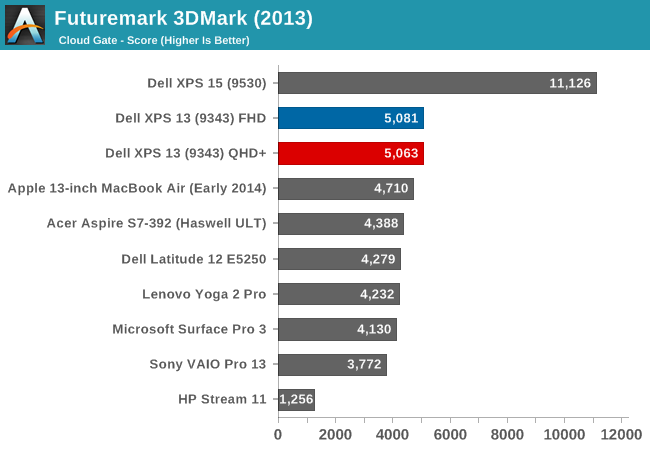

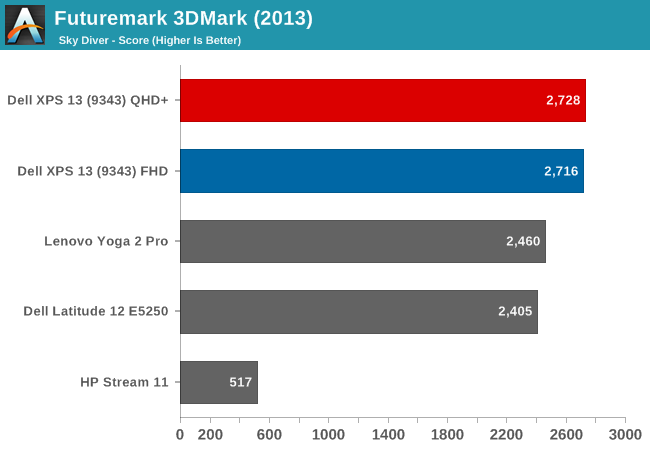

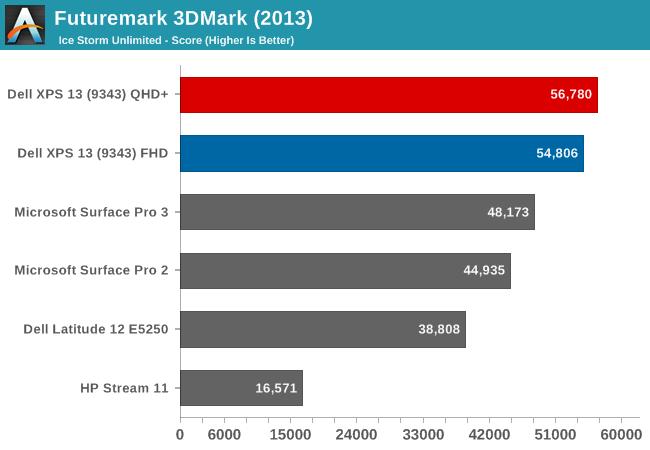

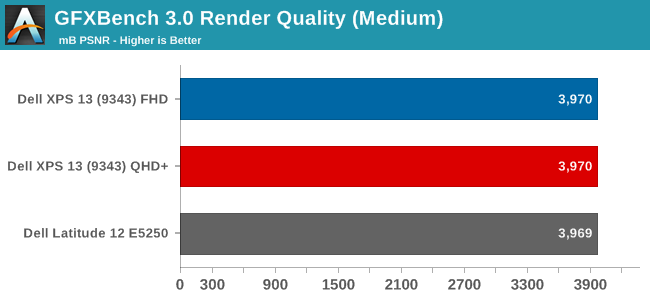

First, let's look at the synthetic benchmarks, starting with 3DMark and then moving on to GFXBench.

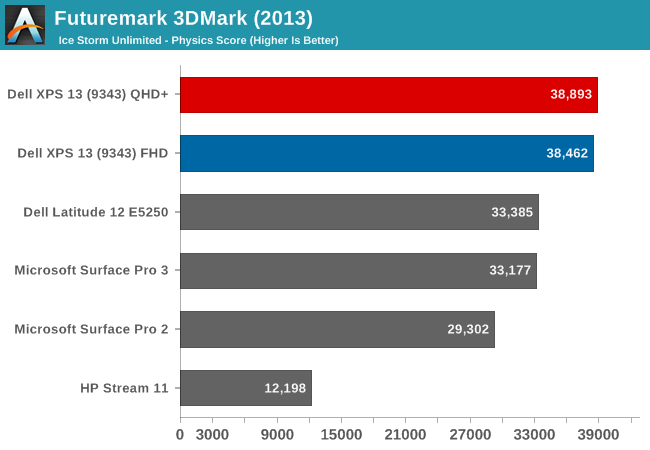

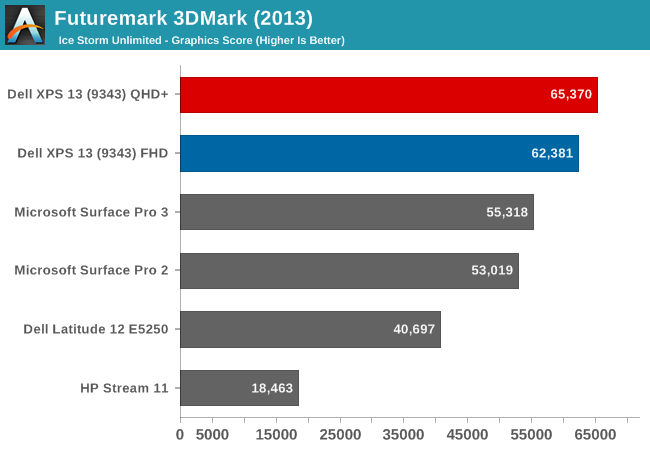

The 3DMark results begin to show the increased GPU performance of the Gen8 graphics. Broadwell-U outperforms all of the Haswell-U parts on all of the tests, and the QHD+ model was a fraction faster in a few tests.

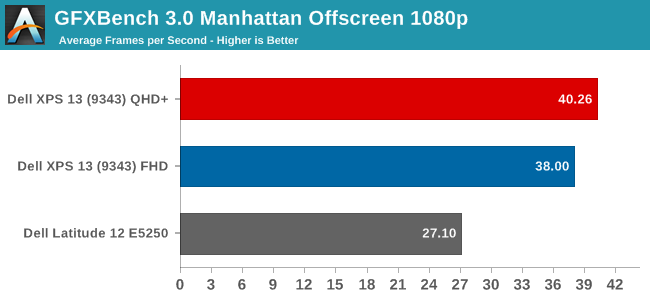

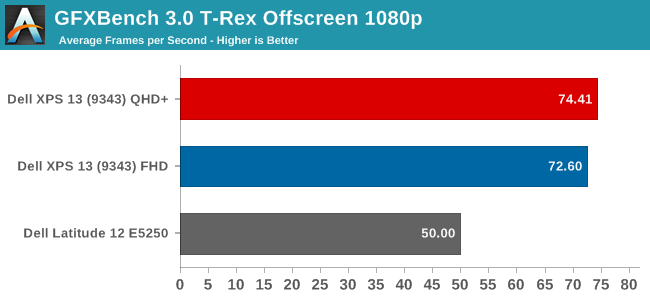

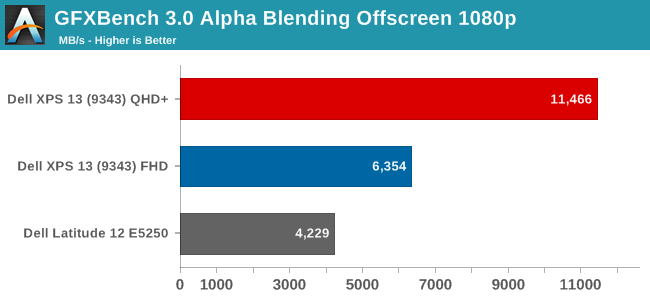

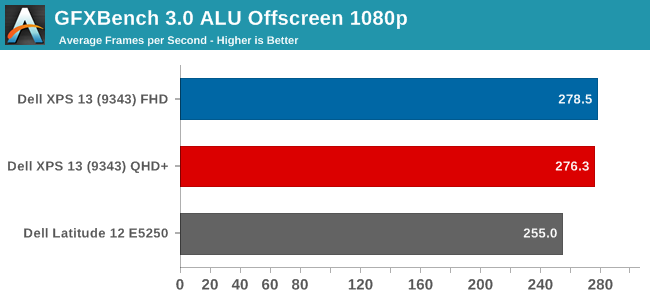

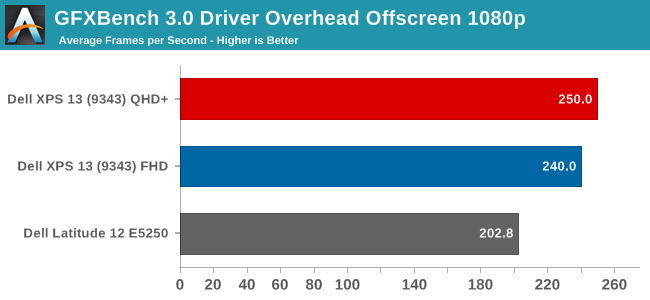

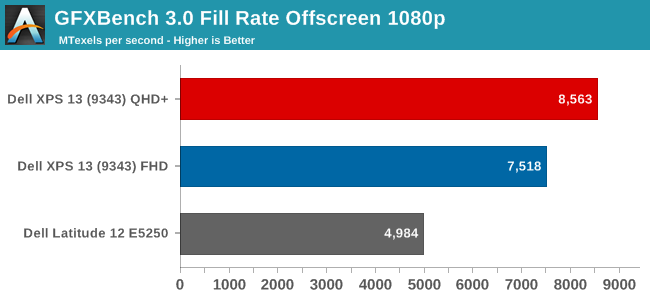

The initial results look pretty good, with the new GPU soundly beating the Haswell-U parts. The HP Stream 11, with just 4 EUs, trails quite far behind. GFXBench is one of our newer benchmark choices for Windows 8, and we will add more data as we get a few more devices to test.

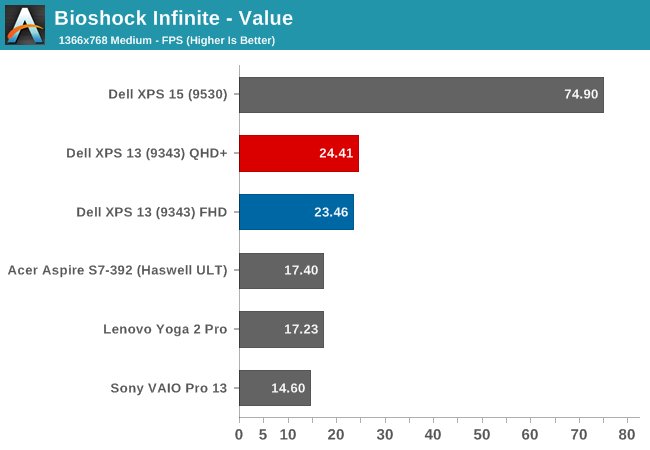

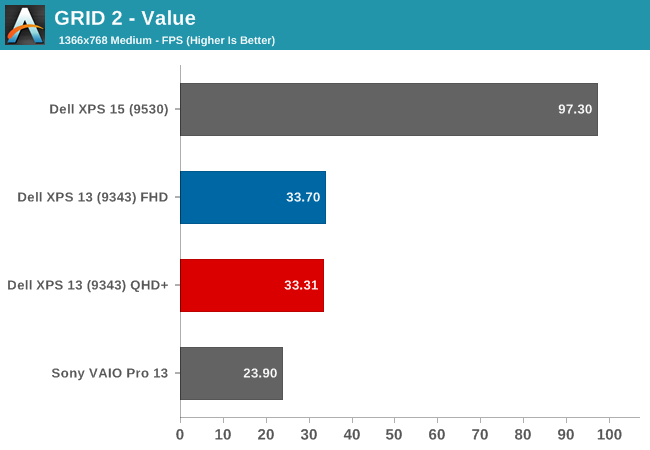

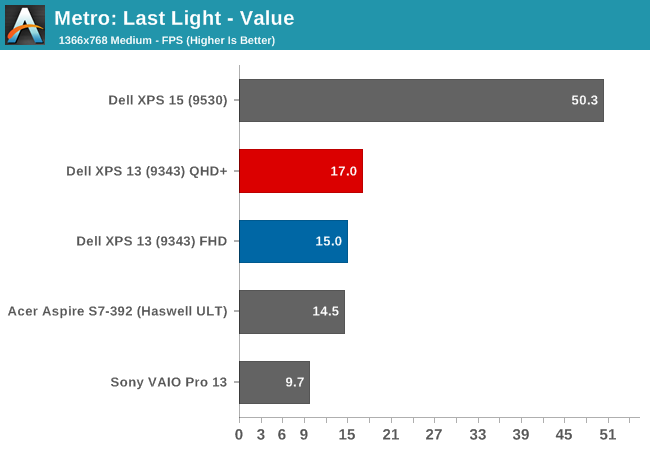

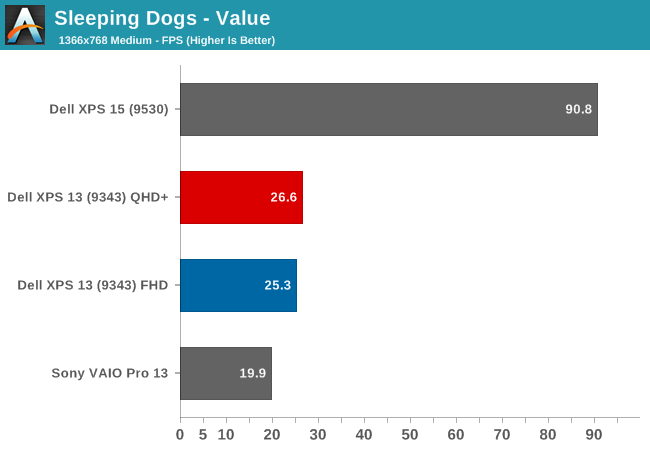

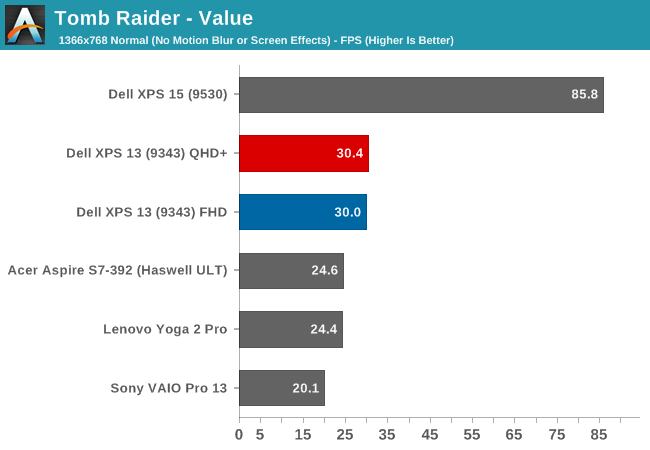

Next, let's look at our gaming benchmarks. Due to the low performance of the integrated GPUs, I just ran our gaming tests at the Value (1366x768 ~Medium) settings.

Here we can see once again that the new GPU is certainly stronger, but it is still not quite enough to make any of these games very playable on our Value settings. The Dell XPS 15, with its discrete GPU, carries a huge lead over the integrated GPU offerings. Still, the new Gen8 Graphics, with more execution units per processor as well as a change to the architecture of each execution unit, has made a healthy improvement. The new GPU has only eight EUs per sub-slice now, as compared to ten in Haswell-U, which helps in many workloads. Ian has a nice writeup on the changes.

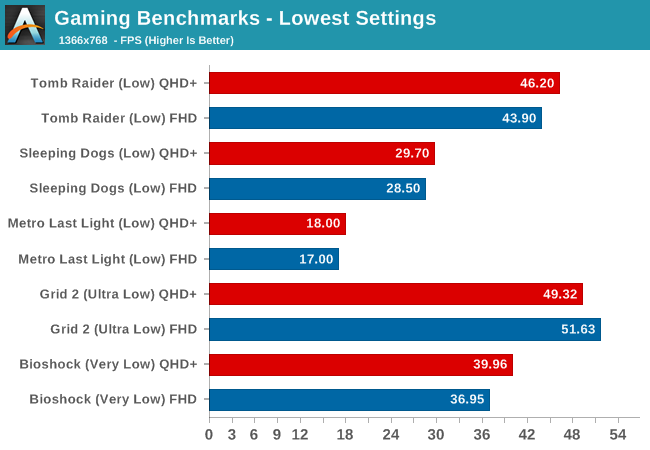

However, our gaming benchmarks are not tested at the lowest possible settings. All of the benchmarks start at 1366x768 with medium settings, so let's drop down another notch.

By setting the games to their lowest settings, some of them are now playable. We are still a long way off the performance of a discrete GPU, but integrated graphics are slowly improving.

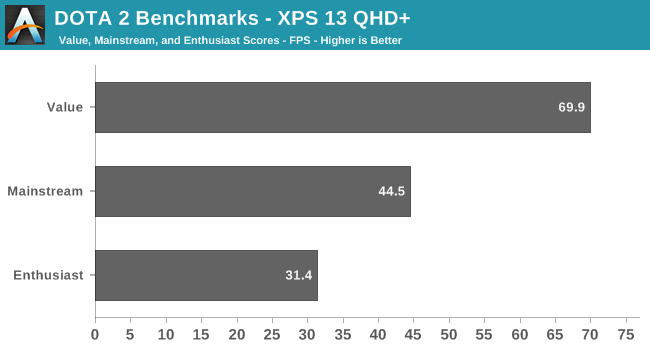

Finally, we have a new gaming benchmark to add to our repertoire. Anand first used the DOTA 2 bench for the Surface Pro 3 review and it will be our go-to benchmark for devices like this without a discrete GPU. Our Value setting will be 1366x768 with all options off, low quality shadows, and medium textures. Midrange will be 1600x900 with all options enabled, medium shadows, and medium textures, and Enthusiast will be 1920x1080 with all options maxed out.

We do not have any other comparison points at the moment, but it is clear that a game like DOTA 2 is very playable on a device with an integrated GPU. Frame rates, even with good settings, are quite reasonable.

So Broadwell has raised the stakes again, but the end result is that Intel's integrated GPU is still not going to let you play AAA titles with good frame rates. Hopefully we can get some good comparisons between Broadwell-U and the AMD APUs in the near future. It will also be interesting to see what happens on the higher wattage Broadwell parts, some of which will contain significantly more EUs.

201 Comments


trane - Thursday, February 19, 2015

This must deserve an Editor's Choice award? The only major drawback is the auto-brightness, which I'm sure will be fixed with an update soon. IINM, the original Acer S7 had a similar issue which they fixed with a firmware update. Once that is fixed, I don't think there's any real con! Yes, we would all love it to do Yoga style acrobatics, and have touch for $800, but let's be realistic. Even with the battery life hit the QHD+ option is still class leading! Dell can't fight the current state of technology...

But the fact that these are brought up as shortcomings just tells us how great this laptop is. I mean, no one's complaining that the $1000 Macbook Air sports an archaic non-touch non-matte low-res TN panel.

Some other sites seem to be reporting much lower battery life versus Macbook Air. I wonder if that is because they are penalising the display for being brighter? Or using Chrome? Chrome has godawful efficiency right now with Hi DPI support, I'm surprised that this is not more commonly known! My laptop does 8 hours with IE, 5 with Chrome.

RT81 - Thursday, February 19, 2015

Believe me, PLENTY of people are complaining about the screen on the current Macbook Air. Nobody cares about touch on a Mac, but they certainly care about the TN and low-res part.

repoman27 - Thursday, February 19, 2015

"The FHD model (1920x1080) arrived with a single 4GB memory module and the QHD+ version came with 2x4GB, which gives us the chance to check the performance differences between the single-channel memory and dual-channel memory."

These machines are memory down (soldered RAM) configurations. There are no modules, and Lenovo would be insane to not keep both channels populated and simply use different density packages for the different models. Are you sure they're shipping single channel setups?

repoman27 - Thursday, February 19, 2015

D'oh! Dell, not Lenovo.

Brett Howse - Thursday, February 19, 2015

Sorry I made a mistake there. It is in fact 2x2GB and the article has been updated.

Hulk - Thursday, February 19, 2015

Is it possible that Dell sent the laptops without the ability to change auto brightness on purpose so that the battery life tests would be extraordinarily high? I'm sure they'll be good when the update to turn auto brightness off arrives but most people will remember the hype of the original amazing battery life tests. With all due respect to Anandtech I think they should not have posted battery life tests until auto brightness can be turned off. It's not really an apples-to-apples (no pun intended) comparison.

trane - Thursday, February 19, 2015

To be fair, he did check the brightness from time to time. If there were any notable changes I'm sure they would have withheld the results.

Hulk - Thursday, February 19, 2015

You are right and I'm not in ANY way implying there was any bias on Anandtech's part. It's a tough call. Publish the battery life to get the results out there quickly for readers with the caveat of not being able to disable auto brightness or just write "the battery life results look to be very impressive but we are not going to publish them until we can disable auto brightness." I'm just saying I would have gone with the 2nd option for two reasons. First, if with auto brightness off the results are dramatically lower then Dell pulled one over. And second, to send a message to manufacturers that if they don't allow fair comparisons due to locked software not all testing results will be published.

As I wrote above, it's a tough call and I respect Anandtech's decision; I just disagree with it.

andrewaggb - Thursday, February 19, 2015

The battery results are fantastic, I wonder how much the auto-brightness plays into it. I wonder if you pointed a bright light at the light sensor if that would force the brightness to stay at maximum.

JarredWalton - Thursday, February 19, 2015

FWIW, I've run tests with laptops at 100 nits before just to see how much that would help. The difference between 100 and 200 nits is usually on the order of 30-60 minutes at most, and often less. However, to get 15 hours from a 42Wh battery means that the laptop is using around 2.74W in our Light workload. If the display adaptive brightness saves 1W, battery life would drop to 11.24 hours (give or take). Which is what happens with the QHD+ panel I should note, though how much of that is the display alone and how much is caused by a higher load on RAM and CPU/GPU to handle the higher resolution is difficult to say.
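
The back-of-the-envelope math in the comment above can be sketched in a few lines. This is only an illustration, not part of the review's test suite: the function names are made up for the example, and the 42Wh capacity, 15-hour runtime, and 1W display saving are simply the figures quoted in the comment. With the runtime rounded to 15 hours, the numbers come out at about 2.8W and 11 hours, close to the 2.74W and 11.24 hours quoted.

```python
def average_power_w(capacity_wh: float, runtime_h: float) -> float:
    """Average power draw implied by draining a battery over a given runtime."""
    return capacity_wh / runtime_h


def runtime_hours(capacity_wh: float, power_w: float) -> float:
    """Runtime a battery of the given capacity can sustain at a constant draw."""
    return capacity_wh / power_w


# Figures taken from the comment; treat them as assumptions, not measurements.
capacity_wh = 42.0          # battery capacity quoted in the comment
measured_runtime_h = 15.0   # rounded Light workload result
adaptive_saving_w = 1.0     # hypothetical saving from adaptive brightness

# Power draw implied by the measured runtime.
base_power_w = average_power_w(capacity_wh, measured_runtime_h)

# Runtime if the display saving disappeared and the draw rose by that amount.
adjusted_runtime_h = runtime_hours(capacity_wh, base_power_w + adaptive_saving_w)

print(f"Implied average draw: {base_power_w:.2f} W")              # 2.80 W
print(f"Runtime without the saving: {adjusted_runtime_h:.2f} h")  # 11.05 h
```

The takeaway matches the comment: at draws this low, a single watt of display power is worth several hours of runtime, so the adaptive brightness question matters more here than it would on a hungrier laptop.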