GIGABYTE GB-BXi7H-5500 Broadwell BRIX Review

by Ganesh T S on January 29, 2015 7:00 AM EST

HTPC Credentials

The GIGABYTE GB-BXi7H-5500 is a compact PC, but, thanks to the 15W TDP CPU inside, it doesn't require a noisy thermal solution like what we saw in the BRIX Pro and BRIX Gaming units. Subjectively speaking, the unit is silent for most common HTPC use-cases. Only under heavy CPU / GPU loading does the fan become audible. However, as mentioned before, it still makes a good HTPC for folks who don't want to pay the premium for a passively cooled system.

Refresh Rate Accuracy

Starting with Haswell, Intel, AMD and NVIDIA have been on par with respect to display refresh rate accuracy. The most important refresh rate for videophiles is obviously 23.976 Hz (the 23 Hz setting). As expected, the GIGABYTE GB-BXi7H-5500 has no trouble with refreshing the display appropriately in this setting. In fact, in our recent tests, Intel's accuracy has been the best of the three.
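As a rough illustration of why refresh rate accuracy matters, the sketch below estimates how often a renderer has to drop or repeat a frame when the display's actual refresh rate deviates from the video frame rate. The function name and the sample refresh rates are hypothetical and are not measurements from this review; the only fixed quantity is the 24000/1001 Hz definition of the 23.976 Hz rate.

```python
from fractions import Fraction

# Exact film-on-video frame rate: 24000/1001 Hz, commonly written as 23.976 Hz.
VIDEO_RATE = Fraction(24000, 1001)


def seconds_between_glitches(display_hz: float,
                             video_hz: float = float(VIDEO_RATE)) -> float:
    """Seconds until the display and video clocks drift apart by one full frame.

    At that point the renderer must drop or repeat a frame to resynchronize.
    Returns infinity when the two rates match exactly.
    """
    drift = abs(display_hz - video_hz)  # frames of mismatch accumulated per second
    return float("inf") if drift == 0 else 1.0 / drift


if __name__ == "__main__":
    # Hypothetical measured refresh rates, for illustration only.
    for measured in (23.97600, 23.97000, 24.00000):
        secs = seconds_between_glitches(measured)
        print(f"{measured:.5f} Hz -> one drop/repeat every {secs / 60:.1f} min")
```

The closer the display's actual refresh rate is to 24000/1001 Hz, the longer the interval between forced frame drops or repeats, which is why a display running at exactly 24 Hz is a poor match for 23.976 fps content.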

The gallery below presents some of the other refresh rates that we tested out. The first statistic in madVR's OSD indicates the display refresh rate.

Network Streaming Efficiency

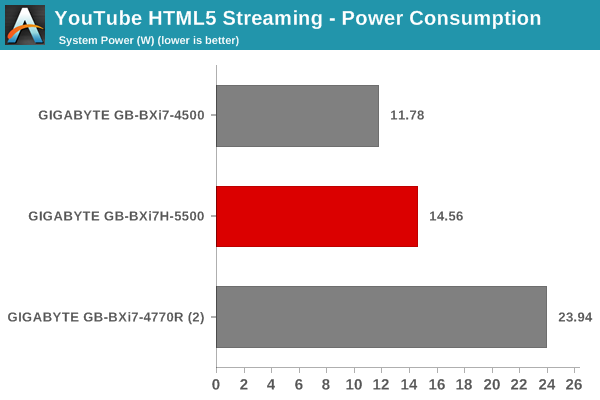

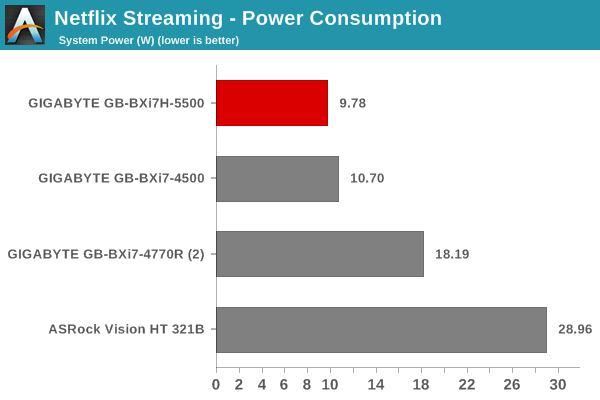

Evaluation of OTT playback efficiency was done by playing back our standard YouTube test stream and five minutes from our standard Netflix test title. Using HTML5, the YouTube stream plays back a 720p encoding. Since YouTube now defaults to HTML5 for video playback, we have stopped evaluating Adobe Flash acceleration. Note that only NVIDIA exposes GPU and VPU loads separately. Both Intel and AMD bundle the decoder load along with the GPU load. The following two graphs show the power consumption at the wall for playback of the HTML5 stream in Mozilla Firefox (v 35.0).

Differences in the power consumption numbers for the Broadwell and Haswell BRIX units can be attributed to changes in the version of Firefox as well as the drivers. Ideally, the Haswell-based unit ought to consume more power for the same workload - something brought out by the Netflix power consumption numbers shown below.

GPU load was around 13.03% for the YouTube HTML5 stream and 4.25% for the steady state 6 Mbps Netflix streaming case.

Decoding and Rendering Benchmarks

In order to evaluate local file playback, we concentrate on EVR-CP, madVR and Kodi. We already know that EVR works quite well even with the Intel IGP for our test streams. Under madVR, we used the default settings (as it is well known that the stressful configurations don't work even on the Iris Pro-equipped processors). The decoder used was LAV Filters bundled with MPC-HC v1.7.8. LAV Video was configured to make use of Quick Sync.

GIGABYTE GB-BXi7H-5500 - Decoding & Rendering Performance

| Stream | EVR-CP GPU Load (%) | EVR-CP Power (W) | madVR - Default GPU Load (%) | madVR - Default Power (W) | XBMC GPU Load (%) | XBMC Power (W) |
|---|---|---|---|---|---|---|
| 480i60 MPEG2 | 23.28 | 12.54 | 65.46 | 14.79 | 12.93 | 10.34 |
| 576i50 H264 | 20.18 | 11.46 | 74.94 | 15.44 | 22.15 | 10.73 |
| 720p60 H264 | 28.03 | 14.56 | 72.32 | 18.64 | 27.91 | 11.65 |
| 1080i60 MPEG2 | 29.87 | 14.40 | 48.78 | 19.73 | 27.75 | 11.88 |
| 1080i60 H264 | 32.24 | 16.06 | 49.99 | 20.24 | 31.04 | 12.21 |
| 1080i60 VC1 | 31.01 | 15.23 | 49.06 | 19.91 | 28.77 | 12.20 |
| 1080p60 H264 | 31.87 | 15.88 | 65.89 | 18.54 | 30.58 | 12.07 |
| 1080p24 H264 | 12.71 | 13.47 | 20.95 | 12.49 | 11.58 | 10.39 |
| 4Kp30 H264 | 29.87 | 20.01 | 93.85 | 38.24 | 17.67 | 12.32 |

The Intel HD Graphics 5500 throws us a nice surprise by managing to successfully keep its cool with the madVR default settings. Only the 4Kp30 stream, downscaled after decode for 1080p playback, choked and dropped frames. Otherwise, there was no trouble for our test streams with either Kodi or MPC-HC / EVR-CP.
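The numbers in the table above can be compared more directly by computing the extra power drawn at the wall with madVR relative to EVR-CP for each stream. The short sketch below does only that arithmetic on the table values; the variable and function names are chosen for illustration and are not part of any tool used in the review.

```python
# (stream, EVR-CP power in W, madVR default power in W), copied from the table above.
ROWS = [
    ("480i60 MPEG2", 12.54, 14.79),
    ("576i50 H264", 11.46, 15.44),
    ("720p60 H264", 14.56, 18.64),
    ("1080i60 MPEG2", 14.40, 19.73),
    ("1080i60 H264", 16.06, 20.24),
    ("1080i60 VC1", 15.23, 19.91),
    ("1080p60 H264", 15.88, 18.54),
    ("1080p24 H264", 13.47, 12.49),
    ("4Kp30 H264", 20.01, 38.24),
]


def power_delta(rows):
    """Map each stream to (madVR power - EVR-CP power), rounded to 0.01 W."""
    return {name: round(madvr - evr, 2) for name, evr, madvr in rows}


if __name__ == "__main__":
    # Print the streams from largest to smallest madVR power penalty.
    for name, delta in sorted(power_delta(ROWS).items(), key=lambda kv: -kv[1]):
        print(f"{name:<14} {delta:+6.2f} W")
```

Sorting by the difference makes the outlier easy to spot: the 4Kp30 stream, the only one that dropped frames under madVR, also shows by far the largest gap between the two renderers.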

53 Comments


gonchuki - Thursday, January 29, 2015 - link

Please define "decimates". In non-OpenCL and non-GPU bound tests, it's a 5-7% win at most, which can be easily explained by the 33% higher base clock of the CPU cores, plus the die shrink that allows for better thermals (more headroom for higher bins of turbo boost).

All of the test results point to Broadwell having the exact same IPC as Haswell in all situations. If anything improved it can only be because of the new stepping that might have fixed some errata.

nathanddrews - Thursday, January 29, 2015 - link

Agreed, the word "decimates" is a bit extreme - but I consider anything in the 10-20% range to be significantly better.

Refuge - Thursday, January 29, 2015 - link

These days I agree, long gone are the days of Sandybridge... Tis a shame, they were fun.

Laststop311 - Friday, January 30, 2015 - link

I'm glad some 1 else noticed this. In the benchmarks that strictly use only the cpu, broadwells haswell equivalent is barely and i mean barely any faster. Sure the gpu is a pretty decent improvement but who cares about intels integrated gpu's? Anyone that relies heavily on an integrated gpu is going to get an apu from amd. The only reason the gpu is so much better is its such a poor performing part to begin with, it's a lot easier to improve lower performing things than things that are already highly optimized like the cpu.

This is bad news for people using desktops with discrete gpu's and were hoping broadwell would be a decent boost. In those situations the iGPU means nothing so big deal it got better. This also means broadwell-e is going to rly suck and be basically identical to haswell-e almost no reason to even bother designing broadwell-e chips since they dont even use iGPU there is no performance increase at all to talk about in those.

The silver lining though is we get to save money another year. With intel having no pressure on them we get to save our money till there is a real performance boost. Basically anyone with an i7-920 or higher doesn't have to spend money on a pc upgrade till maybe skylake/skylake-e MAYBE, intel has put out underwhelming tocks lately as well. My x58 i7-980x system still has no cpu bottleneck. This allowed me to buy a 55" LG OLED tv as normally i was buying a new pc every 2-3 years before the core i7 series started then all the sudden performance upgrades became pathetic, my new pc fund built up and i found the oled tv for 3000 and figured why not i can easily go another couple years with the same pc. So thanks intel for making no progress i got a new oled tv.

BrokenCrayons - Friday, January 30, 2015 - link

I care about iGPU benchmarks and the computer I use for gaming has an Intel HD3000 and probably will do so for at least another year or more before even thinking about an upgrade. Having dedicated graphics in my laptop seems pointless when I can just wait 5-7 years or so to play a game after it's fully patched and usually avaiable with all of it's DLC for very little cost plus runs well on something that doesn't need a higher end graphics processor. So yes, for serious gaming, iGPUs are fine if you manage expectations and play things your computer can easily handle.

purerice - Saturday, January 31, 2015 - link

BrokenCrayons, agreed 100%!! I recently upgraded from Merom to Ivy Bridge myself.

There are tons of games now selling for $5-$10 that wouldn't run on Merom when the games cost $40-$60. In addition to being patched and DLC'd, guides and walkthroughs exist to get through any of the "less awesome" parts. More money saved for real life and less frustration to interrupt gaming. Patience pays indeed.

Seeing the ~20% boost over 4500U in Ice Storm and Cinebench Open GL was actually exciting, even if it represents performance below 90% of other Anandtech users' current levels.

DrMrLordX - Tuesday, February 3, 2015 - link

Decimates means to destroy something by 10% of its whole. All things considered, I'd rather be decimated than . . . you know, devastated, or annihilated.

DanNeely - Thursday, January 29, 2015 - link

While I agree that replacing 1280x1024 is past due; I disagree with picking 1280x720. Back when it was picked 1280x1024 was the most common resolution on low end monitors. Today the default low end resolution is 1366x768 (26.65% on steam); it's also the second most commonly used one (after 1080p).

Oxford Guy - Thursday, January 29, 2015 - link

Agreed. It's pretty silly to "replace" a higher resolution with a lower one.

frozentundra123456 - Saturday, January 31, 2015 - link

I would disagree. It is quite reasonable, because many laptops use 768p, as well as cheap TVs.