Intel Releases Broadwell-U: New SKUs, up to 48 EUs and Iris 6100

by Ian Cutress on January 5, 2015 10:00 AM EST

Broadwell GPU Improvements

The new integrated graphics for Broadwell from Intel in the form of HD 5500, HD 6000 and Iris 6100 open up some very important functionality. DirectX 11.2, OpenGL 4.3 and OpenCL 2.0 are big scoring points, especially OpenCL 2.0 when it comes to graphics compute. This includes features such as shared memory coherency between CPU and GPU to allow transfer of pointer-rich memory structures and ultimately the beginnings of heterogeneous CPU + GPU compute.

‘DirectX 12 Ready’ is a somewhat interesting case, as it depends on Microsoft’s final specifications. At this point Intel states that it conforms to as much of the DirectX 12 feature set as it can get its hands on through its relationship with Microsoft and, barring any major addition or change, should be compatible. UHD-4K is also now supported through the HDMI 1.4b interface, albeit limited to 24 Hz.

Support for HEVC is also present as a combined (hybrid) hardware and software solution, just as it was on Core-M. Intel stated that because parts of the H.264 algorithm are near, if not identical, to those of H.265, a small tweak to the hardware allows it to be used for both. This is still not all-encompassing hardware acceleration, but it does target some parts of the codec. I would speculate that if a full section of the silicon were dedicated to complete hardware acceleration, it might eat into certain power budgets.

GPU Slices

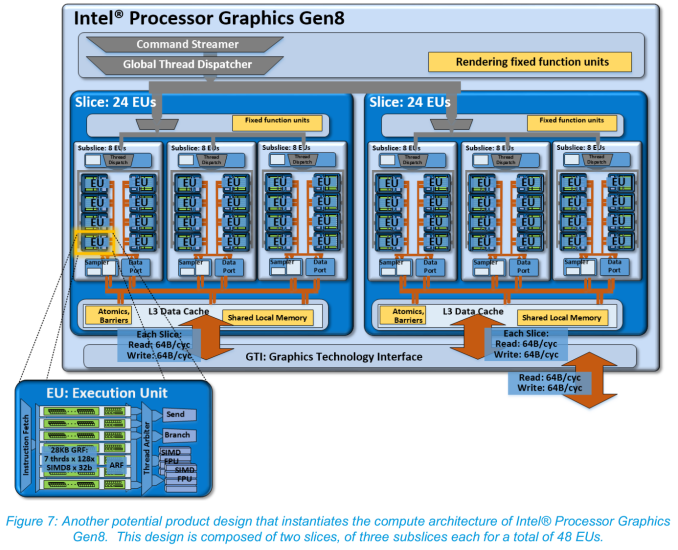

Although mentioned in our Broadwell architecture overview, for Broadwell-U we should cover the basics of the GPU layout. Below is an image of an HD 6000 implementation featuring two slices of 24 EUs each, with each slice having three sub-slices of 8 EUs. Each EU can handle 7 threads at once when in a 128xSIMD8 32-bit configuration. With the right data structure, this offers a good amount of power, especially for coalesced 16-bit computation.
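As a quick sanity check on the layout described above, the EU and thread counts can be multiplied out. This is only a sketch: the constant and function names are hypothetical, and the figures are the ones quoted in the paragraph (two slices, three sub-slices per slice, 8 EUs per sub-slice, 7 threads per EU).

```python
# Counts taken from the article text; all names here are illustrative only.
SLICES = 2
SUBSLICES_PER_SLICE = 3
EUS_PER_SUBSLICE = 8
THREADS_PER_EU = 7


def total_eus(slices=SLICES, subslices=SUBSLICES_PER_SLICE, eus=EUS_PER_SUBSLICE):
    """Number of execution units for a given slice layout."""
    return slices * subslices * eus


def resident_threads(slices=SLICES, threads_per_eu=THREADS_PER_EU):
    """Hardware threads that can be resident across all EUs at once."""
    return total_eus(slices=slices) * threads_per_eu


print(total_eus())          # 48 EUs for the two-slice HD 6000 layout
print(total_eus(slices=1))  # 24 EUs for a single slice
print(resident_threads())   # 336 threads across 48 EUs
```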

For Broadwell, Intel has reduced the number of EUs in a sub-slice from 10 down to 8. This is a big change, as it eases the load on the Thread Dispatch, Data Port and L1/L2 samplers in each sub-slice, which now have to deal with less data overall. By this logic, of two otherwise equivalent designs, the one with 8 EUs per sub-slice should be able to get through more data due to less data bandwidth pressure and less competition for each sub-slice’s shared local memory.

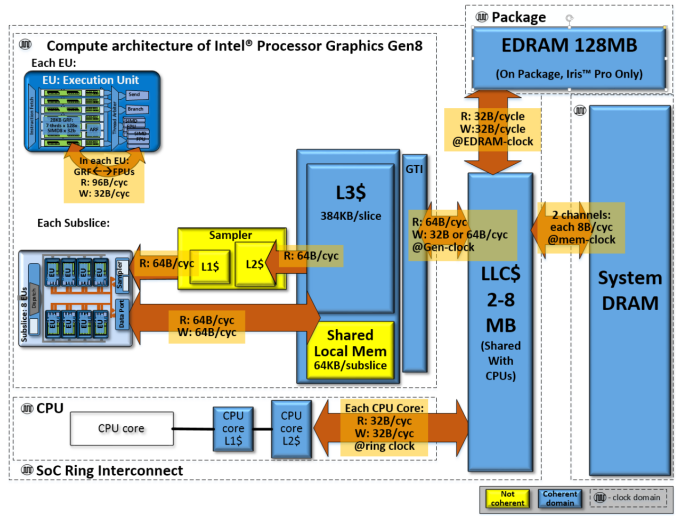

Each slice has an L3 Data Cache, which for Gen8 is improved from 384 KB to 576 KB per slice. This ends up being split anyway as 64 KB per sub-slice as shared local memory and 384 KB for inter-slice L3 communication. More slices mean more caching overall, and as an aid to the last level cache outside the GPU, the Graphics Technology Interface has doubled the write bandwidth.
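The split quoted above can be checked with a few lines of arithmetic. This is a minimal sketch using only the figures in the paragraph; the names are hypothetical, and three sub-slices per slice is assumed from the layout described earlier.

```python
# Figures from the article; names are illustrative only.
L3_PER_SLICE_KB = 576
SLM_PER_SUBSLICE_KB = 64
SUBSLICES_PER_SLICE = 3  # assumed from the slice layout described earlier


def l3_split_kb(total_kb=L3_PER_SLICE_KB,
                slm_kb=SLM_PER_SUBSLICE_KB,
                subslices=SUBSLICES_PER_SLICE):
    """Return (shared local memory KB, remaining L3 KB) for one slice."""
    slm_total = slm_kb * subslices
    return slm_total, total_kb - slm_total


print(l3_split_kb())  # (192, 384)
```

Three sub-slices at 64 KB each account for 192 KB, which leaves the 384 KB quoted for the rest of the L3.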

The principle behind caches is to provide a small amount of memory that can be accessed faster, trading capacity for speed. The algorithms in place to predict which data is needed next (or preprogrammed data fetching) help increase the overall speed of any CPU function, while increasing the size of a cache reduces reliance on later caches by cutting the misses that require trips further out. GPUs are historically bandwidth starved, both for gaming and for compute, so by virtue of having four named caches for the GPU to use and increasing at least the third, this should contribute to the large performance numbers Intel is producing in comparison to the previous generation.

In terms of throughput, Intel gives the following numbers for GT2 / 24 EU configurations:

32b FP: 384 FLOP/cycle = 24 EUs * (2 * SIMD-4 FPU) * (MUL + ADD)

64b DP: 96 FLOP/cycle = 24 EUs * SIMD-4 FPU * (MUL + ADD) * 0.5 throughput

32b INT: 192 IOP/cycle = 24 EUs * (2 * SIMD-4 FPU) * ADD
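The three per-cycle figures follow the same pattern, so they can be reproduced with one small helper. This is a sketch of the arithmetic only: the function and parameter names are hypothetical, and counting MUL + ADD as two operations per lane is taken from the formulas above.

```python
def ops_per_cycle(eus, fpus_per_eu, simd_width, ops_per_lane, rate=1.0):
    """Peak operations per clock: EUs * FPUs * SIMD lanes * ops per lane * issue rate."""
    return eus * fpus_per_eu * simd_width * ops_per_lane * rate


EUS = 24  # GT2 configuration quoted by Intel

fp32 = ops_per_cycle(EUS, fpus_per_eu=2, simd_width=4, ops_per_lane=2)            # MUL + ADD
fp64 = ops_per_cycle(EUS, fpus_per_eu=1, simd_width=4, ops_per_lane=2, rate=0.5)  # half rate
int32 = ops_per_cycle(EUS, fpus_per_eu=2, simd_width=4, ops_per_lane=1)           # ADD only

print(fp32, fp64, int32)  # 384.0 96.0 192.0
```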

The Gen8 EUs will support 16-bit floats (half-floats) natively, as well as 16-bit integers.

We can construct a table calculating out to full performance:

Intel Gen8 Graphics Throughput

| Processor | 32b FP GFLOPS | 64b DP GFLOPS | 32b INT GIOPS |
|---|---|---|---|
| i7-5557U (48 EUs at 1100 MHz) | 844.8 | 211.2 | 422.4 |
| i5-5257U (48 EUs at 1050 MHz) | 806.4 | 201.6 | 403.2 |
| i3-5157U (48 EUs at 1000 MHz) | 768 | 192 | 384 |
| i7-5250U (48 EUs at 950 MHz) | 729.6 | 182.4 | 364.8 |
| i7-5600U (24 EUs at 950 MHz) | 364.8 | 91.2 | 182.4 |
| i5-5300U (24 EUs at 900 MHz) | 345.6 | 86.4 | 172.8 |
| i3-5010U (23 EUs at 900 MHz) | 331.2 | 82.8 | 165.6 |
| i3-5005U (23 EUs at 850 MHz) | 312.8 | 78.2 | 156.4 |
| Pentium 3805U (12 EUs at 800 MHz) | 153.6 | 38.4 | 76.8 |
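The table values come from scaling the per-cycle figures by EU count and peak GPU clock. The sketch below shows that calculation; the names are hypothetical, and the per-EU constants are derived from Intel's 24 EU numbers (384, 96 and 192 per cycle divided by 24). These are theoretical peaks, not measured results.

```python
# Per-EU, per-cycle peaks derived from Intel's 24 EU figures (illustrative names).
FP32_PER_EU = 16  # 2 FPUs * SIMD-4 * (MUL + ADD)
FP64_PER_EU = 4   # 1 FPU * SIMD-4 * (MUL + ADD) * 0.5
INT32_PER_EU = 8  # 2 FPUs * SIMD-4 * ADD


def peak_gops(eus, mhz):
    """Return (FP32 GFLOPS, FP64 GFLOPS, INT32 GIOPS) at the given peak clock."""
    return tuple(
        round(eus * per_eu * mhz / 1000, 1)
        for per_eu in (FP32_PER_EU, FP64_PER_EU, INT32_PER_EU)
    )


# A few rows from the table: (name, EUs, peak GPU clock in MHz).
for name, eus, mhz in [("i7-5557U", 48, 1100),
                       ("i7-5600U", 24, 950),
                       ("i3-5005U", 23, 850)]:
    print(name, peak_gops(eus, mhz))
```

Comparing the top 48 EU part with the i7-5600U gives a ratio of roughly 2.3x in peak 32-bit throughput, which is the EU doubling plus the clock difference.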

Doubling the EUs doubles peak throughput on its own, and the higher clocks on the 48 EU parts add a little more on top.

As we mentioned back in August, the sub-slice also gets some minor improvements to increase pixel and Z-fill rates, and the front end’s geometry units are also beefed up. Further reading can be found on Intel’s PDF on the Gen 8 Graphics Overview from IDF.

85 Comments


Drumsticks - Monday, January 5, 2015 - link

Thanks. I hope we see the power improvements here coupled with the same or larger batteries (maybe with the extra space...) i am really hoping the Surface Pro 4 can consistently break ten hours (which is the "great enough" spot for me) per charge, which would make me happily drop my SP1.

fallaha56 - Monday, January 5, 2015 - link

then let's hope for a Skylake Surface 4 -too little change here

Walkop - Monday, January 5, 2015 - link

Brianandforce? ;)

Agreed here definitely. I'd really like a Skylake SP4, we'd see some serious jumps there. It deserves Skylake anyway, we've skipped a generation of performance improvement.

ws3 - Monday, January 5, 2015 - link

I was right with you until the last phrase. You need a 10-hour Surface Pro 4 in order to drop your 4-hour Surface Pro 1, when both the Surface Pro 2 & Surface Pro 3 already offer significantly improved battery life?

Aftershocker - Friday, January 9, 2015 - link

Love my SP 2. Skipped the SP3 because my SP2 was only a couple of months old and because of the throttling issues. I do hope they do a SP3 refresh with these chips though, ahead of a full Skylake SP4. I'm assuming they would likely go for the 5500HD parts because of the lower cTDP requirement?

aegisofrime - Monday, January 5, 2015 - link

Any word on desktop parts?

TiGr1982 - Monday, January 5, 2015 - link

According to last year rumors, desktop parts are supposed to happen as Broadwell-K Core i5 and i7 for LGA1150 (for 90-series chipsets M/B only) around Q3 2015 (Q2 2015 at best).

kspirit - Monday, January 5, 2015 - link

It's articles like this that make me keep coming back to this website. Thanks a lot for the info. I hope we get to see some direct benchmarks against Haswell, especially in the battery life area. 5% IPC improvement isn't too big, but then again it is just a die shrink and we shouldn't expect too much more. I am much more interested in battery life, since now Intel and MS seem to be really collaborating on that end since W8 was launched.

I'm not holding my breath on some big battery improvements though. Excellent battery life with Haswell was already possible, but except for some OEMs (like Samsung with Ativ Book 9 and ASUS with Zenbooks), none put in the effort to really make the most of it.

Nice article. *thumbs up*

Galatian - Monday, January 5, 2015 - link

We really have to wait for benchmarks. Right now it feel too much like "meh" to me. TDP stayed the same. Sure there are some performance increases especially on the GPU side, but I would say they mostly come from more transistors. I was hoping that 14 nm would bring them more energy saving.

And the elephant in the room is still Skylake. I have both a 13" rMBP on Ivy and a Surface Pro 2 on Haswell, which both could use a upgrade (the MacBook more on the CPU side and the Surface more on the GPU side in my use cases). I was hoping for Quad Cores in the 28 W range on 14 nm, so a 13" rMBP could go all Quad. Also weren't there plans for a complete overhaul off the GPU architecture? Is that now scheduled for Skylake?

kspirit - Monday, January 5, 2015 - link

Does Intel update architecture at all in the year of the die shrink? That's news to me, I didn't know any GPU rework was planned. :o