NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST - Posted in SoCs, Arm, Project Denver, Mobile, 20nm, GPUs, Tablets, NVIDIA, Cortex A57, Tegra X1

Final Words

With the Tegra X1, there has been a great deal of change compared to Tegra K1. The main cluster moves from Cortex A15 to A57, and the single low-power Cortex A15 is replaced by four Cortex A53s, which is a significant departure from previous Tegra SoCs. However, the CPU design remains distinct from what we see in SoCs like the Exynos 5433, as NVIDIA uses a custom CPU interconnect and cluster migration instead of ARM’s CCI-400 and global task scheduling. Beyond these CPU changes, NVIDIA has done a great deal of work on the uncore, with a much faster ISP, support for new codecs at high resolutions and frame rates, an improved memory interface, and improved display output.
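The difference between cluster migration and global task scheduling is easiest to see with a toy placement policy. The Python sketch below is a deliberate simplification, not NVIDIA's or ARM's actual scheduler: the 0.6 load threshold is hypothetical, and real policies also weigh aggregate load, DVFS state, and the cost of migrating work between clusters.

```python
# HYPOTHETICAL threshold: per-thread load (0.0-1.0) above which a thread is
# treated as needing a big core. Real governors use tuned, platform-specific
# heuristics; this value is purely illustrative.
BIG_THRESHOLD = 0.6


def cluster_migration(loads: list[float]) -> list[str]:
    """Toy cluster migration: only one cluster is active at a time, so if any
    thread needs a big core the whole workload moves to the A57 cluster;
    otherwise everything runs on the A53 cluster."""
    cluster = "A57" if any(load > BIG_THRESHOLD for load in loads) else "A53"
    return [cluster] * len(loads)


def global_task_scheduling(loads: list[float]) -> list[str]:
    """Toy global task scheduling: both clusters can be active at once, so
    each thread is placed on a big or little core by its own load."""
    return ["A57" if load > BIG_THRESHOLD else "A53" for load in loads]


loads = [0.9, 0.2, 0.1, 0.15]  # one heavy thread, three light ones
print(cluster_migration(loads))       # ['A57', 'A57', 'A57', 'A57']
print(global_task_scheduling(loads))  # ['A57', 'A53', 'A53', 'A53']
```

With one heavy thread and three light ones, cluster migration moves everything onto the A57s, while global task scheduling keeps the light threads on the A53s; the trade-off is that the latter needs a scheduler that is aware of both core types at once.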

Beyond the CPU, the GPU is a massive improvement with the move to Maxwell. The addition of double-speed FP16 support in Tegra X1 helps improve performance and power efficiency in applications that use FP16, and in general the architecture's mobile-first focus makes for a 2x improvement in performance per watt. While Tegra K1 set a new bar in mobile graphics for other SoC designers to target, Tegra X1 raises the bar again in a big way. Given Tegra X1's standards support, it wouldn’t be a far leap to see more extensive porting of games to a version of SHIELD Tablet with Tegra X1.
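Double-speed FP16 is a statement about peak arithmetic throughput. The Python sketch below assumes figures that do not appear in this section: a 256-core GPU at roughly 1 GHz, one fused multiply-add (2 FLOPs) per core per clock in FP32, and FP16 doubling that by pairing two half-precision operations per instruction. Treat the numbers as illustrative, not as measured results.

```python
# ASSUMPTIONS (illustrative, not from this section): 256 CUDA cores running at
# roughly 1 GHz, each retiring one fused multiply-add (FMA, counted as 2 FLOPs)
# per clock in FP32, with FP16 doubling that by pairing two half-precision
# operations per instruction.
CORES = 256
CLOCK_GHZ = 1.0


def peak_gflops(cores: int, clock_ghz: float, double_rate_fp16: bool) -> float:
    """Theoretical peak in GFLOPS; real workloads land below this."""
    flops_per_core_per_clock = 2  # one FMA = a multiply plus an add
    peak = cores * flops_per_core_per_clock * clock_ghz
    return peak * 2 if double_rate_fp16 else peak


fp32 = peak_gflops(CORES, CLOCK_GHZ, double_rate_fp16=False)
fp16 = peak_gflops(CORES, CLOCK_GHZ, double_rate_fp16=True)
print(f"FP32 peak: {fp32:.0f} GFLOPS, FP16 peak: {fp16:.0f} GFLOPS")
# FP32 peak: 512 GFLOPS, FP16 peak: 1024 GFLOPS
```

Under that pairing model, the doubling only materializes when shaders actually use FP16 and offer pairs of operations to issue together, consistent with the benefit being limited to applications that use FP16.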

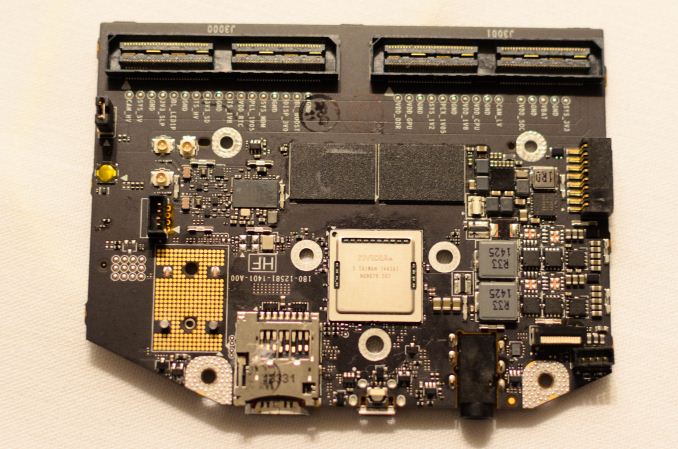

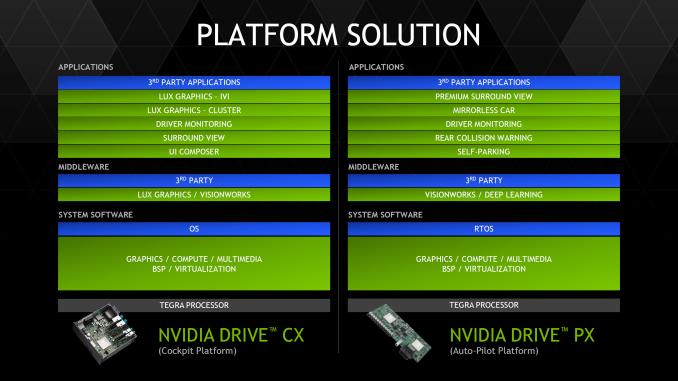

NVIDIA has also made automotive applications a huge focus for Tegra X1 in the form of DRIVE CX, a cockpit computing platform, and DRIVE PX, an autopilot platform. Given the level of integration and compute present in both DRIVE CX and PX, there seems to be significant value in NVIDIA’s solutions. However, it remains to be seen whether OEMs will widely adopt them, as car manufacturers can take multiple years to implement a new SoC. Compared to the 3-4 months it typically takes for an SoC to be adopted in a phone or tablet, that is a long wait, and it's hard to pass judgment yet on whether NVIDIA's automotive endeavors will be a success.

Overall, Tegra X1 represents a solid improvement over Tegra K1, and now that NVIDIA has shifted their GPU architectures to be targeted at mobile first, we’re seeing the benefits that come with such a strategy. It seems obvious that this would be a great SoC to put in a gaming tablet and a variety of other mobile devices, but it remains to be seen whether NVIDIA can get the design wins necessary to make this happen. Given that all of the high-end SoCs in the Android space will be shipping with A57 and A53 CPUs, the high-end SoC space will see significant competition once again.

194 Comments


juicytuna - Monday, January 5, 2015

Well said. Apple's advantage is parallel development and time to market. Their GPU architecture is not that much *better* than their competitors'. In fact, I'd say that Nvidia has had a significant advantage when it comes to feature set and performance per watt on a given process node since the K1.

GC2:CS - Monday, January 5, 2015

Maybe an advantage in feature set, but performance per watt? If you want to compare, take the Xiaomi Mi Pad: it consumes around 7.9 W when running the GFXBench battery life test, and that is with performance throttled down to around 30.4 fps on screen. A very similar tablet, the iPad mini with Retina display and its A7 processor (actually a 28nm part!), consumes just 4.3 W while running at 22.9 fps the whole time.

So I am asking: where is that "class-leading" efficiency and "significant advantage when it comes to performance per watt" that NVIDIA claims to achieve? Because I don't see anything like that.

Yojimbo - Monday, January 5, 2015

Looking at the GFXBench website, under "long-term performance" I see 21.4 fps listed for the iPad Mini Retina and 30.4 fps listed for the Mi Pad; maybe this is what you are talking about. That is a roughly 40% performance advantage for the Mi Pad. I can't find anything about throttling or the number of watts being drawn during this test. What I do see is another category listed immediately below, "battery lifetime", where the iPad Mini Retina is listed at 303 minutes and the Mi Pad at 193 minutes. The iPad Mini Retina has a 23.8 watt-hour battery and the Mi Pad has a 24.7 watt-hour battery. This implies that the iPad Mini Retina draws about 4.7 watts and the Mi Pad about 7.7 watts, so the Mi Pad uses about 63% more power. 40% more performance for 63% more power is a much closer race than the numbers you quoted (yours come out to about a 33% increase in performance and an 84% increase in power consumption, which is very different), and one must remember the circumstances of the comparison. Firstly, it is a comparison at different performance levels (this part is fair, since juicytuna claimed that NVIDIA has had a performance-per-watt advantage). Secondly, it is a long-term performance comparison for a particular testing methodology. Lastly, and most importantly, it is a whole-system comparison, not a comparison of just the GPU power consumption or even the SOC power consumption.

GC2:CS - Monday, January 5, 2015

Yeah, exactly. When you have two similar platforms with different chips, I think it's safe to say that Tegra pulls significantly more than the A7, because those ~3 additional watts have to go somewhere. (I don't know where you got your numbers; I know the Xiaomi has 25.46 Wh, that the iPad lasts 330 minutes, and that A7 iPads also push out T-Rex at around 23 fps since the iOS 8 update.) What I am trying to say is: imagine how low-powered the A7 is if the entire iPad mini at half brightness consumes 4.7 W, and how huge those 3 W that more or less come from the SoC actually are. You increase the power draw of the entire tablet by over half just to get 40% more performance out of your SoC. The Tegra K1 in the Mi Pad has a 5 W TDP, more than the entire iPad mini! Yet it can't deliver competitive enough performance at that power.

It's like you are a 140 lb man who can lift 100 pounds, and you train a lot until you put on 70 pounds of muscle (pump more power into the SoC) to weigh 210 or more, and you can still only lift about 140 pounds. What a disappointment!

What I see is a massive increase in power consumption with not-so-massive gains in performance, which is not typical of an efficient architecture like NVIDIA claims Tegra K1 is.

That's why I think nvidia just kind of failed to deliver on their promise of "revolution" in mobile graphics.
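Both sides of this exchange estimate power the same way: battery capacity divided by GFXBench battery-life runtime. The Python sketch below reproduces that arithmetic with the figures quoted in the comments, assuming a full battery drains at a constant whole-tablet draw; like the comments themselves, it measures the entire tablet, not the SoC. Unrounded, the performance gain in Yojimbo's figures is 42%, which the comment rounds to "roughly 40%".

```python
def average_power_w(battery_wh: float, runtime_min: float) -> float:
    """Average whole-tablet power implied by draining a full battery in
    runtime_min minutes. Assumes a constant draw over the whole run."""
    return battery_wh / (runtime_min / 60.0)


def compare(perf_a: float, perf_b: float,
            power_a: float, power_b: float) -> tuple[float, float, float]:
    """Return (% more performance, % more power, relative perf/W) of A over B."""
    perf_ratio = perf_a / perf_b
    power_ratio = power_a / power_b
    return (perf_ratio - 1) * 100, (power_ratio - 1) * 100, perf_ratio / power_ratio


# Yojimbo's inputs: GFXBench battery-life minutes and battery capacity in Wh
ipad_w = average_power_w(23.8, 303)   # iPad Mini Retina, about 4.7 W
mipad_w = average_power_w(24.7, 193)  # Mi Pad, about 7.7 W

cases = {
    "Yojimbo": (30.4, 21.4, mipad_w, ipad_w),
    "GC2:CS": (30.4, 22.9, 7.9, 4.3),  # watts as quoted directly in the comment
}
for who, args in cases.items():
    perf_pct, power_pct, rel_perf_per_w = compare(*args)
    print(f"{who}: +{perf_pct:.0f}% perf, +{power_pct:.0f}% power, "
          f"Mi Pad perf/W = {rel_perf_per_w:.2f}x iPad")
# Yojimbo: +42% perf, +63% power, Mi Pad perf/W = 0.87x iPad
# GC2:CS: +33% perf, +84% power, Mi Pad perf/W = 0.72x iPad
```

Both rows still include the display, memory, and everything else in each tablet, so neither isolates the SoC.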

Yojimbo - Monday, January 5, 2015

I got my benchmark and battery life numbers from the gfxbench.com website, as I said in my reply. I got the iPad's battery capacity from the Apple website. I got the Mi Pad's battery capacity from a review page that I can't find again right now, but judging from other sources it may have been wrong; WCCFtech lists 25.46 Wh like you did. I don't know where you got YOUR numbers. You cannot say they are "two similar platforms" and conclude that the comparison is a fair comparison of the underlying SOCs. Yes, the screen resolutions are the same, but just imagine that Apple managed to squeeze an extra 0.5 watts out of the display, memory, and all other parts of the system compared to what the "foolish chinesse manufacteurs (sic)" were able to do. Adding this hypothetical 0.5 watts back would put the iPad Mini Retina at 5.2 watts, and the Mi Pad would then be delivering 40% more performance for 48% (or 52%, using the larger battery size you gave for the Mi Pad) more power usage. Since power usage does not scale linearly with performance, this could potentially be considered an excellent trade-off.

Your analogy, by the way, is terrible. The Mi Pad does not have the same performance as the bulked-up man in your analogy; it has a whole 40% more. Your use of inexact words to exaggerate is also annoying: "I see massive increases in power consumption, with not-so-massive gains in performance" and "You increase the power draw by over half just to get 40% more performance". You increase the power by 60% to get 40% more performance; that statement has all the information. But the important point is that it is not an SOC-only measurement, so the numbers are very inconclusive from an analytical standpoint.
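Yojimbo's hypothetical 0.5 W credit can be swept to show how sensitive the power gap is to differences outside the SoC. A minimal Python sketch, using the same battery-over-runtime estimate; the credit values are hypothetical stand-ins for savings in the display, memory, and other parts, not measurements. With unrounded inputs the 24.7 Wh case lands at 47% rather than the 48% obtained from the rounded 4.7 W and 7.7 W.

```python
# Whole-tablet power from battery Wh over GFXBench battery-life minutes,
# assuming a full battery drains at a constant draw.
IPAD_W = 23.8 / (303 / 60)  # iPad Mini Retina, about 4.71 W


def mipad_power_premium_pct(mipad_battery_wh: float, ipad_credit_w: float) -> float:
    """Percent more power the Mi Pad draws than the iPad Mini Retina after
    adding a HYPOTHETICAL ipad_credit_w to the iPad, standing in for non-SoC
    savings Apple might have made elsewhere in the system."""
    mipad_w = mipad_battery_wh / (193 / 60)
    return (mipad_w / (IPAD_W + ipad_credit_w) - 1) * 100


for battery_wh in (24.7, 25.46):  # the two capacities quoted for the Mi Pad
    for credit_w in (0.0, 0.5):
        pct = mipad_power_premium_pct(battery_wh, credit_w)
        print(f"{battery_wh} Wh, +{credit_w} W credit: Mi Pad draws {pct:.0f}% more")
# 24.7 Wh, +0.0 W credit: Mi Pad draws 63% more
# 24.7 Wh, +0.5 W credit: Mi Pad draws 47% more
# 25.46 Wh, +0.0 W credit: Mi Pad draws 68% more
# 25.46 Wh, +0.5 W credit: Mi Pad draws 52% more
```

In both cases the 0.5 W credit shaves about 16 percentage points off the gap, which illustrates how much a whole-system comparison depends on parts other than the SoC.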

GC2:CS - Tuesday, January 6, 2015

What I see from those numbers is that Tegra is nowhere near 50% more efficient than the A7, as NVIDIA claims. When the GFXBench battery life test runs, the display and the SoC are the two major power draws, so I thought it reasonable to treat the other power-consuming parts as negligible.

So the entire iPad mini pulls 4.9 W (I don't know why I should add another 0.5 W if it doesn't draw that much) and the Mi Pad pulls 7.9 W. Those are your numbers, which actually favor NVIDIA a bit.

To show you that there is no way around that fact, I will lower the Mi Pad's consumption by 1 W just to favor NVIDIA even more.

Now that we have 4.9 W and 6.9 W for the two tablets, I will subtract around 1.5 W for display power, which should be more or less the same for both.

So we get 3.4 W and 5.4 W for everything except the display, and most of that will be SoC power. That means Tegra K1 uses more or less 50% more power than the A7 for 40% more performance, in a scenario that favors NVIDIA so much it's extremely unfair.

And even if we take this absurd scenario and scale Tegra K1's power consumption down quadratically, 1.5 * (1.4)^(-2) ≈ 0.77, we still find that at A7-level performance the K1 would consume over 75% of the A7's power.

That number is way, way, way skewed in NVIDIA's favor, and yet it still doesn't come close to the "50% more efficient" claim, which would require the K1 to consume just 2/3 of the A7's power for the same performance.

So please tell me how increasing the power draw of the ENTIRE tablet by 60%, just to get 40% more GPU performance out of your SoC (a SINGLE part, only a subset of the total tablet power draw), can be interpreted as NVIDIA's SoC being more efficient. However I spin it, I am not seeing 3x the performance and 50% more efficiency from K1 tablets compared to A7 tablets. I see K1 tablets throttle to nowhere near 3x faster than A7 iPads, and they run down their batteries significantly faster. And if the same is true for Tegra X1, I don't know why anybody should be excited about these chips.
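GC2:CS's adjusted scenario and quadratic scaling can be written out as a short Python sketch. It assumes, as the comment does, that performance scales with clock speed and that power scales with performance raised to a fixed exponent (2 in the comment); the 1 W reduction and the 1.5 W display figure are the commenter's own assumptions. Since 5.4 W / 3.4 W is closer to 1.59 than to the 1.5 used in the comment, both ratios are shown.

```python
def iso_perf_power_ratio(power_ratio: float, perf_ratio: float,
                         exponent: float = 2.0) -> float:
    """Power the faster chip would draw if slowed to the slower chip's
    performance, relative to the slower chip. ASSUMES power ~ performance
    ** exponent along the chip's voltage/frequency curve."""
    return power_ratio / perf_ratio ** exponent


# GC2:CS's deliberately Mi Pad-friendly inputs (whole-tablet watts)
ipad_w, mipad_w = 4.9, 7.9 - 1.0  # 1 W knocked off the Mi Pad on purpose
display_w = 1.5                   # assumed equal for both tablets
power_ratio = (mipad_w - display_w) / (ipad_w - display_w)  # 5.4 / 3.4
perf_ratio = 1.4                  # K1 about 40% faster
target = 1 / 1.5                  # "50% more efficient" => 2/3 the power

for ratio in (1.5, power_ratio):  # the comment rounds the ratio to 1.5
    print(f"power ratio {ratio:.2f}: K1 at A7 performance draws "
          f"{iso_perf_power_ratio(ratio, perf_ratio):.2f}x A7 power "
          f"(claim needs {target:.2f}x)")
# power ratio 1.50: K1 at A7 performance draws 0.77x A7 power (claim needs 0.67x)
# power ratio 1.59: K1 at A7 performance draws 0.81x A7 power (claim needs 0.67x)
```

The verdict hinges on the exponent, which nothing in the thread measures. With `exponent=3`, the common first-order model in which supply voltage rises in step with frequency (dynamic power roughly proportional to V^2 * f), the same inputs give about 0.55 to 0.58, below the 2/3 mark; with the comment's exponent of 2 they stay above it.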

Yojimbo - Tuesday, January 6, 2015

You don't think it's possible to save power in any component of the system other than the SOC? I think that's a convenient and silly claim. You can't operate under the assumption that the rest of two very different systems draw exactly the same amount of power, so that all of the power difference comes from the SOC. Obviously, if you want to compare SOC power draw, you look at SOC power draw. Anything else is prone to great error: you can do lots of very exact and careful calculations and still probably be completely inaccurate.

juicytuna - Monday, January 5, 2015

That's comparing whole SOC power consumption. There's no doubt Cyclone is a much more efficient architecture than A15/A7. Do we know how much this test stresses the CPU? Can it run entirely on the A7s, or is it lighting up all four A15s? Not enough data.

Furthermore, the performance/watt curve on these chips is non-linear, so if the K1 were downclocked to match the performance of the iPad, I've no doubt its results would look much more favourable. I suspect that is why they compare the X1 to the A8X at the same FPS rather than at the same power consumption.

Jumangi - Monday, January 5, 2015

No, it should be done on the actual real-world products people can buy. That's the only thing that should ever matter.

Yojimbo - Monday, January 5, 2015

Not if one wants to compare architectures, no. There is no reason why, in an alternate universe, Apple couldn't use NVIDIA's GPU instead of IMG's. In that alternate universe, NVIDIA's K1 GPU would benefit from Apple's advantages the same way the Series 6XT GPU benefits in the Apple A8X, and then the supposed point that GC2:CS is trying to make, that the K1 is inherently inferior, would, I think, not hold up.