NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST - Posted in

- SoCs

- Arm

- Project Denver

- Mobile

- 20nm

- GPUs

- Tablets

- NVIDIA

- Cortex A57

- Tegra X1

Automotive: DRIVE CX and DRIVE PX

While NVIDIA has been a GPU company throughout its entire history, they will be the first to tell you that they can’t remain strictly a GPU company forever, and that they must diversify if they are to survive over the long run. The result has been a focus by NVIDIA over the last half-decade or so on offering a wider range of hardware and even software. Tegra SoCs have been a big part of that plan so far, but in recent years NVIDIA has become increasingly discontent with being a pure hardware provider. This has led the company to branch out in unusual ways: not just selling hardware, but selling buyers on whole solutions or experiences. GRID, GameWorks, and NVIDIA’s Visual Computing Appliances have all been part of this branching-out process.

Meanwhile, with unabashed car enthusiast Jen-Hsun Huang at the helm of NVIDIA, it’s slightly less than coincidental that the company has also been branching out into automotive technology. Though automotive is still an early field for NVIDIA, the company’s Tegra sales for automotive purposes have been a bright spot amid the larger struggles Tegra has faced. And now, against the backdrop of CES 2015, the company is taking their next step into automotive technology by expanding beyond just selling Tegras to automobile manufacturers and into selling those manufacturers complete automotive solutions. To this end, NVIDIA is announcing two new automotive platforms, NVIDIA DRIVE CX and DRIVE PX.

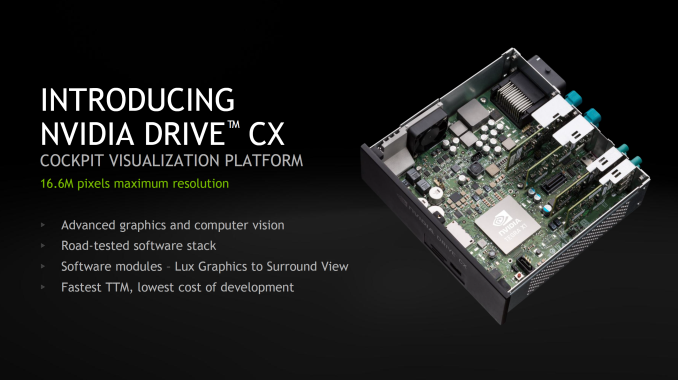

DRIVE CX is NVIDIA’s in-car computing platform, which is designed to power in-car entertainment, navigation, and instrument clusters. While it may seem a bit odd to use a mobile SoC for such an application, Tesla Motors has shown that this is more than viable.

With NVIDIA’s DRIVE CX, automotive OEMs get a Tegra X1 on a board that provides support for Bluetooth, modems, audio systems, cameras, and the other interfaces needed to integrate such an SoC into a car. The platform can drive up to 16.6MP of total display resolution, which is roughly two 4K displays or eight 1080p displays, although each DRIVE CX module can only drive three displays. Press photos also show what appears to be a fan, which is likely necessary to let Tegra X1 run continuously at maximum performance without throttling.
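
The display figures above are easy to sanity-check. The sketch below is a back-of-the-envelope calculation that takes the 16.6MP budget and the three-display limit at face value; the helper names are illustrative and not part of any NVIDIA software.

```python
# Back-of-the-envelope check of the DRIVE CX display budget.
# BUDGET_MP and MAX_DISPLAYS_PER_MODULE are the article's figures;
# the helper names are illustrative only.

BUDGET_MP = 16.6              # total display resolution Tegra X1 can drive, in megapixels
MAX_DISPLAYS_PER_MODULE = 3   # per-module display limit on DRIVE CX

RESOLUTIONS = {
    "4K UHD": (3840, 2160),
    "1080p": (1920, 1080),
}


def megapixels(width: int, height: int) -> float:
    """Pixel count of a single display, in megapixels."""
    return width * height / 1_000_000


def displays_within_budget(width: int, height: int, budget_mp: float = BUDGET_MP) -> int:
    """How many displays of one resolution fit inside the total pixel budget."""
    return int(budget_mp // megapixels(width, height))


def usable_displays(width: int, height: int) -> int:
    """Displays one module can actually drive: limited by pixels and by outputs."""
    return min(displays_within_budget(width, height), MAX_DISPLAYS_PER_MODULE)


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        print(f"{name}: {megapixels(w, h):.2f} MP each, "
              f"{displays_within_budget(w, h)} fit in {BUDGET_MP} MP, "
              f"{usable_displays(w, h)} per module")
    # 4K UHD: 8.29 MP each, 2 fit in 16.6 MP, 2 per module
    # 1080p: 2.07 MP each, 8 fit in 16.6 MP, 3 per module
```

A 4K UHD panel is about 8.29MP, so two of them total roughly 16.59MP, just inside the budget; eight 1080p panels come to the same total. For 1080p it is the three-display limit, not the pixel budget, that caps a single module.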

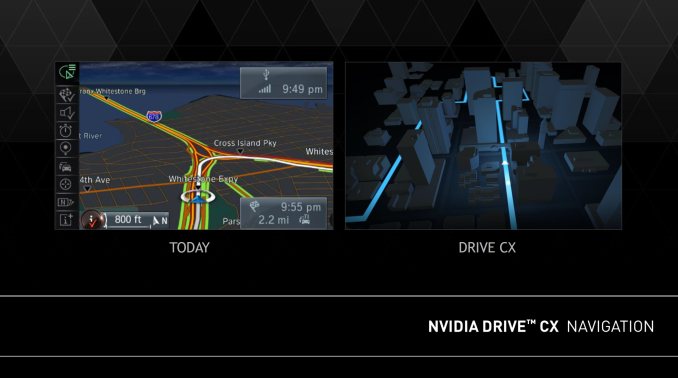

NVIDIA showed off some examples of where DRIVE CX would improve over existing car computing systems in the form of advanced 3D rendering for navigation to better convey information, and 3D instrument clusters which are said to better match cars with premium design. Although the latter is a bit gimmicky, it does seem like DRIVE CX has a strong selling point in the form of providing an in-car computing platform with a large amount of compute while driving down the time and cost spent developing such a platform.

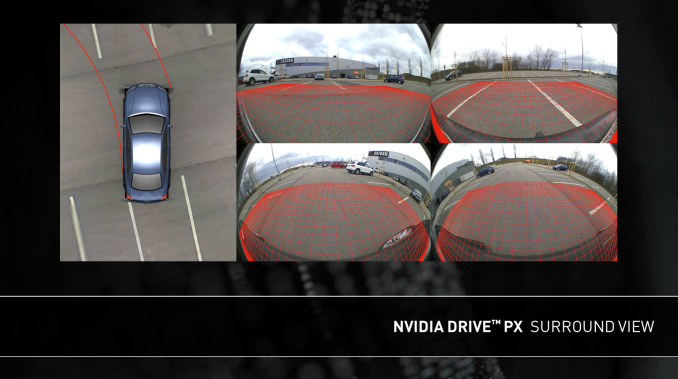

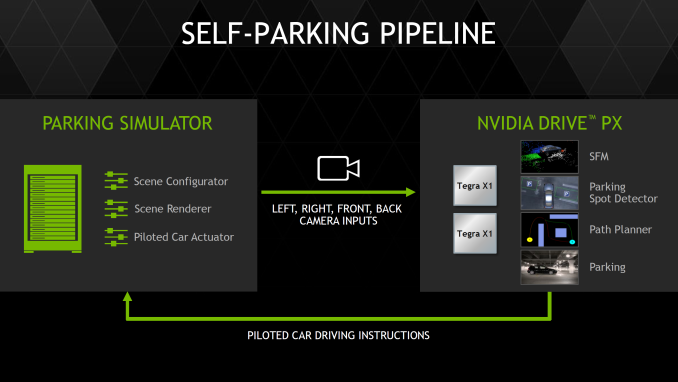

While DRIVE CX seems to be a logical application of a mobile SoC, DRIVE PX puts mobile SoCs to work in car autopilot applications. To do this, the DRIVE PX platform uses two Tegra X1 SoCs to support up to twelve cameras with an aggregate bandwidth of 1300 megapixels per second. Split evenly, that is enough for all twelve cameras to capture 1080p video at just over 50 FPS, or 720p video at close to 120 FPS. NVIDIA has also already built most of the software stack needed for autopilot applications, so OEMs should need comparatively little time and money to implement features such as surround vision, auto-valet parking, and advanced driver assistance.
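
As a rough check on the camera figures, the sketch below assumes the 1300 megapixels per second is shared evenly across all twelve cameras, and it ignores blanking intervals, bit depth, and protocol overhead, none of which the announcement specifies. Under those assumptions each camera gets about 108 megapixels per second, which works out to roughly 52 FPS at 1080p and 118 FPS at 720p; twelve 1080p60 streams would need about 1493 megapixels per second, somewhat over the quoted budget.

```python
# Rough check of the DRIVE PX camera bandwidth figure.
# Assumes the aggregate budget is split evenly across all cameras and
# ignores blanking intervals, bit depth, and any other overhead.

AGGREGATE_MPIX_PER_S = 1300  # quoted aggregate camera bandwidth, megapixels/second
NUM_CAMERAS = 12


def max_fps_per_camera(width: int, height: int,
                       cameras: int = NUM_CAMERAS,
                       budget_mpix_per_s: float = AGGREGATE_MPIX_PER_S) -> float:
    """Highest frame rate each camera can sustain if the budget is shared evenly."""
    pixels_per_s_per_camera = budget_mpix_per_s * 1_000_000 / cameras
    return pixels_per_s_per_camera / (width * height)


def required_mpix_per_s(width: int, height: int, fps: float,
                        cameras: int = NUM_CAMERAS) -> float:
    """Aggregate bandwidth needed for every camera to run at the given frame rate."""
    return width * height * fps * cameras / 1_000_000


if __name__ == "__main__":
    print(f"1080p: {max_fps_per_camera(1920, 1080):.0f} FPS per camera")  # 52
    print(f"720p:  {max_fps_per_camera(1280, 720):.0f} FPS per camera")   # 118
    print(f"12 x 1080p60 needs {required_mpix_per_s(1920, 1080, 60):.0f} MP/s")  # 1493
```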

In the case of surround vision, DRIVE PX is said to deliver a better experience by improving stitching of video to reduce visual artifacts and compensate for varying lighting conditions.
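
NVIDIA hasn’t detailed how DRIVE PX stitches its camera feeds. As a generic illustration of the two problems mentioned above, visible seams and differing exposure between cameras, the sketch below applies a common textbook approach to a single row of grayscale pixels: scale the second camera’s brightness so the overlapping regions match (gain compensation), then cross-fade across the overlap (feather blending). The function names and the one-dimensional simplification are assumptions made for illustration.

```python
# Generic two-camera stitching sketch on a single row of grayscale pixels.
# NOT NVIDIA's method (which isn't described); this is the textbook pairing of
# gain compensation (match brightness between cameras) and feather blending
# (cross-fade across the overlap so no hard seam is visible).

from typing import List


def gain_for_overlap(ref_overlap: List[float], other_overlap: List[float]) -> float:
    """Gain that makes the other camera's mean brightness in the overlap match the reference."""
    mean_ref = sum(ref_overlap) / len(ref_overlap)
    mean_other = sum(other_overlap) / len(other_overlap)
    return mean_ref / mean_other if mean_other else 1.0


def feather_blend(a: List[float], b: List[float]) -> List[float]:
    """Linearly cross-fade from a to b across the overlap."""
    n = len(a)
    out = []
    for i, (pa, pb) in enumerate(zip(a, b)):
        w = i / (n - 1) if n > 1 else 0.5  # weight of b: 0 at the left edge, 1 at the right edge
        out.append((1 - w) * pa + w * pb)
    return out


def stitch_rows(left: List[float], right: List[float], overlap: int) -> List[float]:
    """Join two rows whose last/first `overlap` pixels see the same part of the scene."""
    if not 1 <= overlap <= min(len(left), len(right)):
        raise ValueError("overlap must be between 1 and the shorter row length")
    gain = gain_for_overlap(left[-overlap:], right[:overlap])
    right_corrected = [p * gain for p in right]
    seam = feather_blend(left[-overlap:], right_corrected[:overlap])
    return left[:-overlap] + seam + right_corrected[overlap:]


if __name__ == "__main__":
    # The right-hand camera sees the same evenly lit wall but is exposed half as brightly.
    left_row = [100.0] * 6
    right_row = [50.0] * 6
    print(stitch_rows(left_row, right_row, overlap=2))  # ten pixels, all 100.0
```

Without the gain step, the blend would still hide the hard edge but leave a visible brightness ramp from one camera to the other; the two steps address different artifacts.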

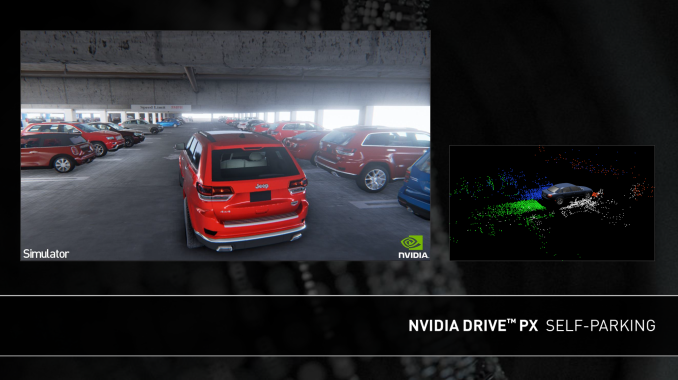

The valet parking feature seems to build upon this surround vision system, as it uses cameras to build a 3D representation of the parking lot along with feature detection to drive through a garage looking for a valid parking spot (no handicap logo, parking lines present, etc.) and then autonomously parks the car once a valid spot is found.
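
The spot-selection step described above amounts to scanning detected spots in the order the car passes them and stopping at the first one that passes every check. The sketch below is purely hypothetical: the `ParkingSpot` fields stand in for whatever the real perception stack outputs, and the `occupied` check is an assumption beyond the two criteria the article lists.

```python
# Hypothetical sketch of the "is this spot valid?" step of auto-valet parking.
# The ParkingSpot fields stand in for whatever the real perception stack outputs;
# `occupied` is an assumed extra check beyond the criteria the article lists.

from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class ParkingSpot:
    spot_id: int
    has_lines: bool           # painted parking lines detected
    has_handicap_logo: bool   # accessible-parking marking detected
    occupied: bool            # assumption: another vehicle is already in the spot


def is_valid_spot(spot: ParkingSpot) -> bool:
    """A spot is usable if it is marked out, not reserved, and empty."""
    return spot.has_lines and not spot.has_handicap_logo and not spot.occupied


def first_valid_spot(spots: Iterable[ParkingSpot]) -> Optional[ParkingSpot]:
    """Scan spots in the order the car passes them; stop at the first valid one."""
    for spot in spots:
        if is_valid_spot(spot):
            return spot
    return None  # nothing suitable yet: keep driving and searching


if __name__ == "__main__":
    garage = [
        ParkingSpot(1, has_lines=True, has_handicap_logo=True, occupied=False),
        ParkingSpot(2, has_lines=False, has_handicap_logo=False, occupied=False),
        ParkingSpot(3, has_lines=True, has_handicap_logo=False, occupied=True),
        ParkingSpot(4, has_lines=True, has_handicap_logo=False, occupied=False),
    ]
    spot = first_valid_spot(garage)
    print(spot.spot_id if spot else "no valid spot")  # 4
```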

NVIDIA has also developed an auto-valet simulator system with five GTX 980 GPUs to make it possible for OEMs to rapidly develop self-parking algorithms.

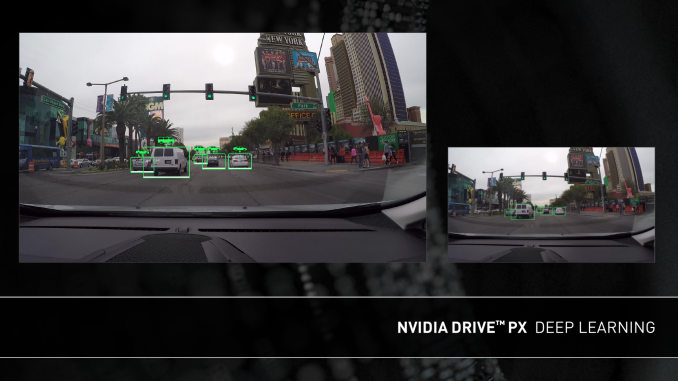

The final feature of DRIVE PX, advanced driver assistance, is possibly the most computationally intensive of the three. To deliver a truly useful driver assistance system, NVIDIA has turned to neural networks, which allow for object recognition with extremely high accuracy.

While we won’t dive deep into how such neural networks work, in essence a neural network is composed of perceptrons, which are analogous to neurons. Each perceptron receives various inputs and, depending on the stimulus level of each input, returns a Boolean (true or false). By combining perceptrons into a network, it becomes possible to teach the network to recognize objects in a useful manner. It’s also important to note that such neural networks are easily parallelized, which means that GPUs can dramatically speed them up. In terms of capabilities, DRIVE PX would be able to detect whether a traffic light is red, whether an ambulance has its sirens on or off, whether a pedestrian is distracted or aware of traffic, and the content of various road signs. Such neural networks would also be able to detect these objects even when they are occluded by other objects, or under differing lighting conditions or viewpoints.
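
To make the perceptron description concrete, here is a minimal sketch of a single perceptron and of a layer of them. The weights are hand-picked for illustration (one unit behaves like a logical AND, the other like an OR); a trained network learns its weights from data, and modern image-recognition networks typically use smooth activation functions rather than a hard true/false threshold.

```python
# Minimal perceptron: weighted inputs, a threshold, Boolean output.
# Modern image-recognition networks replace the hard threshold with a smooth
# activation function, but the weighted-sum structure is the same.

from typing import List, Sequence


def perceptron(inputs: Sequence[float], weights: Sequence[float], bias: float) -> bool:
    """Fire (True) if the weighted sum of the inputs plus the bias is positive."""
    activation = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation > 0


def layer(inputs: Sequence[float],
          weight_rows: Sequence[Sequence[float]],
          biases: Sequence[float]) -> List[bool]:
    """Evaluate a layer of perceptrons that all see the same inputs.

    Each output depends only on the shared inputs and that perceptron's own
    weights, so every perceptron in the layer can be computed independently:
    this is the kind of parallelism that maps well onto a GPU.
    """
    return [perceptron(inputs, w, b) for w, b in zip(weight_rows, biases)]


if __name__ == "__main__":
    # Two hand-set perceptrons: one behaves like AND, the other like OR.
    weights = [[1.0, 1.0], [1.0, 1.0]]
    biases = [-1.5, -0.5]
    for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
        print(x, layer(x, weights, biases))
    # [0, 0] [False, False]
    # [0, 1] [False, True]
    # [1, 0] [False, True]
    # [1, 1] [True, True]
```

On a GPU the per-perceptron loop becomes a single matrix-vector product, with each row of weights handled by different threads.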

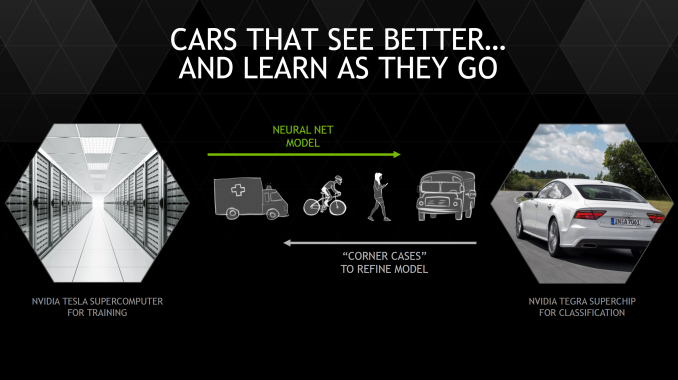

While honing such a system would take millions of test images to reach high accuracy levels, NVIDIA is using Tesla GPUs in the cloud to train the neural networks, which are then loaded into DRIVE PX, rather than training locally. In addition, failed identifications are logged and uploaded to the cloud in order to further improve the neural network. Both kinds of updates can be done either over the air or at service time, which should mean that driver assistance will improve with time. It isn’t a far leap to see how such technology could also be leveraged in self-driving cars.
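
The train-in-the-cloud, infer-in-the-car loop described above can be illustrated with the classic perceptron learning rule. This is a toy sketch, not NVIDIA’s pipeline: the real networks are far larger and trained very differently, and every function name here is hypothetical. `train` stands in for the cloud side, `run_on_device` for inference in the car that logs misidentified samples, and retraining with those logged failures stands in for the update that is pushed back to the vehicle.

```python
# Toy illustration of "train in the cloud, infer in the car, upload failures".
# Uses the classic perceptron learning rule on 2-input examples; every name here
# is hypothetical and real driver-assistance networks are trained very differently.

from typing import List, Optional, Sequence, Tuple

Sample = Tuple[Sequence[float], bool]


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> bool:
    return sum(w * xi for w, xi in zip(weights, x)) + bias > 0


def train(samples: List[Sample], epochs: int = 20, lr: float = 1.0,
          weights: Optional[Sequence[float]] = None,
          bias: float = 0.0) -> Tuple[List[float], float]:
    """'Cloud' side: perceptron learning rule, optionally starting from deployed weights."""
    w = list(weights) if weights is not None else [0.0] * len(samples[0][0])
    for _ in range(epochs):
        for x, label in samples:
            error = int(label) - int(predict(w, bias, x))  # -1, 0, or +1
            if error:
                w = [wi + lr * error * xi for wi, xi in zip(w, x)]
                bias += lr * error
    return w, bias


def run_on_device(weights: Sequence[float], bias: float,
                  field_samples: List[Sample]) -> List[Sample]:
    """'Car' side: inference only; return misidentified samples to be uploaded."""
    return [(x, label) for x, label in field_samples if predict(weights, bias, x) != label]


if __name__ == "__main__":
    initial = [((0, 0), False), ((1, 1), True)]
    field = [((1, 0), False), ((0, 1), False), ((1, 1), True), ((0, 0), False)]

    w, b = train(initial)                      # initial model, pushed to the car
    failures = run_on_device(w, b, field)      # logged misidentifications
    print(len(failures), "failures uploaded")  # 2 failures uploaded

    w, b = train(initial + failures, weights=w, bias=b)  # retrain, push update
    print(len(run_on_device(w, b, field)), "failures after update")  # 0 failures after update
```

Retraining starts from the deployed weights rather than from scratch, loosely mirroring the idea that each update refines the model already in the car.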

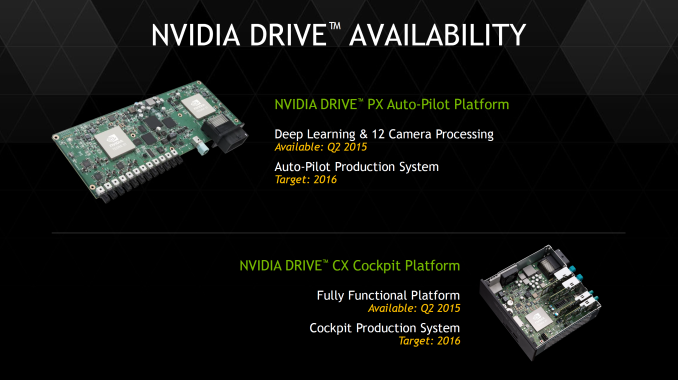

Overall, NVIDIA seems to be planning for the DRIVE platforms to be ready next quarter, and production systems to be ready for 2016. This should mean that it's possible for vehicles launching in 2016 to have some sort of DRIVE system present, although it's possible that it would take until 2017 to see this happen.

194 Comments


eanazag - Wednesday, January 7, 2015

I'm not totally sure why there's all the NV and Apple back and forth. I see this as an Apple-competitive chip for Android tablets. Why would Apple jump the Imag. Tech ship? At release time they have had the best GPU in a SOC for their iPads. ImgTech has been good for Apple iteration over iteration. Might Apple be interested in licensing some IP from NV? Maybe. Apple does a lot of custom work and has a desire to remain in the lead on the mobile SOC front at device release.

lucam - Wednesday, January 7, 2015

Old news, after 2 years nobody knows what happened since then.

mpeniak - Thursday, January 8, 2015

Totally!!!

jwcalla - Monday, January 5, 2015

It seems like Denver was a huge investment that has produced virtually no fruit so far. Time to market seemed to be NVIDIA's problem with Tegra in the past, so it does make sense to get Maxwell out the door ASAP.

syxbit - Monday, January 5, 2015

Exactly. Denver has been a massive disappointment. It really needs to be on 20nm. At 28nm, performance is too inconsistent, and it throttles too much. I just find it funny how arrogant Nvidia is. They're always boasting and boasting with these announcements, and yet by the time they ship, they're rarely leading (or in the case of the K1, they're leading, but in literally only 1 shipping device).

djboxbaba - Monday, January 5, 2015

Yes! How can they keep going about this on a yearly basis, disappointment after disappointment from NVidia in the mobile sector.

Krysto - Monday, January 5, 2015

Denver needs to be at 16nm. And we might still see it, at the end of the year/early next year if Nvidia releases the X1 on 20nm, and then X2 (I really hope they don't release another "X1", like they did this year with K1, making things very confusing) with Denver and on 16nm.

name99 - Monday, January 5, 2015

Unlikely that nV will release 16FF this year or early next year. Apple has likely booked all 16FF capacity for the next year or so, just like they did with 20nm. nV (and Qualcomm and everyone else) will get 16FF when Apple has satisfied the world's iPhone 6S and iPad 2015 demands...

GC2:CS - Monday, January 5, 2015

Yep. Like in the presentation, boasting about how Tegra K1 is still the best mobile chip (despite A8X matching it at significantly lower power, which they don't have a graph for) despite being released a "year before", while A8X has been released just "now". (By nVidia's logic, A8X was "released" just a few hours after K1, because Imagination had announced its Series 6XT GPUs at CES 2014.)

Yojimbo - Monday, January 5, 2015

Tegra K1 is a 28nm part and the A8X is a 20nm device. The Shield tablet did launch 3 months before the iPad Air 2. Apple has a huge advantage in time to market. They can leverage the latest manufacturing technologies, and they don't have to demonstrate a product and secure design wins. Even though the Shield tablet is an NVIDIA design, I doubt they have such tight control of their suppliers as Apple has, and they can't leverage the same high-volume orders. Apple designs a chip and designs a known product around that chip while the chip is being designed, and they can count on it selling in high volume. So you are making an unfair comparison of what NVIDIA is able to do and of the strength of the underlying architecture. If you want to compare the Series 6XT GPU architecture with K1's GPU architecture, I think it should be done on the same manufacturing technology.