Intel Haswell Low Power CPU Review: Core i3-4130T, i5-4570S and i7-4790S Tested

by Ian Cutress on December 11, 2014 10:00 AM EST

Professional Performance: Windows

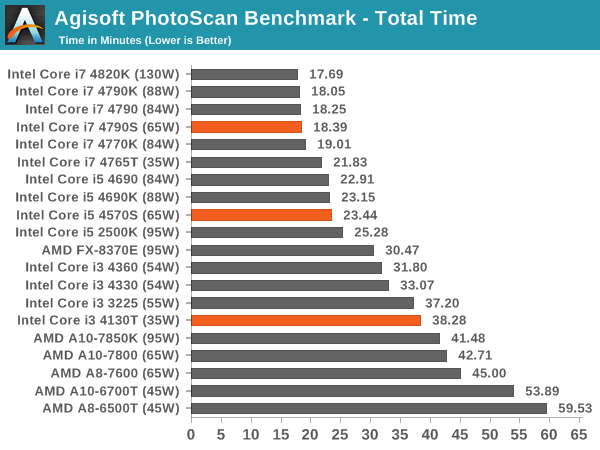

Agisoft Photoscan – 2D to 3D Image Manipulation: link

Agisoft Photoscan creates 3D models from 2D images, a process which is very computationally expensive. The algorithm is split into four distinct phases, and different phases of the model reconstruction require either fast memory, fast IPC, more cores, or even OpenCL compute devices to hand. Agisoft supplied us with a special version of the software to script the process, where we take 50 images of a stately home and convert it into a medium quality model. This benchmark typically takes around 15-20 minutes on a high end PC on the CPU alone, with GPUs reducing the time.

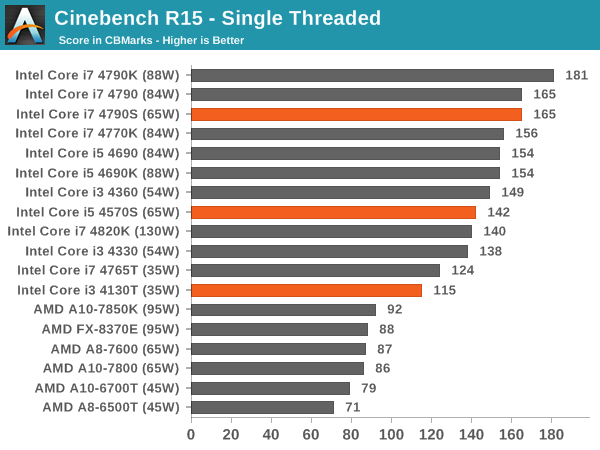

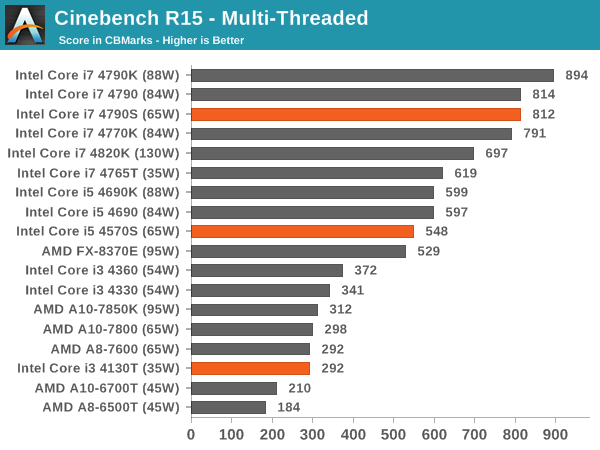

Cinebench R15

Professional Performance: Linux

Built around several freely available benchmarks for Linux, Linux-Bench is a project spearheaded by Patrick at ServeTheHome to streamline about a dozen of these tests in a single neat package run via a set of three commands using an Ubuntu 14.04 LiveCD. These tests include fluid dynamics used by NASA, ray-tracing, molecular modeling, and a scalable data structure server for web deployments. We run Linux-Bench and have chosen to report a select few of the tests that rely on CPU and DRAM speed.

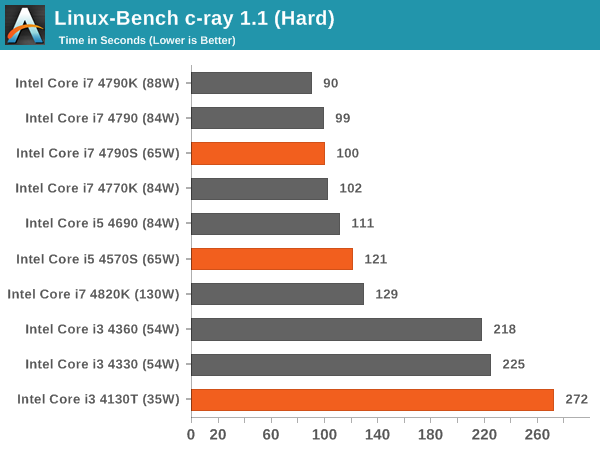

C-Ray: link

C-Ray is a simple ray-tracing program that focuses almost exclusively on processor performance rather than DRAM access. The test in Linux-Bench renders a heavy complex scene offering a large scalable scenario.
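To illustrate why a ray tracer of this kind leans on the processor rather than on DRAM, here is a minimal sketch of the core operation, a ray-sphere intersection test repeated once per pixel. This is not C-Ray's actual code; the function names `ray_sphere_hit` and `count_hits`, the single-sphere scene, and the camera layout are all assumptions made for illustration.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest sphere hit, or None."""
    # Vector from the sphere center to the ray origin.
    ox, oy, oz = (origin[i] - center[i] for i in range(3))
    dx, dy, dz = direction
    # Quadratic t^2 + b*t + c = 0 (the t^2 coefficient is 1 for a unit direction).
    b = 2.0 * (ox * dx + oy * dy + oz * dz)
    c = ox * ox + oy * oy + oz * oz - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None  # the ray misses the sphere
    root = math.sqrt(disc)
    t = (-b - root) / 2.0
    if t > 0.0:
        return t
    t = (-b + root) / 2.0
    return t if t > 0.0 else None


def count_hits(width, height):
    """Cast one ray per pixel at a hypothetical unit sphere centered at z = 3."""
    hits = 0
    for y in range(height):
        for x in range(width):
            # Map the pixel center onto an image plane at z = 1.
            px = (x + 0.5) / width * 2.0 - 1.0
            py = (y + 0.5) / height * 2.0 - 1.0
            norm = math.sqrt(px * px + py * py + 1.0)
            direction = (px / norm, py / norm, 1.0 / norm)
            if ray_sphere_hit((0.0, 0.0, 0.0), direction, (0.0, 0.0, 3.0), 1.0) is not None:
                hits += 1
    return hits
```

Each pixel needs only a handful of floating-point operations on a few values, and no pixel depends on any other, which is why this style of workload tends to scale with core count and clock speed while putting little pressure on memory.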

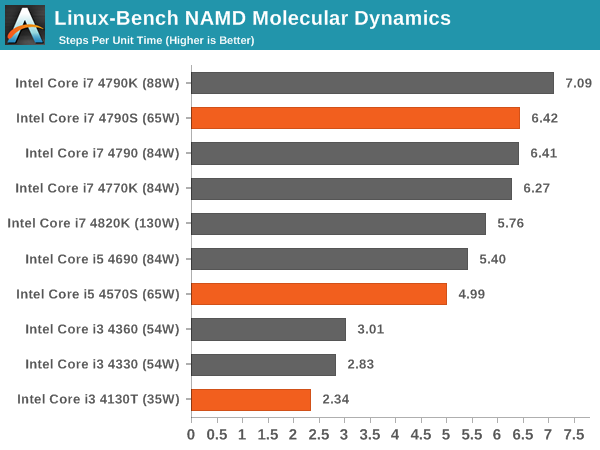

NAMD, Scalable Molecular Dynamics: link

Developed by the Theoretical and Computational Biophysics Group at the University of Illinois at Urbana-Champaign, NAMD is a set of parallel molecular dynamics codes for extreme parallelization up to and beyond 200,000 cores. The reference paper detailing NAMD has over 4000 citations, and our testing runs a small simulation where the calculation steps per unit time is the output vector.

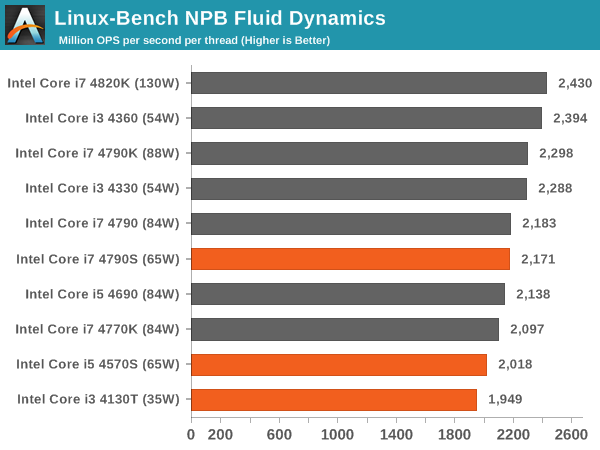

NPB, Fluid Dynamics: link

Aside from LINPACK, there are many other ways to benchmark supercomputers in terms of how effective they are for various types of mathematical processes. The NAS Parallel Benchmarks (NPB) are a set of small programs originally designed for NASA to test their supercomputers in terms of fluid dynamics simulations, useful for airflow reactions and design.

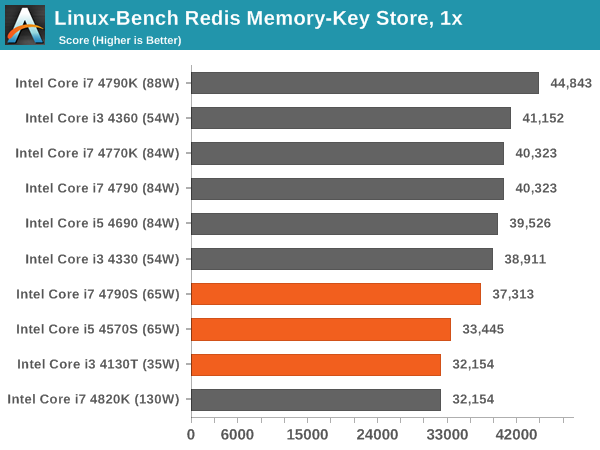

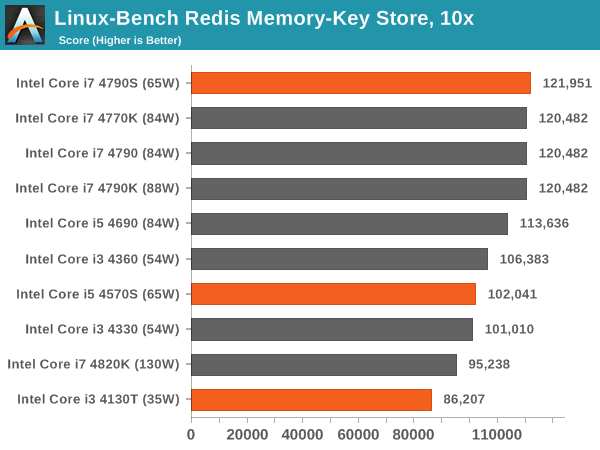

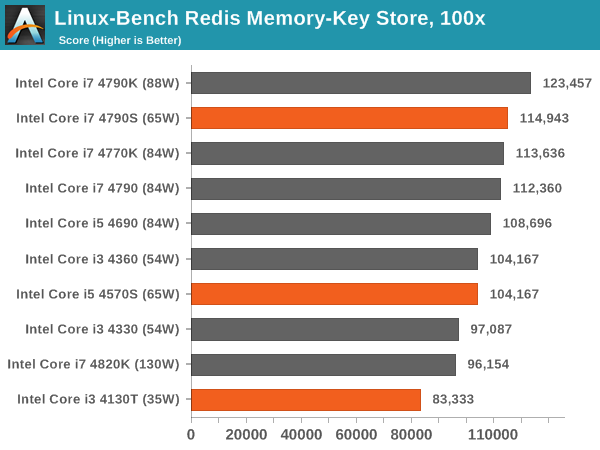

Redis: link

Many online applications rely on key-value caches and data structure servers to operate. Redis is an open-source, scalable web technology with a strong developer base, and it relies heavily on memory bandwidth as well as CPU performance.
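For readers unfamiliar with the idea of a key-value cache, the following is a toy in-memory sketch with optional expiry. It is not Redis and does not use the Redis API; the class name `TinyCache`, its methods, and the injectable `clock` parameter are all hypothetical, chosen only to show the basic set/get-with-timeout pattern.

```python
import time


class TinyCache:
    """Toy in-memory key-value cache with optional expiry (a stand-in, not Redis)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock  # injectable so expiry can be exercised without sleeping
        self._data = {}      # key -> (value, expires_at or None)

    def set(self, key, value, ttl=None):
        """Store a value; ttl is a lifetime in seconds, or None for no expiry."""
        expires_at = None if ttl is None else self._clock() + ttl
        self._data[key] = (value, expires_at)

    def get(self, key, default=None):
        """Return the stored value, or default if the key is missing or expired."""
        item = self._data.get(key)
        if item is None:
            return default
        value, expires_at = item
        if expires_at is not None and self._clock() >= expires_at:
            del self._data[key]  # lazily evict the expired entry
            return default
        return value
```

Every operation here is a hash lookup plus a small amount of bookkeeping, so throughput on a real server of this type depends largely on how quickly memory can be reached, which is consistent with the memory sensitivity noted above.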

76 Comments


eanazag - Thursday, December 11, 2014 - link

That would be directed at Ryan Smith and maybe Jarred Walton for the mobile side of the equation. I suspect that the mobile side is unaffected at the moment.

The only card that is well supported by all the new features in Omega is the R9 285 (Tonga). The R9 290 and 290X make a decent showing but are missing the 4K virtual resolution support.

chekk - Thursday, December 11, 2014 - link

Based on some other reviews, I was starting to think Anandtech had switched back to separate power consumption idle and load numbers, but here we go again. Please stop.

The audience here is enthusiasts and enthusiasts want the complete picture.

DiHydro - Thursday, December 11, 2014 - link

I agree, if I have a server that is idle most of its life, I want to know how many watts it is drawing at idle. On the other hand, if it is running 100% 24/7 then I want to see if a higher TDP, better performing CPU will be cost effective over a cheaper lower TDP part that might run a task longer.

alacard - Thursday, December 11, 2014 - link

Xbitlabs did an excellent write up on the 4670S and T variants. Idle results for those can be found here: http://www.xbitlabs.com/articles/cpu/display/core-...

Ultimately extremely disappointing results. Intel couldn't even be bothered to do the bare minimum and bin these chips for better perf/watt. Pathetic.

TiGr1982 - Thursday, December 11, 2014 - link

Captain Obvious tells us all, that Intel has a very weak competition on the x86 CPU front in all the recent years (I would say, right from the launch of Sandy Bridge LGA1155 almost 4 years ago). So, they make big money "for free" (in a sense that it's an easy money for them) all these years and don't have to bother about such peculiarities as better perf/watt...

Khenglish - Thursday, December 11, 2014 - link

That xbitlabs review is very good and what I would have liked to see done here.

Yeah, bad results. I was hoping that these new parts meant that Intel improved Haswell's power efficiency, but in reality they just lowered the TDP.

smilingcrow - Thursday, December 11, 2014 - link

This feels like almost a completely wasted review, a sort of Seinfeld review; a review about nothing.

Most things you can surmise about these S chips as many things scale linearly so it would have been good to have seen a much better focus on power consumption.

The unanswered question is whether these chips use different voltages than the stock chips and also a lack of hard power data; the delta data is not enough.

Also what did you use to load the cores?

It says AVX but what application and is AVX a good example to use as how many applications use it?

I’d like to have seen a focus on different CPU loads to determine the different characteristics of these S chips; INT, FP, AVX.

So rather than the multitude of redundant data that can be deduced from scaling of frequency why not something focused and new!

What a wasted opportunity and a poorly thought out review.

theKai007 - Thursday, December 11, 2014 - link

Intel announced the Intel IoT Platform, a reference model end-to-end designed to unify and simplify connectivity and security for the Internet of Things. http://bit.ly/1yCMSnB

name99 - Thursday, December 11, 2014 - link

Look at the partners.

Looks more like an enterprise software "solution" to acquire/store/extract data from than actual hardware or anything of interest to normal folks.

As long as Intel's HW story in that space is Quark, forget it...

xeizo - Thursday, December 11, 2014 - link

Right on spot, I use a 4570S in my Linux home server. But as an enthusiast I did some bclk(couldn't resist it) to 106.4 so that it Turbo:s to 3.83GHz and multithreadsx4 to 3.4GHz ;-) Anyway, it feels very fast running Linux. I couldn't have used a K-processor as I wouldn't be able to resist maximum clock it, no power saving server ...

Gaming is done on a 4.8GHz 2600K, it doesn't look like a need to replace it anytime soon. Unless Skylake surprises us all.

Nice job informing about those lower power cpus, saves us time from undervolting and just draws less power from day one.