Intel Haswell Low Power CPU Review: Core i3-4130T, i5-4570S and i7-4790S Tested

by Ian Cutress on December 11, 2014 10:00 AM EST

Professional Performance: Windows

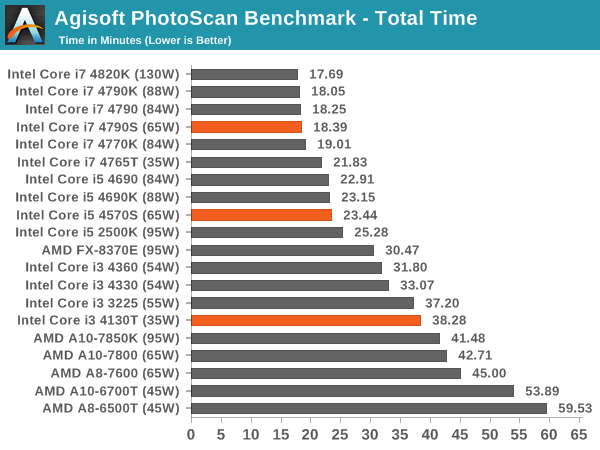

Agisoft Photoscan – 2D to 3D Image Manipulation

Agisoft Photoscan creates 3D models from 2D images, a process which is very computationally expensive. The algorithm is split into four distinct phases, and different phases of the model reconstruction require either fast memory, fast IPC, more cores, or even OpenCL compute devices to hand. Agisoft supplied us with a special version of the software to script the process, where we take 50 images of a stately home and convert it into a medium quality model. This benchmark typically takes around 15-20 minutes on a high end PC on the CPU alone, with GPUs reducing the time.

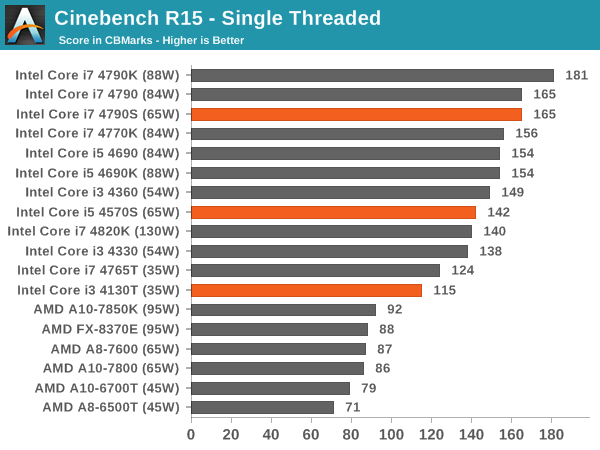

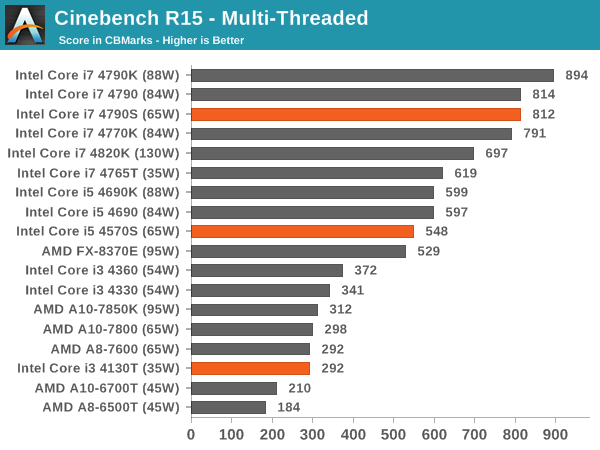

Cinebench R15

Professional Performance: Linux

Built around several freely available benchmarks for Linux, Linux-Bench is a project spearheaded by Patrick at ServeTheHome to streamline about a dozen of these tests in a single neat package run via a set of three commands using an Ubuntu 14.04 LiveCD. These tests include fluid dynamics used by NASA, ray-tracing, molecular modeling, and a scalable data structure server for web deployments. We run Linux-Bench and have chosen to report a select few of the tests that rely on CPU and DRAM speed.

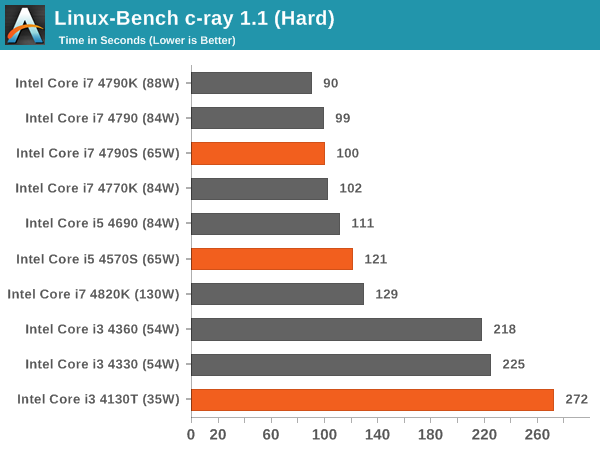

C-Ray

C-Ray is a simple ray-tracing program that focuses almost exclusively on processor performance rather than DRAM access. The test in Linux-Bench renders a heavy complex scene offering a large scalable scenario.
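To illustrate why a ray tracer of this kind leans on the processor rather than on DRAM, here is a minimal Python sketch of the ray-sphere intersection test that sits at the heart of most simple ray tracers. It is not taken from C-Ray itself; the function names `ray_sphere_hit` and `render_mask`, the single-sphere scene and the camera setup are all assumptions made for illustration. The point is that each pixel costs a handful of floating-point operations on a tiny working set.

```python
import math

def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along a ray to the nearest sphere hit, or None.

    `direction` is assumed to be a unit vector (illustrative helper, not C-Ray code).
    """
    # Vector from the sphere center to the ray origin.
    oc = tuple(o - c for o, c in zip(origin, center))
    # Coefficients of t^2 + b*t + c = 0 (the t^2 coefficient is 1 for a unit direction).
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None  # the ray misses the sphere
    sqrt_disc = math.sqrt(disc)
    # Prefer the nearer root; fall back to the farther one if the origin is inside.
    for t in ((-b - sqrt_disc) / 2.0, (-b + sqrt_disc) / 2.0):
        if t > 1e-9:
            return t
    return None

def render_mask(width, height, center=(0.0, 0.0, -3.0), radius=1.0):
    """Trace one primary ray per pixel; return rows of hit (True) / miss (False)."""
    rows = []
    for y in range(height):
        row = []
        for x in range(width):
            # Map the pixel center to a point on an image plane at z = -1.
            px = 2.0 * (x + 0.5) / width - 1.0
            py = 1.0 - 2.0 * (y + 0.5) / height
            length = math.sqrt(px * px + py * py + 1.0)
            direction = (px / length, py / length, -1.0 / length)
            hit = ray_sphere_hit((0.0, 0.0, 0.0), direction, center, radius)
            row.append(hit is not None)
        rows.append(row)
    return rows
```

Every pixel is independent of its neighbours, which is why this style of workload tends to scale with core count and clock speed.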

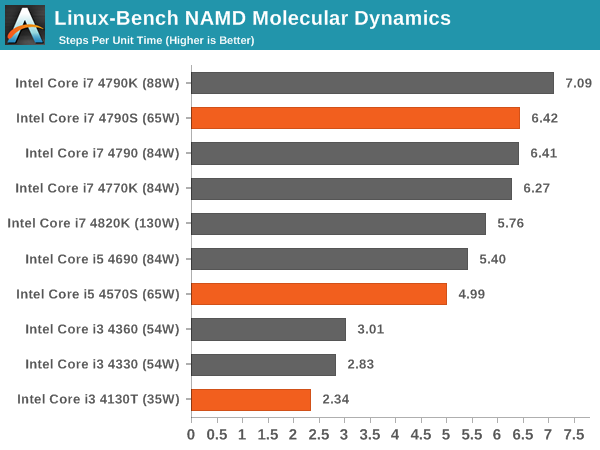

NAMD, Scalable Molecular Dynamics

Developed by the Theoretical and Computational Biophysics Group at the University of Illinois at Urbana-Champaign, NAMD is a set of parallel molecular dynamics codes for extreme parallelization up to and beyond 200,000 cores. The reference paper detailing NAMD has over 4000 citations, and our testing runs a small simulation where the number of calculation steps per unit time is the reported result.

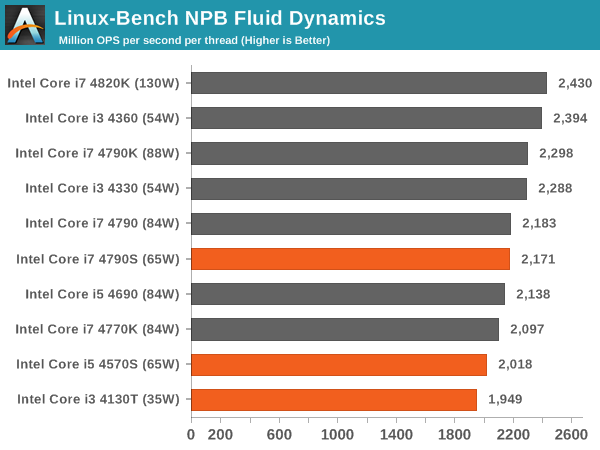

NPB, Fluid Dynamics

Aside from LINPACK, there are many other ways to benchmark supercomputers in terms of how effective they are for various types of mathematical processes. The NAS Parallel Benchmarks (NPB) are a set of small programs originally designed for NASA to test their supercomputers in terms of fluid dynamics simulations, useful for airflow reactions and design.

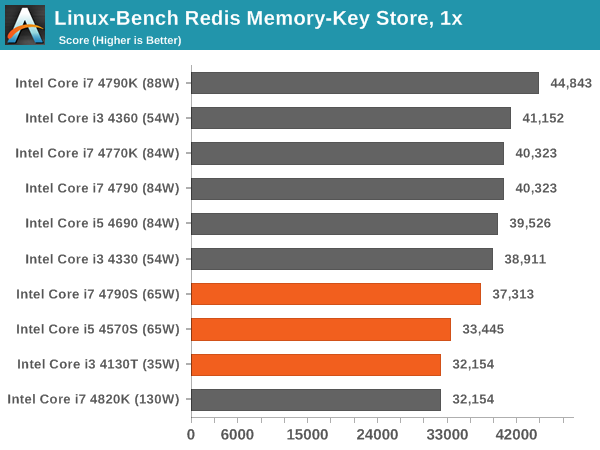

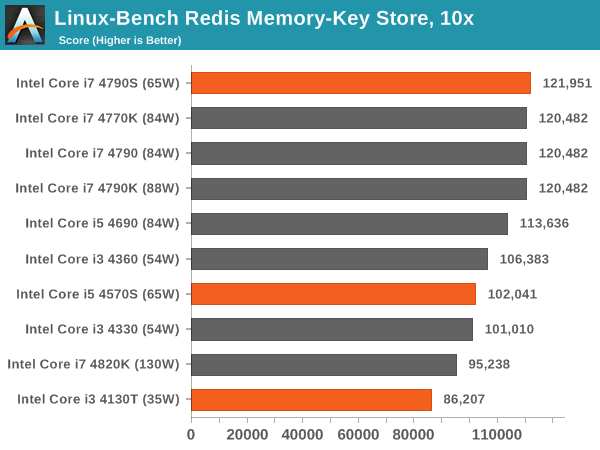

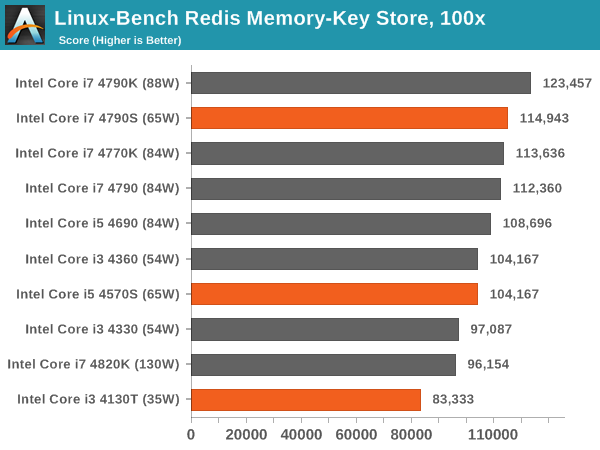

Redis

Many online applications rely on key-value caches and data structure servers to operate. Redis is an open-source, scalable web technology with a strong developer base, but also relies heavily on memory bandwidth as well as CPU performance.
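For readers unfamiliar with the term, a key-value cache can be sketched in a few lines of Python. This is a toy model only and says nothing about how Redis is implemented internally; the class name `TinyKeyValueCache` and its methods are hypothetical, chosen to loosely mirror the set/get/increment style of operations such servers expose. Each request boils down to a hash lookup plus a memory read or write, which hints at why memory performance matters alongside the CPU.

```python
import time

class TinyKeyValueCache:
    """Hypothetical in-memory key-value cache; not how Redis is implemented."""

    def __init__(self, clock=time.monotonic):
        self._data = {}      # key -> (value, expiry time or None)
        self._clock = clock  # injectable so expiry can be exercised without sleeping

    def set(self, key, value, ttl=None):
        """Store a value, optionally expiring `ttl` seconds from now."""
        expires_at = None if ttl is None else self._clock() + ttl
        self._data[key] = (value, expires_at)

    def get(self, key, default=None):
        """Return the stored value, or `default` if missing or expired."""
        entry = self._data.get(key)
        if entry is None:
            return default
        value, expires_at = entry
        if expires_at is not None and self._clock() >= expires_at:
            del self._data[key]  # lazily evict expired entries on read
            return default
        return value

    def incr(self, key, amount=1):
        """Add `amount` to an integer counter, creating it at 0 if absent."""
        current = self.get(key, 0)
        entry = self._data.get(key)
        # Keep the existing expiry if the key is still live.
        expires_at = entry[1] if entry is not None else None
        self._data[key] = (current + amount, expires_at)
        return current + amount
```

A short usage example: `cache = TinyKeyValueCache(); cache.set("session:42", "alice", ttl=30); cache.get("session:42")` returns the stored value until the 30 seconds elapse.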

76 Comments

patrickjchase - Friday, December 12, 2014 - link

I used to work on SoCs in process nodes down to 28 nm, and the variation from the fast/fast (low-delay, leaky) to the slow/slow (high-delay, low-leakage) corners in modern processes is substantial. The fact that a given vendor isn't binning simply means that they're adding a fair bit of margin.

For that matter I wouldn't be so sure that Apple doesn't bin. For example it's possible that the A7s in iPhone 5s and iPad Air were binned differently.

Finally, Intel's volumes create additional binning opportunities. A process condition that happens, say, 0.1% of the time would constitute such a small volume as to be useless to most vendors but adds up to a nice niche for Intel.

aj654987 - Friday, May 15, 2015 - link

I think it's reasonable to believe they are binned. From a business perspective, is it really necessary to have THAT many different CPU models that Intel has? At some point you can have too many products and they're competing with each other. Look at GM and how they had too many brands and rebadged vehicles that are competing with each other. I don't think there IS any business advantage to artificially create as many different chip models as Intel has, though there is a business advantage to being able to salvage chips they would otherwise have to toss.

When comparing to ARM processors, those are less complex and less expensive. If they have an ARM chip that tests bad, it may make more sense to toss it than to cripple it and sell it as a lower model. Also like consoles there is a preference to have the same speed across all devices, whereas with a PC, different CPU speeds seem more acceptable to the market.

eanazag - Thursday, December 11, 2014 - link

The 65W parts seemed to show increased performance in IGP gaming versus their non-S counterparts, for example the 4790S vs the 4790. I would suspect the TDP budget for the IGP is unaffected by the TDP reduction and therefore might get a little thermal room to run harder. Looking at Intel ARK the 4790 series all runs at 350 MHz base and 1.2 GHz max; the 4790K is able to boost higher to 1.25GHz. The IGP gaming numbers seem to tell this story.

You can also see when a discrete GPU is thrown in there the non-S parts then perform above the S parts in gaming.

For IGP gaming AMD is still the best choice; and that is about all they're good for.

evilspoons - Friday, December 12, 2014 - link

This is the story in thermally-limited situations like the Surface Pro 3. The i5 model is faster at games than the i7 simply because the i5's CPU uses less of the thermal budget so the iGPU can stay faster for longer. In a more extreme case, running old non-CPU bound games (World of Warcraft), the i3 model is even better - the CPU leaves even more room for the iGPU.

Of course, this could all be avoided by the game simply going "hmm, which one is really slowing me down - the CPU loop or the GPU loop?" and then throttling one to match, but the odds of that happening any time soon are pretty poor.

mortenelkjaer - Friday, December 12, 2014 - link

IGP gaming, What is that?

MrSpadge - Thursday, December 11, 2014 - link

No. The regular Intel 22 nm CPUs are so good that they can run ~4.0 GHz at ~1.0 V, whereas stock gives them almost 1.2 V at the top turbo bin. So cutting down on power consumption hardly requires any effort.

Samus - Thursday, December 11, 2014 - link

Eventually wear and leakage will cause tapering. The long-term reliability is Intel's goal which is why these chips are so conservatively clocked. I've already read reports of people running Haswell at 1.3V that initially had them stable at 4.6+GHz and a year later, can't crack 4.2GHz at 1.2V.

Keeping these things around 1.0V is key to their service life. As Spadge said, try to get the most you can out of the stock voltage (usually 4GHz, sometimes more.)

B3an - Thursday, December 11, 2014 - link

Completely off topic, but will you guys do an article on AMD's new "Omega" driver? It has loads of new features and I can't find anywhere that's done a proper in-depth article on it.

DiHydro - Thursday, December 11, 2014 - link

The Tech Report and PCPer both have articles about the features and performance gains of the Omega driver release.

JarredWalton - Thursday, December 11, 2014 - link

It's in the works. Ryan and I both were out for a few days due to illness, unfortunately.