The Google Nexus 9 Review

by Joshua Ho & Ryan Smith on February 4, 2015 8:00 AM EST

Posted in: Tablets, HTC, Project Denver, Android, Mobile, NVIDIA, Nexus 9, Lollipop, Android 5.0

SPECing Denver's Performance

Finally, before diving into our look at Denver in the real world on the Nexus 9, let’s take a look at a few performance considerations.

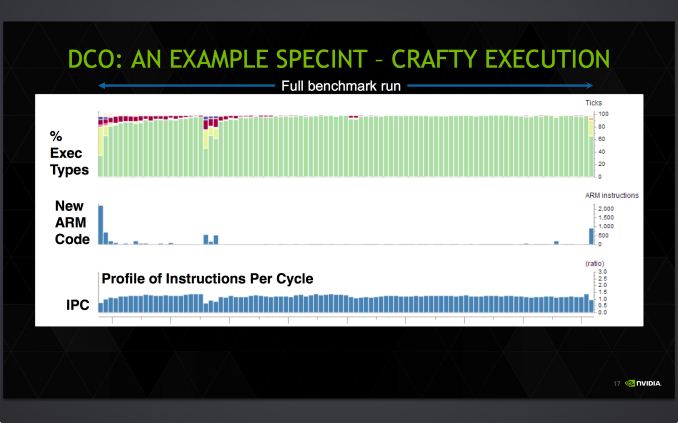

With so much of Denver’s performance riding on the DCO, we’ll start with a slide from NVIDIA profiling the execution of SPECint2000 on Denver. In it, NVIDIA showcases how much time Denver spends on each type of code execution (native ARM code, the optimizer, and finally optimized code), along with an idea of the IPC they achieve on this benchmark.

What we find is that as expected, it takes a bit of time for Denver’s DCO to kick in and produce optimized native code. At the start of the benchmark execution with little optimized code to work with, Denver initially executes ARM code via its ARM decoder, taking a bit of time to find recurring code. Once it finds that recurring code Denver’s DCO kicks in – taking up CPU time itself – as the DCO begins replacing recurring code segments with optimized, native code.

In this case the CPU time spent on the DCO is never a large percentage of the total; however, NVIDIA’s example has the DCO noticeably running for quite some time before it finally settles down to an imperceptible fraction of time. Initially a much larger fraction of the time is spent executing ARM code on Denver, due to the time it takes for the optimizer to find recurring code and optimize it. Similarly, another spike in ARM code is found roughly mid-run, when Denver encounters new code segments that it needs to execute as ARM code before optimizing them and replacing them with native code.

Meanwhile there’s a clear hit to IPC whenever Denver is executing ARM code, with Denver’s IPC dropping below 1.0 whenever it’s executing large amounts of such code. This in a nutshell is why Denver’s DCO is so important and why Denver needs recurring code: it achieves its best results with code that it can optimize once and then frequently re-use.

Also of note though, Denver’s IPC per slice of time never gets above 2.0, even with full optimization and significant code recurrence in effect. The specific IPC of any program is going to depend on the nature of the code, but this serves as a good example of the fact that even with a bag full of tricks in the DCO, Denver is not going to sustain anything near its theoretical maximum IPC of 7. Individual VLIW instructions may hit 7, but over any longer period of time, if a lack of ILP in the code itself doesn’t become the bottleneck, then other issues such as VLIW density limits, cache flushes, and unavoidable memory stalls will. The important question is ultimately whether Denver’s IPC is enough of an improvement over Cortex A15/A57 to justify both the power consumption costs and the die space costs of its very wide design.
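To make the IPC arithmetic concrete, here is a minimal Python sketch of how time spent in each execution mode pulls down the overall figure. Only the theoretical peak of 7 and the observation that sustained IPC stays below 2.0 come from the discussion above; the phase fractions and per-phase IPC values are illustrative assumptions, not numbers read off NVIDIA's slide, and the optimizer is modeled as retiring zero useful program instructions.

```python
PEAK_IPC = 7.0  # Denver's theoretical maximum IPC, per the text


def blended_ipc(phases):
    """Cycle-weighted average IPC over (cycle_fraction, ipc) phases.

    IPC is instructions per cycle, so weighting each phase's IPC by its
    share of cycles yields total instructions / total cycles.
    """
    total = sum(frac for frac, _ in phases)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("cycle fractions must sum to 1")
    return sum(frac * ipc for frac, ipc in phases)


def fraction_of_peak(ipc, peak=PEAK_IPC):
    """Share of the theoretical peak that a sustained IPC represents."""
    return ipc / peak


# Hypothetical phases: (share of cycles, assumed IPC in that mode).
# Order: ARM code via the decoder, the optimizer itself, optimized code.
warmup = [(0.60, 0.8), (0.10, 0.0), (0.30, 1.8)]
steady = [(0.05, 0.8), (0.01, 0.0), (0.94, 1.8)]

for label, phases in (("warm-up", warmup), ("steady state", steady)):
    ipc = blended_ipc(phases)
    print(f"{label}: IPC {ipc:.2f}, {fraction_of_peak(ipc):.0%} of peak")
```

Even a sustained IPC of 2.0 works out to under 30% of the theoretical peak, which is why the comparison that matters is against Cortex A15/A57 rather than against Denver's own maximum.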

NVIDIA's example also neatly highlights the fact that due to Denver’s favoritism for code reuse, it is in a position to do very well in certain types of benchmarks. CPU benchmarks in particular are known for their extended runs of similar code to let the CPU settle and get a better sustained measurement of CPU performance, all of which plays into Denver’s hands. Which is not to say that it can’t also do well in real-world code, but in these specific situations Denver is well set to be a benchmark behemoth.

To that end, we have also run our standard copy of SPECint2000 to profile Denver’s performance.

SPECint2000 - Estimated Scores

| Benchmark | K1-32 (A15) | K1-64 (Denver) | % Advantage |
|---|---|---|---|
| 164.gzip | 869 | 1269 | 46% |
| 175.vpr | 909 | 1312 | 44% |
| 176.gcc | 1617 | 1884 | 17% |
| 181.mcf | 1304 | 1746 | 34% |
| 186.crafty | 1030 | 1470 | 43% |
| 197.parser | 909 | 1192 | 31% |
| 252.eon | 1940 | 2342 | 20% |
| 253.perlbmk | 1395 | 1818 | 30% |
| 254.gap | 1486 | 1844 | 24% |
| 255.vortex | 1535 | 2567 | 67% |
| 256.bzip2 | 1119 | 1468 | 31% |
| 300.twolf | 1339 | 1785 | 33% |

Given Denver’s obvious affinity for benchmarks such as SPEC we won’t dwell on the results too much here. But the results do show that Denver is a very strong CPU under SPEC, and by extension under conditions where it can take advantage of significant code reuse. Similarly, because these benchmarks aren’t heavily threaded, they’re all the happier with any improvements in single-threaded performance that Denver can offer.

Coming from the K1-32 and its Cortex-A15 CPU to K1-64 and its Denver CPU, the actual gains are unsurprisingly dependent on the benchmark. The worst-case scenario, 176.gcc, still has Denver ahead by 17%, while the best-case scenario, 255.vortex, finds Denver besting A15 by 67%, coming closer than one would expect to doubling A15's performance entirely. The best-case scenario is of course unlikely to occur in real code, though I’m not sure the same can be said for the worst-case scenario. At the same time we find that there aren’t any performance regressions, which is a good start for Denver.
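For readers who want to check the arithmetic, the following Python sketch recomputes the percentage advantage from the estimated scores in the table. The helper names are ours, and the geometric mean of the per-benchmark ratios is added only as one conventional way to summarize SPEC-style results, not as a figure from the review. Recomputed values can differ from the published column by a point (252.eon works out to roughly 21% rather than 20%), which may simply reflect rounding in the published estimated scores.

```python
from math import prod

# (benchmark, K1-32 Cortex-A15 score, K1-64 Denver score), from the table
SCORES = [
    ("164.gzip", 869, 1269),
    ("175.vpr", 909, 1312),
    ("176.gcc", 1617, 1884),
    ("181.mcf", 1304, 1746),
    ("186.crafty", 1030, 1470),
    ("197.parser", 909, 1192),
    ("252.eon", 1940, 2342),
    ("253.perlbmk", 1395, 1818),
    ("254.gap", 1486, 1844),
    ("255.vortex", 1535, 2567),
    ("256.bzip2", 1119, 1468),
    ("300.twolf", 1339, 1785),
]


def advantage_pct(a15, denver):
    """Denver's lead over A15 as a whole-number percentage."""
    return round((denver / a15 - 1) * 100)


def geomean_ratio(scores):
    """Geometric mean of the Denver/A15 score ratios."""
    ratios = [denver / a15 for _, a15, denver in scores]
    return prod(ratios) ** (1 / len(ratios))


advantages = {name: advantage_pct(a15, denver) for name, a15, denver in SCORES}
worst = min(advantages, key=advantages.get)
best = max(advantages, key=advantages.get)

print(f"worst case: {worst} (+{advantages[worst]}%)")
print(f"best case:  {best} (+{advantages[best]}%)")
print(f"geometric mean ratio: {geomean_ratio(SCORES):.3f}")
```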

If nothing else it's clear that Denver is a benchmark monster. Now let's see what it can do in the real world.

169 Comments

philosofa - Wednesday, February 4, 2015 - link

Anandtech have always prioritised quality, insight and being correct, over being the first to press. It's why a giant chunk of its readerbase reads it. This is going to be relevant and timely to 90% of people who purchase the Nexus 9. It's also likely to be the definitive article hardware and tech wise produced anywhere in the world. I'd call that a win. I just think there's no pleasing everyone, particularly the 'what happened to AT????!?' crowd that's existed here perpetually. You remind me of the Simcity Newspaper article 'naysayers say nay'. No: Yea.

iJeff - Wednesday, February 4, 2015 - link

You must be new around here. Anandtech was always quite a bit slow to release their mobile hardware reviews; the quality has always been consistently higher in turn.

Rezurecta - Wednesday, February 4, 2015 - link

C'mon guys. This isn't the freakin Verge or Engadget review. If you want fancy photos and videos with the technical part saying how much RAM it has, go to those sites.

The gift of Anandtech is the deep dive into the technical aspects of the new SoC. That ability is very exclusive and the reason that Anandtech is still the same site.

ayejay_nz - Wednesday, February 4, 2015 - link

Release article in a hurry and unfinished ... "What has happened to Anandtech..."

Release article 'late' and complete ... "What has happened to Anandtech..."

There are some people you will never be able to please!

Calista - Thursday, February 5, 2015 - link

You still have plenty of other sites giving 0-day reviews. Let's give the guys (and girls?) of Anandtech the time needed for in-depth reviews.

littlebitstrouds - Friday, February 6, 2015 - link

No offence but your privilege is showing...

HarryX - Friday, February 6, 2015 - link

I remember that the Nexus 5 review came out so late and that was by Brian Klug, so it's really nothing unusual here. This review covers so much about Denver that wasn't mentioned elsewhere that I won't mind at all if it was coming late. Reviews like this one (and the Nexus 5 one to a lesser extent, or the Razer Blade 2014 one) are so extensive that I won't mind reading them months after release. The commentary and detailed information in them alone make them worth the read.

UtilityMax - Sunday, February 8, 2015 - link

The review is relevant. The tablet market landscape hasn't changed much between then and now. Anyone in the market for a Nexus 9 or similar tablet is going to evaluate it against what was available at the time of release.

SunnyNW - Sunday, February 8, 2015 - link

Only thing that sucks about these late reviews is if you are trying to base your purchase decision on them, especially with the holiday season.

Aftershocker - Friday, February 13, 2015 - link

I've got to agree, Anandtech have dropped the ball on this one. I always prefer to wait and read the comprehensive Anandtech review which I understand can take a few weeks, but this is simply too long to get a review out on a flagship product. Call me sceptical but I can't see such a situation occurring on the review of a new Apple product.