The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Display Matters: HDMI 2.0, HEVC, & VR Direct

Stepping away from the graphics heart of Maxwell 2 for a bit, NVIDIA has not only been busy optimizing their architecture and adding graphical features to their hardware; they have also added some new display-oriented features to the Maxwell 2 architecture. The result is an upgrade to their video encode capabilities, their display I/O capabilities, and even their ability to drive virtual reality headsets such as the Oculus Rift.

We’ll start with display I/O. HDMI users will be happy to see that as of GM204, NVIDIA now supports HDMI 2.0, which will allow NVIDIA to drive future 4K@60Hz displays over HDMI without compromise. HDMI 2.0 for its part is the 4K-focused upgrade of the HDMI standard, and brings with it support for the much higher data rate (through a greatly increased clockspeed of 600MHz) necessary to drive 4K displays at 60Hz, while also introducing features such as new subsampling patterns like YCbCr 4:2:0, and official support for wide aspect ratio (21:9) displays.

It should be noted that this is full HDMI 2.0 support, and as a result it notably differs from the earlier support that NVIDIA patched into Kepler and Maxwell 1 through drivers. Whereas NVIDIA’s earlier update was to allow these products to drive a 4K@60Hz display using 4:2:0 subsampling to stay within the bandwidth limitations of HDMI 1.4, Maxwell 2 implements the bandwidth improvements necessary to support 4K@60Hz with full resolution 4:4:4 and RGB color spaces.
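To put rough numbers on that bandwidth difference, here is a minimal sketch of the clock arithmetic. It assumes the standard CTA-861 4K@60Hz timing (4400 x 2250 total pixels including blanking), 8 bits per color component, and a 340MHz TMDS clock ceiling for HDMI 1.4. The function and constant names are ours, for illustration only.

```python
# Rough TMDS clock arithmetic for 4K@60Hz over HDMI.
# Assumptions (not from the article): CTA-861 4K60 timing of 4400 x 2250
# total pixels including blanking, and 8 bits per color component.

HDMI_1_4_MAX_TMDS_MHZ = 340.0  # HDMI 1.4 TMDS clock ceiling
HDMI_2_0_MAX_TMDS_MHZ = 600.0  # HDMI 2.0 TMDS clock ceiling


def tmds_clock_mhz(h_total, v_total, refresh_hz, subsampling="4:4:4"):
    """Return the TMDS clock (MHz) needed for a given video timing."""
    pixel_clock = h_total * v_total * refresh_hz / 1e6
    if subsampling == "4:2:0":
        # 4:2:0 carries half the data per pixel, so the link runs at half rate.
        return pixel_clock / 2
    return pixel_clock


full_444 = tmds_clock_mhz(4400, 2250, 60)           # full resolution chroma
sub_420 = tmds_clock_mhz(4400, 2250, 60, "4:2:0")   # subsampled chroma

print(f"4:4:4 needs {full_444:.0f} MHz, fits HDMI 1.4: {full_444 <= HDMI_1_4_MAX_TMDS_MHZ}")
print(f"4:4:4 needs {full_444:.0f} MHz, fits HDMI 2.0: {full_444 <= HDMI_2_0_MAX_TMDS_MHZ}")
print(f"4:2:0 needs {sub_420:.0f} MHz, fits HDMI 1.4: {sub_420 <= HDMI_1_4_MAX_TMDS_MHZ}")
```

Under these assumptions full 4:4:4 output lands just under HDMI 2.0's 600MHz limit, while 4:2:0 subsampling halves the requirement and squeezes under the HDMI 1.4 ceiling, which is why the earlier driver-based approach was possible on older hardware.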

Given the timeline for HDMI 2.0 development, the fact that we’re seeing HDMI 2.0 support now is if anything a pleasant surprise, since it’s earlier than we expected it. However this will leave HTPC users in a pickle if they want HDMI 2.0 support; with the GM107 based GTX 750 series having launched only 7 months ago without HDMI 2.0 support, we would not expect NVIDIA’s HTPC-centric video cards to be replaced any time soon. This means the only option for HTPC users wanting HDMI 2.0 support right away is to upgrade to a larger and more powerful Maxwell 2 based card, or otherwise stick to the low powered GTX 750 series and go without HDMI 2.0.

Meanwhile alongside the upgrade to HDMI 2.0, NVIDIA has also made one other change to their display controllers that should be of interest to multi-monitor users. With Maxwell 2, a single display controller can now drive multiple identical MST substreams on its own, rather than requiring a different display controller for each stream. This feature will be especially useful for driving tiled monitors such as many of today’s 4K monitors, which are internally a pair of identical displays driven using MST. By being able to drive both tiles off of a single display controller, NVIDIA can make better use of their 4 display controllers, allowing them to drive up to 4 such displays off of a Maxwell 2 GPU as opposed to the 2 display limitation that is inherent to Kepler GPUs. For the consumer cards we’re seeing today, the most common display I/O configuration will include 3 DisplayPorts, allowing these specific cards to drive up to 3 such 4K monitors.
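The display counts above follow from simple division, which can be sketched as below. The helper name is hypothetical, and the sketch assumes that Kepler also has 4 display controllers, which is implied by its 2 tiled display limit but not stated outright.

```python
def max_tiled_displays(controllers, controllers_per_display, outputs=None):
    """Upper bound on tiled (two-stream MST) monitors a GPU can drive.

    controllers: number of display controllers on the GPU
    controllers_per_display: controllers consumed by one tiled monitor
    outputs: optional cap from the number of physical DisplayPorts
    """
    limit = controllers // controllers_per_display
    if outputs is not None:
        limit = min(limit, outputs)
    return limit


# Kepler (assumed 4 controllers): one controller per MST substream, two per monitor.
kepler = max_tiled_displays(4, 2)
# Maxwell 2: one controller drives both identical substreams.
maxwell2 = max_tiled_displays(4, 1)
# Consumer cards with 3 DisplayPorts are limited by the connectors instead.
maxwell2_card = max_tiled_displays(4, 1, outputs=3)

print(kepler, maxwell2, maxwell2_card)
```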

HEVC & 4K Encoding

In Maxwell 1, NVIDIA introduced updated versions of both their video encode and decode engines. On the decode side the new VP6 decoder increased the performance of the decode block to allow NVIDIA to decode H.264 up to 4K@60Hz (Level 5.2), something the older VP5 decoder was not fast enough to do. Meanwhile the Maxwell 1 NVENC video encoder received a similar speed boost, roughly doubling its performance compared to Kepler.

Surprisingly, only 7 months after the first Maxwell 1 GPUs, NVIDIA has once again overhauled NVENC, and this time more significantly. The Maxwell 2 version of NVENC builds on the Maxwell 1 NVENC by adding full support for HEVC (H.265) encoding. Like HDMI 2.0 support, this marks the very first PC GPU we’ve seen integrate support for this more advanced codec.

At this point there’s really not much that can be done with Maxwell 2’s HEVC encoder – it’s not exposed in anything or used in NVIDIA’s current tools – but NVIDIA is laying the groundwork for the future once HEVC support becomes more commonplace in other hardware and software. NVIDIA envisions their killer app for HEVC support to be game streaming, where the higher efficiency of HEVC will improve the image quality of game streams due to the limited bandwidth available in most streaming scenarios. In the long run we would expect NVIDIA to utilize HEVC for GameStream for the home, and at the server level support for HEVC in the next generation of GRID cards will be a major boon to NVIDIA’s GRID streaming efforts.

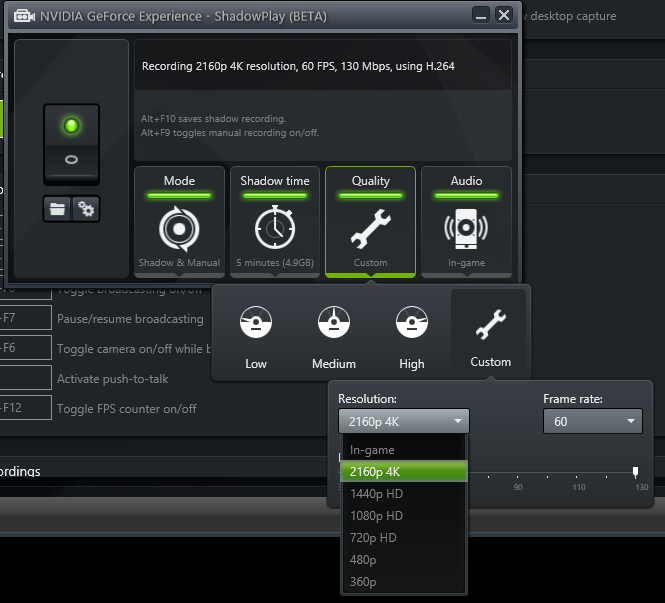

Meanwhile where the enhanced version of NVENC is going to be applicable today is in ShadowPlay. While still recording in H.264, the higher performance of NVENC means that NVIDIA can now offer recording at higher resolutions and bitrates. With GM204 NVIDIA’s hardware can now record at 1440p60 and 4Kp60 at bitrates up to 130Mbps, as opposed to the 1080p60 @ 50Mbps limit for their Kepler cards.
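For a sense of what those bitrate ceilings mean for storage, here is a quick back-of-the-envelope sketch. It assumes decimal units (1 Mbps = 1,000,000 bits per second) and ignores audio and container overhead; the function name is ours.

```python
def megabytes_per_minute(bitrate_mbps):
    """Convert a video bitrate in megabits/s to megabytes per minute (decimal units)."""
    return bitrate_mbps * 60 / 8


kepler_limit = megabytes_per_minute(50)   # 1080p60 cap on Kepler cards
gm204_limit = megabytes_per_minute(130)   # 1440p60 / 4Kp60 cap on GM204

print(f"50 Mbps  -> {kepler_limit:.0f} MB per minute")
print(f"130 Mbps -> {gm204_limit:.0f} MB per minute")
```

At the new maximum a recording grows at a little under 1GB per minute, so anyone planning to record at these settings will want to budget disk space accordingly.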

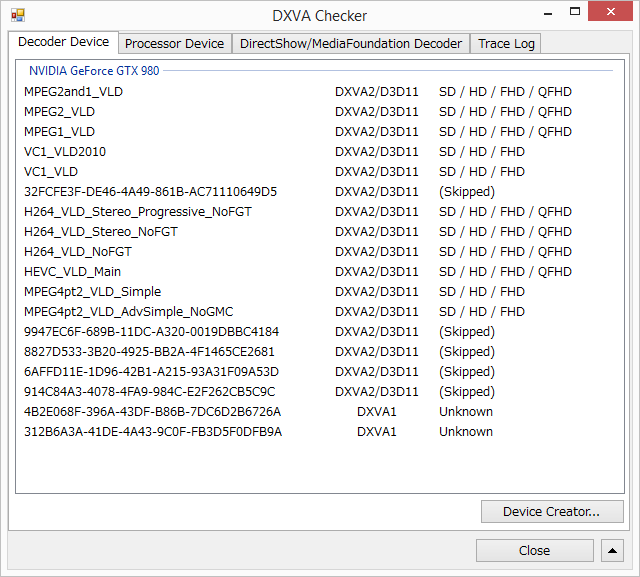

Finally, and somewhat paradoxically, Maxwell 2 inherits Kepler and Maxwell 1’s hybrid HEVC decode support. First introduced with Maxwell 1 and backported to Kepler, NVIDIA’s hybrid HEVC decode support enables HEVC decoding on these parts by using a combination of software (shader) and hardware decoding, leveraging the reusable portions of the H.264 decode block to offload to fixed function hardware what elements it can, and processing the rest in software.

A hybrid decode process is not going to be as power efficient as a full fixed function decoder, but handled in the GPU it will be much faster and more power efficient than handling the process in software. The fact that Maxwell 2 gets a hardware HEVC encoder but a hybrid HEVC decoder is in turn a result of the realities of hardware development for NVIDIA; you can’t hybridize encoding, and the hybrid decode process is good enough for now. So NVIDIA spent their efforts on getting hardware HEVC encoding going first, and at this point we’d expect to see full hardware HEVC decoding show up in a future generation of hardware (and we’d note that NVIDIA can swap VP blocks at will, so it doesn’t necessarily have to be Pascal).

VR Direct

Our final item on the list of NVIDIA’s new display features is a family of technologies NVIDIA is calling VR Direct.

VR Direct in a nutshell is a collection of technologies and software enhancements designed to improve the experience and performance of virtual reality headsets such as the Oculus Rift. From a practical perspective NVIDIA already has some experience in stereoscopic rendering through 3D Vision, and from a marketing perspective the high resource requirements of VR would be good for encouraging GeForce sales, so NVIDIA will be heavily investing into the development of VR technologies through VR Direct.

From a technical perspective the biggest thing that Oculus and other VR headset makers need from GPU manufacturers and the other companies involved in the PC ecosystem is methods of reducing the latency/input lag between a user’s input and when a finished frame becomes visible on a headset. While some latency is inevitable – it takes time to gather data and render a frame – the greater the latency the greater the disconnect will be between the user and the rendered world. In more extreme cases this can make the simulation unusable, or even trigger motion sickness in individuals whose minds can’t handle the disorientation from the latency. As a result several of NVIDIA’s features are focused on reducing latency in some manner.

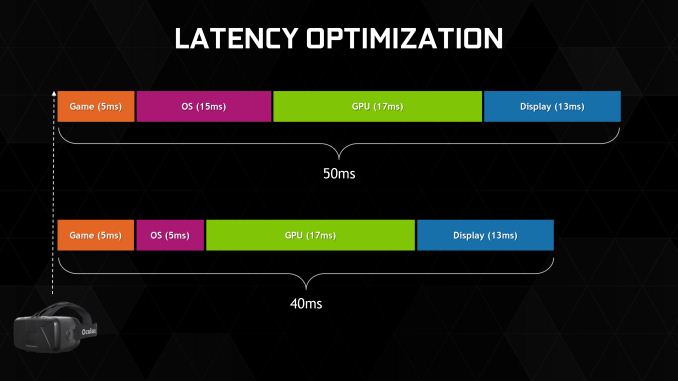

First and foremost, for VR headsets NVIDIA has implemented a low latency mode that minimizes the amount of time a frame spends being prepared by the drivers and OS. In an average case this low latency mode eliminates 10ms of OS-induced latency from the rendering pipeline, and this is the purest optimization of the bunch.

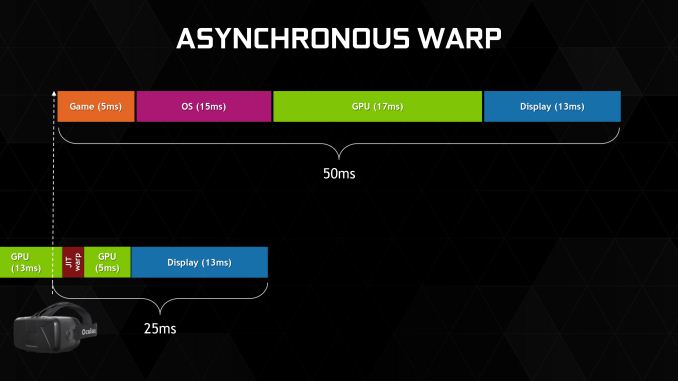

Meanwhile at the more extreme end of the feature spectrum, NVIDIA will be supporting a feature called asynchronous warp. This feature, known by Oculus developers as time warp, involves rendering a frame and then at the last possible moment updating the head tracking information from the user. After that information is acquired, the nearly finished frame then has a post-process warping applied to it to take into account head movement since the frame was initially submitted, with the ultimate goal of this warping being the simulation of what the frame should look like had it been rendered instantaneously.

From a quality perspective asynchronous warp stands to be a bit of a kludge, but it is the single most potent latency improvement among the VR Direct feature set. By modifying the frame to account for the user’s head position as late as is possible, it reduces the perceived latency by as much as 25ms.
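The idea of warping is easier to see in a toy form. The sketch below is not NVIDIA's or Oculus's implementation: real time warp reprojects the full image on the GPU using the complete head orientation. Here we assume a yaw-only head turn, a simple pinhole projection, and a single row of pixels, and all names are hypothetical.

```python
import math


def yaw_shift_pixels(render_yaw_deg, latest_yaw_deg, width_px, hfov_deg):
    """Pixel shift at the image centre for the yaw change since render time."""
    # Pinhole model: focal length in pixels from the horizontal field of view.
    focal_px = (width_px / 2) / math.tan(math.radians(hfov_deg) / 2)
    delta = math.radians(latest_yaw_deg - render_yaw_deg)
    return round(focal_px * math.tan(delta))


def warp_row(row, shift_px, fill=0):
    """Shift scene content left by shift_px pixels.

    A head turn to the right (positive shift) makes the scene slide left,
    so output column x samples the rendered frame at column x + shift_px.
    Columns with no rendered data are filled with `fill`.
    """
    n = len(row)
    out = [fill] * n
    for x in range(n):
        src = x + shift_px
        if 0 <= src < n:
            out[x] = row[src]
    return out


# The frame was rendered with the head at 0 degrees; the late head tracking
# sample says it has since turned 45 degrees (exaggerated for clarity).
shift = yaw_shift_pixels(0.0, 45.0, width_px=1000, hfov_deg=90.0)
warped = warp_row([1, 2, 3, 4, 5], 2)
print(shift, warped)
```

The filled columns at the edge of the warped row hint at why the technique is something of a kludge: the warp can only rearrange pixels that were already rendered, so anything newly exposed by the head movement has to be guessed or left blank.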

NVIDIA’s third latency optimization is less a VR optimization and more a practical effect of an existing technology, and that is Multi-Frame sampled Anti-Aliasing. As we'll discuss later in our look at this new AA mode, Multi-Frame sampled Anti-Aliasing is designed to offer 4x MSAA-like quality with 2x MSAA-like performance. Assuming a baseline of 4x MSAA, switching it out for Multi-Frame sampled Anti-Aliasing can shave an additional few milliseconds off of the frame rendering time.

Lastly, NVIDIA’s fourth and final latency optimization for VR Direct is VR SLI. This feature is simple enough: rather than using alternate frame rendering (AFR), in which each GPU renders both eyes of a frame on its own, the workload is split so that each GPU renders one eye, with both GPUs working simultaneously. AFR, though highly compatible with traditional monoscopic rendering, introduces additional latency that would be undesirable for VR. By rendering each eye separately on each GPU, NVIDIA is able to apply the performance benefits of SLI to VR without creating additional latency. Given the very high performance and low latencies required for VR, it’s currently expected that most high-end games supporting VR headsets will need SLI to achieve their necessary performance, so being able to use SLI without a latency penalty will be an important part of making VR gaming commercially viable.
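The difference between the two SLI modes can be illustrated with an idealized timing model. This is a sketch under loud assumptions: two identical GPUs, equal render time for each eye, and no synchronization or transfer overhead. The function names and the 8ms figure are ours, not NVIDIA's.

```python
def afr_render_latency_ms(eye_render_ms):
    """AFR: one GPU renders a whole stereo frame (both eyes) by itself."""
    return 2 * eye_render_ms


def afr_frame_interval_ms(eye_render_ms, gpus=2):
    """AFR: GPUs alternate frames, so finished frames arrive more often."""
    return 2 * eye_render_ms / gpus


def split_eye_latency_ms(eye_render_ms):
    """Per-eye split: both eyes render in parallel, one on each GPU."""
    return eye_render_ms


eye_ms = 8.0  # hypothetical time to render one eye's view on one GPU

print("AFR       : latency", afr_render_latency_ms(eye_ms),
      "ms, new frame every", afr_frame_interval_ms(eye_ms), "ms")
print("Split eyes: latency", split_eye_latency_ms(eye_ms),
      "ms, new frame every", split_eye_latency_ms(eye_ms), "ms")
```

In this model both modes deliver frames at the same rate, but only the per-eye split shortens the time between starting a frame and finishing it, which is the figure that matters for VR.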

On a side note, for the sake of clarity we do want to point out that many of NVIDIA’s latency optimizations come from the best practices suggestions of Oculus VR. Asynchronous warp and OS level latency optimizations for example are features that Oculus VR is suggesting for hardware developers and/or pursuing themselves. So while these features are very useful to have on GeForce hardware, they are not necessarily all ideas that NVIDIA has come up with or technologies that are limited to NVIDIA hardware (or even the Maxwell 2 architecture).

Moving on, beyond NVIDIA’s latency reduction technologies the VR Direct feature set will also include some enhancements designed to improve the quality and usability of VR. NVIDIA’s Dynamic Super Resolution (DSR) technology will be available to VR, and given the physical limits on pixel density in today’s OLED panels it will be an important tool in reducing perceptible aliasing. NVIDIA will also be extending VR support to GeForce Experience at a future time, simplifying the configuration of VR-enabled games. For VR on GeForce Experience NVIDIA wants to go beyond just graphical settings and auto-configure inputs as well, handling remapping of inputs to head/body tracking for the user automatically.

Ultimately at this point VR Direct is more of a forward looking technology than it is something applicable today – the first consumer Oculus Rift hasn’t even been announced, let alone shipped – but by focusing on VR early NVIDIA is hoping to improve the speed and ease of VR development, and have the underpinnings in place once consumer VR gear becomes readily available.

274 Comments

squngy - Wednesday, November 19, 2014 - link

It is explained in the article.

Because GTX980 makes so many more frames the CPU is worked a lot harder. The W in those charts are for the whole system so when the CPU uses more power it makes it harder to directly compare GPUs

galta - Friday, September 19, 2014 - link

The simple fact is that a GPU more powerful than a GTX 980 does not make sense right now, no matter how much we would love to see it.

See, most folks are still gaming @ 1080, some of us are moving up to 1440. Under these scenarios, a GTX 980 is more than enough, even if quality settings are maxed out. Early reviews show that it can even handle 4K with moderate settings, and we should expect further performance gains as drivers improve.

Maybe in a year or two, when 4K monitors become more relevant, a more powerful GPU would make sense. Now they simply don't.

For the moment, nVidia's movement is smart and commendable: power efficiency!

I mean, such a powerful card at only 165W! If you are crazy/wealthy enough to have two of them in SLI, you can cut your power demand by 170W, with following gains in temps and/or noise, and a less expensive PSU, if you're building from scratch.

In the end, are these new cards great? Of course they are!

Does it make sense to up-grade right now? Only if you are running a 5xx or 6xx series card, or if your demands have increased dramatically (multi-monitor set-up, higher res. etc.).

Margalus - Friday, September 19, 2014 - link

A more powerful gpu does make sense. Some people like to play their games with triple monitors, or more. A single gpu that could play at 7680x1440 with all settings maxed out would be nice.

galta - Saturday, September 20, 2014 - link

How many of us demand such power? The ones who really do can go SLI and OC the cards.

nVidia would be spending billions for a card that would sell thousands. As I said: we would love the card, but still no sense

Again, I would love to see it, but in the foreseeable future, I won't need it. Happier with noise, power and heat efficiency.

Da W - Monday, September 22, 2014 - link

Here's one that demands such power. I play 3600*1920 using 3 screens, almost 4k, 1/3 the budget, and still useful for, you know, working.

Don't want sli/crossfire. Don't want a space heater either.

bebimbap - Saturday, September 20, 2014 - link

gaming at 1080@144 or 1080 with min fps of 120 for ulmb is no joke when it comes to gpu requirement. Most modern games max at 80-90fps on a OC'd gtx670 you need at least an OC'd gtx770-780. I'd recommend 780ti. and though a 24" 1080 might seem "small" you only have so much focus. You can't focus on periphery vision you'd have to move your eyes to focus on another piece of the screen. the 24"-27" size seems perfect so you don't have to move your eyes/head much or at all.

the next step is 1440@144 or min fps of 120 which requires more gpu than @ 4k60. as 1440 is about 2x 1080 you'd need a gpu 2x as powerful. so you can see why nvidia must put out a powerful card at a moderate price point. They need it for their 144hz gsync tech and 3dvision

imo the ppi race isn't as beneficial as higher refresh rate. For TVs manufacturers are playing this game of misinformation so consumers get the short end of the stick, but having a monitor running at 144hz is a world of difference compared to 60hz for me. you can tell just from the mouse cursor moving across the screen. As I age I realize every day that my eyes will never be as good as yesterday, and knowing that I'd take a 27" 1440p @ 144hz any day over a 28" 5k @ 60hz.

Laststop311 - Sunday, September 21, 2014 - link

Well it all depends on viewing distance. I use a 30" 2560x1600 dell u3014 to game on currently since it's larger i can sit further away and still have just as good of an experience as a 24 or 27 thats closer. So you can't just say larger monitors mean you can't focus on it all cause you can just at a further distance.

theuglyman0war - Monday, September 22, 2014 - link

The power of the newest technology is and has always been an illusion because the creation of games will always be an exercise in "compromise". Even a game like WOW that isn't crippled by console consideration is created by the lowest common denominator demographic in the PC hardware population. In other words... ( if u buy it they will make it vs. if they make it I will upgrade ). Besides the unlimited reach of an openworld's "possible" textures and vtx counts.

"Some" artists are of the opinion that more hardware power would result in a less aggressive graphic budget! ( when the time spent wrangling a synced normal mapped representation of a high resolution sculpt or tracking seam problems in lightmapped approximations of complex illumination with long bake times can take longer than simply using that original complexity ). The compromise can take more time than if we had hardware that could keep up with an artist's imagination.

In which case I gotta wonder about the imagination of the end user that really believes his hardware is the end to any graphics progress?

ppi - Friday, September 19, 2014 - link

On desktop, all AMD needs to do is to lower price and perhaps release OC'd 290X to match 980 performance. It will reduce their margins, but they won't be irrelevant on the market, like in CPUs vs Intel (where AMD's most powerful beasts barely touch Intel's low-end, apart from some specific multi-threaded cases)

Why so simple? On desktop:

- Performance is still #1 factor - if you offer more per your $, you win

- Noise can be easily resolved via open air coolers

- Power consumption is not such a big deal

So ... if AMD card at a given price is as fast as Maxwell, then they are clearly worse choice. But if they are faster?

In mobile, however, they are screwed big way, unless they have something REAL good in their sleeve (looking at Tonga, I do not think they do, as I am convinced AMD intends to pull off another HD5870 (i.e. be on the new process node first), but it apparently did not work this time around.)

Friendly0Fire - Friday, September 19, 2014 - link

The 290X already is effectively an overclocked 290 though. I'm not sure they'd be able to crank up power consumption reliably without running into heat dissipation or power draw limits.

Also, they'd have to invest in making a good reference cooler.