The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Metro: Last Light

As always, kicking off our look at performance is 4A Games’ latest entry in their Metro series of subterranean shooters, Metro: Last Light. The original Metro 2033 was a graphically punishing game for its time, and Metro: Last Light is punishing in its own right. On the other hand, it scales well with resolution and quality settings, so it’s still playable on lower-end hardware.

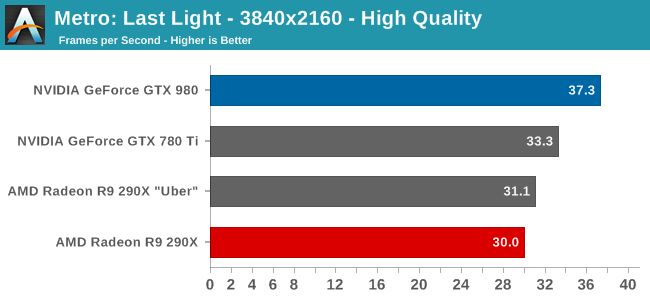

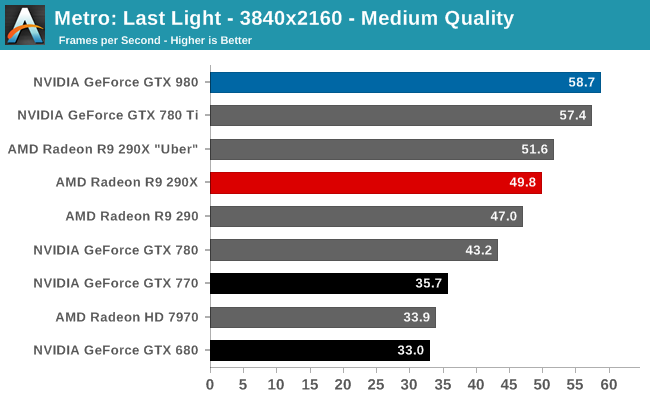

As has become customary in our last couple of high-end video card reviews, we’re going to run all of our 4K benchmarks at both high quality and a lower quality level. In practice not even GTX 980 is going to be fast enough to comfortably play most of these games at 3840x2160 with everything cranked up; that is multi-GPU territory. For that reason we’re including a lower quality setting to show what performance looks like at settings more realistic for a single GPU.
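To put the jump to 4K in perspective, the pixel arithmetic alone is telling. The minimal sketch below assumes that "2560" in these charts means 2560x1440 and adds 1920x1080 only as a familiar reference point. Pixel count is only a rough indicator of the extra per-frame work; framerates do not scale perfectly with it.

```python
# Pixel counts for the resolutions discussed in this review.
# Assumption: "2560" refers to 2560x1440; 1920x1080 is included only for reference.
RESOLUTIONS = {
    "1920x1080": (1920, 1080),
    "2560x1440": (2560, 1440),
    "3840x2160": (3840, 2160),
}


def pixel_count(width: int, height: int) -> int:
    """Number of pixels rendered per frame at a given resolution."""
    return width * height


def relative_load(res: str, baseline: str) -> float:
    """Ratio of pixels per frame at `res` versus `baseline`."""
    return pixel_count(*RESOLUTIONS[res]) / pixel_count(*RESOLUTIONS[baseline])


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        print(f"{name}: {pixel_count(w, h):,} pixels, "
              f"{relative_load(name, '2560x1440'):.2f}x the pixels of 2560x1440")
```

3840x2160 works out to 2.25 times the pixels of 2560x1440 and 4 times those of 1920x1080, which is why a card that comfortably clears 60fps at 2560 can fall to around 30fps at 4K with the same settings.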

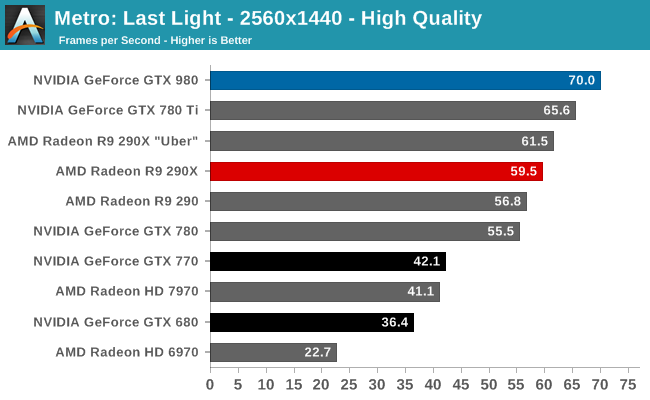

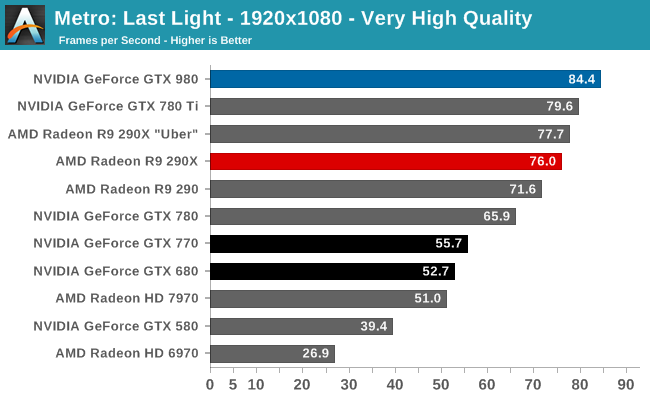

GTX 980 comes out swinging in our first set of benchmarks. If there was any doubt that it could surpass the likes of R9 290XU and GTX 780 Ti, this first benchmark puts those doubts to rest. At all resolutions and quality settings it comes out on top, beating NVIDIA’s former consumer flagship, GTX 780 Ti, by anywhere from a few percent up to 12% at 4K with high quality settings. Against the R9 290XU, meanwhile, it holds a consistent 13% lead at 2560 and at 4K Medium.

In absolute terms this is enough performance to keep its average framerates well over 60fps at 2560, and even at 3840 Medium it comes just short of crossing the 60fps mark. High quality mode will take the wind out of GTX 980’s sails though, pushing framerates back into the borderline 30fps range.

Looking at NVIDIA’s last-generation parts for a moment, the performance gains over the lower-tier GK110-based GTX 780 are around 25-35%. This is about where you’d expect a new GTX x80 card to land given NVIDIA’s quasi-regular 2-year performance upgrade cadence. Extended out to a full 2 years, the performance advantage over GTX 680 is anywhere between 60% and 92% depending on resolution. NVIDIA proclaims that GTX 980 will achieve 2x the performance per watt of GTX 680, but because GTX 980 is designed to operate at a lower TDP than GTX 680, that efficiency gain does not translate into 2x the performance; as we can see, the lead over GTX 680 falls short of doubling in most cases.
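The reasoning about performance per watt reduces to one multiplication: performance equals performance per watt times watts. The sketch below is a back-of-the-envelope check, not a measurement. It assumes NVIDIA's rated TDPs of 165W for GTX 980 and 195W for GTX 680, that both cards actually run at their full TDP in games, and that the 2x perf/W claim holds exactly.

```python
# Back-of-the-envelope check on the "2x performance per watt" claim.
# Assumptions (not measured here): the TDPs are NVIDIA's rated figures,
# both cards draw their full TDP in games, and the 2x claim holds exactly.
GTX_680_TDP_W = 195.0
GTX_980_TDP_W = 165.0
CLAIMED_PERF_PER_WATT_GAIN = 2.0


def implied_speedup(perf_per_watt_gain: float, new_tdp: float, old_tdp: float) -> float:
    """Performance ratio implied by a perf/W gain at a different power level.

    performance = (performance / watt) * watts, so the speedup is the
    perf/W ratio multiplied by the ratio of the two power budgets.
    """
    return perf_per_watt_gain * (new_tdp / old_tdp)


if __name__ == "__main__":
    speedup = implied_speedup(CLAIMED_PERF_PER_WATT_GAIN, GTX_980_TDP_W, GTX_680_TDP_W)
    print(f"Implied GTX 980 vs GTX 680 speedup: {speedup:.2f}x")
```

Under these assumptions the implied speedup is roughly 1.69x, about a 69% gain, which sits inside the 60% to 92% range reported above. It is only an estimate, since real power draw and scaling vary from game to game.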

274 Comments

jmunjr - Friday, September 19, 2014

Wish you had done a GTX 970 review as well, like many other sites did, since far more of us care about that card than the 980, given that it's cheaper.

Gonemad - Friday, September 19, 2014

Apparently, if I want to run anything under the sun at 1080p cranked to full at 60fps, I will need to get myself a GTX 980 and a suitable system to run it with, and forget mid-range cards. That should put a huge hole in my wallet.

Oh yes, the others can run stuff at 1080p, but you have to keep tweaking drivers, turning AA on, turning AA off, what a chore. And the age-old joke: yes, it RUNS Crysis, at the resolution I'd like.

Didn't the card, by any chance, actually benefit from being fabricated at 28nm, by spreading its heat over a larger area? If the whole thing were hypothetically just shrunk to 14nm, wouldn't all that 165W of power be dissipated over a smaller area (1/4 of the area?), so that this thing would hit the throttle and stay there?

Or, by being made smaller, would it actually dissipate even less heat and still get faster?
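The question above comes down to power density, and the arithmetic can be sketched roughly as follows. This is an illustration under idealized assumptions: a 28nm to 14nm shrink is taken to halve every linear dimension (real process names do not map this cleanly), the 165W figure comes from the comment, and "ideal Dennard scaling" means dynamic power P = C·V²·f with capacitance and voltage shrinking by 1/k and frequency rising by k.

```python
# Rough power-density arithmetic for a hypothetical 28nm -> 14nm shrink.
# Assumptions (illustrative only): ideal area scaling with the square of the
# feature-size ratio; process node names treated as literal dimensions.


def area_scale(old_nm: float, new_nm: float) -> float:
    """Ideal die-area ratio when linear dimensions scale by new_nm / old_nm."""
    return (new_nm / old_nm) ** 2


def density_ratio(power_ratio: float, area_ratio: float) -> float:
    """Change in power per unit area: (P_new / P_old) / (A_new / A_old)."""
    return power_ratio / area_ratio


def dennard_power_ratio(k: float) -> float:
    """Dynamic power P = C * V^2 * f under ideal Dennard scaling by factor k.

    C and V shrink by 1/k while f rises by k, so P scales by 1/k^2.
    """
    c, v, f = 1 / k, 1 / k, k
    return c * v ** 2 * f


if __name__ == "__main__":
    k = 28 / 14                      # linear shrink factor
    area = area_scale(28, 14)        # fraction of the original die area
    same_power = density_ratio(1.0, area)
    dennard = density_ratio(dennard_power_ratio(k), area)
    print(f"Area ratio: {area:.2f}")
    print(f"Power density if power stays at 165W: {same_power:.1f}x")
    print(f"Power density under ideal Dennard scaling: {dennard:.1f}x")
```

If the chip kept drawing 165W on a quarter of the area, its power density would quadruple; under ideal Dennard scaling it would stay unchanged. Real processes land somewhere in between, because supply voltage no longer shrinks as it once did.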

Yojimbo - Friday, September 19, 2014

I think that it depends on the process. If Dennard scaling were in effect, then it should dissipate proportionally less heat. But to my understanding, Dennard scaling has broken down somewhat in recent years, so I think heat density could be a concern. However, I don't know if it would be accurate to say that the chip benefited from the 28nm process, since I think it was originally designed with the 20nm process in mind, and the problem with putting the chip on that process had to do with cost and yields. So, presumably, the heat dissipation issues were already worked out for that process?

AnnonymousCoward - Friday, September 26, 2014

The die size doesn't really matter for heat dissipation when the external heat sink is the same size; the thermal resistance from die to heat sink would be similar.

danjw - Friday, September 19, 2014

I would love to see these built on Intel's 14nm process, or even its 22nm one. I think neither Nvidia nor AMD is comfortable letting Intel look at their technology, despite the NDAs and firewalls that would be part of any such agreement.

Anyway, thanks for the great review, Ryan.

Yojimbo - Friday, September 19, 2014

Well, if one goes by Jen-Hsun Huang's (Nvidia's CEO) comments from a year or two ago, Nvidia would have liked Intel to manufacture their SoCs for them, but it seems Intel was unwilling. I don't see why Nvidia would be willing to have Intel manufacture its SoCs but not its GPUs, given that by then Nvidia must already have planned to put its desktop GPU technology into its SoCs, unless the one-year delay between the parts makes a difference.

r13j13r13 - Friday, September 19, 2014

Not until AMD's 300 series comes out with native DirectX 12 support.

Arakageeta - Friday, September 19, 2014

No interpretation of the compute graphs whatsoever? Could you at least report the output of CUDA's deviceQuery tool?

texasti89 - Friday, September 19, 2014

I'm truly impressed with this new line of GPUs. To achieve this leap in efficiency on the same transistor feature size is a great achievement. Bravo TSMC & Nvidia. I feel confident that we will soon get this amazing 980 performance level in gaming laptops once the technology scales to the 10nm process. Keep up the great work.

stateofstatic - Friday, September 19, 2014

Spoiler alert: Intel is building a new fab in Hillsboro, OR specifically for this purpose...