The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Meet the GeForce GTX 980

For the physical design of the reference GeForce GTX 980, NVIDIA is clearly iterating on previous designs rather than coming up with something from scratch. With that said however, the idiom of “if it ain’t broke, don’t fix it” has been very applicable to NVIDIA over the last year and a half since the launch of the GTX Titan and its high-end cooler. The GTX Titan’s cooler set a new bar in build quality and performance for a blower design that is to this day unmatched, and for that reason NVIDIA has reused this design for the GTX 780, GTX 780 Ti, GTX Titan Black, and now the GTX 980. What this means for the GTX 980 is that its design comes from a very high pedigree, one that we believe shall serve it well.

At a high level, GTX 980 recycles the basic cooler design and aesthetics of GTX 780 Ti and GTX Titan Black. This means we’re looking at a high performance blower design that is intended to offer the full heat-exhaustion benefits of a blower, but without the usual tradeoff in acoustics. The shroud of the card is composed of a cast aluminum housing and held together using a combination of rivets and screws. NVIDIA has also kept the black accenting first introduced by its predecessors, giving the card distinct black lettering and a black tinted polycarbonate window. The card measures 10.5” long overall, which again is the same length as the past high-end GTX cards.

Cracking open the card and removing the shroud exposes the card’s fan and heatsink assembly. Once again NVIDIA is lining the entire card with an aluminum baseplate, which provides heatsinking capabilities for the VRMs and other discrete components below it, along with providing additional protection for the board. The primary GPU heatsink is fundamentally the same as before, retaining the same wedged shape and angled fins.

However in one of the only major deviations from the earlier GTX Titan cooler, at the base NVIDIA has dropped the vapor chamber design for a simpler (and admittedly less effective) heatpipe design that uses a trio of heatpipes to transfer heat from the GPU to the heatsink. In the case of the GTX Titan and other GK110 cards a vapor chamber was deemed necessary due to the GPU’s 250W TDP, but with GM204’s much lower 165W TDP, the advanced performance of the vapor chamber should not be necessary. We would of course like to see a vapor chamber here anyhow, but we admittedly can’t fault NVIDIA for going without it on such a low TDP part.

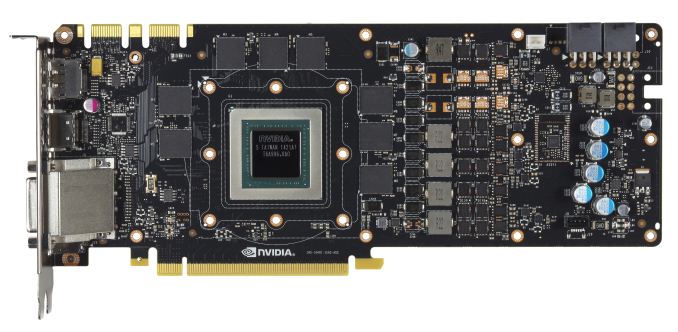

Drilling down to the PCB, we find a PCB design not all that far removed from NVIDIA’s GK110 PCBs. At the heart of the card is of course the new GM204 GPU, which although some 150mm² smaller than GK110, is still a hefty GPU on its own. Paired with this GPU are the eight 4Gb 7GHz Samsung GDDR5 modules that surround it, composing the 4GB of VRAM and 256-bit memory bus that GM204 interfaces with.
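
As a quick sanity check on the memory figures above, the arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 32-bit interface per GDDR5 chip is our assumption (it is what makes eight chips add up to a 256-bit bus), "7GHz" is read as an effective data rate of 7Gbps per pin, and the function names are hypothetical. The peak bandwidth it prints is derived from those assumptions rather than quoted from this page.

```python
# Sanity check of the GTX 980 memory configuration described in the text.
# Assumptions for illustration only: each GDDR5 chip exposes a 32-bit
# interface, and "7GHz" means an effective data rate of 7 Gbps per pin.

CHIP_COUNT = 8             # GDDR5 modules around the GPU
CHIP_DENSITY_GBIT = 4      # gigabits per module
CHIP_INTERFACE_BITS = 32   # assumed per-module interface width
DATA_RATE_GBPS = 7         # effective data rate per pin


def capacity_gbyte(chips, density_gbit):
    """Total VRAM in gigabytes (8 bits per byte)."""
    return chips * density_gbit / 8


def bus_width_bits(chips, interface_bits):
    """Aggregate memory bus width in bits."""
    return chips * interface_bits


def peak_bandwidth_gbyte_s(bus_bits, rate_gbps):
    """Theoretical peak bandwidth in GB/s for the given bus and data rate."""
    return bus_bits * rate_gbps / 8


bus = bus_width_bits(CHIP_COUNT, CHIP_INTERFACE_BITS)
print("Capacity (GB):", capacity_gbyte(CHIP_COUNT, CHIP_DENSITY_GBIT))
print("Bus width (bits):", bus)
print("Peak bandwidth (GB/s):", peak_bandwidth_gbyte_s(bus, DATA_RATE_GBPS))
```

Under these assumptions the eight modules work out to the 4GB and 256-bit figures quoted above.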

Towards the far side of the PCB we find the card’s power delivery components, which are arranged in a 4+1 phase design. Here NVIDIA is using 4 power phases for the GPU itself, and then another phase for the GDDR5. Like the 5+1 phase design on GK110 cards, this configuration is more than enough for stock operations and mild overclocking, however hardcore overclockers will probably end up gravitating towards custom designs with more heavily overbuilt power delivery systems. Though it is interesting to note that NVIDIA’s design has open pads for 2 more power phases, meaning there is some headroom left in the PCB design. Meanwhile feeding the power delivery system is a pair of 6-pin PCIe sockets, giving the card a combined power delivery ceiling of 225W once the 75W from the PCIe slot is counted, which is still well above the maximum TDP NVIDIA allows for this card.
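
The 225W ceiling can be reproduced with a short sketch. It assumes the usual PCIe budget of 75W from the slot and 75W from each 6-pin connector; the helper names are hypothetical and the 165W figure is the card's reference TDP.

```python
# Rough power-budget arithmetic for a card fed by the PCIe slot plus
# auxiliary 6-pin connectors.
# Assumption: 75 W from the slot and 75 W per 6-pin connector.

PCIE_SLOT_W = 75
SIX_PIN_W = 75


def board_power_ceiling_w(six_pin_count, slot_w=PCIE_SLOT_W, six_pin_w=SIX_PIN_W):
    """Combined power delivery ceiling in watts."""
    return slot_w + six_pin_count * six_pin_w


def headroom_w(ceiling_w, tdp_w):
    """Watts left between the delivery ceiling and a given TDP."""
    return ceiling_w - tdp_w


ceiling = board_power_ceiling_w(six_pin_count=2)
print("Ceiling (W):", ceiling)
print("Headroom over 165 W TDP (W):", headroom_w(ceiling, 165))
```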

What may be the most interesting – or at least most novel – aspect of GTX 980 isn’t even found on the front side of the card, but rather it’s what’s found on the back side. Back after a long absence is a backplate for the card, which runs the entire length of the card and completely covers the back side of the PCB, leaving no element exposed except for the SLI connectors above it and the PCIe connector below it.

Generally speaking backplates are nice to have on video cards. Though they don’t provide any kind of meaningful mechanical/thermal benefit, they do serve to protect a card by reducing how much of the relatively vulnerable PCB is exposed, and similarly protect the user by keeping them from getting jabbed by the soldered tips of discrete components. However backplates typically come with one very big drawback, which is that the 2mm or so of space they occupy is not really their space, and encroaches on anything above it. For a single video card this is not a concern, but when pairing up video cards for SLI, if the cards are directly next to each other this extra 2mm makes all the difference in the world for cooling, blocking valuable space for airflow and otherwise suffocating the card unlucky enough to get blocked.

In more recent years motherboard manufacturers have done a better job of designing their boards by avoiding placing the best PCIe x16 slots next to each other, but there are still cases where these cards must be packed tightly together, such as in micro-ATX cases and when utilizing tri/quad-SLI. As a result of this clash between the benefits and drawbacks of the backplate, for GTX 980 NVIDIA has engineered a solution that allows them to include a backplate and simultaneously not impede the airflow of closely packed cards, and that is a partially removable backplate.

For GTX 980 a segment of the backplate towards the top back corner is detachable from the rest, and removing it exposes the PCB underneath. Based on studying the airflow of video cards with and without a backplate, NVIDIA tells us that they have been able to identify what portion of the backplate is responsible for impeding most of the airflow in an SLI configuration, and that they in turn have made this segment removable so as to be able to offer the full benefits of a backplate while also mitigating the airflow problems. Interestingly this segment is actually quite small – it’s only 34mm tall – making it much shorter than the radial fan on the front of the card, but NVIDIA tells us that this is all that needs to be removed to let a blocked card breathe. In our follow-up to the GTX 980 next week we will be looking at SLI performance, and this will include measuring the cooling impact of the removable backplate segment.

Moving on, beginning with GTX 980 NVIDIA’s standard I/O configuration has dramatically changed, and so for that matter has the design of their I/O shield. First introduced with GTX Titan Z and now present on GTX 980, NVIDIA has been working to maximize the amount of airflow available through their I/O bracket by replacing standard rectangular vents with triangular vents across the whole card. This results in pretty much every square centimeter of the card not occupied by an I/O port having venting through it, leading to very little of the card actually being blocked by the I/O shield.

Meanwhile starting with GTX 980, NVIDIA is introducing their new standard I/O configuration. NVIDIA has finally dropped the second DL-DVI port, and in its place they have installed a pair of full size DisplayPorts. This brings the total I/O configuration up to 1x DL-DVI-I, 3x DisplayPort 1.2, and 1x HDMI 2.0. The inclusion of more DisplayPorts has been a long time coming and I’m glad to see that NVIDIA has finally gone this route. DisplayPorts offer more functionality than any other type of port and can easily be converted to HDMI or SL-DVI as necessary. More importantly for NVIDIA, with 3 DisplayPorts NVIDIA can now drive 3 G-Sync monitors off of a single card, making G-Sync Surround viable for the first time.

Speaking of I/O, we’ll briefly note that NVIDIA’s SLI connectors are still present, with the pair of connectors allowing up to quad-SLI. However we’d also note that for anyone hoping that NVIDIA would have an all-PCIe multi-GPU solution analogous to AMD’s XDMA engine, Maxwell 2 will not be such a product. Physical bridges are still necessary for SLI, with NVIDIA splitting up the workload over SLI and PCIe in the case of very high resolutions such as 4K.

Wrapping up our look at the physical build quality of the GTX 980, NVIDIA has done a good job iterating on what was already an excellent design with the GTX Titan and its cooler. The backplate, though not a remarkable difference, does give the card that last bit of elegance that GTX Titan and its GK110 siblings never had, as the card is now clad in metal from top to bottom. As silly as it sounds, other than the PCIe connector the GTX 980 may as well be a complete consumer electronic product of its own, as it’s certainly built like one.

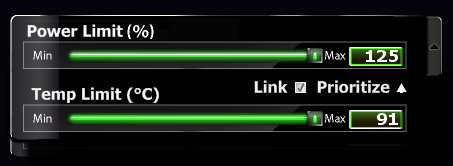

Finally, along with the hardware we also want to quickly summarize the GPU Boost 2.0 limits NVIDIA has chosen for the GTX 980, to better illustrate what the card is capable of. Like the other high-end NVIDIA cards before it, NVIDIA has opted to set the GTX 980’s temperature target at 80C, with a maximum target of 91C and an absolute thermal threshold of 95C. Meanwhile the card’s 165W TDP limit can be increased by as much as 25% to 206W, or 41W over its reference limit.
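
The power target arithmetic is simple enough to sketch. The function names are hypothetical, and truncating to whole watts is our assumption, chosen because it matches the 206W figure quoted above.

```python
# Power-target arithmetic for the GTX 980's GPU Boost 2.0 limits.
# Assumption: the raised limit is truncated to whole watts.

REFERENCE_TDP_W = 165
MAX_INCREASE_PCT = 25


def raised_power_limit_w(tdp_w, increase_pct):
    """Power limit in whole watts after raising the target by increase_pct percent."""
    return tdp_w * (100 + increase_pct) // 100


def extra_watts(tdp_w, increase_pct):
    """Watts gained over the reference limit."""
    return raised_power_limit_w(tdp_w, increase_pct) - tdp_w


print("Max power limit (W):", raised_power_limit_w(REFERENCE_TDP_W, MAX_INCREASE_PCT))
print("Gain over reference (W):", extra_watts(REFERENCE_TDP_W, MAX_INCREASE_PCT))
```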

It’s interesting to note that despite the fact that the GTX Titan cooler was designed for a 250W card, GTX 980 will still see some temperature throttling under heavy, sustained loads. NVIDIA seems to have invested most of their cooling gains into acoustics, which has produced a card with amazing acoustic performance given the combination of the blower and the chart-topping performance, but it has also produced a card that is still going to throttle from time to time.

274 Comments

Kutark - Sunday, September 21, 2014 - link

I'd hold on to it. Thats still a damn fine card. Honestly you could probably find a used one on ebay for a decent price and SLI it up.

IMO though id splurge for a 970 and call it a day. I've got dual 760's right now, first time i've done SLI in prob 10 years. And honestly, the headaches just arent worth it. Yeah, most games work, but some games will have weird graphical issues (BF4 near release was a big one, DOTA 2 doesnt seem to like it), others dont utilize it well, etc. I kind of wish id just have stuck with the single 760. Either way, my 2p

SkyBill40 - Wednesday, September 24, 2014 - link

@ Kutark:

Yeah, I tried to buy a nice card at that time despite wanting something higher than a 660Ti. But, as my wallet was the one doing the dictating, it's what I ended up with and I've been very happy. My only concern with a used one is just that: it's USED. Electronics are one of those "no go" zones for me when it comes to buying second hand since you have no idea about the circumstances surrounding the device and seeing as it's a video card and not a Blu Ray player or something, I'd like to know how long it's run, if it's been OC'd or not, and the like. I'd be fine with buying another one new but not for the prices I'm seeing that are right in line with a 970. That would be dumb.

In the end, I'll probably wait it out a bit more and decide. I'm good for now and will probably buy a new 144Hz monitor instead.

Kutark - Sunday, September 21, 2014 - link

Psshhhhh.... I still have my 3dfx Voodoo SLI card. Granted its just sitting on my desk, but still!!!

In all seriousness though, my roommate, who is NOT a gamer, is still using an old 7800gt card i had laying around because the video card in his ancient computer decided to go out and he didnt feel like building a new one. Can't say i blame him, Core 2 quad's are juuust fine for browsing the web and such.

Kutark - Sunday, September 21, 2014 - link

Voodoo 2, i meant, realized i didnt type the 2.

justniz - Tuesday, December 9, 2014 - link

>> the power bills are so ridiculous for the 8800 GTX!

Sorry but this is ridiculous. Do the math.

Best info I can find is that your card is consuming 230w.

Assuming you're paying 15¢/kWh, even gaming for 12 hours a day every day for a whole month will cost you $12.59. Doing the same with a gtx980 (165w) would cost you $9.03/month.

So you'd be paying maybe $580 to save $3.56 a month.
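
For anyone who wants to check the math above, here is a rough Python sketch. The wattages, 15¢/kWh rate, 12 hours a day and $580 price are the figures from this comment; taking a month as 365/12 days is an assumption (it happens to reproduce the dollar amounts quoted), and the function name is made up for illustration.

```python
# Rough monthly electricity cost for a card drawing a constant wattage.
# Assumption: a "month" is 365/12 days, which reproduces the quoted figures.

DAYS_PER_MONTH = 365 / 12


def monthly_cost_usd(watts, hours_per_day, usd_per_kwh, days=DAYS_PER_MONTH):
    """Cost in dollars of running a load of `watts` for hours_per_day each day."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * usd_per_kwh


old_card = monthly_cost_usd(230, 12, 0.15)   # 8800 GTX estimate from the comment
new_card = monthly_cost_usd(165, 12, 0.15)   # GTX 980 at its 165 W TDP
savings = old_card - new_card
payback_months = 580 / savings               # $580 is the comment's rough card price

print(f"Old card: ${old_card:.2f}/month")
print(f"New card: ${new_card:.2f}/month")
print(f"Savings:  ${savings:.2f}/month")
print(f"Months to recoup $580: {payback_months:.0f}")
```

At that rate the power savings alone would take well over a decade to pay for the card.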

LaughingTarget - Friday, September 19, 2014 - link

There is a major difference between market capitalization and available capital for investment. Market Cap is just a rote multiplication of the number of shares outstanding by the current share price. None of this is available for company use and is only an indirect measurement of how well a company is performing. Nvidia has $1.5 billion in cash and $2.5 billion in available treasury stock. Attempting to match Intel's process would put a significant dent into that with little indication it would justify the investment. Nvidia already took on a considerable chunk of debt going into this year as well, which would mean that future offerings would likely go for a higher cost of debt, making such an investment even harder to justify.

While Nvidia is blowing out AMD 3:1 on R&D and capacity, Intel is blowing both of them away, combined, by a wide margin. Intel is dropping $10 billion a year on R&D, which is a full $3 billion beyond the entire asset base of Nvidia. It's just not possible to close the gap right now.

Silma - Saturday, September 20, 2014 - link

I don't think you realize how many billion dollars you need to spend to open a 14 nm factory, not even counting R&D & yearly costs.

It's humongous, there is a reason why there are so few foundries in the world.

sp33d3r - Saturday, September 20, 2014 - link

Well, if the NVIDIA/AMD CEOs is blind enough and cannot see it coming, then intel are gonna manufacture their next integrated graphics on a 10 or 8 nm chip and though immature will be a tough competition to them in terms of power and efficiency and even weight.

remember currently pcs load integrated graphics as a must by intel and people add third party graphics only 'cause intels is not good enough literally adding weight of two graphics cards (Intels and third partys) to the product. Its all worlds apart more convenient when integrated graphics outperforms or able to challenge third party GPUs, we would just throw away NVIDIA and guess what they wont remain a monopoly anymore rather completely wiped out

Besides Intels integrated graphics are getting more mature in terms of not just die size with every launch, just compare 4000s with 5000s, it wont be long before they catch up.

wiyosaya - Friday, September 26, 2014 - link

I have to agree that it is partly not about the verification cost breaking the bank. However, what I think is the more likely reason is that since the current node works, they will try to wring every penny out of that node. Look at the prices for the Titan Z. If this is not an attempt to fleece the "gotta have it buyer," I don't know what is.

Ushio01 - Thursday, September 18, 2014 - link

Wouldn't paying to use the 22nm fabs be a better idea as there about to become under used and all the teething troubles have been fixed.