The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

Having already seen the Maxwell architecture in action with the GTX 750 series, the GTX 980 and its GM204 Maxwell 2 GPU have a very well regarded reputation to live up to. GTX 750 Ti shattered old energy efficiency marks, and we expect much the same of GTX 980. After all, NVIDIA tells us that they can deliver more performance than the GTX 780 Ti for less power than the GTX 680, and that will be no easy feat.

GeForce GTX 980 Voltages

| GTX 980 Boost Voltage | GTX 980 Base Voltage | GTX 980 Idle Voltage |
|---|---|---|
| 1.225v | 1.075v | 0.856v |

We’ll start as always with voltages, which in this case I think makes for one of the more interesting aspects of GTX 980. Despite the fact that GM204 is a pretty large GPU at 398mm² and is clocked at over 1.2GHz, NVIDIA is still promoting a TDP of just 165W. One way to curb power consumption is to build a processor wide-and-slow, and these voltage numbers are solid proof that NVIDIA has not done that.

With a load voltage of 1.225v, NVIDIA is driving GM204 as hard as (if not harder than) any of the Kepler GPUs. This means that all of NVIDIA’s power savings – the key to driving 5.2 billion transistors at under 165W – lie with the other architectural optimizations the company has made, because at over 1.2v they certainly aren’t deriving any advantages from operating at low voltages.
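To put those voltages in context, a common first-order rule of thumb for CMOS logic is that dynamic power scales with capacitance × voltage² × frequency. The sketch below applies only that rule of thumb to the three voltages in the table above. It ignores leakage and any clockspeed differences between the states, and the function name is ours rather than anything from NVIDIA.

```python
# First-order CMOS rule of thumb: dynamic power ~ C * V^2 * f.
# Holding capacitance and frequency fixed isolates the voltage term.
# Voltages come from the GTX 980 voltage table; the model itself is an
# assumption and ignores leakage power entirely.

VOLTAGES = {"boost": 1.225, "base": 1.075, "idle": 0.856}


def relative_dynamic_power(v: float, v_ref: float) -> float:
    """Dynamic power at voltage v relative to v_ref, all else being equal."""
    return (v / v_ref) ** 2


if __name__ == "__main__":
    for state, volts in VOLTAGES.items():
        ratio = relative_dynamic_power(volts, VOLTAGES["base"])
        print(f"{state:>5}: {volts:.3f}v -> {ratio:.2f}x the base-voltage figure")
```

By this estimate the boost voltage alone costs roughly 30% more dynamic power than the base voltage at the same clock, which is why the efficiency gains have to come from somewhere other than voltage.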

Next up, let’s take a look at average clockspeeds. As we alluded to earlier, NVIDIA has maintained the familiar 80C default temperature limit for GTX 980 that we saw on all other high-end GPU Boost 2.0 enabled cards. Furthermore as a result of reinvesting most of their efficiency gains into acoustics, what we are going to see is that GTX 980 still throttles. The question then is by how much.

GeForce GTX 980 Average Clockspeeds

| Benchmark | Clockspeed |
|---|---|
| Max Boost Clock | 1252MHz |
| Metro: LL | 1192MHz |
| CoH2 | 1177MHz |
| Bioshock | 1201MHz |
| Battlefield 4 | 1227MHz |
| Crysis 3 | 1227MHz |
| TW: Rome 2 | 1161MHz |
| Thief | 1190MHz |
| GRID 2 | 1151MHz |
| Furmark | 923MHz |

What we find is that while our GTX 980 has an official boost clock of 1216MHz, our sustained benchmarks are often not able to maintain clockspeeds at or above that level. Of our games only Bioshock Infinite, Crysis 3, and Battlefield 4 maintain an average clockspeed over 1200MHz, with everything else falling to between 1151MHz and 1192MHz. This still ends up being above NVIDIA’s base clockspeed of 1126MHz – by about 100MHz at times – but it’s clear that, unlike with our 700 series cards, NVIDIA is rating their boost clock much more aggressively. The GTX 980’s performance is still spectacular even if it doesn’t get to run over 1.2GHz all of the time, but I would argue that the boost clock metric is less useful this time around if it’s going to overestimate clockspeeds rather than underestimate them. (ed: always underpromise and overdeliver)
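For readers who want to check the comparison themselves, here is a minimal sketch that runs the per-game averages from the table against the official boost and base clocks. The figures are copied from this page; the function and variable names are purely illustrative, and FurMark is left out since it is a pathological load rather than a game.

```python
# Clock figures (MHz) copied from the review's table and text.
OFFICIAL_BOOST_MHZ = 1216
BASE_MHZ = 1126

GAME_AVERAGES_MHZ = {
    "Metro: LL": 1192,
    "CoH2": 1177,
    "Bioshock": 1201,
    "Battlefield 4": 1227,
    "Crysis 3": 1227,
    "TW: Rome 2": 1161,
    "Thief": 1190,
    "GRID 2": 1151,
}


def summarize(averages: dict[str, int], boost: int, base: int) -> dict:
    """Compare per-game average clocks against the rated boost and base clocks."""
    clocks = list(averages.values())
    return {
        "mean_mhz": sum(clocks) / len(clocks),
        "at_or_above_boost": [g for g, mhz in averages.items() if mhz >= boost],
        "min_over_base_mhz": min(clocks) - base,
        "max_over_base_mhz": max(clocks) - base,
    }


if __name__ == "__main__":
    summary = summarize(GAME_AVERAGES_MHZ, OFFICIAL_BOOST_MHZ, BASE_MHZ)
    for key, value in summary.items():
        print(f"{key}: {value}")
```

On these numbers the eight games average roughly 1191MHz, and only Battlefield 4 and Crysis 3 average at or above the official boost clock.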

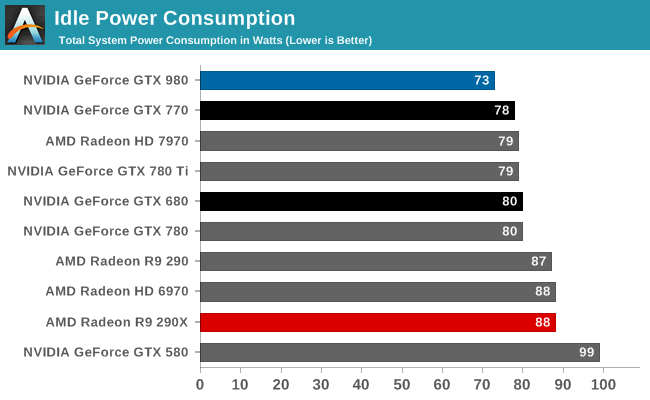

Starting as always with idle power consumption, while NVIDIA is not quoting specific power numbers it’s clear that the company’s energy efficiency efforts have been invested in idle power consumption as well as load power consumption. At 73W idle at the wall, our testbed equipped with the GTX 980 draws several watts less than any other high-end card, including the GK104 based GTX 770 and even AMD’s cards. In desktops this isn’t going to make much of a difference, but in laptops with always-on dGPUs this would be helpful in freeing up battery life.

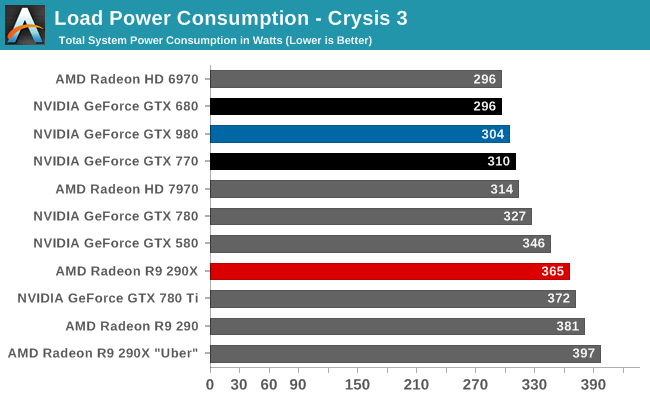

Our first load power test is our gaming test, with Crysis 3. Because we measure from the wall, this test captures CPU power consumption as well as GPU power consumption, which means high performance cards will drive up the system power consumption numbers merely by giving the CPU more work to do. This is exactly what happens in the case of the GTX 980; at 304W it’s between the GK104 based GTX 680 and GTX 770, but it’s also delivering 30% better framerates. Accordingly the power consumption of the GTX 980 itself should be lower than either card, but we would not see it in a system power measurement.

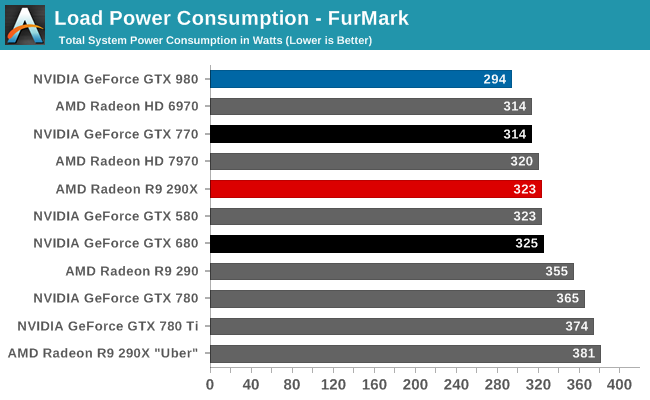

For that reason, when looking at recent generation cards implementing GPU Boost 2.0 or PowerTune 3, we prefer to turn to FurMark as it essentially nullifies the power consumption impact of the CPU. In this case we can clearly see what NVIDIA is promising: GTX 980’s power consumption is lower than everything else on the board, and noticeably so. With 294W at the wall, it’s 20W less than GTX 770, 29W less than 290X, and some 80W less than the previous NVIDIA flagship, GTX 780 Ti. At these power levels NVIDIA is essentially drawing the power of a midrange class card, but with chart-topping performance.
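The deltas quoted above can be turned back into implied wall figures with a few lines of arithmetic. This is a sketch built only from the numbers in the paragraph: the 80W figure is approximate ("some 80W"), the helper names are ours, and the percentages are system-level savings at the wall rather than card-level savings.

```python
# FurMark power at the wall (watts), as quoted in the text.
GTX_980_WALL_W = 294

# How much less the GTX 980 system draws than each comparison card.
# The GTX 780 Ti delta is approximate ("some 80W" in the text).
DELTA_VS_GTX_980_W = {"GTX 770": 20, "290X": 29, "GTX 780 Ti": 80}


def implied_wall_power(baseline_w: int, deltas_w: dict[str, int]) -> dict[str, int]:
    """Reconstruct each card's wall power from the GTX 980 figure plus its delta."""
    return {card: baseline_w + delta for card, delta in deltas_w.items()}


def percent_saving(baseline_w: float, other_w: float) -> float:
    """System-level saving of the baseline relative to the other card, in percent."""
    return 100 * (other_w - baseline_w) / other_w


if __name__ == "__main__":
    implied = implied_wall_power(GTX_980_WALL_W, DELTA_VS_GTX_980_W)
    for card, watts in implied.items():
        saving = percent_saving(GTX_980_WALL_W, watts)
        print(f"{card}: ~{watts}W at the wall, GTX 980 draws {saving:.1f}% less")
```

That works out to system-level savings of roughly 6%, 9%, and 21% respectively. Since the rest of the testbed draws power too, the savings at the card itself would be proportionally larger.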

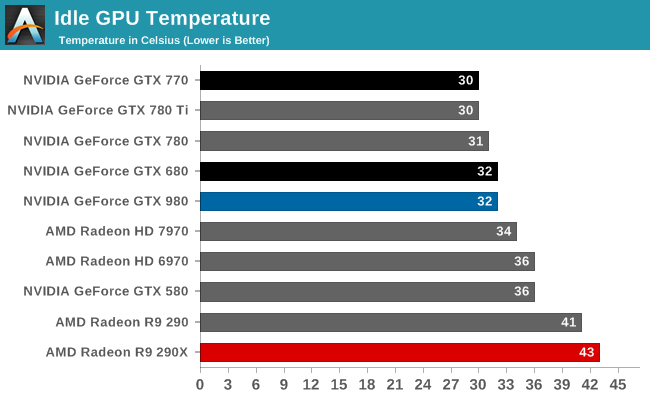

Moving on to temperatures, at idle we see nothing remarkable. All of these well-designed, low idle power cards are going to idle in the low 30s, no more than a few degrees over room temperature.

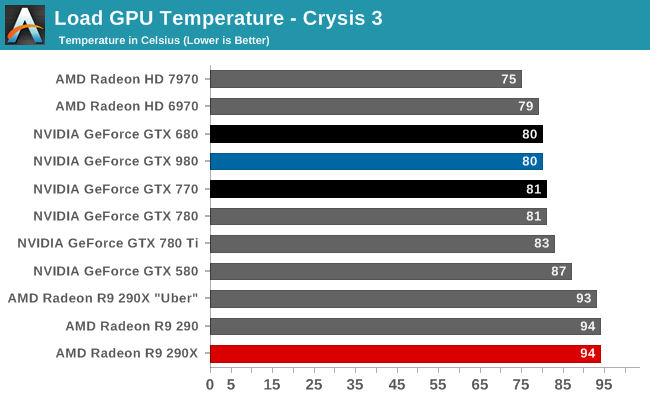

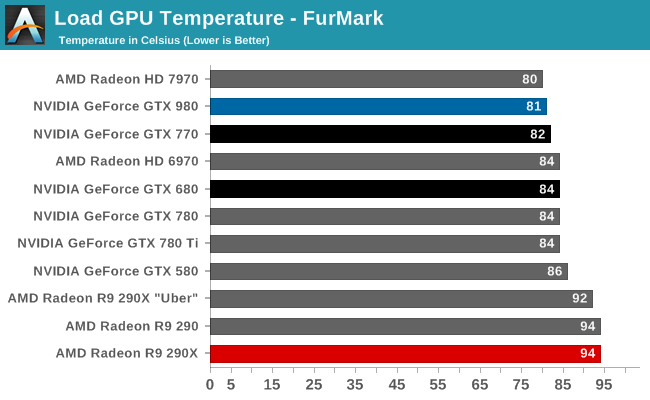

With an 80C throttle point in place for the GTX 980, this is where we see the card top out. The fact that we’re hitting 80C is the reason why the card is exhibiting clockspeed throttling as we saw earlier. NVIDIA’s chosen fan curve is tuned for noise over temperature, so it’s letting the GPU reach its temperature throttle point rather than ramp up the fan (and the noise) too much.

Once again we see the 80C throttle in action. As with all GPU Boost 2.0 cards, NVIDIA makes sure their products aren’t going to get well over 80C no matter the workload.

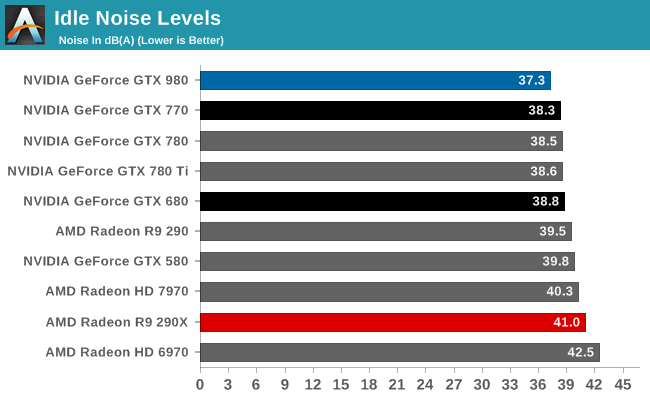

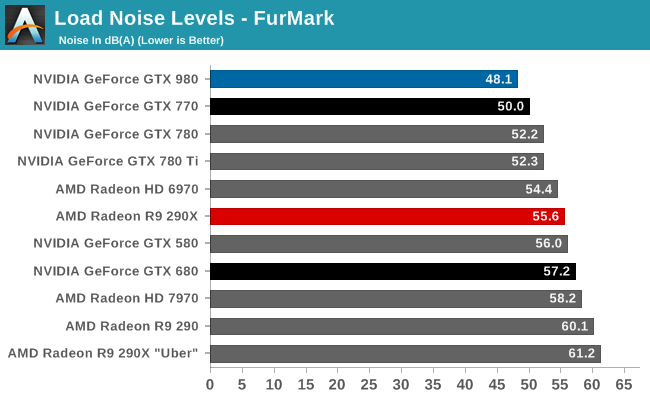

Last but not least we have our noise results. Right off the bat the GTX 980 is looking strong; even with the shared heritage of the cooler with the GTX 780 series, the GTX 980 is slightly but measurably quieter at idle than any other high-end NVIDIA or AMD card. At 37.3dB, the GTX 980 comes very close to being silent compared to the rest of the system.

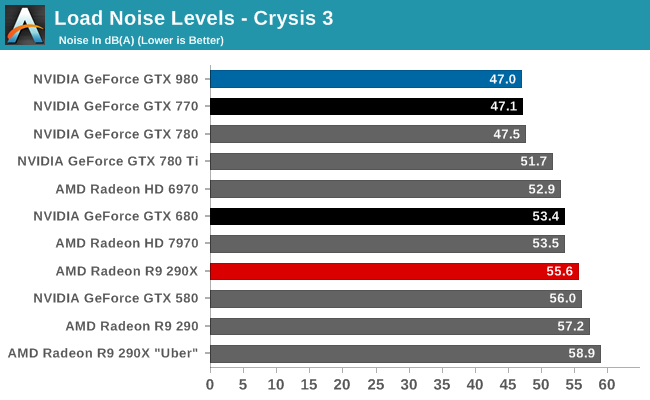

Our Crysis 3 load noise testing showcases the full benefits of the GTX 980’s well-built blower in action. GTX 980 doesn’t perform appreciably better than the GTX Titan cooler equipped GTX 770 and GTX 780, but then again GTX 980 is also not using quite as advanced a cooler (forgoing the vapor chamber). Still, this is enough to edge ahead of the GTX 770 by 0.1dB, technically making it the quietest video card in this roundup. Though for all practical purposes, it’s better to consider it tied with the GTX 770.

FurMark noise testing on the other hand drives a wedge between the GTX 980 and all other cards, and it’s in the GTX 980’s favor. Despite the similar noise performance between various NVIDIA cards under Crysis 3, under our maximum, pathological workload of FurMark the GTX 980 pulls ahead thanks to its 165W TDP. At the end of the day its lower TDP limit means that the GTX 980 never has too much heat to dissipate, and as a result it never gets too loud. In fact it can’t. 48.1dB is as loud as the GTX 980 can get, which is why the GTX 980’s cooler and overall build are so impressive. There are open air cooled cards that now underperform the GTX 980 that can’t hit noise levels this low, never mind the other cards with blowers.
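Because decibels are logarithmic, the gap between the GTX 980’s 37.3dB idle reading and its 48.1dB FurMark ceiling is easier to appreciate as a ratio. The sketch below uses the standard decibel conversions and assumes both readings are ordinary sound pressure levels taken under the same conditions; the helper names are ours, and perceived loudness does not track either ratio exactly.

```python
# Noise readings (dB) quoted in the text for the GTX 980.
IDLE_DB = 37.3
FURMARK_DB = 48.1


def pressure_ratio(delta_db: float) -> float:
    """Sound pressure ratio for a level difference in dB (20 * log10 convention)."""
    return 10 ** (delta_db / 20)


def power_ratio(delta_db: float) -> float:
    """Sound power ratio for a level difference in dB (10 * log10 convention)."""
    return 10 ** (delta_db / 10)


if __name__ == "__main__":
    delta = FURMARK_DB - IDLE_DB
    print(f"Difference: {delta:.1f}dB")
    print(f"Sound pressure ratio: {pressure_ratio(delta):.2f}x")
    print(f"Sound power ratio: {power_ratio(delta):.2f}x")
```

A 10.8dB gap corresponds to about 3.5 times the sound pressure, or about 12 times the sound power, between idle and the card’s worst case.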

Between the GTX Titan and its derivatives and now GTX 980, NVIDIA has spent quite a bit of time and effort on building a better blower, and with their latest effort it really shows. All things considered we prefer blower type coolers for their heat exhaustion benefits – just install it and go, there’s almost no need to worry about what the chassis cooling can do – and with NVIDIA’s efforts to build such a solid cooler for a moderately powered card, the end result is a card with a cooler that offers all the benefits of a blower with acoustics that can rival an open air cooler. It’s a really good design and one of our favorite aspects of GTX Titan, its derivatives, and now GTX 980.

274 Comments

Sttm - Thursday, September 18, 2014 - link

"How will AMD and NVIDIA solve the problem they face and bring newer, better products to the market?"

My suggestion is they send their CEOs over to Intel to beg on their knees for access to their 14nm process. This is getting silly, GPUs shouldn't be 4 years behind CPUs on process node. Someone cut Intel a big fat check and get this done already.

joepaxxx - Thursday, September 18, 2014 - link

It's not just about having access to the process technology and fab. The cost of actually designing and verifying an SoC at nodes past 28nm is approaching the breaking point for most markets, that's why companies aren't jumping on to them. I saw one estimate of 500 million for development of a 16/14nm device. You better have a pretty good lock on the market to spend that kind of money.

extide - Friday, September 19, 2014 - link

Yeah, but the GPU market is not one of those markets where the verification cost will break the bank, dude.

Samus - Friday, September 19, 2014 - link

Seriously, nVidia's market cap is $10 billion dollars, they can spend a tiny fortune moving to 20nm and beyond...if they want to.

I just don't think they want to saturate their previous products with such leaps and bounds in performance while also absolutely destroying their competition.

Moving to a smaller process isn't out of nVidia's reach, I just don't think they have a competitive incentive to spend the money on it. They've already been accused of becoming a monopoly after purchasing 3Dfx, and it'd be painful if AMD/ATI exited the PC graphics market because nVidia's Maxwell's, being twice as efficient as GCN, were priced identically.

bernstein - Friday, September 19, 2014 - link

atm. it is out of reach to them. at least from a financial perspective.

while it would be awesome to have maxwell designed for & produced on intel's 14nm process, intel doesn't even have the capacity to produce all of their own cpus... until fall 2015 (broadwell xeon-ep release)...

kron123456789 - Friday, September 19, 2014 - link

"it also marks the end of support for NVIDIA’s D3D10 GPUs: the 8, 9, 100, 200, and 300 series. Beginning with R343 these products are no longer supported in new driver branches and have been moved to legacy status." - This is it. The time has come to buy a new card to replace my GeForce 9800GT :)

bobwya - Friday, September 19, 2014 - link

Such a modern card - why bother :-) The 980 will finally replace my 8800 GTX. Now that's a genuinely old card!!

Actually I mainly need to do the upgrade because the power bills are so ridiculous for the 8800 GTX! For pity's sake the card only has one power profile (high power usage).

djscrew - Friday, September 19, 2014 - link

Like +1

kron123456789 - Saturday, September 20, 2014 - link

Oh yeah, modern :) It's only 6 years old) But it can handle even Tomb Raider at 1080p with 30-40fps at medium settings :)

SkyBill40 - Saturday, September 20, 2014 - link

I've got an 8800 GTS 640MB still running in my mom's rig that's far more than what she'd ever need. Despite getting great performance from my MSI 660Ti OC 2GB Power Edition, it might be time to consider moving up the ladder since finding another identical card at a decent price for SLI likely wouldn't be worth the effort.

So, either I sell off this 660Ti, give it to her, or hold onto it for a HTPC build at some point down the line. Decision, decisions. :)