AMD Radeon R9 285 Review: Feat. Sapphire R9 285 Dual-X OC

by Ryan Smith on September 10, 2014 2:00 PM EST

GCN 1.2 - Image & Video Processing

AMD’s final set of architectural improvements for GCN 1.2 is focused on the image and video processing blocks contained within the GPU. These blocks, though not directly tied to GPU performance, are important to AMD because they enable new functionality and offer new ways to offload tasks onto fixed-function hardware for power savings.

First and foremost then, with GCN 1.2 comes a new version of AMD’s video decode block, the Unified Video Decoder (UVD). It has been some time since UVD received a significant upgrade; outside of the addition of VC-1/WMV9 support, it has remained relatively unchanged for a couple of GPU generations.

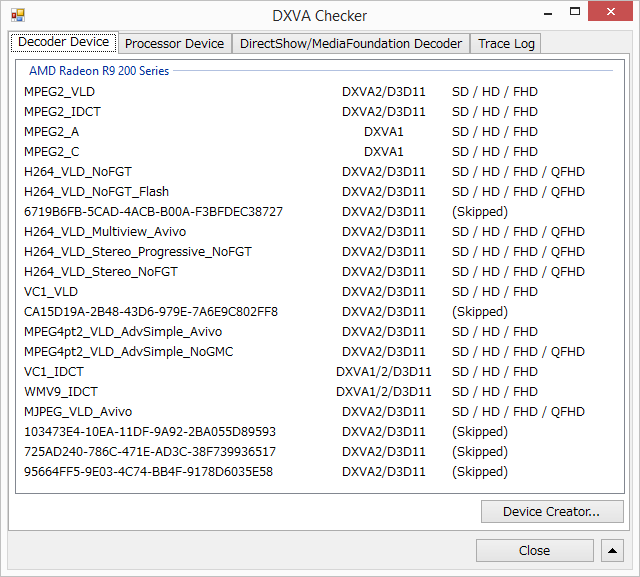

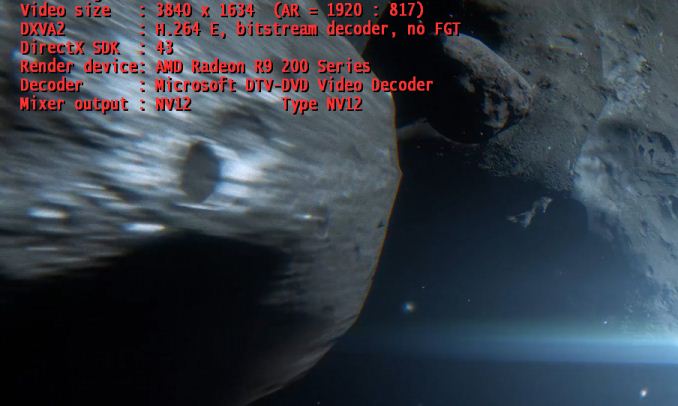

With this newest generation of UVD, AMD is finally catching up to NVIDIA and Intel in H.264 decode capabilities. New to UVD is full support for 4K H.264 video, up to level 5.2 (4Kp60). AMD had previously intended to support 4K up to level 5.1 (4Kp30) on the previous version of UVD, but that never panned out and AMD ultimately disabled that feature. So as of GCN 1.2 hardware decoding of 4K is finally up and working, meaning AMD GPU equipped systems will no longer have to fall back to relatively expensive software decoding for 4K H.264 video.
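As a rough illustration of why 4Kp30 and 4Kp60 land on levels 5.1 and 5.2 respectively, the sketch below checks a resolution and frame rate against the macroblock limits for a handful of H.264 levels. The function names are hypothetical, only three levels are listed, and real level conformance also involves bitrate and decoded picture buffer limits that are ignored here.

```python
import math

# (max macroblocks per second, max macroblocks per frame) for a few
# H.264 levels, taken from the level limits table in Annex A of the spec.
# Only a subset of levels is included in this sketch.
LEVEL_LIMITS = {
    "4.1": (245_760, 8_192),
    "5.1": (983_040, 36_864),
    "5.2": (2_073_600, 36_864),
}


def macroblocks(width, height):
    """Number of 16x16 macroblocks needed to cover one frame."""
    return math.ceil(width / 16) * math.ceil(height / 16)


def fits_level(width, height, fps, level):
    """True if frame size and macroblock rate are within the level's limits."""
    max_mb_per_sec, max_frame_mbs = LEVEL_LIMITS[level]
    frame_mbs = macroblocks(width, height)
    return frame_mbs <= max_frame_mbs and frame_mbs * fps <= max_mb_per_sec


def lowest_level(width, height, fps):
    """Lowest listed level that can carry the stream, or None if none can."""
    for level in ("4.1", "5.1", "5.2"):
        if fits_level(width, height, fps, level):
            return level
    return None


if __name__ == "__main__":
    for w, h, fps in [(1920, 1080, 30), (3840, 2160, 30), (3840, 2160, 60)]:
        print(f"{w}x{h} @ {fps}fps -> level {lowest_level(w, h, fps)}")
```

A 3840x2160 frame is 32,400 macroblocks, so 30fps needs 972,000 macroblocks per second (just under the level 5.1 ceiling) while 60fps needs 1,944,000, which only level 5.2 allows.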

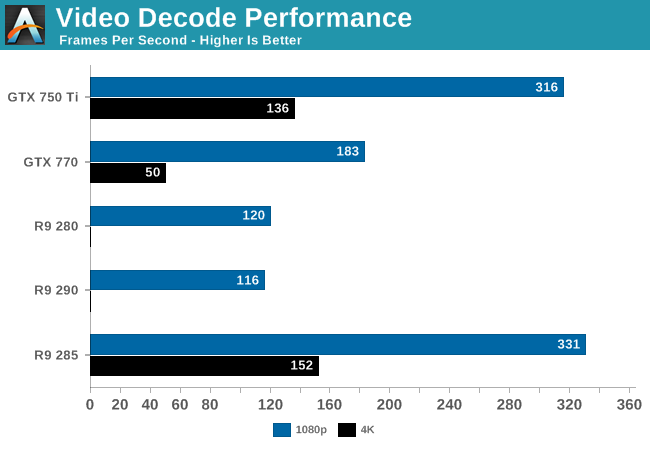

On a performance basis this newest iteration of UVD is around 3x faster than the previous version. Using DXVA Checker we benchmarked it playing back a 1080p video at 331fps, or roughly 14x real-time. For 1080p decode it has enough processing power to decode multiple streams and then some, but this kind of performance is necessary for the much higher requirements of 4K decoding.

Speaking of which, we can confirm that 4K decoding is working like a charm. While Media Player Classic Home Cinema’s built-in decoder doesn’t know what to do for 4K on the new UVD, Windows’ built-in codec has no such trouble. Playing back a 4K video using that decoder hit 152fps, more than enough to play back a 4Kp60 video or two. For the moment this also gives AMD a leg up over NVIDIA; while Kepler products can handle 4Kp30, their video decoders are too slow to sustain 4Kp60, which is something only Maxwell cards such as the GTX 750 Ti can currently do. So at least for the moment, with R9 285’s competition being composed of Kepler cards, it’s the only enthusiast-tier card capable of sustaining 4Kp60 decoding.
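The real-time figures here are simply the measured decode rate divided by the frame rate of the content. A minimal sketch of that arithmetic follows; the helper names are hypothetical, and the 24fps rate used for the 1080p clip is an assumption, since the frame rate of the test clip isn't stated.

```python
def realtime_multiple(decode_fps, content_fps):
    """How many times faster than playback speed the decoder runs."""
    if content_fps <= 0:
        raise ValueError("content_fps must be positive")
    return decode_fps / content_fps


def concurrent_streams(decode_fps, content_fps):
    """Whole number of streams the decoder could sustain at full frame rate."""
    if content_fps <= 0:
        raise ValueError("content_fps must be positive")
    return int(decode_fps // content_fps)


if __name__ == "__main__":
    # Measured decode rates from the benchmarks above; the content frame
    # rates are assumptions made for illustration.
    print(f"1080p: {realtime_multiple(331, 24):.1f}x real-time at an assumed 24fps")
    print(f"4K:    {realtime_multiple(152, 60):.1f}x real-time at 60fps")
    print(f"4Kp60 streams sustained: {concurrent_streams(152, 60)}")
```

At 152fps the decoder has about 2.5x the throughput a single 4Kp60 stream needs, which is where "a 4Kp60 video or two" comes from.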

This new version of UVD also expands AMD’s supported codec set by one with the addition of hardware MJPEG decoding. AMD has previously implemented JPEG decoding for their APUs, so MJPEG is a natural extension of that. That said, MJPEG is a fairly uncommon codec for most workloads these days, so outside of perhaps pro video I’m not sure how often this feature will get utilized.

What you won’t find though – and we’re surprised it’s not here – is support for H.265 decoding in any form. While we’re a bit too early for full fixed function H.265 decoders since the specification was only ratified relatively recently, both Intel and NVIDIA have opted to bridge the gap by implementing a hybrid decode mode that mixes software, GPU shader, and fixed function decoding steps. H.265 is still in its infancy, but given the increasingly long shelf lives of video cards, it’s a reasonable bet that Tonga cards will still be in significant use after H.265 takes off. But to give AMD some benefit of the doubt, since a hybrid mode is partially software anyhow, there’s admittedly nothing stopping them from implementing it in a future driver (NVIDIA having done just this for H.265 on Kepler).

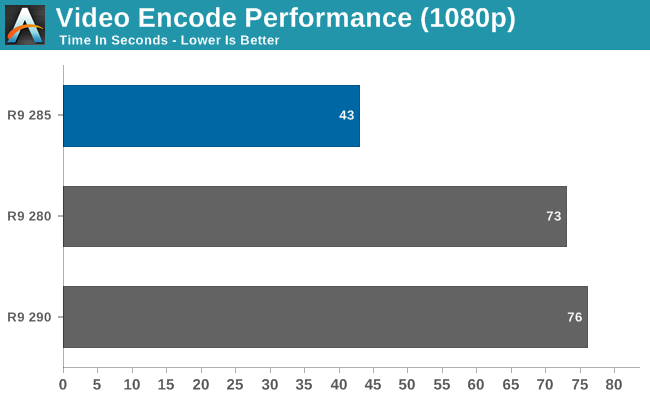

Moving on, along with their video decode capabilities, AMD has also improved on their video encode capabilities for GCN 1.2 with a new version of their Video Codec Engine. AMD’s hardware video encoder has received a speed boost to improve its encoding performance at all levels, and after previously being limited to a maximum resolution of 1080p can now encode at resolutions up to 4K. Meanwhile by AMD’s metrics this new version of VCE should be capable of encoding 1080p up to 12x over real time.

A quick performance check finds that while the current version of Cyberlink’s MediaEspresso software isn’t handling 4K video decoding quite right, encoding from a 1080p source shows that the new VCE is roughly 40% faster than the old VCE in our test.

4K video is still rather new, so there’s little to watch and even less of a reason to encode. That of course will change over time, but in the meantime the most promising use of a hardware 4K encoder would be 4K gameplay recording through the AMD Gaming Evolved Client’s DVR function.

86 Comments


chizow - Thursday, September 11, 2014

If Tonga is a referendum on Mantle, it basically proves Mantle is a failure and will never succeed. This pretty much shows most of what AMD said about Mantle is BS, that it takes LESS effort (LMAO) on the part of the devs to implement than DX.

If Mantle requires both an application update (game patch) from devs AFTER the game has already run past its prime shelf-date AND also requires AMD to release optimized drivers every time a new GPU is released, then there is simply no way Mantle will ever succeed in a meaningful manner with that level of effort. Simply put, no one is going to put in that kind of work if it means re-tweaking every time a new ASIC or SKU is released. Look at BF4, it's already in the rear-view mirror from DICE's standpoint, and no one even cares anymore as they are already looking toward the next Battlefield#

TiGr1982 - Thursday, September 11, 2014

Please stop calling GPUs ASICs - this looks ridiculous.

Please go to Wikipedia and read what "ASIC" is.

chizow - Thursday, September 11, 2014

Is this a joke or are you just new to the chipmaking industry? Maybe you should try re-reading the Wikipedia entry to understand GPUs are ASICs despite their more recent GPGPU functionality. GPU makers like AMD and Nvidia have been calling their chips ASICs for decades and will continue to do so, your pedantic objections notwithstanding.

But no need to take my word for it, just look at their own internal memos and job listings:

https://www.google.com/#q=intel+asic

https://www.google.com/#q=amd+asic

https://www.google.com/#q=nvidia+asic

TiGr1982 - Thursday, September 11, 2014

OK, I accept your arguments, but I still don't like this kind of terminology. To me, one may call things like fixed-function video decoder "ASIC" (for example UVD blocks inside Radeon GPUs), but not GPU as a whole, because people do GPGPU for a number of years on GPUs, and "General Purpose" in GPGPU contradicts with "Application Specific" in ASIC, isn't it?

So, overall it's a terminology/naming issue; everyone uses the naming whatever he wants to use.

chizow - Thursday, September 11, 2014

I think you are over-analyzing things a bit. When you look at the entire circuit board for a particular device, you will see each main component or chip is considered an ASIC, because each one has a specific application.

For example, even the CPU is an ASIC even though it handles all general processing, but its specific application for a PC mainboard is to serve as the central processing unit. Similarly, a southbridge chip handles I/O and communications with peripheral devices, Northbridge handles traffic to/from CPU and RAM and so on and so forth.

TiGr1982 - Thursday, September 11, 2014

OK, then according to this (broad) understanding, every chip in silicon industry may be called ASIC :)

Let it be.

chizow - Friday, September 12, 2014

Yes, that is why everyone in the silicon industry calls their chips that have specific applications ASICs. ;)

Something like a capacitor, or resistor etc. would not be as they are of common commodity.

Sabresiberian - Thursday, September 11, 2014

I reject the notion that we should be satisfied with a slower rate of GPU performance increase. We have more use than ever before for a big jump in power. 2560x1440@144Hz. 4K@60Hz.

Of course it's all good for me to say that without being a micro-architecture design engineer myself, but I think it's time for a total re-think. Or if the companies are holding anything back - bring it out now, please! :)

Stochastic - Thursday, September 11, 2014

Process node shrinks are getting more and more difficult, equipment costs are rising, and the benefits of moving to a smaller node are also diminishing. So sadly I think we'll have to adjust to a more sedate pace in the industry.

TiGr1982 - Thursday, September 11, 2014

I'm a longstanding AMD Radeon user for more than 10 years, but after reading this R9 285 review I can't help but think that, based on results of smaller GM107 in 750 Ti, GM204 in GTX 970/980 may offer much better performance/Watt/die area (at least for gaming tasks) in comparison to the whole AMD GPU lineup. Soon we'll see whether or not this will be the case.