AMD FX-8370E CPU Review: Vishera Down to 95W, Price Cuts for FX

by Ian Cutress on September 2, 2014 8:00 AM EST

Gaming Benchmarks

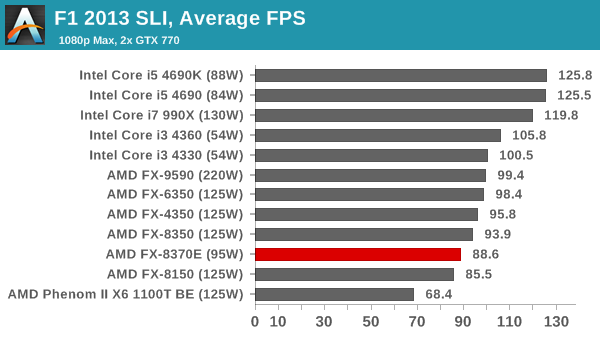

F1 2013

First up is F1 2013 by Codemasters. I am a big Formula 1 fan in my spare time, and nothing makes me happier than carving up the field in a Caterham, waving to the Red Bulls as I drive by (because I play on easy and take shortcuts). F1 2013 uses the EGO Engine and, like other Codemasters games, is very playable on old hardware. To beef up the benchmark a bit, we devised the following scenario for the benchmark mode: one lap of Spa-Francorchamps in the heavy wet, following Jenson Button in the McLaren, who starts on the grid in 22nd place, with the field made up of 11 Williams cars, 5 Marussia and 5 Caterham in that order. This puts emphasis on the CPU to handle the AI in the wet, and allows for a good amount of overtaking during the automated benchmark. We test at 1920x1080 on Ultra graphical settings.

In all combinations, the 8370E and the 8150 duke it out. F1 2013 seems to be an Intel dominated title, given that the i3 outperforms the FX-9590.

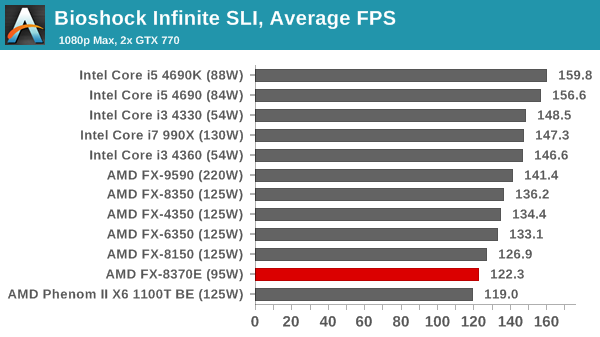

Bioshock Infinite

Bioshock Infinite was Zero Punctuation’s Game of the Year for 2013, uses the Unreal Engine 3, and is designed to scale with both cores and graphical prowess. We test the benchmark using the Adrenaline benchmark tool and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

The FX-8370E again fits in just beneath the FX-8150, but with lower power consumption.

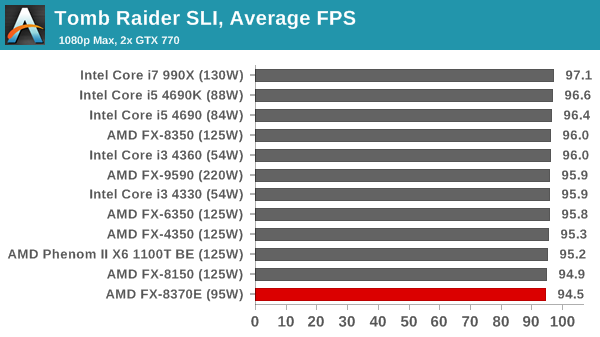

Tomb Raider

The next benchmark in our test is Tomb Raider. Tomb Raider is an AMD optimized game, lauded for its use of TressFX creating dynamic hair to increase the immersion in game. Tomb Raider uses a modified version of the Crystal Engine, and enjoys raw horsepower. We test the benchmark using the Adrenaline benchmark tool and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

Tomb Raider continues to be CPU agnostic, even around the FX quad thread CPUs.

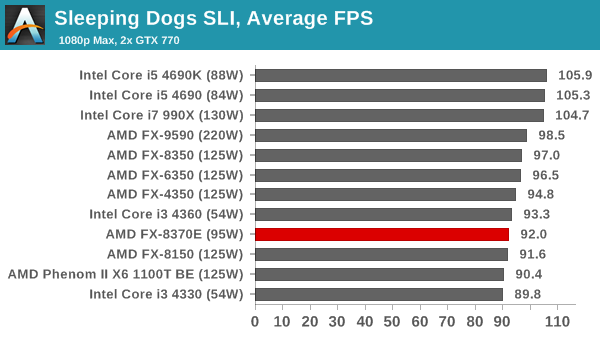

Sleeping Dogs

Sleeping Dogs is a benchmarking wet dream – a highly complex benchmark that can bring the toughest setup and high resolutions down into single figures. Having an extreme SSAO setting can do that, but at the right settings Sleeping Dogs is highly playable and enjoyable. We run the basic benchmark program laid out in the Adrenaline benchmark tool, and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

The eight threads offer some advantage in minimum frame rates, but average frame rates are still around the FX-8150.

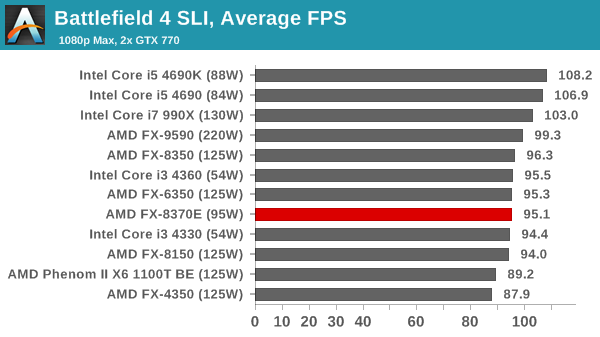

Battlefield 4

The EA/DICE series that has taken countless hours of my life away is back for another iteration, using the Frostbite 3 engine. AMD is also piling its resources into BF4 with the new Mantle API for developers, designed to cut the time required for the CPU to dispatch commands to the graphical sub-system. For our test we use the in-game benchmarking tools and record the frame times for the first ~70 seconds of the Tashgar single player mission, which is an on-rails generation and rendering of objects and textures. We test at 1920x1080 at Ultra settings.
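As a rough illustration of how a frame time log like this can be reduced to the average and minimum frame rates quoted in these results, here is a minimal Python sketch. The function name `fps_summary`, the input format (a list of per-frame times in milliseconds), and the definition of minimum frame rate as the rate implied by the single slowest frame are all assumptions made for this example; the review does not describe the exact post-processing used.

```python
def fps_summary(frame_times_ms, window_s=70.0):
    """Return (average_fps, minimum_fps) for the frames that complete
    within the first `window_s` seconds of a frame time log.

    Hypothetical helper: the input format and the "slowest single frame"
    definition of minimum FPS are assumptions, not the review's method.
    """
    kept = []
    elapsed_ms = 0.0
    for frame_time in frame_times_ms:
        if frame_time <= 0:
            raise ValueError("frame times must be positive")
        # Stop once the next frame would end after the measurement window.
        if elapsed_ms + frame_time > window_s * 1000.0:
            break
        elapsed_ms += frame_time
        kept.append(frame_time)

    if not kept:
        raise ValueError("no frames completed inside the window")

    # Average FPS is frames rendered divided by the time they took.
    average_fps = 1000.0 * len(kept) / elapsed_ms
    # Minimum FPS here is the instantaneous rate of the slowest frame.
    minimum_fps = 1000.0 / max(kept)
    return average_fps, minimum_fps
```

Note that the average is computed as total frames over total time rather than as the mean of per-frame rates, since the latter would overweight short frames.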

The FX-8370E stretches its legs a little in terms of minimum frame rates, particularly in SLI; however, it is handily beaten by the i3-4330.

107 Comments


fluxtatic - Wednesday, September 3, 2014 - link

Yeah, that 40 years it held true was a total goof. That Moore, what an idiot! /s

Samus - Wednesday, September 3, 2014 - link

I wish I could look more than 40 years into the future...

But on a serious note, Moore's law still stands true, and will for the foreseeable future. You are right, we can't shrink things forever (we're already approaching the sub-atomic level) but there are creative ways around this; 3D transistors, quantum computing (currently vaporware IMHO) etc.

ironargonaut - Wednesday, September 3, 2014 - link

You are the fool. Moore made no such assumption. Nor did he make any such statement. Others took what he said and condensed it into "Moore's law".

Gigaplex - Tuesday, September 2, 2014 - link

"Seriously, how hard is to make a decent CPU"

Let's see you do a better job if you think it's easy.

imin83 - Wednesday, September 3, 2014 - link

"Seriously, how hard is to make a decent CPU" - the correct reply to that statement would be, "Watch out, we got a badass over here"

IUU - Friday, September 5, 2014 - link

Well, even though I readily agree that Moore's Law will eventually come to an end, regarding the present technologies, I don't think this will happen until some years (maybe 5? 10?).

I bought my i7 920 at the end of 2008. So ~6 years later, or about 3 Moore's cycles, I bought a laptop with an intel cpu that consumes officially 1/3 of the energy of the old beast. Taking into consideration the on-chip gpu, this is probably considerably less for the cpu part. Yet it performs, as a cpu, about 10% faster on single threaded and moderately threaded applications (3-4 threaded apps). So, for any practical purpose, it's 10% faster for less than one third of the energy requirements.

If you do the multiplications and the divisions you will probably find that moore's law still holds true, not only from a manufacturing perspective but from a more substantial one: Flops per Watt.

The only problem is that performance per dollar went backwards for various reasons that are artificial and not based on the physical feasibility of things. Thus while you were purchasing a 130 watt cpu back in 2008 for ~300 dollars, now you cash out on average 500. But this is nothing.

Think about it. Many people happily waste hundreds of dollars per year for the "latest mobile tech", i.e. they spend for chips that are from an order of magnitude to possibly 2 orders of magnitude less capable, and it seems normal to almost everyone. Now go support the mobile involution in order to pay for an ordinary cpu not 1000 but 10000 dollars and then be nostalgic for the good old days when you could buy a haswell extreme for 1000 bucks.

Samus - Wednesday, September 3, 2014 - link

On one hand, AMD's stagnation is pissing me off because Intel hasn't had any competitive pressure to produce anything faster since Nehalem (Haswell is only about 20% faster in IPC, yet 5 years newer).

On the other hand, Intel would still be assfucking us with Netburst if it wasn't for AMD's Athlon.

Intel strongarmed AMD out of the game. The lost revenue destroyed their R&D budget and they haven't rebounded since.

ddriver - Wednesday, September 3, 2014 - link

My biggest issue with intel is the decision to cram IGP inside high end products, which makes about ZERO sense. Integrated graphics are OK for low and lower midrange products, but in a high end product it is just waste of die space. Intel wastes 2/5 of the die on graphics which ends up never being used, and for what? To get better "video card" market share, even at the price of selling stuff that ends up never being used? Throw in 50% more cores and cache please, or just make the chip smaller and cheaper...

Samus - Wednesday, September 3, 2014 - link

While I agree with you from an engineering perspective, you are free to purchase XEON or -E class CPU's if you don't like 2/5 of your die wasted.

It does seem the Bloomfield Nehalem (Socket 1366) CPU's made the most efficient use of die for performance, being 45nm and all, and as soon as Intel integrated the graphics onchip it all the sudden lost its triple-channel memory controller (because there was no room left for it) so the die area can be better used, but in practice, the integrated graphics helps Intel's bottom line more than a triple channel controller or more cache, both of which 90% of consumers will benefit little from, while also increasing power consumption.

silverblue - Wednesday, September 3, 2014 - link

QuickSync could be useful...?