The Intel Haswell-E CPU Review: Core i7-5960X, i7-5930K and i7-5820K Tested

by Ian Cutress on August 29, 2014 12:00 PM EST

Evolution in Performance

The underlying architecture in Haswell-E is not new. Haswell desktop processors were first released in June 2013 to replace Ivy Bridge, and at the time we stated an expected 3-17% increase over Ivy Bridge, especially in floating point heavy benchmarks. Users moving from Sandy Bridge should expect a ~20% increase all around, with Nehalem users in the 40% range. Because the extreme platform mainly adds more cores, we could assume that the same recommendations apply to Haswell-E over IVB-E and the others, but we tested afresh for this review in order to check those assumptions.

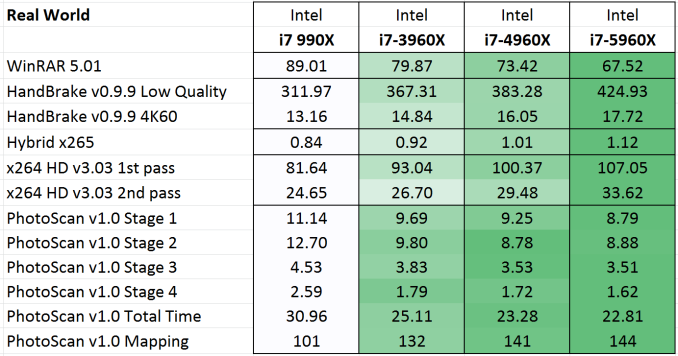

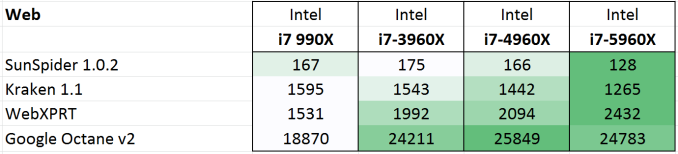

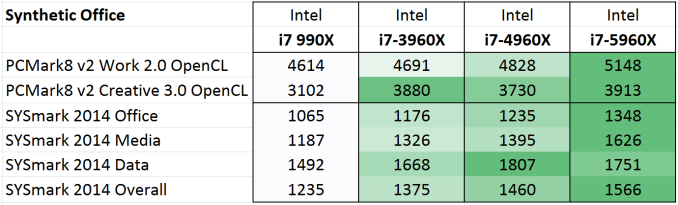

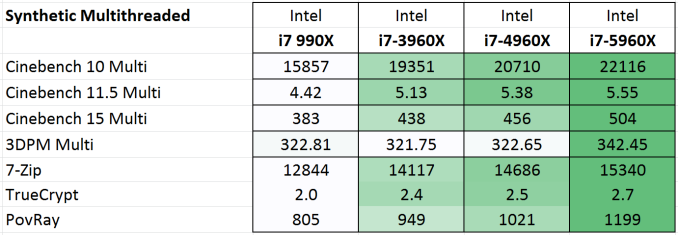

For our test, we took our previous CPU review samples from as far back as Nehalem. This means the i7-990X, i7-3960X, i7-4960X and the Haswell-E i7-5960X.

Each processor was set to 3.2 GHz on all cores, and limited to four cores with HyperThreading disabled.

Memory was set to the CPU supported frequency at JEDEC settings, meaning that if Intel has significantly adjusted the performance of the memory controllers between these platforms, this would show as well. For detailed explanations of these tests, refer to our main results section in this review.

On average, the results show a 17% jump from Nehalem to SNB-E, 7% from SNB-E to IVB-E, and a final 6% from IVB-E to Haswell-E. This makes for a 31% (rounded) overall gain across three generations.
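As a rough check on how per-generation gains stack up, the sketch below compounds the three averaged figures quoted above. The function name `compound_gain` is our own for illustration. Because the overall figure in the text is an average taken across benchmarks, it does not have to match the product of the per-generation averages exactly.

```python
from math import prod

def compound_gain(step_gains_pct):
    """Combine successive percentage gains multiplicatively.

    Each entry is a generation-over-generation gain in percent,
    e.g. 17 means the newer part is 1.17x the older one.
    """
    factor = prod(1 + g / 100 for g in step_gains_pct)
    return (factor - 1) * 100

# Averaged per-generation gains quoted in the text:
# Nehalem -> SNB-E, SNB-E -> IVB-E, IVB-E -> Haswell-E.
overall = compound_gain([17, 7, 6])
print(f"Compounded gain: {overall:.1f}%")
```

Compounding the three averages lands within a couple of points of the quoted 31%. A small gap is expected, since averaging per-benchmark ratios and multiplying averages are not the same operation.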

Web benchmarks are constrained by the domain they run in, although HTML5 offers some ways to use as many cores in the system as possible. The biggest jump was in SunSpider, and overall there is a 34% jump from Nehalem to Haswell-E here. This is split into 14% from Nehalem to SNB-E, 6% from SNB-E to IVB-E and 12% from IVB-E to Haswell-E.

Purchasing managers often look to the PCMark and SYSmark data to guide decisions, and the important number here is that Haswell-E took a 7% average jump in scores over Ivy Bridge-E. This translates to a 24% jump since Nehalem.

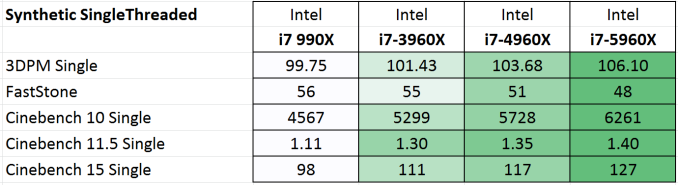

Some of the more common synthetic benchmarks in multithreaded mode showed an average 8% jump from Ivy Bridge-E, with a 29% jump overall. Nehalem to Sandy Bridge-E was a bigger single jump, averaging 14%.

In the single threaded tests, a smaller overall 23% improvement was seen from the i7-990X, with 6% in this final generation.

The take-home message from these results, if there is one, is that:

Haswell-E has an 8% improvement in performance over Ivy Bridge-E clock for clock for pure CPU based workloads.

This also means an overall 13% jump from Sandy Bridge-E to Haswell-E.

From Nehalem, we have a total 28% rise in clock-for-clock performance.
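The three clock-for-clock figures above are all measured against Haswell-E, so the individual generational steps can be backed out by dividing one cumulative ratio by another. This is a minimal sketch, assuming the gains compose multiplicatively. The helper name `implied_step` is hypothetical, and the results are derived estimates rather than measured numbers.

```python
def implied_step(total_gain_pct, later_gain_pct):
    """Gain of the earlier step, given the total gain and the later step's gain.

    If A -> C is total_gain_pct and B -> C is later_gain_pct,
    then A -> B is (1 + total) / (1 + later) - 1.
    """
    total = 1 + total_gain_pct / 100
    later = 1 + later_gain_pct / 100
    return (total / later - 1) * 100

# Cumulative clock-for-clock gains to Haswell-E quoted in the text.
from_ivb_e = 8     # IVB-E   -> Haswell-E
from_snb_e = 13    # SNB-E   -> Haswell-E
from_nehalem = 28  # Nehalem -> Haswell-E

snb_to_ivb = implied_step(from_snb_e, from_ivb_e)
nehalem_to_snb = implied_step(from_nehalem, from_snb_e)

print(f"SNB-E -> IVB-E:   {snb_to_ivb:.1f}%")
print(f"Nehalem -> SNB-E: {nehalem_to_snb:.1f}%")
```

On these figures the SNB-E to IVB-E step works out to a little under 5%, with roughly 13% coming from Nehalem to SNB-E.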

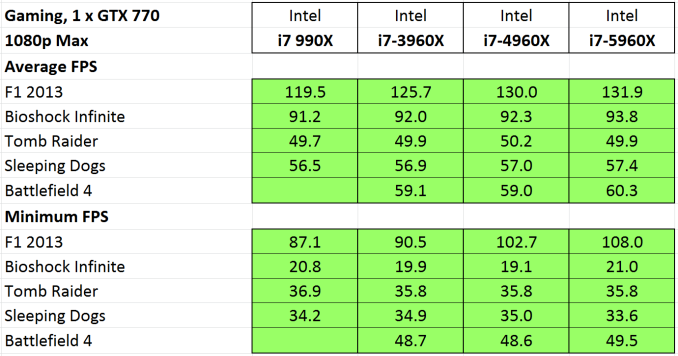

Looking at gaming workloads, the difference shrinks. Unfortunately our Nehalem system decided to stop working while taking this data, but we can still see some generational improvements. First up, a GTX 770 at 1080p Max settings:

The only title that gets much improvement is F1 2013, which uses the EGO engine and is most amenable to better hardware under the hood. The rise in minimum frame rates is quite impressive.

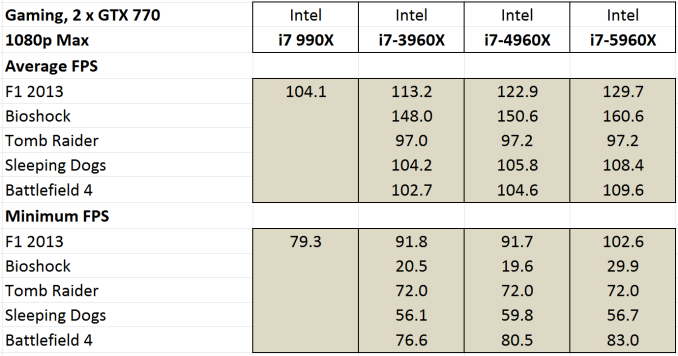

For SLI performance:

All of our titles except Tomb Raider get at least a small improvement in our clock-for-clock testing, this time with Bioshock also getting in on the action in both average and minimum frame rates.

If we were to go on clock-for-clock testing alone, these numbers do not particularly show a benefit from upgrading from a Sandy Bridge system, except in F1 2013. However, our numbers later in the review for stock and overclocked speeds might change that.

Memory Latency and CPU Architecture

Haswell is a tock, meaning the second crack at 22nm. Anand went for a deep dive into the details previously, but in brief Haswell brought better branch prediction, two new execution ports and increased buffers to feed an increased parallel set of execution resources. Haswell adds support for AVX2 along with FMA operations to increase floating point performance. As a result, Intel doubled the L1 cache bandwidth. While TSX was part of the instruction set as well, this has since been disabled due to a fundamental silicon flaw and will not be fixed in this generation.

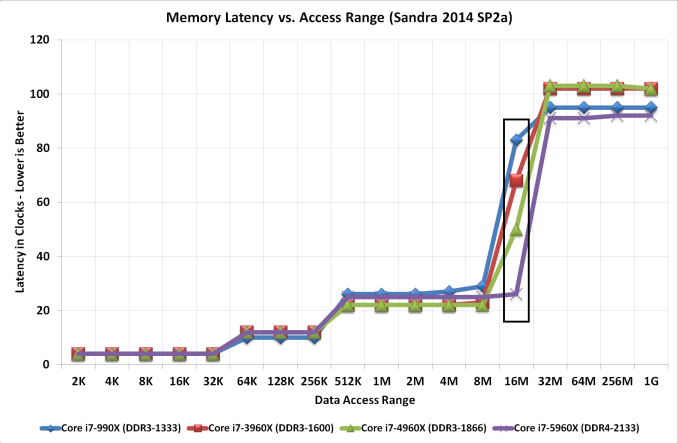

The increase in L3 cache size for the top-end CPU comes from an increased core count, extending the lower latency portion of the L3 to larger data accesses. The move to DDR4-2133 C15 would seem to have latency benefits over previous DDR3-1866 and DDR3-1600 implementations as well.
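For context on the memory side, the CAS portion of DRAM latency can be converted from cycles to nanoseconds using the data rate. The sketch below does this for the DDR4-2133 C15 setting mentioned above. The function name is our own, the DDR3 CAS values are not given in this section so they are left for the reader to fill in, and CAS latency is only one contributor to measured memory latency.

```python
def cas_latency_ns(data_rate_mts, cas_cycles):
    """Convert CAS latency from clock cycles to nanoseconds.

    DDR memory transfers twice per clock, so the I/O clock in MHz is
    data_rate_mts / 2 and one cycle lasts 2000 / data_rate_mts ns.
    """
    return cas_cycles * 2000 / data_rate_mts

# DDR4-2133 at CL15, the setting named in the text for Haswell-E.
print(f"DDR4-2133 C15: {cas_latency_ns(2133, 15):.2f} ns")
```

Calling `cas_latency_ns` with the data rate and CAS value of a DDR3 kit gives a like-for-like number to set against the DDR4 figure.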

203 Comments


schmak01 - Friday, January 16, 2015

I thought the same thing, but it probably depends on the game. I got the MSI XPower AC X99s board with the 5930K, after running a 2500K at 4.5 GHz for years. I play a lot of FFXIV, which is DX9 and therefore CPU strapped, and I noticed a marked improvement. It's a multithreaded game so that helps, but on my trusty Sandy Bridge I was always at 100% across all cores while playing; now it's rarely above 15-20%. Areas where Ethernet traffic picks up, such as high population areas, show a much better improvement as I am not running out of CPU cycles. Lastly, turn-based games like GalCivIII and Civ5 on absurdly large maps/AIs run much faster. Loading an old game on Civ5, turns that took 3-4 minutes now take a few seconds.

There is also the fact that when Broadwell-E's are out in 2016 they will still use the LGA 2011-3 socket and X99 chipset, so I figured it was a good time to upgrade for 'future proofing' my box for a while.

Flunk - Friday, August 29, 2014

Right, for rendering, video encoding, server applications and only if there is no GPU-accelerated version for the task at hand. You have to admit that embarrassingly parallel workloads are both rare and quite often better off handed to the GPU.

Also, you're neglecting overclocking. If you take that into account the lowest-end Haswell-E only has a 20%-30% advantage. Also, I'm not sure about you but I normally use Xeons for my servers.

Haswell-E has a point, but it's extremely niche and dare I say extremely overpriced? 8-core at $600 would be a little more palatable to me, especially with these low clocks and uninspiring single thread performance.

wireframed - Friday, August 29, 2014

The 5960X is half the price of the equivalent Xeon. Sure, if your budget is unlimited, 1k or 2k per CPU doesn't matter, but how often is that realistic?

For content creation, CPU performance is still very much relevant. GPU acceleration just isn't up to scratch in many areas. Too little RAM, not flexible enough. When you're waiting days or weeks for renderings, every bit counts.

CaedenV - Friday, August 29, 2014

Improvements are relative. For gaming... not so much. Most games still only use 4 cores (or less!), and rely more on the clock rate and GPU rather than specific CPU technologies and advantages, so having a newer 8 core really does not bring much more to the table for most games compared to an older quad core... and those Sandy Bridge parts could OC to the moon, even my locked part hits 4.2GHz without throwing a fuss.

Even for things like HD video editing, basic 3D content creation, etc. you are looking at minor improvements that are never going to be noticed by the end user. Move into 4K editing, and larger 3D work... then you see substantial improvements moving to these new chips... but then again you should probably be on a dual Xeon setup for those kinds of high-end workloads. These chips are for gamers with too much money (a class that I hope to join some day!), or professionals trying to pinch a few pennies... they simply are not practical in their benefits for either camp.

ArtShapiro - Friday, August 29, 2014

Same here. I think the cost of operation is of concern in these days of escalating energy rates. I run the 2500K in a little Antec MITX case with something like a 150 or 160 watt inbuilt power supply. It idles in the low 20s, if I recall, meaning I can leave it on all day without California needing to build more nuclear power plants. I can only cringe at talk about 1500 watt power supplies.

wireframed - Friday, August 29, 2014

Performance per watt is what's important. If the CPU is twice as fast, and uses 60% more power, you still come out ahead. The idle draw is actually pretty good for the Haswell-E. It's only when you start overclocking it gets really crazy.

DDR4's main selling point is reduced power draw, so that helps as well.

actionjksn - Saturday, August 30, 2014

If you have a 1500 watt power supply, it doesn't mean you're actually using 1500 watts. It will only put out what the system demands at whatever workload you're putting on it at the time. If you replaced your system with one of these big new ones, your monthly bill might go up 5 to 8 dollars per month if you are a pretty heavy user, and you're really hammering that system frequently and hard. The only exception I can think of would be if you were mining Bitcoin 24/7 or something like that. Even then it would be your graphics cards that would be hitting you hard on the electric bill. It may be a little higher in California since you guys get overcharged for pretty much everything.

Flashman024 - Friday, May 8, 2015

Just out of curiosity, what do you pay for electricity? Because I pay less here than I did when I lived in IA. We're at .10 KWh to .16 KWh (Tier 3 based on 1000KWh+ usage). Heard these tired blanket statements before we moved, and were pleased to find out it's mostly BS.

CaedenV - Friday, August 29, 2014

Agreed, my little i7 2600 still keeps up just fine, and I am not really tempted to upgrade my system yet... maybe a new GPU, but the system itself is still just fine.

Let's see some more focus on better single-thread performance, refine DDR4 support a bit more, give PCIe HDDs a chance to catch on, then I will look into upgrading. Still, this is the first real step forward on the CPU side that we have seen in a good long time, and I am really excited to finally see some Intel consumer 8 core parts hit the market.

twtech - Friday, August 29, 2014

The overclocking results are definitely a positive relative to the last generation, but really the pull-the-trigger point for me would have been the 5930K coming with 8 cores.

It looks like I'll be waiting another generation as well. I'm currently running an OCed 3930K, and given the cost of this platform, the performance increase for the cost just doesn't justify the upgrade.