The Intel Haswell-E CPU Review: Core i7-5960X, i7-5930K and i7-5820K Tested

by Ian Cutress on August 29, 2014 12:00 PM EST

Load Delta Power Consumption

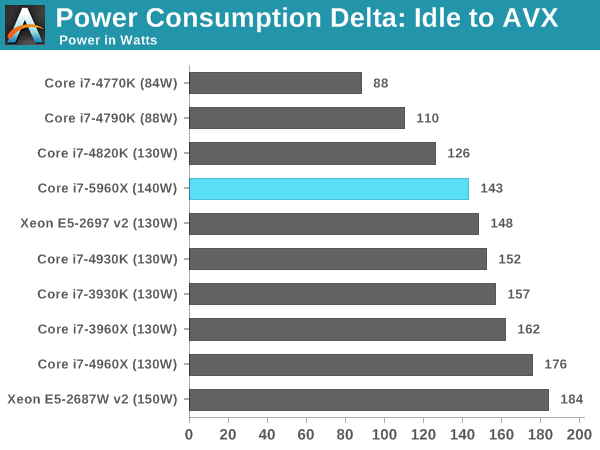

Power consumption was measured with a wall meter connected to the OCZ 1250W power supply, with the system in a single MSI GTX 770 Lightning GPU configuration. This power supply is Gold rated, and because I am in the UK on a 230-240 V supply, it delivers ~75% efficiency under 50W and 90%+ efficiency at 250W, making it suitable for both idle and multi-GPU loading. This method of power reading allows us to compare how well the UEFI and the board manage power delivery to components under load, and it includes typical PSU losses due to efficiency.

We take the difference in power draw between idle and load as our tested value, giving an indication of the power increase from the CPU when placed under stress. Unfortunately, we were not in a position to test the power consumption of the two 6-core CPUs due to the timing of testing.
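The load delta is simple arithmetic on two wall-meter readings. A minimal sketch follows; the readings are hypothetical placeholders, not figures from this review, and `load_delta` is an illustrative name.

```python
def load_delta(idle_watts: float, load_watts: float) -> float:
    """Return the increase in wall power from idle to load, in watts."""
    if load_watts < idle_watts:
        raise ValueError("load reading should not be below the idle reading")
    return load_watts - idle_watts


# Hypothetical wall-meter readings (illustrative only, not measured data).
idle_reading = 70.0
load_reading = 215.0
delta = load_delta(idle_reading, load_reading)
print(f"Load delta: {delta:.0f} W")
```

Because both readings are taken at the wall, the delta still contains PSU losses as well as the extra draw of the CPU itself.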

Because not all processors of the same designation leave the Intel fabs with the same stock voltages, there can be mild variation between samples, and the TDP given for each CPU is understandably an absolute stock limit. Because our readings are taken at the wall and include PSU losses, we get higher results than TDP, but the more interesting results are the comparisons. The 5960X is coming across as more efficient than Sandy Bridge-E and Ivy Bridge-E, including the 130W Ivy Bridge-E Xeon.
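A rough sketch of why wall-side figures exceed what the components actually draw: the wall meter sees component power plus PSU losses. The efficiency values and readings below are hypothetical placeholders rather than measurements from this review, and the function names are illustrative only.

```python
def dc_power(wall_watts: float, efficiency: float) -> float:
    """Estimate power delivered to components from a wall reading."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return wall_watts * efficiency


def dc_delta(idle_wall: float, load_wall: float,
             idle_eff: float, load_eff: float) -> float:
    """Estimate the component-side load delta from two wall readings."""
    return dc_power(load_wall, load_eff) - dc_power(idle_wall, idle_eff)


# Hypothetical readings and efficiencies (assumptions, not review data).
wall_delta = 215.0 - 70.0
component_delta = dc_delta(70.0, 215.0, idle_eff=0.80, load_eff=0.90)
print(f"Wall delta: {wall_delta:.1f} W")
print(f"Component-side estimate: {component_delta:.1f} W")
```

With these placeholder numbers the component-side estimate comes out below the wall delta, since the PSU dissipates more power at load than at idle.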

Test Setup

| Test Setup | ||||

| Processor |

Intel Core i7-5820K Intel Core i7-5930K Intel Core i7-5960X |

6C/12T 6C/12T 8C/16T |

3.3 GHz / 3.6 GHz 3.5 GHz / 3.7 GHz 3.0 GHz / 3.5 GHz |

|

| Motherboard |

ASUS X99 Deluxe ASRock X99 Extreme4 |

|||

| Cooling |

Corsair H80i Cooler Master Nepton 140XL |

|||

| Power Supply |

OCZ 1250W Gold ZX Series Corsair AX1200i Platinum PSU |

1250W 1200W |

80 PLUS Gold 80 PLUS Platinum |

|

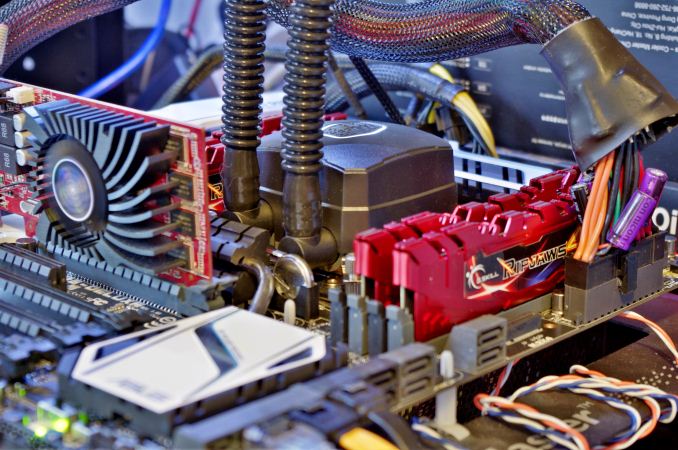

| Memory |

Corsair 4x8 GB G.Skill Ripjaws4 |

DDR4-2133 DDR4-2133 |

15-15-15 1.2V 15-15-15 1.2V |

|

| Memory Settings | JEDEC | |||

| Video Cards | MSI GTX 770 Lightning 2GB (1150/1202 Boost) | |||

| Video Drivers | NVIDIA Drivers 337.88 | |||

| Hard Drive | OCZ Vertex 3 | |||

| Optical Drive | LG GH22NS50 | |||

| Case | Open Test Bed | |||

| Operating System | Windows 7 64-bit SP1 | |||

| USB 2/3 Testing | OCZ Vertex 3 240GB with SATA->USB Adaptor | |||

Many thanks to...

We must thank the following companies for kindly providing hardware for our test bed:

Thank you to OCZ for providing us with PSUs and SSDs.

Thank you to G.Skill for providing us with memory.

Thank you to Corsair for providing us with an AX1200i PSU and a Corsair H80i CLC.

Thank you to MSI for providing us with the NVIDIA GTX 770 Lightning GPUs.

Thank you to Rosewill for providing us with PSUs and RK-9100 keyboards.

Thank you to ASRock for providing us with some IO testing kit.

Thank you to Cooler Master for providing us with Nepton 140XL CLCs and JAS minis.

A quick word to the manufacturers who sent us the extra testing kit for review, including G.Skill’s Ripjaws 4 DDR4-2133 CL15, Corsair for similar modules, and Cooler Master for the Nepton 140XL CLCs. We will be reviewing the DDR4 modules in due course, including Corsair's new extreme DDR4-3200 kit, but we have already tested the Nepton 140XL in a big 14-way CLC roundup. Read about it here.

203 Comments


Ninjawithagun - Tuesday, January 12, 2016

You can't use the 5820K with an X79 motherboard.

dawie1976 - Friday, September 12, 2014

Yip, same here. I7 4790 @ 8.8 GHz. I am still good

myT4U - Friday, March 4, 2016

Tell us about your system and setup please

damianrobertjones - Sunday, March 8, 2015

Yep 2500k @ 4.8 Ghz. Not really Just found it funny that each new post beat the previous by 100Mhz

Stas - Friday, April 10, 2015

Agreed, doing quite well with 2500k @ 4.8Ghz

leminlyme - Tuesday, September 2, 2014

I don't mean to be a prick, but you're not going to see anything in gaming performance even if intel releases a 32core 200$ 3.0 ghz processor. Because in the end, it's about the developers usage of the processors, and not many game developers want to ostracize the entry level market by making CPU heavy games. Now, when Star Citizen launches, there'll be a bit of a rush for 'better' but not 'best' cpus, and that appears to be virtually the only example worth bringing up in the next forseeable 3years of gaming (atleast so far in the public eye..) All you can do is boost up single core performance to a certain point before there's just no more benefits, upgrading your cpu for gaming is like upgrading your plumbing for pissing. Yeah it still goes through and could see marginal benefits, but you know damn well pissin' ain't shit ;D

awakenedmachine - Tuesday, September 2, 2014

Not prick-like at all, I appreciate the comment. I'm an old dude who hasn't "gamed" for years and I'm just now getting back into it, trying to figure out what will work and for what price. Your insight is very helpful! Sounds like a lot of guys are using OC'ed i5 cores, good to know.

swing848 - Thursday, September 4, 2014

Crank up World of Tanks video settings to maximum and watch your FPS sink like a rock. A high end system is needed to run this title at max settings, that only recently began to use 2 CPU cores and 2 GPUs. No one using a mid-range Intel CPU and upper-midrange single GPU will see 60fps with the video cranked to maximum.

Midwayman - Tuesday, September 30, 2014

That game is just horribly coded. There is no excuse for the amount of CPU and GPU it needs.

swing848 - Thursday, September 4, 2014

Check out my post above regarding MS-FSX. And, yes, I have installed Star Citizen some time ago [alpha release FINALLY allowed some play], and my system has done well with it, even in alpha.