Recovering Data from a Failed Synology NAS

by Ganesh T S on August 22, 2014 6:00 AM EST

Recovering the Data

Ubuntu + mdadm

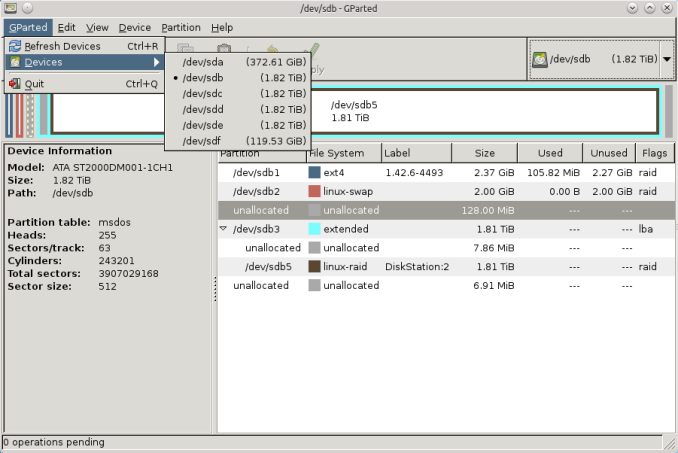

After booting Ubuntu with all four drives connected, I first used GParted to ensure that all the disks and partitions were being correctly recognized by the OS.

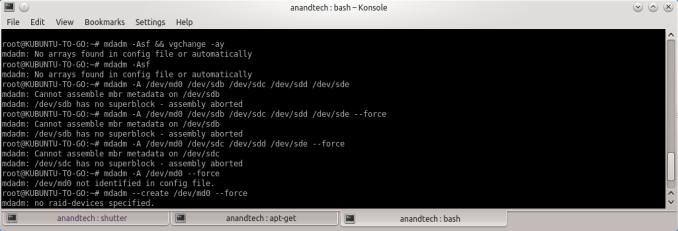

Synology's FAQ presents the ideal scenario, where the listed commands work magically to provide the expected results. But no two cases are exactly alike. When I tried to follow the FAQ directions, I ended up with a 'No arrays found in config file or automatically' message. No amount of forcing the array assembly helped.

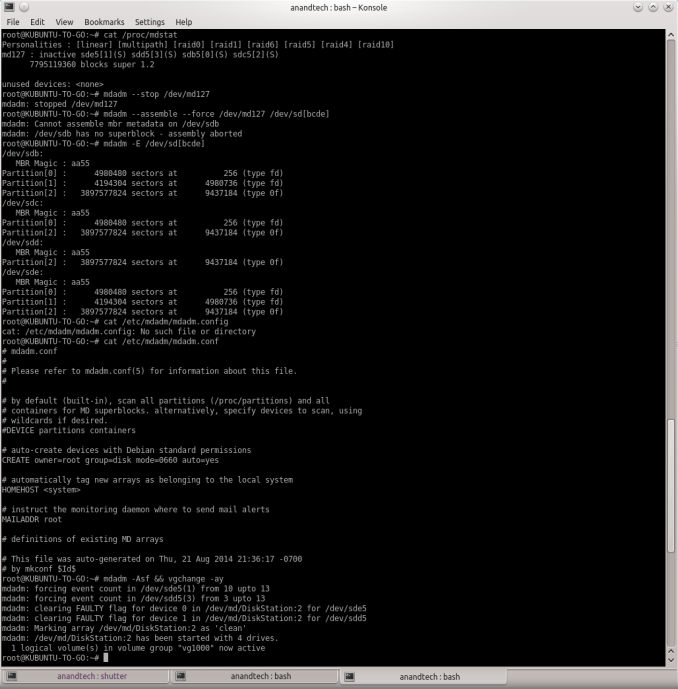

After some reading of the man pages, I decided to check /proc/mdstat and found that the Synology RAID set was actually being recognized as md127. Unfortunately, all the drives had come up with an (S) tag, marking them as spares. I experimented with a few more commands after going through some Ubuntu forum threads.
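To make the (S) observation concrete, here is a minimal Python sketch that parses /proc/mdstat text and flags an array whose members are all marked as spares. The sample text, its block count, and the member names (sda3 through sdd3) are illustrative assumptions, not output captured from the DS414j; on a live system you would pass in the contents of /proc/mdstat instead.

```python
import re

# Illustrative /proc/mdstat text (assumed, not captured from the DS414j):
# md127 is known to the kernel, but every member carries the (S) flag.
SAMPLE_MDSTAT = """Personalities : [raid6] [raid5] [raid4]
md127 : inactive sda3[0](S) sdb3[1](S) sdc3[2](S) sdd3[3](S)
      11706589184 blocks super 1.2

unused devices: <none>
"""

# An array line looks like "md127 : inactive sda3[0](S) sdb3[1](S) ...".
ARRAY_LINE = re.compile(r"^(md\d+)\s*:\s*(\S+)\s+(.*)$")
# A member looks like "sda3[0]", optionally followed by a flag such as "(S)".
MEMBER = re.compile(r"(\w+)\[(\d+)\](?:\((\w)\))?")


def parse_mdstat(text):
    """Return {name: {"state": ..., "members": [(device, slot, flag), ...]}}.

    flag is "" for a normal member, "S" for a spare, "F" for a faulty one.
    """
    arrays = {}
    for line in text.splitlines():
        match = ARRAY_LINE.match(line)
        if not match:
            continue  # personalities line, block counts, blank lines, etc.
        name, state, rest = match.groups()
        members = [(dev, int(slot), flag) for dev, slot, flag in MEMBER.findall(rest)]
        arrays[name] = {"state": state, "members": members}
    return arrays


def all_members_spare(array):
    """True when the array has members and every one of them is flagged (S)."""
    members = array["members"]
    return bool(members) and all(flag == "S" for _, _, flag in members)


if __name__ == "__main__":
    for name, info in parse_mdstat(SAMPLE_MDSTAT).items():
        print(name, info["state"], "all spares:", all_members_spare(info))
```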

The trick (in my case) turned out to be stopping the RAID device with '--stop' before executing the forced scan-and-assemble command suggested by Synology. Once this was done, the RAID volume automatically appeared as a device in Dolphin (a file manager available for Ubuntu).
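The stop-then-assemble sequence can be sketched in Python as below. It is a dry run by default and only prints the commands. The array name /dev/md127 comes from the md127 device mentioned above; the assemble step uses the long-form mdadm flags for a forced scan-and-assemble, which is an assumption and may not match the exact wording of Synology's FAQ. Running it for real requires root.

```python
import subprocess

# Assumption: the stale array is /dev/md127, as found in /proc/mdstat above.
ARRAY = "/dev/md127"


def recovery_commands(array=ARRAY):
    """The two steps that worked here: stop the half-assembled array, then
    force mdadm to scan for members and assemble whatever it finds."""
    return [
        ["mdadm", "--stop", array],
        ["mdadm", "--assemble", "--scan", "--force"],
    ]


def run(commands, dry_run=True):
    """Print each command; execute only when dry_run is False (needs root).

    check=True raises CalledProcessError on the first failing step, so the
    assemble is never attempted if the stop did not succeed.
    """
    for cmd in commands:
        print("$", " ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run(recovery_commands())  # dry run: prints the commands only
```

Stopping first matters because an array that is already registered as md127, with every member held as a spare, keeps those devices busy; the subsequent scan can only assemble them once they have been released.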

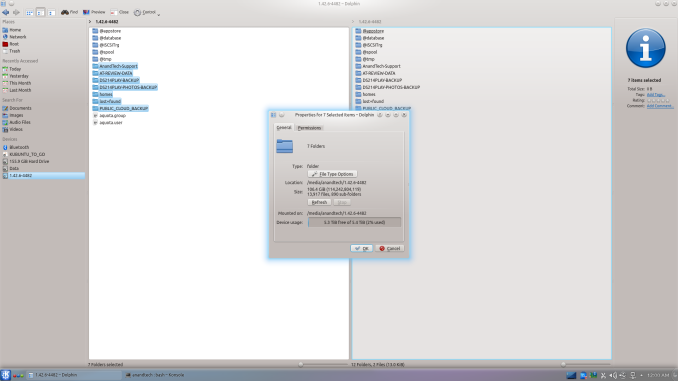

The files could then be viewed and copied over from the volume to another location. As shown above, the ~100 GB of data was safe and sound on the disks. Given the amount of time I had to spend searching online about mdadm, and the difficulties I encountered, I wouldn't be surprised if users who are short on time or have little knowledge of Linux decide to go with a Windows-based solution, even if it costs money.

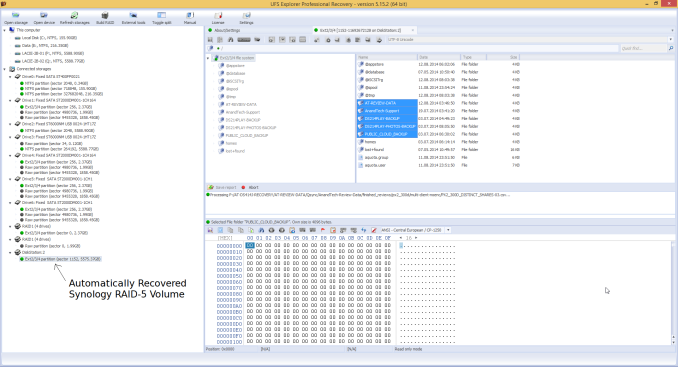

Windows + UFS Explorer

Prior to booting into Windows, I had all four drives and the LaCie DAS connected to our DAS testbed. Windows reported the four drives as having unknown partitions (because most of them were EXT4 partitions, which Windows cannot read natively). However, that was not a problem for UFS Explorer. All the partitions on all the connected drives were recognized correctly, and the program even presented the reassembled RAID-5 volume at the very end.

After this, it was a simple process of highlighting the appropriate folders in the right pane and saving them to one of the disks in the DAS.

Fortunately, I had only around 100 GB of data in the DS414j at the time of failure, and I got done with the recovery process less than 10 hours after waking up to the issue.

55 Comments


zodiacfml - Saturday, August 23, 2014

Still that hard to restore files from a NAS? Vendors should develop a better way.

colecrowder - Saturday, August 23, 2014

At work we recently had two drives throw errors on a Synology RAID 5. It wasn't quite a total failure of two drives, but it crashed the volume. It's a 30+ TB system for our film digitization business, a 13-disk RAID (1812+ and 513 expansion), and we've tried just about everything to recover, to no avail. UFS didn't help, and an expert in the Ubuntu method whom we hired couldn't fix it either. Lesson learned: back everything up every night! I did find this useful guide for situations like ours, though: http://community.spiceworks.com/how_to/show/24731-...

ganeshts - Saturday, August 23, 2014

Shame about the lost data, but the link is definitely interesting.

In case of drive failures within accepted limits (one for RAID-5, two for RAID-6), the NAS itself should be able to rebuild the array. If more drives are lost, RAID rebuild software can't help, since data is actually missing and there is not enough parity left to reconstruct it.
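The arithmetic behind those limits can be sketched with XOR parity, the scheme RAID-5 uses. This minimal Python example ignores real-world details such as parity rotation across drives and chunk sizes, and the block contents are made up. RAID-6 adds a second, differently computed parity block, which this sketch does not model.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of equal-length blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def make_parity(data_blocks):
    """RAID-5 style parity: the XOR of every data block in the stripe."""
    return xor_blocks(data_blocks)


def rebuild_missing(surviving_blocks):
    """XOR of all surviving blocks (data and parity) in a stripe.

    With exactly one block missing, this is the missing block. With two
    missing, it is only the XOR of the two lost blocks, which cannot be
    split back into either one.
    """
    return xor_blocks(surviving_blocks)


# One stripe on a hypothetical 4-drive RAID-5: three data blocks + parity.
d0, d1, d2 = b"\x01\x02", b"\x10\x20", b"\xaa\xbb"
parity = make_parity([d0, d1, d2])

assert rebuild_missing([d0, d2, parity]) == d1  # one drive lost: recoverable
print(rebuild_missing([d0, parity]) == d1)  # two drives lost: prints False
```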

That said, if there is a drive failure as well as a NAS failure, I would personally make sure to image the remaining live drives onto some other storage before attempting recovery using software (rather than trying to recover from the live disks themselves).
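A minimal Python sketch of that imaging step follows, assuming a Linux host where each surviving drive appears as a block device (the paths used below are hypothetical) and the destination has enough free space. It reads the source read-only in 4 MiB chunks and fsyncs the image at the end. Unlike a dedicated tool such as GNU ddrescue, it simply raises on the first read error, so a drive that is itself failing would need ddrescue instead.

```python
import os

CHUNK = 4 * 1024 * 1024  # 4 MiB per read


def image_device(src, dst, chunk_size=CHUNK):
    """Copy src (e.g. a hypothetical /dev/sdb) into the image file dst.

    The source is opened read-only, so the original drive is not written to.
    Any read error propagates as OSError. Returns the number of bytes copied.
    """
    copied = 0
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        while True:
            chunk = fin.read(chunk_size)
            if not chunk:
                break  # end of device/file
            fout.write(chunk)
            copied += len(chunk)
        fout.flush()
        os.fsync(fout.fileno())  # make sure the image is on stable storage
    return copied
```

For example, image_device("/dev/sdb", "/mnt/backup/disk2.img") (both paths hypothetical) would be run as root once per surviving drive, with the destination on a disk that is not part of the failed array.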

Navvie - Monday, September 1, 2014

30TB RAID5? Please tell me you replaced that array with something more suitable.

DNABlob - Sunday, August 24, 2014

Good article & research.

These days, for my personal stuff, I use cloud backups (CrashPlan) and a single disk or striped pair (for space and/or performance). If quick recovery is imperative, I'll employ something like AeroFS to sync data between two hosts on the same LAN. It's a pretty decent setup if you don't need to maintain metadata like owner & ACLs.

I'll spare you a long diatribe about software RAID5 and how a partial stripe write can silently corrupt data on a crash. As far as I can tell, this isn't fixed in Linux's RAID implementation. At the time, Sun was very proud of its ZFS / RAID-Z implementation, which fixed the partial write problem. For light write workloads, partial stripe writes are unlikely, but they are still a very real risk.

https://blogs.oracle.com/bonwick/en_US/entry/raid_...
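The partial-stripe write problem described above (often called the RAID-5 write hole) can be illustrated with a toy three-drive stripe. This Python sketch is a simplified simulation, not a model of Linux md or ZFS: one data block is rewritten, a crash prevents the matching parity update, and a later drive failure forces a rebuild from the stale parity. It shows the mechanism being debated here, not whether any particular implementation is exposed to it.

```python
def xor(a, b):
    """Byte-wise XOR of two equal-length blocks."""
    return bytes(x ^ y for x, y in zip(a, b))


def rebuild_after_crash(parity_written):
    """Toy 3-drive RAID-5 stripe: d0, d1 and parity = d0 XOR d1.

    d0 is rewritten; if the crash lands before the parity update
    (parity_written=False), the on-disk parity is stale. The drive holding
    d1 then fails and d1 is rebuilt from d0 and parity.
    Returns (original d1, rebuilt d1).
    """
    d0, d1 = b"\x0f\x0f", b"\xf0\x01"
    parity = xor(d0, d1)  # consistent stripe before the write
    d0 = b"\xff\x00"  # new data for d0 reaches the disk
    if parity_written:
        parity = xor(d0, d1)  # matching parity update also lands
    rebuilt_d1 = xor(d0, parity)  # reconstruct the failed drive's block
    return d1, rebuilt_d1


for written in (True, False):
    original, rebuilt = rebuild_after_crash(written)
    print("parity written:", written, "d1 intact:", original == rebuilt)
```

Note that d1 was never part of the interrupted write, yet it is the block that comes back wrong, and plain XOR parity carries no checksum that would flag the error.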

KAlmquist - Monday, August 25, 2014

In reply to DNABlob: As far as I know, no released version of the Linux RAID has had a problem with silent data corruption, so there is no need for a fix.

The author of the article you've linked acknowledges that RAID 5 can be implemented correctly in software when he writes, "There are software-only workarounds for this, but they're so slow that software RAID has died in the marketplace." It is true that an incorrect implementation of RAID 5 could result in silent data corruption, but the same thing can be said of any software, including ZFS. ZFS includes checksums on all data, but those checksums don't do any good if a careless programmer has neglected to call the code that verifies the checksums.

elFarto - Sunday, August 24, 2014

The reason your mdadm commands weren't working is that you were attempting to use the disks themselves, not their partitions.

mannyvel - Monday, August 25, 2014

What the article shows is that if your device uses a Linux RAID implementation, you can get the data off your drives when the device goes belly up by using free or commercial tools. While useful, you could have done the same thing by buying a new device and dropping your drives in, correct?

This isn't really data recovery where your RAID craps out because of a two-drive failure or some other condition that whacks your data. This is recovery due to an enclosure failure. Show me a recovery where your RAID dies, not where your enclosure dies.

Lerianis - Friday, September 5, 2014

Not always. Some machines are so badly designed that they INITIALIZE (wipe the drives) when old drives with data are put into them.

crashplan - Thursday, September 18, 2014

True. Anyways great article.