X-Gene 1, Atom C2000 and Xeon E3: Exploring the Scale-Out Server World

by Johan De Gelas on March 9, 2015 2:00 PM EST

The recent announcements by ARM, HP, AppliedMicro, AMD, and Intel make it clear that something is brewing in the low-end and micro server world. For those of you following the IT news, that is nothing new, but although there have been many articles discussing the new trends, very little has been quantified. Yes, the Xeon E3 and Atom C2000 have found a home in many entry-level and micro servers, but how do they compare in real-world server applications? And how about the first incarnation of the 64-bit ARMv8 ISA, the AppliedMicro X-Gene 1?

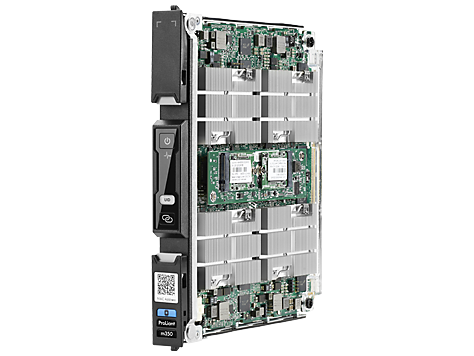

As we could not find much more than some vague benchmarks and statements that are hard to relate to the real world, we thought it would be useful to discuss and quantify this part of the enterprise market a bit more. We wanted to measure the performance and power efficiency (performance/watt) of all current low-end and micro server offerings, but that proved to be more complex than we initially thought. So we set out on a long journey: from testing basic building blocks such as the ASRock Atom board, through an affordable Supermicro cloud server with 8 nodes, to ultimately testing the X-Gene ARM cartridge inside an HP Moonshot chassis.
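In its simplest form, performance per watt is a throughput score divided by the average power drawn during the same run. The short Python sketch below only illustrates that arithmetic, assuming wall-power readings averaged over the benchmark run; the `Measurement` type, the `perf_per_watt` helper, and every number in it are hypothetical placeholders, not results from this article.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    name: str          # hypothetical label for the system under test
    throughput: float  # benchmark score, e.g. requests per second (higher is better)
    avg_watts: float   # average wall power during the same run (hypothetical)


def perf_per_watt(m: Measurement) -> float:
    """Return throughput divided by average power draw."""
    if m.avg_watts <= 0:
        raise ValueError("average power must be positive")
    return m.throughput / m.avg_watts


# Hypothetical numbers, chosen only to illustrate the calculation
systems = [
    Measurement("node A (hypothetical)", throughput=1200.0, avg_watts=40.0),
    Measurement("node B (hypothetical)", throughput=2000.0, avg_watts=80.0),
]

# Rank from most to least efficient
for m in sorted(systems, key=perf_per_watt, reverse=True):
    print(f"{m.name}: {perf_per_watt(m):.1f} per watt")
```

With these made-up inputs the faster node B ranks below node A, because it needs twice the power for less than twice the throughput, which is why raw performance and performance/watt have to be reported separately.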

It did not end there. Micro servers are supposed to run scale-out workloads, so we also developed a new scale-out test based on Elasticsearch. It's time for some in-depth analysis, based on solid real-world benchmarks.

Target Audience?

This article – as with most of my articles – is squarely targeted at professionals like system administrators and web hosting professionals. However, if you are a hardware enthusiast, the low-end server market does have quite a bit to offer. For example, if you want to make your system more robust, the use of ECC RAM may help. Also, if you want to experiment or work with virtual machines, the Xeons offer VT-d, which lets a virtual machine access I/O devices (and in some cases, the GPU) directly. Last but not least, server boards support out-of-band remote management, which allows you to turn the machine on and off remotely. That can be quite handy if you also use your desktop as a file server.

Micro and Scale-Out Servers?

The business model of many web companies is based on delivering a service to a large number of users in order to be profitable. This is because the income (advertising or something else) per user is relatively low. Dropbox, Facebook, and Google are the prime examples that everybody knows, but even AnandTech is no exception to this rule. The result is that most web companies need lots of infrastructure but do not have the budget of an IT department that is running a traditional transactional system. Web (hosting) companies need dense, power efficient, and cheap servers to keep the hosting costs low.

Small 1U servers: dense but terrible for administration & power efficiency

Just a few years ago, they had few options: half-width or short-depth 1U servers, or the denser forms of blade servers. As we have shown more than once, 1U servers are not power efficient and need too much cabling, too many PSUs, and so on. Blade servers are more power efficient and reduce both the cabling complexity and the total number of PSUs and fans. But most blade servers have lots of features web companies do not use, and they are also too expensive to be the ideal solution for all the web companies out there.

The result of the above limitations is that both Facebook and Google developed their own servers, a clear indication that there was a need for a different kind of server. In the process, a new kind of dense server chassis was introduced. At first, chassis with low-power nodes built around "wimpy" cores were called "micro servers", while the beefier servers targeted at more demanding scale-out software were called "scale-out servers". Since then, SeaMicro, HP, and Supermicro have been developing these simplified blade server chassis that offer density, low power, and low(er) costs.

Each vendor took a different angle. SeaMicro focused on density, capacity, and bandwidth. Supermicro focused on keeping the costs and complexity down. HP went for a flexible solution that could address the largest possible market – from ultra-dense micro servers to beefier scale-out servers to special-purpose servers (video transcoding, VDI, etc.). Let's continue with a closer look at the components and servers we tested.

47 Comments


Wilco1 - Tuesday, March 10, 2015

GCC 4.9 doesn't contain all the work in GCC 5.0 (close to final release, but you can build trunk). As you hinted in the article, it is early days for AArch64 support, so there is a huge difference between a 4.9 and a 5.0 compiler, and 5.0 is what you'd use for benchmarking.

JohanAnandtech - Tuesday, March 10, 2015

You must realize that the ARM ecosystem is not as mature as x86. The X-Gene runs on a specially patched kernel that has some decent support for ACPI, PCIe, etc. If you do not use this kernel, you'll get into all kinds of hardware trouble. And afaik, gcc needs a certain version of the kernel.

Wilco1 - Tuesday, March 10, 2015

No, you can use any newer GCC and GLIBC with an older kernel - that's the whole point of compatibility.

Btw, your results look wrong - X-Gene 1 scores much lower than Cortex-A15 on the single-threaded LZMA tests (compare with the results on http://www.7-cpu.com/). I'm wondering whether this is just due to using the wrong compiler/options, or to running well below 2.4GHz somehow.

JohanAnandtech - Tuesday, March 10, 2015

Hmm. The A57 scores 1500 at 1.9 GHz on compression. The X-Gene scores 1580 with gcc 4.8 and 1670 with gcc 4.9. Our scores are on the low side, but it is not like they are impossibly low.

Ubuntu 14.04, the 3.13 kernel, and gcc 4.8.2 was and is the standard environment that people will get on the m400. You can tweak a lot, but that is not what most professionals will do. Then we would also have to start testing with icc on Intel. I am not convinced that the overall picture will change that much with lots of tweaking.
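A quick per-clock normalization puts the compression scores quoted in this exchange side by side. This Python sketch assumes the X-Gene 1 really runs at its nominal 2.4 GHz (the point Wilco1 questions) and that the score scales roughly linearly with clock speed, which is only a first-order approximation; the `score_per_ghz` helper is hypothetical and introduced purely for illustration.

```python
# Per-clock normalization of the compression scores quoted in this thread.
# Assumptions: X-Gene 1 runs at its nominal 2.4 GHz, and scores scale
# roughly linearly with clock speed (first-order approximation only).


def score_per_ghz(score: float, clock_ghz: float) -> float:
    """Divide a benchmark score by the core clock in GHz (hypothetical helper)."""
    if clock_ghz <= 0:
        raise ValueError("clock must be positive")
    return score / clock_ghz


quoted = [
    ("Cortex-A57 @ 1.9 GHz", 1500, 1.9),
    ("X-Gene 1 @ 2.4 GHz, gcc 4.8", 1580, 2.4),
    ("X-Gene 1 @ 2.4 GHz, gcc 4.9", 1670, 2.4),
]

for name, score, clock in quoted:
    print(f"{name}: {score_per_ghz(score, clock):.0f} per GHz")
```

Under those assumptions the results come out at about 789, 658, and 696 per GHz: even with gcc 4.9 the X-Gene 1 lands roughly 12% below the A57 figure per GHz, despite its higher absolute score.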

Wilco1 - Tuesday, March 10, 2015

Yes, and I'd expect the 7420 to do a lot better than the 5433. But the real surprise to me is that X-Gene 1 doesn't even beat the A15 in Tegra K1 despite being wider, newer, and running at a higher frequency - that's why the results look too low.

I wouldn't call upgrading to the latest compiler tweaking - for AArch64 that is kind of essential given it is early days and the rate of development is extremely high. If you tested 32-bit mode, then I'd agree GCC 4.8 or 4.9 are fine.

CajunArson - Tuesday, March 10, 2015

This is all part of the problem: requiring people to use cutting-edge software with custom recompilation just to beat a freakin' Atom, much less a real CPU?

You do realize that we could play the same game with all the Intel parts. Believe me, the people who constantly whine that Haswell isn't any faster than Sandy Bridge have never properly recompiled computationally intensive code to take advantage of AVX2 and FMA.

The fact that all those Intel servers were running software that was only compiled for a generic x86-64 target, without requiring any special tweaking or exotic hacking, is just another major advantage for Intel, not some "cheat".

Klimax - Tuesday, March 10, 2015

And if we are going for a cutting-edge compiler, then why not ICC with Intel's nice libraries... (pretty sure even the ancient Atom would suddenly look not that bad)

Wilco1 - Tuesday, March 10, 2015

To make a fair comparison, you'd either need to use the exact same compiler and options or go all out and allow people to write hand-optimized assembler for the kernels.

68k - Saturday, March 14, 2015

You can't seriously claim that recompiling an existing program with a different (well-known and mature) compiler is equivalent to hand-optimizing things in assembler. Hint: one of the options is ridiculously expensive, the other is trivial.

aryonoco - Monday, March 9, 2015

Thank you, Johan. Very, very informative article. This is one of the least reported areas of IT in general, and one that I think is poised for significant uptake in the next 5 years or so.

Very much appreciate your efforts in putting this together.