Western Digital Updates Red NAS Drive Lineup with 6 TB and Pro Versions

by Ganesh T S on July 21, 2014 8:00 AM EST - Posted in

- NAS

- Storage

- Western Digital

Back in July 2012, Western Digital started the trend of hard drive manufacturers releasing dedicated drives for the burgeoning NAS market with the 3.5" Red lineup. These drives specifically targeted NAS units with 1-5 bays, and their firmware was tuned for 24x7 operation in SOHO and consumer NAS units. 1 TB, 2 TB and 3 TB versions were available at launch. Seagate later jumped into the fray with a hard drive series carrying similar firmware features; its differentiating aspect was the availability of a 4 TB version. Western Digital responded in September 2013 with its own 4 TB version (as well as a 2.5" lineup in capacities up to 1 TB).

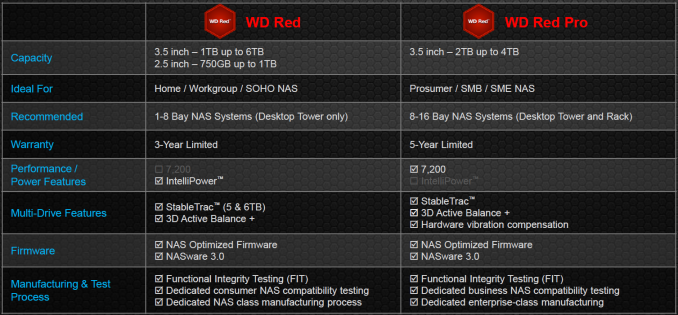

Today, Western Digital is updating its Red lineup for the third year in a row. The updates are:

- New capacities (5 TB and 6 TB versions)

- New firmware (NASware 3.0)

- Official sanction for use in 1-8 bay tower form factor NAS units

In addition, WD is introducing a new category, the Red Pro. Available in 2 - 4 TB capacities, it is targeted towards rackmount units with 8 - 16 bays (though nothing precludes its use in tower form factor units with fewer bays).

WD Red Updates

Even though 6 TB drives have been around for a while (HGST introduced its Helium drives last November, while Seagate has been shipping 6 TB versions of its Enterprise Capacity and Desktop HDD lines for a few months now), Western Digital is the first to claim a NAS-specific 6 TB drive. The updated firmware (NASware 3.0) adds vibration compensation features, which allow the Red drives to be used in 1 - 8 bay desktop NAS systems (earlier versions were officially sanctioned only for 1 - 5 bay units). NASware 3.0 also adds features that help protect data integrity in case of power loss. Unfortunately, drives with NASware 2.0 can't be upgraded to NASware 3.0, since NASware 3.0 requires a recalibration of internal components that can only be done in the factory.

The 6 TB version of the WD Red has 5 platters, which makes it the first drive we have seen with more than 1 TB per platter (1.2 TB/platter in this case). This density increase is achieved using plain old Perpendicular Magnetic Recording (PMR) technology. Western Digital has not yet found reason to move the WD Red lineup to any of the newer technologies such as SMR (Shingled Magnetic Recording), HAMR (Heat-assisted Magnetic Recording) or helium filling. The 5 TB and 6 TB versions also have WD's StableTrac technology, which secures the motor shaft at both ends to minimize vibration. As usual, the drives come with a 3-year warranty. Other aspects such as the rotation speed, buffer capacity and qualification process remain the same as in the previous generation.

WD Red Pro

The Red Pro targets NAS systems in medium and large businesses that need more performance, and it delivers that by moving to a rotation speed of 7200 rpm. Like WD's enterprise drives, the Red Pro comes with hardware-assisted vibration compensation, undergoes extended thermal burn-in testing and carries a 5-year warranty. 2, 3 and 4 TB versions are available, with the 4 TB version being a five-platter design (800 GB/platter).

WD Green drives are also getting a capacity boost to 5 TB and 6 TB. WD specifically mentioned that its in-house NAS and DAS units (My Cloud EX2 / EX4, My Book Duo etc.) are getting new models with these higher capacity drives pre-installed. The MSRPs for the newly introduced drives are provided below:

WD Red Lineup 2014 Updates - Manufacturer Suggested Retail Prices

| Model | Model Number | Price (USD) |
|---|---|---|
| WD Red - 5 TB | WD50EFRX | $249 |
| WD Red - 6 TB | WD60EFRX | $299 |
| WD Red Pro - 2 TB | WD2001FFSX | $159 |
| WD Red Pro - 3 TB | WD3001FFSX | $199 |
| WD Red Pro - 4 TB | WD4001FFSX | $259 |
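Since cost per unit of capacity is usually what decides NAS drive purchases, it can help to normalize the MSRPs above. The Python sketch below does only that arithmetic: prices and capacities are copied from the table, capacities are the marketed decimal terabytes, and the `MSRP` dictionary and `dollars_per_tb` helper are illustrative names, not anything published by WD.

```python
# MSRPs (USD) and marketed capacities (TB) copied from the table above.
MSRP = {
    "WD50EFRX": (5, 249),
    "WD60EFRX": (6, 299),
    "WD2001FFSX": (2, 159),
    "WD3001FFSX": (3, 199),
    "WD4001FFSX": (4, 259),
}


def dollars_per_tb(capacity_tb: int, price_usd: float) -> float:
    """Return the MSRP cost of one terabyte, rounded to cents."""
    return round(price_usd / capacity_tb, 2)


if __name__ == "__main__":
    for model, (tb, usd) in MSRP.items():
        print(f"{model}: ${dollars_per_tb(tb, usd):.2f}/TB")
```

At MSRP, the 5 TB and 6 TB Red both work out to roughly $49.80/TB, while the Red Pro models range from about $64.75/TB (4 TB) to $79.50/TB (2 TB), so the 7200 rpm drives carry a noticeable premium per terabyte.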

We do have review units of both the 6 TB WD Red and the 4 TB WD Red Pro. Look out for the hands-on coverage in the reviews section over the next couple of weeks.

37 Comments


ZeDestructor - Tuesday, July 22, 2014

You make a fair point, and tbh, I ramble on about it in every NAS review: gimme ZFS from the factory already, damnit! I've grown so used to using ZFS for any form of RAID (and this is just more of a pro-softraid argument) that I forget people actually use non-ZFS RAID at all...

ZeDestructor - Tuesday, July 22, 2014

Also, RAIDZ3 (three-disk parity). Good stuff if, like me, you're thinking about huge arrays... 3x 15-disk vdevs in a Backblaze pod is my plan... once I can finally afford to get 5 disks in a single go and gradually build up from there...

tuxRoller - Tuesday, July 22, 2014

ZFS isn't always the best solution for home use. I preach the gospel of SnapRAID (non-realtime snapshot parity, non-striping, scrub support, arbitrary levels of parity, mix-and-match drives, easy addition and removal... open source!). It's great for a media server with infrequent data changes, or as a backup for PCs. For high-churn access patterns, ZFS is still the best (well, for home use).

wintermute000 - Saturday, July 26, 2014

Which means building your own and pretty much going ECC to handle ZFS (since we're talking about the home/enthusiast/SOHO/SMB market, not enterprise). At this point, given the URE probability of 6 TB drives with consumer-class 10^14 reliability, RAID6 is not much better than RAID5. Statistically speaking, that's about a 50% failure rate per disk, so even with RAID6 you're dicing with danger during a rebuild.
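One rough way to check this kind of estimate is to treat the spec sheet figure as an average of one unrecoverable read error (URE) per 10^14 bits read, and to assume errors occur independently (a Poisson model). The Python sketch below does exactly that; the function names are illustrative, and the independence assumption is a simplification (spec sheets quote the rate as an upper bound of "less than 1 in 10^14", and real errors are not uniformly spread). Under this model, one full read of a 6 TB drive has roughly a 38% chance of hitting at least one URE, and reading the three surviving drives during a 4-drive RAID5 rebuild raises that to about 76%.

```python
import math

BITS_PER_BYTE = 8


def p_at_least_one_ure(bytes_read: float, ure_rate: float = 1e-14) -> float:
    """Probability of >= 1 unrecoverable read error while reading bytes_read bytes.

    Assumes independent errors averaging ure_rate per bit read (Poisson model).
    """
    expected_errors = bytes_read * BITS_PER_BYTE * ure_rate
    # 1 - exp(-lambda), computed via expm1 for accuracy when lambda is small
    return -math.expm1(-expected_errors)


def raid5_rebuild_risk(n_drives: int, drive_bytes: float,
                       ure_rate: float = 1e-14) -> float:
    """Risk of a URE while reading all n_drives - 1 surviving drives in a rebuild."""
    return p_at_least_one_ure((n_drives - 1) * drive_bytes, ure_rate)


if __name__ == "__main__":
    six_tb = 6e12  # marketed capacity: 6 * 10**12 bytes
    print(f"one full read of a 6 TB drive: {p_at_least_one_ure(six_tb):.1%}")
    print(f"4-drive RAID5 rebuild: {raid5_rebuild_risk(4, six_tb):.1%}")
```

The model only counts the chance of hitting a URE; whether that URE actually costs data depends on how much redundancy is left and on how the RAID layer reacts to it.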

ZeDestructor - Tuesday, July 29, 2014

Quoting my own reply to asmian a little above: "ZFS should be able to catch said errors. The issue in traditional arrays is that file integrity verification is done so rarely that UREs can remain hidden for very long periods of time, and consequently, only show up when a rebuild from failure is attempted.

ZFS meanwhile will "scrub" files regularly on a well-setup machine, and will catch the UREs well before a rebuild happens. When it does catch the URE, it repairs that block, marks the relevant disk cluster as broken and never uses it again.

In addition, each URE is only a single bit of data in error. The odds of all drives having a URE for the exact same block at the exact same location, AND of the parity data for that block being affected, AND of all of those errors happening at the same time, are extremely small compared to the chance of getting 1-bit errors (that magical 1x10^14 number).

Besides, if you really get down to it, that error rate is the same for smaller disks as well, so if you're building a large array, the error rate is still just as high, but now spread over a larger number of disks. So while the odds of a URE are smaller (arguably; I'm not going there), the odds of a physical crash, motor failure, drive head failure and controller failure are higher, and as anyone will tell you, those are far more likely to happen than 2 or more simultaneous UREs for the same block across disks (which is why you want more than 1 disk worth of parity, so there is enough redundancy DURING the rebuild to tolerate a second URE happening on another block)"

It boils down to one URE being only 1 bit, not a whole disk failure.
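The scrub behaviour described in the quote (verify every block against a checksum, then rewrite a bad copy from a good one) can be sketched as a toy two-way mirror in Python. This is not ZFS's code or on-disk format: the `ToyMirror` class and its methods are hypothetical, the checksums are SHA-256 digests kept in a separate list rather than in parent block pointers as ZFS does, and drive-level remapping of bad sectors is not modelled. It does show why a regular scrub catches a latent error while a healthy copy still exists, instead of discovering it in the middle of a rebuild.

```python
import hashlib
from dataclasses import dataclass, field


def checksum(block: bytes) -> bytes:
    return hashlib.sha256(block).digest()


@dataclass
class ToyMirror:
    """Hypothetical mirror with per-block checksums stored apart from the data."""
    disks: list[list[bytes]]
    sums: list[bytes] = field(default_factory=list)

    @classmethod
    def create(cls, blocks: list[bytes], copies: int = 2) -> "ToyMirror":
        return cls(disks=[list(blocks) for _ in range(copies)],
                   sums=[checksum(b) for b in blocks])

    def scrub(self) -> tuple[list[int], list[int]]:
        """Verify every copy of every block and heal bad copies from a good one.

        Returns (indices of repaired blocks, indices of unrecoverable blocks).
        """
        repaired, lost = [], []
        for i, expected in enumerate(self.sums):
            good = [d[i] for d in self.disks if checksum(d[i]) == expected]
            if not good:
                lost.append(i)  # every copy is bad: report it rather than guess
                continue
            healed = False
            for d in self.disks:
                if checksum(d[i]) != expected:
                    d[i] = good[0]  # rewrite the bad copy from a verified one
                    healed = True
            if healed:
                repaired.append(i)
        return repaired, lost


if __name__ == "__main__":
    pool = ToyMirror.create([b"alpha", b"bravo", b"charlie"])
    pool.disks[1][1] = b"br@vo"  # simulate silent corruption on the second disk
    print(pool.scrub())  # ([1], [])
    print(pool.scrub())  # ([], [])
```

Reporting the index of an unrecoverable block, rather than failing the whole pool, is also the filesystem-aware behaviour DanNeely contrasts with block-level RAID controllers further down.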

Sivar - Monday, July 21, 2014

A single bit error is not the same as a drive failure.

DanNeely - Monday, July 21, 2014

The problem is with the RAID controllers, mostly in that they work below the level of the FS. Instead of reporting that sector 1098832109 was lost during the rebuild, and that consequently you need to restore the file \blah\stuff\whatever\somethingelse\whocares.ext from an alternate backup source, they report a failure to rebuild the array because the drive with the 1-bit error on it failed. And in a RAID 1/5 configuration, the presence of a second bad drive means that, as far as the RAID controller is concerned, your array is now non-recoverable, because you've lost 2 drives in an array that can only tolerate a single failure.

icrf - Monday, July 21, 2014

I was just about to buy a bunch of 4 TB Red drives. Is there any way to tell whether one is getting a drive with 2.0 or 3.0 firmware, since it's not user-upgradable?

noeldillabough - Wednesday, July 23, 2014

I'd like to know this too; I noticed everywhere has a sale on the (presumably older) Red drives right now.

icrf - Monday, July 28, 2014

I asked WD support and got a pretty disappointing answer:

--------------------------------

Thank you for contacting Western Digital Customer Service and Support. My name is Daniel.

You have a very good question, I will be very happy to answer it.

You are right, the oldest drives cannot be updated to the new technology and the model is the same.

However the specs of the hard drives provided by every retailer should provide the correct data.

So, before purchasing the hard drive, check the specs to see if it has the newest technology. I just checked on Newegg and Amazon and they provide that detail. You will realize if it is an old drive or a new one.

If this does not answer your question, please let me know, I will be very happy to help you.

--------------------------------

At the time I asked, Amazon listed their version at 3.0 but the image still said 2.0. I asked them about it and the description reverted to 2.0, so trusting retailers to be accurate sounds like a bad plan.

I'm sure they're just trying to create confusion to clear the old stock. I mean, who would buy the old one when the new one is sitting right beside it for the same price? It's just a little disappointing when I'm looking to buy right now: the 6 TB drives are available and obviously updated since they're new, but their $/GB is just a little high. 3 TB drives are actually the best on that metric, but 4 TB drives aren't far off and get me a little better density.