ARM’s Mali Midgard Architecture Explored

by Ryan Smith on July 3, 2014 11:00 AM EST

Midgard: The Modern Mali

As ARM’s current-generation SoC GPU architecture, at the highest level the Midgard architecture is an interesting take on GPUs that in some ways looks a lot like other GPUs we’ve seen before, and in other ways (owing to its uncommon ancestry) is radically unlike other GPUs. This is coupled with the fact that as an SoC GPU supplier, ARM is in an interesting position where they can offer both CPU and GPU designs to third-party licensees, unlike most other GPU designers, who either use their designs internally (Qualcomm, NVIDIA) or only license out GPUs and not ARM CPUs (Imagination). From a sales perspective this means ARM can offer the CPU and GPU designs together in a bundle, but perhaps more importantly it means they have the capability to design the two in concert with each other, being in the position of the sole creator of the ARM ISA.

Architecturally Midgard is a direct descendant of Utgard. While there is a significant difference in how unified and discrete shaders operate, and as a result they cannot simply be swapped, the resulting shader design for Midgard still ends up inheriting many of Utgard’s design elements, features, and quirks. At the same time the surrounding functionality blocks that compose the rest of the GPU have received their own upgrades over the years to improve performance and features, but are nonetheless distinctly descended from Utgard as well. At the end of the day this is a distinction more important for programmers than it is for users (or even tech enthusiasts), but going forward it’s interesting to note just how similar Utgard and Midgard are, a similarity we don’t normally see between unified and discrete shader designs.

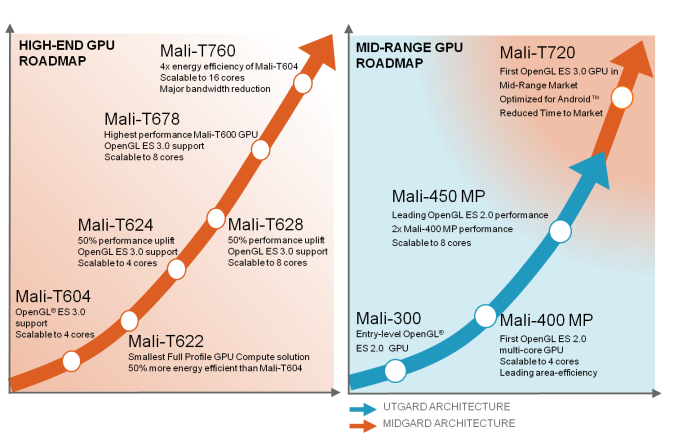

From a design standpoint Midgard is meant to span much of the range for SoC GPUs, from cheap, area-efficient designs to relatively massive designs with an eye on gaming. To do so, ARM offers a few different variations on the Midgard design that share the same architecture but vary slightly in features and internal organization. For the purposes of today’s article we’ll be focusing on ARM’s latest and greatest design, Mali-T760, but we will also be calling out differences as necessary.

First and foremost then, let’s talk about design goals and features. Unlike the bare bones OpenGL ES 2.0 Utgard architecture, Midgard has been designed to be a more feature-rich architecture that not only offers solid graphics performance but solid compute performance too. This is in part a logical extension of what a unified shader GPU can already do – they’re innately good at mass math for graphics, so compute is only a minor stretch – but also a deliberate decision by ARM to push compute harder than they would otherwise have to for merely a graphics product.

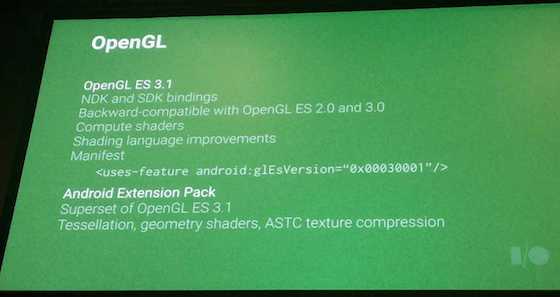

From an API standpoint then Midgard was designed as what is best described as an OpenGL ES 3.0+ part. The architecture was designed from the start to offer functionality beyond what OpenGL ES 3.0 would offer, a decision that has since benefitted ARM by allowing Midgard parts to keep up with newer API standards. In fact ARM has just recently completed OpenGL ES 3.1 conformance testing, with their updated drivers passing Khronos’s required tests. As such all Midgard parts at a hardware level can support OpenGL ES 3.1, with software support reliant on OS and device vendors shipping updated OSes and drivers that enable 3.1 functionality.

Even then Midgard has some functionality that has gone untapped, but will be enabled in the Android ecosystem through the upcoming Android Extension Pack for Android L. The AEP will further build off of OpenGL ES 3.1 by enabling features such as tessellation and geometry shaders, features that did not make it into 3.1. As with OpenGL ES 3.1, ARM has confirmed that they expect all Midgard GPUs to support the AEP.

Finally, along with OpenGL ES support, ARM also officially offers Direct3D support on Midgard. This functionality has not yet been tapped – all Windows Phone and Windows RT devices so far have been Qualcomm or NVIDIA based – but in principle it is there. One thing to note however is that among the Mali 700 series, only Mali-T760 is Direct3D Feature Level 11_1 capable. Mali-T720 by contrast only supports Feature Level 9_3, more befitting of the market realities and its status as a lower cost, lower complexity part.

Meanwhile from a compute standpoint Midgard is intended to be a strong competitor by supporting Android’s RenderScript framework and OpenCL 1.2 full profile. OpenCL support on SoC GPUs has been spotty due in part to the fact that the major OSes haven’t consistently supported it (iOS never has and Android only recently), and of those SoC GPUs that do support it, not all of them support the full profile as opposed to the much more restricted embedded profile. As is often the case with GPU computing just how well this functionality is used is up to the capabilities and imaginations of developers, but ARM has made it clear that they’re fully backing GPU computing even in the SoC space.
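To make the full-versus-embedded distinction a little more concrete, here is a minimal sketch of how an application might check that a device advertises at least OpenCL 1.2 with the full profile. It assumes the two strings have already been read from the driver via `clGetDeviceInfo` (`CL_DEVICE_VERSION` and `CL_DEVICE_PROFILE`). The function names and the sample strings are hypothetical and are not taken from any ARM driver.

```python
import re


def parse_opencl_version(version_string):
    """Extract (major, minor) from a CL_DEVICE_VERSION-style string.

    The OpenCL specification formats this string as
    "OpenCL <major>.<minor> <vendor-specific information>".
    """
    match = re.match(r"OpenCL (\d+)\.(\d+)", version_string)
    if match is None:
        raise ValueError(f"unrecognized version string: {version_string!r}")
    return int(match.group(1)), int(match.group(2))


def meets_full_profile(version_string, profile_string, minimum=(1, 2)):
    """Return True if the device reports the full profile at or above `minimum`.

    CL_DEVICE_PROFILE is either "FULL_PROFILE" or "EMBEDDED_PROFILE".
    """
    is_full = profile_string == "FULL_PROFILE"
    return is_full and parse_opencl_version(version_string) >= minimum


# Hypothetical strings, for illustration only.
full_device = meets_full_profile("OpenCL 1.2 example-vendor", "FULL_PROFILE")
embedded_device = meets_full_profile("OpenCL 1.2 example-vendor", "EMBEDDED_PROFILE")
```

An embedded-profile device can report the same version number as a full-profile one, which is why the profile string has to be checked separately from the version.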

66 Comments

kkb - Monday, July 7, 2014 - link

I hope you do understand how to read benchmark results. 3dmark and GFXbench (offscreen) results are resolution independent. Now go and check the results in the article.

As per T760, I will not comment on theoretical GFLOP numbers unless there is a real product. Even the theoretical MAD GFLOPs are not so great (roughly half) compared to others. I don't think anyone is going to fall for the marketing gimmick of counting the dot product as an extra 40 GFLOPs

darkich - Monday, July 7, 2014 - link

You said it definitely performs better yet it loses on the T Rex HD onscreen and in Basemark X overall. That's not definite you know.

Regardless, even if a case can be made that it performs slightly better overall than the Mali T628, it is without doubt outperformed by the:

ULP Gforce 3

Adreno 330

Sgx G6320

.. and is *definitely* far outclassed by the Adreno 420, Kepler K1, SGX G6550, Mali T760MP6-10.

Until Intel shows their next generation of ULP graphics, I don't see a point in comparing the current one

darkich - Monday, July 7, 2014 - link

Correction, I believe the GPU in Tegra 4 is internally referred to as the ULP GeForce 4, not 3

fithisux - Friday, July 4, 2014 - link

Could you provide an exposition of the C66x architecture, since it is suitable in my opinion for GPGPU tasks and realtime software rendering/raytracing

jann5s - Friday, July 4, 2014 - link

lol, I thought this expression was wrong: "the proof is in the pudding", but in fact I was wrong: http://en.wiktionary.org/wiki/the_proof_is_in_the_...

toyotabedzrock - Friday, July 4, 2014 - link

I wish you would have talked more about the GPU in the Nexus 10 since that is a shipping product. It would be nice to know how it differs from the newer Midgard designs.

seanlumly - Friday, July 4, 2014 - link

Another interesting point to make about the Mali architecture (that goes unnoticed, but is significant) is that the anti-aliasing is fully pipelined, tiled (read zero bandwidth penalty for the op), and very fast. MSAA 4x costs 1 cycle, MSAA 8x costs 2 cycles, and MSAA 16x costs 4 cycles. This means that if you have a scene full of fragment shaders running for more than 4 cycles (which is not too complex these days) you get the benefit of ultra-high quality MSAA 16x for FREE.

There aren't too many examples of MSAA 16x online, but even at MSAA 8x performs very well, with sharp, non-blurry results and is often compared against. MSAA would produce very crisp edges devoid of aliasing and crawling during animation.

Of course, MSAA isn't perfect -- it isn't terribly helpful for deferred renderers -- but it certainly doesn't hurt them when its costs are nothing, even if you are planning to do a screen-space pass in post.

toyotabedzrock - Friday, July 4, 2014 - link

Oddly the best open source driver is for adreno GPU, perhaps you should ask that person what he knows about it?

ol1bit - Saturday, July 5, 2014 - link

This is another fantastic job guys! Thanks to you and Thanks to Midgard for sharing!

cwabbott - Saturday, July 5, 2014 - link

Well, if they won't cough up the information, then there's always freedreno... Rob Clark has reverse engineered basically everything you would want to know about the Adreno architecture, up to even more detail than this article. All that remains is to fill in the pieces based on the documentation he's written...