ARM’s Mali Midgard Architecture Explored

by Ryan Smith on July 3, 2014 11:00 AM EST

Midgard’s Execution Model: ILP, not TLP

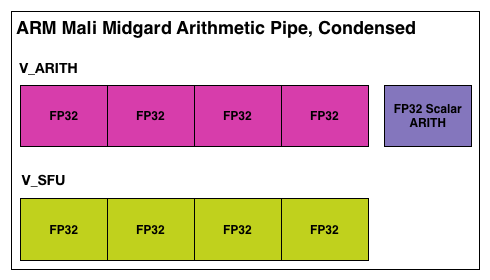

Having seen the makeup of a Midgard arithmetic unit, we can finally get to the subject of thread execution on Midgard, and in the process get to what’s easily the least traditional aspect of the Midgard GPU.

As we mentioned before, Midgard is based on the idea of using VLIW with SIMDs, or as ARM likes to call it Sequential Long Instruction Word. In this execution model the Midgard shader compiler attempts to construct a single word of instructions in order to fill as many of the ALUs in an arithmetic pipeline as is possible. As is common for VLIW designs, this requires extracting a degree of Instruction Level Parallelism from the instructions being fed to the shader compiler, both to find operations that can be placed together on the SIMD units and to find operations that can then fill any of the otherwise idle units in a Midgard arithmetic pipeline. There is no superscalar execution going on here, but to make full use of a Midgard arithmetic unit you need to be able to bundle several operations together into the single VLIW word.
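To make the bundling problem concrete, here is a minimal Python sketch of a greedy, in-order packer. It is an illustration and not ARM's shader compiler: the `Op` tuple, the `bundle_ops` function, the register names, and the slot count are all hypothetical, and a real VLIW compiler would also reorder instructions and match each operation to a suitable unit type.

```python
from typing import List, Set, Tuple

# Hypothetical operation format: (name, destination register, source registers).
Op = Tuple[str, str, Tuple[str, ...]]


def bundle_ops(ops: List[Op], slots_per_word: int) -> List[List[str]]:
    """Pack ops, in program order, into words of at most slots_per_word ops.

    An op may join the current word only if it neither reads nor writes a
    register written by an op already in that word. Otherwise the current
    word is closed and a new one is started.
    """
    words: List[List[str]] = []
    current: List[str] = []
    written: Set[str] = set()
    for name, dst, srcs in ops:
        depends = dst in written or any(s in written for s in srcs)
        if current and (depends or len(current) == slots_per_word):
            words.append(current)
            current, written = [], set()
        current.append(name)
        written.add(dst)
    if current:
        words.append(current)
    return words


def slot_utilization(words: List[List[str]], slots_per_word: int) -> float:
    """Fraction of issue slots that hold a real operation."""
    return sum(len(w) for w in words) / (len(words) * slots_per_word)


# Two independent multiplies can share a word; the add must wait for both.
independent = [
    ("mul_a", "r0", ("x", "y")),
    ("mul_b", "r1", ("z", "w")),
    ("add_c", "r2", ("r0", "r1")),
    ("mul_d", "r3", ("x", "z")),
]
# A fully dependent chain leaves every word with a single filled slot.
chain = [
    ("op1", "r0", ("x",)),
    ("op2", "r1", ("r0",)),
    ("op3", "r2", ("r1",)),
]
```

With a hypothetical 3-slot word, the first sequence packs into two words while the dependent chain needs three words with one filled slot each. That is the sense in which a VLIW design depends on the compiler finding ILP: the hardware issues whatever words it is given, filled or not.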

Depending on who you ask, an ILP-centric design either makes a great deal of sense or is difficult to program for, with the answer turning on the specific quirks of the architecture. From a hardware perspective it is very simple, because the compiler does all the work while the hardware merely executes VLIW instructions as requested. The hardware does no scheduling or hazard checking of its own, and only really needs to deal with stalls (when a memory operand isn’t available in time). This saves both die space and power, and because all of the instructions in an arithmetic unit come from the same thread, you can save even more space by having a group of ALUs work on the same thread with its data elements adjacent to each other.

The other SoC-class GPUs we’ve looked at that are currently paired with ARM processors, NVIDIA’s Kepler and Imagination’s Rogue, both rely at least in part on ILP. Both also happen to be superscalar (something that’s not the case for Midgard), but at a basic level Midgard is in good company with its ILP-centric design.

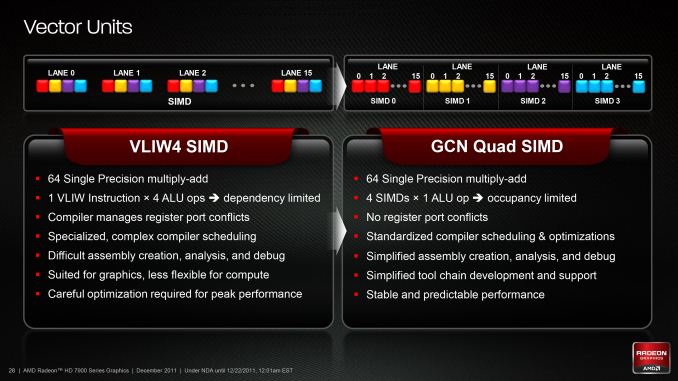

On the other hand we have seen one other company attempt a VLIW and ILP-centric design in recent history only to abandon it. AMD used a vaguely similar VLIW design for their Radeon HD 2000 through HD 6000 parts before replacing it with a non-VLIW design in 2011. At the time they cited that compute workloads weren’t mapping well to their design, and that even graphics workloads were becoming an increasingly poor fit. AMD’s replacement architecture, Graphics Core Next, used no ILP and relied entirely on Thread Level Parallelism.

A Summary of AMD's Experiences With VLIW versus TLP SIMD

Ultimately the intended use case for Midgard is first and foremost graphics, and this is something that by all accounts it seems to do well at. For a simpler GPU design such as what would be suitable for an SoC-class GPU, VLIW is still a strong fit in ARM’s case because the 128-bit vector SIMD maps well to RGBA shader operations (one color channel per SIMD lane) while the scalar unit covers anything else that doesn’t map well to the vector units. To that end we won’t spend too much more time covering the pros and cons of an ILP-centric VLIW design, but we did want to cover the history and how it compares to some other architectures.

Meanwhile, for anyone curious about the pipeline in an individual Midgard ALU, ARM also opened up to us on that front. In a very rare move for the GPU world, ARM told us how deep their pipeline is, coming in at up to 128 stages deep for the entire Midgard pipeline. The arithmetic pipeline itself is under 30 stages deep, but each pipeline of the tri-pipe design is a different length. Since we don’t know the depth of any other GPU pipelines we don’t have anything of substance to compare it to, but it is notable that ARM’s execution model is consistent with GPU execution models in general. A 128 stage deep pipeline implies a high latency, high throughput machine that is interleaving a large number of threads to keep the pipeline full, which is in a nutshell an accurate description of GPUs in general. In any case, for Midgard ARM provides a further twist by bypassing misses (a failed attempt to read or write a piece of data) entirely; misses result in an instruction restart, rather than stalling the pipeline and all of the threads in it while waiting for the miss to be resolved.

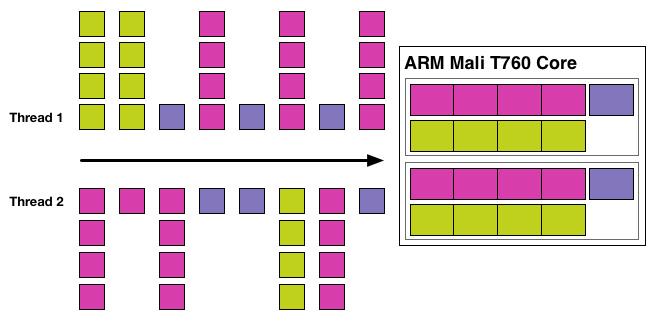

Looking at the larger picture then, now that we have seen how a single thread executes across one Midgard arithmetic unit, let’s see how multiple arithmetic units work in concert. Or rather let’s not, because they don’t.

In the larger picture Midgard deviates from any other modern GPU we’ve seen in a very important way: Midgard does not extract thread level parallelism.

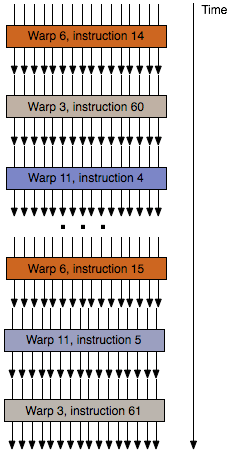

Every other GPU, be it Imagination, NVIDIA, AMD, or Intel, and whether it relies on ILP or not, also relies on thread level parallelism (TLP) to organize their work. In these designs multiple threads are bundled together and sent through an array of ALUs at once, where each thread in turn represents what is essentially a single pixel. Due to their spatial locality these pixels-as-threads are run together, the idea being that due to their locality they’re almost certainly going to have the same instruction applied, and in the end can be sent through ALUs that operate as a group and not independently. These wavefronts are a basic tenet of modern GPUs, whether it’s groups of 16 threads per cycle as is the case for AMD and Imagination, or groups of 32 threads per cycle as is the case for NVIDIA.

An Example of Wavefront/Warp Thread Execution On Other Architectures

But it’s this basic tenet that the Mali team has wanted to challenge, and in doing so they have put together an architecture in Midgard (and Utgard before it) that extracts no TLP from threads and executes each and every one of them independently. This, more than anything else, is the element that sets Midgard’s design apart from anything else we’ve seen so far.

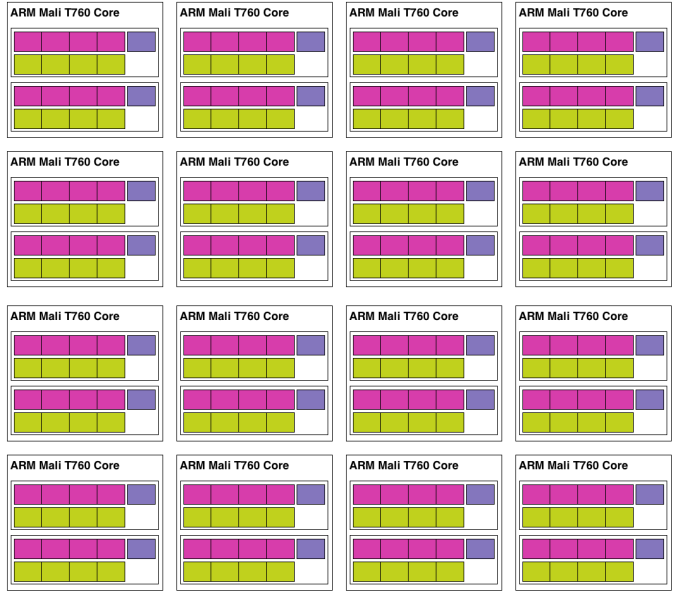

As a result of this design decision, each arithmetic pipeline in Midgard is essentially its own CPU, consuming threads and processing them independently. Even in a single Mali-T760 tri-pipe, where there are 2 arithmetic units in a block, these arithmetic units act on their own, working separately from one another. Or to put this into practice, in a large Midgard configuration such as T760MP16, this would mean a full 32 threads (16 cores * 2 math units) are being actively and independently executed.

Taken at face value then, this is a design that is very unorthodox and comes with a significant number of pitfalls that thread level parallelism is designed to compensate for. On the whole TLP is considered to be very easy to extract due to the spatial locality of pixels, which is all the more reason why on the desktop AMD went from a design that mixed ILP and TLP to a design that only utilized TLP. Furthermore because of the locality of pixels and threads, the ALUs in a design that does utilize TLP – typically a 16 or 32 wide array of SIMD ALUs – could be smaller due to the fact that they did not need the means to act independently, allowing more ALUs to be placed on such a GPU than would otherwise be possible.

But on the other hand TLP-centric designs do have their own drawbacks, and these are the reasons that ARM believes their non-TLP Midgard architecture is the way to go. First and foremost, TLP designs can only excel when threads/pixels need to flow together. If they need to go their separate ways, say due to a branching operation, then execution is slowed down to handle that branch. Alternatively, a group of threads that are branching may not be large enough to fill all 16/32 slots in a wavefront, in which case some of the ALUs in the array executing the wavefront go unused.
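The divergence penalty described above can be illustrated with a toy model. The Python sketch below assumes a lockstep wavefront that issues every branch path needed by at least one of its threads, with the lanes belonging to other threads masked off. The function name, the cycle costs, and the 16-wide example are hypothetical, and the model ignores everything else a real GPU does (latency hiding, reconvergence details, memory effects).

```python
from typing import Sequence


def wavefront_utilization(takes_branch: Sequence[bool],
                          cost_taken: int,
                          cost_not_taken: int) -> float:
    """Fraction of ALU lane-cycles doing useful work in a lockstep wavefront.

    takes_branch holds one flag per thread in the wavefront. Both costs are
    cycle counts and are assumed to be positive. A path is issued for the
    whole wavefront if any thread needs it; threads on the other path idle.
    """
    width = len(takes_branch)
    n_taken = sum(takes_branch)
    n_not_taken = width - n_taken
    # Cycles the whole wavefront spends: each required path is run once.
    cycles = (cost_taken if n_taken else 0) + (cost_not_taken if n_not_taken else 0)
    # Lane-cycles of real work: each thread only needs its own path.
    useful = n_taken * cost_taken + n_not_taken * cost_not_taken
    return useful / (width * cycles)


# All 16 threads agree: every lane is busy on every cycle.
uniform = wavefront_utilization([True] * 16, 10, 10)
# An even split between two equally long paths halves utilization.
split = wavefront_utilization([True] * 8 + [False] * 8, 10, 10)
# A single stray thread on a long path drags the whole wavefront along.
stray = wavefront_utilization([True] + [False] * 15, 10, 2)
```

In this model a design that executes every thread independently, as ARM describes for Midgard, always scores 1.0, because each pipeline only ever runs the path its own thread took. What the model leaves out is the other side of the trade: the extra per-ALU control logic that lets each pipeline act on its own.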

Midgard in contrast does not suffer from any thread related occupancy issues beyond very light workloads where even 32 threads (T760MP16) cannot be found, in which case Midgard gets to shut off whole cores. In fact because of this Midgard gets to branch for next to free, since there aren’t any other threads an arithmetic pipeline must concern itself with. Midgard arithmetic units can branch as they please and access memory as they please, an especially convenient scenario since tiling is already bounding the work area. At the same time however, this means that Midgard is going to be at its best in code with lots of branches, as code with minimal branching will otherwise map well to traditional TLP-centric designs.

Meanwhile on the matter of tiling, this brings up another point ARM likes to make about Midgard versus TLP-centric designs, which is that they believe that wavefronts and tile based renderers are poorly matched. At times the limited number of threads/pixels in tiles would certainly exacerbate any issues keeping wavefronts filled with threads, among other things.

With that said, from a theoretical perspective there’s not much more we can say on the matter other than to cover the execution model and its potential strengths and weaknesses. Other than that the proof is in the pudding: how well ARM’s unorthodox architecture performs is ultimately what determines whether ARM has made the best move in creating such a unique architecture, or whether there is a reason everyone else invests in thread level parallelism.

But for what it is worth, this design does go back to Utgard, and Utgard was (and continues to be) a solid performer for ARM. It has sold well over the years, which strongly suggests that a TLP-insensitive architecture can in fact map well to workloads, at least for OpenGL ES 2.0 graphics.

66 Comments

seanlumly - Thursday, July 3, 2014 - link

Given the "exotic" ILP and the 128-bit VLIW SIMD, the Mali looks like an impressive performer. If a Mali T760MP10 is indeed a part fit for smartphone-level power consumption, then a quick linear-scaling -- given GFXBench scores of a T760MP4 -- would imply that such a GPU is very competitive with something like the adreno 420 and certainly impressive if scaled up further to a tablet-level power consumption. If, however, an MP10 consumes roughly as much as a K1 or GX6650, then I'm very sceptical about its competitive performance.

I find the distribution of ALUs to memory units strange given mobile bandwidth limits. The Mali T760 in an 8-core configuration clocked at 600MHz will allow for 19.2 GB/s of load/store access (256-bits/clock: source ARM). This is quite high memory bandwidth, and an increase in GPU clocks or cores will likely yield idling load/store units doing nothing but taking up valuable die area. Operations with high varying access, cache reads, and tile read/writes will of course make good use of these additional units, but it still seems like overkill on all but very memory-access heavy apps. ARM would know best, though I'm suspicious that so many load/store units are needed for common workloads. I would guess that in common scenarios, bandwidth to main memory would be exhausted long before all of the memory units were fully utilized on a Mali T760 of high core count (eg. MP12-16).

Decoupling the load/stores and texture units as their own "core" may allow more appropriate scaling to fit the bandwidth of the target system. A system with an ultra-high resolution, could be endowed with more load/stores and/or texture units. A system with a lower resolution could use less and opt for more ALUs in the same space. This would be similar to big.LITTLE (different cores for different targeted workloads). In this scenario, the memory unit cores could be scaled independently of the ALU cores, perfectly tailored to the target system.

EdvardS - Friday, July 4, 2014 - link

Remember that there is a cache system between those units and the SoC memory controller. Bandwidth to the cores is quite different from bandwidth to the DDR memory.

seanlumly - Friday, July 4, 2014 - link

Indeed! Bandwidth to cache, tile memory, varying data, or textures would likely be more relevant with a tile-based renderer that often exploits spatial locality when processing batches of pixels. This is especially true with modern screen-space effects that do multiple dependent reads per display pixel (eg. SSAO), but are strongly confined to buffer fragments surrounding the target pixel. Such situations would value having many LS/Tex units at little penalty.

But I do still like the idea of an independently scalable "memory" core (containing load/store/texture pipes) to complement a "math" core (containing ALUs). A high-performance system targeting a 720p display will likely consume far less bandwidth than one targeting a 4K display, and as such, it would be nice to trade LS/Tex units for more ALUs in such a case.

Such an arrangement may also give ARM more leeway when making predictions about a new architecture -- no doubt the Midgard arch was in development many years before it saw implementation in a retail product, which means that ARM would have had to guess trends (eg. resolution) far in advance to attain the right balance of on-chip units per core; independently scalable memory-cores would be more forgiving if the trends turned out not to match the initial predictions.

seanlumly - Friday, July 4, 2014 - link

Actually, I am starting to understand the motivation behind the ratio of ALUs to Memory units in a Midgard core. I notice in GFXBench 3.0 "Manhattan", that the Mali T760MP4 (Rockchip rk3288) performs incredibly well at 720p, but its performance drops off more than proportionately as the resolution increases. This may imply that in these higher than 720p scenarios, the 4-core variant of the GPU may not be able to keep up with the memory demands, as computation should scale very close to proportionally.

Thus the 1080p offscreen score for the Mali T760 MP4 in the GFXBench 3.0 (offline) database may be misleading as the MP4 may be a bit small for this resolution, and thus the performance may be low relative to its competition. A T760 MP8 would likely more than double the performance for a doubling of the resolution, pushing something like the Mali T760 MP8 well beyond the competition, at what I suspect are similar levels of power and die-size. I predict that a T760 MP8 would get slightly north of 16fps in GFXBench 3.0 (assuming adequate bandwidth to DDR). Even an MP6 variant of the GPU (as was the case with the T628 MP6 in the Exynos 5420) should put it more-or-less on-par with the competition!

The Mali performs even better in the GFXBench 2.7 "T-Rex" test, where a small 4-core Mali T760 MP4 surpasses the competition at 720p and even sub-720p resolutions in some instances! This is incredible. In this case, it seems that the test is more computation bound, as there is a more proportional scaling between performance and resolution.

I hope that future GPUs consider using the T760 in higher-core-count configurations. I still like the idea of a Memory-core, though I have little doubt that a Mali GPU of evenly matched size can go toe-to-toe with the competition.

Frenetic Pony - Friday, July 4, 2014 - link

Every time I read an overview of a SoC GPU I am so, so glad I don't do anything with mobile stuff. "We support tessellation! I mean, don't actually do it. Ever. But you know, it's supported."

kkb - Friday, July 4, 2014 - link

How come there is no comparison with intel GPUs like the ones in Baytrail?

darkich - Friday, July 4, 2014 - link

Because there is no comparison phrase.

That GPU is completely inferior compared to latest Mali, PowerVR and Adreno architectures

Krysto - Friday, July 4, 2014 - link

Word.

kkb - Monday, July 7, 2014 - link

well.. I don't really agree. Please look at the AT review from last week or so.. http://www.anandtech.com/show/8197/samsung-galaxy-...

MEMO pad is a baytrail product and definitely performs better than MALI devices.

darkich - Monday, July 7, 2014 - link

Get your facts and reading skills in order.

Firstly, the GPU in Memo Pad is definitely not definitely performing better than even the Mali T628, in fact those very tests show it trades blows with it, mostly due to much lower resolution screen.

Secondly, do you realize that the T760 is MUCH faster than T628?

You can see here that it is basically comparable to the Tegra K1 and even the intimidating Series 6XT doesn't trounce it.

Rest assured that any of these three, as well as the Adreno 420, is way above the ULP HD graphics chip