Samsung SSD 850 Pro (128GB, 256GB & 1TB) Review: Enter the 3D Era

by Kristian Vättö on July 1, 2014 10:00 AM EST

AnandTech Storage Bench 2013

Our Storage Bench 2013 focuses on worst-case multitasking and IO consistency. Like our earlier Storage Benches, the test is still based on application traces: we record all IO requests made to a test system, play them back on the drive under test, and run statistical analysis on the drive's responses. There are 49.8 million IO operations in total, with 1583.0GB of reads and 875.6GB of writes. For readability I am not including the full description of the test here, so make sure to read our Storage Bench 2013 introduction for the full details.
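As a rough sanity check on those totals, the quoted figures imply an average transfer size and a read/write mix. The sketch below is only back-of-the-envelope arithmetic; it assumes the quoted GB are decimal (10^9 bytes), which the text does not state, and the variable names are illustrative.

```python
# Totals quoted for the Destroyer trace.
total_ios = 49.8e6        # IO operations
read_gb = 1583.0          # GB read
write_gb = 875.6          # GB written

# Assumption: 1 GB = 10^9 bytes (the article does not say decimal or binary).
BYTES_PER_GB = 1e9

total_gb = read_gb + write_gb
avg_io_kb = total_gb * BYTES_PER_GB / total_ios / 1e3   # average size per IO, in KB
read_share = read_gb / total_gb                          # fraction of bytes that are reads

print(f"total data moved: {total_gb:.1f} GB")
print(f"average IO size:  {avg_io_kb:.1f} KB")
print(f"read share:       {read_share:.1%}")
```

Under that assumption the trace averages a little under 50 KB per IO, and roughly 64% of the bytes are reads.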

AnandTech Storage Bench 2013 - The Destroyer

| Workload | Description | Applications Used |
| --- | --- | --- |
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

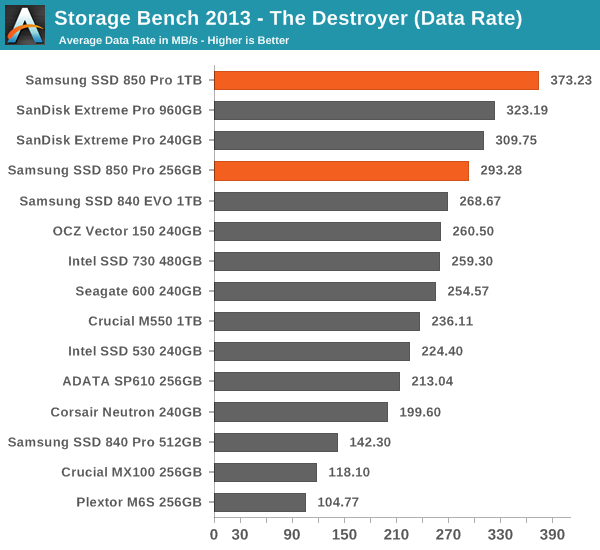

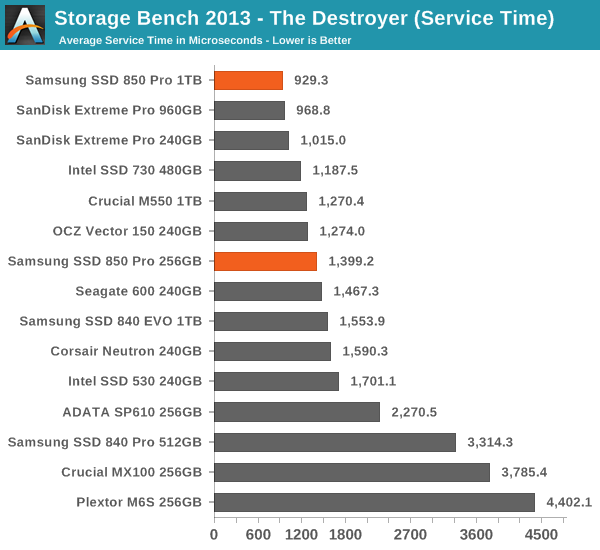

We are reporting two primary metrics with the Destroyer: average data rate in MB/s and average service time in microseconds. The former gives you an idea of the throughput of the drive during the time that it was running the test workload, which can be a very good indication of overall performance. What average data rate does not capture well is the response time of very bursty (read: high queue depth) IO. By reporting average service time, we weight latency for queued IOs heavily. You'll note that this is a metric we have been reporting in our enterprise benchmarks for a while now. With the client tests maturing, the time was right for a little convergence.
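To illustrate why the two metrics can diverge, here is a minimal sketch of how they could be computed from a replayed trace. The exact definitions used by our tools are not spelled out here, so this assumes data rate is total bytes over the wall-clock span of the trace and service time is completion time minus issue time per IO; the `IO` record and both function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class IO:
    issue_us: float      # when the request was issued, in microseconds
    complete_us: float   # when the request completed, in microseconds
    size_bytes: int      # bytes transferred

def average_data_rate_mbps(ios):
    """Total bytes divided by the wall-clock span of the trace, in MB/s."""
    start = min(io.issue_us for io in ios)
    end = max(io.complete_us for io in ios)
    total_bytes = sum(io.size_bytes for io in ios)
    return total_bytes / (end - start)   # bytes per microsecond equals MB/s

def average_service_time_us(ios):
    """Mean of (completion - issue) over all IOs, so time spent queued counts."""
    return sum(io.complete_us - io.issue_us for io in ios) / len(ios)

# Four 4KB requests, each needing 100 us of device time.
# Serial: each request is issued only after the previous one completes.
serial = [IO(i * 100.0, (i + 1) * 100.0, 4096) for i in range(4)]
# Bursty: all four are issued at once and drain one after another.
bursty = [IO(0.0, (i + 1) * 100.0, 4096) for i in range(4)]

for name, trace in (("serial", serial), ("bursty", bursty)):
    print(name, average_data_rate_mbps(trace), average_service_time_us(trace))
```

Both toy traces move 16,384 bytes in 400 µs, so the average data rate is identical (about 41 MB/s), while the average service time rises from 100 µs to 250 µs once the requests queue up behind each other.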

Thanks to its excellent IO consistency, the 850 Pro dominates our 2013 Storage Bench. At the 1TB capacity point, the 850 Pro is over 15% faster than any other drive in average data rate. That is huge because the 850 Pro has less over-provisioning than most of today's high-end drives, and the 2013 Storage Bench tends to reward drives with more over-provisioning because it essentially pushes drives to steady-state. The 256GB model does not do as well as the 1TB one, but it is still one of the fastest drives in its class. I wonder if the smaller amount of over-provisioning is the reason, or perhaps the Extreme Pro is just so well optimized for mixed workloads.

160 Comments


TrackSmart - Tuesday, July 1, 2014 - link

I second this. The AnandTech SSD tests were designed so that we could tell the difference between drives that are all so fast that there is no way to tell them apart in ordinary usage scenarios. I see the value of testing the theoretical performance of drives as manufacturers push the technological limits.

That said, at the end of the day user experience is what matters. I agree with emn13 that the "light workload" test is already more strenuous than anything the average user is likely to do, and looking at the chart, we see that almost every drive is within a range of ~280 to ~380 MB/s. I'm guessing that the range in performance gets even narrower for "real world" workloads.

So keep up the innovative SSD testing, but be sure to put these theoretical performance gains into a real-world context when you get to the Conclusions section of these articles. Not everyone will benefit from these theoretical increases in performance.

hojnikb - Tuesday, July 1, 2014 - link

Is Samsung planning on doing TLC-based V-NAND anytime soon?

It would be great for a mainstream drive, since endurance would be higher (due to the older node) and speeds would probably also go up (so no need for gimmicks like TurboWrite).

Or is it not mature enough to scale down to TLC?

artifex - Tuesday, July 1, 2014 - link

You had me at the 10-year warranty. I don't mind the slight premium if I'm not buying another one midway through the cycle. Sure, it will be obsolete well before it dies, but that term signals Samsung is really confident about their reliability.

Gigaplex - Tuesday, July 1, 2014 - link

Since it's twice the price of competition like the MX100, you're better off replacing midway through the cycle.

Arnulf - Tuesday, July 1, 2014 - link

I must have missed this in the article - are the V-NAND cells used in the 850 Pro drives 2 or 3 bits per cell? I got the "larger lithography improves endurance" part, I'm just wondering whether they opted for the more conservative option (MLC) there as well.

extide - Tuesday, July 1, 2014 - link

These are MLC, or 2 bits per cell.

It would be interesting if the non-Pro 850 comes out with TLC V-NAND!

himem.sys - Tuesday, July 1, 2014 - link

Heh, we are waiting for tests of the 850 Pro vs. the 840 Pro, because there are no big differences "on paper".

sirvival - Tuesday, July 1, 2014 - link

Hi, one question:

In the review, the idle power consumption for e.g. the 128GB 850 is 35 mW.

I wanted to compare that to my Samsung 470, so I went to Bench and selected the drives for comparison.

There it says that the 850 uses 0.29 W.

So how come there is a difference?

KAlmquist - Tuesday, July 1, 2014 - link

Anandtech Bench has four SSD power numbers:

- SSD Slumber Power (HIPM+DIPM)
- Drive Power Consumption - Idle
- Drive Power Consumption - Sequential Write
- Drive Power Consumption - Random Write

The confusing things are that (1) the review only listed slumber power, not idle power, and (2) Bench lists both numbers but doesn't place the slumber power next to the other power values.

mutantmagnet - Tuesday, July 1, 2014 - link

I also find the lack of power-loss protection to be a big negative for this drive. Until ReFS in Windows has all the features that you would get in Linux, this is going to be an important feature for anyone who values data integrity. Even after that happens, it still might be very important.