Samsung SSD 850 Pro (128GB, 256GB & 1TB) Review: Enter the 3D Era

by Kristian Vättö on July 1, 2014 10:00 AM EST

Performance Consistency

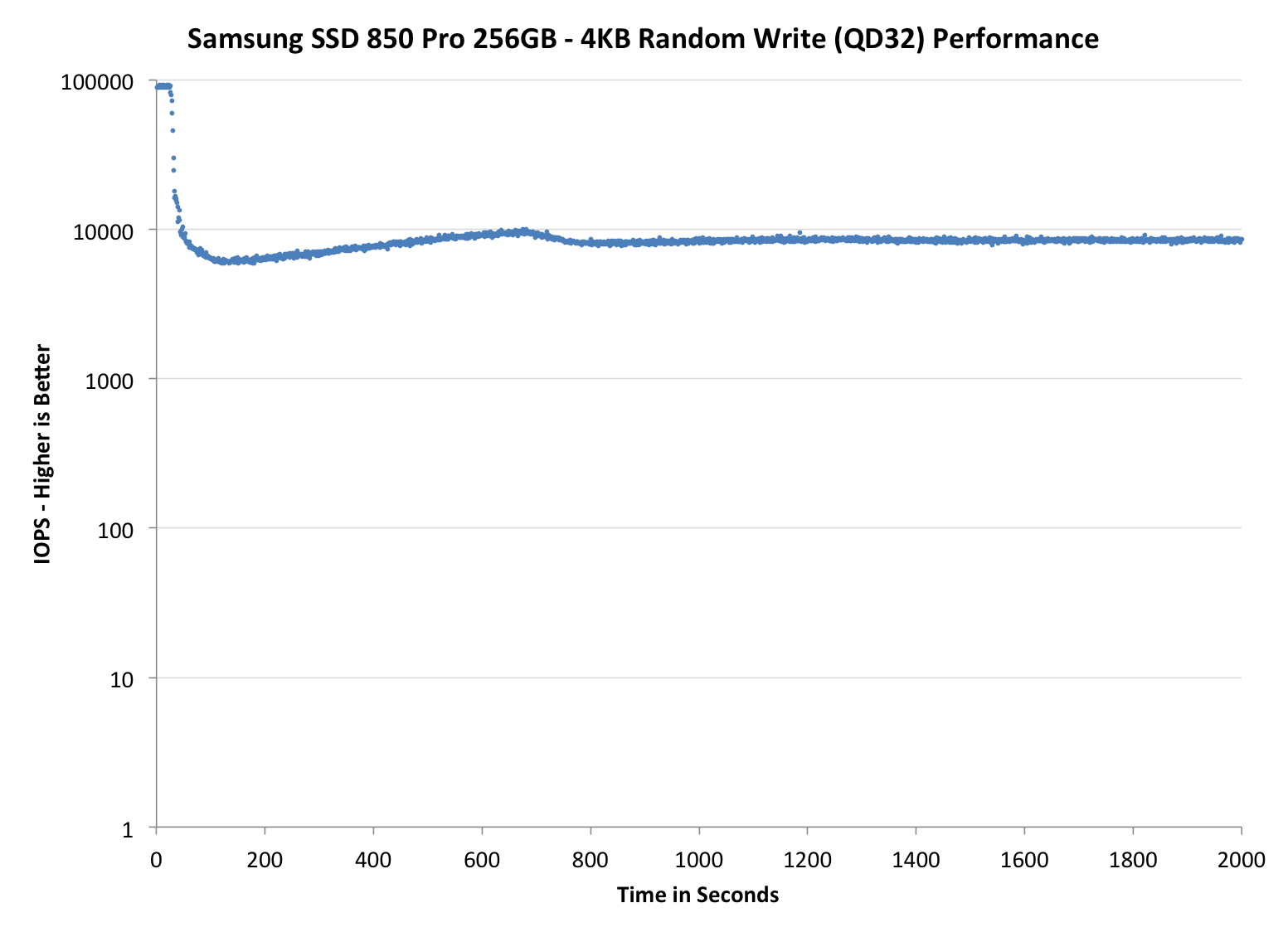

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. The reason we don’t have consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly impacts the user experience, as inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure-erased SSD with sequential data to ensure that all user-accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test is run for just over half an hour and we record instantaneous IOPS every second.

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
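As a rough illustration of what limiting the LBA range means in practice, the Python sketch below computes how many LBAs stay addressable for a given over-provisioning level. It assumes that over-provisioning is expressed as the share of raw NAND hidden from the user, that a nominal 256GB drive carries 256GiB of raw NAND, and that logical sectors are 512 bytes. None of these figures are stated in this article, and the function names are hypothetical.

```python
GIB = 1024**3  # bytes in one gibibyte
GB = 1000**3   # bytes in one gigabyte
SECTOR = 512   # assumed logical sector size in bytes


def user_capacity_bytes(raw_nand_gib: float, op_fraction: float) -> int:
    """Bytes left for user data after hiding op_fraction of the raw NAND."""
    return int(raw_nand_gib * GIB * (1 - op_fraction))


def max_lba_count(raw_nand_gib: float, op_fraction: float, sector: int = SECTOR) -> int:
    """Number of logical sectors the workload may touch at this OP level."""
    return user_capacity_bytes(raw_nand_gib, op_fraction) // sector


def effective_op(raw_nand_gib: float, user_bytes: int) -> float:
    """Over-provisioning implied by a given user-visible capacity."""
    return 1 - user_bytes / (raw_nand_gib * GIB)


if __name__ == "__main__":
    raw = 256  # assumed raw NAND in GiB (hypothetical drive)
    for op in (0.12, 0.25):
        print(f"{op:.0%} OP -> {user_capacity_bytes(raw, op) / GB:.1f} GB, "
              f"{max_lba_count(raw, op)} LBAs")
    print(f"Full 256 GB user area -> {effective_op(raw, 256 * GB):.1%} OP")
```

Under these assumptions, exposing the full 256GB to the user works out to just under 7% over-provisioning, which lines up with the default level quoted for these drives.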

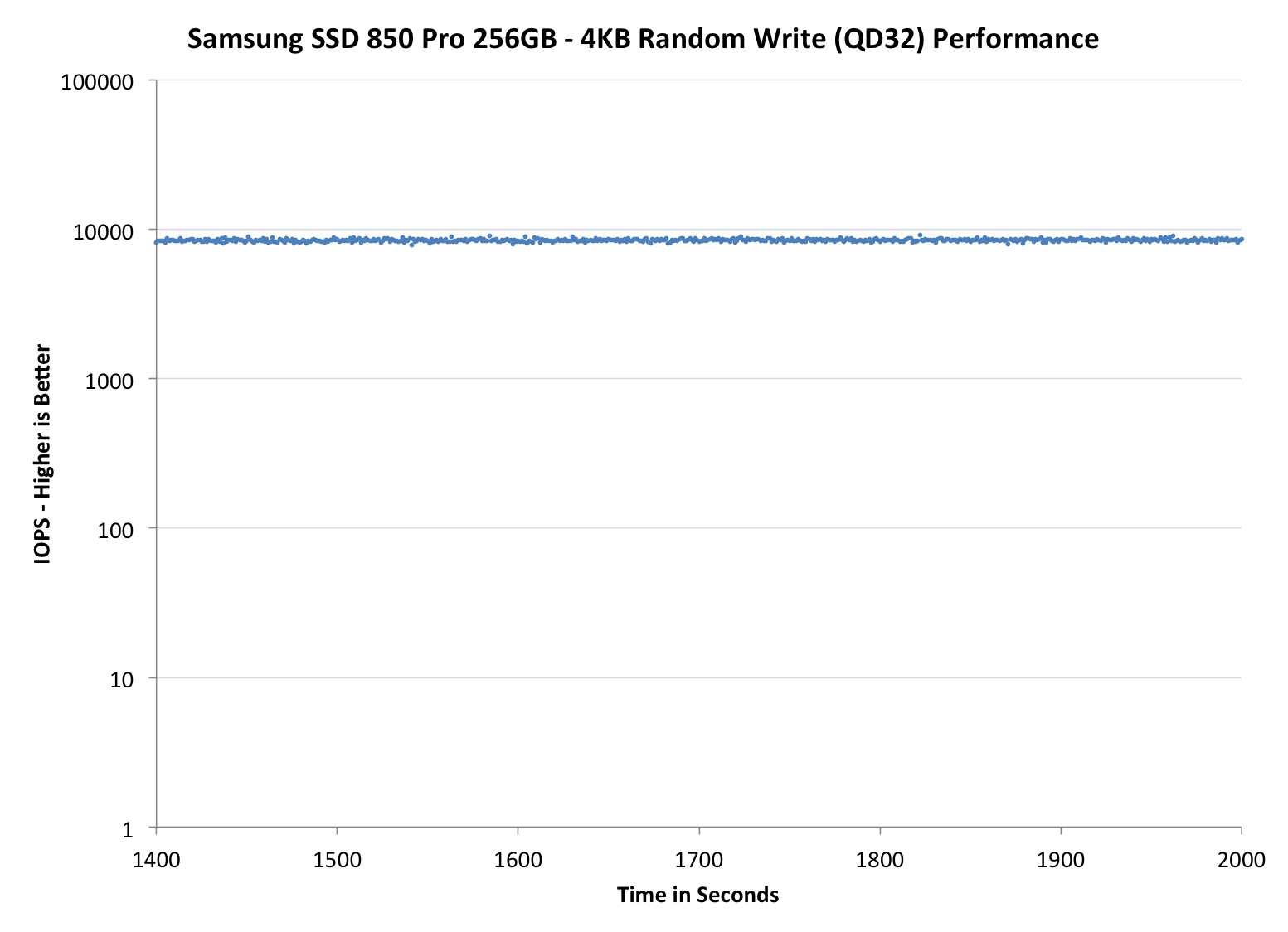

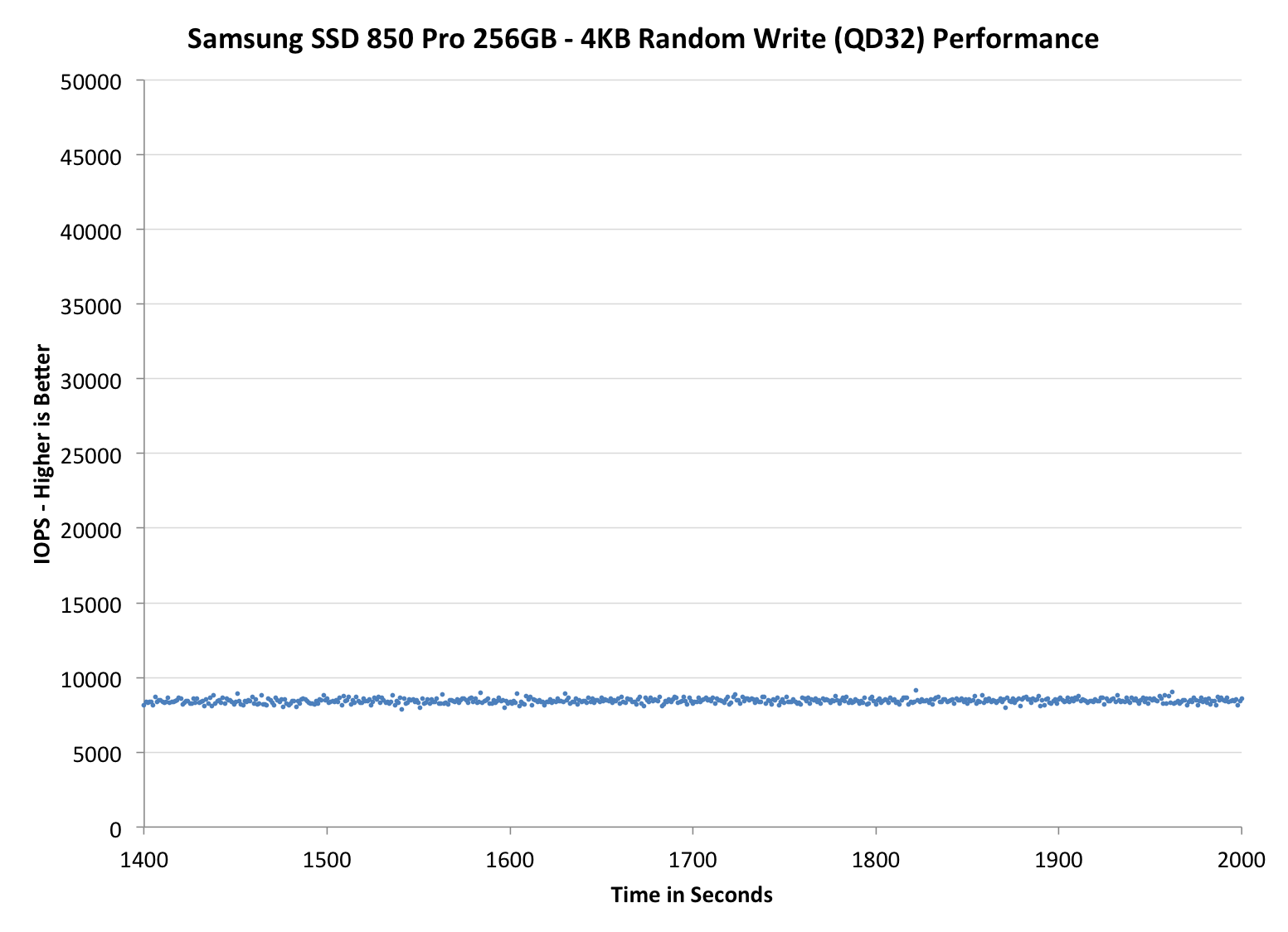

Each of the three graphs has its own purpose. The first one shows the whole duration of the test in log scale. The second and third zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second uses log scale for easy comparison, whereas the third uses linear scale for better visualization of differences between drives.
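For readers who log their own per-second IOPS, the steady-state window can also be summarized numerically. The sketch below is a minimal example, not the tooling used for these graphs: the function name, the choice of statistics (minimum, maximum, mean and coefficient of variation) and the synthetic trace are all assumptions made for illustration. Only the 1,400-second cutoff comes from the text above.

```python
from statistics import mean, pstdev


def steady_state_summary(iops_per_second, start_s=1400):
    """Summarize the samples recorded from start_s seconds onward.

    iops_per_second holds one IOPS sample per second, index 0 = first second.
    """
    window = iops_per_second[start_s:]
    if not window:
        raise ValueError("trace is shorter than the steady-state cutoff")
    avg = mean(window)
    return {
        "min": min(window),
        "max": max(window),
        "mean": avg,
        # Coefficient of variation: lower means a flatter, more consistent line.
        "cv": pstdev(window) / avg if avg else float("inf"),
    }


if __name__ == "__main__":
    # Synthetic trace (hypothetical): a fast fresh-drive phase, then a drive
    # that swings between 10K and 30K IOPS every other second.
    trace = [30000] * 1400 + [10000, 30000] * 300
    print(steady_state_summary(trace))
```

A drive that holds a steady line would show a coefficient of variation close to zero, while one that swings widely from second to second scores much higher.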

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.

Graph data sets: Samsung SSD 850 Pro, Samsung SSD 840 Pro, SanDisk Extreme Pro, Intel SSD 730 and OCZ Vector 150, at 7%, 12% and 25% over-provisioning.

Wow, this is awesome. Even with the default 7% over-provisioning, the 850 Pro is pushing almost as many IOPS as the Extreme Pro with its 12% over-provisioning. When the over-provisioning is increased to the same 12% level, the 850 Pro is the leader without a doubt. Only the Vector 150 comes close, although it is nowhere near as consistent: its IOPS ranges between 10K and 30K, whereas the 850 Pro maintains a steady line.

When compared with the 840 Pro, the upgrade is tremendous. IO consistency was always the weak point of the 840 Pro, so it is great to see that Samsung has put a great deal of effort into fixing that in the 850 Pro. Part of the performance increase obviously comes from the use of V-NAND: with shorter program and erase latencies, garbage collection takes less time and more empty blocks are available, which raises steady-state performance.

Some of you may wonder about the odd capacities at 25% over-provisioning, but the reason is that I noticed an error in the old ones. Basically, the old 25% numbers were in gibibytes (i.e. 1024^3 bytes) whereas the other capacities have always been in gigabytes (1000^3 bytes). I decided to unify the capacities and now they are all reported in gigabytes. The actual testing and over-provisioning levels have not changed; it is simply a matter of how the capacities are represented.
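The gap between the two units is easy to check. The snippet below converts a gibibyte figure to gigabytes. The 192GiB input is a hypothetical example value (75% of an assumed 256GiB of raw NAND), not a number taken from this article.

```python
GIB = 1024**3  # bytes in one gibibyte
GB = 1000**3   # bytes in one gigabyte


def gib_to_gb(gib: float) -> float:
    """Express a capacity given in gibibytes as gigabytes."""
    return gib * GIB / GB


if __name__ == "__main__":
    # Hypothetical capacity: the same number of bytes, labeled two ways.
    print(round(gib_to_gb(192), 2))  # prints 206.16
```

The same number of bytes reads about 7% larger in gigabytes than in gibibytes, which is why the relabeled capacities look unfamiliar even though nothing about the test changed.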


160 Comments


Squuiid - Saturday, March 14, 2015 - link

Plus, the MX100 reliability is horrible. Just google MX100 BSOD, disappearing drive.

I have 2x MX100 512GB SSDs and I recommend you don't buy one, no matter how cheap they are.

nightauthor - Tuesday, July 1, 2014 - link

For business purposes, I would rather pay twice as much and get a 10 year warranty vs the 3 year supplied by Crucial. Though, for my daily, I would probably go with the Crucial.

TheWrongChristian - Wednesday, July 2, 2014 - link

No current SATA drives push low queue depth random IOs to the point of saturating SATA II, let alone SATA III.

At high queue depths, perhaps. But then, that is not a typical workload for most users, desktop or server.

Plus, it's a new drive, prices will come down.

jwcalla - Monday, June 30, 2014 - link

Unless they're doing 5% OP the capacities are kinda... off.

melgross - Monday, June 30, 2014 - link

I think there's a slight misunderstanding of manufacturing cost. While the die size may be the same, or even smaller than a competing technology, the 32 level chip does cost more to make per area. There are more masks, more layers, more etching and washing cycles, and more chance of defects.

Right now, I do see why the cost is higher. I can only assume that as this technology progresses, that cost will drop per area. But it will always remain higher than an SLC, MLC or TLC chip.

So there is a balance here.

Kristian Vättö - Tuesday, July 1, 2014 - link

You are correct. I did mention yield and equipment cost in the final paragraph but I figured I won't go into detail about masks and etching since those would have required an in-depth explanation of how NAND is manufactured :)

R0H1T - Tuesday, July 1, 2014 - link

It would be great if Anand or you do a writeup on 3d NAND & deal with the specific pros & cons of it as compared to traditional 2d NAND & if possible include something related to manufacturing processes of these & how they're different OR more/less expensive, certainly as in case of V-NAND?

MrSpadge - Tuesday, July 1, 2014 - link

You wouldn't need too much detail - just saying that the number of process steps increases by probably around an order of magnitude should make this pretty clear.

frenchy_2001 - Tuesday, July 1, 2014 - link

It is probably more than that, as Samsung is currently manufacturing 32 layers of cells. Each layer requires multiple operations (deposit, etching, washing...). Their biggest advantage comes from regressing to 40nm: at that technology, each operation is *MUCH* cheaper than the equivalent one at 1X pitch (15~19nm).

So, total cost is an unknown, but should be very competitive, after recovering the initial R&D investment.

Spatty - Tuesday, July 1, 2014 - link

And not to mention 3D NAND is still basically bleeding edge. It's still at the stage where a new DDR generation arrives: much higher costs than current gen.