Samsung SSD 850 Pro (128GB, 256GB & 1TB) Review: Enter the 3D Era

by Kristian Vättö on July 1, 2014 10:00 AM EST

3D NAND in Numbers: Is it Economical?

As with all new semiconductor technologies, the one big question is whether it is economical. There are a ton of memory technologies that have better characteristics than 2D NAND (MRAM and ReRAM to name a couple) but none of them is currently scalable enough to challenge NAND in cost. IMFT's 16nm node is the smallest node shipping in volume, so let's compare Samsung's second generation V-NAND to that to see how it stacks up.

I am basing my analysis on Andrew Walker's blog post from 3DInCites. He is a respected analyst in 3D technologies and his blog post is based on Samsung's and Micron's presentations at the 2014 International Solid-State Circuits Conference, so the data should be as accurate as it can be.

Update 7/8: I was able to find some more accurate data regarding the die size, so I've updated this section with the new data.

| | Samsung 2nd Gen V-NAND | Micron 16nm NAND |
| --- | --- | --- |
| Process Node | 40nm | 16nm |
| # of Layers | 32 | - |
| Die Capacity | 86Gbit | 128Gbit |
| Die Size | 95.4mm² | 173mm² |
| Cell Size | 40,300nm² | 1,312nm² |

The only downside of Walker's data is that it only covers Samsung's first generation V-NAND, which was a 24-layer design with a die size of 133mm² and capacity of 128Gbit. Fortunately, the second generation V-NAND is using the same 40nm node, so the only substantial difference between the first and second generation is the number of layers. The 86Gbit die capacity is certainly a bit odd, but it actually makes sense as it is simply the first generation die cut in half with the added layers [(128Gbit / 2) * (32 / 24)]. As such, it is relatively safe to assume that the other variables (cell size etc.) are the same as with the first generation, and that is what the table above is based on.

Update 7/8: Actually, the peripheral circuitry does not scale with the memory array, meaning that the die size is actually quite a bit larger than I originally thought.
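The scaling argument above can be checked with a few lines of Python. This is only a rough sketch: the function name and the "naive" die size (simply halving the first generation die, periphery included) are my own illustration rather than figures from Walker or Samsung.

```python
# Figures quoted in the text for first generation V-NAND.
GEN1_CAPACITY_GBIT = 128
GEN1_LAYERS = 24
GEN1_DIE_MM2 = 133.0

# Second generation: same 40nm node, 32 layers, array footprint cut in half.
GEN2_LAYERS = 32
GEN2_DIE_MM2 = 95.4  # updated die size from the table


def scaled_capacity_gbit(base_gbit, base_layers, new_layers, area_fraction):
    """Capacity when the array footprint shrinks to area_fraction and the layer count changes."""
    return base_gbit * area_fraction * new_layers / base_layers


capacity = scaled_capacity_gbit(GEN1_CAPACITY_GBIT, GEN1_LAYERS, GEN2_LAYERS, 0.5)

# Hypothetical estimate: assumes the whole die, peripheral circuitry included, halves.
naive_die_mm2 = GEN1_DIE_MM2 * 0.5

print(f"Estimated capacity: {capacity:.1f} Gbit")
print(f"Naive die size: {naive_die_mm2:.1f} mm2 vs {GEN2_DIE_MM2} mm2 in the table")
```

The capacity lands just short of the 86Gbit figure, while the naive die size comes out well below the 95.4mm² in the table, which is what you would expect if the peripheral circuitry does not shrink along with the array.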

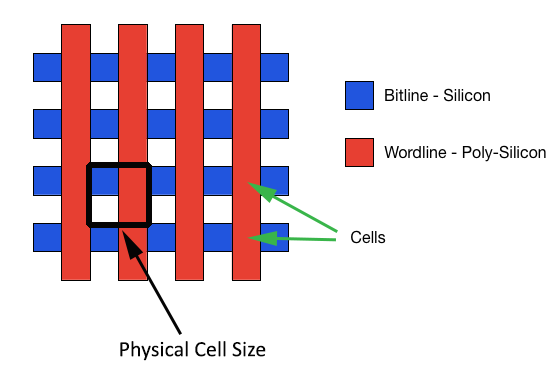

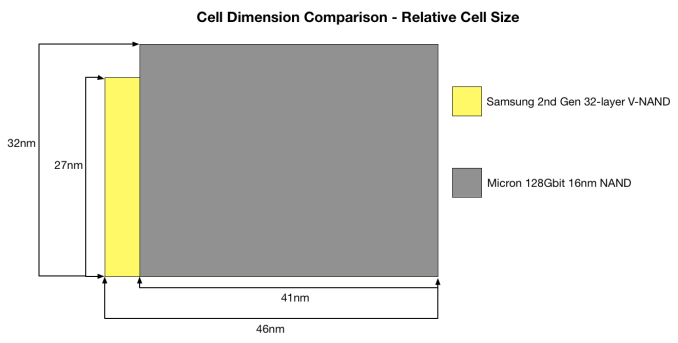

Before we go deeper into the density analysis, I want to explain how cell size is calculated. As you can see, the cell size figures look far too large next to the 40nm and 16nm node names: no matter how you multiply those two numbers, you will not arrive at the cell sizes in the table.

Oftentimes when cell size is discussed, only the actual size of the cell is taken into account, which leaves the distance between cells out of the equation. However, the so-called empty parts (they are not really empty, as you saw in the X-ray a couple of pages back) take up die area just like the cells do, meaning that they cannot be excluded. The appropriate way to measure cell size is from the inner corner of a cell to the outer corners of neighbouring cells in both X and Y axes. This is demonstrated by the black square in the graph above.

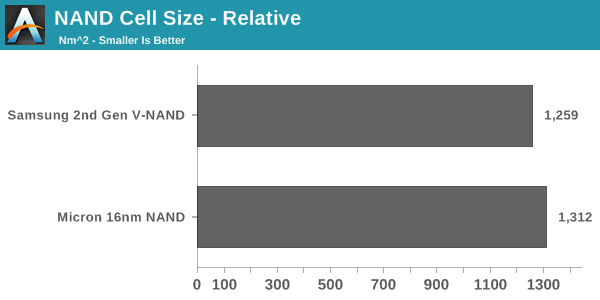

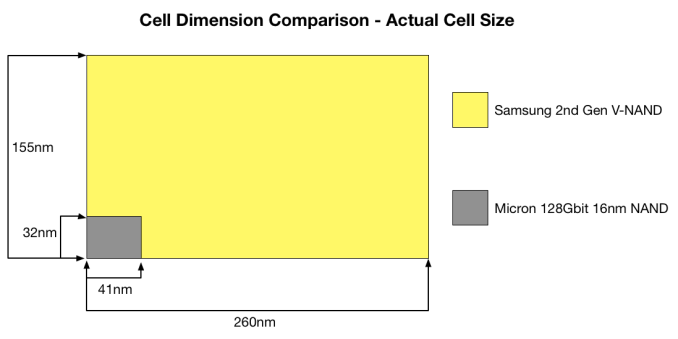

With the proper measurements, this is how V-NAND compares to 16nm NAND when looking at cell size alone (i.e. excluding how the layers impact density). Doesn't look too good, huh?

If you are wondering where the 16nm comes from, it is mostly just marketing. 16nm refers to the smallest length (or resolution as it is often called) in the die, meaning that it is the finest line that can be printed. In the case of NAND, that can either be the wordline or bitline, or the distance between them. With Micron's 16nm NAND, that is likely the length of the wordline and the distance between two wordlines as the two are 32nm when combined (i.e. 16nm each).

The actual cell size does not do justice to V-NAND, because the whole idea behind the move to 3D NAND is to increase the cell size and the distance between cells to get rid of the issues 2D NAND has. In the graph above, I took the number of layers into account, because you essentially need 32 2D NAND cells to achieve the same density as 32-layer V-NAND, and that changes the game totally.

The math behind that graph is just a couple of simple arithmetic equations. The actual cell area is 40,300nm² (155nm × 260nm), and the relative cell area is simply the actual cell area divided by the number of layers. That gives us 1,259nm² (40,300nm² / 32). To get the relative dimensions, the actual dimensions are divided by the square root of the number of layers (e.g. 155nm / √32 ≈ 27nm).

In the end, the relative cell size turns out to be smaller than Micron's 16nm NAND. The difference is not huge (~4%) but when the performance, power consumption and endurance advantages are taken into account, V-NAND is a clear winner.
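For anyone who wants to reproduce the relative cell size figures, here is a minimal Python sketch. It uses only the numbers quoted in this section; the variable names are my own.

```python
import math

# V-NAND cell dimensions and layer count as quoted in the text.
VNAND_CELL_X_NM = 155
VNAND_CELL_Y_NM = 260
VNAND_LAYERS = 32

# Micron 16nm cell area from the table.
MICRON_CELL_AREA_NM2 = 1312

# Actual footprint of one V-NAND cell, including the spacing around it.
actual_area = VNAND_CELL_X_NM * VNAND_CELL_Y_NM

# Each footprint holds one cell per layer, so divide the area by the layer count.
relative_area = actual_area / VNAND_LAYERS

# Area scales with the square of length, so each dimension shrinks by sqrt(layers).
scale = math.sqrt(VNAND_LAYERS)
relative_x = VNAND_CELL_X_NM / scale
relative_y = VNAND_CELL_Y_NM / scale

# Fraction by which the relative V-NAND cell is smaller than Micron's cell.
advantage = 1 - relative_area / MICRON_CELL_AREA_NM2

print(f"Actual cell area:   {actual_area:,} nm2")
print(f"Relative cell area: {relative_area:,.0f} nm2")
print(f"Relative size:      {relative_x:.0f} nm x {relative_y:.0f} nm")
print(f"Smaller than 16nm:  {advantage:.1%}")
```

Dividing both dimensions by the same factor keeps the cell's aspect ratio, which is why the square root of the layer count is used rather than splitting the reduction unevenly between X and Y.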

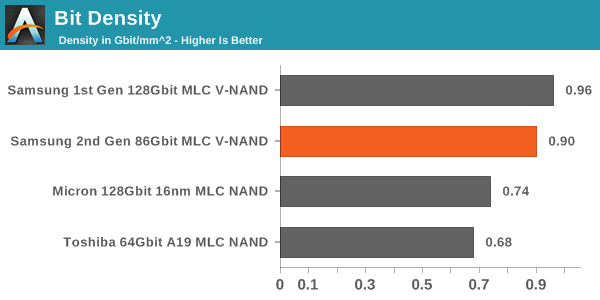

Another way to look at cost efficiency is the bit density. While cell size is a good measure, it does not take peripheral circuitry and ECC/spare bytes into account, which take up part of the die as well. Bit density is simply die capacity divided by die size, and it gives us a number for quick and easy comparison.

In this comparison, V-NAND is the leader without a doubt. The bit density is as much as 73% higher, resulting in a much more cost-efficient design. The reason the gap in bit density is so much larger than the gap in cell size is that 2D NAND requires a lot more die area for ECC bytes because it is more error-prone. With V-NAND there is less ECC needed thanks to its higher reliability. In addition, Micron's peripheral circuitry design consumes a relatively large share of the die, so I wonder how Toshiba's/SanDisk's 15nm stacks up against V-NAND as they are claiming to have a more efficient peripheral circuitry design.

Update 7/8: V-NAND is still denser than the latest 2D NAND nodes but the difference is not overwhelming.
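As a quick sanity check, bit density can be computed straight from the die capacity and die size figures in the table. The sketch below is my own helper, not anything from Walker's post. With the updated die sizes the lead comes out at roughly a fifth, which fits the 7/8 update; the 73% figure appears to predate the updated die size.

```python
# (die capacity in Gbit, die size in mm2) as listed in the table.
DIES = {
    "Samsung 2nd Gen V-NAND": (86, 95.4),
    "Micron 16nm NAND": (128, 173.0),
}


def bit_density(capacity_gbit, die_mm2):
    """Bit density in Gbit per mm2: die capacity divided by die size."""
    return capacity_gbit / die_mm2


densities = {name: bit_density(*specs) for name, specs in DIES.items()}

for name, density in densities.items():
    print(f"{name}: {density:.2f} Gbit/mm2")

# Relative lead of V-NAND over Micron's 16nm NAND.
lead = densities["Samsung 2nd Gen V-NAND"] / densities["Micron 16nm NAND"] - 1
print(f"V-NAND bit density lead: {lead:.0%}")
```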

All in all, there is a lot more than just cell area and bit density when it comes to cost efficiency. Yield and equipment cost are two major factors, which are both better for 2D NAND as it is a well known technology and some of the machinery can be reused when moving from one node to another.

160 Comments

Krakadoom - Saturday, September 27, 2014 - link

I bought the 850 Pro 500 GB version. The transfer speeds are around (and just under) 400 MB/s and the IOPS are always around 55-60k. Not impressed - considering returning it just due to the huge gap between the rated specs and actual performance. (Of course Samsung Magician over-reports the transfer speed by quite a large margin).

stealth_lee - Wednesday, October 1, 2014 - link

Someone just tipped me that V-NAND in Samsung 850 Pro is actually TLC not MLC. The first reason is the 86Gbit/die number is odd, if 850 Pro uses a TLC 128Gbit/die and emulates it to MLC then it would be 86Gbit/die, the numbers fit well.

The second reason is Chipworks confirmed it in the die shots:

http://bit.ly/YTVm9Z

http://bit.ly/1uByKcm

I'm just the messenger here, I'm not expert.

So...I was wondering is it possible to hack Samsung 850 Pro to get extra storage space in TLC?

wcatlan - Saturday, October 25, 2014 - link

Why isn't the lack of power loss protection a showstopper for any of these drives? I love the speed and reliability benefits under normal operation, but how can anyone get excited about a drive that can get corrupted in an instant due to power loss or computer freeze, where a hard shutdown is required? Seems that these drives are much more prone to massive data issues than HDDs under the same power fault conditions. I keep looking for a good answer, but it seems smart people are willing to look past this seemingly fatal Achilles heel. Not sure what I might be missing. Any thoughts?

futurefilm - Monday, December 1, 2014 - link

Today, Cyber Monday deals on Amazon, the 850 Pro 256 is going for $150. The 128 for $100. Get it now while it's hot.

saagar - Thursday, January 22, 2015 - link

Dear Kristian Vättö,

Fantastic review of the drives and the technology behind it. This is what readers like me expect to see on Anandtech. Thanks for breaking it down. Keep up the good work!

gsuburban - Wednesday, April 8, 2015 - link

As of April 8, 2015, the 850 Pro 256GB SSD can be had for about $144 if you look hard enough.

rockfella79 - Saturday, June 27, 2015 - link

I love my 850 Pro 128 GB SSD :)

KDT - Thursday, March 24, 2016 - link

Please update the endurance to 300TBW for 1TB model. This was my basis for buying this SSD. This is 2nd to Crucial MX200 (320TBW on 1TB model) in terms of endurance - for client/consumer SSDs.

BimmerInd - Sunday, June 26, 2016 - link

Samsung is using 40nm over Micron's 16nm. Doing the math implies that for every 2.5 16nm Micron nodes in planar section, Samsung only does 1 40nm node. If we scale vertically to 32 layers, then Micron (or others for that matter) still do only 2.5 nodes for every 32 nodes of Samsung. Which means for every 16nm node, Samsung provides 12.8 nodes. Meaning the density scaling factor for every 32 layer increments is a multiple of 12.8. Assuming the current die size for 32 layers to be 128Gbit, then the density advantage for 256Gbit is 12.8x2 times, 512Gbit is 12.8x4 times and for 1Tbit in 2017 should be 12.8x8 times for 256 layers of nodes stacked on top of each other. So the density advantage is approximately 102.4x (theoretically). Samsung can theoretically produce a 1Tbit die at a cost advantage/space advantage of nearly 100 times compared to planar NAND manufacturers. It is almost like you are able to earn 100 times the profit for the same die provided the cost per bit is scaled along without passing on the price advantage to the end users until other players enter 3D market.

The same is the case with Intel's 3D Cross Point Technology. They are having a new tech in their hands that is faster than NAND and closer to DRAM. So they are also planning to price it exactly between NAND and DRAM. We are already paying high costs to shift from platters to NAND and are going to pay even more to make a shift from NAND to 3D xPoint. I just wish I could jump a few years to the future, grab a high capacity NAND/xPoint drive for cheap and come back to the present and use it. Sigh!

BimmerInd - Sunday, June 26, 2016 - link

By the way this is just a rough calculation and is not to be taken literally.