ADATA Premier SP610 SSD (256GB & 512GB) Review: Say Hello to an SMI Controller

by Kristian Vättö on June 27, 2014 2:00 PM EST

Posted in: Storage, SSDs, ADATA, SP610, Silicon Motion

Performance Consistency

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. IO latency on SSDs is not consistent because all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly impacts the user experience, as inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure-erased SSD with sequential data to ensure that all user-accessible LBAs have data associated with them. Next, we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test is run for just over half an hour and we record instantaneous IOPS every second.

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
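The LBA-range arithmetic behind the added over-provisioning can be sketched as follows. This is a minimal illustration, not the tooling used for the review: the function name, the 512-byte sector size, and the decimal-gigabyte capacity are assumptions made for the example.

```python
SECTOR_BYTES = 512  # assumption: 512-byte logical sectors


def limited_lba_count(total_lbas: int, op_percent: int) -> int:
    """Number of LBAs the workload may touch so that op_percent of the
    user-visible capacity is never written and acts as extra spare area."""
    if not 0 <= op_percent < 100:
        raise ValueError("op_percent must be in the range [0, 100)")
    return total_lbas * (100 - op_percent) // 100


# Hypothetical 512GB drive (decimal gigabytes) with 25% added over-provisioning.
total_lbas = 512 * 10**9 // SECTOR_BYTES
limit = limited_lba_count(total_lbas, 25)
usable_gb = limit * SECTOR_BYTES // 10**9
print(limit, usable_gb)
```

Under these assumptions, a 512GB drive tested with 25% over-provisioning only ever sees writes to the first 384GB of its LBA space.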

Each of the three graphs has its own purpose. The first one shows the whole duration of the test in log scale. The second and third zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second one uses log scale for easy comparison whereas the third one uses linear scale for better visualization of differences between drives.
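To put numbers on what the steady-state graphs show, a per-second IOPS log can be summarized from t=1400s onward. The sketch below is one possible way to do that; the function name, the choice of statistics, and the synthetic trace are assumptions for illustration and are not measured data from any drive.

```python
from statistics import mean, pstdev

STEADY_STATE_START_S = 1400  # start of the steady-state window used in the graphs


def steady_state_stats(iops_per_second, start_s=STEADY_STATE_START_S):
    """Summarize a per-second IOPS trace from start_s onward."""
    window = iops_per_second[start_s:]
    if not window:
        raise ValueError("trace is shorter than the steady-state start time")
    avg = mean(window)
    return {
        "min": min(window),
        "max": max(window),
        "mean": avg,
        # Coefficient of variation: higher means less consistent performance.
        "cv": pstdev(window) / avg if avg else float("inf"),
    }


# Synthetic trace, made up for the example: a fast start, then a drive that
# alternates between a fast second and a slow second.
trace = [50_000] * 1400 + [2_000, 50_000] * 300
stats = steady_state_stats(trace)
print(stats)
```

A drive with tight consistency would show a coefficient of variation close to zero, while a trace that swings between fast and slow seconds pushes it toward one.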

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.

[Graph: 4KB random write IOPS over the whole duration of the test, log scale. Drives compared: ADATA SP610, ADATA SP920, JMicron JMF667H (Toshiba NAND), Samsung SSD 840 EVO mSATA, Crucial MX100. Configurations: default and 25% OP.]

Ouch, this doesn't look too promising. The SMI controller seems to be very aggressive when it comes to steady-state performance, meaning that as soon as there is an empty block it prioritizes host writes over internal garbage collection. The result is fairly inconsistent performance because for a second the drive is pushing over 50K IOPS but then it must do garbage collection to free up blocks, which results in the IOPS dropping to ~2,000. Even with added over-provisioning, the behavior continues, although now more IOs happen at a higher speed because the drive has to do less internal garbage collection to free up blocks.
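The oscillation described above can be reproduced with a deliberately crude toy model. This is not how the SM2246EN firmware works; it only illustrates a policy that serves host writes at full speed whenever a free block exists and otherwise stalls for garbage collection. The function name and the `gc_seconds_per_block` parameter are hypothetical, and only the ~50K and ~2,000 IOPS levels come from the results above.

```python
def simulate_greedy(seconds, gc_seconds_per_block=3,
                    burst_iops=50_000, gc_iops=2_000):
    """Toy per-second IOPS trace for a 'host writes first' policy."""
    free_blocks = 0   # start in steady state with no free blocks
    gc_progress = 0   # seconds of garbage collection done toward the next free block
    trace = []
    for _ in range(seconds):
        if free_blocks > 0:
            # A free block exists: spend it entirely on host writes.
            free_blocks -= 1
            trace.append(burst_iops)
        else:
            # No free block: host IO crawls while garbage collection runs.
            gc_progress += 1
            trace.append(gc_iops)
            if gc_progress == gc_seconds_per_block:
                free_blocks += 1
                gc_progress = 0
    return trace


default_trace = simulate_greedy(8, gc_seconds_per_block=3)
extra_op_trace = simulate_greedy(8, gc_seconds_per_block=1)  # less GC work per freed block
print(default_trace)
print(extra_op_trace)
```

In this model, lowering the garbage collection cost per freed block (a stand-in for added over-provisioning) does not remove the swings, but a larger share of seconds lands at the high IOPS level.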

This may have something to do with the fact that the SM2246EN controller only has a single core. Most controllers today are at least dual-core, which means that at least in one simple scenario one core can be dedicated to host operations while the other handles internal routines. Of course the utilization of cores is likely much more complex and manufacturers are not usually willing to share this information, but it would explain why the SP610 has such a large variance in performance.

As we are dealing with a budget mainstream drive, I am not going to be that harsh with the IO consistency. Most users are unlikely to put the drive under a heavy 4KB random write load anyway, so for light and moderate usage the drive should do just fine because ~2,000 IOPS at the lowest is not even that bad -- and it's still a large step ahead of any HDD.

[Graph: 4KB random write IOPS at the beginning of steady-state operation (t=1400s), log scale. Drives compared: ADATA SP610, ADATA SP920, JMicron JMF667H (Toshiba NAND), Samsung SSD 840 EVO mSATA, Crucial MX100. Configurations: default and 25% OP.]

Just to put things in perspective, however, even the over-provisioned "384GB" SP610 ends up offering worse consistency than the 128GB JMicron JMF667H SSD. Pricing will need to be very compelling if this drive is going to stand up against drives like the Crucial MX100.

[Graph: 4KB random write IOPS at the beginning of steady-state operation (t=1400s), linear scale. Drives compared: ADATA SP610, ADATA SP920, JMicron JMF667H (Toshiba NAND), Samsung SSD 840 EVO mSATA, Crucial MX100. Configurations: default and 25% OP.]

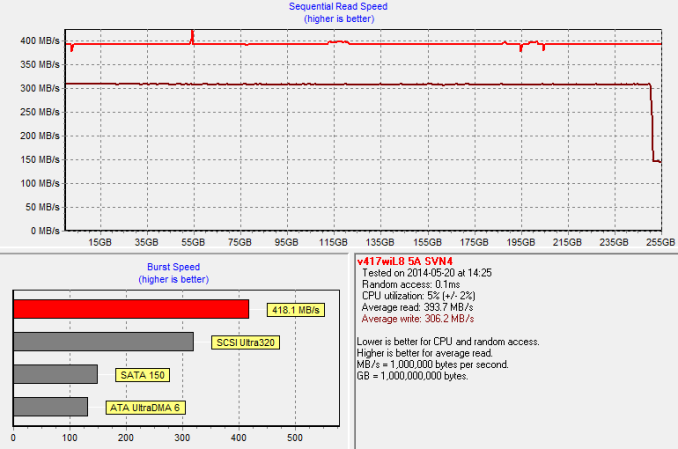

TRIM Validation

To test TRIM, I first filled all user-accessible LBAs with sequential data and then tortured the drive with 4KB random writes (100% LBA, QD=32) for 30 minutes. After the torture, I TRIM'ed the drive (quick format in Windows 7/8) and ran HD Tach to make sure TRIM is functional.

And it is.

24 Comments


skiboysteve - Sunday, June 29, 2014

Very very cool. Thanks for sharing

shodanshok - Sunday, June 29, 2014

Really interesting. How can we help in benchmarking?

smadhu - Sunday, June 29, 2014

We are trying to get a benchmarking setup on a Zynq ZedBoard card first. It is a partially simulated environment: the PCIe and the NAND flash are simulated using RAM, but the controller and the CPU are the actual IP. Most universities want this setup first since it assumes an infinite source and sink and lets you tune the protocol and the controller.

We will also simultaneously release using the Xilinx AC701 card. This is a PCIe card but has no built-in NAND modules. We are working with Xilinx to get a NAND module done ASAP. But even without it, at least the environment gets more real in the sense that now the IP and PCIe are actual IP and only the NAND is simulated.

Once proven on this card, we are creating a dedicated PCIe SSD card that will also be open sourced. That will be a full-fledged card with user-replaceable NAND modules and will also be cost optimized. Hopefully Asian vendors will clone those in large quantities to bring down cost. We neither charge any royalty nor do we apply for patents on any of our IP. Since the NAND modules are standard, we hope to create a third-party ecosystem for NAND modules, so you can upgrade your PCIe card when you run out of storage space or when new NAND tech is available.

This effort is actually kind of a trojan horse for our larger project, the SHAKTI open source CPU. We have about 6 classes/families of CPU being developed, ranging from Cortex-M3-level microcontrollers to Xeon-class 16-24 core server parts. HPC variants will have 512-bit SIMD with 64-100 cores (NoC fabric). All BSD-licensed open source, of course. We are running GCC on the cores now and wrapping up SoC integration for the lower-end cores. We hope to get Linux running by Christmas. The low-end target is the Digilent Nexys 4 FPGA board.

The cores are important for storage since they allow us to do the following:

- modify the ISA for storage-specific operations and remove instructions that are not needed for storage

- allow user defined code to run on the storage controllers

- add functional units for database acceleration

All SoC integration is via the AXI framework, so vendors can easily use this IP without retraining their engineers. We are not alone in building such cores; Cambridge just released their MIPS-compatible secure CPU.

see beri-cpu.org.

UCB will also shortly release its full blown cores.

Somebody asked me why we did such massive open source HW IP without expecting monetary returns. My answer was simple: I could either build a billion dollar startup or remove a few billion dollars from the IP market! I chose the latter!

Beagus - Monday, June 30, 2014

Page one Table MB/GB/TB. As always - Good work