Crucial MX100 (256GB & 512GB) Review

by Kristian Vättö on June 2, 2014 3:00 PM EST

Performance Consistency

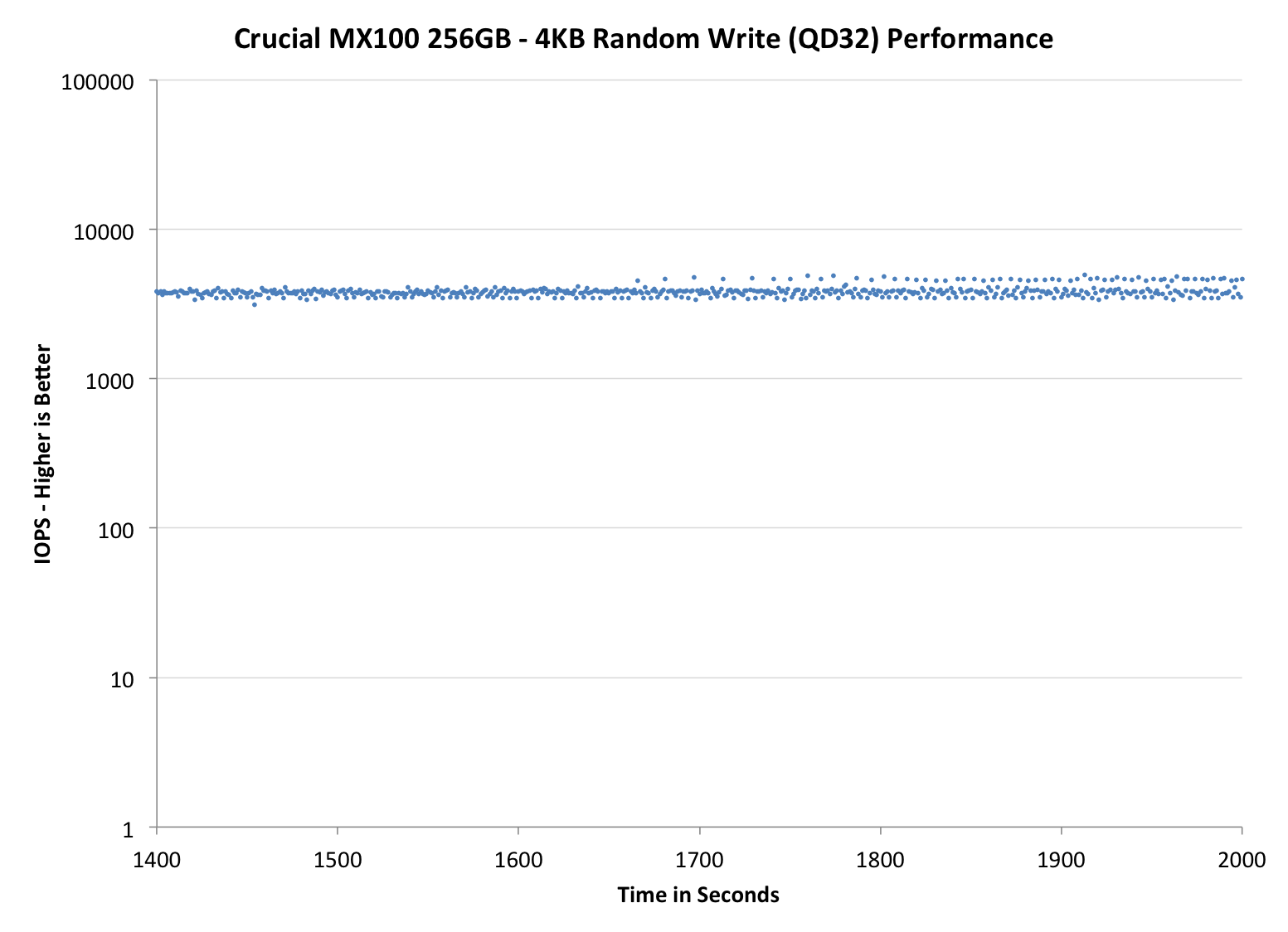

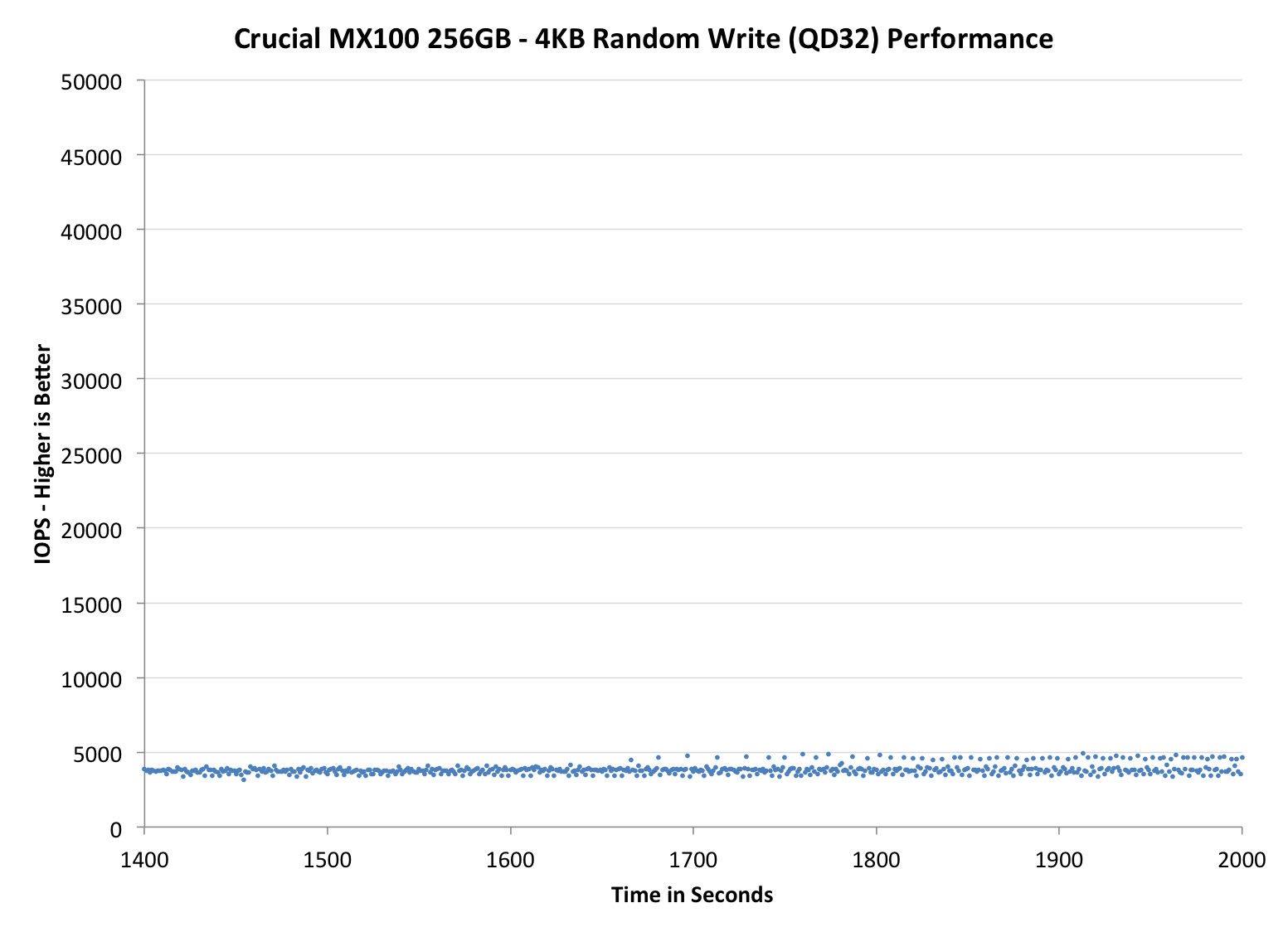

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. IO latency on SSDs is not consistent because every controller inevitably has to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly impacts the user experience, as inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure-erased SSD with sequential data to ensure that all user-accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test is run for just over half an hour and we record instantaneous IOPS every second.
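As a rough illustration of how a per-second IOPS log from this kind of test could be summarized, here is a minimal Python sketch. The function name `summarize_iops`, the choice of statistics, and the sample values are all assumptions made for illustration; the 1400 second cutoff is simply the steady-state start used for the zoomed graphs in this article. It is not the tooling used for the review.

```python
from statistics import mean, pstdev

def summarize_iops(samples, steady_start_s=1400):
    """Summarize per-second IOPS readings (index = elapsed seconds).

    Only samples from steady_start_s onward are considered, since the
    early part of the run still benefits from free blocks.
    """
    steady = samples[steady_start_s:]
    if not steady:
        raise ValueError("log is shorter than the steady-state cutoff")
    avg = mean(steady)
    return {
        "mean": avg,
        "min": min(steady),
        "max": max(steady),
        # Coefficient of variation: lower means more consistent IO.
        "cv": pstdev(steady) / avg if avg else float("inf"),
    }

# Synthetic values for illustration only, NOT measured data.
log = [80000] * 1400 + [25000, 5000] * 300
stats = summarize_iops(log)
print(stats)
```

A single number such as the coefficient of variation hides the shape of the curve, which is why the article relies on scatter plots instead; the summary is only useful as a quick sanity check.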

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
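To make the idea of limiting the LBA range concrete, the sketch below computes how many logical sectors a workload would be allowed to touch for a given amount of spare area. It assumes that spare area is expressed as a fraction of raw NAND capacity, that the drive has 256GiB of raw NAND, and that logical sectors are 512 bytes; none of these are stated in this section, and `limited_lba_count` is a hypothetical helper.

```python
SECTOR_BYTES = 512  # assumed logical sector size

def limited_lba_count(raw_nand_bytes, spare_fraction, sector_bytes=SECTOR_BYTES):
    """Return the number of LBAs to write so that spare_fraction of the
    raw NAND is never touched by the workload (assumed definition)."""
    if not 0 <= spare_fraction < 1:
        raise ValueError("spare_fraction must be in [0, 1)")
    usable_bytes = int(raw_nand_bytes * (1 - spare_fraction))
    return usable_bytes // sector_bytes

raw = 256 * 2**30                      # assumed 256GiB of raw NAND
lbas = limited_lba_count(raw, 0.25)    # 25% spare area
print(lbas, "sectors,", lbas * SECTOR_BYTES / 2**30, "GiB addressable")
```

Under these assumptions the workload would be restricted to the first 192GiB of the drive, leaving the rest as extra room for garbage collection.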

Each of the three graphs has its own purpose. The first one covers the whole duration of the test in log scale. The second and third ones zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second one uses log scale for easy comparison, whereas the third one uses linear scale for better visualization of differences between drives. Click the buttons below each graph to switch the source data.

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.

[Graph 1: IOPS over the full test duration, log scale. Selectable drives: Crucial MX100, Crucial M550, Crucial M500, SanDisk Extreme II, Samsung SSD 840 EVO mSATA. Configurations: Default, 25% Spare Area.]

IO consistency matches that of the M550. There is effectively no change at all, even though the 256GB M550 uses 64Gbit NAND and the MX100 uses 128Gbit NAND, which from a raw NAND performance standpoint should result in some difference due to reduced parallelism. I suspect this comes down to firmware design because, as we saw in the JMicron JMF667H review, the NAND itself can have a major impact on IO consistency due to differences in program and erase times. Either way, it's great to see that Crucial is able to keep performance the same despite the smaller (and probably slightly slower) NAND lithography.

With added over-provisioning there appears to be some change in consistency, though. While the 256GB M550 has odd up-and-down behavior, the 256GB MX100 has a thick line at 25K IOPS with drops ranging to as low as 5K IOPS. The 512GB MX100 exhibits behavior similar to the 256GB M550, so it looks like the garbage collection algorithms could be optimized individually for each capacity depending on the number of dies (the 256GB M550 and 512GB MX100 have the same number of dies, but each die in the MX100 has twice the capacity).
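The die-count comparison above is simple arithmetic, sketched below in Python. It assumes the raw NAND on each drive equals the nominal capacity in binary gigabytes (256GiB or 512GiB), which is an approximation made for illustration; `die_count` is a hypothetical helper, and the die densities are the ones quoted in the text.

```python
def die_count(raw_capacity_gib, die_density_gbit):
    """Number of NAND dies needed for a given raw capacity.

    raw_capacity_gib: raw NAND in GiB (assumed equal to nominal capacity)
    die_density_gbit: capacity of one die in Gbit (e.g. 64 or 128)
    """
    raw_gbit = raw_capacity_gib * 8  # 8 bits per byte
    dies, remainder = divmod(raw_gbit, die_density_gbit)
    if remainder:
        raise ValueError("capacity is not a whole number of dies")
    return dies

drives = {
    "256GB M550 (64Gbit dies)": die_count(256, 64),
    "256GB MX100 (128Gbit dies)": die_count(256, 128),
    "512GB MX100 (128Gbit dies)": die_count(512, 128),
}
for name, dies in drives.items():
    print(f"{name}: {dies} dies")
```

Under that assumption the 256GB M550 and the 512GB MX100 both come out at 32 dies, while the 256GB MX100 has only 16, which is consistent with the reduced parallelism discussed above.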

[Graph 2: IOPS from the beginning of steady-state operation (t=1400s), log scale. Selectable drives: Crucial MX100, Crucial M550, Crucial M500, SanDisk Extreme II, Samsung SSD 840 EVO mSATA. Configurations: Default, 25% Spare Area.]

[Graph 3: IOPS from the beginning of steady-state operation (t=1400s), linear scale. Selectable drives: Crucial MX100, Crucial M550, Crucial M500, SanDisk Extreme II, Samsung SSD 840 EVO mSATA. Configurations: Default, 25% Spare Area.]

50 Comments


sonny73n - Saturday, December 20, 2014

Samsung Evo comes to mind ;-)

MikeMurphy - Monday, June 2, 2014

330MB/s reads with zero random access penalty is ample for 99.99% of the users out there. There isn't much (or any) real world difference between this and something faster.

isa - Monday, June 2, 2014

Yes, I'd love to see a link to a $100 external SSD with 550MB/s at 95k IOPS.

UltraWide - Monday, June 2, 2014

Do you plan to include tests with encryption enabled in the future? Thank you.

Zoomer - Tuesday, June 3, 2014

And does it support bitlocker eDrive / OPAL, etc?

stickmansam - Monday, June 2, 2014

Dang those prices look good

Makes me wish I had waited to grab the MX100 instead of getting the SP920

Similar sustained performance but MX100 has better GC and consistency

nfriedly - Monday, June 2, 2014

Neither of the Samsung buttons work for the last chart on http://www.anandtech.com/show/8066/crucial-mx100-2...

The error in the firebug console is "TypeError: document.getElementById(...) is null"

JarredWalton - Monday, June 2, 2014

Fixed, thanks!

MikeMurphy - Monday, June 2, 2014

Why are 4k random reads so much slower than 4k random writes? Or, are the graphs mislabeled and mixed up?

khkha - Tuesday, June 3, 2014

I have just bought seagate 600 for my early 2011 mbp. Should I return the drive and buy this instead for 30gb space bump?

Thoughts anyone?