The Intel Haswell Refresh Review: Core i7-4790, i5-4690 and i3-4360 Tested

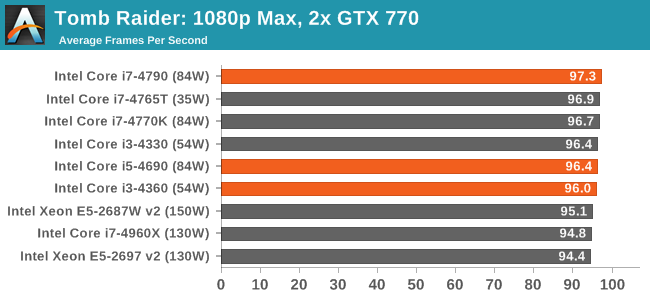

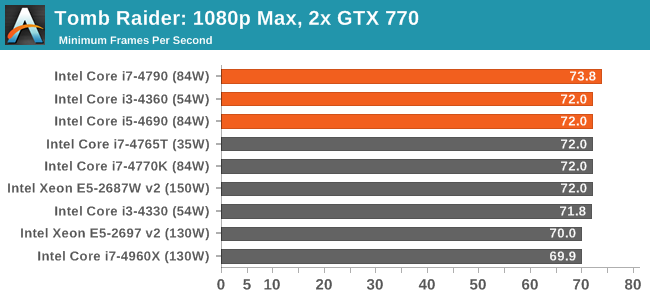

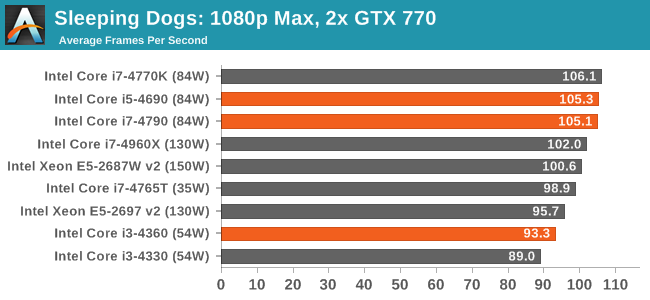

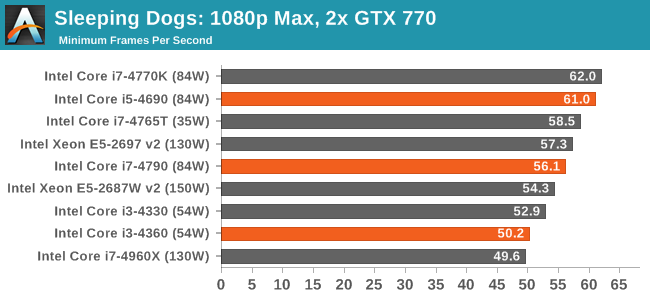

by Ian Cutress on May 11, 2014 3:01 AM EST

For our discrete GPU benchmarks, we have split them up into the different GPU configurations we have tested. We have access to both MSI GTX 770 Lightning GPUs and ASUS reference HD 7970s, for SLI and Crossfire respectively. These tests are all run at 1080p and maximum settings, reporting the average and minimum frame rates.

dGPU Benchmarks: 2x MSI GTX770 Lightning

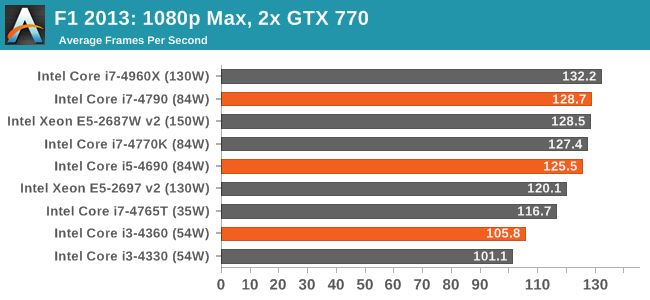

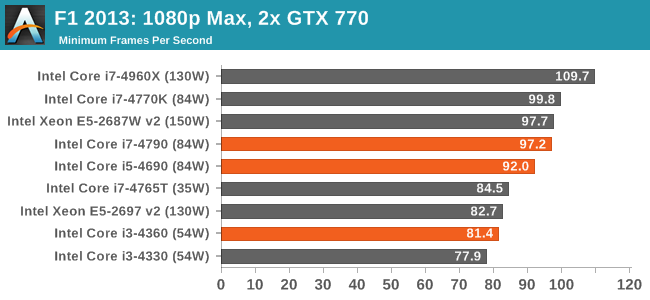

F1 2013

Despite the lack of scaling, moving to dual GPU puts a larger rift between the i3 and the other CPUs for average FPS in F1 2013.

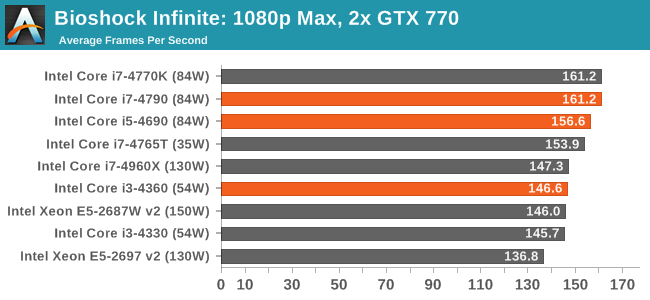

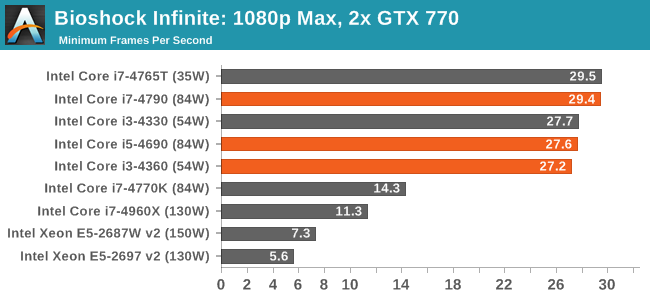

Bioshock Infinite

Tomb Raider

Sleeping Dogs

While the average FPS differs by ~10% between the i3 and the i5, the same 10 FPS gap appears in the minimum frame rates, where it amounts to a decline of closer to ~20%.
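The arithmetic behind that comparison can be sketched as follows. The baseline values used here (100 FPS average, 50 FPS minimum) are assumptions back-calculated from the percentages quoted in the text, not measured results read from the charts, and `percent_drop` is a hypothetical helper written only for this illustration.

```python
def percent_drop(baseline_fps: float, drop_fps: float) -> float:
    """Return an absolute FPS drop as a percentage of the baseline frame rate."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return 100.0 * drop_fps / baseline_fps


# Assumed baselines: a 10 FPS gap that is ~10% of the average and ~20% of the
# minimum implies roughly 100 FPS average and 50 FPS minimum.
ASSUMED_AVERAGE_FPS = 100.0
ASSUMED_MINIMUM_FPS = 50.0
DROP_FPS = 10.0

average_drop = percent_drop(ASSUMED_AVERAGE_FPS, DROP_FPS)
minimum_drop = percent_drop(ASSUMED_MINIMUM_FPS, DROP_FPS)

print(f"average: {average_drop:.0f}% drop")
print(f"minimum: {minimum_drop:.0f}% drop")
```

The same absolute gap weighs twice as heavily on the minimum frame rate because the baseline it is measured against is half as large.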

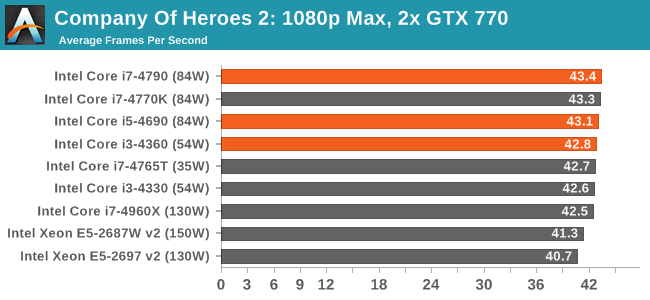

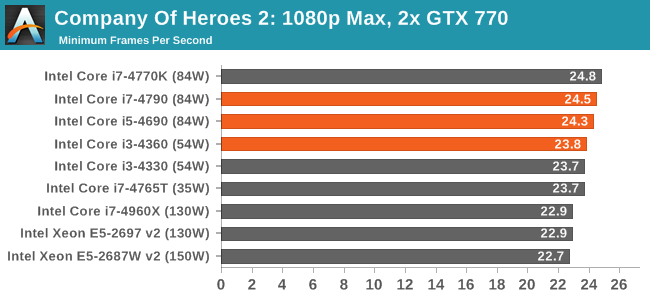

Company of Heroes 2

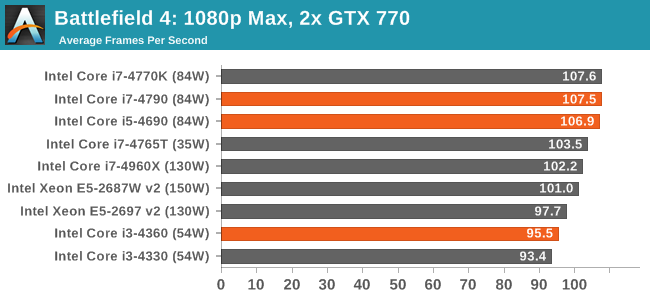

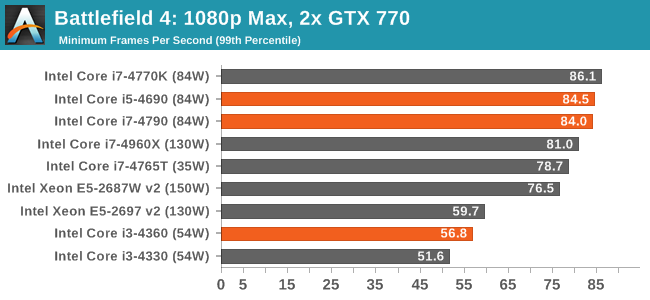

Battlefield 4

When you start adding the GPU horsepower, the i3 core count becomes a hindrance in minimum FPS values for BF4.

130 Comments

willis936 - Sunday, May 11, 2014 - link

Man, why are you reading AnandTech if you think redundant data collection is a waste of time? Verification of both results and of expectations in new products is valuable. Intel could call it a refresh after botching every wafer in the past six months and dump it into a new product line.

DanNeely - Sunday, May 11, 2014 - link

This is the locked, non-OC portion of the Haswell refresh. The new unlocked chips have a rumored ETA of early next month.

If you're not interested, go read something else instead. What you want isn't available yet.

Flunk - Monday, May 12, 2014 - link

Proving there is no credible difference is useful, maybe it will save some people a few bucks.

nutjob2 - Sunday, May 11, 2014 - link

Intel is chasing a dying market, i.e., those who are willing to pay for single threaded performance at any cost, and worry less about power consumption, in the desktop and server space.

These people fall into two broad categories:

First there are gamers with more money than sense who spend hundreds or thousands each year on the latest CPU/motherboard/GPU that delivers a 5%-10% increase in framerate. Also other people who feel they need the latest and greatest for whatever reason.

On these people Intel dumps their hottest parts since they're not very concerned about power consumption; water cooling is a badge of honor for these guys.

Then there are the corporates who are visited by HP/Dell/etc salesmen and are told they need big, big iron so they can virtualise all their servers to "save money". That of course means they've created a single point of failure for all those servers so they need a machine with redundant everything and huge density. No-one has the heart to tell them they still have a single point of failure.

These people don't care so much about power consumption as about the fact you can't cool or deliver power very well to multiple processors in a box, so Intel give them their somewhat cooler parts. Because most of the software they run is not very clever and largely single threaded, they stick to Intel.

The smart corporate money is not buying any of Intel's overpriced CPUs; instead they're sticking with their existing hardware and waiting for "cloud" providers to lower their prices. Why are they doing that? Because Intel is playing most of the above market for suckers, making them pay through the nose while they sell their best parts to Amazon, Google, Microsoft, IBM et al., who pay a fraction of what everyone else pays. Intel doesn't do this out of the kindness of their hearts but because they know that cloud providers will eventually replace many of their existing direct customers and because they don't care about single threaded performance (they're almost always using all their cores). Intel are milking their cash cows while they kiss up to the new big players, lest they start getting too friendly with AMD or ARM vendors who can make custom parts for them, just like the big players make their own motherboards, etc., shutting out people like HP and Dell.

willis936 - Sunday, May 11, 2014 - link

Adding an extra 20 to product numbers is "chasing"?

betam4x - Sunday, May 11, 2014 - link

Regarding your comments:

1) Gamers don't spend 'hundreds of thousands of dollars every year' on PC components. Top of the line PC hardware can be had for under 3 grand. Typically the users who buy this year's new hardware are the ones that skipped the past 1-2 generations of hardware.

2) Intel's parts aren't 'hot'. They are more power efficient than they've ever been. The performance per watt is among the highest in the industry.

3) Virtualizing does save money. Even if that server went up in flames, a backup of that VM is stored elsewhere so that it can be quickly brought back up on the backup server located in another rack. This means minutes of downtime instead of hours.

4) At my company we buy Intel CPUs every day. We upgrade all of our machines every 3 years and often have to buy new machines for new employees. Of course, we do other 'crazy' things as well, like dual monitors, ample workspace, etc. Imagine that?

Gigaplex - Monday, May 12, 2014 - link

"Gamers don't spend 'hundreds of thousands of dollars every year' on PC components."

They said hundreds OR thousands, not "of".

rajod1 - Friday, May 30, 2014 - link

Gamers as a group spend millions on upgrades, not hundreds or thousands.

maximumGPU - Tuesday, May 13, 2014 - link

Upgrade every 3 years, and new machines for new employees? I'd like to apply for a job in your company, tired of XP and Pentium 4s in my current one.

YuLeven - Wednesday, May 14, 2014 - link

Know that feel. I use a Celeron D here.