Micron M500DC (480GB & 800GB) Review

by Kristian Vättö on April 22, 2014 2:35 PM EST

The Features

Micron calls its enterprise feature set eXtended Performance and Enhanced Reliability Technology, or just XPERT. It's a combination of hardware and software-level features that help to extend endurance and ensure data integrity at all times. Some elements of XPERT are present in client drives as well, while some are limited to Micron's enterprise SSDs.

ARM/OR

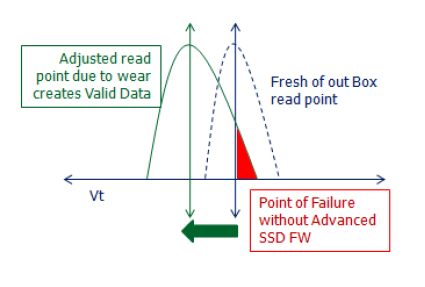

ARM/OR, short for Advanced Read Management/Optimized Read, is Micron's adaptive DSP technology (i.e. aDSP). The idea behind ARM/OR and aDSP in general is to monitor the NAND voltages in real time and make changes if necessary.

As NAND wears out, electrons get trapped inside the silicon oxide and floating gate. This changes the voltage required to read/program the cell (as the graph above shows) and eventually the cell would reach a point where it cannot be read/programmed using the original voltage. With ARM/OR the controller can adapt to the changes in read/program voltages and can continue to operate even after significant wear.
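The effect of a shifted read voltage can be illustrated with a toy model. This is only a sketch and not Micron's algorithm: the voltages are in arbitrary units, the helper names are hypothetical, and it assumes purely for illustration that wear shifts every cell's voltage upward by the same amount. The adaptive read simply places the threshold in the widest gap between the observed voltages.

```python
def read_bits(voltages, threshold):
    """Cells below the threshold read as 1 (erased), the rest as 0 (programmed)."""
    return [1 if v < threshold else 0 for v in voltages]


def adaptive_threshold(voltages):
    """Place the threshold in the middle of the widest gap between sorted voltages."""
    ordered = sorted(voltages)
    _, i = max((ordered[k + 1] - ordered[k], k) for k in range(len(ordered) - 1))
    return (ordered[i] + ordered[i + 1]) / 2


FIXED_THRESHOLD = 2.0  # hypothetical factory read voltage (arbitrary units)

# Fresh cells: erased cells sit near 1.0, programmed cells near 3.0.
fresh = [1.0, 3.0, 0.9, 3.1, 1.1, 2.9]
stored_bits = read_bits(fresh, FIXED_THRESHOLD)

# Assumed wear effect: every cell voltage drifts upward by 1.2.
worn = [v + 1.2 for v in fresh]

fixed_read = read_bits(worn, FIXED_THRESHOLD)               # fixed voltage misreads
adaptive_read = read_bits(worn, adaptive_threshold(worn))   # shifted voltage recovers

print("fixed threshold correct:   ", fixed_read == stored_bits)
print("adaptive threshold correct:", adaptive_read == stored_bits)
```

In this toy case the fixed threshold reads every worn cell as programmed, while the adjusted threshold returns the original bit pattern.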

RAIN

We've talked about RAIN (Redundant Array of Independent NAND) before as it is a feature Micron utilizes in all of its SSDs but I'll go through it briefly here. Essentially RAIN is a RAID 5 like structure that uses parity to protect against data loss. In the case of the M500DC, the stripe ratio is 11:1 for the 120GB, 240GB and 480GB models and 15:1 for the 800GB one, meaning that one page/block is reserved for parity for every 11 or 15 pages/blocks. The parity can then be used to recover data in case there is a block failure or the data gets corrupted.
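To make the parity idea concrete, here is a minimal sketch of RAID 5 style XOR parity over a stripe of pages. It assumes that "11:1" means 11 data pages plus 1 parity page, uses tiny 8-byte pages, and the function names are hypothetical. How Micron actually lays out stripes across dies is not described here.

```python
from functools import reduce


def xor_pages(pages):
    """Byte-wise XOR of equal-length pages."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*pages))


def build_stripe(data_pages):
    """Return the data pages followed by one parity page (XOR of all data pages)."""
    return list(data_pages) + [xor_pages(data_pages)]


def recover_page(stripe, lost_index):
    """Rebuild one missing page by XOR-ing every surviving page in the stripe."""
    survivors = [page for i, page in enumerate(stripe) if i != lost_index]
    return xor_pages(survivors)


# Assumed layout: 11 data pages + 1 parity page per stripe.
data = [bytes([i] * 8) for i in range(11)]
stripe = build_stripe(data)

# Pretend page 4 sits on a failed block and rebuild it from the rest.
rebuilt = recover_page(stripe, lost_index=4)
print("page 4 recovered:", rebuilt == data[4])
```

Under that same assumption, parity costs 1/12 (about 8.3%) of the stripe at 11:1 and 1/16 (6.25%) at 15:1. A single parity page can only rebuild one lost page per stripe.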

DataSAFE

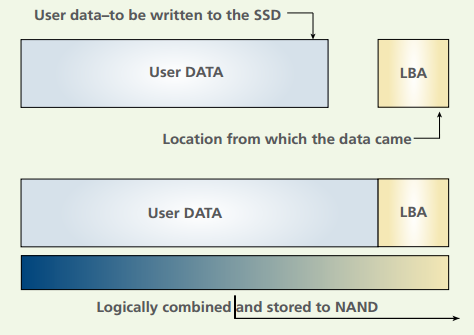

DataSAFE ensures that all data, read or written, is intact. When writing data, Micron not only writes the data from the host to the NAND but also the metadata associated with the data (i.e. its logical block address/LBA). When the host then requests the data to be read from the drive, the LBA of the data is first compared against the value in the L2P table (logical to physical -- Micron's name for the NAND mapping table) before sending the data to the host. This ensures that the data that is being read from the NAND is in fact the same data that the host requested. If the LBA values of the NAND and L2P table are different, then the drive will have to rely on ECC or RAIN to recover the correct data.
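The LBA check described above can be sketched as follows. This is a simplified model with hypothetical names (`ToyDrive`, `LbaMismatch`), not Micron's firmware: the "NAND" is a dictionary, and a mismatch simply raises an exception where a real drive would fall back to ECC or RAIN.

```python
class LbaMismatch(Exception):
    """Raised when the LBA stored with the data disagrees with the requested LBA."""


class ToyDrive:
    def __init__(self):
        self.l2p = {}        # logical block address -> physical page number
        self.nand = {}       # physical page number -> (embedded LBA, payload)
        self.next_page = 0

    def write(self, lba, payload):
        """Store the payload together with its LBA and update the L2P table."""
        page = self.next_page
        self.next_page += 1
        self.nand[page] = (lba, payload)
        self.l2p[lba] = page

    def read(self, lba):
        """Look up the page via L2P and verify the embedded LBA before returning data."""
        page = self.l2p[lba]
        stored_lba, payload = self.nand[page]
        if stored_lba != lba:
            raise LbaMismatch(f"requested LBA {lba}, page {page} holds LBA {stored_lba}")
        return payload


drive = ToyDrive()
drive.write(7, b"seven")
drive.write(9, b"nine")
print(drive.read(7))

# Simulate a corrupted mapping entry: LBA 7 now points at LBA 9's page.
drive.l2p[7] = drive.l2p[9]
try:
    drive.read(7)
except LbaMismatch as err:
    print("mismatch detected:", err)
```

Without the embedded LBA, the corrupted mapping would silently return the wrong block's data to the host.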

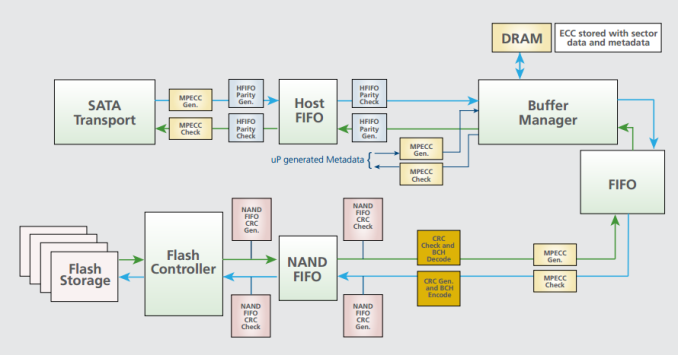

In addition to LBA embedding and read checking, DataSAFE provides data path protection from the SATA transport all the way to the NAND controller. While client drives only generate CRC and BCH error correction codes to detect errors, the enterprise drives add an additional memory correction ECC (MPECC) layer. MPECC is a 12-byte error-correcting code that follows the user data during its whole path. The difference between the client and enterprise solutions is that the client drives rely on the host to perform the actual error correction, whereas the MPECC layer can correct a single-bit error within the data path (i.e. before the data even hits the host).
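How a code can correct a single flipped bit without asking the sender to retransmit can be shown with a textbook Hamming(7,4) code. This is a stand-in chosen for brevity: the actual construction of the 12-byte MPECC is not described here, and the function names below are hypothetical.

```python
def hamming74_encode(nibble):
    """Encode 4 data bits into a 7-bit codeword (list of bits, positions 1..7)."""
    d = [(nibble >> i) & 1 for i in range(4)]
    code = [0] * 8                      # index 0 unused so indices match positions
    code[3], code[5], code[6], code[7] = d
    code[1] = code[3] ^ code[5] ^ code[7]   # parity over positions 1, 3, 5, 7
    code[2] = code[3] ^ code[6] ^ code[7]   # parity over positions 2, 3, 6, 7
    code[4] = code[5] ^ code[6] ^ code[7]   # parity over positions 4, 5, 6, 7
    return code[1:]


def hamming74_decode(bits):
    """Return (nibble, error_position); error_position is 0 if no error was seen."""
    code = [0] + list(bits)
    syndrome = 0
    for pos in range(1, 8):
        if code[pos]:
            syndrome ^= pos             # XOR of set positions is 0 for a valid codeword
    if syndrome:
        code[syndrome] ^= 1             # the syndrome names the flipped position
    d = [code[3], code[5], code[6], code[7]]
    return sum(bit << i for i, bit in enumerate(d)), syndrome


codeword = hamming74_encode(0b1011)
damaged = list(codeword)
damaged[4] ^= 1                         # flip position 5 somewhere in the data path

value, position = hamming74_decode(damaged)
print("recovered:", value == 0b1011, "flipped position:", position)
```

The correction happens entirely on the receiving side, which is the property the article attributes to the MPECC layer. Like any single-error-correcting code, this one miscorrects if two bits flip in the same codeword.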

Power Loss Protection

While both Micron's client and enterprise SSDs feature power loss protection, there is a difference between them. The enterprise drives feature more rigid tantalum capacitors that provide a higher capacitance compared to the standard capacitors used in the client drives. The higher capacitance ensures that absolutely no data is lost during a power loss, whereas there is still a small risk of data loss in client drives. I believe the difference is that the capacitors in client drives only provide enough capacitance to flush the NAND mapping table (or L2P table as Micron calls it) to the NAND, while the enterprise solution guarantees that in addition to the NAND mapping table, all write requests in process will also be completed.

37 Comments

abufrejoval - Monday, April 28, 2014 - link

I see an opportunity here to clarify something that I've always wondered about: How exactly does this long-time retention work for FLASH?

In the old days, when you had an SSD, you weren't very likely having it lie around, after you paid an arm and a leg for it.

These days, however, storing your most valuable data on an SSD almost seems logical, because one of my nightmares is dropping that very last backup magnetic drive, just when I'm trying to insert it after a complete loss of my primary active copy: SSD just seems so much more reliable!

And then there comes this retention figure...

So what happens when I re-insert an SSD, that has been lying around say for 9 months with those most valuable baby pics of your grown up children?

Does just powering it up mean all those flash cells with logical 1's in them will magically draw in charge like some sort of electron sponge?

Or will the drive have to go through a complete read-check/overwrite cycle depending on how near blocks have come to the electron depletion limit?

How would it know the time delta? How would I know it's finished the refresh and it's safe to put it away for another 9 months?

I have some older FusionIO 320GB MLC drives in the cupboard, that haven't been powered up for more than a year: Can I expect them to look blank?

P.S. Yes, you need an edit button and a resizable box for text entry!

Kristian Vättö - Tuesday, April 29, 2014 - link

The way NAND flash works is that electrons are injected into what is called a floating gate, which is insulated from the other parts of the transistor. As it is insulated, the electrons can't escape the floating gate and thus SSDs are able to hold the data. However, as the SSD is written to, the insulating layer will wear out, which decreases its ability to insulate the floating gate (i.e. make sure the electrons don't escape). That causes the decrease in data retention time.

Figuring out the exact data retention time isn't really possible. At the maximum endurance, it should be 1 year for client drives and 3 months for enterprise drives but anything before and after is subject to several variables that the end-user doesn't have access to.

Solid State Brain - Tuesday, April 29, 2014 - link

Data retention depends mainly on NAND wear. It's the highest (several years - I've read 10+ years even for TLC memory though) at 0 P/E cycles and decreases with usage. By JEDEC specifications, consumer SSDs are to be considered at "end life" when the minimum retention time drops below 1 year, and that's what you should expect when reaching the P/E "limit" (which is not actually a hard limit, just a threshold based on those JEDEC-spec requirements). For enterprise drives it's 3 months. Storage temperature will also affect retention. If you store your drives in a cool place when unpowered, their retention time will be longer. By JEDEC specifications the 1 year time for consumer drives is at 30C, while the 3 months time for enterprise ones is at 40C. Tidbit: manufacturers bake NAND memory in low-temperature ovens to simulate high-wear usage scenarios during tests.

To be refreshed, data has to be reprogrammed again. Just powering up an SSD is not going to reset the retention time for the existing data, it's only going to make it temporarily slow down.

When powered, the SSD's internal controller keeps track of when writes occurred and reprograms old blocks as needed to make sure that data retention is maintained and consistent across all data. This is part of the wear leveling process, which usually is pretty efficient in keeping block usage consistent. However, I speculate this can happen only to a certain extent/rate. A worn drive left unpowered for a long time should preferably have its data dumped somewhere and then cloned back, to be sure that all NAND blocks have been refreshed and that their retention time has been reset to what their wear status allows.

hojnikb - Wednesday, April 23, 2014 - link

TLC is far from crap (well, a quality one, that is). And no, TLC does not have issues holding a "charge". JEDEC states a minimum of 1 year of data retention, so your statement is complete bullshit.

apudapus - Wednesday, April 23, 2014 - link

TLC does have issues but the issues can be mitigated. A drive made up of TLC NAND requires much stronger ECC compared to MLC and SLC.

Notmyusualid - Tuesday, April 22, 2014 - link

My SLC X25-E 64GB is still chugging along, with not so much as a hiccup.

It n e v e r slows down, it 'feels' fast constantly, no matter what is going on.

In about that time I've had one failed OCZ 128GB disk (early Indilinx I think), one failed Kingston V100, one failed Corsair 100GB too (model forgotten), a 160GB X25-M that arrived DOA (but its replacement is still going strong in a workstation), and late last year a failed Patriot Wildfire 240GB.

The two 840 Evo 250GB disks I have (TLC) are absolute garbage. So bad I had to remove them from the RAID0, and run them individually. When you want to over-write all the free space - you'd better have some time on your hands.

SLC for the win.

Solid State Brain - Wednesday, April 23, 2014 - link

The X25-E 64 GB actually has 80 GiB of NAND memory on its PCB. Since only 64 GB (-> 59.6 GiB) of this is available to the user, it means that about 25% of it is overprovisioning area. The drive is obviously going to excel in performance consistency (at least for its time).

On the other hand, the 840 EVO 250 GB has less OP than the previous 840 models with TLC memory, as you have to subtract 9 GiB for the TurboWrite feature from the 23.17 GiB of unavailable space (256 GiB of physically installed NAND - 250 GB -> 232.83 GiB of user space) that was previously fully used as overprovisioning area. This means that in TRIM-less or write-intensive environments with little or no free space they're not going to be that great in performance consistency.

If you were going to use the Samsung 840 EVOs in a RAID-0 configuration, you really should at the very least have increased the OP area by setting up trimmed, unallocated space. So, it's not really that they are "absolute garbage" (as they obviously aren't) and it's really inherently due to the TLC memory. It's your fault in that you most likely didn't take the necessary steps to use them properly with your RAID configuration.
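The overprovisioning arithmetic in the comment above can be reproduced with a few lines. This is a sketch using only the figures quoted in the comment (80 GiB and 256 GiB of NAND, 64 GB and 250 GB of user space, 9 GiB reserved for TurboWrite). The function name is hypothetical, and the percentage is expressed relative to installed NAND, which is an assumption about how the commenter counted.

```python
GIB = 2**30   # bytes in a binary gibibyte
GB = 10**9    # bytes in a decimal gigabyte


def overprovisioning(nand_gib, user_gb, reserved_gib=0.0):
    """Return (spare GiB, spare as a fraction of installed NAND)."""
    user_gib = user_gb * GB / GIB
    spare_gib = nand_gib - user_gib - reserved_gib
    return spare_gib, spare_gib / nand_gib


x25e = overprovisioning(nand_gib=80, user_gb=64)
evo = overprovisioning(nand_gib=256, user_gb=250, reserved_gib=9)

for name, (spare, fraction) in (("X25-E 64 GB", x25e), ("840 EVO 250 GB", evo)):
    print(f"{name}: {spare:.2f} GiB spare, {fraction:.1%} of NAND")
```

This works out to roughly 20.4 GiB (about 25%) of spare area on the X25-E versus roughly 14.2 GiB (about 5.5%) on the 840 EVO once the TurboWrite reservation is subtracted.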

Solid State Brain - Wednesday, April 23, 2014 - link

I meant: "...and it's NOT really inherently due to the..."

TheWrongChristian - Friday, April 25, 2014 - link

> When you want to over-write all the free space - you'd better have some time on your hands.

Why would you overwrite all the free space? Can't you TRIM the drives?

And why run them in RAID0? Can't you use them as JBOD, and combine volumes?

Going with TLC instead of SLC makes the NAND about a factor of 4 cheaper on a die-area basis alone. That's why drives are MLC and TLC based, with the extra storage being used to add extra spare area to make the drive more economical over the drive's useful life. Your SLC X25-E, on the other hand, will probably never reach its P/E limit before you discard it for a more useful, faster, bigger replacement drive. We'll probably have practical memristor-based drives before the X25-E uses all its P/E cycles.

zodiacsoulmate - Tuesday, April 22, 2014 - link

It makes me think of my OCZ Vector 256GB: it broke every time there was a power loss, or even a hard reset... Quite a lot of people report this problem online, and the Vector 256GB was sold refurbished-only before any other Vector drive....

I RMAed two of them, and OCZ replaced mine with a Vector 150, which seems fine now... maybe we should add a power-loss test for SSDs...