Microsoft Announces DirectX 12: Low Level Graphics Programming Comes To DirectX

by Ryan Smith on March 24, 2014 8:00 AM EST

Direct3D 12 In Depth

This brings us to Direct3D 12, which is Microsoft’s entry into the world of low level graphics programming. Microsoft is still neck-deep in development of Direct3D 12 – they’re currently targeting it for game releases in Holiday 2015, roughly 18 months off – and as such Microsoft hasn’t released a ton of details about the API to the public yet. But they have given us a broad overview of what they plan to accomplish, with a couple of technical details on how they will be doing this.

At a high level there is no denying the fact that Direct3D 12 looks a lot like Mantle. Microsoft has set out with the same basic goals as AMD did with Mantle and looks to be achieving some of them in the same manner. It should come as no surprise, then, that the end products are going to be similar as a result.

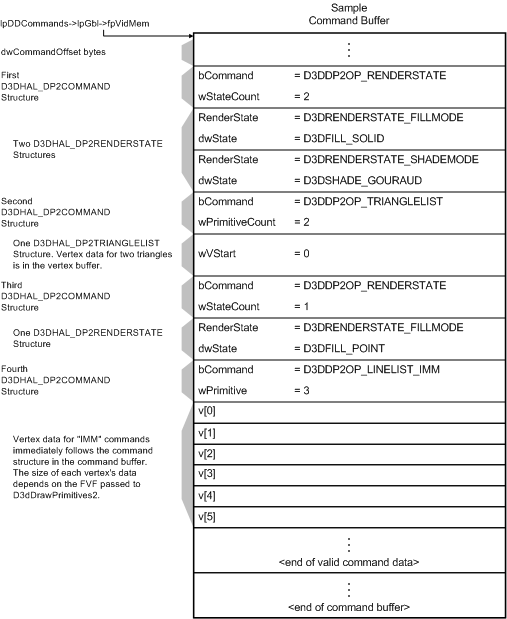

As with Mantle, the primary goal for Direct3D 12 is to greatly reduce the CPU overhead that we’ve talked about previously. As the biggest source of CPU overhead is having Direct3D assemble the command lists/buffers for a GPU, Direct3D 12 will be moving that job over to developers. By assembling their own command lists developers can more easily spread out the task over multiple cores, and this alone will have a significant impact on CPU utilization. At this point we don’t know what Direct3D 12 command lists will look like, and this will likely be one of the design choices that separates Direct3D 12 from Mantle, but there’s no reason at this time to expect them to be much different.
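To make the idea concrete, here is a minimal sketch of developer-side command list recording spread over worker threads. It is purely illustrative: Microsoft has not published the Direct3D 12 interface, so every name here (`record_command_list`, `build_frame`, `submit`) is hypothetical, and Python threads show only the structure of the approach, not the real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def record_command_list(draws):
    """Record one command list. It touches no shared state, so any core can run it."""
    commands = []
    for mesh, material in draws:
        commands.append(("set_material", material))
        commands.append(("draw", mesh))
    return commands

def build_frame(draws, workers=4):
    # Split the frame's draws into contiguous chunks, one per worker.
    chunk = max(1, -(-len(draws) // workers))  # ceiling division
    chunks = [draws[i:i + chunk] for i in range(0, len(draws), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in chunk order, so submission order stays deterministic.
        return list(pool.map(record_command_list, chunks))

def submit(command_lists):
    """Stand-in for the single submission step that hands finished lists to the GPU."""
    return [cmd for command_list in command_lists for cmd in command_list]

# Hypothetical frame: ten meshes cycling through three materials.
frame = [(f"mesh{i}", f"mat{i % 3}") for i in range(10)]
command_lists = build_frame(frame)
queue = submit(command_lists)
```

The point of the design is that recording is independent per list, while only the final submission is serialized.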

For Comparison: D3D11 Command Buffer

Microsoft will also be introducing a similar concept, a bundle, which is functionally a form of a reusable command list. This again is another CPU saving step, as using a bundle in place of multiple command lists further cuts down on the amount of CPU time spent making submissions. In this case the idea behind a bundle is to submit work once, and then allow the bundle to be executed multiple times with minor variations. Microsoft specifically notes having a character drawn twice with different textures as being a use case for this structure.
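Microsoft’s character example can be modeled roughly as follows. This is a conceptual sketch under our own assumptions, not the actual bundle interface (which has not been published); the `Bundle` class and the `TEXTURE` placeholder are hypothetical.

```python
class Bundle:
    """A pre-recorded, reusable run of commands (hypothetical model)."""

    def __init__(self, commands):
        self._commands = tuple(commands)  # frozen once recording is done

    def execute(self, queue, **bindings):
        # Replay the recorded commands, substituting only what varies per use.
        for op, arg in self._commands:
            queue.append((op, bindings.get(arg, arg)))

# Recorded once...
character = Bundle([
    ("set_vertex_buffer", "character_mesh"),
    ("set_texture", "TEXTURE"),  # placeholder resolved at execute time
    ("draw", "character_mesh"),
])

# ...then executed twice with a different texture each time.
queue = []
character.execute(queue, TEXTURE="armor_red")
character.execute(queue, TEXTURE="armor_blue")
```

The recording cost is paid once; each later execution only supplies the parts that differ.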

Meanwhile it’s interesting to note that with this change Microsoft has admitted that Direct3D 11 style immediate/deferred command lists haven’t lived up to their goals, stating “deferred contexts also do not map perfectly to hardware, and so relatively little work can be done in them.” To our knowledge the only game able to make significant use of the feature was Civilization V, and even then we’ve seen AMD video cards perform very well without supporting the feature.

Moving on, Direct3D 12 will also be introducing pipeline state objects. With pipeline state objects we’re really getting into the nitty-gritty of command buffer execution and how the various graphics architectures differ, but the important bit to take away is that most architectures don’t have the ability to freely transition between pipeline states as much as Direct3D 11 would like. This leads to problems for how quickly the hardware state can be set, as Direct3D must go back and take into account these hardware limitations.

The solution to this will be the aforementioned pipeline state objects (PSOs). PSOs bypass some of these pipeline limitations by using objects that are finalized on creation. Nitty-gritty details aside, the outcome from this is that it further reduces CPU overhead, once again increasing the number of draw calls the CPU can submit or freeing it up for other tasks.
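As a rough illustration of why finalizing state on creation saves CPU time, the sketch below counts how often a stand-in validation step runs in each model. All names (`PipelineState`, `draw_loose`, `draw_pso`) and the validation rule itself are our own assumptions; real drivers do far more work than this.

```python
from dataclasses import dataclass

stats = {"validations": 0}  # counts how often the costly state check runs

def validate(shader, blend, depth_test):
    """Stand-in for the driver reconciling requested state with hardware limits."""
    stats["validations"] += 1
    if blend not in ("opaque", "alpha"):
        raise ValueError(f"unsupported blend mode: {blend}")

@dataclass(frozen=True)
class PipelineState:
    shader: str
    blend: str
    depth_test: bool

    def __post_init__(self):
        # Checked once, at creation; the frozen object cannot change afterwards.
        validate(self.shader, self.blend, self.depth_test)

def draw_loose(shader, blend, depth_test, mesh):
    validate(shader, blend, depth_test)  # re-checked on every draw
    return ("draw", mesh)

def draw_pso(pso, mesh):
    return ("draw", mesh, pso.shader)  # nothing left to check

for i in range(1000):
    draw_loose("lit", "alpha", True, f"mesh{i}")
loose_checks = stats["validations"]

stats["validations"] = 0
pso = PipelineState("lit", "alpha", True)
for i in range(1000):
    draw_pso(pso, f"mesh{i}")
pso_checks = stats["validations"]
```

In this toy model the loose-state path validates once per draw, while the finalized object validates exactly once no matter how many draws use it.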

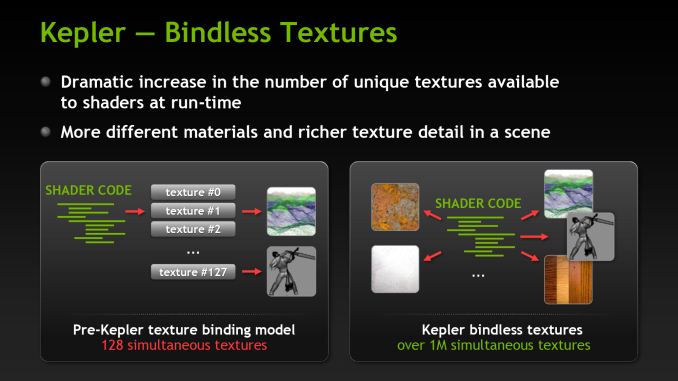

The final major addition to Direct3D 12 is descriptor heaps. Going back to 2012, one of the features introduced on NVIDIA’s then-new Kepler architecture was bindless resources, which bypassed the previous 128 slot limitation on resources (textures, etc.). Through bindless an essentially infinite number of resources could be addressed, at a performance penalty, through an additional layer of indirection in memory accesses.

Descriptor heaps in turn appear to be the integration of bindless resources in Direct3D 12. Microsoft does not specifically call descriptor heaps bindless, but the description of slots and draw calls makes it clear that they’re intending to solve the problem with the bindless solution. With descriptor heaps, and descriptor tables residing in those heaps, Direct3D 12 will be able to perform bindless operations, both expanding the number of resources available to shader programs and even allowing outright dynamic indexing of resources.
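The difference between slot binding and a bindless heap can be sketched in a few lines. This is a conceptual model only: `SlotBinding`, `DescriptorHeap`, and `shade` are hypothetical names, and the only figure taken from above is the 128 slot limit.

```python
MAX_SLOTS = 128  # the slot limit cited above

class SlotBinding:
    """Traditional model: a fixed table of binding slots."""

    def __init__(self):
        self.slots = [None] * MAX_SLOTS

    def bind(self, slot, resource):
        if not 0 <= slot < MAX_SLOTS:
            raise IndexError(f"slot {slot} is outside the {MAX_SLOTS} available")
        self.slots[slot] = resource

class DescriptorHeap:
    """Bindless model: a growable array of descriptors addressed by index."""

    def __init__(self):
        self.descriptors = []

    def add(self, resource):
        self.descriptors.append(resource)
        return len(self.descriptors) - 1  # index a shader can use later

def shade(heap, material_index):
    # The extra indirection: index -> descriptor -> resource.
    return heap.descriptors[material_index]

heap = DescriptorHeap()
indices = [heap.add(f"texture{i}") for i in range(1000)]
picked = shade(heap, indices[500])  # index chosen at run time (dynamic indexing)
```

Here 1,000 textures are reachable at once, and which one is used is decided by a value computed at run time rather than by which slot was bound before the draw.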

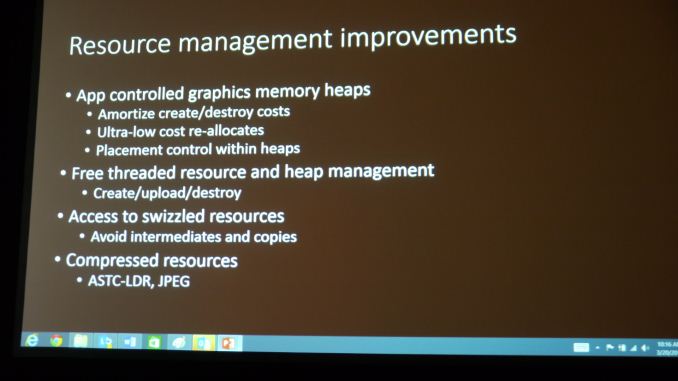

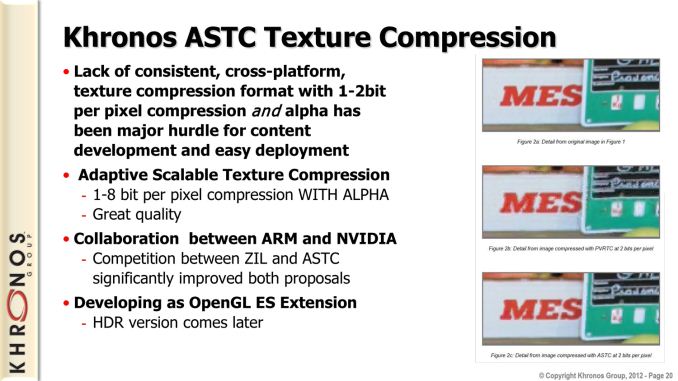

Finally, there are a few miscellaneous features that have popped up in Microsoft’s slides that have caught our attention, if only due to the lack of details provided. Specifically, the mention of compressed resources stands out. The resources mentioned, ASTC and JPEG, are not resource formats that we know to be supported on any current PC GPU. In the case of ASTC, Khronos’s next generation texture compression format, it is a finalized standard that will be supported on all GPUs in time as a core part of the OpenGL standard. Meanwhile JPEG is not a feature we’ve seen on any API roadmaps before.

Image Courtesy PC Perspective

To that end, the addition of ASTC is not all that surprising. Since it is royalty free and not otherwise restricted to OpenGL-only, there’s no reason not to support it when all of the underlying hardware will (eventually) support it anyhow.

JPEG on the other hand is a very curious thing to mention, as its lack of existence on any API roadmaps goes along with the fact that we’re not aware of anyone having announced plans to support JPEG in hardware. Furthermore JPEG is not a fixed ratio compressor – the number of bits a given sized input will generate can vary – which for GPUs would typically be a bad thing. It stands to reason then that Microsoft knows a bit more about what features are in the R&D pipelines for the GPU makers, and that someone will be implementing hardware JPEG support. So we’ll have to keep an eye on this and see what pops up.
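A short sketch shows why a variable ratio is awkward for GPU texture access. ASTC stores every block in 128 bits, so a block’s address is simple arithmetic; with variable-sized blocks, an address depends on the size of everything stored before it. The function names and the variable block sizes below are made up for illustration and are not real JPEG output.

```python
from itertools import accumulate

BLOCK_BYTES = 16  # ASTC stores every block in 128 bits

def fixed_rate_offset(block_x, block_y, blocks_per_row):
    # Fixed ratio: the address is pure arithmetic, with no lookup needed.
    return (block_y * blocks_per_row + block_x) * BLOCK_BYTES

def variable_rate_offsets(block_sizes):
    # Variable ratio: each offset is the running sum of all preceding block sizes.
    return [0, *accumulate(block_sizes)][:-1]

direct = fixed_rate_offset(3, 2, blocks_per_row=64)
table = variable_rate_offsets([40, 12, 97, 8])  # hypothetical sizes in bytes
```

With the fixed-rate layout any block can be fetched directly, which is what random texture sampling wants; the variable-rate layout needs either a precomputed offset table or a scan from the start.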

Making a Common Low Level API

The need for a low level graphics API like Direct3D 12 is clear, but establishing a common API is no easy task. Abstraction is both what gives Direct3D 11 its ability to work on multiple platforms and robs Direct3D 11 of some of its performance. So to make a low level API that works across AMD, NVIDIA, Intel, Qualcomm, and others’ GPUs requires a careful balancing act to bring low level API improvements while adding no more abstraction than is necessary.

At this stage in development Microsoft is not ready to talk about that aspect of API development; for the moment that level of access is restricted to a small group of approved developers. But given their hardware requirements we can make a few educated guesses about what’s going on behind the scenes.

All of the big 3 GPU vendors have confirmed which GPUs will be supported. For Intel, their Gen 7.5 GPUs (Haswell generation) will support Direct3D 12. As for NVIDIA, Fermi, Kepler, and Maxwell will support Direct3D 12. And for AMD, GCN 1.0 and GCN 1.1 will support Direct3D 12.

| Vendor | Direct3D 12 Confirmed Supported GPUs |
|---|---|
| AMD | GCN 1.0 (Radeon 7000/8000/200); GCN 1.1 (Radeon 200) |
| Intel | Gen 7.5 (Haswell/4th Gen Core) |
| NVIDIA | Fermi (GeForce 400/500); Kepler (GeForce 600/700/800); Maxwell (GeForce 700/800) |

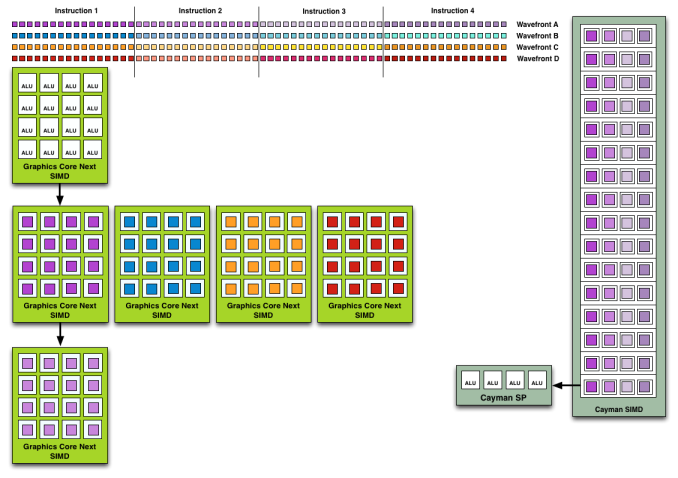

The interesting thing about all of this is what’s excluded: namely, AMD’s D3D11 VLIW5 and VLIW4 architectures. We’ve written about VLIW in comparison to GCN in great depth, and the takeaway from that is that unlike any of the other architectures here, only AMD was using a VLIW design. Every architecture has its strengths and weaknesses, and while VLIW could pack a lot of hardware in a small amount of space, the inflexible scheduling inherent to the execution model was a very big part of the reason that AMD moved to GCN, along with a number of special cases regarding pipeline and memory operations.

Now why do we bring this up? Because with GCN, Fermi, and Gen 7.5, all PC GPUs suddenly started looking a lot more alike. To be clear there are still a number of differences between these architectures, from their warp/wavefront size to how their SIMDs are organized and what they’re capable of. But the important point is that with each successive generation, driven by the flexibility required for efficient GPU computing, these architectures have become more and more alike. They’re far more similar now than they have been since even the earliest days of programmable GPUs.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16

Ultimately, all of this is a long-winded way of saying that a big part of the reason that there can even be a common low level graphics API is because the hardware has homogenized to the point where less and less abstraction is necessary. On a spectrum ranging from a shared ISA (e.g. x86) to widely divergent designs, we’re nowhere near the former, but importantly we’re also nowhere near the latter. This is a subject we’re going to have to watch with great interest, because MS and the GPU vendors (through their drivers) are still going to have to introduce some level of abstraction to make everyone work together through a single common low level API. But the situation with modern hardware means that (with any luck) the additional abstraction with Direct3D 12 over something like Mantle will prove to be insignificant.

Finally, it’s worth pointing out that last week’s developments with Direct3D couldn’t be happening without a degree of political backbone, too. The problem in introducing any new graphics standard is not just technical; it also lies in bringing together companies with differing interests, whose best interests don’t necessarily involve fast-tracking every technology proposed.

Microsoft to that end currently holds a very interesting spot in the world of PC graphics, being the maintainer of the most popular PC graphics API. And unlike the designed-by-committee OpenGL, Microsoft has some (but not complete) leverage to push new technologies through when the GPU vendors and software vendors would otherwise be at loggerheads with each other. So while Microsoft is being clear this is a joint effort between all of the involved parties, there’s still something to be said for having the influence and power to bring down changes that may not be popular with everyone.

105 Comments


ninjaquick - Tuesday, March 25, 2014

I don't think you realize how much engineers like to tinker. They will use D3D12 and Mantle if they get the time, and will get them to the public whenever possible, so long as development is more fun than frustrating.

Scali - Tuesday, March 25, 2014

Well, aside from the 'tinkering' part, it's pretty much a given that D3D12 will become the standard at some point. Why not build that D3D12 now, rather than wait until D3D12 is as popular as D3D11? It will give you a competitive advantage.

Same goes for Mantle, to a lesser extent. Having support is an advantage, but it is harder to justify the extra work, because it is AMD-only, and DX12 will probably make Mantle obsolete within 1.5 years.

Anders CT - Monday, March 24, 2014

@ninjaquick Well, then that is a problem. Why would a developer optimize a game using an API that excludes 80-90% of the installbase?

jabber - Tuesday, March 25, 2014

I think I remember reading the same comments when DX10 was announced. I don't think anyone died as a result. The world carried on.

ninjaquick - Tuesday, March 25, 2014

Because developers, contrary to popular belief, are people organizations, with real people working in them. Many engineers love to do what they do and welcome new challenges, and can easily pitch working on something new and exciting so long as they can properly list the risks and benefits.

Why would anyone support stereoscopic 3D, even though the vast majority of the population doesn't use it? Because they can.

Homeles - Monday, March 24, 2014

Microsoft's previous API-locking was a software limitation (DX10 requires WDDM 1.0), rather than an arbitrary work of greed, so it mostly depends on whether or not Windows 7's WDDM 1.1 can support it. Of course, there's the support issue that A5 has highlighted as well.

lefty2 - Monday, March 24, 2014

Actually, OpenGL has had extensions that bring the benefits of DirectX 12, since about a year ago. That was covered in one of the GDC tech talks. The only catch is that these extensions are yet only fully implemented on Nvidia hardware... but still quite interesting

steven75 - Monday, March 24, 2014

I would like to know the answer to this as well. OpenGL sure seems more important these days.

boe - Monday, March 24, 2014

How many FPS was this getting on DX11? 60FPS on DX12 doesn't tell us much for all I know it was getting about 80FPS.

Developer Turn 10 had ported the game from Direct3D 11.X to Direct3D 12, allowing the game to easily be run on a PC. Powered by a GeForce GTX Titan Black, Microsoft tells us the demo is capable of sustaining 60fps.

DesktopMan - Monday, March 24, 2014

Yeah knowing that the game runs at 60 when capped at 60 is not informative at all. It should run at 120fps+ on a Titan, compared to the Xbox One GPU.