Microsoft Announces DirectX 12: Low Level Graphics Programming Comes To DirectX

by Ryan Smith on March 24, 2014 8:00 AM EST

With GDC 2014 having drawn to a close, we have finally seen what is easily the most exciting piece of news for PC gamers. As previously teased, Microsoft took to the stage last week to announce the next iteration of DirectX: DirectX 12. And as hinted at by the session description, Microsoft’s session was all about bringing low level graphics programming to Direct3D.

As is often the case with these early announcements, Microsoft has been careful not to release too many technical details at once. But from their presentation and the smaller press releases put together by their GPU partners, we’ve been given our first glimpse at Microsoft’s plans for low level programming in Direct3D.

Preface: Why Low Level Programming?

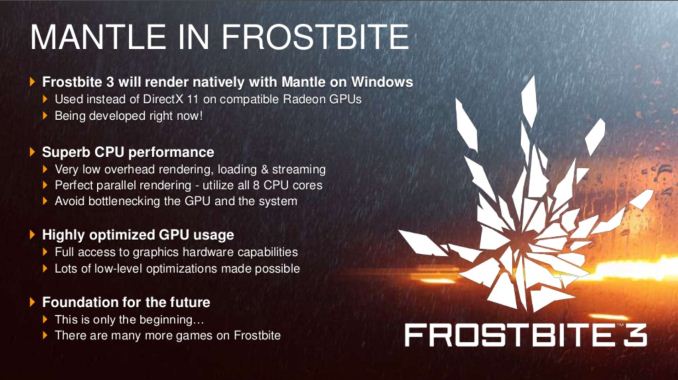

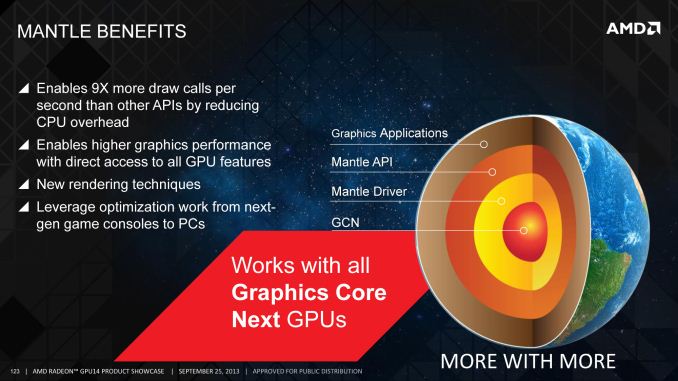

The subject of low level graphics programming has quickly become a very hot topic in the PC graphics industry. In the last 6 months we’ve gone from low level programming being a backburner subject, to being a major public initiative for AMD, to now being a major initiative for the PC gaming industry as a whole through Direct3D 12. The sudden surge in interest and development isn’t an accident – this is a subject that has been brewing for years – but it’s only within the last couple of years that all of the pieces have finally come together.

But why are we seeing so much interest in low level graphics programming on the PC? The short answer is performance, and more specifically what can be gained from returning to it.

Something worth pointing out right away is that low level programming is not new or even all that uncommon. Most high performance console games are written in such a manner, thanks to the fact that consoles are fixed platforms and therefore easily allow this style of programming to be used. By working with hardware at such a low level, programmers are able to tease a great deal of performance out of this hardware, which is why console games look and perform as well as they do given the consoles’ underpowered specifications relative to the PC hardware from which they’re derived.

However, the same cannot be said for PCs. PCs, being a flexible platform, have long relied on high level APIs such as Direct3D and OpenGL. Through the powerful abstraction provided by these high level APIs, PCs have been able to support a wide variety of hardware over a much longer span of time. With low level PC graphics programming having essentially died with DOS and vendor specific APIs, PCs have traded some performance for the convenience and flexibility that abstraction offers.

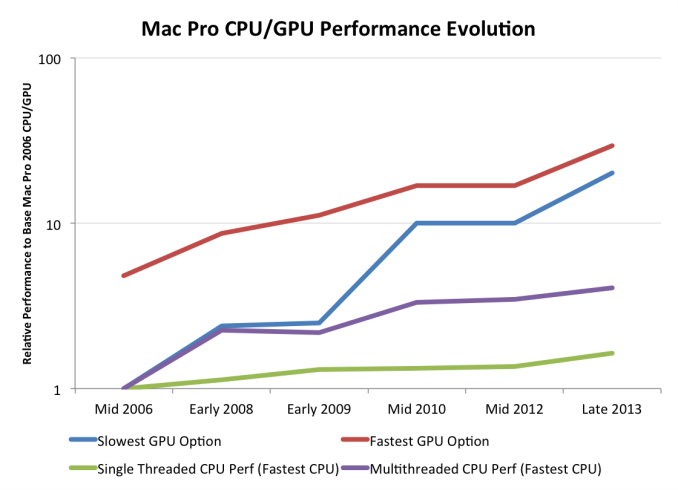

The nature of that performance tradeoff has shifted over the years though, requiring that it be reevaluated. As we’ve covered in great detail in our look at AMD’s Mantle, these tradeoffs were established at a time when CPUs and GPUs were growing in performance by leaps and bounds year after year. But in the last decade or so that has changed – CPUs are no longer rapidly increasing in performance, especially in the case of single-threaded performance. CPU clockspeeds have reached a point where higher clockspeeds are increasingly power-expensive, and the “low hanging fruit” for improving CPU IPC has long been exhausted. Meanwhile GPUs have roughly continued their incredible pace of growth, owing to the embarrassingly parallel nature of graphics rendering.

The result is that GPU performance growth has greatly outstripped the growth in single threaded CPU performance. This in and of itself isn’t necessarily a problem, but it does become one when coupled with the high level APIs used for PC graphics. The bulk of the work these APIs do in preparing data for GPUs is single threaded by its very nature, so the slowdown in CPU performance gains turns that work into a bottleneck. And because this gap keeps widening, the potential for bottlenecking keeps growing with it; the price of abstraction is the CPU performance required to provide it.

Low level programming, in contrast, is more resistant to this type of bottlenecking. There is still the need for a “master” thread and hence the possibility of bottlenecking on that master, but low level programming styles have no need for a CPU-intensive API and runtime to prepare data for GPUs. This makes it much easier to farm out work to multiple CPU cores, protecting against this bottlenecking. To use consoles as an example once again, this is why they are capable of so much with such a (relatively) weak CPU, as they’re better able to utilize their multiple CPU cores than a high level programmed PC can.

The end result of this situation is that it is time to seriously reevaluate the place of low level graphics programming in the PC space. Game developers and GPU vendors alike want better performance. Meanwhile, and this is a bit cynical, the latest crop of consoles poses a very real threat, putting PC gaming in a tight spot where it needs to adapt to keep pace with them. PCs still hold a massive lead in single-threaded CPU performance, but given the limits we’ve discussed earlier, too much bottlenecking can lead to the PC being the slower platform despite its significant hardware advantage. A PC platform that can process fewer draw calls than a $400 game console is a poor outcome for the industry as a whole.

105 Comments


ninjaquick - Tuesday, March 25, 2014

It is not BS at all. Developers have been asking, even crying out, for low level access to GPU hardware on PC for ages. The Xbox One was the last straw though; currently it is no more programmable than a PC. This caused Crytek a massive headache, as they budgeted rendering based on 'to the metal' efficiency and were instead met with massive draw call overheads, forcing them to severely reduce the quality of their work in 'Ryse'. Other developers have complained about the very same thing. The Xbox 360 is more programmable. The benefit D3D12 has this time around is that the X1 is based on a hybrid WindowsRT/8x64, meaning D3D12 can be pushed to all Win8-generation devices.

rootheday3 - Monday, March 24, 2014

I am pretty sure that at least one of the demos (3DMark?) was actually run on a Haswell iGPU, meaning Intel is well along on driver development. Some of the announced features (support for order independent transparency) also sound like Intel extensions on DX11 (PixelSync).

rootheday3 - Monday, March 24, 2014

Also, reducing driver cost and the reliance on single threaded perf should help ensure that mobile gaming on laptops and tablets is less likely to be CPU bound due to frequency constraints. It should also allow more of the thermal budget to go to the GPU for better rendering and less throttling.

Zak - Tuesday, March 25, 2014

"Powered by a GeForce GTX Titan Black, Microsoft tells us the demo is capable of sustaining 60fps."

Titan Black? No kidding. At what resolution?

Scali - Tuesday, March 25, 2014

"To use consoles as an example once again, this is why they are capable of so much with such a (relatively) weak CPU, as they’re better able to utilize their multiple CPU cores than a high level programmed PC can."

This is patently false.

Namely, the PS3 with its Cell has only a single regular CPU core. The SPEs are very limited and not suitable for batching up draw calls.

The Xbox 360 has a more 'regular' CPU, but it has 'only' 3 cores, and the rendering is mostly done on a single core. (The PS4 and Xbox One are too new to draw any conclusions from yet, so the 'console efficiency' we know of comes from consoles that are not all about multithreading.)

You are confusing low-level with multithreading. Low-level is about programming the GPU with a very direct path, little abstraction. That is why it is efficient on consoles. There is a much thinner layer between OS, GPU, driver, API and application than on a regular PC.

Multithreading is another way of speeding up graphics, but this does not necessarily require programming the GPU directly. Which is also not what DX12 is going to do. It will still abstract the hardware to a point where vendor and implementation are not relevant. But it will allow better control of batching up calls on threads other than the master rendering thread (D3D11 already has support for multithreading, but it has some limitations).

It seems that AMD has done a great job of confusing the general public with its Mantle propaganda.

Death666Angel - Tuesday, March 25, 2014

All these articles make me think of John Carmack's QuakeCon 2013 (I think) keynote, where he talked about having the same access to the GPU as he does to the CPU and basically programming in machine code. Hope this is coming. :) I need the performance for 120fps/4K Oculus Rift games! :D

Scali - Tuesday, March 25, 2014

The irony is that the original D3D had low-level access to the GPU, with its execute buffer system. This would easily allow multiple threads to batch up commands for the GPU in parallel efficiently. But Carmack complained that the API was too hard to use.

It looks like we're going back in time somewhat, getting closer to the original D3D.

Ramon Zarat - Tuesday, March 25, 2014

The only thing I really want to know is this: is the current gen GPU hardware, mainly Kepler/Maxwell and Tahiti/Hawaii, *technically* ABLE to support DX12? With strong emphasis on ABLE, as even if it is able, I seriously doubt AMD or Nvidia will be "charitable" enough to actually do it, instead of forcing us all to upgrade again, thanks to the wonder of artificial market segmentation.

Will we see modded drivers enabling (at least partially) DX12 features on current hardware? That would be interesting... For example, I'm currently running a modded Intel OROM BIOS on my Z68 board so it can use TRIM under RAID0 with SSDs. The Z77 and Z87 have no TRIM problem out of the box, and they are 99.9% identical to the Z68 SATA controller. I had ZERO problems in 2 1/2 years, so yeah, TRIM works in RAID0 SSD on the Z68. Thanks a lot, Intel...for nothing.

inighthawki - Wednesday, March 26, 2014

Considering Nvidia made it a huge point that Fermi and above will be supported, and that these represent >50% of the existing market, yeah, probably. Why would they announce that and then not write drivers for it?

TheJian - Wednesday, March 26, 2014

"For low level PC graphics APIs Mantle will be the only game in town for the next 18-20 months; but after that, then what?"

Ignorance, or the dumbest comment I've seen this year ;) Either ignorant or just trying to pretend OpenGL hasn't been able to do this for years already. You forgot the OpenGL speeches showing 5-30x better draw calls, and it is ALREADY here, not 2 years away like DX12.

http://blogs.nvidia.com/blog/2014/03/20/opengl-gdc...

Still showing bias here, I guess... Feel free to watch the 52-minute video in that link on DRAW CALLS and how pointless Mantle is, as OpenGL has had this stuff for years. He even shows some code.

"How to get a crap-ton more draw calls" (a new technical term he said in the video…LOL), which clearly is telling you in the first minute, there is no need for mantle as it is about 10x draw calls right? Mantle=dead. If not by DX12 (but this isn’t out for a while-Win9?), then OpenGL/SteamOS/Android pushing OpenGL.

NV’s speech wasn't a SMALL speech or there wouldn't be a 130 slide doc (at least you guys mentioned it, that's not enough) explaining what they covered right (on top of the 52min dev day video covering Draw Calls +many others)? Considering it is ALREADY working in opengl (same crap as mantle, even carmack said you can do the same thing already and get as close to metal as you'd like in OpenGL with extensions a YEAR ago), I'm sure the OpenGL speeches had more concrete info than the DX speech at GDC (valve didn’t say a word about anything BUT OpenGL recently and how to port DX9 to it). How can you not detail the info on OpenGL and call yourself a hardware site? I know you guys hate cuda (or you'd test it vs. opencl/AMD repeatedly to death), but why the hate for OpenGL which NV has no control over?

Mantle's main competitor for 2 years while we wait for DX12 is ...wait for it...OPENGL, which already does the same crap Mantle does... ;) I expected nothing less from Ryan Smith. Where is the coverage of the big OpenGL speeches? Devs skipped the VR speech to hear about the draw call speech (it was going on right next door, and John McDonald even says at the end of the video he'd be in there if he wasn't giving the speech...LOL), and NV was a bit surprised those devs skipped VR. They expected 5 people and got a crowd wanting OpenGL draw call info ;)

But believe Ryan, Mantle's only competition is DX, even though OpenGL (ES) is the only thing really used in games on mobile, which will further Valve's desktop OpenGL push too. Steam Dev Days was all about leaving DX and going OpenGL, and much of GDC was the same or on ES 3.1 mobile info. DX12 won't get far unless you believe MS will take out Android/SteamOS/iOS. They are already set in cement, being so far ahead with pure unit sales off the charts and game devs' mind share already on iOS/Android, etc.; they can easily push OpenGL together, and all 3 want DX/Wintel dead.

Wintel lost 21% of ALL notebook share last year. This year we have 64-bit CPUs coming from all ARM SoC players, and they'll use those to go further up the chain from crapbooks (Chromebooks to me...LOL) to REAL notebooks and low-end desktops (+some servers), and move up again with the next revs into everything high-end that x86 owns today.

NV’s speech wasn't a SMALL speech or there wouldn't be a 130 slide doc explaining what they covered right (on top of the 52min video from steam dev days+many others)? Considering it is ALREADY working in opengl (same stuff as mantle, even carmack said you can do the same thing already and get as close to metal as you'd like in OpenGL with extensions a YEAR ago on stage), I'm sure the OpenGL speeches had more concrete info than the DX speech at GDC (valve didn’t say a word about anything BUT OpenGL). How can you not detail the info on OpenGL and call yourself a hardware site? I know you guys hate cuda (or you'd test it vs. opencl/AMD repeatedly to death), but why the hate for OpenGL which NV has no control over? It hurts mantle, is DONE now, AMD pays you for a portal etc, so it's off limits?