Imagination's PowerVR Rogue Architecture Explored

by Ryan Smith on February 24, 2014 3:00 AM EST - Posted in

- GPUs

- Imagination Technologies

- PowerVR

- PowerVR Series6

- SoCs

When it comes to our coverage of SoCs, one aspect we’ve been trying to improve on for some time now is our coverage and understanding of the GPU portion of those SoCs. In the PC space we’re fortunate that there are just three major players – Intel, NVIDIA, and AMD – and that all three of them have over the years learned how to become very open and forthcoming about their GPU architectures. As a result we’ve had a level of access that has allowed us to better understand PC GPUs in a way that in earlier times simply wasn’t possible.

In the SoC space however we haven’t been so fortunate. Our understanding of most SoC GPU architectures has not been nearly as deep due to the fact that SoC GPU designers have been less willing to come forward with public details about their architectures and how those architectures have evolved over the years. And this has been for what’s arguably a good reason – unlike the PC GPU space, where only 2 of the 3 players compete in either the iGPU or dGPU markets, in the SoC GPU space there are no fewer than 7 players, all of whom are competing in one manner or another: NVIDIA, Imagination Technologies, Intel, ARM, Qualcomm, Broadcom, and Vivante.

Some of these players use their designs internally while others license out their designs as IP for inclusion in 3rd party SoCs, but all of them are in a much more competitive market that is still early in its life. At the same time, SoC GPU development still happens at a relatively quick pace (by GPU standards), leading to similarly quick turnarounds between GPU generations, as GPU complexity has not yet stretched development out to a 3-4 year process. Because SoC GPUs are still a young and highly competitive market, it’s a foregone conclusion that there is a period of consolidation ahead of us – not unlike what has happened to SoC integrators such as TI – which gives SoC GPU players all the more reason to be conservative about publicly detailing their architectures.

With that said, over the years we have made some progress in getting access to the technical details, due in large part to the existing openness policies of NVIDIA and Intel. Nevertheless, since NVIDIA and Intel are two of the smaller players in the mobile GPU space, this still leaves us with few details on the architectures behind the majority of SoC GPUs. We still want more.

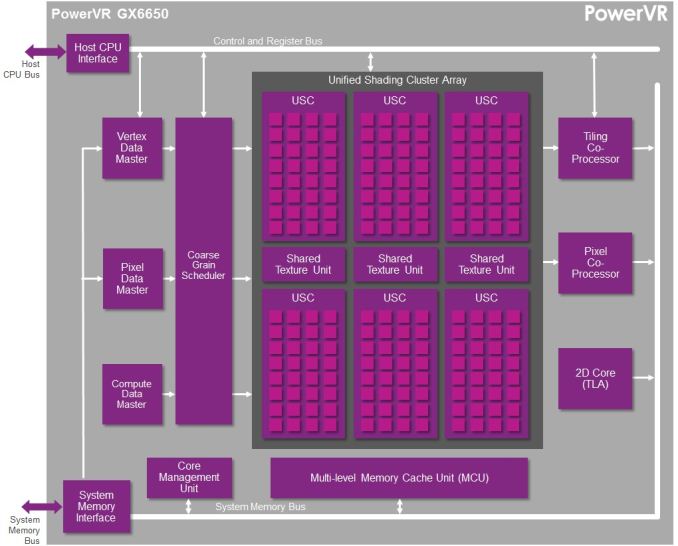

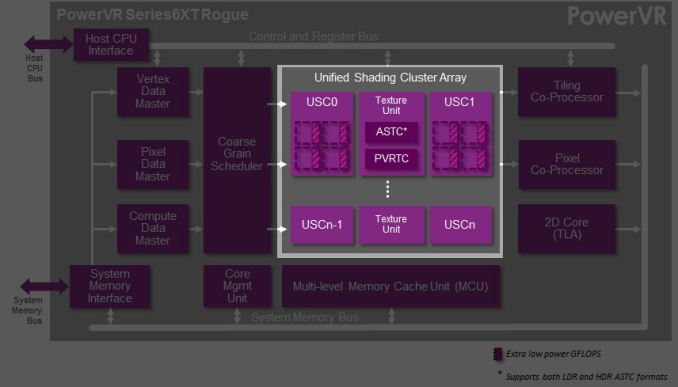

This brings us to today. In what should prove to be an extremely eventful and important day for our coverage and understanding of SoC GPUs, we’d like to welcome Imagination Technologies to the “open architecture” table. Imagination has chosen to share more details about the inner workings of their Rogue Series 6 and Series 6XT architectures, thereby giving us our first in-depth look at the architecture that’s powering a number of high-end products (not the least of which are all of Apple’s current-gen products) and that is descended from some of the most widely used SoC GPU designs of all time.

Now Imagination is not going to be sharing everything with us today. The bulk of the details Imagination is making available relate to their Unified Shading Cluster (USC) shading block, the heart of the Series 6/6XT GPUs. They aren’t discussing other aspects of their designs such as their geometry processors, cache structure, or Tile Based Deferred Rendering system – the company’s secret sauce and most potent weapon for SoC efficiency – but hopefully one day we’ll get there. In the meantime we will have our hands full just taking our first look at the Series 6/6XT USCs.

Finally, before we begin we’d like to thank Imagination for giving us this opportunity to evaluate their architecture in such great detail. We’ve been pushing for this for quite some time, so we’re pleased that this is coming to pass.

Imagination is publishing a pair of blog posts and pseudo-whitepapers on their website today: “Graphics cores: trying to compare apples to apples” and “PowerVR GX6650: redefining performance in mobile with 192 cores.” Along with this they have also been answering many of our deepest technical questions, so we should have a good handle on the Rogue USC. With that in mind, let’s dive in.

95 Comments

MrPoletski - Sunday, March 9, 2014

The reason PowerVR left the desktop market was simply because STMicroelectronics sold their graphics division. The KYRO was an extremely successful card and would have continued to be. IIRC VIA tried to buy it up and carry on selling the KYRO 3 but could not reach a licence deal with STMicro, who claimed copyright on the chip design (the non-PowerVR parts).

Scali - Monday, February 24, 2014

They could... But Imagination is just like ARM: they don't build GPUs themselves, they only license the designs.

It has been possible for years to license a PowerVR design and scale it up to an interesting desktop GPU. It's just that so far, no company has done that. Probably too big a risk to take, trying to compete with giants such as nVidia and AMD.

The last desktop PowerVR cards mainly failed because of poor software support. Aside from the drivers not being all that mature, there was also the problem that many games made assumptions that simply would not hold on a TBDR architecture, and rendering bugs were the result.

If you were to build a PowerVR-based desktop solution today, chances are that you would run into quite a lot of incompatibilities with existing games.

iwod - Monday, February 24, 2014

I didn't want the word Apple in it, to refrain from trolls and flame wars, so I didn't write it out clearly the first time.

The sole reason why PowerVR failed in the first place were their Drivers, and it's the same reason why most other GPU companies failed as well, much like S3. In the GPU market, drivers mean literally everything. It doesn't matter if a GPU is insanely great; if it doesn't run any of the latest games and throws error upon error, it simply won't sell. That's unlike CPUs, where you actually get down to the metal when programming.

Nvidia famously pointed out that they have many more software engineers than hardware engineers. Writing decently performing drivers takes time and money, which is why not many GPU manufacturers survive: most of them don't have enough resources to scale. The same goes for PowerVR. I still remember my Kyro graphics card; I loved it, until it didn't work with the games I wanted to play.

But this time it is different. The mobile market has already exceeded the PC market, and will likely exceed the total number of GPUs shipped in PCs and consoles combined! And since the drivers you write for iOS can in many cases effectively be used on Mac OS X as well, using PowerVR in the Mac makes an appealing case.

Maybe the industry leaders view tablets / mobile phones + consoles as the next trend, while PC & Mac will simply relinquish gaming?

Scali - Tuesday, February 25, 2014

"The sole reason why PowerVR failed in the first place were their Drivers"

As I said, it was not necessarily the drivers themselves. A nice example is 3DMark2001. Some scenes did not work correctly because of illegal assumptions about z-buffer contents. When 3DMark2001SE was released, one of the changes was that it now worked correctly on Kyro cards.

It is unclear where PowerVR stands today, since both their hardware and the 3D APIs and engines have changed massively. The only thing we know for sure is that there are various engines and games that work correctly on iPhone/iPad.

Sushisamurai - Monday, February 24, 2014

Typo: "one thread per shader care, which like the shader cores are grouped together into what we call wavefronts." Should be "shader core"? In "Background: how GPU's work"

Ryan Smith - Monday, February 24, 2014

Indeed it was. Thank you for pointing that out.

chinmaythosar - Monday, February 24, 2014

I wonder how AAPL will handle the FP16 cores ... they are moving to 64-bit in CPUs and they would have hoped to move to FP64 in the GPU ... it would have given them a real talking point in the keynote for the iPad 6 (or whatever they call it) .. "next-gen 192 core GPU FP64 architecture .. 4x graphics power etc etc" .. :P

MrSpadge - Saturday, March 1, 2014

Not sure what AAPL is, but pure FP64 for graphics would be horrible. You don't need the precision, but you waste lots of die space and power.

xeizo - Monday, February 24, 2014

They would be more competitive and interesting to use if they published open drivers instead of "open architecture" pics .... :(

Eckej - Monday, February 24, 2014

Couple of small errors/typos:

Under "How Rogues Get Executed: Wavefronts & Superscalar ILP" - the diagram should probably have 16, not 20 pipelines - looks like an extra row slipped in!

The page before: "With Series 6, Imagination has an interesting setup where there FP16 ALUs can process up to 3 operations in one cycle." "There" should read "their".

Bottom of page 2: "And with that behind us, we can now take a look at the PowerVR Series 6/6XT Unfired Shading Cluster." "Unfired" should read "Unified".

Sorry to be picky.