Imagination's PowerVR Rogue Architecture Explored

by Ryan Smith on February 24, 2014 3:00 AM EST

Posted in: GPUs, Imagination Technologies, PowerVR, PowerVR Series6, SoCs

Background: How GPUs Work

Seeing as how this is our first in-depth architecture article on a SoC GPU design (specifically as opposed to PC-derived designs like Intel and NVIDIA), we felt it best to start at the beginning. For our regular GPU readers the following should be redundant, but if you’ve ever wanted to learn a bit more about how a GPU works, this is your place to start.

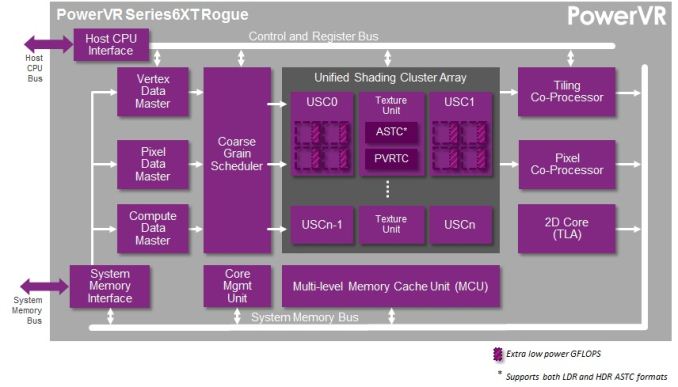

GPUs, like most complex processors, are composed of a number of different functional units responsible for the different aspects of computation and rendering. We have functional units that set up geometry data, frequently called geometry engines, geometry processors, or polymorph engines. We have memory subsystems that provide caching and access to external memory. We have rendering backends (ROPs or pixel co-processors) that take computed geometry and pixels to blend them and finalize them. We have texture mapping units (TMUs) that fetch textures and texels to place them within a scene. And of course we have shaders, the compute cores that do much of the heavy lifting in today’s games.

Perhaps the most basic question even from a simple summary of the functional units in a GPU is why there are so many different functional units in the first place. While conceptually virtually all of these steps (except memory) can be done in software – and hence done in something like a shader – GPU designers don’t do that for performance and power reasons. So-called fixed function hardware (such as ROPs) exists because it’s far more efficient to do certain tasks with hardware that is tightly optimized for the job, rather than doing it with flexible hardware such as shaders. For a given task flexible hardware is bigger and consumes more power than fixed function hardware, hence the need to do as much work in power/space efficient fixed function hardware as is possible. As such the portions of the rendering process that need flexibility will take place in shaders, while other aspects that are by their nature consistent and fixed take place in fixed function units.

The bulk of the information Imagination is sharing with us today is with respect to shaders, so that’s what we’ll focus on today. On a die area basis and power basis the shader blocks are the biggest contributors to rendering. Though every functional unit is important for its job, it’s in the shaders that most of the work takes place for rendering, and the proportion of that work that is bottlenecked by shaders increases with every year and with every generation, as increasingly complex shader programs are created.

So with that in mind, let’s start with a simple question: just what is a shader?

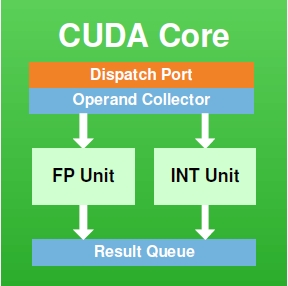

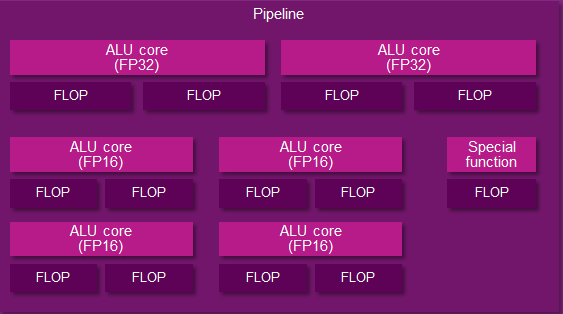

At its most fundamental level, a shader core is a flexible mathematics pipeline; it is a single computational resource that accepts instructions (a shader program) and executes them in order to manipulate the pixels and polygon vertices within a scene. An individual shader core goes by many names depending on who makes it: AMD has Stream Processors, NVIDIA has CUDA cores, and Imagination has Pipelines. At the same time how a shader core is built and configured depends on the architecture and its design goals, so while there are always similarities it is rare that shader cores are identical.

On a lower technical level, a shader core itself contains several elements. It contains decoders, dispatchers, operand collectors, results collectors, and more. But the single most important element, and the element we’re typically fixated on, is the Arithmetic Logic Unit (ALU). ALUs are the most fundamental building blocks in a GPU, and are the base unit that actually performs the mathematical operations called for as part of a shader program.

An NVIDIA CUDA Core

And an Imagination PVR Rogue Series 6XT Pipeline

The number of ALUs within a shader core in turn depends on the design of the shader core. To use NVIDIA as an example again, a CUDA core has 2 ALUs – an FP32 floating point ALU and an integer ALU – either of which is in operation as a shader program requires. In other designs such as Imagination’s Rogue Series 6XT, a single shader core can have up to 7 ALUs, several of which can be used simultaneously. From a practical perspective we typically count shader cores when discussing architectures, but it is at times important to remember that the number of ALUs within a shader core can vary.

When it comes to shader cores, GPU designs will implement hundreds and up to thousands of them. Graphics rendering is what we call an embarrassingly parallel process, as there are potentially millions of pixels in a scene, most of which can be operated on in a semi-independent or fully-independent manner. As a result a GPU will implement a large number of shader cores to work on multiple pixels in parallel. The use of a “wide” design is well suited for graphics rendering as it allows each shader core to be clocked relatively low, saving power while achieving work in bulk. A shader core may only operate at a few hundred megahertz, but because there are so many of them the aggregate throughput of a GPU can be enormous, which is just what we need for graphics rendering (and some classes of compute workloads, as it turns out).
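To put rough numbers on the "wide and slow" argument, here is a minimal back-of-the-envelope sketch. The function name, the clock speeds, and the assumption that each shader core retires 2 floating point operations per clock (a multiply-add counted as two) are ours for illustration and are not figures from any vendor.

```python
def peak_gflops(shader_cores: int, clock_mhz: float,
                flops_per_core_per_clock: int = 2) -> float:
    """Theoretical peak: cores x clock x FLOPs each core retires per clock."""
    return shader_cores * clock_mhz * 1e6 * flops_per_core_per_clock / 1e9


# Hypothetical "wide and slow" block versus a hypothetical "narrow and fast" one.
wide = peak_gflops(shader_cores=192, clock_mhz=600)
narrow = peak_gflops(shader_cores=4, clock_mhz=3000)
print(f"wide: {wide:.1f} GFLOPS, narrow: {narrow:.1f} GFLOPS")
```

Even with a clock five times lower, the wide configuration comes out far ahead on paper (roughly 230 GFLOPS against 24 in this made-up comparison), which is the whole point of building a GPU out of many modestly clocked shader cores.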

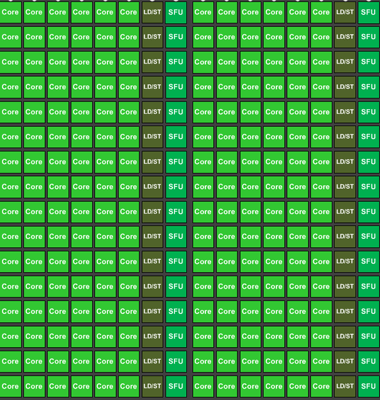

A collection of Kepler CUDA cores, 192 in all

The final piece of the puzzle then is how these shader cores are organized. Like all processors, the shader cores in a GPU are fed by a “thread” of instructions, one instruction following another until all the necessary operations are complete for that program. In terms of shader organization there is a tradeoff between just how independent a shader core is, and how much space/power it takes up. In a perfectly ideal scenario, each and every shader core would be fully independent, potentially working on something entirely different than any of its neighbors. But in the real world we do not do that because it is space and power inefficient, and as it turns out it’s unnecessary.

Neighboring pixels may be independent – that is, their outcome doesn’t depend on the outcome of their neighbors – but in rendering a scene, most of the time we’re going to be applying the same operations to large groups of pixels. So rather than grant the shader cores true independence, they are grouped together so that all of them execute threads out of the same collection of threads. This setup is power and space efficient, as the shader cores don’t need the intelligence to operate completely independently of each other.

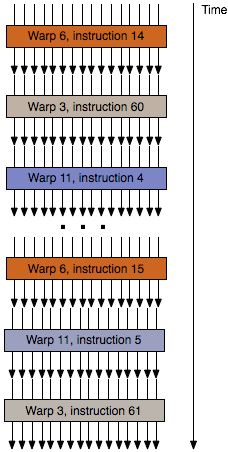

The flow of threads within a wavefront/warp
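The lockstep idea can be modeled in a few lines. This is a toy sketch rather than how any real GPU is programmed: `run_wavefront`, the two-instruction "shader program", and the pixel values are all hypothetical. What it shows is that each instruction is fetched once and then applied to every lane in the group.

```python
from typing import Callable, List

Instruction = Callable[[float], float]


def run_wavefront(program: List[Instruction], pixels: List[float]) -> List[float]:
    """Run one shared instruction stream across a group of lanes in lockstep."""
    lanes = list(pixels)  # one value (thread) per shader core
    for instruction in program:
        # A single fetch/decode of the instruction serves every lane.
        lanes = [instruction(value) for value in lanes]
    return lanes


# Hypothetical shader program: darken the pixel, then add a constant bias.
program: List[Instruction] = [lambda v: v * 0.5, lambda v: v + 0.25]
result = run_wavefront(program, [0.0, 0.5, 1.0, 0.25])
print(result)
```

The scheduling work (the outer loop) is done once per instruction no matter how many lanes there are, which is where the power and space savings of grouping come from.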

Not unlike the construction of a shader core, how shader cores are grouped together will depend on the design. The most common groupings are either 16 or 32 shader cores. Smaller groupings are more performance efficient (you have fewer shader cores sitting idle if you can’t fill all of them with identical threads), while larger groupings are more space/power efficient since you can group more shader cores together under the control of a single instruction scheduler.
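The performance side of that tradeoff is easy to quantify. The sketch below assumes a simplified model in which a batch of identical threads is packed into fixed-width groups and any leftover lanes in the last group sit idle; the function name and the thread count are hypothetical.

```python
import math


def lane_utilization(identical_threads: int, group_width: int) -> float:
    """Fraction of shader-core lanes doing useful work for one batch of threads."""
    groups = math.ceil(identical_threads / group_width)  # last group may be partly empty
    return identical_threads / (groups * group_width)


# 40 identical threads packed into groups of 16 versus groups of 32.
for width in (16, 32):
    print(f"width {width}: {lane_utilization(40, width):.1%} of lanes busy")
```

With 40 threads, groups of 16 need three groups (48 lanes) while groups of 32 need two (64 lanes), so the narrower grouping leaves fewer shader cores idle. The wider grouping, in exchange, needs only two instruction schedulers instead of three.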

Finally, these groupings of threads can go by several different names. NVIDIA uses the term warp, AMD uses the term wavefront, and the official OpenGL terminology is the workgroup. Workgroup is technically the most accurate term, however it’s also the most ambiguous; lots of things in the world are called workgroups. Imagination doesn’t have an official name for their workgroups, so our preference is to stick with the term wavefront, since its more limited use makes it easier to pick up on the context of the discussion.

Summing things up then, we have ALUs, the most basic building block in a GPU shader design. From those ALUs we build up a shader core, and then we group those shader cores into an array of (typically) 16 or 32 shader cores. Finally, those arrays are fed threads of instructions, one thread per shader core, which like the shader cores are grouped together. We call these thread groups wavefronts.
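For readers who think in data structures, the hierarchy can be written down as a small model. The class and field names here are our own shorthand, and the example values (2 ALUs per core, 32 cores per array) are only one possible configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShaderCore:
    alus: int  # ALUs inside a single shader core


@dataclass(frozen=True)
class ShaderArray:
    core: ShaderCore
    width: int  # shader cores sharing one instruction scheduler

    @property
    def wavefront_size(self) -> int:
        # One thread per shader core, so a wavefront is as wide as the array.
        return self.width

    @property
    def total_alus(self) -> int:
        return self.core.alus * self.width


# Hypothetical configuration: 2 ALUs per core, 32 cores per array.
array = ShaderArray(core=ShaderCore(alus=2), width=32)
print(array.wavefront_size, array.total_alus)
```

Counting shader cores and counting ALUs give different totals for the same array, which is why it matters which unit is being quoted when two architectures are compared.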

And with that behind us, we can now take a look at the PowerVR Series 6/6XT Unified Shading Cluster.

95 Comments


MrPoletski - Sunday, March 9, 2014 - link

Factor of 4X, where is the edit button?

iwod - Monday, February 24, 2014 - link

So that is a pretty decent GPU even from Desktop perspective. But Why we dont see this being used on Laptop or Desktop? It doesn't seem hard to scale the Imagination PVR GX6650 to NVIDIA GTX 650 level.

StevoLincolnite - Monday, February 24, 2014 - link

Imagination used to build graphics processors for the Desktop, they were unable to compete with ATI, nVidia, Matrox, S3, 3dfx, NEC etc'. - Instead they shifted their focus to a niche market, the low-powered market, if only the other players knew how big that market would eventually grow to.

Intel has also used PowerVR graphics chips for it's IGP's in the past like the GMA 3600 in the Intel Atom.

In general, they are far from ideal, they leave much to be desired in the drivers department.

One of the earlier PowerVR chips in the Intel Atom still doesn't have it's decoder functioning.

Krysto - Monday, February 24, 2014 - link

Imagination is losing the war for the exact same reason they lost the last time - their tile-based rendering, that was only meant for low-end "embedded" chips. But the chips are becoming "desktop class" these days, and need to work on a lot more advanced content with super high resolutions - and that's why Imagination's tile-based rendering will fail. Tile-based rendering is meant for simple operations, and that's where it shows its greater efficiency. The more complex those operations (the games) get the harder it will be for the PowerVR architecture to keep up.

It used to be that their competitors couldn't even touch them. Now every single one will match or exceed their performance and features, and I imagine next year's 16nm FinFET Mobile Maxwell will leave it in the dust (wouldn't surprise me to see higher performance than Xbox One in it, or at least 1 TF).

michael2k - Monday, February 24, 2014 - link

You mean Imagination is still winning the war because everyone else only just realized there was a market in SoC? Intel is only barely in the game, AMD isn't, and Mali and Adreno is the only real competitor in terms of unit share. Unlike GPU, this market is tied to the success of your SoC, and PVR has a strong ally in Apple unless NVIDIA can convince Apple to license some of their GPU tech.

Apple ships something like 1 in 5 smartphones, 1 in 2 tablets, etc. They have a huge presence in the market right now. Qualcomm definitely ships more SoC, but their GPUs don't all sit in the high end performance space.

ryszu - Monday, February 24, 2014 - link

Our TBDR front-end is absolutely not just designed for simple operations. Pure FUD, it scales very well.

Scali - Monday, February 24, 2014 - link

How do you figure that TBDR is only for simple operations? It actually excels at more complex pixel operations, because it defers most of the shading and texturing until after visibility has been solved.

khanov - Monday, February 24, 2014 - link

Pure nonsense. Go back to the kiddy table.

Tile-Based Deferred Rendering eliminates overdraw and the performance gains it achieves INCREASE with scene complexity.

phoenix_rizzen - Friday, February 28, 2014 - link

Haven't you been saying the same thing for the past two years with the release of Tegra3 and Tegra4? And nVidia is still way behind.

Series 6 is out now in products you can actually buy. Tegra K1 isn't.

Series 6 XT will be out in the next year-ish. Tegra K1 will probably be out by then.

The follow-up to 6XT will probably be out in two years. Who knows when the next Tegra after K1 will actually be out?

Until there's actual, physical devices out there with an nVidia chipset in it that betters the other, actual, physical devices out there, you're just barking smoke.

MrPoletski - Sunday, March 9, 2014 - link

You are completely wrong!