The NVIDIA GeForce GTX 750 Ti and GTX 750 Review: Maxwell Makes Its Move

by Ryan Smith & Ganesh T S on February 18, 2014 9:00 AM EST

Power, Temperature, & Noise

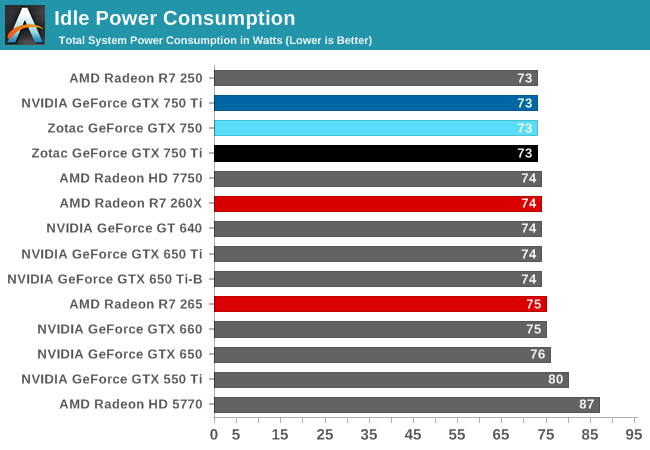

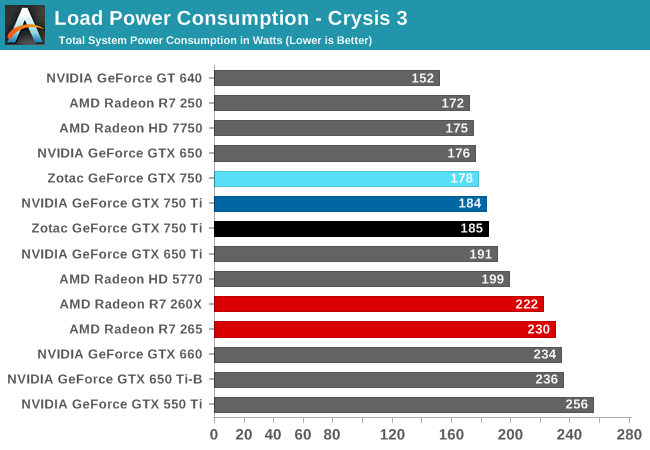

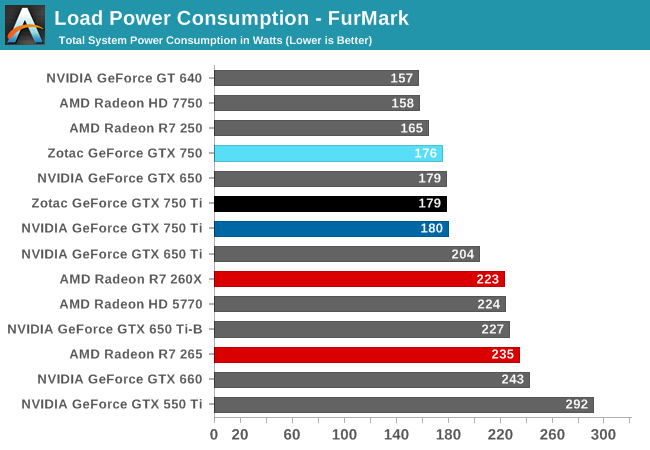

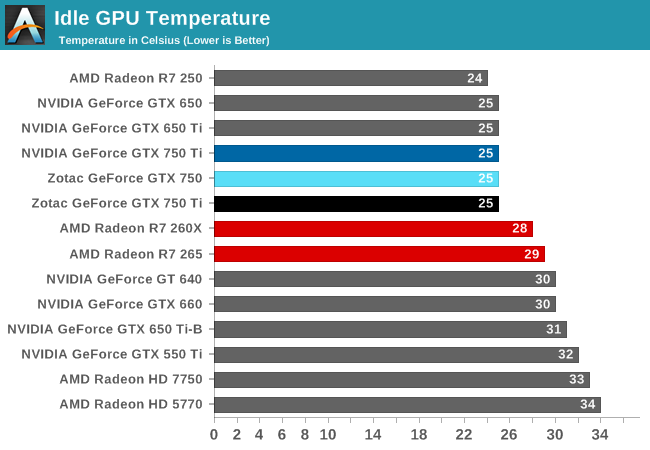

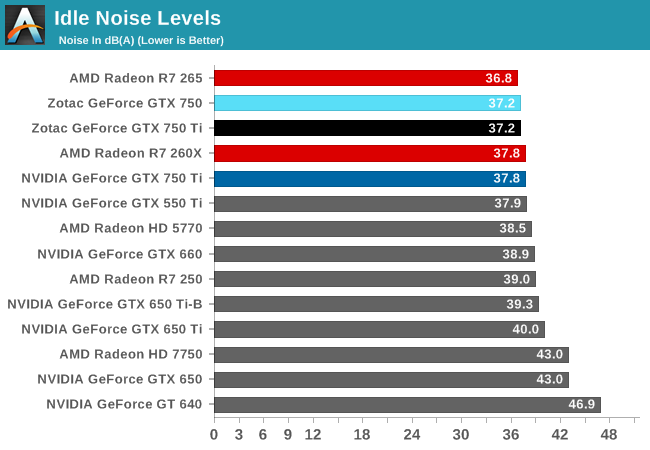

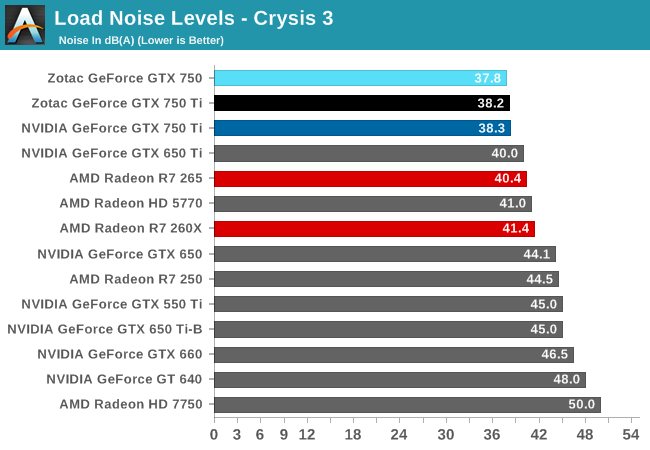

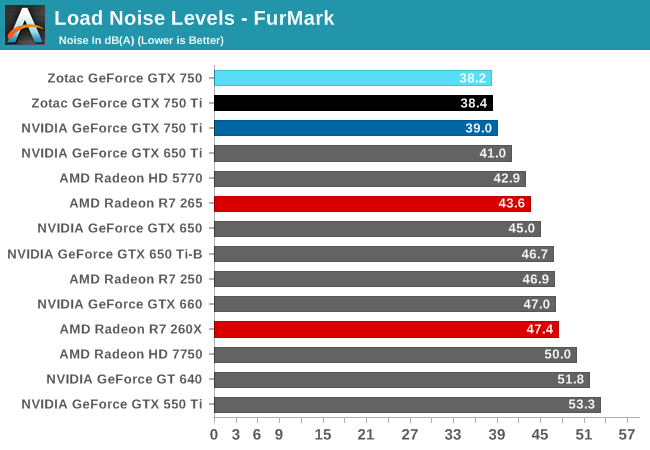

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GeForce GTX 750 Series Voltages

| Ref GTX 750 Ti Boost Voltage | Zotac GTX 750 Ti Boost Voltage | Zotac GTX 750 Boost Voltage |
|---|---|---|
| 1.168V | 1.137V | 1.187V |

For those of you keeping track of voltages, you’ll find that the voltages for GM107 as used on the GTX 750 series are not significantly different from the voltages used on GK107. Since we’re looking at a chip that’s built on the same 28nm process as GK107, the voltages needed to hit the desired frequencies have not changed.

GeForce GTX 750 Series Average Clockspeeds

| | Ref GTX 750 Ti | Zotac GTX 750 Ti | Zotac GTX 750 |
|---|---|---|---|
| Max Boost Clock | 1150MHz | 1175MHz | 1162MHz |
| Metro: LL | 1150MHz | 1172MHz | 1162MHz |
| CoH2 | 1148MHz | 1172MHz | 1162MHz |
| Bioshock | 1150MHz | 1175MHz | 1162MHz |
| Battlefield 4 | 1150MHz | 1175MHz | 1162MHz |
| Crysis 3 | 1149MHz | 1174MHz | 1162MHz |
| Crysis: Warhead | 1150MHz | 1175MHz | 1162MHz |
| TW: Rome 2 | 1150MHz | 1175MHz | 1162MHz |
| Hitman | 1150MHz | 1175MHz | 1162MHz |
| GRID 2 | 1150MHz | 1175MHz | 1162MHz |
| Furmark | 1006MHz | 1032MHz | 1084MHz |

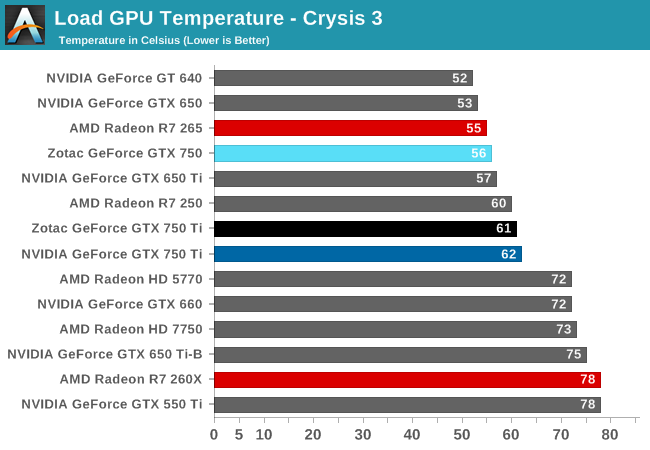

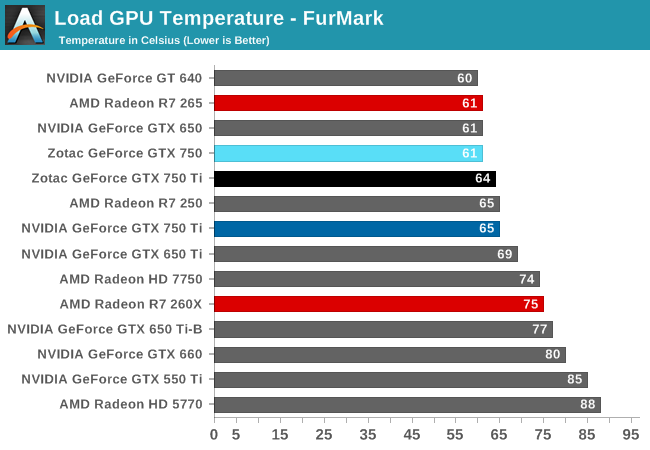

Looking at average clockspeeds, we can see that our cards are essentially free to run at their maximum boost bins, well above their base clockspeed or even their official boost clockspeed. Because these cards operate at such a low TDP, cooling is rendered a non-factor in our testbed setup, with all of these cards easily staying in the 60C or lower range, well below the 80C thermal throttle point that GPU Boost 2.0 uses.

As such they are limited only by TDP, which as we can see does make itself felt, but is not a meaningful limitation. Both GTX 750 Ti cards become TDP limited at times while gaming, but only for a refresh period or two, pulling the averages down just slightly. The Zotac GTX 750 on the other hand has no such problem (thanks to the power savings of losing an SMM), so it stays at 1162MHz throughout the entire run.
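To put numbers on how slight that pull-down is, the clockspeed table can be tallied directly. The following is a minimal Python sketch, with the values transcribed by hand from the table; the names `below_max_boost` and `summarize` are illustrative only and are not part of any tool used in this review.

```python
from statistics import mean

# Column order matches the clockspeed table.
CARDS = ("Ref GTX 750 Ti", "Zotac GTX 750 Ti", "Zotac GTX 750")
MAX_BOOST = (1150, 1175, 1162)  # MHz, "Max Boost Clock" row

# Average clockspeed per game in MHz, transcribed from the table.
GAME_CLOCKS = {
    "Metro: LL": (1150, 1172, 1162),
    "CoH2": (1148, 1172, 1162),
    "Bioshock": (1150, 1175, 1162),
    "Battlefield 4": (1150, 1175, 1162),
    "Crysis 3": (1149, 1174, 1162),
    "Crysis: Warhead": (1150, 1175, 1162),
    "TW: Rome 2": (1150, 1175, 1162),
    "Hitman": (1150, 1175, 1162),
    "GRID 2": (1150, 1175, 1162),
}
FURMARK = (1006, 1032, 1084)  # MHz, "Furmark" row


def below_max_boost(card_index):
    """Map each game to the MHz shortfall where the average fell below max boost."""
    limit = MAX_BOOST[card_index]
    return {
        game: limit - clocks[card_index]
        for game, clocks in GAME_CLOCKS.items()
        if clocks[card_index] < limit
    }


def summarize():
    """Per-card mean game clock, per-game shortfalls, and the Furmark drop."""
    rows = {}
    for i, card in enumerate(CARDS):
        rows[card] = {
            "mean_game_clock": round(mean(c[i] for c in GAME_CLOCKS.values()), 1),
            "below_max": below_max_boost(i),
            "furmark_drop": MAX_BOOST[i] - FURMARK[i],
        }
    return rows


if __name__ == "__main__":
    for card, row in summarize().items():
        print(card, row)
```

By this tally, neither GTX 750 Ti falls more than 3MHz short of its maximum boost clock in any game, and the Zotac GTX 750 never falls short at all. Only Furmark produces a large drop on any of the three cards.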

177 Comments

Mondozai - Wednesday, February 19, 2014

Anywhere outside of NA gives normal prices. Get out of your bubble.

ddriver - Wednesday, February 19, 2014

Yes, prices here are pretty much normal, no one rushes to waste electricity on something as stupid as bitcoin mining. Anyway, I got most of the cards even before that craze began.

R3MF - Tuesday, February 18, 2014

at ~1Bn transistors for 512 Maxwell shaders i think a 20nm enthusiast card could afford the 10bn transistors necessary for 4096 shaders...

Krysto - Tuesday, February 18, 2014

If Maxwell has 2x the P/W, and Tegra K2 arrives at 16nm, with 2 SMX (which is a very reasonable expectation), then Tegra K2 will have at least 1 Teraflop of performance, if not more than 1.2 Teraflops, which would already surpass the Xbox One.

Now THAT's exciting.

chizow - Tuesday, February 18, 2014

It probably won't be Tegra K2, will most likely be Tegra M1 and could very well have 3xSMM at 20nm (192x2 vs. 128x3), which according to the article might be a 2.7x speed-up vs. just a 2x using Kepler's SMX arch. But yes, certainly all very exciting possibilities.

grahaman27 - Wednesday, February 19, 2014

the Tegra M1 will be on 16nm finfet if they stick to their roadmap. But, since they are bringing the 64bit version sooner than expected, I don't know what to expect. BTW, it has yet to be announced what manufacturing process the 64bit version will be... we can only hope TSMC 20nm will arrive in time.

Mondozai - Wednesday, February 19, 2014

Exciting or f%#king embarrassing for M$? Or for the console industry overall.

RealiBrad - Tuesday, February 18, 2014

Looks to be an OK card when you consider that mining has caused AMD cards to sell out and pushed up prices.

It looks like the R7 265 is fairly close on power, temp, and noise. If AMD supply could meet demand, then the 750Ti would need to be much cheaper and would not look nearly as good.

Homeles - Tuesday, February 18, 2014

Load power consumption is clearly in Nvidia's favor.

DryAir - Tuesday, February 18, 2014

Power consumption is way higher... give a look at TPU's review. But price/perf is a lot better yeah.

Personally I'm a sucker for low power, and I will gladly pay for it.