OCZ Vertex 460 (240GB) Review

by Kristian Vättö on January 22, 2014 9:00 AM EST. Posted in Storage, SSDs, OCZ, Indilinx, Vertex 460

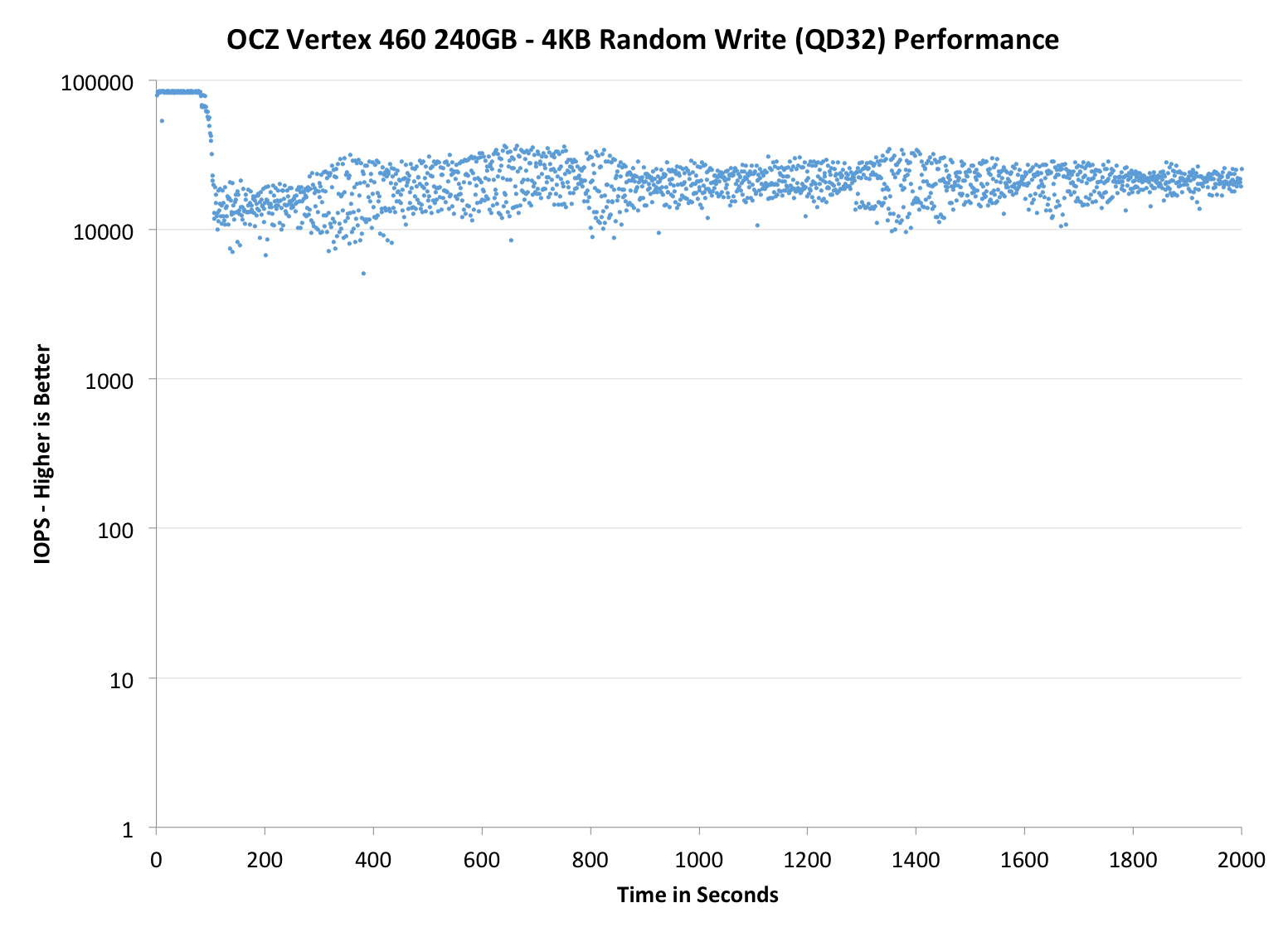

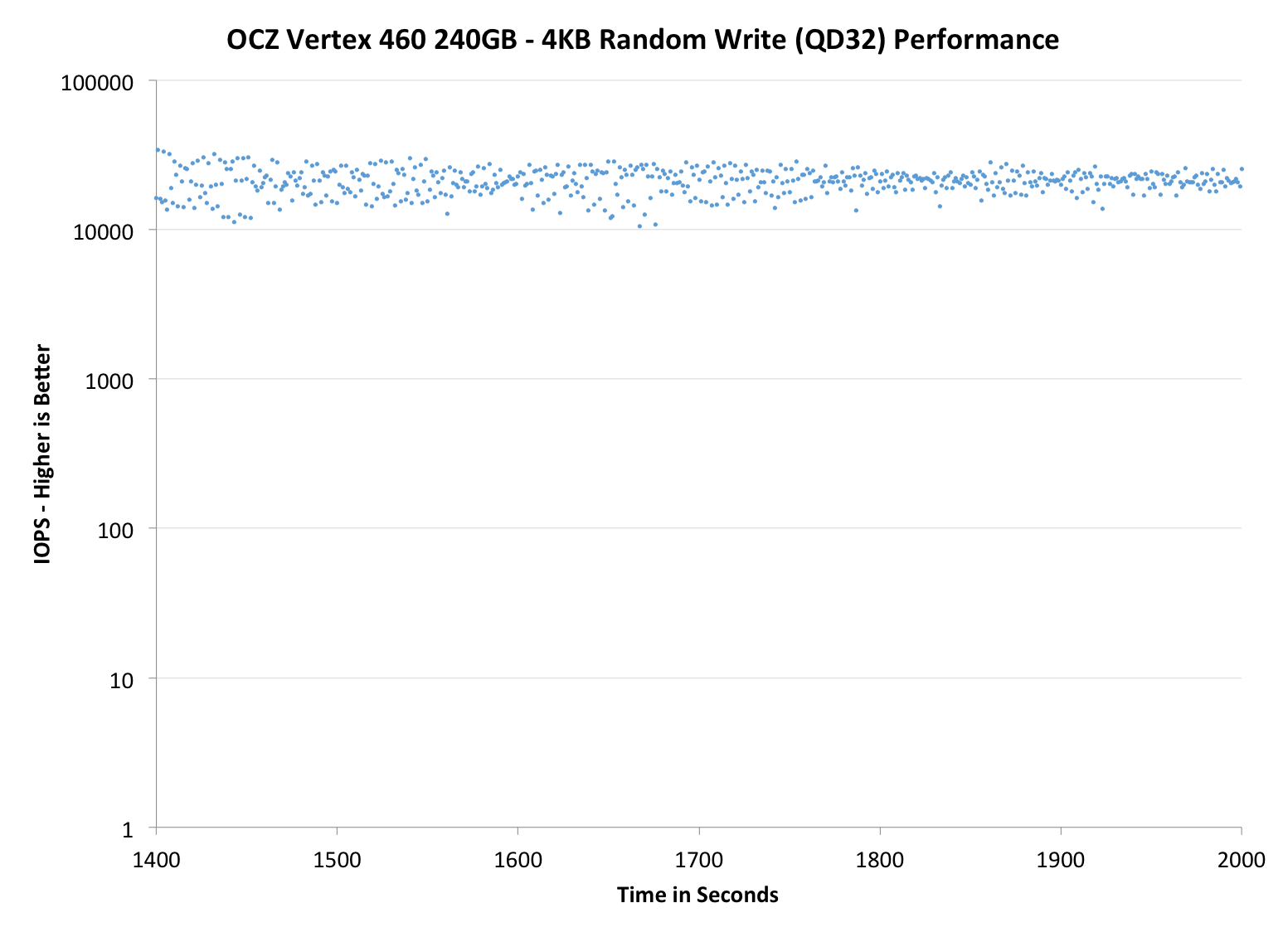

Performance Consistency

In our Intel SSD DC S3700 review Anand introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. The reason we don't have consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below we take a freshly secure-erased SSD and fill it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. We run the test for just over half an hour, nowhere near as long as our steady-state tests but enough to give a good look at drive behavior once all spare area fills up.

We record instantaneous IOPS every second for the duration of the test and then plot IOPS vs. time and generate the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
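The per-second bucketing described above can be sketched in a few lines of Python. This is a minimal illustration, not the tool used for this review: the function name `iops_per_second` and the input format (a list of I/O completion timestamps, in seconds since the start of the test) are assumptions made for the example.

```python
from collections import Counter
import math


def iops_per_second(completion_times, duration_s):
    """Count completed I/Os in each one-second window.

    completion_times: iterable of completion timestamps in seconds,
                      measured from the start of the test (assumed format).
    duration_s:       total test length in whole seconds.
    Returns a list where index t holds the IOPS for the window [t, t+1).
    """
    counts = Counter()
    for ts in completion_times:
        second = math.floor(ts)
        if 0 <= second < duration_s:
            counts[second] += 1
    # Seconds with no completions are reported as 0 rather than skipped,
    # otherwise stalls would vanish from the scatter plot.
    return [counts.get(t, 0) for t in range(duration_s)]


# Tiny hypothetical trace: three I/Os in second 0, none in second 1, one in second 2.
samples = iops_per_second([0.1, 0.5, 0.9, 2.3], duration_s=3)
print(samples)
```

Plotting each value against its index gives one point per second, which is the form of the IOPS vs. time scatter plots in this section.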

The high-level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, we vary the percentage of the drive that gets filled/tested depending on the amount of spare area we're trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here but not all controllers are guaranteed to behave the same way.
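As a rough guide to sizing that partition, here is a small Python sketch. It assumes the spare-area percentage is quoted as a fraction of the total NAND on the drive; the helper name `partition_size_gib` and the 256 GiB figure are illustrative assumptions, not specifications taken from this review.

```python
def partition_size_gib(raw_nand_gib, spare_fraction):
    """Return the partition size that leaves `spare_fraction` of the NAND unused.

    raw_nand_gib:   total NAND on the drive in GiB (assumed known).
    spare_fraction: desired spare area as a fraction of total NAND,
                    e.g. 0.25 for 25% (assumed convention).
    """
    if not 0 <= spare_fraction < 1:
        raise ValueError("spare_fraction must be in [0, 1)")
    return raw_nand_gib * (1 - spare_fraction)


# Hypothetical drive with 256 GiB of NAND and a 25% spare-area target.
print(partition_size_gib(256, 0.25))  # 192.0
```

Any space the drive already reserves by default counts toward the spare area, so the partition only needs to account for the difference.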

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
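To put a number on "write amplification goes up", the following Python sketch uses a deliberately simplified model: write amplification is the total number of pages written to NAND divided by the number of pages the host asked to write. The function name and the page counts are hypothetical, chosen only to illustrate the arithmetic; real controllers are far more complex.

```python
def write_amplification(host_pages, relocated_pages):
    """Ratio of NAND page writes to host page writes (simplified model).

    host_pages:      pages the host asked the drive to write.
    relocated_pages: still-valid pages the drive had to copy elsewhere
                     to free up blocks for those host writes.
    """
    if host_pages <= 0:
        raise ValueError("host_pages must be positive")
    return (host_pages + relocated_pages) / host_pages


# Free blocks still available: nothing needs to be relocated.
print(write_amplification(64, 0))    # 1.0

# Hypothetical steady-state case: 192 valid pages must be copied
# to make room for 64 new host pages.
print(write_amplification(64, 192))  # 4.0
```

In this model the second case writes four pages to NAND for every page the host sends, which is why throughput falls once the free blocks run out.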

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale. Click the buttons below each graph to switch source data.

[Interactive graphs: IOPS vs. time over the full test period, log scale. Drives: OCZ Vertex 460 240GB, OCZ Vector 150 240GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Settings: Default, 25% OP.]

Performance consistency is more or less a match with the Vector 150. There is essentially no difference, only some slight variation, which may well be caused by the nature of how garbage collection algorithms work (i.e. the result is never exactly the same).

[Interactive graphs: IOPS vs. time at the beginning of steady state (from t=1400s), log scale. Drives: OCZ Vertex 460 240GB, OCZ Vector 150 240GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Settings: Default, 25% OP.]

[Interactive graphs: IOPS vs. time at the beginning of steady state, linear scale up to 40K IOPS. Drives: OCZ Vertex 460 240GB, OCZ Vector 150 240GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Settings: Default, 25% OP.]

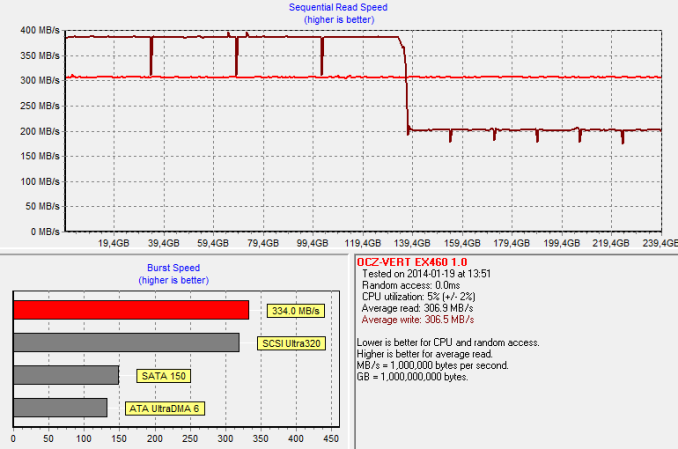

TRIM Validation

To test TRIM, I first filled all user-accessible LBAs with sequential data and then tortured the drive with 4KB random writes (100% LBA, QD=32) for 60 minutes. After the torture I TRIM'ed the drive (quick format in Windows 7/8) and ran HD Tach to make sure TRIM is functional.

And TRIM works. The HD Tach graph also shows the impact of OCZ's performance mode, although in a negative light. Once half of the LBAs have been filled, all data has to be reorganized. The result is a decrease in write performance, as the drive is reorganizing the existing data at the same time as HD Tach is writing to it. Once the reorganization is over, performance recovers to near its original level.

69 Comments

Kristian Vättö - Wednesday, January 22, 2014 - link

All OEMs do cherry-picking, so blaming OCZ is useless. However, in SSDs it doesn't matter that much because NAND is binned for endurance, not performance. While there can always be minor differences in performance between units, it's nowhere near as big as in e.g. CPUs.

As for buying review samples, that would not be financially efficient. Consumer Reports and the like are different because they're funded by the government or other huge organisation, whereas we are private. Furthermore, we wouldn't be able to deliver reviews on time for release because we'd have to wait for retail availability like everyone else.

FunBunny2 - Wednesday, January 22, 2014 - link

I can 'buy' the second reason, but Anand can't afford a $300 SSD? Come on.

bhaberle - Wednesday, January 22, 2014 - link

So you are telling me you would be okay with spending at minimum, tens of thousands of dollars on parts? Sure that is just ONE $300 SSD. What about the other 15+ that they would need to get. Be realistic. If it is not a big deal, why don't you go buy that many. Sure they make money with this site, but it would take some time just to break even on the costs of the parts even for a large site like Anandtech. If you don't appreciate the effort they put in their reviews then stick to consumer reports.

blanarahul - Wednesday, January 22, 2014 - link

That's what I said.

BTW, can you guys test Samsung XP941?? And if possible, a comparison with 2 840 Pros in SLI.

Uhh. RAID..

Kristian Vättö - Friday, January 24, 2014 - link

I asked Samsung for a sample a while back but they wouldn't send us one since it's an OEM-only product. However, Anand got a pair of XP941s in the new Thunderbolt 2 equipped LaCie drive... ;-)

henrybravo - Wednesday, January 22, 2014 - link

Great comment. Blame company PR, not Anandtech.

PEJUman - Wednesday, January 22, 2014 - link

I own various branded SSDs, Intel, OCZ, Corsair to name a few.

Some fails some don't (yes, even my intel X25-M G2 failed me at one point).

In the end, Anandtech readers are typically smart enough to run backups so any failures like that is not a big deal.

I form my own price/performance/risk assessment:

I use newegg, amazon, slickdeals, camelx3 for price.

Anandtech, Toms and forums for performance.

and lastly verified buyer comments at newegg & amazon for risk.

I could care less about a brand or what color is the box of my CPU/GPU.

MrSpadge - Wednesday, January 22, 2014 - link

And some people are obsessed with hating OCZ. Sure they made several mistakes, some not directly related to their technology. But that happened years ago. Sit back and see how the new drives developed in cooperation with Toshiba work out. Judge those drives by what they are, not what their grand-grandfathers were.

Bob Todd - Wednesday, January 22, 2014 - link

You make it sound like all of their problems with quality control and high failure rates happened in the ancient past. This site's Vector review sample died during testing less than 3 months ago. I'm on my 3rd Agility 4 after RMAs and this one needs to go back too. That drive only came out ~18 months ago. I'd be happy to embrace a new wave of OCZ SSDs that were reliable, but we won't know how well (or if) they've managed to turn things around until we get several models in consumers hands and have adequate time to judge reliability.

Roland00Address - Thursday, January 23, 2014 - link

OCZ has made plenty of shitty ssds in the last 3 years. I will be happy to buy a OCZ/Toshiba SSD but not until they have a track record of 3 good years for their ssds.

Why deal with the head ache of your computer going out or your data corrupted just to save $10 or $20 dollars?