AMD Kaveri Review: A8-7600 and A10-7850K Tested

by Ian Cutress & Rahul Garg on January 14, 2014 8:00 AM EST

Kaveri: Aiming for 1080p30 and Compute

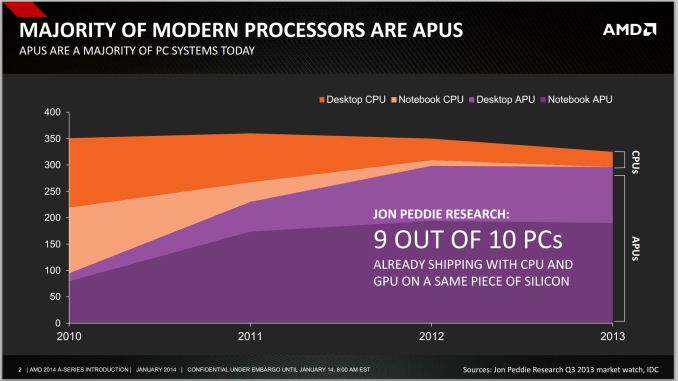

The numerical differences between Kaveri and Richland are easy enough to rattle off – later in the review we will be discussing these in depth – but at a high level AMD is aiming for a middle ground between the desktop model (CPU + discrete graphics) and Apple’s Mac Pro dream (offloading compute onto different discrete graphics cards) by delivering that dream on a single processor. At AMD’s Kaveri tech day the following graph was thrown in front of journalists worldwide:

With Intel now on board, processor graphics is a big deal. You can argue whether or not AMD should continue to use the acronym APU instead of SoC, but the fact remains that it's tough to buy a CPU without an integrated GPU.

In the absence of vertical integration, software optimization always trails hardware availability. If you look at 2011 as the crossover year when APUs/SoCs took over the market, it's not much of a surprise that we haven't seen aggressive moves by software developers to truly leverage GPU compute. Part of the problem has been the programming model, which AMD hopes to address with Kaveri and HSA. Kaveri enables a full heterogeneous unified memory architecture (hUMA), such that the integrated graphics topology can access the full breadth of memory that the CPU can, putting a 32GB-enabled compute device into the hands of developers.

One of the complexities of compute is also time: getting the CPU and GPU to communicate with each other without HSA and hUMA requires an amount of overhead that is not trivial. For compute, the benefit of HSA and hUMA comes in the form of allowing the CPU and GPU to work on the same data set at the same time, effectively opening up all the compute resources to the same task without asynchronous calls to memory copies and expensive memory checks for coherency.
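To make the overhead argument concrete, here is a rough back-of-the-envelope sketch in Python. It is not AMD's model and not a measured result: the function name, the kernel time, the buffer size and the copy bandwidth are all hypothetical placeholders, and it assumes the only cost shared memory removes is one copy to the GPU and one copy back.

```python
def offload_time_ms(kernel_ms, buffer_bytes, copy_gb_per_s, shared_memory):
    """Rough time for one GPU offload (illustrative model only).

    Without shared memory the buffer is copied to the GPU and back;
    with shared memory (the hUMA case) both copies are assumed to vanish.
    """
    if shared_memory:
        return kernel_ms
    # One copy in plus one copy out, converted from seconds to milliseconds.
    copy_ms = 2 * buffer_bytes / (copy_gb_per_s * 1e9) * 1e3
    return kernel_ms + copy_ms


# Hypothetical numbers: a 1 ms kernel over a 256 MB buffer at 8 GB/s.
with_copies = offload_time_ms(1.0, 256e6, 8.0, shared_memory=False)
zero_copy = offload_time_ms(1.0, 256e6, 8.0, shared_memory=True)
print(f"with copies: {with_copies:.1f} ms, shared memory: {zero_copy:.1f} ms")
```

With these made-up inputs the copies cost far more than the kernel itself, which is the kind of case where a small offload is not worth doing on a copy-based system.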

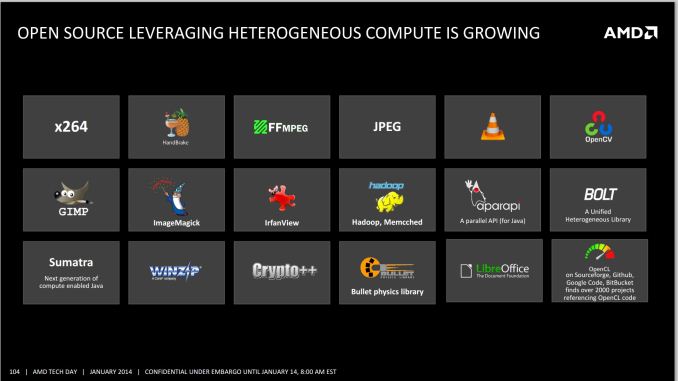

The issue AMD has with their HSA ecosystem is the need for developers to jump on board. The analogy oft cited is that on Day 1, iOS had very few apps, yet today has millions. Perhaps a small equivocation fallacy comes in here – Apple is able to manage their OS and system in its entirety, whereas AMD has to compete in the same space as non-HSA enabled products and lacks the control. Nevertheless, AMD is attempting to integrate programming tools for HSA (and OpenCL 2.0) as seamlessly as possible to all modern platforms via the HSA Intermediate Language (HSAIL). The goal is for programming languages like Java, C++ and C++ AMP, as well as common acceleration API libraries and toolkits, to provide these features at little or no coding cost. This is something our resident compute guru Rahul will be looking at in further detail later on in the review.

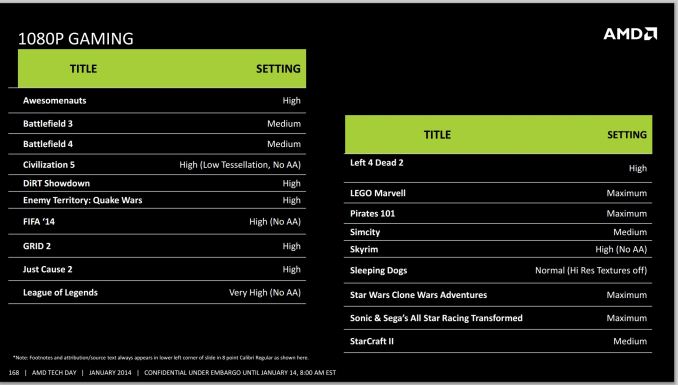

On the gaming side, 30 FPS has been a goal for AMD’s integrated graphics solutions for a couple of generations now.

Arguably we could say that any game should be able to do 30 FPS if we turn down the settings far enough, but AMD has put at least one restriction on that: resolution. 1080p is a lofty goal to hold at 30 FPS with some of the more challenging titles of today. In our testing in this review, it was clear that users had a choice – start with a high resolution and turn the settings down, or keep the settings on medium-high and adjust the resolution. Games like BF4 and Crysis 3 are going to tax any graphics card, especially when additional DirectX 11 features come into play (ambient occlusion, depth of field, global illumination, and bilateral filtering are some that AMD mention).
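As a rough illustration of why resolution is such a strong lever, the sketch below counts the pixels that have to be produced per second at 1080p30 and compares that with 720p30. The 720p comparison point and the function names are our own assumptions for illustration, and treating GPU load as proportional to pixel count is a simplification that ignores geometry, CPU limits and memory bandwidth.

```python
def pixels_per_second(width, height, fps):
    """Pixels that must be produced each second at a given resolution and frame rate."""
    return width * height * fps


def frame_budget_ms(fps):
    """Time available to render a single frame, in milliseconds."""
    return 1000.0 / fps


full_hd = pixels_per_second(1920, 1080, 30)  # the 1080p30 target
hd = pixels_per_second(1280, 720, 30)        # hypothetical fallback resolution
print(f"1080p30: {full_hd} px/s, 720p30: {hd} px/s, ratio: {full_hd / hd}")
print(f"frame budget at 30 FPS: {frame_budget_ms(30):.1f} ms")
```

Under that simplification, 1080p asks for 2.25 times the pixel throughput of 720p inside the same frame budget of roughly 33 ms, which is the trade-off between lowering settings and lowering resolution described above.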

380 Comments


boozed - Tuesday, January 14, 2014 - link

You must be a hoot at parties.

boozed - Wednesday, January 15, 2014 - link

And I hit reply on the wrong bloody comment. My apologies...

monsieurrigsby - Wednesday, January 29, 2014 - link

I'm a bit slow to the party, but talk of discrete GPUs leads me to the main question I still have that I don't see explained (possibly because the authors assume deeper understanding of CPU/GPU programming), and haven't seen discussed elsewhere. (I've not looked *that* hard...)

If you have a Kaveri APU and a mid/high-end discrete GPU that won't work with Dual Graphics (if it arrives), what processing can and can't use the on-APU GPU? If we're talking games (the main scenario), what can developers offload onto the onboard GPU and what can't they? What depends on the nature of the discrete card (e.g., are modern AMD ones 'HSA enabled' in some way?)? If you *do* have a Dual Graphics capable discrete GPU, does this still limit what you can *explicitly* farm off to the onboard GPU?

My layman's guess is that GPU compute stuff can still be done but, without dual graphics, stuff to do with actual frame rendering can't. (I don't know enough about GPU programming to know how well-defined that latter bit is...)

It's just that that seems the obvious question for the gaming consumer: if I have a discrete card, in what contexts is the on-APU GPU 'wasted' and when could it be used (and how much depends on what the discrete card is)? And I guess the related point is how much effort is the latter, and so how likely are we to see elements of it?

Am I missing something that's clear?

monsieurrigsby - Wednesday, January 29, 2014 - link

Plus detail on Mantle seems to suggest that this might provide more control in this area? But are there certain types of things which would be *dependent* on Mantle?

http://hothardware.com/News/How-AMDs-Mantle-Will-R...

nissangtr786 - Tuesday, January 14, 2014 - link

I told amd fanboys the fpu on intel and the raw mflops mips of intel cpu destroy current a10 apus, it's no real surprise all those improvements show very little in benchmarks with kaveri steamroller cores. amd fanboys said it will reach i5 2500k performance, I said i3 4130 but overall i3 4130 will be faster in raw performance and I am right. I personally have an i5 4430 and it looks like i5's still destroy these a10 apu in raw performance.

http://browser.primatelabs.com/geekbench3/326781

browser.primatelabs.com/geekbench3/321256

a10-7850k Sharpen Filter Multi-core 5846 4.33 Gflops

browser.primatelabs.com/geekbench3/321256

i5 4430 Sharpen Filter Multi-core 11421 8.46 Gflops

gngl - Tuesday, January 14, 2014 - link

"I personally have an i5 4430 and it looks like i5's still destroy these a10 apu in raw performance."

You seem to have a very peculiar notion of what "raw performance" means, if you're measuring it in terms of what one specific benchmark does with one specific part of the chip. There's nothing raw about a particular piece of code executing a specific real-world benchmark using a particular sequence of instructions.

chrnochime - Tuesday, January 14, 2014 - link

Who cares what CPU you have anyway. If you want to show off, tell us you have at least a 4670k and not a 4430. LOL

keveazy - Tuesday, January 14, 2014 - link

It's relevant that he used the i5 4430 in his comment. Compare the price range and you'll see. These AMD apu's are useless unless you're just looking to build a PC that's not meant to handle heavily threaded tasks.

tcube - Thursday, January 16, 2014 - link

Ok... heavily threaded tasks ok... examples! Give me one example of one software 90% of pc users use 90% of the time that this apu can't handle... then and ONLY then is the cpu relevant! Other than that it's just bragging rights and microseconds nobody cares about on a PC!

Instead we do care to have a chip that plays anything from hd video to AAA 3d games and also is fast enough for anything else and don't need a gpu for extra cost, power usage, heat and noise! And that ain't any intel that fits on a budget!

keveazy - Saturday, January 18, 2014 - link

I'll give you 1 example. Battlefield 4.