Synology RS10613xs+: 10GbE 10-bay Rackmount NAS Review

by Ganesh T S on December 26, 2013 3:11 AM EST - Posted in NAS, Synology, Enterprise

Introduction and Setup Impressions

Our enterprise NAS reviews have focused on Atom-based desktop form factor systems till now. These units have enough performance for a moderately sized workgroup, but lack some of the essential features in the enterprise space, such as acceptable performance with encrypted volumes. A number of readers have mailed in asking for more coverage of the NAS market straddling the high-end NAS and the NAS - SAN (storage area network) hybrid space. Models catering to this space come in the rackmount form factor and are based on more powerful processors such as the Intel Core series or the Xeon series.

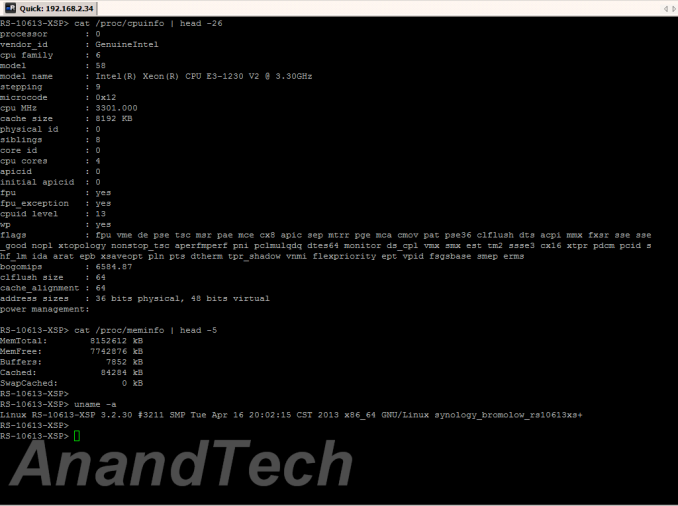

Synology's flagship in this space over the last 12 months or so has been the RS10613xs+. Based on the Intel Xeon E3-1230 processor, this 2U rackmount system comes with ten hot-swappable bays and 8 GB of ECC RAM (expandable to 32 GB). Both SATA and SAS disks in 3.5" as well as 2.5" form factors are supported. In addition to the ten bays, the unit also has space for 2x 2.5" drives behind the display module. SSDs can be used in these bays to serve as a cache.

The specifications of the RS10613xs+ are as below:

| Synology RS10613xs+ Specifications | |
|---|---|
| Processor | Intel Xeon E3-1230 (4C/8T, 3.2 GHz) |
| RAM | 8 GB DDR3 ECC RAM (Upgradable to 32 GB) |
| Drive Bays | 10x 3.5"/2.5" SATA / SAS 6 Gbps HDD / SSD + 2x 2.5" SSD Cache Bays |
| Network Links | 4x 1 GbE + 2x 10 GbE (Add-on PCIe card) |
| USB Slots | 4x USB 2.0 |
| SAS Expansion Ports | 2x (compatible with RX1213sas) |
| Expansion Slots | 2x (10 GbE card occupies one) |
| VGA / Console | Reserved for Maintenance |
| Full Specifications Link | Synology RS10613xs+ Hardware Specs |

Synology is well regarded in the SMB space for the stability as well as wealth of features offered on their units. The OS (DiskStation Manager - DSM) is very user-friendly. We have been following the evolution of DSM over the last couple of years. The RS10613xs+ is the first unit that we are reviewing with DSM 4.x, and we can say with conviction that DSM only keeps getting better.

Our only real complaint about DSM has been the lack of seamless storage pools with the capability to use a single disk across multiple RAID volumes (the type that Windows Storage Spaces provides). This is useful in scenarios with, say, four bay units, where the end user wants some data protected against a single disk failure and some other data protected against failure of two disks. This issue is not a problem with the RS10613xs+, since it has plenty of bays to create two separate volumes in this scenario. In any case, this is a situation more common in the home consumer segment rather than the enterprise segment towards which the RS10613xs+ is targeted.

The front panel has ten 3.5" drive bays laid out in a grid of three rows and four columns. The two remaining slots, in the rightmost column, are taken up by a two-row / one-column wide LCM display panel with buttons to take care of administrative tasks. This panel can be pulled out to reveal the two caching SSD bays. On the rear side, we have redundant power supplies (integrated 1U PSUs of 400W each), a console and VGA port (not suggested for use by the end consumer), 4x USB 2.0 ports, 4x 1 Gb Ethernet ports (all natively on the unit's motherboard) and two SAS-out expansion ports to connect up to 8 RX1213sas expansion units. There is also space for a half-height PCIe card, and it was outfitted with a dual 10 GbE SFP+ card in our review unit.

On the software side, not much has changed with respect to the UI in DSM 4.x compared to the older versions. There is definitely a more polished look and feel. For example, we have drag and drop support while configuring disks in different volumes. These types of minor improvements tend to contribute to a better user experience all around. The setup process is a breeze, with the unit's configuration page available on the network even in diskless mode. As the gallery below shows, the unit comes with a built-in OS which can be installed in case the unit / setup computer is not connected to the Internet / Synology's servers. A Quick Start Wizard prompts the user to create a volume to start using the unit.

An interesting aspect of the Storage Manager is the SSD Cache for boosting read performance. Automatic generation of file access statistics on a given volume helps in deciding the right amount of cache that might be beneficial to the system. Volumes are part of RAID groups. All volumes in a given RAID group are at the same RAID level. In addition, the Storage Manager also provides for configuration of iSCSI LUNs / targets and management of the disk drives (S.M.A.R.T and other similar disk-specific aspects).

RAID expansions / migrations as well as rebuilds are handled in the Storage Manager too. The other interesting aspect is the Network section. In the gallery above, one can see that it is possible to bond all 6 network ports together in 802.3ad dynamic link aggregation mode. SSH access is available (as in older DSM versions). A CLI guide to working on the RAID groups / volumes in an SSH session would be a welcome complement to the excellent web UI.

In the rest of this review, we will talk about our testbed setup, present results from our evaluation of single client performance with CIFS and NFS shares as well as iSCSI LUNs. Encryption support is also evaluated for CIFS shares. A section on performance with Linux clients will also be presented. Multi-client performance is evaluated using IOMeter on CIFS shares. In the final section we talk about power consumption, RAID rebuild durations and other miscellaneous aspects.

51 Comments


mfenn - Friday, December 27, 2013 - link

The 802.3ad testing in this article is fundamentally flawed. 802.3ad does NOT, repeat NOT, create a virtual link whose throughput is the sum of its components. What it does is provide a mechanism for automatically selecting which link in a set (bundle) to use for a particular packet based on its source and destination. The definition of "source and destination" depends on the particular hashing algorithm you choose, but the common algorithms will all hash a network file system client / server pair to the same link.

In a 4x 1 Gb/s + 2x 10 Gb/s 802.3ad link aggregation group, you would expect that two-thirds of the clients would get hashed to the 1 Gb/s links and one-third would get hashed to the 10 Gb/s links. In a situation where all clients are running in lock-step (i.e. everyone must complete their tests before moving on to the next), you would expect the 10 Gb/s clients to be limited by the 1 Gb/s ones, thus providing a ~6 Gb/s line rate, or roughly 600 MB/s of user data.

Since 2 * 10 Gb/s > 6 * 1 Gb/s, I recommend retesting with only the two 10 Gb/s links in the 802.3ad aggregation group.
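The hashing argument in the comment above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: CRC32 stands in for the switch's real (vendor-specific) hash, the MAC addresses are made up, and the lock-step model simply assumes every client is paced by the slowest one. It is not a description of how the NAS or the switch actually selects links.

```python
import zlib

# Link speeds in Gb/s for the six-port bundle: 4x 1 GbE + 2x 10 GbE.
LINKS_GBPS = [1, 1, 1, 1, 10, 10]


def pick_link(src_mac: str, dst_mac: str, n_links: int) -> int:
    """Map a (source, destination) pair to exactly one link index.

    CRC32 is only a stand-in for the real hash (an assumption).
    The key point is that the same pair always lands on the same link.
    """
    key = f"{src_mac}->{dst_mac}".encode()
    return zlib.crc32(key) % n_links


def lockstep_aggregate_gbps(client_links, links_gbps):
    """Aggregate line rate when all clients are paced by the slowest one.

    client_links holds the link index each client was hashed to.
    Clients sharing a link split that link's bandwidth evenly.
    """
    load = {i: client_links.count(i) for i in set(client_links)}
    per_client = [links_gbps[i] / load[i] for i in client_links]
    return min(per_client) * len(client_links)


if __name__ == "__main__":
    nas = "00:11:32:00:00:01"      # hypothetical NAS MAC address
    client = "aa:bb:cc:00:00:07"   # hypothetical client MAC address
    print("client pinned to link", pick_link(client, nas, len(LINKS_GBPS)))

    # Best case for the mixed bundle: one client per link.
    print(lockstep_aggregate_gbps([0, 1, 2, 3, 4, 5], LINKS_GBPS), "Gb/s")
    # Only the two 10 GbE links in the bundle, one client on each.
    print(lockstep_aggregate_gbps([0, 1], [10, 10]), "Gb/s")
```

Under these assumptions the mixed six-port bundle tops out at 6 Gb/s even with a perfectly even spread of clients, while the two 10 GbE links alone allow 20 Gb/s, which is the comparison behind the retest suggestion.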

Marquis42 - Friday, December 27, 2013 - link

Indeed, that's what I was going to get at when I asked more about the particulars of the setup in question. Thanks for just laying it out, saved me some time. ;)

ganeshts - Friday, December 27, 2013 - link

mfenn / Marquis42,

Thanks for the note. I indeed realized this issue after processing the data for the Synology unit. Our subsequent 10GbE reviews which are slated to go out over the next week or so (the QNAP TS-470 and the Netgear ReadyNAS RN-716) have been evaluated with only the 10GbE links in aggregated mode (and the 1 GbE links disconnected).

I will repeat the Synology multi-client benchmark with RAID-5 / 2 x 10Gb 802.3ad and update the article tomorrow.

ganeshts - Saturday, December 28, 2013 - link

I have updated the piece with the graphs obtained by just using the 2 x 10G links in 802.3ad dynamic link aggregation. I believe the numbers don't change too much compared to teaming all the 6 ports together.

Just for more information on our LACP setup:

We are using the GSM7352S's SFP+ ports teamed with link trap and STP mode enabled. Obviously, dynamic link aggregation mode. The Hash Mode is set to 'Src/Dest MAC, VLAN, EType, Incoming Port'.

I did face problems in evaluating other units where having the 1 Gb links active and connected to the same switch while the 10G ports were link-aggregated would bring down the benchmark numbers. I have since resolved that by completely disconnecting the 1G links in multi-client mode for the 10G-enabled NAS units.

shodanshok - Saturday, December 28, 2013 - link

Hi all,

while I understand that RAID5 surely has its domains, RAID10 is generally a way better choice, both for redundancy and performance.

The RAID5 read-modify-write penalty presents itself in a pretty heavy way with any workload doing many small writes, such as databases and virtual machines. So, the only case where I would create a RAID5 array is when it will be used as a storage archive (e.g. a fileserver).

On the other hand, many, many sysadmins create RAID5 arrays "by default", expecting to consolidate many virtual machines on them. Unless you have a very high-end RAID controller (w/512+ MB of NVCache), they will suffer badly from RAID5 and alignment issues, which are basically non-existent on RAID10.

One exception can be made for SSD arrays: in that case, a parity-based scheme (RAID5 or, better, RAID6) can do its work very well, as SSDs have no seek latency and tend to be of lower capacity than mechanical disks. However, alignment issues remain significant, and need to be taken into account when creating both the array and the virtual machines on top of it.

Regards.
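As a rough illustration of the read-modify-write penalty mentioned in the comment above, the following Python sketch uses the usual textbook back-end I/O counts for a single small random write: 2 for RAID10 (one write per mirror side), 4 for RAID5 (read old data, read old parity, write new data, write new parity) and 6 for RAID6. The disk count and per-disk IOPS figure are hypothetical, and the model deliberately ignores controller caches, full-stripe writes and alignment effects.

```python
# Back-end disk I/Os generated by one small random write (textbook values).
WRITE_PENALTY = {"RAID10": 2, "RAID5": 4, "RAID6": 6}


def effective_write_iops(n_disks: int, iops_per_disk: float, level: str) -> float:
    """Estimate small random write IOPS seen by the host.

    Raw array IOPS divided by the write penalty of the RAID level.
    This is a first-order estimate only (no cache, no full-stripe writes).
    """
    return n_disks * iops_per_disk / WRITE_PENALTY[level]


if __name__ == "__main__":
    # Hypothetical array: 10 disks at 150 random IOPS each.
    for level in ("RAID10", "RAID5", "RAID6"):
        print(level, effective_write_iops(10, 150, level))
```

With these assumed numbers, RAID10 sustains about twice the small-write rate of RAID5 on the same disks, which is the gap the comment refers to for database and virtual machine workloads.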

sebsta - Saturday, December 28, 2013 - link

Since the introduction of 4k sector size disks, things have changed a lot, at least in the ZFS world. Everyone who is thinking about building their storage system with ZFS and RaidZ should see this video.

http://zfsday.com/zfsday/y4k/

Starting at 17:00 comes the bad stuff for RaidZ users.

Here one of the co-creators of ZFS basically tells you: stay away from RaidZ if you are using 4k sector disks.

hydromike - Sunday, December 29, 2013 - link

It depends on the OS implementation whether this is a current problem. Many of the commercial ZFS vendors have had this fixed for a while (18 to 24 months). FreeNAS has fixed this issue in its latest release, 9.2.0. ZFS has been a command-line heavy operation where you really need to understand drive setup in order to tune it for the best speed.

sebsta - Sunday, December 29, 2013 - link

I don't know much about FreeNAS, but like FreeBSD they get their ZFS from Illumos.

The Illumos ZFS implementation has no fix. What is ZFS supposed to do if you write 8k to a RaidZ with 4 data disks if the sector size of a disk is 4k?

The video explains what happens on Illumos. You will end up with something like this

1st 4k data -> disk1

2nd 4k data -> disk2

1st 4k data -> disk3

2nd 4k data -> disk4

Parity -> disk5

So you have written the same data twice plus parity. Much like mirroring, with the additional overhead of calculating and writing the parity. Has FreeNAS changed the ZFS implementation in that regard?

sebsta - Sunday, December 29, 2013 - link

I did a quick search and at least in January this year FreeBSD had the same issues.

See here https://forums.freebsd.org/viewtopic.php?&t=37...

shodanshok - Monday, December 30, 2013 - link

Yes, this is true, but for this very same reason many enterprise disks remain at 512 bytes per sector.

Take the "enterprise drives" from WD:

- the low cost WD SE are Advanced Format ones (4K sector size)

- the somewhat pricey WD RE have classical 512B sector

- the top-of-the line WD XE have 512B sector

So, the 4K formatted disks are proposed for storage archiving duties, while for VMs and DBs the 512B disks remain the norm.

Regards.