The Mac Pro Review (Late 2013)

by Anand Lal Shimpi on December 31, 2013 3:18 PM EST

Plotting the Mac Pro’s GPU Performance Over Time

The Mac Pro’s CPU options have ballooned at times during its 7-year history. What started with four CPU options grew to six for the Early 2009 through Mid 2010 models. It was also during that period that total core counts expanded from 4 cores to the current mix of 4, 6, 8 and 12-core configurations.

What’s particularly unique about this year’s Mac Pro is that all configurations are accomplished with a single socket. Moore’s Law and the process cadence it characterizes leave us in a place where Intel can effectively ship a single die with 12 big x86 cores. It wasn’t that long ago when you’d need multiple sockets to achieve the same thing.

While the CPU moved to a single socket configuration this year, the Mac Pro’s GPU went the opposite direction. For the first time in Mac Pro history, the new system ships with two GPUs in all configurations. I turned to Ryan Smith, our Senior GPU Editor, for his help in roughly characterizing Mac Pro GPU options over the years.

| Mac Pro - GPU Upgrade Path | Mid 2006 | Early 2008 | Early 2009 | Mid 2010 | Mid 2012 | Late 2013 |
|---|---|---|---|---|---|---|
| Slowest GPU Option | NVIDIA GeForce 7300 GT | ATI Radeon HD 2600 XT | NVIDIA GeForce GT 120 | ATI Radeon HD 5770 | ATI Radeon HD 5770 | Dual AMD FirePro D300 |
| Fastest GPU Option | NVIDIA Quadro FX 4500 | NVIDIA Quadro FX 5600 | ATI Radeon HD 4870 | ATI Radeon HD 5870 | ATI Radeon HD 5870 | Dual AMD FirePro D700 |

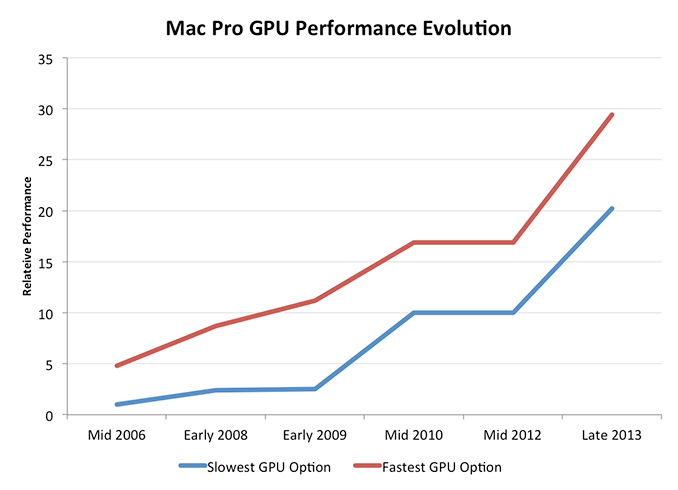

Since the Mac Pro GPU offerings were limited to two or three cards per generation, it was pretty easy to put together comparisons. We eliminated the mid-range configuration for this comparison and only looked at scaling with the cheapest and most expensive GPU options each generation.

Now we’re talking. At the low end, Mac Pro GPU performance improved by 20x over the past 7 years. Even if you always bought the fastest GPU possible, you'd be looking at a 6x increase in performance, and that's not taking into account the move to multiple GPUs this last round (if you assume 50% multi-GPU scaling, then even the high-end path would net you 9x better GPU performance over 7 years).
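The 9x figure is simple arithmetic on the 6x single-GPU gain. A minimal sketch, assuming (as the text does) that the second GPU adds a fixed fraction of one GPU's performance; the function name `effective_speedup` and the linear scaling model are illustrative only, not part of the original benchmarking:

```python
def effective_speedup(single_gpu_gain: float, gpu_count: int, scaling: float) -> float:
    """Speedup over the old single-GPU baseline when each extra GPU adds
    `scaling` (0.0 to 1.0) of one GPU's performance.

    Hypothetical linear model: gain * (1 + scaling * (gpu_count - 1)).
    """
    return single_gpu_gain * (1 + scaling * (gpu_count - 1))


# Figures from the text: ~6x single-GPU gain on the high-end path,
# two GPUs in the Late 2013 Mac Pro, and an assumed 50% multi-GPU scaling.
print(effective_speedup(6.0, 2, 0.5))
```

Setting `scaling` to 1.0 gives the ideal-scaling upper bound (12x for the high-end path), while 0.0 reduces to the single-GPU 6x figure.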

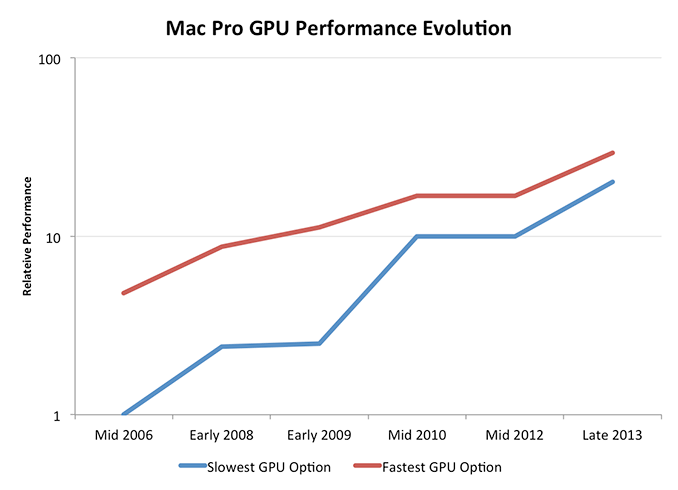

Ryan recommended presenting the data with a log scale as well to more accurately depict the gains over time:

Here you see convergence, at a high level, between the slowest and fastest GPU options in the Mac Pro. Another way of putting it is that Apple values GPU performance more today than it did back in 2006, so even the cheapest GPU today sits much closer to the top option than the entry-level GPU did back then.
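The convergence can be made concrete with the two rounded totals quoted earlier (about 20x at the low end and 6x at the high end, both on a single-GPU basis). Writing $S_t$ and $F_t$ for the performance of the slowest and fastest GPU options in year $t$ (symbols introduced here for illustration), the gap between the two options is the ratio $F_t/S_t$, and its change over the period is:

$$
\frac{F_{2013}/S_{2013}}{F_{2006}/S_{2006}}
= \frac{F_{2013}/F_{2006}}{S_{2013}/S_{2006}}
\approx \frac{6}{20} = 0.3
$$

On these rounded figures, the fastest option's lead over the slowest shrank to roughly 30% of its 2006 size, which is why the two lines draw closer together on the log-scale chart.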

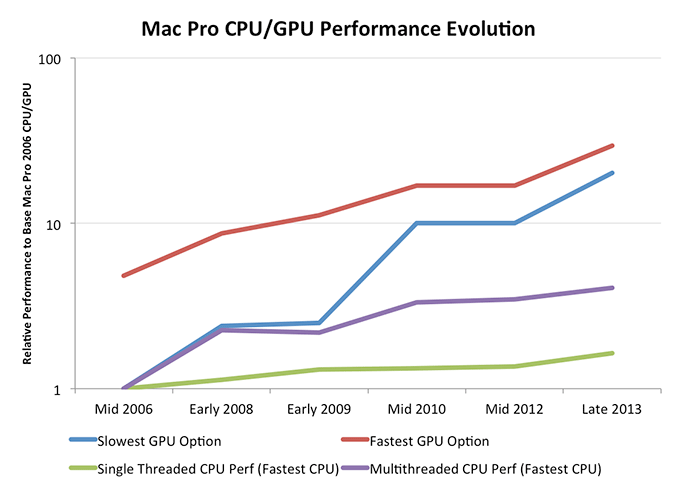

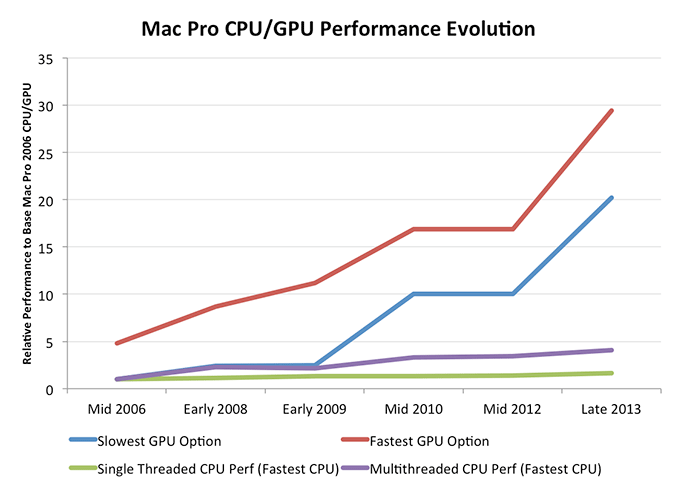

If you’re a GPU company (or a Senior GPU Editor), this next chart should make you very happy. Here I’m comparing relative increases in performance for both CPU and GPU on the same graph:

This is exactly why Apple (and AMD) are so fond of ramping up GPU performance: it’s the only way to get serious performance gains each generation. Ultimately we’ll see GPU performance gains level off as well, but if you want to scale compute in a serious way you need to heavily leverage faster GPUs.

This is the crux of the Mac Pro story. It’s not just about a faster CPU, but rather a true shift towards GPU compute. In a little over a year, Apple increased the GPU horsepower of the cheapest Mac Pro by as large a margin as it did from 2006 to 2012. The fastest GPU option didn’t improve by quite as much, but it’s close.

Looking at the same data on a log scale you’ll see that the percentage increase in GPU performance is slowing down over time, much like what we saw with CPUs, just to a much lesser extent. Note that this graph doesn't take into account that the Late 2013 Mac Pro has a second GPU. If we take that into account, GPU performance scaling obviously looks even better. Scaling silicon performance is tough regardless of what space you’re playing in these days. If you’re looking for big performance gains though, you’ll need to exploit the GPU.
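On a log-scale chart, a constant percentage gain per year plots as a straight line, so the slope is what matters. A rough sketch of the average annual rate implied by the totals quoted above, assuming the text's rounded figures (20x and 6x) and a flat 7-year span (Mid 2006 to Late 2013 is actually slightly more than 7 years); `annualized_growth` is a hypothetical helper:

```python
def annualized_growth(total_gain: float, years: float) -> float:
    """Average compound growth per year implied by a total performance multiple.

    Hypothetical helper: solves (1 + r) ** years == total_gain for r.
    """
    return total_gain ** (1.0 / years) - 1.0


# Rounded single-GPU figures from the text; 7 years approximates Mid 2006 -> Late 2013.
for label, gain in (("slowest option", 20.0), ("fastest option", 6.0)):
    rate = annualized_growth(gain, 7.0)
    print(f"{label}: {gain:.0f}x over 7 years ~ {rate:.0%} per year")
```

With these inputs the averages work out to roughly 53% per year for the slowest option and 29% for the fastest. A flattening slope on the chart means the more recent years fall below such an average.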

The similarities between what I’m saying here about GPU performance and AMD’s mantra over the past few years aren’t lost on me. The key difference between Apple’s approach and that of every GPU company is that Apple spends handsomely to ensure it has close to the best single-threaded CPU performance as well as the best GPU performance. This is an important distinction, and ultimately the right approach.

267 Comments


frelledstl - Tuesday, December 31, 2013

"I have to admit that I've been petting it regularly ever since. It's really awesomely smooth. It's actually the first desktop in a very long time that I want very close to me."

You lost me here dude. Scary...

lilo777 - Tuesday, December 31, 2013

It's just AT delivering main Apple talking points. After all, small size is the only [questionable] benefit of the MP. How else can they justify skimping on GPU power, no expandability, etc.?

darkcrayon - Wednesday, January 1, 2014

Ahh yes, a willfully ignorant troll on any forum. "No expandability"...

akdj - Wednesday, January 1, 2014

Lol...you're baaaack! To spew more bullshit? Expandability skimping? Developing Thunderbolt hand in hand with Intel, decreasing the weight from 70 to 10 pounds yet blowing the doors off its predecessor with its 'skimpy' GPU offerings....hmmm, I'll take two. Sorry you've no need for the machine. Many who do will easily save time.....which in turn allows the computer to make more money....which allows it to pay for itself. Engineering art AND function AND the balls to pull it off.

Are you still missing your Sound Blaster? Your serial and parallel connections?

I'd like to think LILO has a life....but it's pretty much a given, ANY Apple story, review, even objective measurements Anand and team provide, LILO will be here....fast as he can. Quarterbacking from mom's basement with his Pentium 4 and Voodoo3DFX....feed the spider dude. Get out. Get some air. Then learn about computers. It wouldn't waste as much 'comment' space. You're obviously in need of an xbox...not a workstation with MORE Expandability than any other computer on the market and weighing a bit more than Dell and HP's 'workstation' laptops. Wow. Just. Wow. Hopefully some day anonymity will be taken away in these comment sections. Would make it nice to know some folks would just find a different place to troll.

bji - Thursday, January 2, 2014

That's a lot of hate for something so insignificant.

Dennis Travis - Thursday, January 2, 2014

He probably uses an old AdLib card and not even a Sound Blaster! :D Grin, it's been so long I actually had to google to remember the name AdLib!

KVFinn - Tuesday, December 31, 2013

People have been avoiding CrossFire AMD chips for a while because of frame pacing issues (high frame rates, but with stutters and frames out of sequence, so it looks worse overall). AMD recently fixed this, but only in the 290 model. Do the D700s suffer from this issue in Windows gaming?

Ryan Smith - Tuesday, December 31, 2013

Yes. D700s are Tahiti-based and as such have all the same limitations as the 7970/280X parts, where it has yet to be fixed for Eyefinity configurations (including tiled monitors).

mesahusa - Thursday, January 9, 2014

Why in hell would you even ask such a stupid question? It's pretty obvious that this is for video editors and movie makers, not gamers -_-

solipsism - Tuesday, December 31, 2013

Where is the Single Threaded Performance for the first graph on page 2?