The AMD Radeon R9 290 Review

by Ryan Smith on November 5, 2013 12:01 AM EST - Posted in GPUs, AMD, Radeon, Hawaii, Radeon 200

Metro: Last Light

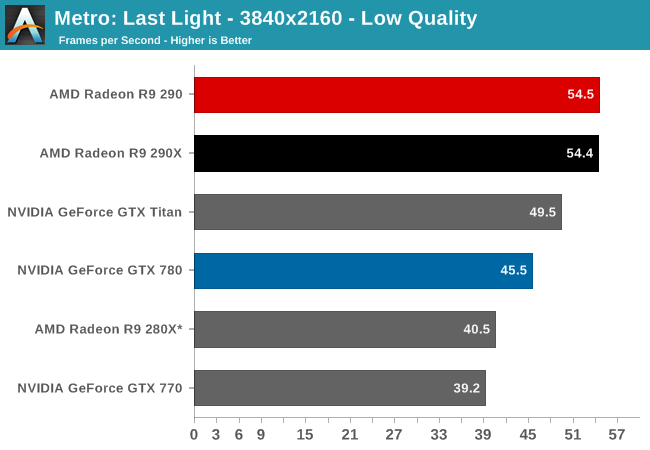

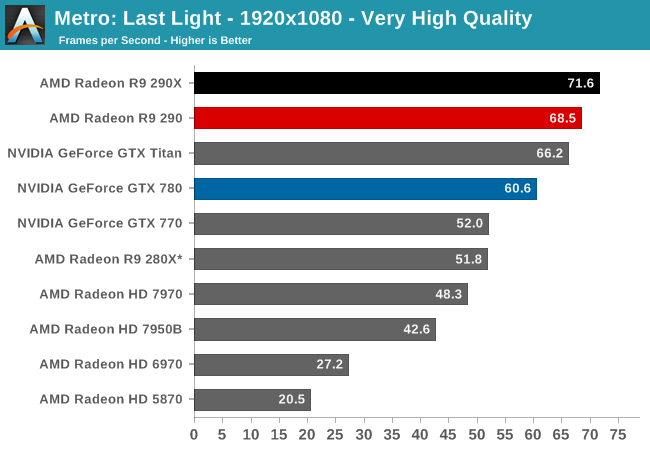

As always, kicking off our look at performance is 4A Games’ latest entry in their Metro series of subterranean shooters, Metro: Last Light. The original Metro 2033 was a graphically punishing game for its time, and Metro: Last Light is just as punishing in its own right. On the other hand, it scales well with resolution and quality settings, so it’s still playable on lower-end hardware.

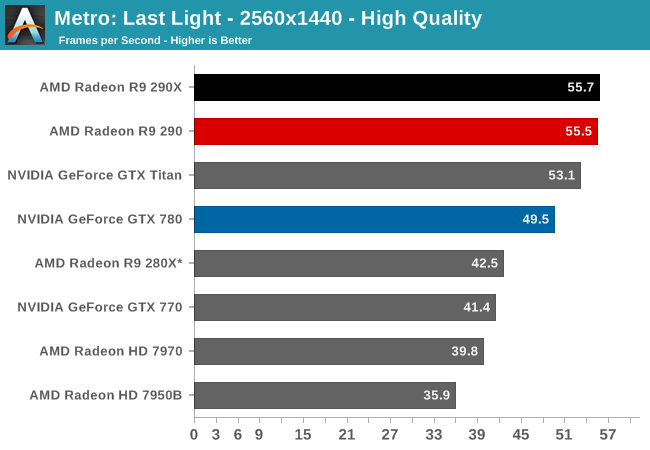

For the bulk of our analysis we’re going to be focusing on our 2560x1440 results, as monitors at this resolution are what we expect the 290 to be primarily used with. A single 290 may have the horsepower to drive 4K in at least some situations, but given the current cost of 4K monitors, that’s going to be a very different usage scenario. The significant quality tradeoff required to make 4K playable on a single card means that it makes far more sense to double up on GPUs, given that even a pair of 290Xs would still cost a fraction of what a 4K, 60Hz monitor does.

With that said, there are a couple of things that should be immediately obvious when looking at the performance of the 290.

- It’s incredibly fast for the price.

- Its performance is at times extremely close to that of the 290X.

To get right to the point, because of AMD’s fan speed modification, the 290 doesn’t throttle in any of our games, not even Metro or Crysis 3. The 290X, in comparison, sees significant throttling in both of those games, and as a result, once fully warmed up, the 290X operates at clockspeeds well below its 1000MHz boost clock, and even below the 290’s 947MHz boost clock. So rather than having the 5% clockspeed deficit that the official specs for these cards would indicate, the 290 for all intents and purposes clocks higher than the 290X. That clockspeed advantage offsets some of the shader/texturing performance lost to the CU reduction, while also running the 290’s identically configured front-end and back-end at a higher clockspeed than the 290X’s. In practice this means that the 290 has over 100% of the 290X’s ROP/geometry performance, 100% of its memory bandwidth, and at least 91% of its shading performance.
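The clockspeed argument above is essentially throughput arithmetic: a block’s throughput scales roughly with its unit count times its clockspeed. The following is a minimal Python sketch under that simplifying assumption (it ignores memory limits and other bottlenecks). It assumes the 290’s 40 CUs versus the 290X’s 44 (40/44 is where the 91% floor comes from) and 64 ROPs on both cards. The 900MHz "throttled" 290X clock is a hypothetical value for illustration, not a measurement from this review.

```python
# Simplified model (an assumption): throughput ~ (number of units) x (clock),
# so the ratio between two cards is unit-count ratio times clock ratio.

R9_290_CUS = 40   # 290: 4 of Hawaii's 44 CUs disabled
R9_290X_CUS = 44  # 290X: full Hawaii
ROPS = 64         # both cards share the same ROP count


def relative_throughput(units_a: int, clock_a_mhz: float,
                        units_b: int, clock_b_mhz: float) -> float:
    """Throughput of card A as a fraction of card B (units x clock)."""
    return (units_a * clock_a_mhz) / (units_b * clock_b_mhz)


def shader_ratio(clock_290: float, clock_290x: float) -> float:
    """290 shading throughput relative to the 290X."""
    return relative_throughput(R9_290_CUS, clock_290, R9_290X_CUS, clock_290x)


def rop_ratio(clock_290: float, clock_290x: float) -> float:
    """290 ROP throughput relative to the 290X (same ROP count, so clock ratio)."""
    return relative_throughput(ROPS, clock_290, ROPS, clock_290x)


if __name__ == "__main__":
    # On paper, at the official boost clocks (947MHz vs 1000MHz).
    print(f"spec shader ratio:        {shader_ratio(947, 1000):.3f}")
    # At equal clocks: the floor once the 290 clocks at least as high as the 290X.
    print(f"equal-clock shader ratio: {shader_ratio(947, 947):.3f}")
    # Hypothetical throttled 290X clock, for illustration only.
    throttled_290x = 900
    print(f"ROP ratio vs throttled:   {rop_ratio(947, throttled_290x):.3f}")
    print(f"shader ratio vs throttled:{shader_ratio(947, throttled_290x):.3f}")
```

Once the 290 holds its boost clock while the 290X throttles below it, the ROP ratio rises above 1.0 and the shader ratio can only sit at or above 40/44.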

So in games where we’re not significantly shader bound, and Metro at 2560 appears to be one such case, the 290 can trade blows with the 290X despite its inherent disadvantage. Now, as we’ll see, this is not going to be the case in every game, as not every game is GPU bound in the same manner and not every game throttles the 290X to the same degree, but it sets up a very interesting performance scenario. By pushing the 290 this hard, and by throwing any noise considerations out the window, AMD has created a card that can threaten not only the GTX 780 but the 290X too. As we’ll see by the end of our benchmarks, the 290 trails the 290X by an average of only 3% at 2560x1440.

Anyhow, looking at Metro, it’s a very strong start for the 290. At 55.5fps it’s essentially tied with the 290X and 12% ahead of the GTX 780. Or to make a comparison against the cards it’s actually priced closer to, the 290 is 34% faster than the GTX 770 and 31% faster than the 280X. AMD’s advantage will come crashing down once we revisit the power and noise aspects of the card, but in terms of raw performance, things look very good for the 290.
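Percentage leads like these are ratios of framerates, so the rival card’s framerate can be backed out from the 290’s 55.5fps. A small Python sketch, assuming "X% faster" means fps_a / fps_b - 1; because the quoted percentages are rounded, the implied framerates are approximations, not measured results.

```python
def percent_faster(fps_a: float, fps_b: float) -> float:
    """How much faster card A is than card B, in percent."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_baseline(fps_a: float, pct_faster: float) -> float:
    """Back out card B's framerate from card A's fps and a quoted lead."""
    return fps_a / (1.0 + pct_faster / 100.0)


if __name__ == "__main__":
    fps_290 = 55.5  # R9 290 in Metro: Last Light at 2560x1440 (from the text)
    # Leads quoted in the text; outputs are approximate due to rounding.
    for name, lead in [("GTX 780", 12), ("GTX 770", 34), ("280X", 31)]:
        baseline = implied_baseline(fps_290, lead)
        print(f"{name}: ~{baseline:.1f}fps implied by a {lead}% lead")
```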

295 Comments


doggghouse - Wednesday, November 6, 2013

So at 60dB, it's as loud as someone talking next to you. In other words, you would have some difficulty hearing another person over the sound of the GPU fan. I would say that's pretty loud. I think the 290 and 290X have a lot of potential, but with the stock cooling I would stay away from them.

Vorl - Wednesday, November 6, 2013

I don't remember the exact noise test, but I thought the measurement was taken right next to the card, at the fan... so if you add distance and a case around the card, it will not be nearly as loud as that.

ThomasS31 - Wednesday, November 6, 2013

The R9 290 series is AMD's worst launch ever... this was a chance to show how professional they are and gain market share with a great opportunity and product... and they screwed it up and failed. And this is not the first time they have failed to monetize their products. So maybe there should be some personal consequences and changes.

I hope the new hires (leaders) change this, and that this is the last time we see great products hindered by bad execution.

After the 7990 cooler and what nVidia did, I thought they had learned their lesson... but no.

And now, as I hear, they are not allowing custom coolers and/or are limiting manufacturers in their use of the best coolers/designs they could make... as if competition is bad for the market, or what???

Mondozai - Friday, December 13, 2013

lol

martixy - Wednesday, November 6, 2013

So the takeaway is: There's a new king in town, and its name is AMD.

For now...

+You get to be Mantle-proof.

What I wanna see now is Mantle on an nV card and g-sync on an AMD card.

nevertell - Wednesday, November 6, 2013

Why is it that when a ridiculously loud nvidia card gets released, people go crazy about the heat and noise it generates, but when AMD does the same, they only focus on the performance? I do understand that the price and performance of these cards is pretty groundbreaking, but then again, AMD used to release some nasty adverts about people using Fermi cards. And Fermi cards were not even this loud.

And considering that the testbed here was probably properly ventilated and designed for hot and fast cards, I believe there is a significant portion of the market that will buy the card, stick it into a small, badly ventilated, or just crammed case, and call it a day. And those people will not get the performance advertised here, as their cards will probably throttle a lot more.

But I do love how the roles are switching: only 3 generations ago, AMD's and Nvidia's positions were exactly the opposite, at least in the power, noise, and especially power efficiency departments.

We still have to wait for the 780 Ti, but seeing as Titan is already getting a run for its money...

Death666Angel - Wednesday, November 6, 2013

I don't care one bit about the loudness of a card. Anything I own has to be watercooled, and the custom water cooling block costs the same whether the card is from nVidia or AMD. So this card is a win on all fronts. Unless you buy the reference design and don't switch to WC, I don't think you'll be disappointed. It's really hard to read 5 paragraphs on noise when that is the least of anyone's concerns when buying a video card. People who are concerned with it have custom cooling, which is the same for most cards (nVidia or AMD), since most cards are the same. Or they don't care, since their other components are louder and/or they use good headphones. So I think knocking the 290 for loudness is a bit petty. :)

Achaios - Wednesday, November 6, 2013

Can't wait for Gigabyte's R9 290 SOC (Super Overclock) with 3X Windforce Cooler. I drool at the thought.

ecuador - Wednesday, November 6, 2013

Ryan, it is interesting to contrast this review with Anand's review of the FX 5800. You sound much more damning about a card that is much cheaper and faster than the competition at 9.7dB louder than Anand was about a card that was slower and 13dB louder than the competition back then! OK, it is not for everyone until it gets custom coolers, but it sure gives you a lot for that tradeoff. The mystery is why AMD does not make a cooler that is worth a damn!

swing848 - Wednesday, November 6, 2013

Anand, most people who read your reviews know you are a GeForce fanboy. And the last page of your "review" tells people not to purchase the R9 290.

In fact, many people purchase this card BECAUSE THEY WANT IT. Let the buyer decide what he or she wants in price and performance, and stop poking AMD in the eye with your GeForce stick. If anything, give people advice on how to keep temperatures under control with the least noise possible; but no, you have to get on your GeForce box and pound AMD... again. How much do they pay you or your company?

The R9 290 is a great card, and after reading several reviews, I know it.