The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

XDMA: Improving Crossfire

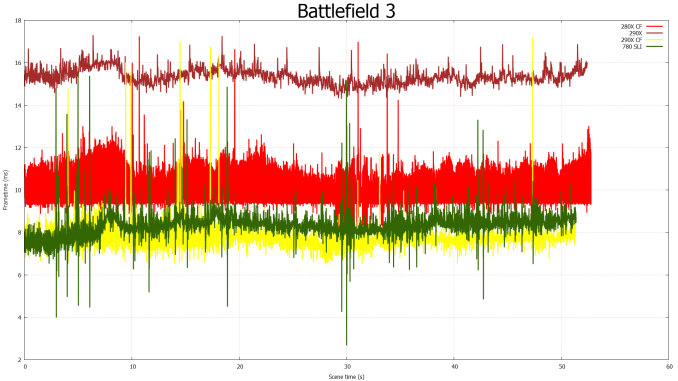

Over the past year or so a lot of noise has been made over AMD’s Crossfire scaling capabilities, and for good reason. With the evolution of frame capture tools such as FCAT it finally became possible to easily and objectively measure frame delivery patterns. The results of course weren’t pretty for AMD, showcasing that Crossfire may have been generating plenty of frames, but in most cases it was doing a very poor job of delivering them.

AMD for their part committed to fixing the situation and began rolling out improvements in multiple phases. Phase 1, deployed in August, saw a revised Crossfire frame pacing scheme implemented for single monitor resolutions (2560x1600 and below), which generally resolved AMD’s frame pacing in those scenarios. Phase 2, which is scheduled for next month, will address multi-monitor and high resolution scaling, which faces a different set of problems and requires a different set of fixes than what went into phase 1.

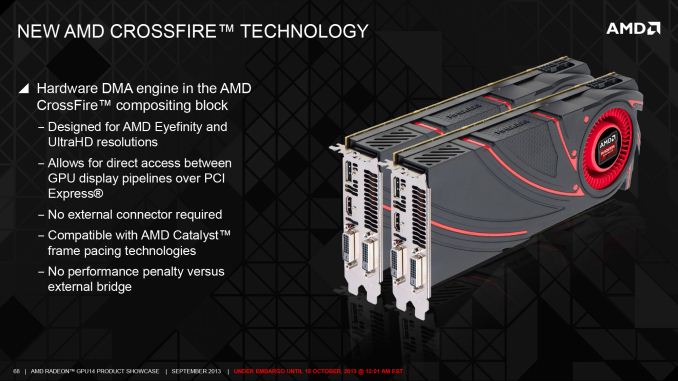

The fact that there’s even a phase 2 brings us to our next topic of discussion, which is a new hardware DMA engine in GCN 1.1 parts called XDMA. Being first utilized on Hawaii, XDMA is the final solution to AMD’s frame pacing woes, and in doing so it is redefining how Crossfire is implemented on 290X and future cards. Specifically, AMD is forgoing the Crossfire Bridge Interconnect (CFBI) entirely and moving all inter-GPU communication over the PCIe bus, with XDMA being the hardware engine that makes this both practical and efficient.

But before we get too far ahead of ourselves, it would be best to put the current Crossfire situation in context before discussing how XDMA deviates from it.

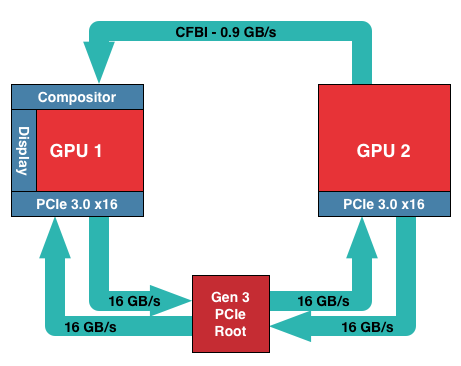

In AMD’s current CFBI implementation, which itself dates back to the X1900 generation, a CFBI link directly connects two GPUs and has 900MB/sec of bandwidth. In this setup the purpose of the CFBI link is to transfer completed frames to the master GPU for display purposes, and to do so in a direct GPU-to-GPU manner to complete the job as quickly and efficiently as possible.

For single monitor configurations and today’s common resolutions the CFBI excels at its task. AMD’s software frame pacing algorithms aside, the CFBI has enough bandwidth to pass around complete 2560x1600 frames at over 60Hz, allowing the CFBI to handle the scenarios laid out in AMD’s phase 1 frame pacing fix.

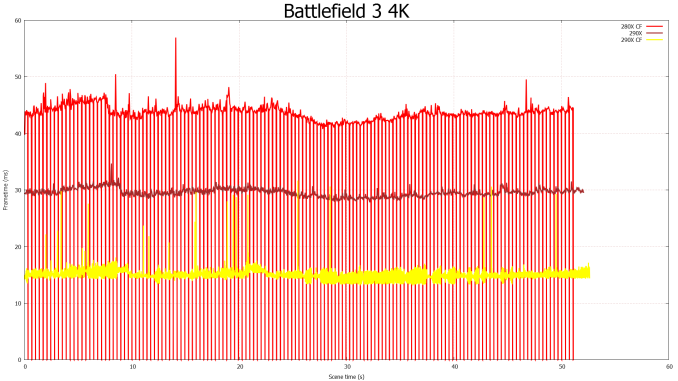

The issue with the CFBI is that while it’s an efficient GPU-to-GPU link, it hasn’t been updated to keep up with the greater bandwidth demands generated by Eyefinity, and more recently 4K monitors. For a 3x1080p setup frames are now just shy of 20MB/each, and for a 4K setup frames are larger still at almost 24MB/each. With frames this large CFBI doesn’t have enough bandwidth to transfer them at high framerates – realistically you’d top out at 30Hz or so for 4K – requiring that AMD go over the PCIe bus for their existing cards.
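To make the bandwidth arithmetic concrete, here is a minimal sketch of the calculation. It assumes uncompressed frames at 3 bytes (24 bits) per pixel, which is an assumption on our part rather than a figure from AMD, though it lands close to the frame sizes quoted above. The function names are purely illustrative.

```python
# Assumption: frames are uncompressed at 3 bytes (24 bits) per pixel.
BYTES_PER_PIXEL = 3
# 900MB/sec is the CFBI bandwidth quoted in the article.
CFBI_BYTES_PER_SEC = 900e6

RESOLUTIONS = {
    "2560x1600": (2560, 1600),
    "3x1080p Eyefinity": (5760, 1080),
    "4K": (3840, 2160),
}


def frame_bytes(width, height, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of one complete frame in bytes."""
    return width * height * bytes_per_pixel


def max_frames_per_sec(width, height, link_bytes_per_sec=CFBI_BYTES_PER_SEC):
    """Upper bound on frames per second the link could carry.

    Ignores protocol overhead and contention, so real rates will be lower.
    """
    return link_bytes_per_sec / frame_bytes(width, height)


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        size_mb = frame_bytes(w, h) / 1e6
        print(f"{name}: {size_mb:.1f}MB per frame, "
              f"at most {max_frames_per_sec(w, h):.0f} frames/sec over CFBI")
```

Under these assumptions 2560x1600 stays above 60 frames per second, while 4K has a theoretical ceiling in the mid 30s before any overhead is counted, which is consistent with the "30Hz or so" estimate.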

Going over the PCIe bus is not in and of itself a problem, but pre-GCN 1.1 hardware lacks any specialized hardware to help with the task. Without an efficient way to move frames, and specifically a way to DMA transfer frames directly between the cards without involving CPU time, AMD has to resort to much uglier methods of moving frames between the cards, which are in part responsible for the poor frame pacing we see today on Eyefinity/4K setups.

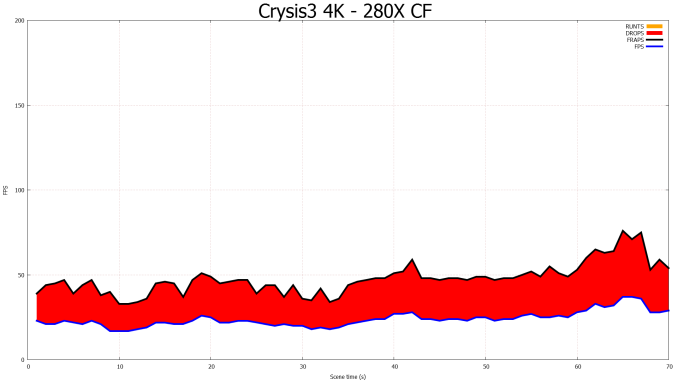

CFBI Crossfire At 4K: Still Dropping Frames

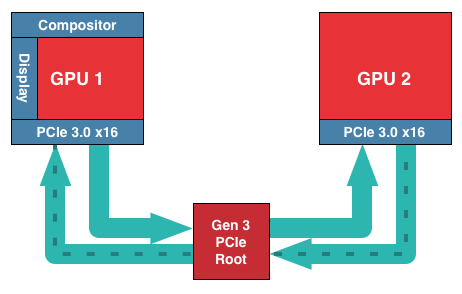

For GCN 1.1 and Hawaii in particular, AMD has chosen to solve this problem by continuing to use the PCIe bus, but by doing so with hardware dedicated to the task. Dubbed the XDMA engine, the purpose of this hardware is to allow CPU-free DMA based frame transfers between the GPUs, thereby allowing AMD to transfer frames over the PCIe bus without the ugliness and performance costs of doing so on pre-GCN 1.1 cards.

With that in mind, the specific role of the XDMA engine is relatively simple. Located within the display controller block (the final destination for all completed frames) the XDMA engine allows the display controllers within each Hawaii GPU to directly talk to each other and their associated memory ranges, bypassing the CPU and large chunks of the GPU entirely. Within that context the purpose of the XDMA engine is to be a dedicated DMA engine for the display controllers and nothing more. Frame transfers and frame presentations are still directed by the display controllers as before – which in turn are directed by the algorithms loaded up by AMD’s drivers – so the XDMA engine is not strictly speaking a standalone device, nor is it a hardware frame pacing device (which is something of a misnomer anyhow). Meanwhile this setup also allows AMD to implement their existing Crossfire frame pacing algorithms on the new hardware rather than starting from scratch, and of course to continue iterating on those algorithms as time goes on.

Of course by relying solely on the PCIe bus to transfer frames there are tradeoffs to be made, both for the better and for the worse. The benefits are the vast increase in bandwidth (PCIe 3.0 x16 has 16GB/sec available versus 0.9GB/sec for CFBI), not to mention allowing Crossfire to be implemented without those pesky Crossfire bridges. The downside to relying on the PCIe bus is that it’s not a dedicated, point-to-point connection between GPUs, and for that reason there will be bandwidth contention, and the latency for using the PCIe bus will be higher than the CFBI. How much worse depends on the configuration; PCIe bridge chips for example can both improve and worsen latency depending on where in the chain the bridges and the GPUs are located, not to mention the generation and width of the PCIe link. But, as AMD tells us, any latency can be overcome by measuring it and thereby planning frame transfers around it to take the impact of latency into account.
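As a rough illustration of what "planning frame transfers around latency" could look like, the sketch below works out the latest moment a transfer can begin and still arrive by a display deadline. This is not AMD's algorithm, which has not been disclosed. The function names, the worst-case latency policy, and the sample values are all hypothetical, and the 4K frame size assumes 3 bytes per pixel.

```python
# Link figures quoted in the article.
PCIE3_X16_BYTES_PER_SEC = 16e9
CFBI_BYTES_PER_SEC = 0.9e9

# Assumption: a 3840x2160 frame at 3 bytes per pixel.
FRAME_4K_BYTES = 3840 * 2160 * 3


def transfer_seconds(frame_size_bytes, link_bytes_per_sec, latency_sec=0.0):
    """Time for one frame to cross the link: fixed latency plus wire time."""
    return latency_sec + frame_size_bytes / link_bytes_per_sec


def latest_start(deadline_sec, frame_size_bytes, link_bytes_per_sec,
                 latency_samples_sec):
    """Latest time a transfer can begin and still land by deadline_sec.

    Hypothetical policy: budget for the worst latency measured so far.
    """
    budget = max(latency_samples_sec)
    return deadline_sec - transfer_seconds(
        frame_size_bytes, link_bytes_per_sec, budget)


if __name__ == "__main__":
    # Made-up latency measurements, in seconds, purely for illustration.
    samples = [0.0002, 0.0005]
    start = latest_start(0.020, FRAME_4K_BYTES,
                         PCIE3_X16_BYTES_PER_SEC, samples)
    print(f"Start the transfer no later than t={start * 1000:.3f}ms")
```

The point of the sketch is that a higher but measurable latency only shifts when a transfer must begin. It does not by itself limit how many frames per second the link can deliver.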

Ultimately AMD’s goal with the XDMA engine is to make PCIe based Crossfire just as efficient, performant, and compatible as CFBI based Crossfire, and despite the initial concerns we had over the use of the PCIe bus, based on our test results AMD appears to have delivered on their promises.

The XDMA engine alone can’t eliminate the variation in frame times, but in its first implementation it’s already as good as CFBI in single monitor setups, and being free of the Eyefinity/4K frame pacing issues that still plague CFBI, is nothing short of a massive improvement over CFBI in those scenarios. True to their promises, AMD has delivered a PCIe based Crossfire implementation that incurs no performance penalty versus CFBI, and on the whole fully and sufficiently resolves AMD’s outstanding frame pacing issues. The downside of course is that XDMA won’t help the 280X or other pre-GCN 1.1 cards, but at the very least going forward AMD finally has demonstrated that they have frame pacing fully under control.

On a side note, looking at our results it’s interesting to see that despite the general reuse of frame pacing algorithms, the XDMA Crossfire implementation doesn’t exhibit any of the distinct frame time plateaus that the CFBI implementation does. The plateaus were more an interesting artifact than a problem, but it does mean that AMD’s XDMA Crossfire implementation is much more “organic” like NVIDIA’s, rather than strictly enforcing a minimum frame time as appeared to be the case with CFBI.
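To show why strictly enforcing a minimum frame time would produce plateaus, here is a toy model. It is only a sketch of the general idea and not AMD's actual pacing algorithm. The function names and the sample timestamps are made up for illustration.

```python
def pace_with_floor(ready_times_ms, min_frame_time_ms):
    """Delay each frame so it is shown no sooner than min_frame_time_ms
    after the previous one. Frames that are already late are not moved."""
    presented = []
    for ready in ready_times_ms:
        if presented:
            ready = max(ready, presented[-1] + min_frame_time_ms)
        presented.append(ready)
    return presented


def frame_times(timestamps_ms):
    """Intervals between consecutive presentation timestamps."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]


if __name__ == "__main__":
    # Made-up example: frames finish in uneven pairs.
    ready = [0, 2, 20, 22, 40, 42]
    print("unpaced frame times:", frame_times(ready))
    print("paced frame times:  ", frame_times(pace_with_floor(ready, 8)))
```

In this model every frame that would have arrived too early is pushed out to exactly the floor value, so a frame time plot shows a flat line at that value. That is one way the plateaus described above could arise.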

396 Comments

46andtool - Thursday, October 24, 2013 - link

I dont know where your getting your information but your obviously nvidia biased because its all wrong. AMD is known for using poor reference coolers, once manufactures like sapphire and HIS roll out there cards in a couple weeks Im sure the noise and heat wont be a problem. and the 780ti is poised to be between a 780gtx and a titan, it will not be faster than a 290x, sorry. We already have the 780ti's specs..what Nvidia needs to focus on is dropping its insane pricing.

SolMiester - Monday, October 28, 2013 - link

Sorry bud, but the Ti will be much faster than Titan, otherwise there is no point, hell even the 780OC is enough to edge the Titan. Why are people going on about Titan, its a once in a blue moon product to fill a void that AMD left open with CUDA dev for prosumers...Full monty with perhaps 7ghz memory, wahey!

Samus - Friday, October 25, 2013 - link

What in the world makes you think the 780Ti will be faster than Titan? That's ridiculous. What's next, a statement that the 760Ti will be faster than the 770?

TheJian - Friday, October 25, 2013 - link

http://www.techradar.com/us/news/computing-compone...

Another shader and more mhz.

http://news.softpedia.com/news/NVIDIA-GeForce-GTX-...

If the specs are true quite a few sites think it will be faster than titan.

http://hexus.net/tech/news/graphics/61445-nvidia-g...

Check the table. 780TI would win in gflops if leak is true. The extra 80mhz+1SMX mean it should either tie or barely beat it in nearly everything.

Even a tie at $650 would be quite awesome at less watts/heat/noise possibly. Of course it will be beat a week later buy a fully unlocked titan ultra or more mhz, or mhz+fully unlocked. NV won't just drop titan. They will make a better one easily. It's not like NV just sat on their butts for the last 8 months. It's comic anyone thinks AMD has won. OK, for a few weeks tops (and not really even now other than price looking at 1080p and the games I mentioned previously).

ShieTar - Thursday, October 24, 2013 - link

It doesn't cost less than a GTX780, it only has a lower MSRP. The actual price for which you can buy a GTX780 is already below 549$ today, so as usual you pay the same price for the same performance with both companies.

And testing 4K gaming is important right now, but it should be another 3-5 years before 4K performance actually impacts sales figures in any relevant way.

And about Titan? Keep in mind that it is 8 months old, still has one SMX disabled (unlike the Quadro K6000), and still uses less power in games than the 290X. So I wouldn't be surprised to see a Titan+ come out soon, with 15 SMX and higher base clocks, and as Ryan puts it in this article "building a refined GPU against a much more mature 28nm process". That should be enough to gain 10%-15% performance in anything restricted by computing power, thus showing a much more clear lead over the 290X.

The only games that the 290X will clearly win are those that are restricted by memory bandwidth. But nVidia have proven with the 770 that they can operate memory at 7GHz as well, so they can increase Titans bandwidth by 16% through clocks alone.

Don't get me wrong, the 290X looks like a very nice card, with a very good price to it. I just don't think nVidia has any reason to worry, this is just competition as usual, AMD have made their move, nVidia will follow.

Drumsticks - Thursday, October 24, 2013 - link

http://www.newegg.com/Product/ProductList.aspx?Sub...

Searched on Newegg, sorted by lowest price, lowest one was surprise! $650. I don't think Newegg is over $100 off in their pricing with competitors.

46andtool - Thursday, October 24, 2013 - link

http://www.newegg.com/Product/Product.aspx?Item=N8...

your clearly horrible at searching

TheJian - Friday, October 25, 2013 - link

$580 isn't $550 though right? And out of stock. I wonder how many of these they can actually make seeing how hot it is already in every review pegged 94c. Nobody was able to OC it past 1125. They're clearly pushing this thing a lot already.

ShieTar - Friday, October 25, 2013 - link

Well, color me surprised. I admittedly didn't check the US market, because for more than a decade now, electronics used to be sold in the Euro-Region with a price conversion assumption of 1€=1$, so everything was about 35% more expensive over here (but including 19% VAT of course).

So for this discussion I used our German comparison engines. Both the GTX780 and the R290X are sold for the same price of just over 500€ over here, which is basically 560$+19%VAT. I figured the same price policies would apply in the US, it basically always does.

Well, as international shipping is rarely more that 15$, it would seem like your cheapest way to get a 780 right now is to actually import it from Germany. Its always been the other way around with electronics, interesting to see it the other way around for once.

46andtool - Thursday, October 24, 2013 - link

the price of a 780gtx is not below $649 unless you are talking about a refurbished or open box card.