The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

XDMA: Improving Crossfire

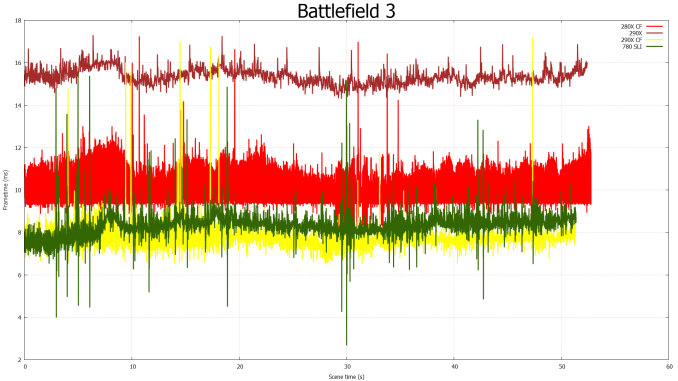

Over the past year or so a lot of noise has been made over AMD’s Crossfire scaling capabilities, and for good reason. With the evolution of frame capture tools such as FCAT it finally became possible to easily and objectively measure frame delivery patterns. The results of course weren’t pretty for AMD, showcasing that Crossfire may have been generating plenty of frames, but in most cases it was doing a very poor job of delivering them.

AMD for their part doubled down on the situation and began rolling out improvements in a plan that would see Crossfire improved in multiple phases. Phase 1, deployed in August, saw a revised Crossfire frame pacing scheme implemented for single monitor resolutions (2560x1600 and below) which generally resolved AMD’s frame pacing in those scenarios. Phase 2, which is scheduled for next month, will address multi-monitor and high resolution scaling, which faces a different set of problems and requires a different set of fixes than what went into phase 1.

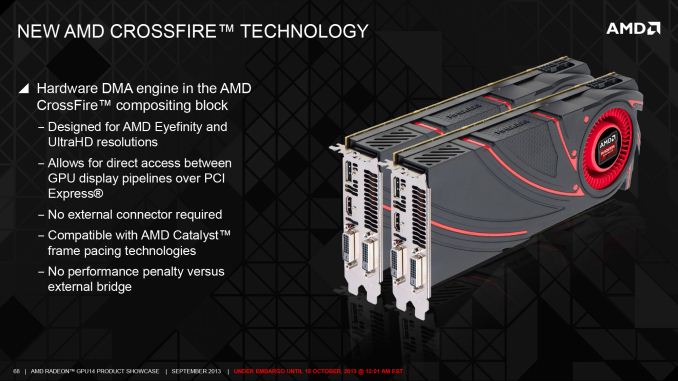

The fact that there’s even a phase 2 brings us to our next topic of discussion, which is a new hardware DMA engine in GCN 1.1 parts called XDMA. Being first utilized on Hawaii, XDMA is the final solution to AMD’s frame pacing woes, and in doing so it is redefining how Crossfire is implemented on 290X and future cards. Specifically, AMD is forgoing the Crossfire Bridge Interconnect (CFBI) entirely and moving all inter-GPU communication over the PCIe bus, with XDMA being the hardware engine that makes this both practical and efficient.

But before we get too far ahead of ourselves, it would be best to put the current Crossfire situation in context before discussing how XDMA deviates from it.

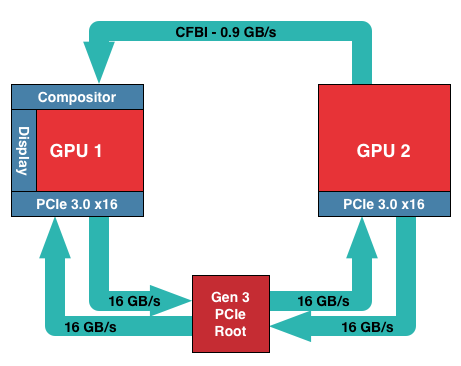

In AMD’s current CFBI implementation, which itself dates back to the X1900 generation, a CFBI link directly connects two GPUs and has 900MB/sec of bandwidth. In this setup the purpose of the CFBI link is to transfer completed frames to the master GPU for display purposes, and to do so in a direct GPU-to-GPU manner to complete the job as quickly and efficiently as possible.

For single monitor configurations and today’s common resolutions the CFBI excels at its task. AMD’s software frame pacing algorithms aside, the CFBI has enough bandwidth to pass around complete 2560x1600 frames at over 60Hz, allowing the CFBI to handle the scenarios laid out in AMD’s phase 1 frame pacing fix.

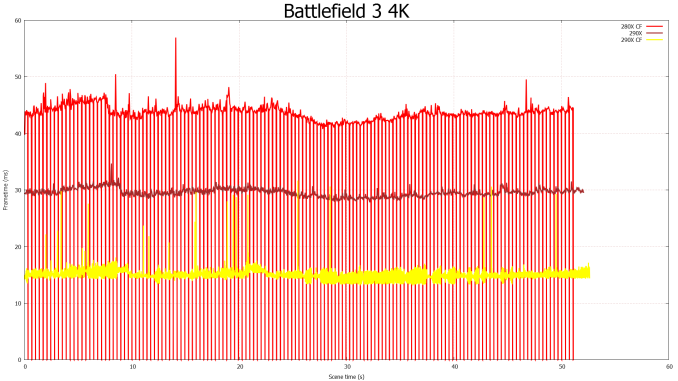

The issue with the CFBI is that while it’s an efficient GPU-to-GPU link, it hasn’t been updated to keep up with the greater bandwidth demands generated by Eyefinity, and more recently 4K monitors. For a 3x1080p setup frames are now just shy of 20MB/each, and for a 4K setup frames are larger still at almost 24MB/each. With frames this large CFBI doesn’t have enough bandwidth to transfer them at high framerates – realistically you’d top out at 30Hz or so for 4K – requiring that AMD go over the PCIe bus for their existing cards.
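The arithmetic behind these limits can be sketched in a few lines. This is a back-of-the-envelope estimate rather than anything AMD has published: it assumes uncompressed frames at 3 bytes per pixel (24-bit color) and that the full 900MB/sec is available for frame data, and the function names are purely illustrative.

```python
# Rough estimate of how many frames per second a link can carry.
# Assumptions (not AMD-confirmed): uncompressed frames, 3 bytes per pixel,
# and the link's full nominal bandwidth available for frame data.

BYTES_PER_PIXEL = 3          # assumed 24-bit color
CFBI_BYTES_PER_SEC = 900e6   # 900MB/sec, the CFBI figure quoted in the article


def frame_bytes(width, height, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def max_frame_rate(width, height, link_bytes_per_sec=CFBI_BYTES_PER_SEC):
    """Theoretical upper bound on frames per second the link can transfer."""
    return link_bytes_per_sec / frame_bytes(width, height)


if __name__ == "__main__":
    resolutions = {
        "2560x1600": (2560, 1600),
        "3x1080p": (5760, 1080),
        "4K": (3840, 2160),
    }
    for name, (w, h) in resolutions.items():
        size_mb = frame_bytes(w, h) / 1e6
        rate = max_frame_rate(w, h)
        print(f"{name}: {size_mb:.1f}MB per frame, at most {rate:.0f} frames/sec")
```

Under these assumptions a 2560x1600 frame fits through the link more than 60 times per second, while the theoretical ceiling for 4K lands in the mid-30s, which lines up with a realistic limit of 30Hz or so once protocol overhead is taken into account.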

Going over the PCIe bus is not inherently a problem, but pre-GCN 1.1 hardware lacks any specialized hardware to help with the task. Without an efficient way to move frames, and specifically a way to DMA transfer frames directly between the cards without involving CPU time, AMD has to resort to much uglier methods of moving frames between the cards, which are in part responsible for the poor frame pacing we see today on Eyefinity/4K setups.

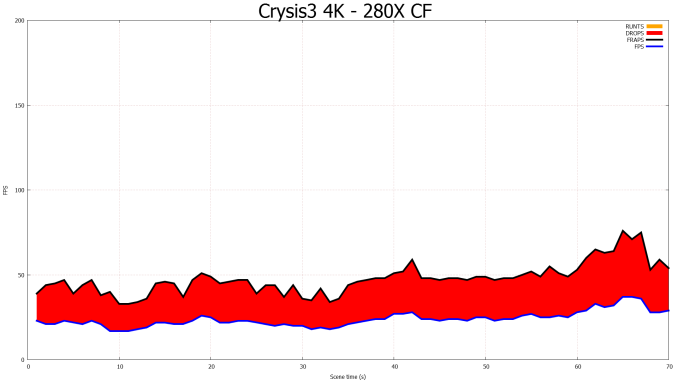

CFBI Crossfire At 4K: Still Dropping Frames

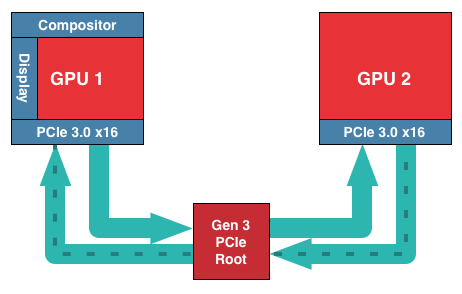

For GCN 1.1 and Hawaii in particular, AMD has chosen to solve this problem by continuing to use the PCIe bus, but by doing so with hardware dedicated to the task. Dubbed the XDMA engine, the purpose of this hardware is to allow CPU-free DMA based frame transfers between the GPUs, thereby allowing AMD to transfer frames over the PCIe bus without the ugliness and performance costs of doing so on pre-GCN 1.1 cards.

With that in mind, the specific role of the XDMA engine is relatively simple. Located within the display controller block (the final destination for all completed frames) the XDMA engine allows the display controllers within each Hawaii GPU to directly talk to each other and their associated memory ranges, bypassing the CPU and large chunks of the GPU entirely. Within that context the purpose of the XDMA engine is to be a dedicated DMA engine for the display controllers and nothing more. Frame transfers and frame presentations are still directed by the display controllers as before – which in turn are directed by the algorithms loaded up by AMD’s drivers – so the XDMA engine is not strictly speaking a standalone device, nor is it a hardware frame pacing device (which is something of a misnomer anyhow). Meanwhile this setup also allows AMD to implement their existing Crossfire frame pacing algorithms on the new hardware rather than starting from scratch, and of course to continue iterating on those algorithms as time goes on.

Of course by relying solely on the PCIe bus to transfer frames there are tradeoffs to be made, both for the better and for the worse. The benefits are of course the vast increase in bandwidth (PCIe 3.0 x16 has 16GB/sec available versus 0.9GB/sec for CFBI), not to mention allowing Crossfire to be implemented without those pesky Crossfire bridges. The downside to relying on the PCIe bus is that it’s not a dedicated, point-to-point connection between GPUs, and for that reason there will be bandwidth contention, and the latency for using the PCIe bus will be higher than the CFBI. How much worse depends on the configuration; PCIe bridge chips for example can both improve and worsen latency depending on where in the chain the bridges and the GPUs are located, not to mention the generation and width of the PCIe link. But, as AMD tells us, any latency can be overcome by measuring it and thereby planning frame transfers around it to take the impact of latency into account.
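To make the idea of planning around latency concrete, here is a minimal sketch. AMD has not described how its drivers actually schedule transfers, so everything below is an assumption for illustration: the function names are hypothetical, the latency value is a made-up measurement, and the model ignores bandwidth contention entirely.

```python
# Illustrative only: AMD has not published how its drivers schedule transfers.
# Idea: once link latency has been measured, start each frame transfer early
# enough that the frame arrives by its presentation deadline.

PCIE3_X16_BYTES_PER_SEC = 16e9  # 16GB/sec, the PCIe 3.0 x16 figure quoted in the article


def transfer_seconds(frame_size_bytes, latency_sec,
                     link_bytes_per_sec=PCIE3_X16_BYTES_PER_SEC):
    """Measured latency plus the time to move the frame at the nominal link rate."""
    return latency_sec + frame_size_bytes / link_bytes_per_sec


def latest_start(present_deadline_sec, frame_size_bytes, latency_sec,
                 link_bytes_per_sec=PCIE3_X16_BYTES_PER_SEC):
    """Latest time the transfer can begin and still meet the deadline."""
    return present_deadline_sec - transfer_seconds(
        frame_size_bytes, latency_sec, link_bytes_per_sec
    )


if __name__ == "__main__":
    frame_size = 3840 * 2160 * 3   # 4K frame, assuming 3 bytes per pixel
    measured_latency = 0.0005      # hypothetical measurement: 0.5 ms
    deadline = 1 / 60              # present at the next 60Hz refresh
    start = latest_start(deadline, frame_size, measured_latency)
    print(f"start transfer no later than {start * 1000:.2f} ms into the refresh interval")
```

The point of the sketch is only that a higher but known latency shifts when a transfer must begin; it does not by itself reduce how many frames the link can carry.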

Ultimately AMD’s goal with the XDMA engine is to make PCIe based Crossfire just as efficient, performant, and compatible as CFBI based Crossfire, and despite the initial concerns we had over the use of the PCIe bus, based on our test results AMD appears to have delivered on their promises.

The XDMA engine alone can’t eliminate the variation in frame times, but in its first implementation it’s already as good as CFBI in single monitor setups, and being free of the Eyefinity/4K frame pacing issues that still plague CFBI, is nothing short of a massive improvement over CFBI in those scenarios. True to their promises, AMD has delivered a PCIe based Crossfire implementation that incurs no performance penalty versus CFBI, and on the whole fully and sufficiently resolves AMD’s outstanding frame pacing issues. The downside of course is that XDMA won’t help the 280X or other pre-GCN 1.1 cards, but at the very least going forward AMD finally has demonstrated that they have frame pacing fully under control.

On a side note, looking at our results it’s interesting to see that despite the general reuse of frame pacing algorithms, the XDMA Crossfire implementation doesn’t exhibit any of the distinct frame time plateaus that the CFBI implementation does. The plateaus were more an interesting artifact than a problem, but it does mean that AMD’s XDMA Crossfire implementation is much more “organic” like NVIDIA’s, rather than strictly enforcing a minimum frame time as appeared to be the case with CFBI.

396 Comments


mr_tawan - Tuesday, November 5, 2013

AMD card may suffer from loud cooler. Let's just hope that the OEM versions would be shipped with quieter coolers.

1Angelreloaded - Monday, November 11, 2013

I have to be Honest here, it is beast, in fact the only thing in my mind holding this back is lack of feature sets compared to NVidia, namely PhysX, to me this is a bit of a deal breaker compared for 150$ more the 780 Ti gives me that with lower TDP/and sound profile, as we are only able to so much pull from 1 120W breaker without tripping it and modification for some people is a deal breaker due to wear they live and all. Honestly What I really need to see from a site is 4k gaming at max, 1600p/1200p/1080p benchmarks with single cards as well as SLI/Crossfire to see how they scale against each other. To be clear as well a benchmark using Skyrim Modded to the gills in texture resolutions as well to fully see how the VRAM might effect the cards in future games from this next Gen era, where the Consoles can manage a higher texture resolution natively now, and ultimately this will affect PC performance when the standard was 1-2k texture resolutions now becomes double to 4k or even in a select few up to 8k depth. With a native 64 bit architecture as well you will be able to draw more system RAM into the equation where Skyrim can use a max of 3.5 before it dies with Maxwell coming out and a shared memory pool with a single core microprocessor on the die itself with Gsync for smoothness we might see an over engineered GPU card capable of much much more than we thought, ATI as well has their own ideas which will progress, I have a large feeling Hawaii is actually a reject of sorts because they have to compete with Maxwell and engineer more into the cards themselves.

marceloviana - Monday, November 25, 2013

I Just wondering why does this card came with 32Gb gddr5 and see only 4Gb. The PCB show 16 Elpida EDW2032BBBG (2G each). This amount of memory will help a lot in large scenes wit Vray-RT.

Mat3 - Thursday, March 13, 2014

I don't get it. It's supposed to have 11 compute units per shader engine, making 44 on the entire chip. But the 2nd picture says each shader engine can only have up to 9 compute units....?

Mat3 - Thursday, March 13, 2014

2nd picture on page three I mean.

sanaris - Monday, April 14, 2014

Who cares? This card was never meant to compute something. It supposed to be "cheap but decent".

Initially they made this ridiculous price, but now it is around 200-350 at ebay.

For $200 it worth its price, because it can be used only to play games.

Who wants to play games at medium quality (not the future ones), may prefer it.