The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

Total War: Rome 2

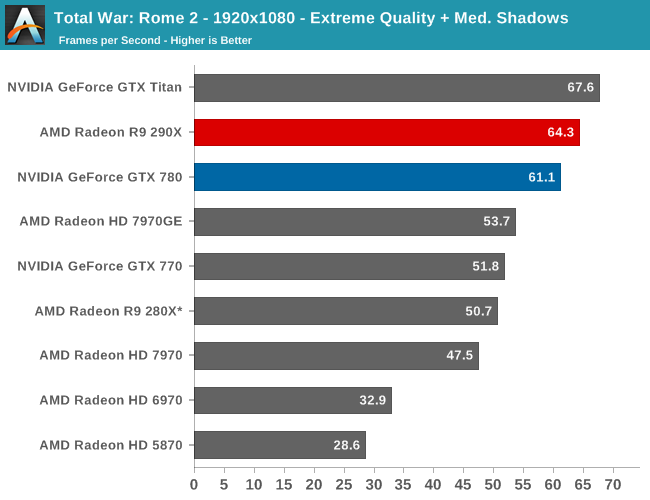

The second strategy game in our benchmark suite, Total War: Rome 2 is the latest game in the Total War franchise. Total War games have traditionally been a mix of CPU and GPU bottlenecks, so it takes a good system on both ends of the equation to do well here. In this case the game comes with a built-in benchmark that plays out over a forested area with a large number of units, definitely stressing the GPU in particular.

For this game in particular we’ve also gone and turned down the shadows to medium. Rome’s shadows are extremely CPU intensive (as opposed to GPU intensive), so this keeps us from CPU bottlenecking nearly as easily.

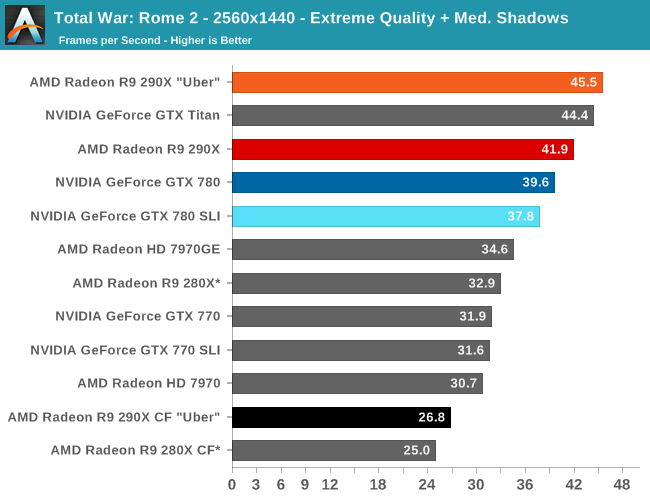

With Rome 2 no one is getting 60fps at 2560, but then again for a strategy game that’s hardly necessary. Here the 290X once again beats the GTX 780, this time by a smaller than average 6%, which essentially puts it in the middle of the gap between the GTX 780 and GTX Titan.

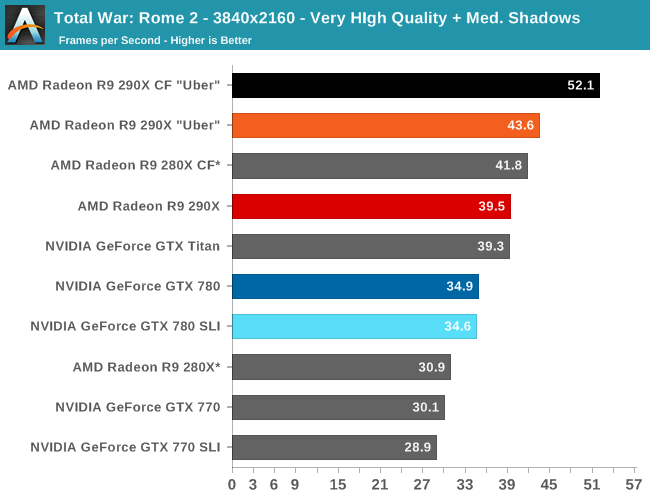

Meanwhile at 4K we can actually get some relatively strong results out of even our single card configurations, but we have to drop our settings down by 2 notches to Very High to do so. As with all of our 4K game tests, this turns out well for AMD, with the 290X’s lead over the GTX 780 growing to 13%.

AFR performance is a completely different matter though. It’s not unusual for strategy games to scale poorly or not at all, but Rome 2 is different yet. The GTX 780 SLI consistently doesn’t scale at all, whereas with the 290X CF we see anything from massive negative scaling at 2560 to a small performance gain at 4K. Given the nature of the game we weren’t expecting anything here at all, and though getting any scaling is a nice turn of events, to have negative scaling like this is a bit embarrassing for AMD. At least NVIDIA can claim to be more consistent here.
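For readers who want to put a number on "scaling", a minimal sketch follows. It assumes scaling is expressed as the percentage change in average frame rate when moving from one GPU to two; the function name `afr_scaling` and the frame rates are hypothetical and are not taken from our charts.

```python
def afr_scaling(single_fps: float, dual_fps: float) -> float:
    """Percentage change in average frame rate going from one GPU to two.

    0 means no scaling, a negative value means the second GPU made
    things slower, and +100 would be perfect two-way scaling.
    """
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    return (dual_fps / single_fps - 1.0) * 100.0


# Hypothetical frame rates for illustration only, not measured results.
for label, single, dual in [
    ("no scaling", 40.0, 40.0),
    ("negative scaling", 40.0, 30.0),
    ("small gain", 40.0, 44.0),
]:
    print(f"{label}: {afr_scaling(single, dual):+.1f}%")
```

Under this definition, a dual-GPU setup that matches its single-GPU counterpart scores 0%, and anything below that is the negative scaling described above.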

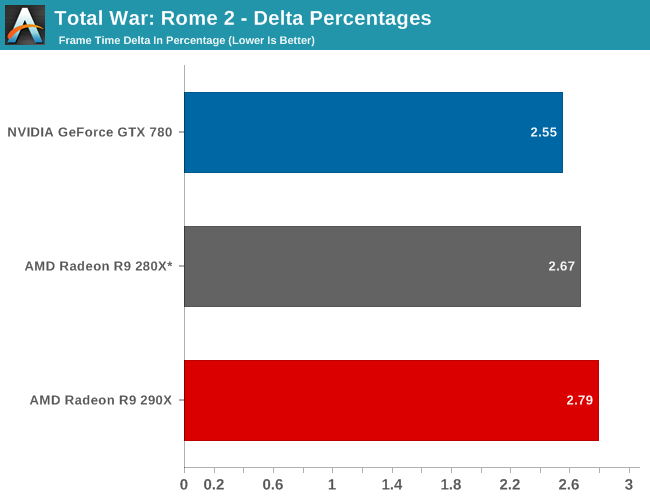

Without working AFR scaling, our deltas are limited to single-GPU configurations and as a result are unremarkable. Sub-3% for everyone, everywhere, which is a solid result for any single-GPU setup.
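As a rough illustration of what a delta figure like this measures, here is a minimal sketch. It assumes the delta percentage is the average absolute difference between consecutive frame times, divided by the average frame time; this page does not spell out the formula, so treat that definition, the function name `delta_percentage`, and the sample frame times as assumptions.

```python
from statistics import mean


def delta_percentage(frame_times_ms: list[float]) -> float:
    """Average frame-to-frame variation as a percentage of the mean frame time.

    Assumed definition: mean(|t[i+1] - t[i]|) / mean(t) * 100.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    # Absolute change between each pair of consecutive frames.
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return mean(deltas) / mean(frame_times_ms) * 100.0


# Hypothetical frame times in milliseconds, for illustration only.
sample = [25.0, 25.5, 24.5, 25.0, 25.0]
print(f"{delta_percentage(sample):.1f}%")
```

A perfectly even run of frames would score 0%, while frame times that swing back and forth drive the figure up.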

396 Comments

Spunjji - Friday, October 25, 2013 - link

Word.

extide - Thursday, October 24, 2013 - link

That doesn't mean that AMD can't come up with a solution that might even be compatible with G-Sync... Time will tell..

piroroadkill - Friday, October 25, 2013 - link

That would not be in NVIDIA's best interests. If a lot of machines (AMD, Intel) won't support it, why would you buy a screen for a specific graphics card? Later down the line, maybe something like the R9 290X comes out, and you can save a TON of money on a high performing graphics card from another team. It doesn't make sense.

For NVIDIA, their best bet at getting this out there and making the most money from it, is licencing it.

Mstngs351 - Sunday, November 3, 2013 - link

Well it depends on the buyer. I've bounced between AMD and Nvidia (to be upfront I've had more Nvidia cards) and I've been wanting to step up to a larger 1440 monitor. I will be sure that it supports Gsync as it looks to be one of the more exciting recent developments.

So although you are correct that not a lot of folks will buy an extra monitor just for Gsync, there are a lot of us who have been waiting for an excuse. :P

nutingut - Saturday, October 26, 2013 - link

Haha, that would be something for the cartel office then, I figure.

elajt_1 - Sunday, October 27, 2013 - link

This doesn't prevent AMD from making something similar, if Nvidia decides to not make it open.

hoboville - Thursday, October 24, 2013 - link

Gsync will require you to buy a new monitor. Dropping more money on graphics and smoothness will apply at the high end and for those with big wallets, but for the rest of us there's little point to jumping into Gsync.

In 3-4 years when IPS 2560x1440 has matured to the point where it's both mainstream (cheap) and capable of delivering low-latency ghosting-free images, then Gsync will be a big deal, but right now only a small percentage of the population have invested in 1440p.

The fact is, most people have been sitting on their 1080p screens for 3+ years and probably will for another 3 unless those same screens fail--$500+ for a desktop monitor is a lot to justify. Once the monitor upgrades start en masse, then Gsync will be a market changer because AMD will not have anything to compete with.

misfit410 - Thursday, October 24, 2013 - link

G-String didn't kill anything, I'm not about to give up my Dell Ultrasharp for another Proprietary Nvidia tool.

anubis44 - Tuesday, October 29, 2013 - link

Agreed. G-sync is a stupid solution to a non-existent problem. If you have a fast enough frame rate, there's nothing to fix.

MADDER1 - Thursday, October 24, 2013 - link

Mantle could be for either higher frame rate or more detail. Gsync sounds like just frame rate.