Intel SSD 530 (240GB) Review

by Kristian Vättö on November 15, 2013 1:45 PM EST. Posted in Storage, SSDs, Intel, Intel SSD 530

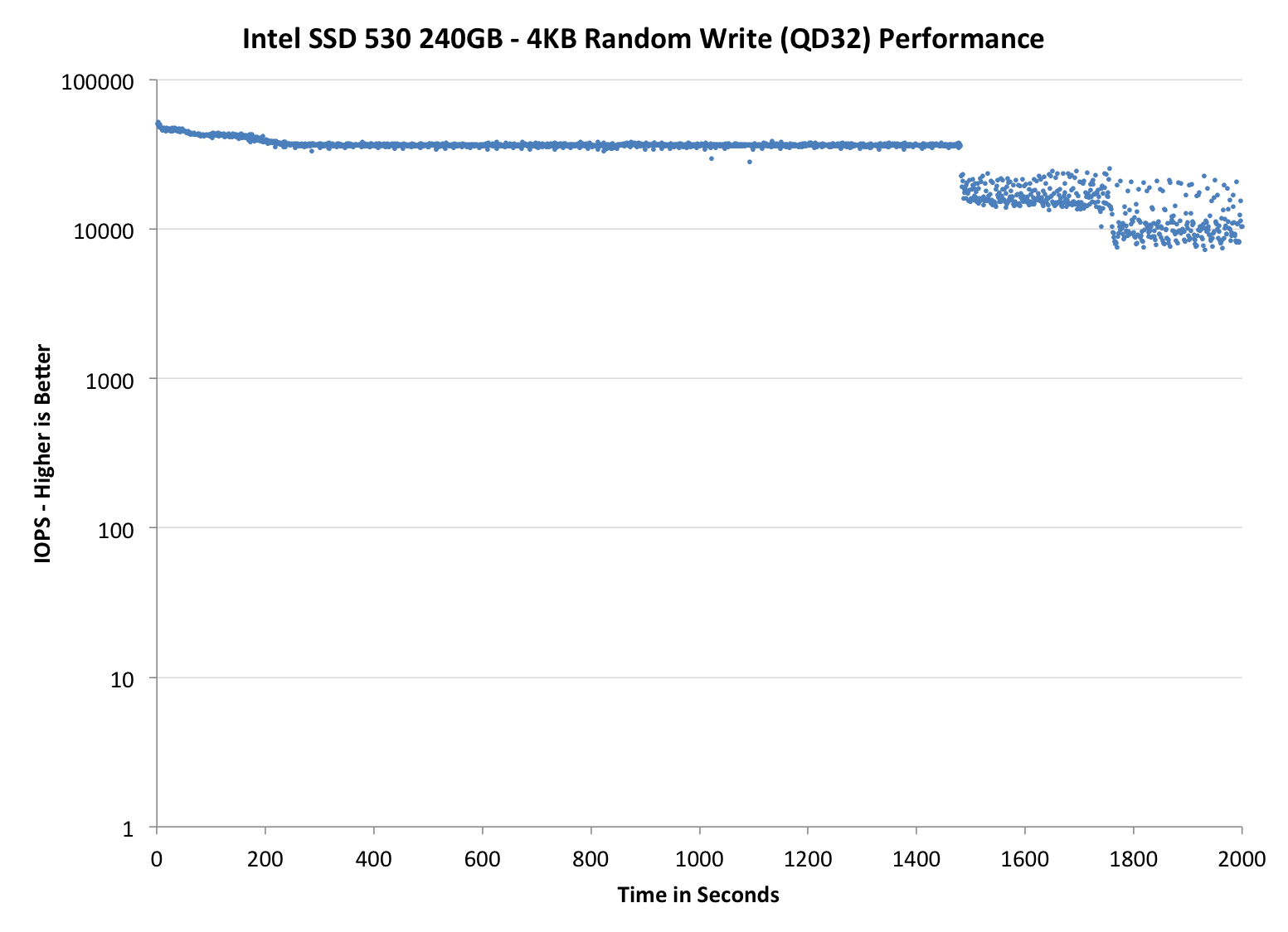

Performance Consistency

In our Intel SSD DC S3700 review Anand introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. SSDs don't deliver consistent IO latency because all controllers inevitably have to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below, we take a freshly secure-erased SSD and fill it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next, we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. We run the test for just over half an hour. That is nowhere near as long as our steady-state tests, but it is long enough to give a good look at drive behavior once all spare area fills up.
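The review doesn't say which tool generates this workload. As a hedged sketch, the Python below uses fio, a common open-source I/O benchmark, as a stand-in. It only builds the command lines and never runs them. They approximate the procedure: one sequential pass over the whole target, then 2000 seconds of 4KB random writes at QD32 with per-second IOPS logging. The following are all assumptions:

- the device path `/dev/sdX`
- the 128KB block size for the fill
- the Linux `libaio` engine
- the function name `fio_commands`

The secure erase step is not shown. Running these commands destroys all data on the target.

```python
import shlex

def fio_commands(device: str, runtime_s: int = 2000, queue_depth: int = 32) -> list[str]:
    """Return the fill and random-write fio commands as shell strings (not executed).

    Assumption: fio stands in for whatever tool the review actually used.
    """
    common = [
        f"--filename={device}",
        "--ioengine=libaio",   # assumption: Linux asynchronous I/O engine
        "--direct=1",          # bypass the page cache
        "--refill_buffers",    # fresh random buffer contents on every submit
    ]
    # Step 1: one sequential pass so every user-accessible LBA holds data.
    fill = ["fio", "--name=seqfill", "--rw=write", "--bs=128k",
            "--iodepth=32", *common]
    # Step 2: 4KB random writes at the given queue depth for a fixed time,
    # logging IOPS averaged over 1000 ms, i.e. one sample per second.
    torture = ["fio", "--name=randwrite", "--rw=randwrite", "--bs=4k",
               f"--iodepth={queue_depth}", *common,
               "--time_based", f"--runtime={runtime_s}",
               "--write_iops_log=randwrite", "--log_avg_msec=1000"]
    return [shlex.join(fill), shlex.join(torture)]

for cmd in fio_commands("/dev/sdX"):  # hypothetical device path
    print(cmd)
```

To simulate extra spare area, point `device` at a smaller partition instead of the whole drive.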

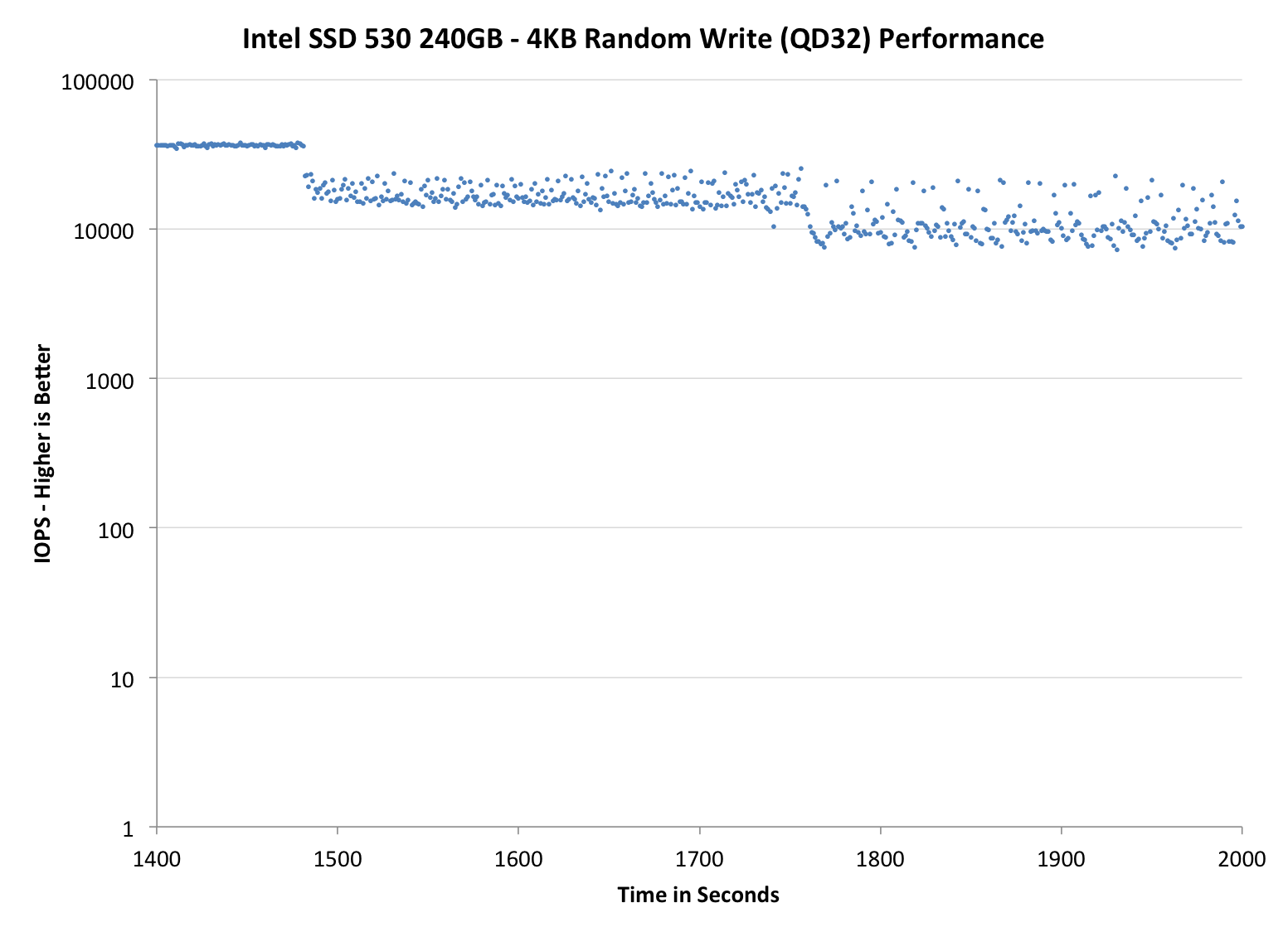

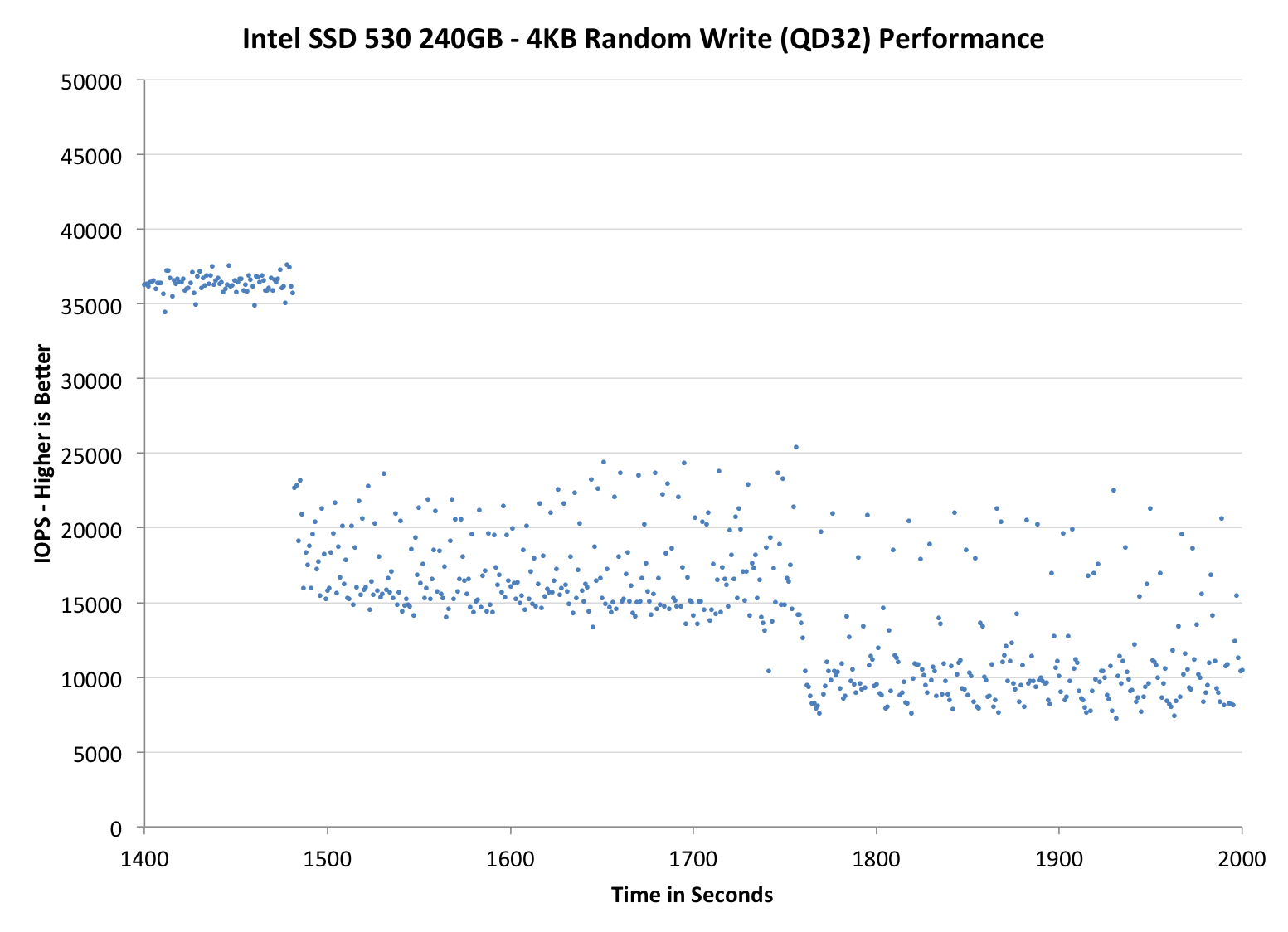

We record instantaneous IOPS every second for the duration of the test, then plot IOPS vs. time to generate the scatter plots below. Each set of graphs uses the same scale. The first two sets use a log scale for easy comparison. The last set uses a linear scale that tops out at 40K IOPS, which makes the differences between drives easier to see.
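Per-second sampling amounts to binning I/O completion timestamps into 1-second buckets. The sketch below does that binning and then applies Little's law. At a fixed queue depth, Little's law turns an IOPS sample into an average latency, which ties these IOPS plots back to the per-operation latency view described earlier. The function names and the timestamp input format are assumptions for illustration, not the review's tooling.

```python
from collections import Counter

def per_second_iops(completion_times_s: list[float], duration_s: int) -> list[int]:
    """Count I/O completions per 1-second bin; bin t covers [t, t + 1).

    Assumption: input is a list of completion timestamps in seconds from test start.
    """
    counts = Counter(int(t) for t in completion_times_s if 0 <= t < duration_s)
    return [counts.get(sec, 0) for sec in range(duration_s)]

def mean_latency_ms(iops: float, queue_depth: int = 32) -> float:
    """Little's law: mean latency = outstanding I/Os / throughput."""
    return queue_depth / iops * 1000.0

samples = per_second_iops([0.1, 0.5, 1.2, 2.9, 2.95], duration_s=3)
print(samples)  # [2, 1, 2]
```

Consider a drive that holds 40K IOPS at QD32, the top of the linear-scale graphs. It averages 32 / 40,000 s = 0.8 ms per write. If it drops to 4K IOPS, the average becomes 8 ms.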

The high-level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, we vary the percentage of the drive that gets filled/tested depending on the amount of spare area we're trying to simulate. Each data set is labeled by the spare-area configuration it simulates. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows). If you are working backwards, you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but not all controllers are guaranteed to behave the same way.
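The partition arithmetic is simple, but what a label like "25% OP" means depends on convention, and the article does not define it. The sketch below assumes it means leaving that fraction of the advertised capacity unpartitioned. The raw NAND capacity passed to `spare_ratio` is a hypothetical input you would take from a spec sheet. Both function names are illustrative.

```python
def op_partition_bytes(advertised_bytes: int, op_fraction: float) -> int:
    """Partition size that leaves `op_fraction` of the advertised capacity unused.

    Assumption: "N% OP" means N% of the advertised capacity is left unpartitioned.
    """
    if not 0.0 <= op_fraction < 1.0:
        raise ValueError("op_fraction must be in [0, 1)")
    return round(advertised_bytes * (1.0 - op_fraction))

def spare_ratio(raw_nand_bytes: int, partition_bytes: int) -> float:
    """Spare NAND relative to host-writable space, assuming the unpartitioned
    space is never written (or was TRIMmed after formatting)."""
    return (raw_nand_bytes - partition_bytes) / partition_bytes

GB, GIB = 10**9, 2**30
part = op_partition_bytes(240 * GB, 0.25)  # 240GB drive, 25% left unpartitioned
print(part / GB)  # 180.0
print(f"{spare_ratio(256 * GIB, part):.1%}")  # hypothetical 256GiB of raw NAND: 52.7%
```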

The first set of graphs shows the performance data over the entire 2000-second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing is the drive allocating new blocks from its spare area. It eventually uses up all free blocks and then has to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
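The dropoff described above can be reproduced qualitatively with a toy model. The sketch below is a page-mapped flash translation layer with greedy garbage collection. That is a textbook scheme, not any real controller's firmware. Block count, page count and the greedy victim policy are assumptions chosen to keep it small.

The simulation works in two phases:

- It sequentially fills the logical space.
- It then issues uniformly random single-page writes, standing in for 4KB random writes.

For each window of host writes it reports write amplification, defined as NAND page writes divided by host page writes. While free blocks remain, each host write costs one NAND write. Once they run out, GC must relocate still-valid pages before it can erase a block.

```python
import random

def simulate_ftl(num_blocks=64, pages_per_block=32, spare_fraction=0.07,
                 host_writes=50_000, window=2_000, seed=1):
    """Toy page-mapped FTL with greedy GC (assumption: not any real controller).
    Returns write amplification for each `window` of random host writes."""
    total = num_blocks * pages_per_block
    logical = int(total * (1 - spare_fraction))
    if total - logical <= 2 * pages_per_block:
        raise ValueError("need more than two blocks of spare area")
    where = [None] * logical                     # logical page -> (block, slot)
    owner = [[None] * pages_per_block for _ in range(num_blocks)]  # slot -> logical page
    valid = [0] * num_blocks                     # valid pages per block
    free = list(range(num_blocks))               # erased blocks
    active, ptr, nand = free.pop(), 0, 0         # block being filled, next slot, NAND writes

    def program(lpn):
        """Out-of-place write: append to the active block, invalidate the old copy."""
        nonlocal active, ptr, nand
        if ptr == pages_per_block:               # active block full: open an erased one
            active, ptr = free.pop(), 0
        if where[lpn] is not None:
            old_blk, old_slot = where[lpn]
            owner[old_blk][old_slot] = None
            valid[old_blk] -= 1
        owner[active][ptr] = lpn
        valid[active] += 1
        where[lpn] = (active, ptr)
        ptr += 1
        nand += 1

    def collect():
        """Greedy GC: relocate the valid pages of the full block with the
        fewest valid pages, then erase it back into the free pool."""
        closed = [b for b in range(num_blocks) if b != active and b not in free]
        victim = min(closed, key=lambda b: valid[b])
        for lpn in list(owner[victim]):
            if lpn is not None:
                program(lpn)                     # each copy is an extra NAND write
        owner[victim] = [None] * pages_per_block  # erase
        free.append(victim)

    def host_write(lpn):
        while len(free) < 2:                     # keep one erased block for GC copies
            collect()
        program(lpn)

    for lpn in range(logical):                   # precondition: sequential fill
        host_write(lpn)
    rng = random.Random(seed)
    results, mark = [], nand
    for i in range(1, host_writes + 1):          # uniformly random page writes
        host_write(rng.randrange(logical))
        if i % window == 0:
            results.append((nand - mark) / window)
            mark = nand
    return results

print("7% spare, last windows: ", [round(w, 2) for w in simulate_ftl(spare_fraction=0.07)[-3:]])
print("25% spare, last windows:", [round(w, 2) for w in simulate_ftl(spare_fraction=0.25)[-3:]])
```

In this model, more spare area lowers the steady-state write amplification. In a real drive that generally translates into higher steady-state IOPS.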

The second set of graphs zooms in on the beginning of steady-state operation for the drive (t=1400s). The third set also looks at the beginning of steady-state operation, but on a linear performance scale. Each set includes the drives' default configuration and a 25% over-provisioning (OP) configuration.

[Graph set 1: IOPS vs. time over the full 2000-second test, log scale. Drives: Intel SSD 530 240GB, Intel SSD 335 240GB, Corsair Neutron 240GB, Samsung SSD 840 EVO 250GB, Samsung SSD 840 Pro 256GB. Configurations: Default, 25% OP]

Even though the SF-2281 is over two and a half years old, its performance consistency is still impressive. There has been a significant improvement over the SSD 335: the SSD 530 takes nearly twice as long to enter steady state. Oddly, increasing the over-provisioning doesn't seem to have a major impact on performance. On one hand that's a good thing, as you can fill the SSD 530 without worrying that its performance will degrade. On the other hand, the steady-state performance could be better. For example, with 25% over-provisioning the Corsair Neutron beats the SSD 530 by a fairly big margin.
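Here, how long a drive takes to enter steady state is judged from the graphs. To put a number on it from your own per-second IOPS logs, one hypothetical heuristic works as follows. It is not the method used in this review.

1. Take the median of the last few minutes as the steady-state level.
2. Find the first second from which a rolling mean stays within a tolerance band around that level.

The function name, window, tail length and tolerance are all assumptions.

```python
from statistics import median
from typing import Optional

def steady_state_onset(iops: list[float], tail: int = 300, window: int = 30,
                       tolerance: float = 0.5) -> Optional[int]:
    """Hypothetical heuristic: the steady-state level is the median of the last
    `tail` one-second samples. Return the first second from which every
    `window`-second rolling mean stays within `tolerance` (a fraction) of it,
    or None if the series never settles."""
    level = median(iops[-tail:])
    onset = None
    for start in range(len(iops) - window + 1):
        mean = sum(iops[start:start + window]) / window
        if abs(mean - level) > tolerance * level:
            onset = None      # left the band: discard any earlier candidate
        elif onset is None:
            onset = start     # entered the band: candidate onset
    return onset

# Synthetic demo (made-up numbers, not measurements): a fast burst, then a drop.
demo = [90_000] * 600 + [5_000] * 1_400
print(steady_state_onset(demo))  # 600
```

The window and tolerance are arbitrary. A tight band can fail to find an onset on drives whose steady state is noisy, which is why the function can return None.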

[Graph set 2: IOPS vs. time from the beginning of steady state (t=1400s), log scale. Drives: Intel SSD 530 240GB, Intel SSD 335 240GB, Corsair Neutron 240GB, Samsung SSD 840 EVO 250GB, Samsung SSD 840 Pro 256GB. Configurations: Default, 25% OP]

[Graph set 3: IOPS vs. time from the beginning of steady state (t=1400s), linear scale topping out at 40K IOPS. Drives: Intel SSD 530 240GB, Intel SSD 335 240GB, Corsair Neutron 240GB, Samsung SSD 840 EVO 250GB, Samsung SSD 840 Pro 256GB. Configurations: Default, 25% OP]

TRIM Validation

To test TRIM, I filled the drive with incompressible sequential data and proceeded with 60 minutes of incompressible 4KB random writes at a queue depth of 32. I measured performance after the torture as well as after a single TRIM pass with AS-SSD, which uses incompressible data and is therefore suited to this purpose.

Intel SSD 530 Resiliency - AS-SSD Incompressible Sequential Write

| Drive | Clean | After Torture (60 min) | After TRIM |
|---|---|---|---|
| Intel SSD 530 240GB | 315.1MB/s | 183.3MB/s | 193.3MB/s |
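To put the resiliency results in relative terms, the short calculation below works directly on the three MB/s figures from the table. The function name and the "share of the loss recovered" metric are illustrative choices, not figures reported by the review.

```python
def resiliency(clean: float, tortured: float, trimmed: float) -> dict[str, float]:
    """Relative sequential-write speeds; inputs are MB/s from the same test."""
    return {
        "after_torture": tortured / clean,    # fraction of clean speed left
        "after_trim": trimmed / clean,        # fraction after one TRIM pass
        # share of the torture-induced drop that the TRIM pass won back
        "loss_recovered": (trimmed - tortured) / (clean - tortured),
    }

r = resiliency(315.1, 183.3, 193.3)  # Intel SSD 530 240GB, MB/s from the table
for name, value in r.items():
    print(f"{name}: {value:.1%}")
# after_torture: 58.2%
# after_trim: 61.3%
# loss_recovered: 7.6%
```

After the torture, the drive writes at about 58% of its clean speed. The TRIM pass wins back only about 7.6% of the lost throughput.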

SandForce's TRIM has never been fully functional when the drive is pushed into a corner with incompressible writes and the SSD 530 doesn't bring any change to that. This is really a big problem with SandForce drives if you're going to store lots of incompressible data (such as MP3s, H.264 videos and other highly compressed formats) because sequential speeds may suffer even more in the long run. As an OS drive the SSD 530 will do just fine since it won't be full of incompressible data, but I would recommend buying something non-SandForce if the main use will be storage of incompressible data. Hopefully SandForce's third generation controller will bring a fix to this.
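SandForce controllers compress data on the fly before writing it to NAND, so how compressible the data is directly affects how much flash they write. SandForce's compression engine is proprietary. In the sketch below, Python's `zlib` stands in only to show the gap between ordinary and already-compressed data. Random bytes stand in for MP3s, H.264 video and encrypted data, which look statistically random to a compressor. The 4KB chunking and the helper name `ratio` are assumptions.

```python
import os
import zlib

PAGE = 4096  # assumption: compress in 4KB chunks, loosely mirroring 4KB writes

def ratio(data: bytes) -> float:
    """Compressed size divided by original size (smaller = more compressible)."""
    chunks = [data[i:i + PAGE] for i in range(0, len(data), PAGE)]
    return sum(len(zlib.compress(c)) for c in chunks) / len(data)

text = b"The quick brown fox jumps over the lazy dog. " * 2000
samples = {
    "repetitive text": text,
    "random (stand-in for MP3/H.264/encrypted)": os.urandom(len(text)),
}
for name, data in samples.items():
    print(f"{name}: {ratio(data):.2f}")
```

The text shrinks to a small fraction of its size. The random data comes out slightly larger than it went in, so a compressing controller gains nothing from it.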

60 Comments


HisDivineOrder - Saturday, November 16, 2013

Remember when Sandforce used to be desired? That was a long, long time ago. Now they stink of bad firmwares and ugly compromise.

jwcalla - Saturday, November 16, 2013

I'm surprised we haven't seen a new gen from them yet. I wonder if they're even working on anything.

purerice - Sunday, November 17, 2013

True. It is a better problem to have than great firmware with bad hardware, though. I mean, if they have the desire, they can fix existing drives. If they don't, they'll just lose customers, end of story.

GuizmoPhil - Sunday, November 17, 2013

The mITX ASUS Maximus VI Impact also has an M.2 slot.

g00ey - Sunday, November 17, 2013

Sorry, but I just don't believe in PCIe as a viable interface for SSD storage. If SATA 6Gbps turns out to be a bottleneck, then make drives that use two or more SATA channels, or even switch to SAS 12Gbps, which was introduced back in 2011. Not many changes will be needed when switching to SAS, since SAS is pin-compatible with SATA and a SAS controller can run SATA drives. The only noticeable difference is that SAS is more stable and supports cable lengths of up to 10 meters (33 feet), whereas only 1 meter (3.3 feet) works for SATA. I also like the SFF-8087/8088 connectors, which house four SAS/SATA channels in one connector. There is both an internal version (SFF-8087) and an external version (SFF-8088) of this connector, just like SATA vs. eSATA.

The major advantages of SAS/SATA over PCIe are spelled RAID and hot-swap, so it only makes sense to implement PCIe-based storage in ultra-portable applications and applications with extremely high demands for low latency.

tygrus - Sunday, November 17, 2013

How do the SSDs perform with a simultaneous mix of reads and writes? E.g. a 70/30 mix of random R/W with QD=32, or simulated tasks that stream read-modify-write.

emvonline - Sunday, November 17, 2013

A couple of items: the real difference with the 530 is the low-power options from the Sandforce controller and potentially lower-cost 20nm NAND. If it isn't cheaper than the 520, don't buy it.

Intel chose the 2281 controller for its consumer SSDs over its internal controller. Why would you recommend that Intel do a consumer SSD with its internal controller? Intel's 3500 internal controller is purchased from and fab'd by another company anyway. Do you think the performance is much better than the Sandforce 2281 B2?

'nar - Monday, November 18, 2013

I must be dense, because I still don't get why you criticize Sandforce so much about incompressible data. I don't see a need to put incompressible data on an SSD in the first place, so the argument is meaningless.

Given the cost per GB of storage, most people still do not want SSDs holding 500GB of data. Why do they have over 500GB? Pictures, music, movies, i.e. incompressible data. Therefore, that is stored on a much more cost-effective hard drive and is hence irrelevant here.

I don't see a performance advantage either. What do you do with music and movies? Play them. How much speed does that require? 12MBps? Hard drives are fine for media servers. Maybe you want to copy to a flash drive, but that is itself limited to about 150MBps for good USB 3 drives anyway. And if you are editing video often, then you are likely going over that 20GB per day of writes, so you should put that on an enterprise scratch disk anyway.

So, you ask if Sandforce will "fix" this problem? What problem? It is the fundamental design feature they have. It is what makes them unique, and in a "normal" system it is quite useful, reviewers looking for bigger sledgehammers notwithstanding. That's like saying the president is not so bad, but maybe if he weren't so black.

You can break anything. These are not built to be indestructible; nobody would be able to afford them if they were. These are built for common use, and I do not see hammering incompressible data in these benchmarks as a common use.

Kristian Vättö - Tuesday, November 19, 2013

If you're using software-based encryption, it's quite a big deal because all your data will be incompressible. For other SSDs it makes no difference whether the data is compressible or not, but for SandForce-based SSDs it does, so it's worth mentioning. What would be the point of reviews in the first place if we couldn't point out differences and potential design flaws?

'nar - Thursday, November 21, 2013

Noted. That's it. Not half of all benchmarks. I don't use software encryption for most of my data.