21.5-inch iMac (Late 2013) Review: Iris Pro Driving an Accurate Display

by Anand Lal Shimpi on October 7, 2013 3:28 AM EST

GPU Performance: Iris Pro in the Wild

The new iMac is pretty good, but what drew me to the system was that it’s among the first implementations of Intel’s Iris Pro 5200 graphics in a shipping system. There are some pretty big differences, however, between what ships in the entry-level iMac and what we tested earlier this year.

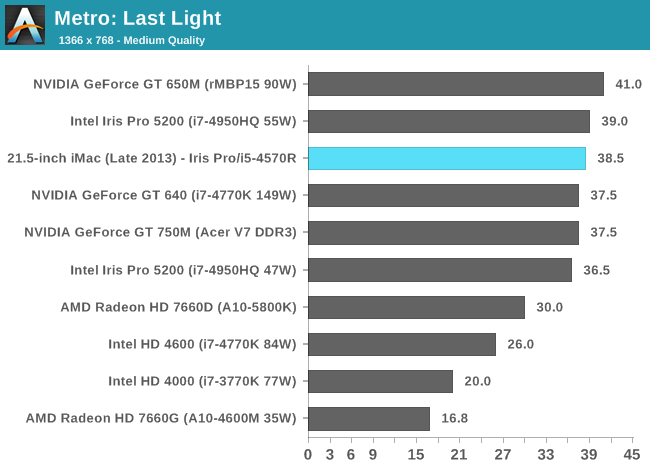

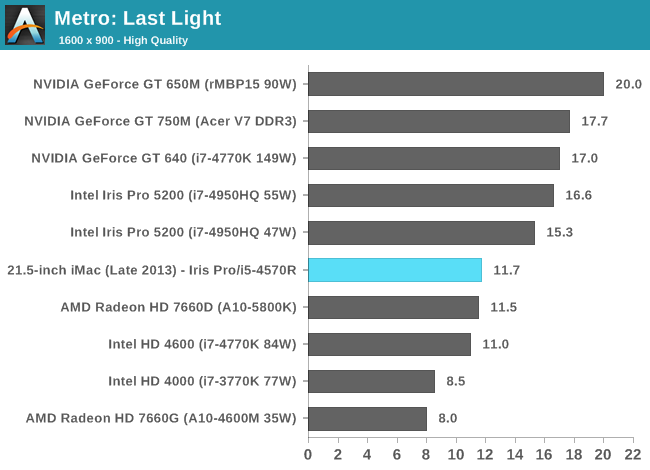

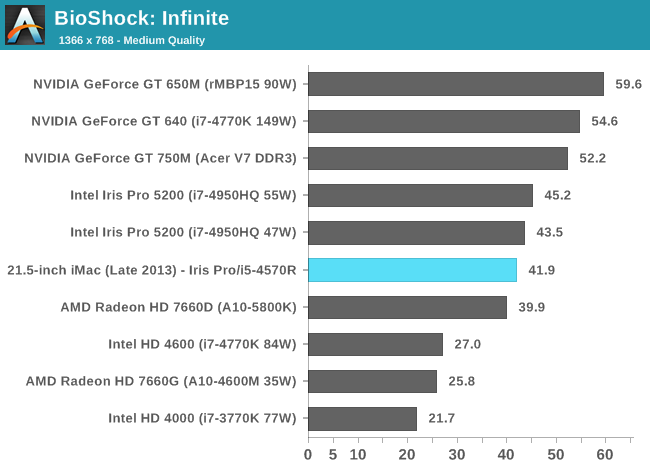

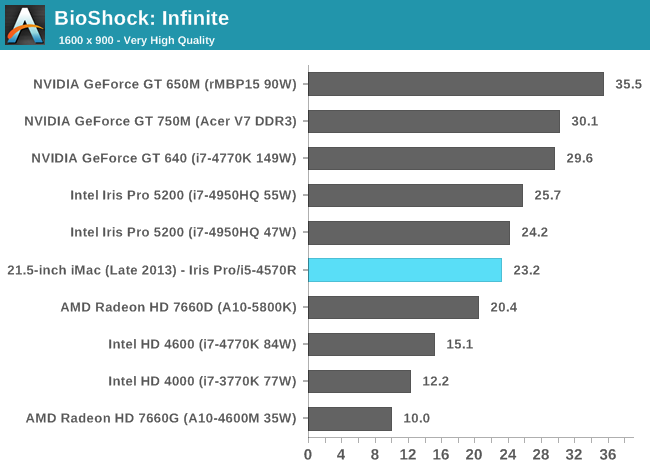

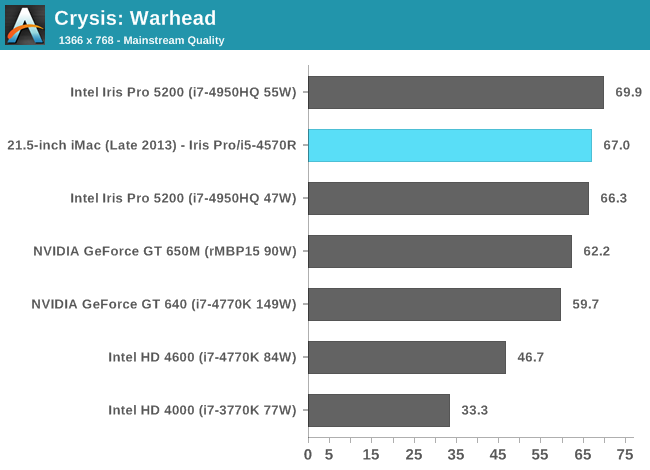

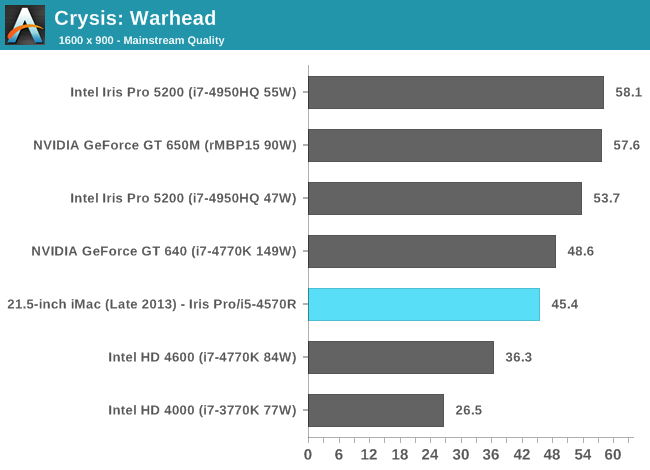

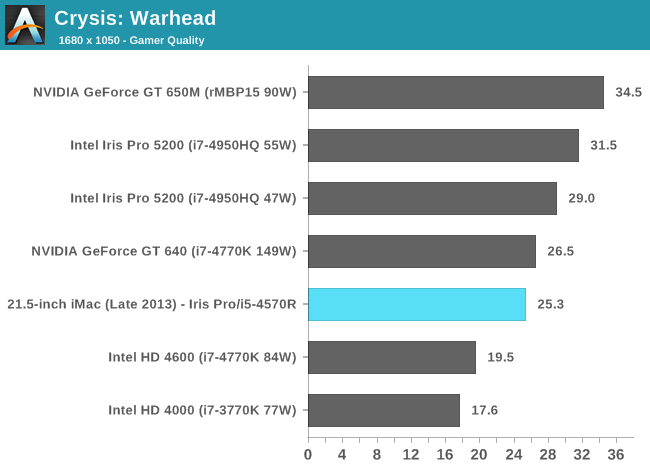

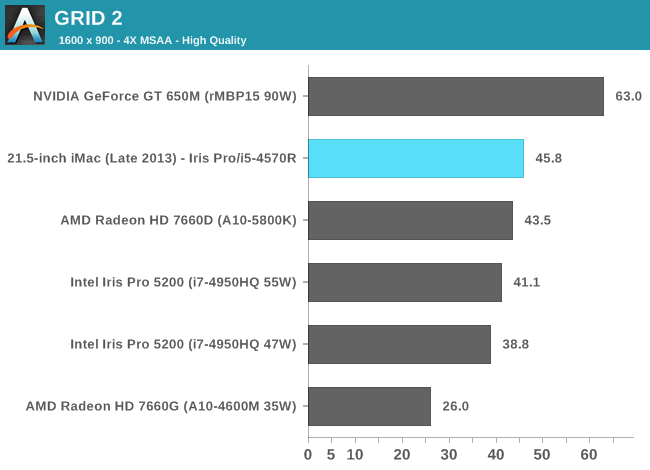

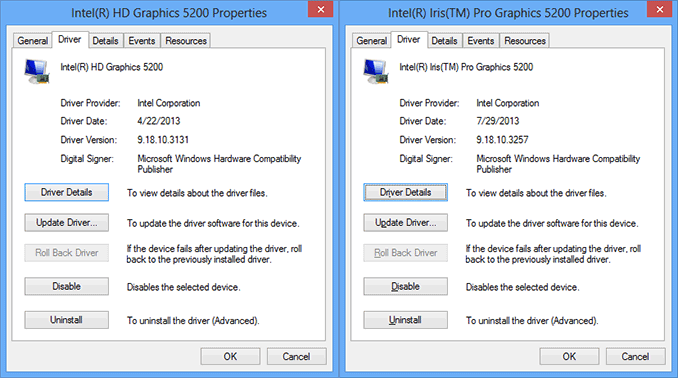

Earlier this year we benchmarked a Core i7-4950HQ, a 2.4GHz 47W quad-core part with a 3.6GHz max turbo and 6MB of L3 cache (in addition to the 128MB eDRAM L4). The new entry-level 21.5-inch iMac ships with a single CPU in its $1299 configuration, with no other options: a Core i5-4570R. This is a 65W part clocked at 2.7GHz but with a 3GHz max turbo and only 4MB of L3 cache (still 128MB of eDRAM). The 4570R also features a lower max GPU turbo clock of 1.15GHz vs. 1.30GHz for the 4950HQ. In other words, you should expect lower performance across the board from the iMac compared to what we reviewed over the summer. At launch Apple provided a fairly old version of Iris Pro drivers for Boot Camp, so I updated to the latest available driver revision before running any of these tests under Windows.
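To put the spec gap in numbers, here is a minimal Python sketch that works out how far the 4570R sits below the 4950HQ on the two figures quoted above (max GPU turbo and L3 cache). The dictionary layout and the `deficit_pct` helper are my own naming for illustration, and these are only spec ratios, not a prediction of how much frame rates will drop.

```python
# Spec figures as quoted in the paragraph above.
# The dictionary keys and helper name are illustrative, not from any tool.
specs = {
    "Core i7-4950HQ": {"gpu_turbo_ghz": 1.30, "l3_mb": 6},
    "Core i5-4570R": {"gpu_turbo_ghz": 1.15, "l3_mb": 4},
}


def deficit_pct(reference, value):
    """Percent by which `value` falls short of `reference`."""
    return (1.0 - value / reference) * 100.0


reference = specs["Core i7-4950HQ"]
imac = specs["Core i5-4570R"]

# One deficit per spec, relative to the part reviewed over the summer.
deficits = {key: deficit_pct(reference[key], imac[key]) for key in reference}

for key, pct in deficits.items():
    print(f"{key}: {pct:.1f}% lower on the Core i5-4570R")
```

The GPU turbo gap comes out to roughly 11.5%, which only bounds the clock-related part of any difference; the smaller L3 and different thermal envelope are not captured by a simple ratio like this.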

Iris Pro 5200’s performance is still amazingly potent for what it is. With Broadwell I’m expecting to see another healthy increase in performance, and hopefully we’ll see Intel continue down this path with future generations as well. I do have concerns about the area efficiency of Intel’s Gen7 graphics. I’m not one to normally care about performance per mm^2, but in Intel’s case it’s a concern given how stingy the company tends to be with die area.

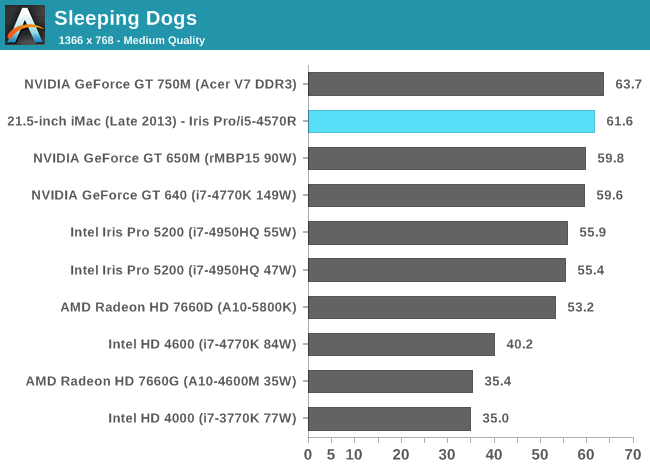

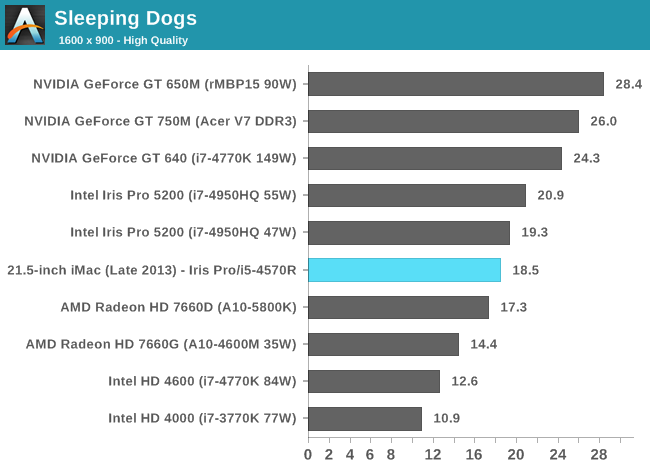

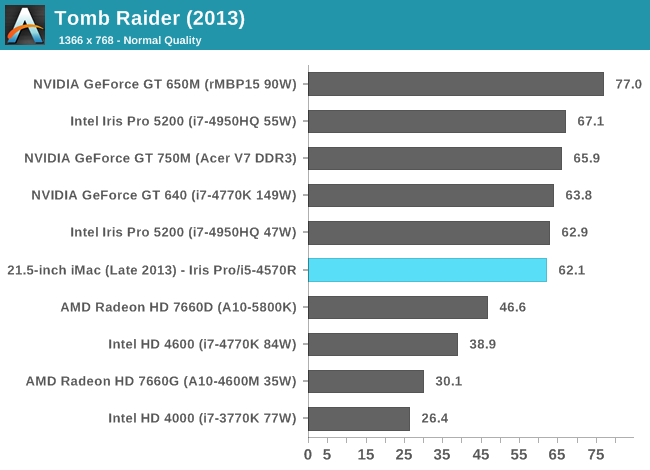

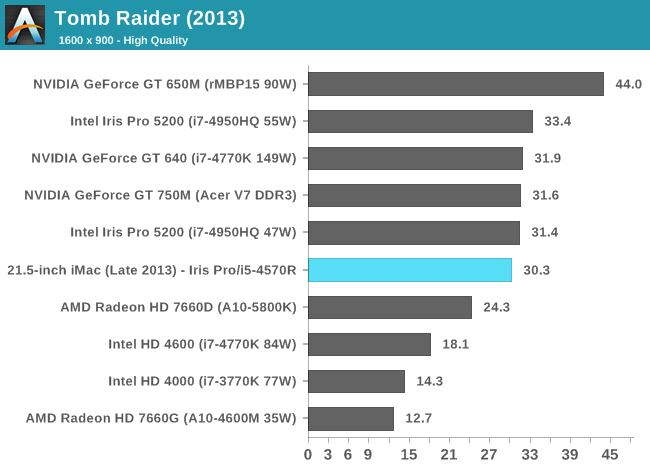

The comparison of note is the GT 750M, as that's likely closest in performance to the GT 640M that shipped in last year's entry-level iMac. With a few exceptions, the Iris Pro 5200 in the new iMac appears to be performance competitive with the 750M. Where it falls short however, it does by a fairly large margin. We noticed this back in our Iris Pro review, but Intel needs some serious driver optimization if it's going to compete with NVIDIA's performance even in the mainstream mobile segment. Low resolution performance in Metro is great, but crank up the resolution/detail settings and the 750M pulls far ahead of Iris Pro. The same is true for Sleeping Dogs, but the penalty here appears to come with AA enabled at our higher quality settings. There's a hefty advantage across the board in Bioshock Infinite as well. If you look at Tomb Raider or Sleeping Dogs (without AA) however, Iris Pro is hot on the heels of the 750M. I suspect the 750M configuration in the new iMacs is likely even faster as it uses GDDR5 memory instead of DDR3.

It's clear to me that the Haswell SKU Apple chose for the entry-level iMac is, understandably, optimized for cost and not max performance. I would've liked to have seen an option with a high-end R-series SKU, although I understand I'm in the minority there.

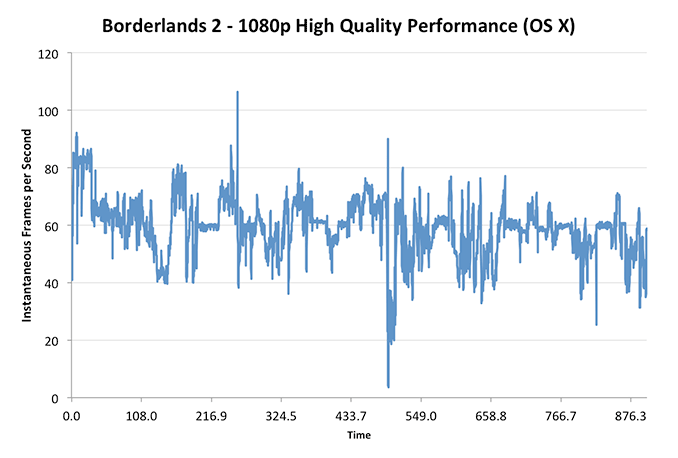

These charts put the Iris Pro’s performance in perspective compared to other dGPUs of note as well as the 15-inch rMBP, but what does that mean for actual playability? I plotted frame rate over time while playing through Borderlands 2 under OS X at 1080p with all quality settings (aside from AA/AF) at their highest. The overall experience running at the iMac’s native resolution was very good:

With the exception of one dip into single digit frame rates (unclear if that was due to some background HDD activity or not), I could play consistently above 30 fps.

Using BioShock Infinite I actually had the ability to run some OS X vs. Windows 8 gaming performance numbers:

OS X 10.8.5 vs. Windows Gaming Performance - Bioshock Infinite

| | 1366 x 768 Normal Quality | 1600 x 900 High Quality |
|---|---|---|
| OS X 10.8.5 | 29.5 fps | 23.8 fps |
| Windows 8 | 41.9 fps | 23.2 fps |

Unsurprisingly, when we’re not completely GPU bound there’s actually a pretty large performance difference between OS X and Windows gaming performance. I’ve heard some developers complain about this in the past, partly blaming it on a lack of lower level API access as OS X doesn’t support DirectX and must use OpenGL instead. In our mostly GPU bound test however, performance is identical between OS X and Windows - at least in BioShock Infinite.
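For a sense of scale, the gap in the table can be expressed as a percentage. The following is a small Python sketch using only the four frame rates reported above; the `windows_advantage_pct` helper and the dictionary layout are hypothetical names chosen for this example.

```python
# Frame rates (fps) copied from the BioShock Infinite table above.
results = {
    "1366 x 768 Normal Quality": {"OS X 10.8.5": 29.5, "Windows 8": 41.9},
    "1600 x 900 High Quality": {"OS X 10.8.5": 23.8, "Windows 8": 23.2},
}


def windows_advantage_pct(osx_fps, win_fps):
    """Percent by which Windows is faster than OS X (negative means slower)."""
    return (win_fps / osx_fps - 1.0) * 100.0


# One percentage gap per test setting.
gaps = {
    setting: windows_advantage_pct(fps["OS X 10.8.5"], fps["Windows 8"])
    for setting, fps in results.items()
}

for setting, gap in gaps.items():
    print(f"{setting}: Windows {gap:+.1f}% vs. OS X")
```

At the lower setting Windows comes out about 42% ahead, while at the mostly GPU bound setting the two are within about 2.5% of each other, which is close enough to call identical for a single benchmark.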

127 Comments

Res1233 - Wednesday, October 30, 2013 - link

Mac OS X is the main reason I buy macs. I have a feeling that you are right about most mac users having no clue what they're buying, but I could go on for hours about the advantages of OS X (geek-wise). No, hackintoshes are not an option if you want any kind of reliability, so don't even go there. If you force me to, I will explain my reasoning in depth to practically anandtech-levels, but I'm not in the mood right now. Perhaps another time! :)

tipoo - Monday, October 7, 2013 - link

What are the chances of the 13" Pro duo getting Iris Pro 5200? I'd really love that.

Bob Todd - Monday, October 7, 2013 - link

Have they announced any dual core Iris Pro parts? I know the original SKU list just had them in the quads. I still assume the 13" rMBP will get the 28W HD 5100 (hopefully in base configuration, but there will probably be a lower spec i5 below that).

Flunk - Monday, October 7, 2013 - link

It's unsure, a new part could be announced at any time. Intel has even made variants specifically for Apple before.

tipoo - Monday, October 7, 2013 - link

It shouldn't have to be dual core to be in the 13" pros though. Intel has quads in the same TDP as the current duals in it. A quad core, with GT3e, that would make it an extremely tempting package for me.

Sm0kes - Tuesday, October 8, 2013 - link

I think anything but GT3e in the 13'' Macbook Pro is going to be a disappointment at this point. How their 13'' "pro" machine has gone this long with sub-par integrated graphics is mind boggling. The move to a retina display really emphasized the weakness.

I'd also venture a guess that cost is the real barrier, as opposed to TDP.

tipoo - Thursday, October 10, 2013 - link

Perhaps. Yeah, the 13" has been disappointing to me, I love the form factor, but hate the standard screen resolution, and the HD4000 is really stretched on the Retina. If it stays a dual core, I don't see a whole lot of appeal over the Macbook Air 13" either. To earn that pro name, it really should be a quad with higher end integrated graphics.

Hrel - Monday, October 7, 2013 - link

"This is really no fault of Apple’s, but rather a frustrating side effect of Intel’s SKU segmentation strategy."

So I take it I'm not the only one infuriated by the fact that Intel hasn't made Hyperthreading standard on all of its CPUs.

I remember reading, on this site, that HT adds some insignificant amount of die area, like 5% or something, but is capable of adding up to 50% performance. (in theory). If that's the case the ONLY reason to not include it on EVERY CPU is to nickel and dime your customers. Except it should really be "$100" your customers since only the i7's have HT.

Isn't the physical capability of HT already on ALL cpu's? It just needs to be turned on in firmware right?

DanNeely - Monday, October 7, 2013 - link

With the exception of IIRC dual vs quad core dies and GT2 vs GT3 graphics almost everything that differs between CPUs in a generation is either binning or disabling components if too few dies with a segment non-functional are available for the lower bin.

Intel could differentiate its product line without doing any segment disabling on the dies; but it would require several times as many different die designs which would require higher prices due to having to do several times as much validation. Instead we get features en/disabled with fuses or microcode because the cost of the 'wasted' die area is cheaper than the costs associated with validating additional die configurations.

Flunk - Monday, October 7, 2013 - link

Actually the GT2 is just a die-harvested GT3. Intel only have 2 and 4 core versions and crystalwell is an add-on die so there are essentially only 2 base dies, at least for consumers.

I do agree about the hyper-threading, there is really no need to disable it. It's not like it really matters in consumer applications anyway.