Understanding AMD’s Mantle: A Low-Level Graphics API For GCN

by Ryan Smith on September 26, 2013 7:20 AM EST

Wrapping up our AMD product showcase coverage for the week, AMD’s final announcement was a very brief one about a new API called Mantle. Mantle is something of an enigma at this point – AMD isn’t saying a whole lot until November with the AMD Developer Summit – and although it’s conceptually simple, the real challenge is trying to understand where it fits into AMD’s product strategy, and perhaps more importantly what brought them to this point in the first place. So although we don’t have all of the necessary details in hand quite yet, we wanted to spend some time breaking down the matters surrounding Mantle as much as we reasonably can.

Perspective #1: The Performance Case

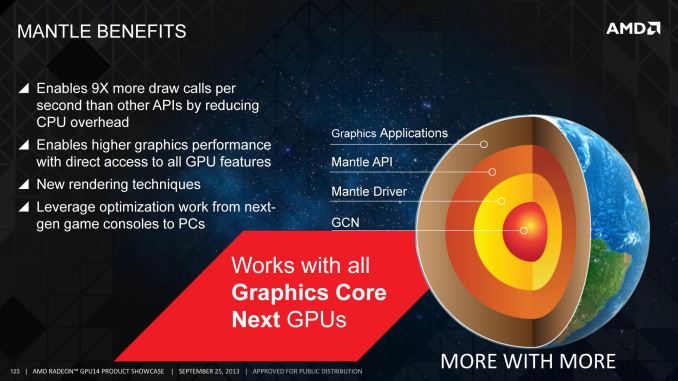

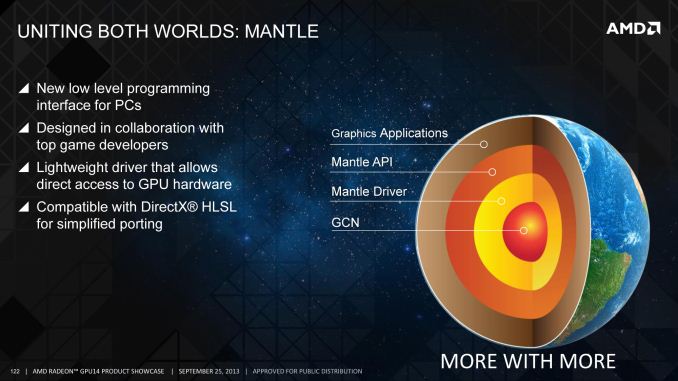

The best place to start with Mantle is a high level overview. What is Mantle? Mantle is a new low-level graphics API specifically geared for AMD’s Graphics Core Next architecture. Whereas standard APIs such as OpenGL and Direct3D operate at a high level to provide the necessary abstraction that makes these APIs operate across a wide variety of devices, Mantle is the very opposite. Mantle goes as low as is reasonably possible, with minimal levels of abstraction between the code and the hardware. Mantle is for all practical purposes an API for doing bare metal programming to GCN. The concept itself is simple, and although low-level APIs have been done before, it has been some time since we’ve seen anything like this in the PC space.

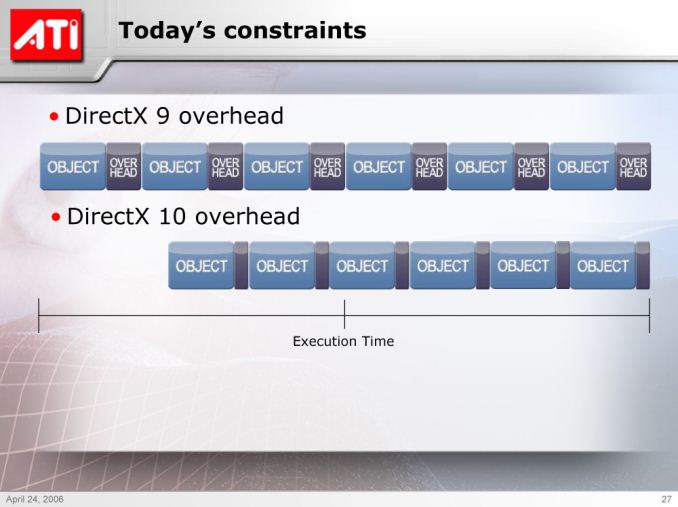

Simplicity gives way to complexity however when we begin discussing not just what Mantle is, but why it exists. At the highest level Mantle exists because high level APIs have drawbacks in exchange for their ability to support a wide variety of GPUs. Abstractions in these APIs hide what the hardware is capable of, and the code that holds those abstractions together comes with its own performance penalty. Of all of those performance issues the principal issue at hand is the matter of draw calls, which are the individual calls sent to the GPU to get objects rendered. A single frame can be composed of many draw calls, a thousand or more, and every one of those draw calls takes time to set up and submit.

Although the issue will receive renewed focus today with the announcement of Mantle, we have known for some time now that groups of developers on both the hardware and software side of game development have been dissatisfied with draw call performance. Microsoft and the rest of the Direct3D partners addressed this issue once with Direct3D 10, which significantly cut down on some forms of overhead.

But the issue was never entirely mitigated, and to this day the number of draw calls high-end GPUs can process is far greater than the number of draw calls high-end CPUs can submit in most instances. The interim solution has been to attempt to use as few draw calls as possible – GPU utilization takes a hit if the draw calls are too small – but there comes a point where a few large draw calls aren’t enough, and where the CPU penalty from generating more draw calls becomes notably expensive.

Ergo: Mantle. A low-level API that cuts the abstraction and in the process makes draw calls cheap (among other features).
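To make the draw call argument more concrete, here is a toy model of a single frame. It is only a sketch under loud assumptions: the per-call CPU costs and the GPU time are invented round numbers, not measurements of Direct3D or Mantle, and the GPU time is held constant even though very small draw calls hurt GPU utilization in practice. The names `frame_cost`, `HIGH_LEVEL_US` and `LOW_LEVEL_US` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FrameCost:
    cpu_ms: float  # time the CPU spends setting up and submitting draw calls
    gpu_ms: float  # time the GPU spends rendering the frame

    @property
    def frame_ms(self) -> float:
        # CPU submission and GPU rendering overlap, so the slower side sets the pace.
        return max(self.cpu_ms, self.gpu_ms)

    @property
    def bottleneck(self) -> str:
        return "CPU" if self.cpu_ms > self.gpu_ms else "GPU"


def frame_cost(draw_calls: int, cpu_us_per_call: float, gpu_ms: float) -> FrameCost:
    """Toy model: CPU time grows linearly with the number of draw calls."""
    return FrameCost(cpu_ms=draw_calls * cpu_us_per_call / 1000.0, gpu_ms=gpu_ms)


# Illustrative numbers only, chosen to show the shape of the problem.
GPU_MS = 10.0         # assumed GPU time per frame
HIGH_LEVEL_US = 10.0  # assumed CPU cost per draw call through a thick API
LOW_LEVEL_US = 1.0    # assumed CPU cost per draw call through a thin API

for calls in (500, 2_000, 5_000):
    high = frame_cost(calls, HIGH_LEVEL_US, GPU_MS)
    low = frame_cost(calls, LOW_LEVEL_US, GPU_MS)
    print(f"{calls:>5} calls | high level: {high.frame_ms:5.1f} ms ({high.bottleneck}-bound)"
          f" | low level: {low.frame_ms:5.1f} ms ({low.bottleneck}-bound)")
```

With these made-up costs the high level path becomes CPU-bound as the draw call count climbs, while the low level path stays limited by the GPU, which is the situation the article describes.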

Perspective #2: The Console Connection

However even with a basic understanding of draw calls and their overhead, so far we haven’t really explained why Mantle exists, and indeed the entire frame of reference that Mantle resides in depends on understanding just why it exists. If Mantle were merely about providing a new low level API for GCN, then the issue would be far more straightforward, and Mantle would in all likelihood remain an underutilized curiosity. Instead we have to talk about what is not said and not even hinted at, but what is more critical than Mantle’s performance improvements: the console connection.

As the supplier of the APUs in both the Xbox One and PS4, AMD is in a very interesting place. Both of these upcoming consoles are based on their GCN technology, and as such AMD owns a great deal of responsibility in developing both of these consoles. This goes not only for their hardware but also portions of their software stack, as it’s AMD that needs to write the drivers and AMD that needs to help develop the APIs these consoles will use, so that the full features of the hardware are made available to developers.

At the same time, when it comes to writing APIs we also have to briefly mention the fact that unlike the PC world, the use of both high level and low level APIs is a common occurrence in console software. High level APIs are still easier to use, but when you’re working with a fixed platform with a long shelf life, low level APIs not only become practical, they become essential to extracting the maximum performance out of a piece of hardware. As good as a memory manager or a state manager is, if you know your code inside and out then there are numerous shortcuts and optimizations that are opened up by going low level, and these are matters that hardcore console developers will chase in full. So when we talk about AMD writing APIs for the new consoles, we’re really talking about AMD writing two APIs for the new consoles: a high level API, equivalent to the likes of Direct3D and OpenGL, and a low level API suitable for banging on the hardware directly for maximum performance.

This brings us to the crux of the matter: what’s not being said. Simply put, what would happen if you ported both the high level and low level APIs from a console – say the Xbox One – back over to the PC? We already know what that high level API would look like, because it exists today in the form of Direct3D 11.2, an API peppered with new features that coincide with AMD GCN hardware features. But what about a low level API? What would it look like?

What’s not being said, but what becomes increasingly hinted at as we read through AMD’s material, is not just that Mantle is a low level API, but rather Mantle is the low level API. As in it’s either a direct copy or a very close derivative of the Xbox One’s low level graphics API. All of the pieces are there; AMD will tell you from the start that Mantle is designed to leverage the optimization work done for games on the next generation consoles, and furthermore Mantle can even use the Direct3D High Level Shader Language (HLSL), the high level shader language Xbox One shaders will be coded against in the first place.

Let’s be very clear here: AMD will not discuss the matter let alone confirm it, so this is speculation on our part. But it’s speculation that we believe is well grounded. Based on what we know thus far, we believe Mantle is the Xbox One’s low level API brought to the PC.

If indeed Mantle is the Xbox One’s low level API, then this changes the frame of reference for Mantle dramatically. No longer is Mantle just a new low level API for AMD GCN cards, whose success is defined by whether AMD can get developers to create games specifically for it, but Mantle becomes the bridge for porting over Xbox One games to the PC. Developers who make extensive use of the Xbox One low level API would be able to directly bring over large pieces of their rendering code to the PC and reuse it, and in doing so maintain the benefits of using that low-level code in the first place. Mantle will not (and cannot) remove the need for developers to also do a proper port to Direct3D – after all AMD is currently the minority party in the discrete PC graphics space – but it does provide the option of keeping that low level code, when in the past that would never have been an option.
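What "keeping the low level code alongside a Direct3D port" could look like inside an engine can be sketched as two rendering paths behind one interface. Everything here is hypothetical: AMD has not published Mantle's API, so `MantleBackend`, `Direct3DBackend`, `GpuInfo` and `pick_backend` are illustrative names rather than real calls, and the support check simply encodes the article's statement that Mantle targets GCN hardware.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuInfo:
    vendor: str        # e.g. "AMD", "NVIDIA", "Intel"
    architecture: str  # e.g. "GCN", "Kepler"


class RenderBackend(ABC):
    name: str

    @abstractmethod
    def supports(self, gpu: GpuInfo) -> bool:
        """Whether this rendering path can run on the given GPU."""


class Direct3DBackend(RenderBackend):
    name = "Direct3D"

    def supports(self, gpu: GpuInfo) -> bool:
        # The high level path is the common denominator: it runs on every vendor.
        return True


class MantleBackend(RenderBackend):
    name = "Mantle"

    def supports(self, gpu: GpuInfo) -> bool:
        # Assumption taken from the article: Mantle is specific to AMD's GCN.
        return gpu.vendor == "AMD" and gpu.architecture == "GCN"


def pick_backend(gpu: GpuInfo) -> RenderBackend:
    # Prefer the low level path where available, otherwise fall back to Direct3D.
    for backend in (MantleBackend(), Direct3DBackend()):
        if backend.supports(gpu):
            return backend
    raise RuntimeError("no rendering path available")


print(pick_backend(GpuInfo("AMD", "GCN")).name)
print(pick_backend(GpuInfo("NVIDIA", "Kepler")).name)
```

The design point is that the Direct3D path never goes away: it remains the fallback for every GPU the low level path does not cover, which is why Mantle adds work on top of the usual port instead of replacing it.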

Perspective #3: Developers, Developers, Developers

With that said, the potential for Mantle is not the same as the actual execution for Mantle, and even if we’re correct about Mantle’s Xbox One origins, Mantle’s success is far from guaranteed. Mantle right now is merely the toolset to realize the possibility of bringing over existing low level code to the PC. To be successful, AMD at a minimum needs to convince multiplatform developers that it’s worth their time to do Mantle enabled versions of their rendering engines, as despite the easy porting it’s still more work than just doing the typical Direct3D port. Even then, that only captures multiplatform ports; native PC games are another matter entirely. Mantle does not necessarily need native PC games to be successful for AMD, but it would certainly validate the existence and capabilities of Mantle if AMD were able to get native PC games developed against it too. After all, the draw call benefits of Mantle are still very real, regardless of which platform is the lead platform for a game.

In fact the significance of developers in this entire process should not be understated. Mantle exists because it’s faster than high level APIs, it makes porting low level console code to the PC easier, and as it turns out, because it’s something developers have been telling AMD they want. More than anything else about Mantle, this is the point AMD has been trying to drive home, as they are well aware of the potential controversy Mantle would bring. Mantle doesn’t just exist because AMD wants to leverage their console connection, but Mantle exists because developers want to leverage it too, and indeed developers have been coming to AMD for years asking for such a low level API for this very reason. As such the impression we're getting from AMD – or at least the impression they're trying their best to give off – is that Mantle was created to satisfy these requests, rather than being something AMD created and is trying to drum up interest for after the fact.

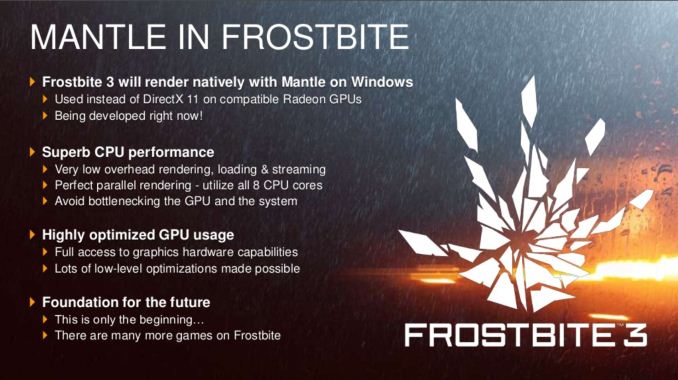

The first game to use Mantle will be Battlefield 4, and as part of the Mantle announcement AMD had DICE put together a video for today’s product showcase, in which they spent some time discussing their own interest in Mantle and low level APIs. It’s one thing for AMD to claim that developers want Mantle – something that would be difficult for the press and consumers to validate – but it’s something else entirely when developers are willing to put together lengthy testimonials in support of Mantle. To that end DICE is just one developer, but with any luck for AMD they are the first of many developers. If AMD’s claims about developers asking for this are true, then we should be able to see for ourselves soon enough if and when more developers pledge support for the API.

Perspective #4: The Drawbacks of Low Level APIs

With all of that in mind, while Mantle has the potential to provide benefits to users and developers alike, there are also some very clear downsides to using a low level API in PC game development.

Unlike consoles, PCs are not fixed platforms, and this is especially the case in the world of PC graphics. If we include both discrete and integrated graphics then we are looking at three very different players: AMD, Intel, and NVIDIA. All three have their own graphics architectures, and while they are bound together at the high level by Direct3D feature requirements and some common sense design choices, at the low level they’re all using very different architectures. The abstraction provided by APIs like Direct3D and OpenGL is what allows these hardware vendors to work together in the PC space, but if those abstractions are removed in the name of performance then that compatibility and broad support is lost in the process.

A lot of press – ourselves included – immediately began comparing Mantle to Glide. Glide was another low level API that was developed by the long-gone GPU manufacturer 3dfx, and at the height of their power in the mid-to-late 90s 3dfx wielded considerable influence thanks to Glide. Glide was easier to work with than the immature Direct3D API, and for a time games either supported only Glide, or supported Glide alongside Direct3D or OpenGL. Almost inevitably the Glide rendering path was better in some respect, be it performance, features, or a general decrease in bugs. This was fantastic for 3dfx card owners, but as both AMD (née ATI) and NVIDIA can tell you, this wasn’t great for those parties that were on the outside.

As a result of this, as great as Glide was at the time, it’s widely considered a good thing that Glide died out and that Direct3D took over as the reigning king of PC graphics APIs. Developers stopped utilizing multiple rendering paths, and having games written exclusively against a common, industry standard API was better for everyone as a result.

Mantle by its very nature reverses that, by reestablishing a low level API that exists at least in part in competition with Direct3D and OpenGL. Consequently while Mantle is good for AMD users, is Mantle good for NVIDIA and Intel users? Do developers start splitting their limited resources between Mantle and Direct3D, spending less time and resources on their Direct3D rendering paths as a result?

At the risk of walking a very fine line here, like so many aspects of Mantle these are not questions we have the answer to today. And despite the reservations this creates over Mantle this doesn’t mean we believe Mantle should not exist. But these are real concerns, and they are concerns that developers will need to be careful to address if they intend to use Mantle. Mantle’s potential and benefits are clear, but every stakeholder in PC game production needs to be sure that Mantle doesn’t lead to a repeat of the harmful aspects of Glide.

Final Words

When AMD first told us about their plans for Mantle, it was something we took in with equal parts shock, confusion, and awe. The fact that AMD would seek to exploit their console connection was widely expected, however the fact that they would do so with such an aggressive move was not. If our suspicions are right and AMD is bringing over the Xbox One low level API, then this means AMD isn’t merely exploiting the similarities to Microsoft’s forthcoming console, but they are exploiting the very heart of their console connection. To bring over a console’s low level graphics API in this manner is quite simply unprecedented.

However at this point we’ve just scratched the surface of Mantle, and AMD’s brief introduction means that questions are plenty and answers are few. The potential for improved performance is clear, as are the potential benefits to multiplatform developers. What’s not clear however is everything else: is Mantle really derived from the Xbox One as it appears? If developers choose to embrace Mantle how will they do so, and what will the real performance implications be? How will Mantle be handled as the PC and the console slowly diverge, and PC GPUs gain new features?

The answers to those questions and more will almost certainly come in November, at the 2013 AMD Developer Summit. In the interim however, AMD has given us plenty to think about.

244 Comments

JDG1980 - Thursday, September 26, 2013 - link

Writing low-level graphics drivers should be easier and less error-prone than writing high-level graphics drivers.

chizow - Thursday, September 26, 2013 - link

How so? You have 3-4 lines of machine code replacing 1 line of HLSL, which requires much sharper matrix math skills to boot.

Wreckage - Thursday, September 26, 2013 - link

So they are bringing back GLIDE?

What's next are they going to bring back dial-up internet? Maybe some AMD branded floppy disk drives.

Remember what happened to the company that tried to push GLIDE?

Besides AMD fans have gone on record saying they hate proprietary solutions. So they will all reject this.

Creig - Thursday, September 26, 2013 - link

AMD's Mantle initiative is a fantastic idea to bring low-level cross platform development to a huge user base. And I don't think anybody saw it coming, least of all Nvidia. Because if they had, they would never have shrugged off AMD getting both the XBox One and PS4 contracts.

Back when Glide was used, it was only on the PC. And even then it was a force to be reckoned with. But today with AMD hardware not only in PCs but also in BOTH of the most popular consoles that will most likely be in production for the next 6-10 years, it's a brilliant move. Developers of both PC and console games will have low level access across both platforms (PC and console). So now they will be able to develop games with increased performance that will also be easier to port. Whoever came up with this strategy at AMD deserves a raise!

Nvidia has every right to be very nervous by this unexpected move from AMD. Because now any game designed for a console will automatically have increased low-level performance and graphics enhancements built in that will work with any GCN equipped (AMD) PC.

dookiebot - Thursday, September 26, 2013 - link

The company that pushed glide work for NVIDIA now. Who have their proprietary solutions as well. So I expect NVIDIA fans will embrace Mantle.

erple2 - Friday, September 27, 2013 - link

The company that pushed glide was very successful until they fancied themselves as retailers and not virtual chip makers. Their plant for mass producing chips was not in a well established area and had many quality control problems. Then there was that whole "making your customers your competitors that then flocked to nvidia" thing. That was ultimately their downfall. Developers really liked working in GLide from what I can remember. It was far easier to use than the directx at the time (and to a lesser extent OpenGL).

wumpus - Friday, September 27, 2013 - link

There was also the issue that they never made a new core after voodoo 1 (everything else was shrinks and multi-core copies of those chips). Not sure if the guys who designed it weren't interested in another design because they liked their rewards or they felt ripped off, but I suspect that other startups have the same issue of lightening not striking twice.

bill.rookard - Thursday, September 26, 2013 - link

This move really shouldn't be that much of a surprise. If you look at how things are rated in the PC gaming world, where a few extra FPS or a few extra ms of frame latency can make or break a game as well as drive sales of hardware, it doesn't shock me in the least that AMD would look to leverage their work with the game consoles to provide this type of functionality.

From the hardware side of things, GPU's are big, powerful, fast chips, and soon they're going to be running into the same issues that the CPU's are in that dropping down process nodes in their construction (28nm -> 22nm -> 14(?)nm) will follow the same path - eventually, you'll hit a limit as to how fast/hot these chips can be pushed. Eventually, they'll run into what I like to call 'The Haswell Limit', and the chips will become exponentially more complicated, and thus more expensive to develop and produce.

What I see AMD doing here is seeing how this has already played out and recognizing that if they want to increase their performance, they need to do things -differently-. By eliminating some of the overhead (or a lot of the overhead - we're not sure yet), it's unlocking 'free' performance. If they could (theoretically) eliminate 15% of unnecessary overhead on the GPU, that's a 15% increase in performance. That's being able to lower thermals, extract more performance, drop power usage, however they want to utilize it, and all it requires (again) is software.

Comparatively speaking, software is cheap. Hardware is not. AMD is recognizing this and asking themselves a very logical question: Is it better to optimize our hardware/software interface, or continue in a GPU arms race with nVidia.

Apparently, they've decided on option A, and it makes perfect sense to me.

Kevin G - Thursday, September 26, 2013 - link

There are simply two limiting factors in GPU hardware design today: power consumption and die size. Today modern GPU's are very programmable and while they could improve performance per clock, they can extract greater benefits from increased parallelism. Thus they won't approach the 'Haswell Limit' until after they've reached a usefulness to parallelism in their designs. Adding more parallelism is mainly a copy/paste job of a shader cluster, ROP, TMU etc. and a bit of work connecting them all together. With each die shrink, they'll be able to add more units into the same area.

nVidia is willing to approach die size limitations around 550 mm^2.**. Beyond that figure, chips greatly suffer from yield issues. AMD had a small die strategy in place with the Radeon 4000 and 5000 series. They loosened up that a bit with the high end Radeon 6900 and 7900 series. It is formally gone now with the R9 and the estimated 425 mm^2 of the new chip.

The other limitation has been power. Since the Radeon 2900 introduced the supplementary 8 pin power connector, the upper limit to the PCI-E spec has been 300W. Modern cards like the Radeon 7970 and nVidia's Titan are capable of drawing that much power with modest overclock. Only recently have we seen AMD and nVidia flirt with cards consuming more power and they are strictly dual GPU solutions. Make no mistake, both AMD and nVidia could design a GPU to consume 375W or 450W with ease (mainly increase voltage a notch and clock speeds on current designs). The problem is cooling such a chip. They are already using rather exotic vapor chambers to quickly move thermal energy from the die to the heat sink. Either moving to liquid cooling or ditching the PCI-e card format to a motherboard socket is likely necessary to permit further increases in power consumption.

**For reference, lithography die limits is a bit over 700 mm^2. The largest die I've heard of is the Tukwilia based Itanium which weighed in at 699 mm^2.

ssiu - Thursday, September 26, 2013 - link

This Mantle applies to current GCN cards (HD77xx - HD79xx) too, not just upcoming cards, correct?