The iPhone 5s Review

by Anand Lal Shimpi on September 17, 2013 9:01 PM EST

GPU Architecture

Dating back to the original iPhone, Apple has relied on GPU IP from Imagination Technologies. In recent years, the iPhone and iPad lines have pushed the limits of Img’s technology - integrating larger and higher performing GPUs than all other Img partners. Apple definitely attempted to obfuscate its underlying GPU architecture this time around for some reason.

As far back as a year ago, I received a lot of tips saying that Apple would integrate Imagination Technologies’ PowerVR Series 6 GPU this generation, but I needed more proof.

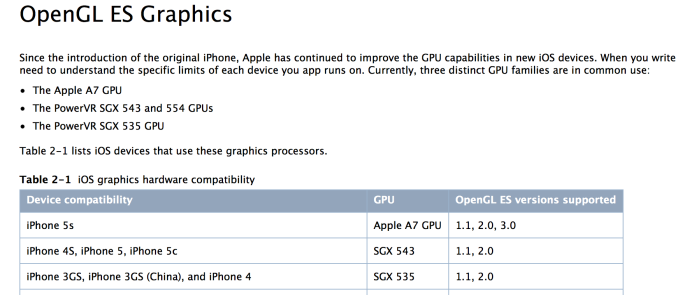

The first indication that this isn’t simply a Series 5XT part is the listed support for OpenGL ES 3.0. The only GPUs presently shipping with ES 3.0 support are Qualcomm’s Adreno 3xx (which is only integrated into Qualcomm silicon), ARM’s Mali-T6xx series and PowerVR Series 6. NVIDIA’s Tegra 4 GPU doesn’t support ES 3.0, and it’s too early for Logan/mobile Kepler. With Qualcomm out of the running that leaves Mali and PowerVR Series 6.

“All GPUs used in iOS devices use tile-based deferred rendering (TBDR).”

Apple’s developer documentation lists all of its SoCs as supporting Tile Based Deferred Rendering (TBDR). If you ask Imagination, they will tell you that they are the only ones with a true TBDR implementation. However, if you look at ARM’s Mali-T6xx documentation, ARM also claims its GPU is a TBDR.

The real hint comes with anti-aliasing support:

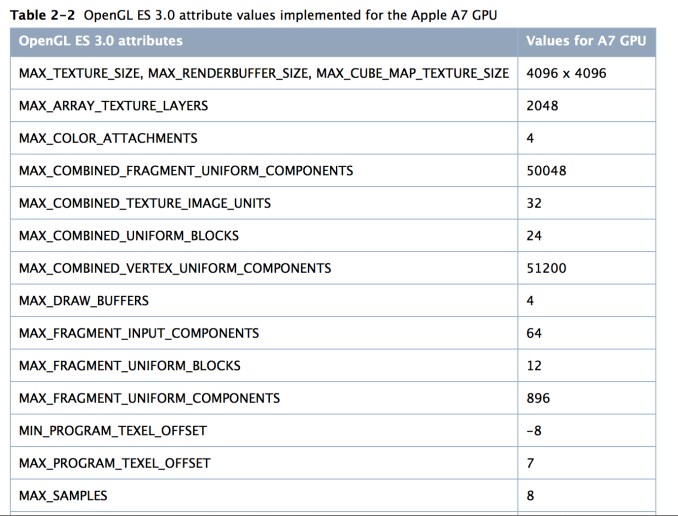

Look at the last line in the screenshot above: MAX_SAMPLES = 8. That’s a reference to 8 sample MSAA, a mode that isn’t supported by ARM’s Mali-T6xx hardware - only PowerVR Series 6 (Mali-T6xx supports 4x and 16x AA modes).
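The process of elimination so far can be written down as a small filter. This is only an illustrative sketch: the `Gpu` record, its field names and `matching_gpus` are hypothetical, and the capability values are just the ones stated in this article, not a complete feature matrix. MSAA modes are left empty for parts the article rules out on other grounds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    es3: bool              # ships with OpenGL ES 3.0 support (per the article)
    qualcomm_only: bool    # only integrated into Qualcomm silicon
    msaa_modes: frozenset  # only the MSAA sample counts the article mentions


# Hypothetical candidate list built from the claims in the text above.
CANDIDATES = [
    Gpu("Adreno 3xx", es3=True, qualcomm_only=True, msaa_modes=frozenset()),
    Gpu("Mali-T6xx", es3=True, qualcomm_only=False, msaa_modes=frozenset({4, 16})),
    Gpu("PowerVR Series 6", es3=True, qualcomm_only=False, msaa_modes=frozenset({8})),
    Gpu("Tegra 4", es3=False, qualcomm_only=False, msaa_modes=frozenset()),
]


def matching_gpus(max_samples):
    """Return the candidates consistent with ES 3.0 support and a MAX_SAMPLES value."""
    return [
        gpu.name
        for gpu in CANDIDATES
        if gpu.es3 and not gpu.qualcomm_only and max_samples in gpu.msaa_modes
    ]


print(matching_gpus(8))
```

With the reported MAX_SAMPLES of 8, only the Series 6 entry survives all three checks.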

There are some other hints here that Apple is talking about PowerVR Series 6 when it references the A7’s GPU:

“The A7 GPU processes all floating-point calculations using a scalar processor, even when those values are declared in a vector. Proper use of write masks and careful definitions of your calculations can improve the performance of your shaders. For more information, see “Perform Vector Calculations Lazily” in OpenGL ES Programming Guide for iOS.

Medium- and low-precision floating-point shader values are computed identically, as 16-bit floating point values. This is a change from the PowerVR SGX hardware, which used 10-bit fixed-point format for low-precision values. If your shaders use low-precision floating point variables and you also support the PowerVR SGX hardware, you must test your shaders on both GPUs.”

As you’ll see below, both of the highlighted statements apply directly to PowerVR Series 6. With Series 6 Imagination moved to a scalar architecture, and in ImgTec’s developer documentation it confirms that the lowest precision mode supported is FP16.
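To get a feel for why Apple asks developers to retest low-precision shaders, here is a rough sketch comparing the two number formats. The helper names are made up for illustration, and the fixed-point layout is an assumption (a signed 10-bit value covering [-2, 2) in steps of 1/256); the quoted documentation only says "10-bit fixed-point".

```python
import struct


def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (16-bit) float."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fixed10(x: float) -> float:
    """Quantize x to a signed 10-bit fixed-point value.

    Assumption (not from the article): range [-2, 2) with a step of 1/256.
    """
    step = 1.0 / 256
    raw = round(x / step)
    raw = max(-512, min(511, raw))  # clamp to what 10 signed bits can hold
    return raw * step


for value in (0.1, 1.5, 3.0):
    print(value, to_fp16(value), to_fixed10(value))
```

Under these assumptions a value like 3.0 survives as FP16 but saturates just below 2.0 in the fixed-point format, which is the kind of difference that could make a shader look right on one GPU and wrong on the other.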

All of this leads me to confirm what I heard would be the case a while ago: Apple’s A7 is the first shipping mobile silicon to integrate ImgTec’s PowerVR Series 6 GPU.

Now let’s talk about hardware.

The A7’s GPU Configuration: PowerVR G6430

Previously known by the codename Rogue, Series 6 has been announced in the following configurations:

PowerVR Series 6 "Rogue"

| GPU | # of Clusters | # of FP32 Ops per Cluster | Total FP32 Ops | Optimization |
| --- | --- | --- | --- | --- |
| G6100 | 1 | 64 | 64 | Area |
| G6200 | 2 | 64 | 128 | Area |
| G6230 | 2 | 64 | 128 | Performance |
| G6400 | 4 | 64 | 256 | Area |
| G6430 | 4 | 64 | 256 | Performance |
| G6630 | 6 | 64 | 384 | Performance |

Based on the delivered performance, as well as some other products coming down the pipeline I believe Apple’s A7 features a variant of the PowerVR G6430 - a 4 cluster Rogue design optimized for performance (vs. area).

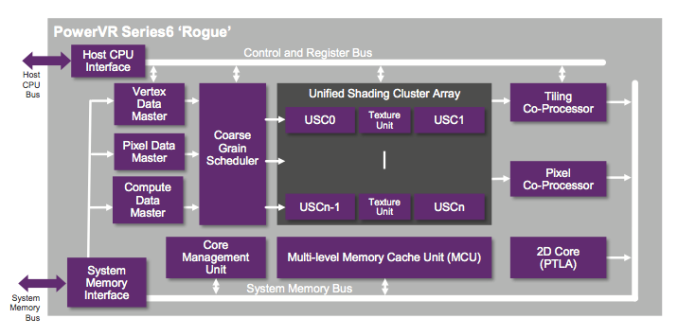

Rogue is a significant departure from the Series 5XT architectures that were used in the iPhone 5, iPad mini and iPad 4. The biggest change? A switch to a fully scalar architecture, similar to the present day AMD and NVIDIA GPUs.

Whereas with 5XT designs we talked about multiple cores, the default replication unit in Rogue is a “cluster”. Each core in 5XT replicated all hardware, while each cluster in Rogue only replicates the shader ALUs and texture hardware. Rogue is still a unified architecture, but the front end no longer scales 1:1 with shading hardware. In many ways this approach is a lot more sensible, as it is typically how you build larger GPUs.

In 5XT, each core featured a number of USSE2 pipelines. Each pipeline was capable of a Vec4 multiply+add plus one additional FP operation that could be dual-issued under the right circumstances. Img never detailed the latter so I always counted flops by looking at the number of Vec4 MADs. If you count each MAD as two FP operations, that’s 8 FLOPS per USSE2 pipe. Each USSE2 was a SIMD, so that’s one instruction across all 4 slots and not some combination of instructions. If you had 3 MADs and something else, the USSE2 pipe would act as a Vec3 unit instead. The same goes for 1 or 2 MADs.

With Rogue the USSE2 pipe is gone and replaced by a Unified Shading Cluster (USC). Each USC is a 16-wide scalar SIMD, with each slot capable of up to 4 FP32 ops per clock. Doing the math, a single USC implementation can do a total of 64 FP32 ops per clock - the equivalent of a PowerVR SGX 543MP2. Efficiency obviously goes up with a scalar design, so realizable performance will likely be higher on Rogue than 5XT.

The A7 is a four cluster design, so that’s four USCs or a total of 256 FP32 ops per clock. At 200MHz that would give the A7 twice the peak theoretical performance of the GPU in the iPhone 5. And from what I’ve heard, the G6430 is clocked much higher than that.
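The arithmetic in the last few paragraphs is easy to check. The sketch below uses hypothetical helper names and only the per-clock figures quoted in the text (a Vec4 MAD counted as 8 FLOPS per USSE2 pipe, 16 lanes at up to 4 FP32 ops per USC); the 8 pipes used for the SGX 543MP2 are an assumption taken from the SIMD count in the comparison table later in this article.

```python
def usse2_flops_per_clock(pipes):
    """Series 5XT: each USSE2 pipe does a Vec4 MAD, counted as 4 x 2 = 8 FLOPS."""
    return pipes * 4 * 2


def rogue_flops_per_clock(clusters, lanes=16, ops_per_lane=4):
    """Series 6: each cluster (USC) is 16 lanes wide, up to 4 FP32 ops per lane."""
    return clusters * lanes * ops_per_lane


def gflops(flops_per_clock, mhz):
    """Peak theoretical throughput at a given clock."""
    return flops_per_clock * mhz / 1000.0


print(rogue_flops_per_clock(1))               # one USC
print(usse2_flops_per_clock(8))               # SGX 543MP2, assuming 8 USSE2 pipes
print(rogue_flops_per_clock(4))               # four cluster G6430
print(gflops(rogue_flops_per_clock(4), 200))  # peak at the 200MHz example clock
```

These are peak numbers only; they say nothing about how much of that throughput real shaders can actually use.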

There’s more graphics horsepower under the hood of the iPhone 5s than there is in the iPad 4. While I don’t doubt the iPad 5 will once again widen that gap, keep in mind that the iPhone 5s has less than 1/4 the number of pixels as the iPad 4. If I were a betting man, I’d say that the A7 was designed not only to drive the 5s’ 1136 x 640 display, but also a higher res panel in another device. Perhaps an iPad mini with Retina Display? There’s no solving the memory bandwidth requirements, but the A7 surely has enough compute power to get there. There's also the fact that Apple has prior history of delivering an SoC that wasn’t perfect for the display (e.g. iPad 3).
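The pixel count comparison is quick to verify. Note the iPad 4's 2048 x 1536 panel resolution is not stated in this article; it is filled in here as an assumption, and `pixels` is just a throwaway helper.

```python
def pixels(width, height):
    """Total number of pixels in a panel."""
    return width * height


iphone_5s = pixels(1136, 640)  # resolution quoted in the article
ipad_4 = pixels(2048, 1536)    # assumption: not given in the article

ratio = iphone_5s / ipad_4
print(iphone_5s, ipad_4, round(ratio, 3))
```

The ratio comes out at roughly 0.23, which is where the "less than 1/4" figure comes from.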

GPU Performance

As I mentioned earlier, the iPhone 5s is the first Apple device (and consumer device in the world) to ship with a PowerVR Series 6 GPU. The G6430 inside the A7 is a 4 cluster configuration, with each cluster featuring a 16-wide array of SIMD pipelines. Whereas the 5XT generation of hardware used a 4-wide vector architecture (1 pixel per clock, all 4 color components per SIMD), Series 6 moves to a scalar design (think 16 pixels per clock, one color per clock). Each pipeline is capable of two FP32 MADs per clock, for a total of 64 FP32 operations per clock, per cluster. With the A7's 4 cluster GPU, that works out to be the same throughput per clock as the 4th generation iPad.

Imagination claims its new scalar architecture is not only more computationally dense, but also far more efficient. With the transition to scalar GPU architectures in the PC space we generally saw efficiency go up, so I'm inclined to believe Imagination's claims here.

Apple claims up to a 2x increase in GPU performance compared to the iPhone 5, but just looking at the raw numbers in the table below there's far more shading power under the hood of the A7 than only "2x" the A6.

Mobile SoC GPU Comparison

| | PowerVR SGX 543 | PowerVR SGX 543MP2 | PowerVR SGX 543MP3 | PowerVR SGX 543MP4 | PowerVR SGX 554 | PowerVR SGX 554MP2 | PowerVR SGX 554MP4 | PowerVR G6430 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Used In | - | iPad 2/iPhone 4S | iPhone 5 | iPad 3 | - | - | iPad 4 | iPhone 5s |
| SIMD Name | USSE2 | USSE2 | USSE2 | USSE2 | USSE2 | USSE2 | USSE2 | USC |
| # of SIMDs | 4 | 8 | 12 | 16 | 8 | 16 | 32 | 4 |
| MADs per SIMD | 4 | 4 | 4 | 4 | 4 | 4 | 4 | 32 |
| Total MADs | 16 | 32 | 48 | 64 | 32 | 64 | 128 | 128 |
| GFLOPS @ 300MHz | 9.6 GFLOPS | 19.2 GFLOPS | 28.8 GFLOPS | 38.4 GFLOPS | 19.2 GFLOPS | 38.4 GFLOPS | 76.8 GFLOPS | 76.8 GFLOPS |
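The last two rows of the table follow directly from the two above them, counting each MAD as two floating-point operations. A short sketch that rebuilds them (the `CONFIGS` dictionary and function names are hypothetical; the inputs are copied from the table, and 300MHz is the table's normalization clock, not a shipping frequency):

```python
# (number of SIMDs, MADs per SIMD), copied from the comparison table
CONFIGS = {
    "PowerVR SGX 543": (4, 4),
    "PowerVR SGX 543MP2": (8, 4),
    "PowerVR SGX 543MP3": (12, 4),
    "PowerVR SGX 543MP4": (16, 4),
    "PowerVR SGX 554": (8, 4),
    "PowerVR SGX 554MP2": (16, 4),
    "PowerVR SGX 554MP4": (32, 4),
    "PowerVR G6430": (4, 32),
}


def total_mads(simds, mads_per_simd):
    """MADs issued per clock across the whole GPU."""
    return simds * mads_per_simd


def gflops_at(mads, mhz=300):
    """Each MAD counts as 2 FLOPS; result in GFLOPS at the given clock."""
    return round(mads * 2 * mhz / 1000, 1)


for name, (simds, per_simd) in CONFIGS.items():
    mads = total_mads(simds, per_simd)
    print(f"{name}: {mads} MADs, {gflops_at(mads)} GFLOPS @ 300MHz")
```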

GFXBench 2.7

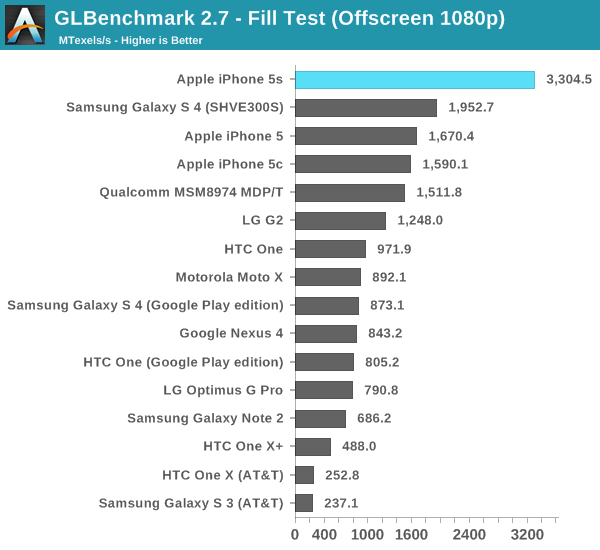

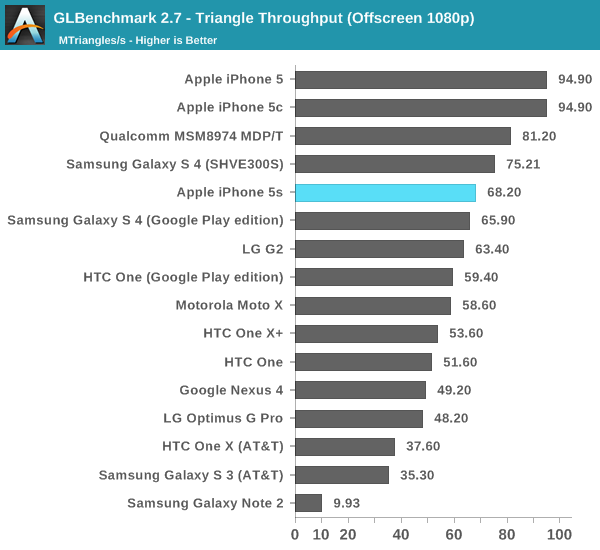

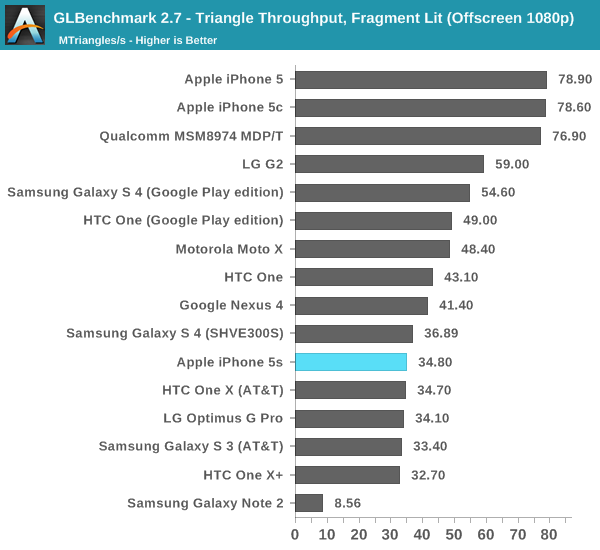

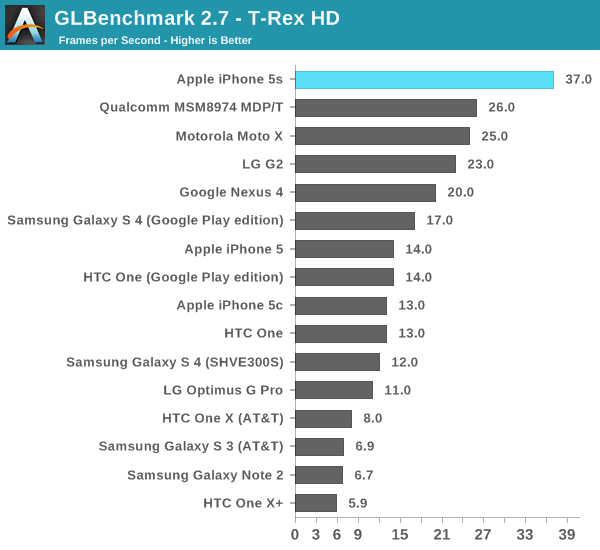

As always, we'll start with GFXBench (formerly GLBenchmark) 2.7 to get a feel for the theoretical performance of the new GPU. GFXBench 2.7 tends to be more computationally bound than most games as it is frequently used by silicon vendors to stress hardware, not by game developers as an actual performance target. Keep that in mind as we get to some of the actual game simulation results.

Twice the fill rate of the iPhone 5, and clearly higher than anything else we've tested. Rogue is off to a good start.

What's this? A performance regression? Remember what I said earlier in the description of Rogue. Whereas 5XT replicated nearly the entire GPU for "multi-core" versions, multi-cluster versions of Rogue only replicate at the shader array. The result? We don't see the same sort of peak triangle setup scaling we did back on multi-core 5XT parts. I don't suppose this will be a big issue in actual games (and likely a better balance between triangle setup/rasterization and shading hardware), but it's worth pointing out.

This is the worst case regression we've seen from 5XT to Rogue. It's clear that per chip triangle rates are much higher on Rogue, but with a many core implementation of 5XT there's just no competing. I suspect this change is part of how Img was able to increase the overall density of Rogue vs. 5XT. Now the question is whether or not this regression will actually appear in games. To find out we turn to the two game simulation tests in GFXBench 2.7, starting with the most stressful one: T-Rex HD.

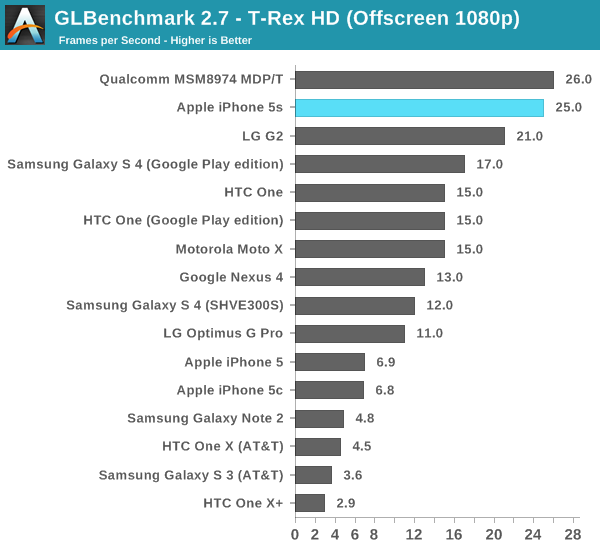

As always, the onscreen tests run at a device's native resolution with v-sync enabled, while the offscreen results happen at 1080p and v-sync disabled.

As expected, the G6430 in the iPhone 5s is more than twice the speed of the part in the iPhone 5. It is also the first device we've tested capable of breaking the 30 fps barrier in T-Rex HD at its native resolution. Given just how ridiculously intense this test is, I think it's safe to say that the iPhone 5s will probably have the longest shelf life from a gaming perspective of any iPhone to date.

The offscreen test helps put the G6430's performance in perspective. Here we show the 5s barely falling behind Qualcomm's Adreno 330 (Snapdragon 800). There are obvious thermal differences between the two platforms, but if we look at the G2's performance (another S800/A330 part) we get a better indication of an apples to apples comparison. Looking at the leaked Nexus 5 (also S800/A330) T-Rex HD scores confirms what we're seeing above. In a phone, it looks like the G6430 is a bit quicker than Qualcomm's Adreno 330.

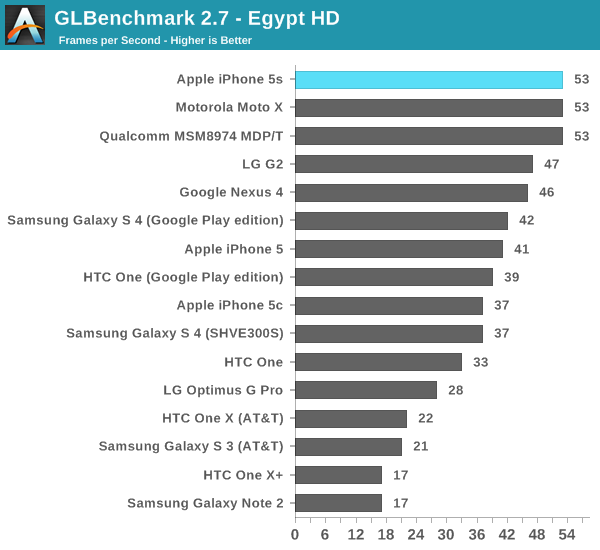

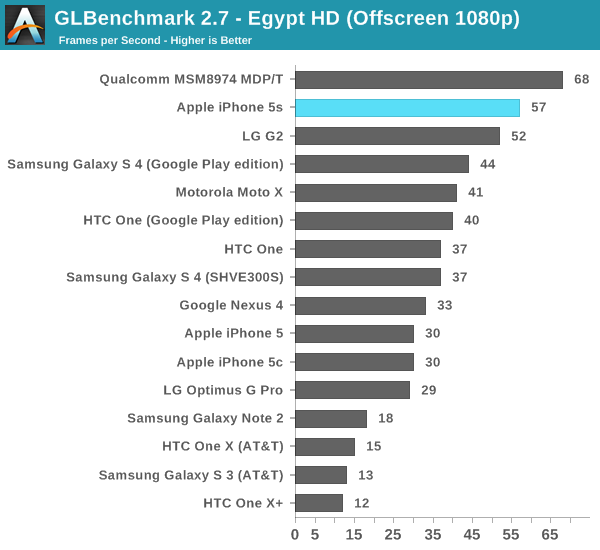

The Egypt HD tests are much lighter and a lot closer to the workload of many games on the store today, although admittedly the benchmark is getting a little light.

Onscreen we're at Vsync already, something the iPhone 5 wasn't capable of doing. The 5s should have no issues running most games at 30 fps.

Offscreen, even at 1080p, performance doesn't really change. Qualcomm's Adreno 330 is definitely faster, at least in the MDP/T. In the G2, its performance lags behind the G6430. I really want to measure power on these things.

3DMark

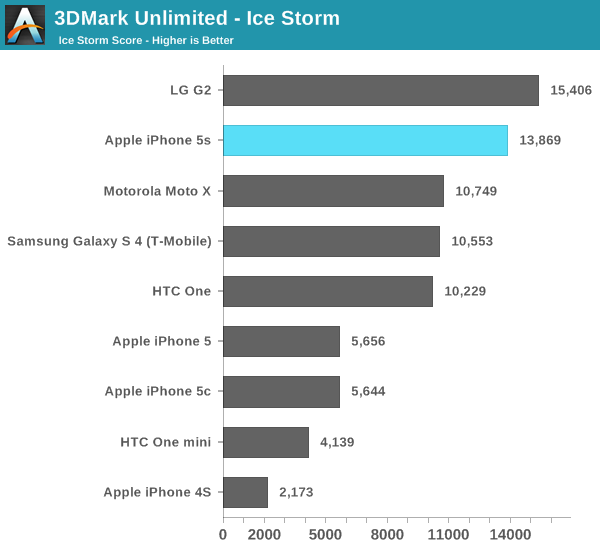

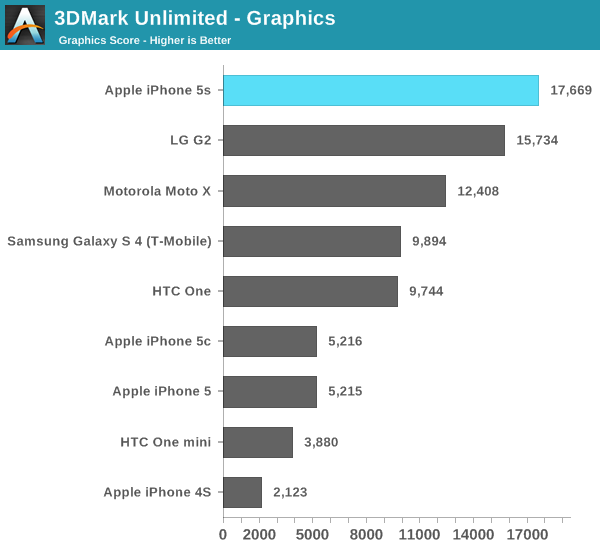

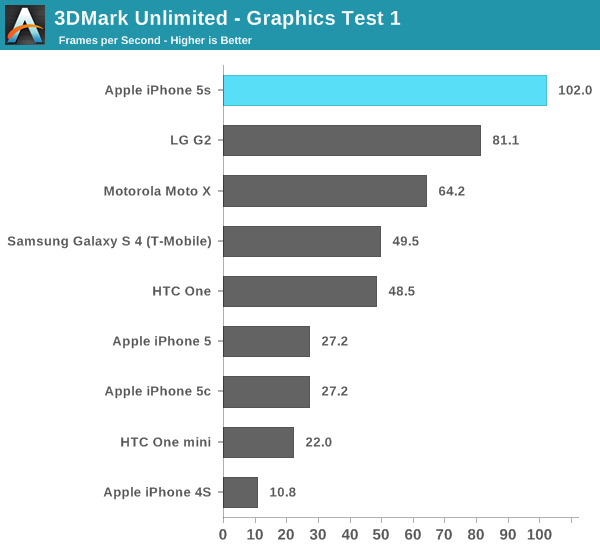

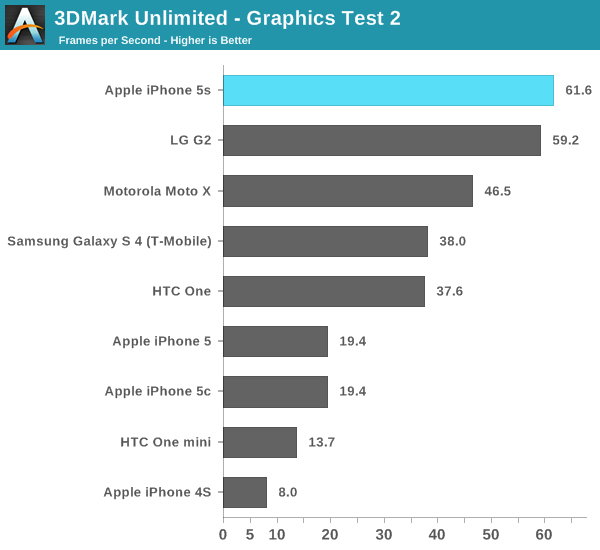

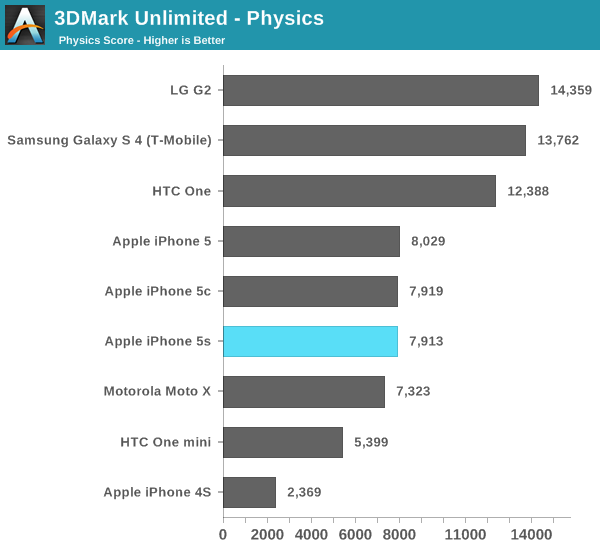

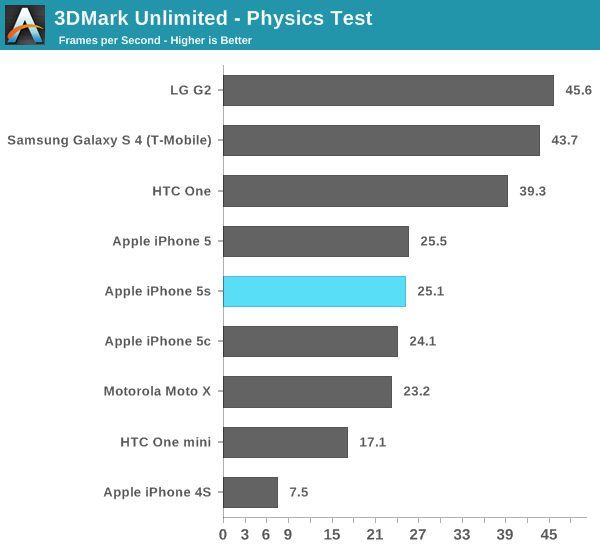

3DMark finally released an iOS version of its benchmark, enabling us to run the 5s through yet another test. As we've discovered in the past, 3DMark is far more of a CPU test than GFXBench. While CPU load will range from 6 - 25% during GFXBench, we'll see usage greater than 50% on 3DMark - even during the graphics tests. 3DMark is also heavily threaded, with its physics test taking advantage of quad-core CPUs.

With the iOS release of the benchmark comes a new offscreen rendering mode called Unlimited. The benchmark is the same but it renders offscreen at 720p with the display only being updated once every 100 frames to somewhat get around vsync. Because of the new test we don't have a ton of comparison data, so I've included whatever we've got at this point.
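To see why presenting only once every 100 frames mostly sidesteps vsync, consider a toy timing model. Everything here is an assumption made for illustration: the 5 ms render time is invented, the 60Hz refresh is approximated as 16,667 microseconds, and a present is modeled as simply blocking until the next refresh boundary. It is not a description of how 3DMark is actually implemented.

```python
def simulated_fps(frames, render_us, present_every, refresh_us=16_667):
    """Frames per second in a toy model where each present waits for vblank.

    All times are integer microseconds to keep the arithmetic exact.
    """
    t = 0
    for i in range(1, frames + 1):
        t += render_us  # time spent rendering this frame
        if i % present_every == 0:
            # Block until the next refresh boundary (ceiling division).
            t = -(-t // refresh_us) * refresh_us
    return frames * 1_000_000 / t


onscreen = simulated_fps(1000, render_us=5_000, present_every=1)
unlimited = simulated_fps(1000, render_us=5_000, present_every=100)
print(round(onscreen, 1), round(unlimited, 1))
```

In this model a GPU that needs 5 ms per frame is held to about 60 fps when every frame is presented, but runs at close to its unconstrained 200 fps when only every 100th frame has to wait for the display.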

3DMark ends up being more of a CPU and memory bandwidth test rather than a raw shader performance test like GFXBench and Basemark X. The 5s falls behind the Snapdragon 800/Adreno 330 based G2 in overall performance. To find out how much of that is GPU performance and how much is a lack of four cores, let's look at the subtests.

The graphics test is more GPU bound than CPU bound, and here we see the G6430 based iPhone 5s pull ahead. Note how well the Moto X does because of its very high clocked CPU cores rather than its GPU. Although this is a graphics test, it's still heavily influenced by CPU performance.

The physics test hits all four cores in a quad-core chip and explains the G2 pulling ahead in overall performance. Note that I saw no improvement in this largely CPU bound test, leading me to believe that we've hit some sort of a bug with 3DMark and the new Cyclone core.

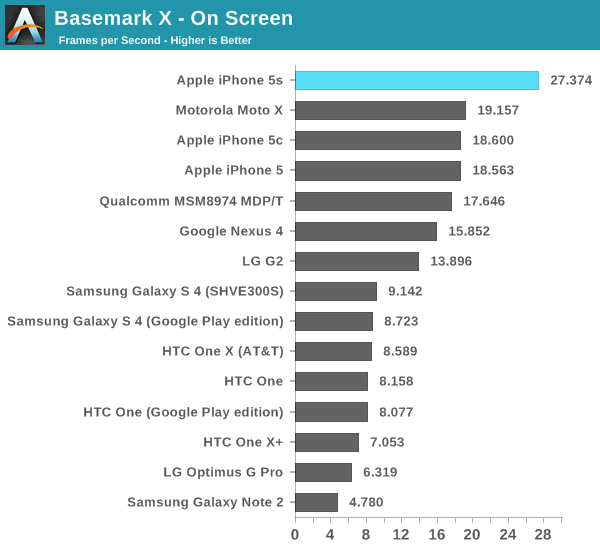

Basemark X

Basemark X is a new addition to our mobile GPU benchmark suite. There are no low level tests here, just some game simulation tests run at both onscreen (device resolution) and offscreen (1080p, no vsync) settings. The scene complexity is far closer to GLBenchmark 2.7 than the new 3DMark Ice Storm benchmark, so frame rates are pretty low.

Unfortunately I ran into a bug with Basemark X under iOS 7 on the iPhone 5/5c/5s that prevented the off screen test from completing, leaving me only with on-screen results at native resolution.

Once again we're seeing greater than 2x scaling comparing the iPhone 5s to the 5.

464 Comments

CyberAngel - Thursday, September 19, 2013 - link

Hurray!

name99 - Wednesday, September 18, 2013 - link

I imagine he would say "I heard exactly the same shit about the A6 and how it couldn't possibly be as good as I claimed. Come back when you have NUMBERS to back up your complaints."

monaarts - Wednesday, September 18, 2013 - link

You are mistaking a more natural transition to a 64-bit mobile world with a "PR stunt." Yes, Android will probably make a huge jump and switch to 64-bit and include 8GB of memory, or something crazy like that, but will only add to fragmentation they are already burdened with. However, Apple is trying to avoid that by building steps that lead to where they eventually want the iPhone to go.

ddriver - Wednesday, September 18, 2013 - link

The PC market is fragmented as well, but is still goes, doesn't it? Surely, as a bigger ecosystem, android will be slower to adopt changes. But HPC doesn't really make sense in a phone, that is why most ARM chip vendors are focusing on server v8 chips and infrastructure, which will be more lucrative than consumer electronics. v8 is the future, no doubt about it, but apple has no other winning hand besides offering v8 a little too early, knowing they can make up for the cost of the premature transition with profit margins other manufacturers cannot dream of asking for the same hardware. Surely, other brands have their fanboys too, but nowhere nearly as fanatically devoted and eager to "just take my money".

tbrizzlevb - Wednesday, September 18, 2013 - link

I've never had any problem with this "fragmentation" that you cut and pasted from somewhere. How exactly has that kept you from buying Android? Do you normally keep your phone for 5 years or more? I'd be willing to bet you aren't using an iPhone 3 right now.. If you upgrade your phone every few years anyways, what do you care if the first generation Droid doesn't run the latest OS update?

AaronJ68 - Wednesday, September 18, 2013 - link

The fact that there was never an iPhone 3 might have something to do with that.

CyberAngel - Thursday, September 19, 2013 - link

As a programmer I say there is a HUGE fragmentation problem with all the Androids.

I code for Jelly Beans only and I do have an "old" 4.0 mono CPU device for testing the lag.

Focher - Wednesday, September 18, 2013 - link

You lost me when you used the phrase "apple fanboys" and "declining sales". You don't seem to understand the difference between market share versus unit sales.

ddriver - Wednesday, September 18, 2013 - link

So you must have taken "apple fanboys" personally, and understandably "declining sales" in a context regarding apples conflicts you. Like all other vendors, apple sales drop, thus the periodic refreshes, which do need their selling points, this time around it is v8. In the past, apple resorted to similar strategies, like making exclusive deals, purchasing the entire initial batches of new generation parts, and again making up for the cost of this "innovation" with their profit margins.

I don't recall any other brand which pushed kids to selling their organs, do you? If that is not fanatical fanboyism, I don't know what it is...

akdj - Thursday, September 19, 2013 - link

Weird---seems to me EVERY time an iPhone is released, it sells MORE than the previous version FASTER! In fact, it's never been surpassed by another electronic item in history...and it's sales continue to climb. News Flash! Android (High end; S4/Note/HTC 1/XPeria) also sell @ a premium and almost @ the exact price of Apple's handsets. The rest of your post is nonsense. It's not just Anand's site and review praising the performance of the A7---it's ubiquitous. Is Apple paying Ars? TechCrunch? MacRumors? WSJ? Engadget? CNet?

Dude---this is one HELL of an SoC. It's so much MORE than a 64bit chess match. A company licensing and building from the ground up a CPU/GPU/IPU that matches their OS and allows the tools to developers (For Free!) in XCode so the transition is seamless! The speed of this chip is awesome. Setting themselves up early is smart---the iPad release is imminent and has competition from both sides...Microsoft and Android. With a significantly larger 'body' and area to allow for more RAM...and future releases of other ARM based products, Apple is making in-roads within the mobile sector that ONLY bozos that cherish other brands, OEMs, or otherwise have some weird bone to pick with Apple can't realize. Mind blowing. I'm brand agnostic---use OSx, Windows and even own a couple of Android devices....but to me as dismissive as you are about the A7s build and putting it in a product the size of a pack of smokes is about as silly and should I say 'ignorant' a stance as I've seen in a long LONG time.

This move by Apple is HUGE. Moore's law now attributed to mobile. Still a dual core. Still 1GB of RAM (albeit DDR3 vs DDR2)---yet doubling, tripling, sometimes quadrupling performance of the other 'off the shelf' SoCs on the market that other OEMs are using. It's no secret---Apple has been hiring and head hunting chip designers from Intel and AMD for some time now. These guys and gals are some of the brightest minds on earth....but you've got it all figured out and somehow Anand's been blinded by the conspiracy---as has EVERY other reviewer on the 'net.

Un-Believable.

J