Intel Core i7 4960X (Ivy Bridge E) Review

by Anand Lal Shimpi on September 3, 2013 4:10 AM EST - Posted in

- CPUs

- Intel

- Ivy Bridge

- Ivy Bridge-E

Gaming Performance

Chances are that any gamer looking at an IVB-E system is also considering a pretty ridiculous GPU setup. NVIDIA sent along a pair of GeForce GTX Titan GPUs, totalling over 14 billion GPU transistors, to pair with the 4960X to help evaluate its gaming performance. I ran the pair through a bunch of games, all at 1080p and at relatively high settings. In some cases you'll see very obvious GPU limitations, while in other situations you'll see some separation between the CPUs.

I haven't yet integrated this data into Bench, so you'll see a different selection of CPUs here than we've used elsewhere. All of the primary candidates are well represented. There's Ivy Bridge E and Sandy Bridge E of course, in addition to mainstream IVB/SNB. I threw in Gulftown and Nehalem based parts, as well as AMD's latest Vishera SKUs and an old 6-core Phenom II X6.

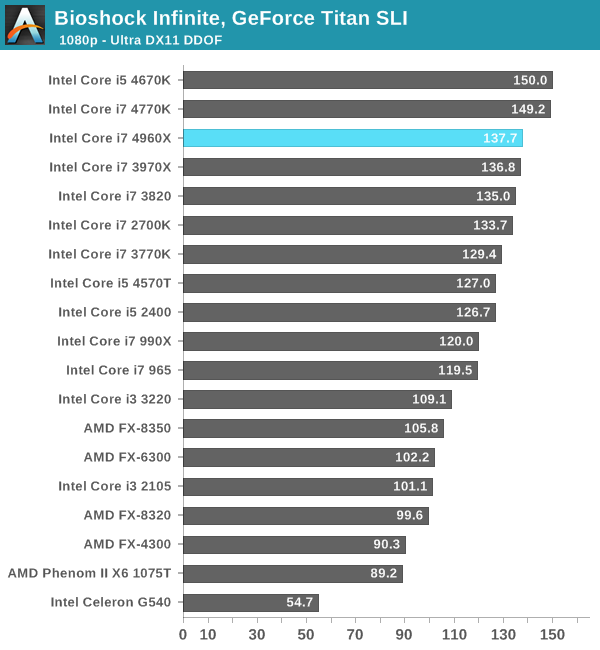

Bioshock Infinite

Bioshock Infinite is Irrational Games’ latest entry in the Bioshock franchise. Though it’s based on Unreal Engine 3 – making it our obligatory UE3 game – Irrational has added a number of effects that make the game rather GPU-intensive on its highest settings. As an added bonus it includes a built-in benchmark composed of several scenes, a rarity for UE3 games, so we can easily get a good representation of what Bioshock’s performance is like.

We're running the benchmark mode at its highest quality defaults (Ultra DX11) with DDOF enabled.

We're going to see a lot of this, I suspect. Whenever we see CPU dependency in games, it tends to manifest as a heavy dependence on single-threaded performance. Here Haswell's architectural advantages are apparent, as the two quad-core Haswell parts pull ahead of the 4960X by about 8%. The 4960X does reasonably well, but you don't really want to spend $1000 on a CPU just for it to come in 3rd, I suppose. With two GPUs, the PCIe lane advantage isn't good for much.

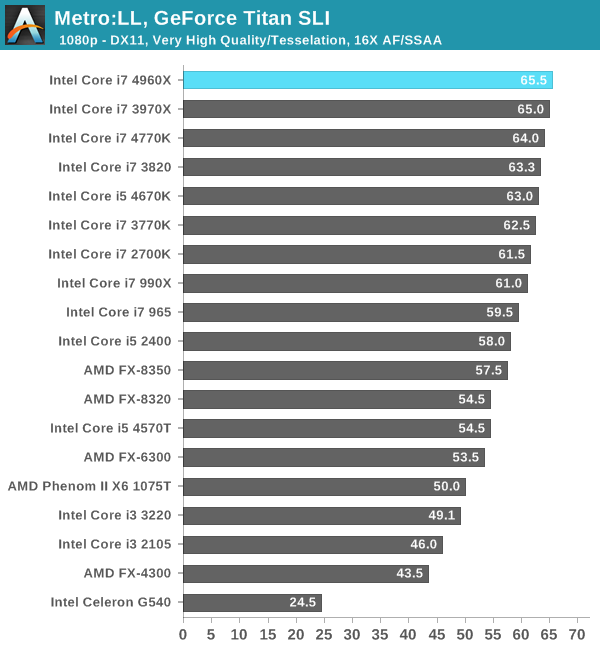

Metro: Last Light

Metro: Last Light is the latest entry in the Metro series of post-apocalyptic shooters by developer 4A Games. Like its predecessor, Last Light is a game that sets a high bar for visual quality, and at its highest settings an equally high bar for system requirements thanks to its advanced lighting system. We run Metro: LL at its highest quality settings, with tessellation set to very high and 16X AF/SSAA enabled.

The tune shifts a bit with Metro: LL. Here the 4960X actually pulls ahead by a very small amount. In fact, both of the LGA-2011 6-core parts manage very small leads over Haswell here. The differences are small enough to fall within the margin of error for this benchmark, though.

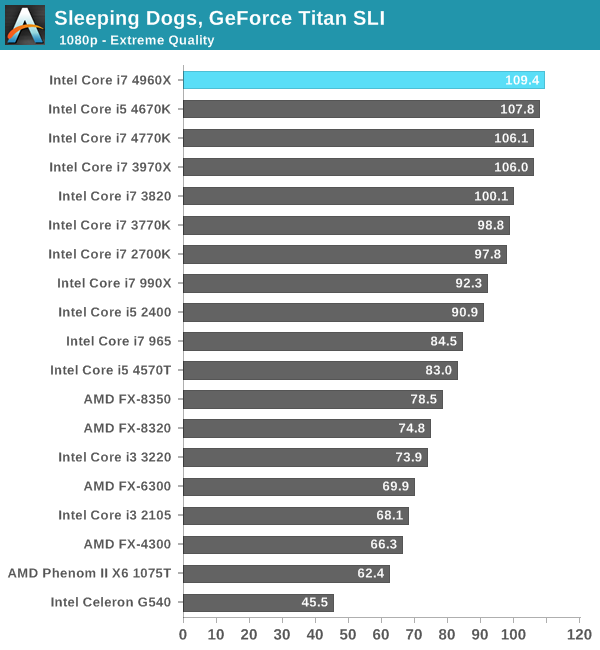

Sleeping Dogs

A Square Enix game, Sleeping Dogs is one of the few open world games to be released with any kind of benchmark, giving us a unique opportunity to benchmark an open world game. Like most console ports, Sleeping Dogs’ base assets are not extremely demanding, but it makes up for it with its interesting anti-aliasing implementation, a mix of FXAA and SSAA that at its highest settings does an impeccable job of removing jaggies. However, by effectively rendering the game world multiple times over, it can also require a very powerful video card to drive these high AA modes.

Our test here is run at the game's Extreme Quality defaults.

Sleeping Dogs shows similar behavior, with the 4960X making its way to the very top and Haswell hot on its heels.

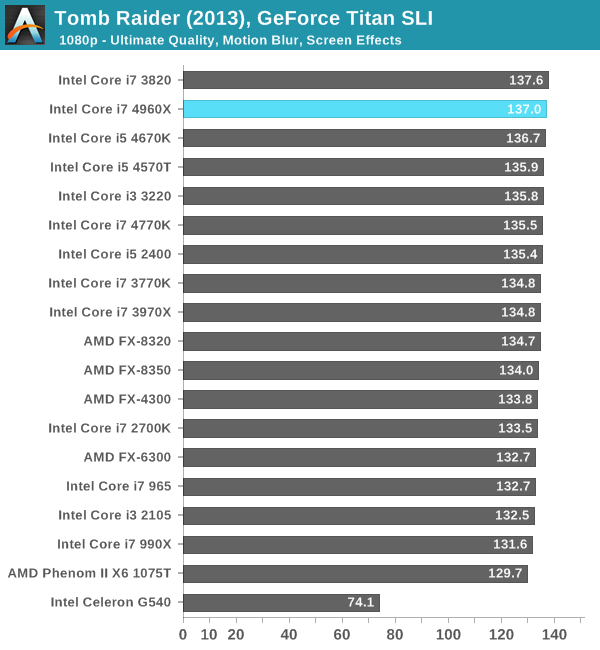

Tomb Raider (2013)

The simply titled Tomb Raider is the latest entry in the Tomb Raider franchise, making a clean break from past titles in plot, gameplay, and technology. Tomb Raider games have traditionally been technical marvels and the 2013 iteration is no different. Like all of the other titles here, we ran Tomb Raider at its highest quality (Ultimate) settings. Motion Blur and Screen Effects options were both checked.

With the exception of the Celeron G540, nearly all of the parts here perform the same. The G540 doesn't do well in any of our tests; I confirmed SLI was operational in all cases, but its performance was just abysmal regardless.

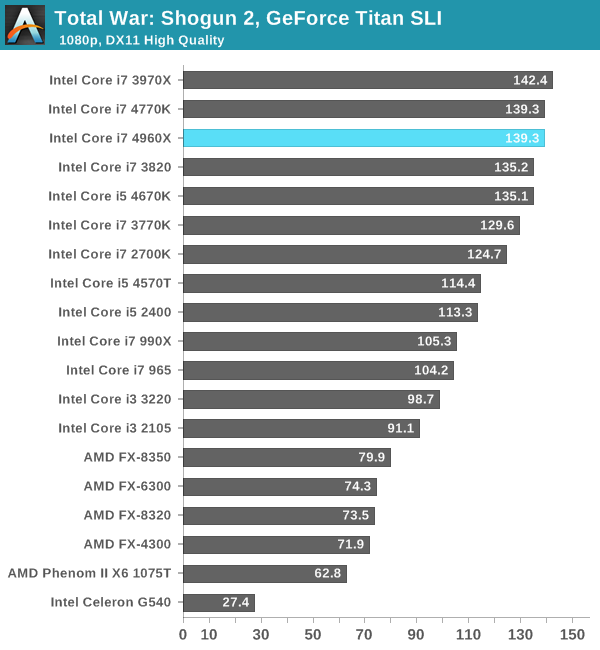

Total War: Shogun 2

Our next benchmark is Shogun 2, which is a continuing favorite in our benchmark suite. Total War: Shogun 2 is the latest installment of the long-running Total War series of turn-based strategy games, and alongside Civilization V is notable for just how many units it can put on a screen at once. Even 2 years after its release it’s still a very punishing game at its highest settings due to the amount of shading and memory those units require.

We ran Shogun 2 in its DX11 High Quality benchmark mode.

We see roughly equal performance between IVB-E and Haswell here.

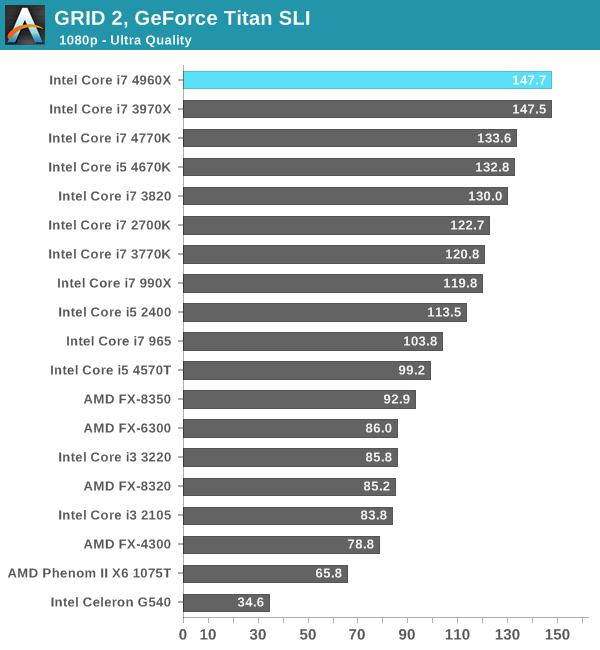

GRID 2

GRID 2 is a new addition to our suite and our new racing game of choice, being the very latest racing game out of genre specialty developer Codemasters. Intel did a lot of publicized work with the developer on this title, creating a high performance implementation of Order Independent Transparency for Haswell, so I expect it to be well optimized for Intel architectures.

We ran GRID 2 at Ultra quality defaults.

We started with a scenario where Haswell beat out IVB-E, and we're ending with the exact opposite. Here the 10% advantage is likely due to the much larger L3 cache present on both IVB-E and SNB-E. Overall you'll get great gaming performance out of the 4960X, but even with two Titans at its disposal you won't see substantially better frame rates than a 4770K in most cases.

120 Comments


madmilk - Tuesday, September 3, 2013

If you invested in the 980 or the 970 (not the extreme ones) you got an awesome deal. Three years old, $600, overclockable, and within 30% of the 4960X on practically everything.

bobbozzo - Tuesday, September 3, 2013

True, but my Haswell i5-4670k was around $200 for the CPU (on sale), and under $150 for an ASUS Z87-Plus motherboard. It's running on air cooling at 4.5/4.5/4.5/4.4GHz.

I wasn't expecting it to be as fast for gaming as an i7-4770k, but looking at the gaming benchmarks in this article, I'm extremely pleased that I did not spend more for the i7.

althaz - Tuesday, September 3, 2013

I had a launch model Core 2 Duo (the E6300) that with overclocking (1.86GHz => 2.77GHz) was a pretty decent CPU until last year (when I replaced it with an Ivy Bridge Core i5). That's what? Six years out of the CPU and it's still going strong for my buddy (to whom it now belongs).

Kevin G - Tuesday, September 3, 2013

"My biggest complaint about IVB-E isn't that it's bad, it's just that it could be so much more. With a modern chipset, an affordable 6-core variant (and/or a high-end 8-core option) and at least using a current gen architecture, this ultra high-end enthusiast platform could be very compelling."

I think that you answered why Intel isn't going this route earlier in the article. Consumers are getting the smaller 6 core Ivy Bridge-E chip. There is also a massive 12 core chip due soon for socket 2011 based servers. Harvesting an 8 core version from the 12 core die is an expensive proposition and something Intel may not have the volumes for (they're not going to hinder 10 and 12 core capable dies to supply 8 core volumes to consumers). Still, if Intel wanted to, they could release an 8 core Sandy Bridge-E chip and use that for their flagship processor since the architectural differences between Sandy and Ivy Bridge are minor.

The chipset situation just sucks. Intel didn't even have to release a new chipset, they could have released an updated X79 (Z79 perhaps?) that fixed the initial bugs. For example, ship with SAS ports enabled and running at 6 Gbit speeds.

Sabresiberian - Tuesday, September 3, 2013

"The big advantages that IVB-E brings to the table are a ridiculous number of PCIe lanes, a quad-channel memory interface and 2 more cores in its highest end configuration."

I'm going to pick on you a little bit here Anand, because I think it is important that we convey an accurate image to Intel about what we as end-users want from the hardware they design. 40 PCIe 3.0 lanes is NOT "ridiculous". In fact, for my purposes I would call it "inadequate". Sure, "my purposes" are running 3 2560x1440 screens @ 120Hz and that isn't the average rig today, but I want to suggest it isn't far off what people are now asking for. We should be encouraging Intel to give us more PCIe connectivity, not implying we have too much already. :)

canthearu - Tuesday, September 3, 2013

Actually, you would find that you are still badly limited by graphics power, rather than limited by system bandwidth.

A modern graphics card doesn't even stress out 8 lanes of PCIe 3.0.

I'm also not saying that it is a bad thing to have lots of I/O; it isn't. However, you do need to know where your bottlenecks are. Otherwise you spend money trying to fix the wrong thing.

The Melon - Tuesday, September 3, 2013

Not all high bandwidth PCI-e cards are graphics cards.

I for one would like to be able to run 2x PCIe x16 GPU's and at least 1 each of LSI SAS 2008, dual port DDR or QDR Infiniband, dual port 10GbE and perhaps an actual RAID card.

Sure that is a somewhat extreme example. But you can only run one of the expansion cards plus 2 GPU before you run out of lanes. This is an enthusiast platform after all. Many of us are going to want to do Extreme things with it.

Flunk - Tuesday, September 3, 2013

Now you're just being silly, spending $10,000 on a system without any real increase in performance for anything you're going to do on a desktop/workstation is just stupid.

Besides, if you're being incredibly stupid you'd need to go quad Xeons anyway (160 PCI-E lanes FTW).

Azethoth - Tuesday, September 3, 2013

On the one hand, good review. On the other hand, my dream of a new build in the "performance" line is snuffed out. It just seems so lame making all these compromises vs Haswell, and basically things will never get better because the platform target is shifting to mobile and so battery life is key and performance parts will just never be a focus again.

f0d - Tuesday, September 3, 2013

i feel the same way

the future doesnt look too bright for the performance enthusiast - i dont want low power smaller cpu's i want BIG 8/12 core cpus and i dont really give a crap about power usage